Microbiome Data Normalization Explained: A Guide for Biomedical Research & Drug Development

Abstract

This guide provides a comprehensive overview of microbiome data normalization techniques, crucial for accurate analysis in biomedical research. It covers foundational concepts, key methodological approaches, common pitfalls, and best practices for method validation. Tailored for researchers and drug development professionals, the article aims to clarify why normalization is essential, how to implement it, and how to choose the right method for robust, reproducible results in clinical and translational studies.

Why Normalize? Understanding the Critical Need in Microbiome Analysis

The analysis of microbial community data, typically generated via high-throughput sequencing of 16S rRNA or shotgun metagenomes, begins with count tables. These tables record the frequency of sequences assigned to individual taxa across multiple samples. A fundamental thesis in microbiome data science is that these raw counts are not directly comparable due to variable sequencing depth. This necessitates normalization, a suite of techniques aiming to remove technical artifacts to reveal true biological variation. The most intuitive normalization is the conversion to relative abundance, where each count is divided by the total number of counts in its sample. However, this introduces the "compositional" nature of the data: an increase in the relative abundance of one taxon mathematically necessitates a decrease in the relative abundance of others. This guide explores the implications of this constraint and the analytical paradigms that move beyond it.

The core issue is that relative abundances sum to a constant (e.g., 1 or 100%). This closure property induces spurious correlations and obscures true associations. The following table summarizes key characteristics and consequences.

Table 1: Properties and Challenges of Compositional Microbiome Data

| Property | Mathematical Description | Analytical Consequence |

|---|---|---|

| Closure (Unit Sum) | $\sum_{i=1}^{D} x_i = \kappa$ (e.g., $\kappa = 1$ or $10^6$) | Data resides in a simplex, not in Euclidean space. |

| Sub-compositional Incoherence | Inference from a subset of parts differs from the whole. | Conclusions depend on which taxa are included in the analysis. |

| Spurious Correlation | Correlation between parts arises from the closure constraint alone. | Can falsely infer ecological relationships (competition/cooperation). |

| Scale Invariance | Only relative information is retained; absolute abundances are lost. | Cannot distinguish between a doubling of Taxon A versus a halving of all other taxa. |
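
The scale-invariance row can be made concrete with a small numeric sketch. The absolute abundances below are hypothetical values chosen for illustration; the point is only that two very different absolute scenarios close to the same composition.

```python
def to_proportions(abundances):
    """Close a vector of abundances so that it sums to 1."""
    total = sum(abundances)
    return [a / total for a in abundances]

# Hypothetical absolute abundances (e.g., cells per gram) for taxa A, B, C.
baseline = [100.0, 200.0, 700.0]

# Scenario 1: Taxon A doubles; B and C are unchanged.
a_doubles = [200.0, 200.0, 700.0]

# Scenario 2: Taxon A is unchanged; B and C are both halved.
others_halve = [100.0, 100.0, 350.0]

p_baseline = to_proportions(baseline)
p_a_doubles = to_proportions(a_doubles)
p_others_halve = to_proportions(others_halve)

# Both scenarios differ from baseline in the same way once closed.
print("baseline    ", [round(p, 4) for p in p_baseline])
print("A doubles   ", [round(p, 4) for p in p_a_doubles])
print("others halve", [round(p, 4) for p in p_others_halve])
```

Relative abundance data alone cannot tell these two scenarios apart, which is why an external measure of total load is needed to recover scale.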

Experimental Protocols for Assessing Compositional Effects

To empirically demonstrate compositional constraints, researchers employ both in silico and in vitro experiments.

Protocol 1: In Silico Spike-in Simulation for Detecting Spurious Correlation

- Data Acquisition: Obtain a real microbiome count dataset (e.g., from Qiita or MG-RAST).

- Normalization: Generate a relative abundance table by dividing each count by its sample's total read count.

- Simulation: Randomly select two non-dominant taxa (Taxon X and Y). In a subset of samples, artificially "spike" the count of Taxon X by a random multiplier (2x-10x) without changing Taxon Y's count.

- Re-closure: Recalculate relative abundances for the manipulated samples.

- Analysis: Calculate the correlation (e.g., Spearman's ρ) between Taxon X and Taxon Y across all samples (spiked and unspiked).

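- Expected Outcome: A negative correlation between the relative abundances of Taxon X and Taxon Y is expected, even though Taxon Y's counts were never changed. It is induced purely by the re-normalization after the spike and is therefore spurious. Its strength depends on how much the spike changes each sample's total.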
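
A minimal sketch of Protocol 1 follows. It makes several assumptions that are not part of the protocol: a small synthetic count table stands in for a real dataset, Taxon X holds a fairly large share of reads so the effect is easy to see, and Spearman's ρ is computed with a hand-written helper rather than a statistics library.

```python
import random

def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # positions are 0-based
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks

def spearman(x, y):
    """Spearman's rho: the Pearson correlation of the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5

rng = random.Random(42)
base = [500, 300, 100, 50, 50]  # hypothetical mean counts for 5 taxa
X, Y = 2, 3                     # indices of Taxon X and Taxon Y
n_samples = 60

samples = []
for s in range(n_samples):
    # Small independent noise around the baseline for every taxon.
    counts = [round(b * rng.uniform(0.95, 1.05)) for b in base]
    if s < n_samples // 2:
        # Spike Taxon X by 2x-10x; Taxon Y is left untouched.
        counts[X] = round(counts[X] * rng.uniform(2, 10))
    samples.append(counts)

raw_x = [c[X] for c in samples]
raw_y = [c[Y] for c in samples]
rel_x = [c[X] / sum(c) for c in samples]  # re-closure
rel_y = [c[Y] / sum(c) for c in samples]

rho_raw = spearman(raw_x, raw_y)
rho_rel = spearman(rel_x, rel_y)
print(f"raw counts:          rho = {rho_raw:.2f}")
print(f"relative abundances: rho = {rho_rel:.2f}")
```

In this setup the raw counts of X and Y are generated independently, so any strong negative ρ on the relative abundances comes from closure alone.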

Protocol 2: Mock Community Validation for Absolute Quantification

- Reagent Preparation: Acquire a commercial mock microbial community (e.g., ZymoBIOMICS Microbial Community Standard) with known, absolute cell counts for each member strain.

- Library Preparation & Sequencing: Split the community into multiple technical replicates. Process replicates through DNA extraction, library preparation (using kits like KAPA HyperPlus), and sequencing on platforms like Illumina MiSeq.

- Bioinformatic Processing: Process raw sequences through a standard pipeline (e.g., QIIME 2, DADA2) to generate count tables.

- Data Analysis:

- Calculate relative abundances from counts.

- Use an internal spike-in (e.g., known quantity of an exotic DNA not in the mock community, added prior to extraction) to estimate absolute genome copies.

- Comparison: Compare the inferred relative/absolute abundances to the known truth. This protocol highlights the discrepancy between relative proportions and absolute quantities.

Pathways and Workflows: From Data to Inference

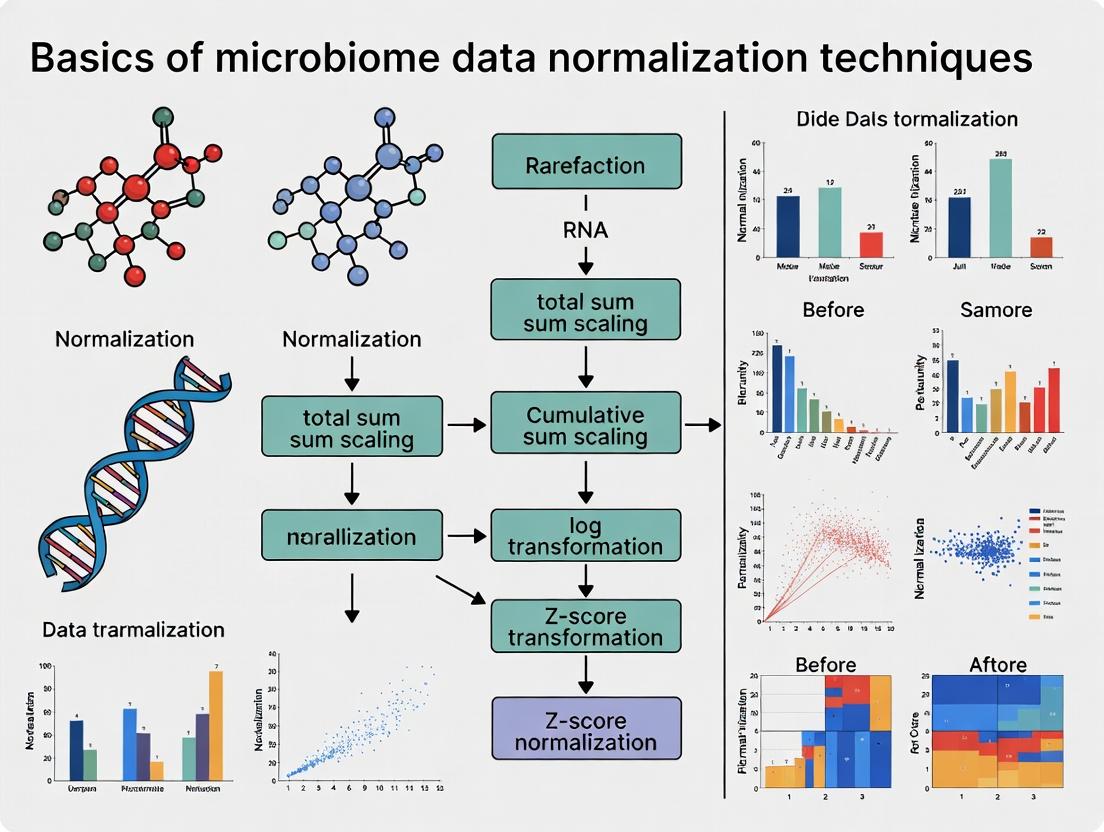

Diagram 1: Microbiome data analysis pathways highlighting the compositional choice.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for Compositional Data Research

| Item | Function & Relevance |

|---|---|

| ZymoBIOMICS Microbial Community Standard | Defined mock community with known composition. Validates bioinformatic pipelines and highlights the difference between relative and absolute abundance. |

| External Spike-in Controls (e.g., SIRV, ERCC RNA) | Synthetic DNA/RNA sequences spiked into samples pre-extraction. Used to model technical variation and, when used at known concentrations, estimate absolute feature counts. |

| Internal Positive Control (IPC) DNA | Exogenous, non-microbial DNA (e.g., from Arabidopsis thaliana) added at a fixed concentration to all samples post-extraction. Monitors PCR amplification efficiency but cannot correct for extraction bias. |

| KAPA HyperPlus Kit | A consistent, high-performance library preparation kit. Reduces technical batch effects that would otherwise be confounded with compositional data analysis. |

| QIIME 2 (w/ q2-composition plugin) | Bioinformatic platform providing compositional tools like Aitchison distance, ANCOM, and robust Aitchison PCA. |

| R package compositions or robCompositions | Statistical suites for performing log-ratio transformations, dealing with zeros, and visualization within the compositional data framework. |

Moving Beyond Relative Abundance: Core Methodologies

The field has developed several key methods to account for compositionality.

A. Log-Ratio Transformations: Aitchison Geometry

The core solution is to transform data from the simplex to real Euclidean space using log-ratios.

- Additive Log-Ratio (ALR): Log-transform ratios of taxa against a chosen reference taxon. ALR(x_i) = log(x_i / x_ref). Simple but reference-dependent.

- Centered Log-Ratio (CLR): Log-transform ratios of taxa against the geometric mean of all taxa. CLR(x_i) = log(x_i / G(x)). Symmetric, but the CLR values of a sample sum to zero, so the covariance matrix is singular.

- Isometric Log-Ratio (ILR): Constructs orthonormal balances from a sequential binary partition of the taxa (for example, one guided by a phylogenetic tree), creating interpretable coordinates.
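
For reference, the three transformations can be written out as follows. This uses the standard Aitchison definitions with the notation above (D taxa, geometric mean G(x)); the symbols r, s, x_+ and x_- for an ILR balance are introduced here for illustration only.

```latex
\begin{aligned}
\mathrm{ALR}(x_i) &= \ln\frac{x_i}{x_{\mathrm{ref}}}, \qquad i \neq \mathrm{ref} \\[4pt]
\mathrm{CLR}(x_i) &= \ln\frac{x_i}{G(x)}, \qquad
G(x) = \Bigl(\prod_{k=1}^{D} x_k\Bigr)^{1/D}, \qquad
\sum_{i=1}^{D} \mathrm{CLR}(x_i) = 0 \\[4pt]
b &= \sqrt{\frac{r\,s}{r+s}}\;\ln\frac{G(x_{+})}{G(x_{-})}
\end{aligned}
```

Here b is a single ILR balance between a group of r taxa (x_+) and a group of s taxa (x_-). The zero-sum property in the second line is the reason the CLR covariance matrix is singular.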

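B. Differential Abundance Testing: Compositionally-Aware Tools

Standard tests (e.g., a t-test on relative abundances, or DESeq2 applied without regard to compositionality) can produce inflated false-positive rates on compositional data. Specialized tools are therefore recommended.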

Protocol: Analysis of Compositions of Microbiomes (ANCOM)

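- Zero Handling: Add a small pseudocount to the counts so that every log-ratio is defined. ANCOM works on pairwise log-ratios directly, so a separate CLR transformation is not required.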

- Pairwise Log-Ratio Testing: For each taxon, compute all pairwise log-ratios with every other taxon (e.g., log(Taxon A / Taxon B)).

- Wilcoxon Rank Test: For a given taxon, apply a non-parametric test (e.g., Wilcoxon) to each of its pairwise log-ratios across sample groups (e.g., Healthy vs. Disease).

- W Statistic: For each taxon, count the number of pairwise tests where it was significantly different (p < α). This count is the W statistic.

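- Hypothesis Testing: A high W statistic means the taxon's log-ratios against most other taxa differ consistently between groups, suggesting true differential abundance. In practice, p-values are corrected for multiple testing and the cutoff on W is chosen from its empirical distribution.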

C. Incorporating Scale (Absolute Quantification)

The ultimate solution is to measure absolute microbial loads.

- Flow Cytometry: Direct cell counting of microbial particles in a sample.

- Quantitative PCR (qPCR): Targeting a universal gene (e.g., 16S rRNA gene) with a standard curve.

- Spike-in-Based Normalization: Adding a known quantity of synthetic or foreign DNA prior to DNA extraction to estimate total microbial load per sample.
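
Both routes to absolute abundance reduce to simple arithmetic, sketched here under simplifying assumptions: the total load measurement and the spike-in recovery are treated as unbiased, and 16S copy-number differences between taxa are ignored. Function names and all numbers are hypothetical.

```python
def absolute_from_total_load(counts, total_load):
    """Scale a sample's proportions by an independently measured total load
    (e.g., cells per gram from flow cytometry, or 16S copies from qPCR)."""
    depth = sum(counts)
    return [c / depth * total_load for c in counts]

def absolute_from_spike_in(counts, spike_reads, spike_copies_added):
    """Convert reads to copies using the copies-per-read rate of the spike-in.
    `counts` must not include the spike-in's own reads."""
    copies_per_read = spike_copies_added / spike_reads
    return [c * copies_per_read for c in counts]

# Two hypothetical samples with identical proportions but a 10-fold load difference.
reads = [300, 500, 200]
sample_high = absolute_from_total_load(reads, total_load=1e9)
sample_low = absolute_from_total_load(reads, total_load=1e8)

# The same reads, with 1e6 spike-in copies recovered as 100 reads.
spiked = absolute_from_spike_in(reads, spike_reads=100, spike_copies_added=1e6)

print(sample_high)
print(sample_low)
print(spiked)
```

The two samples are indistinguishable as relative abundances, yet every taxon differs tenfold in absolute terms once the load is taken into account.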

Diagram 2: Decision tree for choosing a microbiome data normalization or analysis method.

Within the foundational research on microbiome data normalization techniques, a critical first step is the identification and characterization of key sources of bias. Accurate interpretation of microbial community profiles from high-throughput sequencing data (e.g., 16S rRNA gene amplicon or shotgun metagenomic sequencing) is fundamentally confounded by multiple technical artifacts. These biases distort the true biological signal, making comparative analyses invalid if not properly accounted for. This guide details the primary sources of bias, from initial sample collection to final sequencing output, providing a framework for researchers and drug development professionals to critically assess their data.

Library Size Variation (Sampling Depth Heterogeneity)

The most conspicuous bias is the variation in the total number of sequences obtained per sample, known as library size or sequencing depth. This variation is non-biological, arising from technical steps in library preparation and sequencing. Comparing raw counts across samples with different library sizes directly leads to spurious conclusions, as a sample with deeper sequencing will artificially appear to have higher species richness and abundance.

Table 1: Impact of Variable Library Size on Apparent Diversity

| Sample ID | Total Reads (Library Size) | Observed ASVs | Shannon Index (Raw) |

|---|---|---|---|

| Sample_A | 15,000 | 150 | 3.8 |

| Sample_B | 45,000 | 220 | 4.5 |

| Normalized (Subsampled to 15k) | | | |

| Sample_A | 15,000 | 150 | 3.8 |

| Sample_B | 15,000 | 185 | 4.2 |

Technical Artifacts Across the Experimental Workflow

Bias is introduced at every stage of the experimental pipeline. The following diagram outlines the primary sources.

Diagram Title: Microbiome Workflow and Key Technical Bias Sources

- Sample Collection & Storage: Variation in stabilization methods and storage conditions can alter microbial composition.

- Nucleic Acid Extraction: A major source of bias. Differential lysis efficiency across bacterial taxa (e.g., Gram-positive vs. Gram-negative) and co-extraction of inhibitory compounds significantly skew abundance profiles.

- PCR Amplification (for 16S studies): Primer mismatches and variable amplification efficiency due to GC content or template concentration cause quantitative inaccuracies and can exclude certain taxa.

- Library Preparation: Index PCR can introduce duplicate reads and index hopping (misassignment of reads between samples).

- Sequencing: Platform-specific biases (e.g., Illumina's GC bias during cluster amplification), sequencing errors, and PhiX spike-in effects.

- Bioinformatics: Quality filtering, chimera removal, reference database choice for taxonomy assignment, and clustering algorithms all influence final results.

Contamination and Batch Effects

- Kit and Laboratory Contamination: Reagent microbiomes, especially in low-biomass samples, are a critical concern. Negative controls are essential.

- Batch Effects: Systematic technical differences between experimental runs (different extraction kits, personnel, sequencing lanes, or reagent lots) can be stronger than biological effects.

Experimental Protocols for Bias Assessment

Protocol for Evaluating Extraction Bias Using Mock Communities

Objective: Quantify the bias introduced by DNA extraction kits. Materials: See The Scientist's Toolkit below. Methodology:

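- Standardized Mock Community: Procure a commercially available whole-cell mock community with known, even abundances of 10-20 diverse bacterial strains. A genomic DNA mock community bypasses cell lysis and so cannot reveal extraction bias; it is useful as a control for the downstream steps only.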

- Multi-Kit Extraction: Aliquot the same physical mock community (lyophilized cells) or standardized cell pellet. Perform DNA extraction in triplicate using at least 3 different extraction kits/methods common in your field.

- Library Preparation & Sequencing: Process all extracts from step 2 simultaneously using the same 16S rRNA gene primers (e.g., V4 region) and library prep kit. Sequence on the same Illumina flow cell with balanced multiplexing.

- Bioinformatic Analysis: Process reads through a standardized pipeline (DADA2, QIIME 2). Assign taxonomy using a curated database.

- Quantification of Bias:

- Calculate the observed relative abundance for each strain.

- Compute the log2 fold-change between observed and expected abundance for each strain within each kit.

- Perform PERMANOVA to determine if the extraction kit explains a significant portion of the variance in composition.

Table 2: Representative Data from an Extraction Bias Experiment

| Bacterial Strain | Expected % | Kit A Observed % | Kit B Observed % | Log2FC (Kit A) | Log2FC (Kit B) |

|---|---|---|---|---|---|

| Pseudomonas aeruginosa | 10.0 | 15.2 | 8.5 | 0.60 | -0.23 |

| Staphylococcus aureus | 10.0 | 5.8 | 18.3 | -0.79 | 0.87 |

| Lactobacillus fermentum | 10.0 | 12.1 | 6.2 | 0.28 | -0.69 |
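
The log2 fold-change column is computed as log2(observed / expected). A short sketch using the percentages from the table above; the dictionary layout and function name are illustrative choices.

```python
import math

def log2_fold_change(observed_pct, expected_pct):
    """log2 ratio of observed to expected relative abundance."""
    return math.log2(observed_pct / expected_pct)

expected_pct = 10.0
observed_pct = {
    "Pseudomonas aeruginosa": {"Kit A": 15.2, "Kit B": 8.5},
    "Staphylococcus aureus": {"Kit A": 5.8, "Kit B": 18.3},
    "Lactobacillus fermentum": {"Kit A": 12.1, "Kit B": 6.2},
}

log2fc = {
    strain: {kit: log2_fold_change(pct, expected_pct) for kit, pct in kits.items()}
    for strain, kits in observed_pct.items()
}

for strain, kits in log2fc.items():
    print(strain, {kit: round(v, 2) for kit, v in kits.items()})
```

A value of 0 means the strain was recovered at its expected proportion; positive values indicate over-representation and negative values under-representation for that kit.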

Protocol for Monitoring Batch Effects

Objective: Detect and quantify the impact of batch processing. Methodology:

- Inter-Batch Controls: Include the same positive control sample (e.g., a well-characterized stool extract or mock community DNA) in every extraction batch and every sequencing run.

- Statistical Analysis: Perform Principal Coordinate Analysis (PCoA) on a beta-diversity metric (e.g., Bray-Curtis). Color samples by batch. Statistically test for batch association using PERMANOVA.

- Corrective Action: If the batch effect is significant (p < 0.05), apply batch correction methods (e.g., ComBat, limma's removeBatchEffect) with caution, or include batch as a covariate in downstream linear models.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Bias Assessment and Control

| Item | Function & Rationale |

|---|---|

| Mock Community, whole-cell or genomic DNA (e.g., ZymoBIOMICS Microbial Community Standard) | Provides a ground truth of known composition. The whole-cell format quantifies extraction bias; the genomic DNA format isolates amplification and sequencing bias. Essential for kit validation. |

| Process Controls (e.g., ZymoBIOMICS Spike-in Control II [Low Microbial Load]) | Added to samples to monitor extraction efficiency and detect inhibition across samples of varying biomass. |

| DNA Extraction Negative Control (e.g., nuclease-free water processed alongside samples) | Identifies contaminating DNA introduced from extraction kits and laboratory environment. Critical for low-biomass studies. |

| PCR Negative Control (Master mix + water used as template) | Detects contamination in PCR reagents and amplicon carryover. |

| PhiX Control v3 | Spiked into Illumina runs (1-5%) for improved base calling, cluster identification, and monitoring of lane performance. |

| Standardized Primer Sets (e.g., 515F/806R for 16S V4) | Using well-validated, peer-reviewed primer sets minimizes primer bias and improves cross-study comparability. |

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Reduces PCR errors and chimera formation during amplification, improving sequence fidelity. |

| Dual-Indexed Sequencing Adapters | Unique dual indexing (i7 and i5) minimizes index hopping (crosstalk) between samples on high-density Illumina flow cells. |

A rigorous understanding of bias sources—from profound library size variation to subtle technical artifacts introduced at each step—is the indispensable foundation for any research on microbiome data normalization. Effective normalization techniques aim to mitigate these biases, but their proper application requires knowing which bias is being addressed. The experimental protocols and controls outlined here provide a roadmap for researchers to audit their own pipelines, thereby producing more reliable and reproducible data for downstream analysis and therapeutic development.

Within the broader thesis on the basics of microbiome data normalization techniques, a foundational principle emerges: the primary objective of normalization is to enable biologically meaningful comparisons. This whitepaper delves into the core technical goal of normalization—removing non-biological variation to facilitate accurate within-sample (e.g., differential abundance across taxa in one sample) and between-sample (e.g., same taxon across different conditions) analyses. Without proper normalization, technical artifacts like varying sequencing depth and compositionality can dominate the signal, leading to spurious conclusions.

The Fundamental Challenge: Compositionality and Library Size

Microbiome data generated from high-throughput sequencing (e.g., 16S rRNA amplicon or shotgun metagenomics) is inherently compositional. The count of a given taxon is not independent; an increase in one taxon's proportion necessarily leads to a decrease in others. Furthermore, total read counts per sample (library size) are technical artifacts, representing an arbitrary sampling depth rather than a true measure of microbial load.

Table 1: Illustrative Example of Raw Count Data Demonstrating Compositionality

| Sample ID | Condition | Taxon A Count | Taxon B Count | Taxon C Count | Total Library Size |

|---|---|---|---|---|---|

| S1 | Control | 300 | 500 | 200 | 1000 |

| S2 | Diseased | 30 | 45 | 25 | 100 |

| S3 | Diseased | 900 | 1500 | 600 | 3000 |

From Table 1, a raw comparison suggests Taxon A is 3x more abundant in S3 than in S1 (900 vs. 300) and 30x more abundant than in S2 (900 vs. 30). However, its proportion is identical (30%) in all three samples. This exemplifies the need for normalization to separate biological change from technical variation.

Core Normalization Methodologies

This section outlines key normalization techniques, detailing their protocols and intended effects.

Total Sum Scaling (TSS) / Rarefaction

Goal: Control for unequal sequencing depth to enable within-sample proportion estimation and between-sample comparison of relative abundances.

- Protocol:

- For TSS: Divide each taxon count in a sample by the sample's total library size and multiply by a scaling factor (e.g., 1,000,000 for counts per million - CPM).

- For Rarefaction: Randomly subsample (without replacement) each sample's reads to a common, pre-defined depth (e.g., the minimum library size across the dataset).

- Use the subsampled counts for all downstream analyses.

- Limitation: TSS output is still compositional (proportions sum to a constant); rarefaction discards valid reads and adds random subsampling noise.
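
Rarefaction can be sketched in a few lines: expand the counts into individual reads, then draw without replacement. The function names, the fixed seed, and the example counts are assumptions for illustration.

```python
import random
from collections import Counter

def rarefy(counts, depth, seed=0):
    """Subsample `depth` reads without replacement from one sample."""
    if depth > sum(counts):
        raise ValueError("depth exceeds the sample's library size")
    rng = random.Random(seed)
    # One list entry per read, labelled with its taxon index.
    reads = [taxon for taxon, c in enumerate(counts) for _ in range(c)]
    sampled = Counter(rng.sample(reads, depth))
    return [sampled.get(taxon, 0) for taxon in range(len(counts))]

def observed_richness(counts):
    """Number of taxa with at least one read."""
    return sum(1 for c in counts if c > 0)

deep_sample = [6000, 3000, 900, 90, 9, 1]  # hypothetical, 10,000 reads
rarefied = rarefy(deep_sample, depth=1000)

print(rarefied, "richness:", observed_richness(rarefied))
```

Rare taxa may drop to zero after subsampling, which is how rarefaction removes the richness advantage of deeply sequenced samples and also why it loses information.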

Quantile-Based Scaling (e.g., CSS)

Goal: Derive a scaling factor from the lower, more stable part of each sample's count distribution, so that a few highly abundant taxa do not dominate the normalization.

- Protocol (Cumulative Sum Scaling - CSS):

- Within each sample, sort the nonzero counts in increasing order.

- Calculate the cumulative sum distribution for each sample.

- Identify the quantile up to which the samples' count distributions remain comparable (often via a data-driven approach).

- Divide all counts in a sample by its sample-specific scaling factor, the cumulative sum of counts up to that quantile.
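
A minimal sketch of the scaling step, under stated assumptions: counts are ordered within each sample, the quantile is fixed by hand at 0.5 rather than chosen by a data-driven procedure, a simple nearest-rank quantile is used, and the result is multiplied by a constant of 1000. This illustrates the idea only and is not the metagenomeSeq implementation.

```python
import math

def css_normalize(counts, quantile=0.5, scale=1000):
    """Cumulative-sum-scaling sketch for a single sample."""
    nonzero = sorted(c for c in counts if c > 0)
    if not nonzero:
        raise ValueError("sample has no nonzero counts")
    # Nearest-rank quantile of the nonzero counts.
    index = max(0, math.ceil(quantile * len(nonzero)) - 1)
    threshold = nonzero[index]
    # Scaling factor: sum of all counts at or below the threshold.
    scaling_factor = sum(c for c in counts if c <= threshold)
    return [c / scaling_factor * scale for c in counts]

# Hypothetical sample dominated by one taxon.
sample = [0, 2, 4, 6, 1000]
css = css_normalize(sample)
tss = [c / sum(sample) * 1000 for c in sample]

print("CSS:", [round(v, 1) for v in css])
print("TSS:", [round(v, 1) for v in tss])
```

The dominant taxon (1000 reads) lies above the threshold and so does not enter the scaling factor, whereas under total-sum scaling it accounts for almost the entire divisor.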

Additive Log-Ratio (ALR) / Centered Log-Ratio (CLR) Transformations

Goal: Move data from a constrained simplex space to real Euclidean space for standard statistical analysis.

- Protocol (CLR):

- For each sample, calculate the geometric mean of all taxon counts (often after adding a pseudocount of 1 to handle zeros).

- For each taxon in the sample, take the logarithm of the count divided by the geometric mean:

CLR(x_i) = log[ x_i / G(x) ].

- Protocol (ALR):

- Choose a reference taxon (e.g., a prevalent, abundant taxon).

- For each taxon in the sample, take the logarithm of the count divided by the count of the reference taxon:

ALR(x_i) = log[ x_i / x_ref ].

- Consideration: CLR is symmetric but requires special handling of zeros; ALR creates a reference dependency.
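
Both protocols can be sketched in a few lines. As an assumption for this example, a pseudocount of 1 is added to every count (not only to zeros) before taking logs; other zero-handling strategies would change the values.

```python
import math

def clr(counts, pseudocount=1.0):
    """Centered log-ratio: log of each count over the sample's geometric mean."""
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean
    return [value - mean_log for value in logs]

def alr(counts, ref_index, pseudocount=1.0):
    """Additive log-ratio against a reference taxon (reference is dropped)."""
    logs = [math.log(c + pseudocount) for c in counts]
    return [value - logs[ref_index] for i, value in enumerate(logs) if i != ref_index]

sample = [9, 99, 0]  # hypothetical counts for three taxa
clr_values = clr(sample)
alr_values = alr(sample, ref_index=0)

print("CLR:", [round(v, 3) for v in clr_values])
print("ALR:", [round(v, 3) for v in alr_values])
```

CLR returns one value per taxon and the values sum to zero; ALR returns one value fewer, because the reference taxon's own ratio is always zero and is dropped.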

Experimental Protocol for Benchmarking Normalizations

A standard methodology to evaluate normalization efficacy:

- Dataset Creation: Use a synthetic (in-silico) community with known absolute abundances or a spiked-in control experiment (e.g., adding known quantities of external DNA).

- Introduce Variation: Simulate or experimentally generate samples with varying library sizes and compositionality.

- Apply Normalization: Process the raw count data through each target normalization method (TSS, CSS, CLR, etc.).

- Downstream Analysis: Perform a standard analytical task (e.g., differential abundance testing using DESeq2, LEfSe, or ANCOM-BC; beta-diversity calculation).

- Evaluation Metrics: Quantify performance by:

- False Positive Rate (FPR): Ability to avoid detecting differences where none exist.

- True Positive Rate (TPR/Sensitivity): Ability to recover known true differences.

- Replicate vs. Group Distances: After normalization, distances between technical replicates should be small, while distances between biologically distinct groups should remain large.
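
When the truly differential taxa are known, as in a simulated or spiked-in benchmark, the first two metrics reduce to set arithmetic. A sketch with hypothetical taxon labels:

```python
def tpr_fpr(truly_different, called_different, all_taxa):
    """True and false positive rates for one differential abundance result."""
    truth = set(truly_different)
    called = set(called_different)
    universe = set(all_taxa)
    true_positives = len(called & truth)
    false_positives = len(called - truth)
    n_positive = len(truth)
    n_negative = len(universe - truth)
    tpr = true_positives / n_positive if n_positive else float("nan")
    fpr = false_positives / n_negative if n_negative else float("nan")
    return tpr, fpr

all_taxa = [f"taxon_{i}" for i in range(1, 11)]
truly_different = ["taxon_1", "taxon_2", "taxon_3", "taxon_4"]
called_different = ["taxon_1", "taxon_2", "taxon_3", "taxon_7"]

tpr, fpr = tpr_fpr(truly_different, called_different, all_taxa)
print(f"TPR = {tpr:.2f}, FPR = {fpr:.2f}")
```

Running the same calculation for each normalization method on the same benchmark data gives directly comparable TPR and FPR values.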

Table 2: Comparative Summary of Core Normalization Techniques

| Method | Primary Goal | Handles Compositionality? | Preserves Zeros? | Key Assumption/Limitation |

|---|---|---|---|---|

| TSS/Proportions | Within-sample relative abundance | No | Yes (zeros remain zero) | All reads are equally important; heavily influenced by dominant taxa. |

| Rarefaction | Between-sample comparison at even depth | No (equalizes depth only) | Yes (subsampling can also create new zeros) | Discards data; choice of depth is critical. |

| CSS (metagenomeSeq) | Robust between-sample scaling | Partially (via scaling) | Yes | Assumes a subset of taxa are stable across samples. |

| CLR Transformation | Move to Euclidean space for multivariate stats | Yes (theoretically) | No (requires pseudocount) | Sensitive to zeros; geometric mean can be unstable. |

| ALR Transformation | Differential abundance relative to a reference | Yes | No (for taxon/ref pair) | Results are interpreted relative to the chosen reference taxon. |

Visualization of Concepts and Workflows

Diagram 1: Normalization Method Selection Workflow

Diagram 2: The Compositionality Constraint Illustrated

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Key Research Reagent Solutions for Controlled Normalization Studies

| Item | Function in Normalization Research | Example/Provider |

|---|---|---|

| Mock Microbial Community (DNA) | Provides a known composition and abundance standard to benchmark normalization methods and assess technical variation. | ZymoBIOMICS Microbial Community Standards, ATCC Mock Microbiome Standards. |

| External Spike-in Controls | Non-biological synthetic DNA sequences or organisms not found in the target samples, added in known quantities to differentiate technical from biological effects and estimate absolute abundance. | Spike-in PCR products (e.g., from alien oligonucleotide sets), Sequins (Synthetic Sequencing Spike-in Controls). |

| DNA Extraction Kits with Bead Beating | Standardizes the initial lysis step, a major source of bias. Inefficient extraction skews observed proportions, impacting all downstream normalization. | MP Biomedicals FastDNA Spin Kit, Qiagen DNeasy PowerSoil Pro Kit, ZymoBIOMICS DNA Miniprep Kit. |

| Quantitative PCR (qPCR) Reagents | To measure absolute abundance of total 16S rRNA genes or specific taxa, providing a "gold standard" against which relative, normalized data can be calibrated. | SYBR Green or TaqMan master mixes, primers for universal 16S rRNA gene or taxonomic targets. |

| High-Fidelity Polymerase & PCR Clean-up Kits | Minimizes amplification bias during library preparation, reducing one source of non-biological variation that normalization must later correct. | KAPA HiFi HotStart ReadyMix, Q5 High-Fidelity DNA Polymerase, AMPure XP beads. |

In microbiome research, raw sequencing data (e.g., 16S rRNA or shotgun metagenomics) is compositional. Without appropriate normalization, relative abundance data can lead to false correlations and erroneous conclusions regarding microbial diversity, differential abundance, and host-microbiome interactions. This guide details the technical pitfalls of unnormalized data and provides methodologies for robust analysis within the broader thesis on microbiome data normalization basics.

The Core Problem: Compositionality and Spurious Correlation

Microbiome count data is constrained by the total number of sequences obtained per sample (library size). This compositionality means an increase in one taxon's relative abundance necessitates an apparent decrease in others, inducing negative correlations independent of any biological reality.

Table 1: Example of Spurious Effects from Raw Counts

| Sample ID | Total Reads | Taxon A (Count) | Taxon B (Count) | Rel. Abundance A | Rel. Abundance B | Erroneous Inference |

|---|---|---|---|---|---|---|

| S1 | 10,000 | 1,000 | 2,000 | 10.0% | 20.0% | Baseline |

| S2 | 5,000 | 1,000 | 1,000 | 20.0% | 20.0% | Taxon A "increases" |

| S3 | 20,000 | 1,000 | 4,000 | 5.0% | 20.0% | Taxon A "decreases" |

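Note: Taxon A's raw count is the same (1,000) in all three samples, yet its relative abundance ranges from 5% to 20% because library size and the counts of the other taxa differ. The apparent "increase" and "decrease" in Taxon A are artifacts of closure, not evidence of biological change.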

Key Normalization Techniques: Protocols and Applications

Total Sum Scaling (TSS) / Cumulative Sum Scaling (CSS)

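Protocol: For TSS, divide the count of each feature in a sample by the total number of counts for that sample. For CSS, divide instead by the cumulative sum of counts up to a chosen percentile of the sample's count distribution. Limitation: TSS is highly sensitive to outliers and differentially abundant features; CSS reduces this sensitivity but depends on the percentile chosen.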

Median-of-Ratios (DESeq2) Normalization

Detailed Experimental Protocol:

- Input: Raw count matrix with features (e.g., OTUs, ASVs, genes) as rows and samples as columns.

- Geometric Mean Calculation: For each feature, compute the geometric mean of counts across all samples.

- Ratio Calculation: For each sample, calculate the ratio of each feature's count to its geometric mean.

- Size Factor Derivation: The median of these ratios for each sample is the sample-specific size factor (SF). Features with a zero count in any sample have a geometric mean of zero and are excluded from the median.

- Normalization: Divide each feature count in a sample by its sample's SF. Formula: Normalized_Count_ij = Raw_Count_ij / SF_j
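
The steps above can be sketched as follows. This is an illustration of the median-of-ratios idea in plain Python, not DESeq2's own code; the matrix layout (features as rows, samples as columns) follows the protocol, and the counts are hypothetical.

```python
import math
import statistics

def size_factors(matrix):
    """Median-of-ratios size factors for a features x samples count matrix."""
    n_samples = len(matrix[0])
    # Keep only features with no zeros, so the geometric mean is nonzero.
    usable = [row for row in matrix if all(c > 0 for c in row)]
    log_geo_means = [sum(math.log(c) for c in row) / n_samples for row in usable]
    factors = []
    for j in range(n_samples):
        log_ratios = [math.log(row[j]) - lgm for row, lgm in zip(usable, log_geo_means)]
        factors.append(math.exp(statistics.median(log_ratios)))
    return factors

def normalize(matrix, factors):
    """Divide each count by its sample's size factor."""
    return [[c / factors[j] for j, c in enumerate(row)] for row in matrix]

# Hypothetical counts: sample 2 was sequenced twice as deeply as sample 1.
counts = [
    [10, 20],
    [100, 200],
    [50, 100],
    [0, 5],  # contains a zero, so it is excluded from size-factor estimation
]
sf = size_factors(counts)
normalized = normalize(counts, sf)

print("size factors:", [round(f, 4) for f in sf])
print("normalized:", [[round(v, 2) for v in row] for row in normalized])
```

After normalization, the first three features have equal values in both samples, reflecting that their only difference was sequencing depth.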

Centered Log-Ratio (CLR) Transformation

Detailed Protocol:

- Preprocessing: Replace zero counts using a pseudo-count (e.g., 1) or a more sophisticated model.

- Geometric Mean: For each sample j, calculate the geometric mean G(x_j) of all feature counts.

- Log-Ratio: Transform each feature i in sample j: CLR(x_ij) = log [ x_ij / G(x_j) ]. Advantage: Moves data to Euclidean space, suitable for many multivariate stats.

Rarefaction (Subsampling)

Protocol:

- Determine the minimum acceptable sequencing depth (N_min) across all samples.

- For each sample, randomly subsample (without replacement) N_min sequencing reads from the total read pool.

- Discard reads beyond N_min for analysis. Note: This method discards data and is statistically controversial but historically used.

Table 2: Comparison of Common Normalization Methods

| Method | Principle | Handles Zeros? | Addresses Compositionality? | Best For |

|---|---|---|---|---|

| Total Sum Scaling (TSS) | Proportional Scaling | No | No | Initial exploratory analysis |

| CSS (metagenomeSeq) | Scales to stable cumulative sum | Moderate (via pre-processing) | Partially | Differential abundance (DA) with sparse features |

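| Median-of-Ratios (DESeq2) | Median ratio to a per-feature geometric-mean reference | No (features with zeros are excluded from size-factor estimation) | Partially (assumes most features are not differentially abundant) | DA testing for RNA-seq, shotgun data |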

| CLR (ALDEx2, etc.) | Log-ratio to geometric mean | Requires pseudo-count | Yes | Multivariate analysis, correlation |

| Rarefaction | Even-depth subsampling | No (subsampling can introduce additional zeros) | No, but equalizes depth | Alpha diversity comparisons (with caution) |

Experimental Case Study: Differential Abundance Analysis

Workflow: From Sequencing to Conclusion

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Microbiome Normalization Research |

|---|---|

| Mock Microbial Communities | Defined mixtures of known microbial strains (e.g., ZymoBIOMICS). Serve as positive controls to benchmark normalization methods and bioinformatics pipelines. |

| External Spike-in Controls | Known quantities of non-biological (synthetic oligonucleotides) or foreign biological sequences. Added pre-extraction to correct for technical variation and enable absolute abundance estimation. |

| Standardized DNA Extraction Kits | (e.g., MOBIO PowerSoil, MagAttract) Minimize bias in lysis efficiency across taxa, reducing a major source of pre-sequencing variation that normalization must address. |

| qPCR Reagents | For 16S rRNA gene or specific marker gene quantification. Used to measure total bacterial load, providing a scaling factor for moving from relative to absolute abundance. |

| Bioinformatics Software Packages | DESeq2, metagenomeSeq (fitZIG), ALDEx2, edgeR. Implement statistical models that incorporate normalization internally or require pre-normalized data for differential abundance testing. |

| Reference Databases | (e.g., Greengenes, SILVA, GTDB) Essential for taxonomic assignment. Consistency in annotation affects feature aggregation prior to normalization. |

Recommendations and Best Practices

- Never use raw relative abundances for correlation or differential abundance testing.

- Choose a normalization method appropriate for your biological question and data type (see Table 2).

- Incorporate spike-in controls or total microbial load measurements (e.g., via qPCR) when absolute abundance is relevant.

- Use statistical methods designed for compositional data (e.g., ANCOM-BC, ALDEx2), and apply count-based tools such as DESeq2 with care.

- Report the normalization method used as a critical part of the methodology.

A Practical Guide to Common Microbiome Normalization Methods

Within the systematic investigation of microbiome data normalization techniques, Total Sum Scaling (TSS) represents a foundational and widely used approach. This guide provides a technical deconstruction of TSS, contextualizing its role in preparing microbial count data for downstream analysis.

Core Concept and Methodology

Total Sum Scaling, also called proportional normalization, converts raw count data into relative abundances. It is sometimes confused with rarefaction, which instead subsamples reads to an even depth. The operation is mathematically straightforward: each count in a sample is divided by the total number of counts (sequencing depth) for that sample, then multiplied by a scaling factor (e.g., 1,000,000 for counts per million).

Experimental Protocol for Applying TSS:

- Input: Obtain a microbial feature (e.g., OTU, ASV) by sample count matrix C, where C_ij is the count of feature i in sample j.

- Calculate Library Size: For each sample j, compute the total library size N_j = Σ_i C_ij.

- Scale: Divide each feature count by its sample's library size and multiply by a constant scaling factor K (e.g., K = 10^6): TSS_ij = (C_ij / N_j) * K.

- Output: A matrix of relative abundances, where the sum of all features in each sample is equal to K.
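
The protocol translates almost line for line into code. The sketch below uses plain Python lists with features as rows and samples as columns; the function names and the counts are illustrative.

```python
def library_sizes(C):
    """N_j: total count of each sample (column) in a features x samples matrix."""
    n_samples = len(C[0])
    return [sum(row[j] for row in C) for j in range(n_samples)]

def total_sum_scaling(C, K=1_000_000):
    """TSS_ij = (C_ij / N_j) * K."""
    N = library_sizes(C)
    return [[row[j] / N[j] * K for j in range(len(N))] for row in C]

# Hypothetical counts for 3 features (rows) in 3 samples (columns).
C = [
    [300, 30, 900],
    [500, 45, 1500],
    [200, 25, 600],
]
tss_matrix = total_sum_scaling(C)
column_sums = library_sizes(tss_matrix)

for row in tss_matrix:
    print([round(v) for v in row])
print("column sums:", [round(s) for s in column_sums])
```

Every column of the output sums to K regardless of the original library size, which is exactly the closure property that makes the result compositional.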

Diagram Title: TSS Normalization Workflow

Quantitative Comparison of Normalization Techniques

The following table summarizes TSS against other common normalization methods within microbiome research, based on current literature.

Table 1: Comparison of Microbiome Normalization Techniques

| Method | Core Principle | Handles Compositionality? | Mitigates Library Size Effect? | Key Limitation | Typical Use Case |

|---|---|---|---|---|---|

| Total Sum Scaling (TSS) | Convert to proportions | No | Yes | Sensitive to high-abundance features; spurious correlations | Exploratory analysis, initial visualization |

| Rarefaction (Subsampling) | Random subsample to even depth | No | Yes (by force) | Discards valid data; increases variance | Pre-processing for beta-diversity metrics (historical) |

| Cumulative Sum Scaling (CSS) | Scale by a percentile of counts | Partially | Yes | Choice of percentile is data-sensitive | Pre-processing for metagenomic seq. (e.g., with metagenomeSeq) |

| Centered Log-Ratio (CLR) | Log-transform after geometric mean divisor | Yes, explicitly | Yes | Requires zero imputation (e.g., with a pseudo-count) | Most multivariate stats, differential abundance (e.g., ALDEx2) |

| Wrench | Scale factors based on feature characteristics | Yes | Yes | Model-dependent; can be complex | Differential abundance in structured experiments |

Limitations and Technical Artifacts

TSS's simplicity introduces critical limitations that researchers must acknowledge:

- Compositional Constraint: The fixed sum introduces a negative correlation between features, leading to spurious results in correlation analysis.

- Differential Sensitivity: Changes in the abundance of one feature artificially alter the relative proportions of all others.

- Bias from Dominant Taxa: A single highly abundant feature can drastically suppress the scaled values of all other features.

- Loss of Information: Absolute abundance data is irretrievably lost.
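
A small worked example makes the compositional constraint concrete. The numbers below are hypothetical absolute abundances for three taxa; only taxon A changes between conditions, yet the proportions of B and C fall.

```python
def proportions(abs_counts):
    """Convert absolute abundances to relative abundances that sum to 1."""
    total = sum(abs_counts)
    return [c / total for c in abs_counts]


# Hypothetical absolute abundances for taxa A, B, C in two conditions
before = [100, 100, 200]
after = [500, 100, 200]   # only taxon A increased; B and C are unchanged

p_before = proportions(before)   # A, B, C = 0.25, 0.25, 0.50
p_after = proportions(after)     # A, B, C = 0.625, 0.125, 0.25
```

Taxa B and C appear to halve in relative abundance although their absolute abundance did not change, which is the artifact that correlation and differential abundance analyses on TSS output can mistake for biology.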

The following diagram illustrates the spurious correlation problem inherent to compositional data like TSS outputs.

Diagram Title: Spurious Correlation Induced by TSS

Table 2: Key Research Reagent Solutions for Microbiome Normalization

| Item / Tool | Function in Analysis | Example or Note |

|---|---|---|

| QIIME 2 / dada2 | Pipeline for generating raw ASV/OTU count tables from sequence data. | Provides the foundational count matrix for normalization. |

| R Programming Environment | Primary platform for statistical analysis and applying normalization methods. | Essential for executing specialized packages. |

| phyloseq (R Package) | Data structure and tools for handling microbiome count data and applying TSS. | transform_sample_counts() function easily performs TSS. |

| ANCOM-BC / ALDEx2 / DESeq2 | Packages for robust differential abundance testing that model or bypass compositionality. | Often used instead of or after careful normalization. |

| ZymoBIOMICS Microbial Standards | Defined mock microbial communities used to validate sequencing and bioinformatic pipelines. | Critical for benchmarking normalization performance. |

| Pseudo-Count Additives | Small value added to all counts to handle zeros before log-transformation (e.g., for CLR). | Typically 1 or a fraction determined by method. |

When to Use TSS: Practical Guidelines

TSS remains appropriate in specific contexts within a research workflow:

- Initial Exploratory Analysis: For generating quick bar plots of taxonomic profiles or initial PCA visualizations.

- Input for Certain Methods: Required for tools whose algorithms are explicitly designed for proportional data (e.g., some legacy diversity metrics).

- Communicating Results: Expressing findings as relative percentages is intuitive for a broad audience.

Decision Protocol:

Diagram Title: Normalization Method Decision Tree

Total Sum Scaling is a double-edged sword: its simplicity ensures computational efficiency and interpretability, making it a useful tool for initial data exploration and visualization within the broader study of normalization techniques. However, its inherent compositional nature severely limits its utility for most statistical inferences, including correlation and differential abundance testing. The informed researcher should treat TSS as a specific initial step in a toolkit, transitioning to more sophisticated, compositionally-aware methods for hypothesis-driven analysis. The choice of normalization must be a deliberate, hypothesis-aware decision recorded as a critical component of the analytical workflow.

In the study of microbial communities via high-throughput sequencing, normalization is a critical preprocessing step to address compositional bias and uneven sequencing depth. Among the various techniques, rarefaction is a contentious yet foundational method. This guide examines rarefaction as a subsampling approach for estimating diversity, situating it within the broader thesis on the basics of microbiome data normalization techniques. Its application and debate are pivotal for researchers, scientists, and drug development professionals who require robust, interpretable data for downstream analysis.

Core Concept and Quantitative Comparison

Rarefaction involves randomly subsampling sequences from each sample without replacement to a standardized sequencing depth (library size). This aims to mitigate the influence of varying library sizes on alpha and beta diversity metrics.

Table 1: Quantitative Comparison of Normalization Techniques in Microbiome Analysis

| Technique | Core Principle | Key Metric Affected (e.g., Alpha Diversity) | Data Lost? | Handles Zero-Inflation? | Suitability for Differential Abundance |

|---|---|---|---|---|---|

| Rarefaction | Random subsampling to even depth | Observed OTUs/ASVs, Shannon (subsampled) | Yes, discards reads | No | Poor; statistical power reduced |

| Total Sum Scaling (TSS) | Proportional transformation (relative abundance) | All metrics on relative scale | No | No | Moderate; compositional bias remains |

| CSS (Cumulative Sum Scaling) | Scales by a percentile of count distribution | All metrics on scaled counts | No | Better than TSS | Good (used in metagenomeSeq) |

| DESeq2's Median of Ratios | Size factors from the median ratio of each count to its feature's geometric mean | Not directly for diversity | No | Yes, via modeling | Excellent for gene expression, adapted for microbiome |

| ANCOM-BC | Bias correction for compositional effects | -- | No | Yes, via modeling | Excellent for log-ratio differential abundance |

| GMPR / Wrench | Addresses compositionality and zero-inflation | -- | No | Yes | Good for case-control studies |

Table 2: Impact of Rarefaction Depth on Data Retention (Hypothetical Dataset)

| Initial Median Library Size | Chosen Rarefaction Depth | % of Samples Retained* | % of Total Sequences Retained | Avg. Loss of OTUs per Sample |

|---|---|---|---|---|

| 50,000 reads | 40,000 reads | 95% | ~80% | 8-12% |

| 50,000 reads | 10,000 reads | 100% | ~20% | 35-45% |

*Samples with library size below the threshold are discarded.

Detailed Experimental Protocol for Rarefaction

Protocol: Performing Rarefaction for Alpha Diversity Analysis in 16S rRNA Amplicon Data

Objective: To calculate comparable alpha diversity metrics across samples by subsampling to an even sequencing depth.

Materials & Software:

- Input Data: Amplicon Sequence Variant (ASV) or Operational Taxonomic Unit (OTU) table (samples x features, raw counts).

- Software: QIIME 2 (via qiime diversity core-metrics-phylogenetic), R (vegan package, rrarefy function), or mothur.

Procedure:

- Data Preprocessing: Start with a denoised, chimera-checked, and taxonomically classified feature table. Remove mitochondrial and chloroplast sequences if relevant.

- Determine Rarefaction Depth:

- Generate a table of per-sample sequencing depths.

- Plot alpha diversity (e.g., observed features) against sequencing depth using a rarefaction curve.

- Rule of Thumb: Choose a depth where curves approach an asymptote for most samples. A common heuristic is to use the minimum library size among the majority of samples, balancing data retention and even sampling. For example, if 95% of samples have >20,000 reads, 20,000 may be chosen.

- Execute Rarefaction:

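- In QIIME 2: run qiime diversity core-metrics-phylogenetic, passing the chosen depth as --p-sampling-depth.

- In R: apply the rrarefy function from the vegan package, passing the chosen depth as the sample argument.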

- Downstream Analysis: Use the rarefied table for calculating alpha diversity indices (Observed, Shannon, Simpson) and beta diversity metrics (e.g., unweighted UniFrac, Bray-Curtis). Caution: Do not use the rarefied table for differential abundance testing (e.g., DESeq2), as the stochastic subsampling invalidates variance assumptions.
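
To show what the subsampling step does, here is a minimal sketch in plain Python. It is not the QIIME 2 or vegan implementation: the function name rarefy_sample, the toy samples, and the depth of 100 are hypothetical. Expanding every read into a list is only practical for small examples.

```python
import random


def rarefy_sample(counts, depth, rng):
    """Subsample one sample's counts to `depth` reads without replacement."""
    if sum(counts) < depth:
        return None  # below the threshold: the sample would be discarded
    # Expand to one entry per read, labelled by feature index
    reads = [i for i, c in enumerate(counts) for _ in range(c)]
    picked = rng.sample(reads, depth)  # sampling without replacement
    rarefied = [0] * len(counts)
    for i in picked:
        rarefied[i] += 1
    return rarefied


rng = random.Random(42)  # fixed seed so repeated runs give the same table
samples = {
    "S1": [50, 30, 20, 0],     # library size 100
    "S2": [400, 100, 0, 500],  # library size 1000
    "S3": [5, 5, 0, 0],        # library size 10, below the chosen depth
}
depth = 100
rarefied = {name: rarefy_sample(c, depth, rng) for name, c in samples.items()}
```

S1 is already at the target depth and is returned unchanged, S2 is reduced to 100 reads, and S3 is dropped. Seeding the generator addresses the run-to-run variability that comes with random subsampling.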

Visualizing the Role of Rarefaction in Workflow

Diagram 1: Rarefaction Decision in Microbiome Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Tools for 16S rRNA Studies Involving Rarefaction

| Item | Function in Context of Rarefaction | Example/Supplier |

|---|---|---|

| High-Fidelity DNA Polymerase | Critical for accurate PCR amplification of the 16S target region with minimal bias, forming the initial count library. | Q5 Hot Start (NEB), KAPA HiFi |

| Indexed PCR Primers | Allows multiplexing of samples. Inconsistent PCR efficiency can bias initial library sizes, impacting rarefaction depth choice. | Illumina Nextera XT, 16S V4 primers (515F/806R) |

| Quantitation Kit (dsDNA) | Accurate library quantification ensures balanced pooling. Uneven pooling directly causes variable sequencing depth. | Qubit dsDNA HS Assay (Thermo Fisher) |

| Mock Microbial Community (Control) | Validates the entire workflow. Rarefaction curves of mock communities should saturate, confirming sufficient sequencing depth. | ZymoBIOMICS Microbial Community Standard |

| Negative Extraction Control | Identifies background contamination. Low-count control samples may be discarded during rarefaction, highlighting the need for this step. | Nuclease-free water processed alongside samples |

| Bioinformatics Pipeline | Software that performs the subsampling algorithm and generates rarefaction curves. | QIIME 2, mothur, USEARCH |

| Statistical Software | For implementing rarefaction and analyzing resulting diversity metrics. | R (vegan, phyloseq), Python (scikit-bio) |

The Central Debate: A Structured Analysis

Pros:

- Intuitive Simplicity: Easy to understand and implement.

- Mitigates Library Size Effect: Directly removes the confounding factor of uneven sequencing effort for diversity comparisons.

- Community Standard: Historically prevalent, facilitating comparison with past studies.

- No Distributional Assumptions: Non-parametric, unlike many model-based approaches.

Cons:

- Data Discard: Throws away valid, often expensive, sequence data, reducing statistical power.

- Stochasticity: Results vary slightly with each subsampling run (can be mitigated with seeding).

- Sample Loss: Samples with library sizes below the chosen threshold must be excluded entirely.

- Inappropriate for Differential Abundance: Subsampling discards reads and adds random noise, which distorts the count-variance relationship that models such as DESeq2 or edgeR rely on.

- Arbitrary Depth Choice: The selection of the subsampling depth is often subjective and can influence results.

Within the landscape of microbiome normalization techniques, rarefaction serves a specific, debated purpose. It remains a defensible, if not optimal, method for standardizing data specifically for ecological diversity metrics (alpha and beta diversity). However, for research questions centered on differential abundance testing, modern, model-based normalization methods (e.g., DESeq2, ANCOM-BC, or robust CSS) that use the full data and account for compositionality are strongly recommended. The choice should be dictated by the biological question, with an awareness that rarefaction is a tool for comparability, not a comprehensive normalization solution.

Within the broader thesis on the Basics of Microbiome Data Normalization Techniques Research, a central challenge is addressing data compositionality. Microbiome sequencing data, such as 16S rRNA gene amplicon or shotgun metagenomic counts, are inherently relative. A change in the abundance of one taxon alters the perceived proportions of all others, complicating differential abundance analysis. This whitepaper provides an in-depth technical comparison of two seminal normalization approaches designed to mitigate compositional effects: Cumulative Sum Scaling (CSS) from metagenomeSeq and DESeq2's Median of Ratios method.

Core Concepts and Mathematical Foundations

The Compositionality Problem

Microbiome data is constrained sum data; counts are normalized to library size (e.g., sequences per sample), resulting in a simplex. This violates the assumptions of many standard statistical models which assume data are absolute and unconstrained.

CSS Normalization (metagenomeSeq)

CSS posits that a biologically valid scaling factor can be found at a lower quantile of the count distribution, assuming that counts up to this quantile are not differentially abundant in expectation. The method scales counts by the cumulative sum of counts up to a data-driven percentile.

Protocol:

- For each sample i, take its non-zero counts and compute their quantiles q_i^(l) over a range of percentiles l.

- Choose a single data-driven percentile l* shared by all samples: the lowest percentile at which the per-sample quantiles begin to deviate substantially from a reference profile (the median of the per-sample quantiles).

- The scaling factor for sample i is the cumulative sum of its counts that are less than or equal to q_i^(l*).

- Divide all counts in sample i by its CSS scaling factor (implementations typically also multiply by a fixed constant so that values stay on a count-like scale).
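
The core of the scaling step can be sketched as follows. This is a simplified illustration, not the metagenomeSeq implementation. It assumes a fixed percentile (0.5) instead of the data-driven choice, a nearest-rank quantile of the non-zero counts, and a hypothetical rescaling constant of 1000. All function and variable names are illustrative.

```python
import math


def css_scaling_factor(sample_counts, percentile=0.5):
    """Sum of the counts at or below the chosen quantile of the non-zero counts."""
    nonzero = sorted(c for c in sample_counts if c > 0)
    if not nonzero:
        raise ValueError("sample has no non-zero counts")
    # Nearest-rank quantile (a simplifying assumption)
    idx = max(0, math.ceil(percentile * len(nonzero)) - 1)
    q = nonzero[idx]
    return sum(c for c in nonzero if c <= q)


def css_normalize(sample_counts, percentile=0.5, scale=1000):
    """Divide counts by the CSS scaling factor, then multiply by a constant."""
    s = css_scaling_factor(sample_counts, percentile)
    return [c / s * scale for c in sample_counts]


sample_a = [0, 1, 2, 3, 4, 90]      # one dominant feature
sample_b = [0, 2, 4, 6, 8, 180]     # same community sequenced twice as deeply
sample_c = [0, 1, 2, 3, 4, 900]     # dominant feature inflated tenfold

factor_a = css_scaling_factor(sample_a)   # 1 + 2 + 3 = 6
factor_b = css_scaling_factor(sample_b)   # 2 + 4 + 6 = 12
factor_c = css_scaling_factor(sample_c)   # still 6: unaffected by the dominant taxon
```

Because the factor is built only from counts below the quantile, inflating the dominant feature leaves it unchanged, whereas the total-sum library size would grow almost tenfold.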

DESeq2's Median of Ratios

Originally developed for RNA-Seq, this method estimates size factors to account for library composition. It assumes that most features are not differentially abundant. The size factor for a sample is the median of ratios of each feature's count to its geometric mean across all samples.

Protocol:

- Compute the geometric mean for each feature (e.g., gene, OTU) across all samples.

- For each sample i, compute the ratio of each feature's count to its geometric mean.

- The size factor s_i for sample i is the median of these ratios, taken over features whose geometric mean is non-zero (features with a zero count in any sample are excluded).

- Divide all counts in sample i by its size factor s_i.
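
The four steps translate directly into code. The sketch below is a plain-Python illustration of the median-of-ratios idea, not DESeq2 itself; the function name and the toy matrix are hypothetical, and rows are features while columns are samples.

```python
import math
from statistics import median


def median_of_ratios_size_factors(counts):
    """Return one size factor per sample for a feature-by-sample count matrix."""
    n_samples = len(counts[0])
    # Keep only features observed in every sample, so the geometric mean is non-zero
    usable = [row for row in counts if all(c > 0 for c in row)]
    if not usable:
        raise ValueError("no feature is non-zero in every sample")
    # Log of the per-feature geometric mean across samples
    log_geo_means = [sum(math.log(c) for c in row) / n_samples for row in usable]
    factors = []
    for j in range(n_samples):
        log_ratios = [math.log(row[j]) - g for row, g in zip(usable, log_geo_means)]
        factors.append(math.exp(median(log_ratios)))
    return factors


# Hypothetical data: sample 2 is sample 1 sequenced twice as deeply;
# the last feature has a zero and is therefore excluded from the estimate
counts = [[10, 20],
          [100, 200],
          [5, 10],
          [0, 7]]

size_factors = median_of_ratios_size_factors(counts)
normalized = [[c / s for c, s in zip(row, size_factors)] for row in counts]
```

The two size factors differ by a factor of two, so the shared features end up with identical normalized counts. In very sparse microbiome tables, few or no features may be non-zero in every sample, which is the practical weakness of this estimator noted in the comparison table.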

Table 1: Technical Comparison of CSS and DESeq2 Median of Ratios Normalization

| Feature | CSS (metagenomeSeq) | DESeq2 Median of Ratios |

|---|---|---|

| Primary Field | Microbiome (16S, metagenomics) | RNA-Seq transcriptomics, adapted to microbiome |

| Underlying Assumption | A stable scaling factor exists within a low-abundance quantile. | The majority of features are not differentially abundant. |

| Handles Zero Inflation | Explicitly designed for sparse microbial data. | Robust to zeros, but may be sensitive in extreme sparsity. |

| Dependency on | Full feature count distribution shape. | Feature-wise ratios across samples. |

| Output | Normalized scaled counts. | Normalized count matrix (with size factors applied). |

| Integrates with | Differential abundance testing in metagenomeSeq (fitZig). | Differential testing in DESeq2 (Negative Binomial GLM). |

Table 2: Illustrative Normalization Results on a Simulated Dataset (n=10 samples, 100 features)

| Sample | Raw Library Size | CSS Scaling Factor | DESeq2 Size Factor | Normalized Count (Feature X) - CSS | Normalized Count (Feature X) - DESeq2 |

|---|---|---|---|---|---|

| Sample_1 | 50,000 | 12,500 | 0.95 | 4.0 | 105.3 |

| Sample_2 | 75,000 | 21,000 | 1.45 | 2.9 | 69.0 |

| Sample_3 | 52,000 | 13,800 | 1.02 | 3.6 | 98.0 |

| ... | ... | ... | ... | ... | ... |

Experimental Protocols for Benchmarking

Protocol 1: Benchmarking Normalization Performance with Spike-Ins

- Objective: Evaluate accuracy in recovering known fold-changes.

- Materials: Mock microbial community DNA with known composition; synthetic spike-in oligonucleotides (e.g., External RNA Control Consortium - ERCC spikes) added at known, varying concentrations.

- Method:

- Split mock community into aliquots. Spike each with a unique combination of ERCC controls at defined ratios.

- Perform sequencing (16S rRNA gene amplicon or shotgun).

- Apply CSS and DESeq2 normalization separately to the native microbial features.

- For the spike-ins (treated as the "absolute" truth), test for differential abundance in the normalized data matrices, then assess the correlation between the measured fold-changes (from the normalized data) and the known fold-changes (from the spike-in concentrations).

Protocol 2: Evaluating Compositional Effect Mitigation

- Objective: Assess sensitivity to "balancing" effects where an increase in one taxon causes artificial decreases in others.

- Materials: A real microbiome dataset; synthetic differential abundance signal.

- Method:

- Select a real, relatively stable dataset as a baseline.

- Artificially inflate the counts of a randomly selected set of taxa in a subset of samples by a known multiplier (absolute increase).

- In the same samples, proportionally reduce the counts of all other taxa to maintain the original library size (compositional effect).

- Apply CSS and DESeq2 normalization to both the original and the synthetically altered datasets.

- Perform differential abundance testing between the altered and unaltered sample groups. Compare the false positive rate (taxa not artificially increased but called significant) between methods.

Logical Workflow Diagrams

CSS Normalization Computational Workflow

DESeq2 Median of Ratios Normalization Workflow

Decision Logic for Method Selection

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Normalization Benchmarking

| Item | Function in Context | Example/Note |

|---|---|---|

| Mock Microbial Community (e.g., ZymoBIOMICS) | Provides a ground truth microbial composition for controlled method validation and spike-in experiments. | ZymoBIOMICS Microbial Community Standard (D6300/D6305/D6306). |

| Synthetic Spike-In Controls (e.g., ERCC) | Absolute abundance standards added prior to sequencing to evaluate normalization accuracy and detect compositional bias. | Thermo Fisher Scientific ERCC RNA Spike-In Mix. |

| High-Fidelity Polymerase | Ensures accurate amplification in 16S protocols, minimizing technical variation that confounds normalization assessment. | Q5 High-Fidelity DNA Polymerase (NEB), KAPA HiFi HotStart. |

| Metagenomic DNA Extraction Kit | Standardized, efficient cell lysis and DNA recovery across diverse taxa, critical for generating reproducible count matrices. | DNeasy PowerSoil Pro Kit (QIAGEN), MagAttract PowerSoil DNA Kit. |

| Bioinformatics Pipeline (e.g., QIIME 2, DADA2) | Generates the raw Amplicon Sequence Variant (ASV) or OTU count matrix which is the input for CSS or DESeq2 normalization. | Must be consistent across compared samples. |

| R/Bioconductor Packages | Implementation of the core normalization algorithms and statistical testing frameworks. | metagenomeSeq (for CSS), DESeq2, phyloseq (for data handling). |

In microbiome data analysis, raw sequence counts are compositionally constrained, heteroskedastic, and plagued by an excess of zeros. Normalization is a critical pre-processing step to separate biologically meaningful signal from technical artifacts. This guide details advanced normalization techniques designed to address these specific challenges, framed within the broader thesis that effective normalization is foundational for robust differential abundance testing and downstream inference in microbiome research.

Core Techniques: Methodologies and Protocols

Trimmed Mean of M-values (TMM)

Protocol:

- Select a Reference Sample: Choose one sample as a reference (e.g., the library whose upper quartile is closest to the mean upper quartile across all samples).

- Compute M-values and A-values: For each feature i in test sample k vs. reference sample r, calculate:

- M-value: M_i = log₂( (C_ik / N_k) / (C_ir / N_r) )

- A-value: A_i = ½ * log₂( (C_ik / N_k) * (C_ir / N_r) ), where C is the count and N is the total library size.

- Trim and Weight: Trim the extreme log-fold changes (default: 30% from each tail of the M-values) and the extreme average abundance (default: 5% from each tail of the A-values). Apply a weight for each feature based on inverse approximate asymptotic variances.

- Calculate Scaling Factor: The TMM scaling factor for sample k is 2 raised to the weighted mean of the remaining M-values: TMM_k = 2^( Σ_i w_i * M_i / Σ_i w_i ). Base 2 is used because the M-values are log₂ ratios.

- Apply Normalization: Use the scaling factor to adjust library sizes for downstream analyses (e.g., in a statistical model).
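
A simplified sketch of the calculation for one test sample against a fixed reference is shown below. It is not the edgeR implementation: trimming is done with simple order-statistic cut-offs, the reference is supplied by the caller, and the function name tmm_factor and the toy data are hypothetical.

```python
import math


def tmm_factor(test, ref, m_trim=0.30, a_trim=0.05):
    """Simplified TMM scaling factor of `test` relative to `ref`."""
    n_k, n_r = sum(test), sum(ref)
    rows = []
    for c_k, c_r in zip(test, ref):
        if c_k == 0 or c_r == 0:
            continue  # M and A are undefined when either count is zero
        p_k, p_r = c_k / n_k, c_r / n_r
        m = math.log2(p_k / p_r)
        a = 0.5 * math.log2(p_k * p_r)
        # Weight = inverse of the approximate asymptotic variance of M
        w = 1.0 / ((n_k - c_k) / (n_k * c_k) + (n_r - c_r) / (n_r * c_r))
        rows.append((m, a, w))
    if not rows:
        raise ValueError("no feature is non-zero in both samples")

    def keep_range(values, trim):
        # Bounds that drop roughly `trim` of the values from each tail
        ordered = sorted(values)
        lo = int(math.floor(len(ordered) * trim))
        return ordered[lo], ordered[len(ordered) - lo - 1]

    m_lo, m_hi = keep_range([r[0] for r in rows], m_trim)
    a_lo, a_hi = keep_range([r[1] for r in rows], a_trim)
    kept = [(m, w) for m, a, w in rows if m_lo <= m <= m_hi and a_lo <= a <= a_hi]
    if not kept:
        raise ValueError("trimming removed every feature")
    # M-values are log2 ratios, so the weighted mean is exponentiated with base 2
    return 2 ** (sum(w * m for m, w in kept) / sum(w for _, w in kept))


ref = [100] * 10
deeper = [200] * 10                  # same composition, twice the depth
dominated = [100] * 9 + [1000]       # one feature inflated tenfold

factor_deeper = tmm_factor(deeper, ref)        # composition unchanged
factor_dominated = tmm_factor(dominated, ref)  # trimmed mean ignores the outlier
effective_size = sum(dominated) * factor_dominated
```

For the sample with one inflated feature, the factor shrinks the library size of 1,900 to an effective size of about 1,000, which matches the depth implied by the nine unchanged features.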

Geometric Mean of Pairwise Ratios (GMPR)

Protocol: GMPR is specifically designed for zero-inflated sequencing data.

- Pair Samples: For a given sample j, compare it with every other sample m (m ≠ j).

- Pairwise Ratio Calculation: For each pair (j, m), compute the ratio of counts for features common to both samples (i.e., features with non-zero counts in both).

- R_jm^(i) = C_ij / C_im for feature i present in both.

- Compute Pairwise Medians: For each pair (j, m), take the median of these ratios over the shared features: r_jm = median_i R_jm^(i).

- Calculate Size Factor: The size factor for sample j is the geometric mean of its pairwise medians: GMPR_j = exp( mean_m log r_jm ).

- Normalize: Divide the counts in each sample by its GMPR size factor.
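
The pairwise-median idea can be sketched in plain Python as follows. This is an illustration rather than the GMPR package: the function name and the toy matrix are hypothetical, and the sketch assumes the geometric mean includes each sample's comparison with itself (a ratio of 1), which keeps the factors exactly proportional to depth in this example.

```python
import math
from statistics import median


def gmpr_size_factors(counts):
    """Return one size factor per sample for a feature-by-sample count matrix."""
    n_samples = len(counts[0])
    factors = []
    for j in range(n_samples):
        log_medians = []
        for m in range(n_samples):
            # Ratios over features observed (non-zero) in both samples
            ratios = [row[j] / row[m] for row in counts if row[j] > 0 and row[m] > 0]
            if ratios:
                log_medians.append(math.log(median(ratios)))
        if not log_medians:
            raise ValueError("sample %d has no non-zero counts" % j)
        # Geometric mean of the pairwise medians
        factors.append(math.exp(sum(log_medians) / len(log_medians)))
    return factors


# Hypothetical sparse table: sample 2 is twice as deep as sample 1,
# and sample 3 twice as deep as sample 2, with different features missing
counts = [[10, 20, 40],
          [0, 8, 16],
          [6, 12, 0],
          [5, 10, 20]]

size_factors = gmpr_size_factors(counts)   # approximately 0.5, 1.0, 2.0
```

No feature needs to be present in all samples: each pair uses whatever features the two samples share, which is why the approach tolerates zero-inflated tables better than a single across-sample geometric mean.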

Addressing Zero-Inflation: Strategies Beyond Scaling

Zero-inflation arises from both biological absence and technical undersampling (dropouts). Strategies include:

- Pre-normalization Filtering: Remove features with zeros in >X% of samples (e.g., 90%).

- Zero-Inflated Models: Use statistical models like zero-inflated negative binomial (ZINB) that separately model the probability of a zero (dropout) and the count abundance.

- Imputation: Carefully apply methods like minimum abundance replacement (replace zeros with a small value) or more sophisticated model-based imputation, though these can introduce bias if not validated.

Data Presentation

Table 1: Comparison of Normalization Techniques for Microbiome Data

| Technique | Primary Goal | Key Assumption | Robust to Zero-Inflation? | Output |

|---|---|---|---|---|

| Total Sum Scaling (TSS) | Equalize sequencing depth | Counts are proportionally representative. | No | Relative Abundances |

| TMM | Correct for compositional differences between libraries (originally RNA composition) | Most features are not differentially abundant. | Moderate (trimming helps) | Scaling Factors |

| GMPR | Normalize zero-inflated data | The median of pairwise ratios is stable. | Yes (core strength) | Size Factors |

| CSS (metagenomeSeq) | Handle varying sampling depths | Counts up to a data-driven quantile are not differentially abundant. | Moderate (designed for sparse data) | Cumulative Sum Scaled Counts |

| Rarefying | Standardize library size | Discarding reads is an acceptable cost of even depth. | No (can increase zeros) | Subsampled Counts |

Table 2: Typical Impact of Normalization on Differential Abundance Test Performance (Simulated Data)

| Normalization Method | False Discovery Rate (FDR) Control | Statistical Power | Bias in Effect Size Estimation |

|---|---|---|---|

| None (Raw Counts) | Poor | Low | High |

| TSS | Moderate | Moderate | Moderate |

| TMM | Good | High | Low |

| GMPR | Good | High | Low |

| Rarefying | Moderate | Low (due to data loss) | Variable |

Visualizations

GMPR Normalization Workflow

Normalization Method Selection Guide

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Microbiome Normalization Experiments

| Item/Category | Function/Description | Example Tool/Package |

|---|---|---|

| Statistical Programming Environment | Provides the computational backbone for implementing normalization algorithms. | R (>=4.0), Python (>=3.8) |

| Normalization & Analysis Packages | Pre-built functions for TMM, GMPR, and related analyses. | R: edgeR (TMM), GMPR package, metagenomeSeq (CSS), DESeq2. Python: scikit-bio, statsmodels. |

| Zero-Inflated Model Packages | Enable formal modeling of dropout and count processes. | R: pscl (zeroinfl), glmmTMB. Python: statsmodels (discrete). |

| High-Performance Computing Resources | Handle large-scale microbiome dataset computations. | Local clusters (SLURM), cloud computing (AWS, GCP). |

| Benchmarking Datasets | Validate normalization performance using mock community (known composition) or spiked-in control data. | ATCC MSA-1000, ZymoBIOMICS Microbial Community Standards. |

| Data Visualization Libraries | Create publication-quality figures to assess normalization impact. | R: ggplot2, ComplexHeatmap. Python: matplotlib, seaborn. |

Microbiome data generated via amplicon sequencing is inherently compositional and sparse, making normalization a critical pre-processing step. Within the broader thesis on the basics of microbiome data normalization techniques, this guide provides a technical framework for implementing standard methods in R (using phyloseq) and Python (with QIIME 2 artifacts). Normalization mitigates technical artifacts like uneven sequencing depth, allowing for meaningful biological comparisons.

The choice of normalization method depends on the data's characteristics and the downstream analysis goals. The table below compares key techniques.

Table 1: Comparison of Common Microbiome Normalization Methods

| Method | Key Principle | Best Use Case | Pros | Cons |

|---|---|---|---|---|

| Total Sum Scaling (TSS) | Scales counts to relative abundances (sums to 1 or 100%). | Community composition profiling, PCA. | Simple, interpretable. | Reinforces compositionality; sensitive to outliers. |

| Cumulative Sum Scaling (CSS) [1] | Scales by a percentile of the count distribution (e.g., median). | Differential abundance (DA) on moderately sparse data. | Less sensitive to outliers than TSS. | Implementations vary (e.g., metagenomeSeq). |

| Relative Log Expression (RLE) | Scales by the median ratio of counts to a pseudo-reference sample (the per-feature geometric mean across samples). | DA for RNA-seq; adaptable for microbiome. | Robust to composition shifts. | Fails with many zero counts. |

| Centered Log-Ratio (CLR) | Log-transforms relative abundances centered by geometric mean. | Compositional data analysis, PCA, CoDa. | Aitchison geometry compliant. | Requires pseudo-count for zeros. |

| Rarefying | Random subsampling to an even depth. | Alpha diversity comparisons. | Simple, reduces bias from depth. | Discards valid data; introduces randomness. |

| Variance Stabilizing Transformation (VST) [2] | Models variance-mean trend to stabilize variance. | DA with high sparsity (e.g., DESeq2). | Handles sparsity well; no pseudo-count. | Complex model fitting. |

Sources: [1] Paulson et al., Nat Methods (2013); [2] McMurdie & Holmes, PLoS Comput Biol (2014).

Experimental Protocols for Benchmarking Normalization

Protocol 1: Benchmarking Impact on Differential Abundance (DA)

- Data Simulation: Use the SPsimSeq R package to simulate case-control OTU tables with known differentially abundant taxa. Introduce varying sequencing depth (e.g., 1k to 100k reads/sample) and sparsity.

- Normalization: Apply each method from Table 1 to the simulated dataset.

- DA Analysis: Perform DA testing (e.g., Wilcoxon rank-sum test on CLR, DESeq2 on raw counts with its internal size-factor normalization, ANCOM-BC).

- Evaluation Metrics: Calculate the Area Under the Precision-Recall Curve (AUPRC) and False Discovery Rate (FDR) to assess power and error control in recovering the true positive taxa.

Protocol 2: Assessing Beta Diversity Preservation

- Data Preparation: Use a mock community dataset (e.g., from the microbiome R package) with known ground-truth structure.

- Normalization & Distance: Generate Bray-Curtis (for TSS, CSS, rarefied) and Aitchison (for CLR) distance matrices for each normalized output.

- Ordination: Perform Principal Coordinates Analysis (PCoA).

- Evaluation Metric: Compute the Procrustes correlation (via protest in vegan) between the PCoA of the normalized data and the ground-truth expected structure.

Implementation Workflows

Diagram 1: Core Normalization Decision Workflow

Workflow A: R Implementation with phyloseq

Workflow B: Python/QIIME 2 Implementation
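
As a minimal illustration of the Python side, the sketch below applies the centered log-ratio (CLR) transform from Table 1 to a single sample. It uses plain Python rather than the QIIME 2 API, and the function name clr, the pseudo-count of 1, and the toy counts are assumptions made for the example.

```python
import math


def clr(sample_counts, pseudo_count=1.0):
    """Centered log-ratio: log counts minus the mean log count of the sample."""
    logs = [math.log(c + pseudo_count) for c in sample_counts]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean
    return [x - mean_log for x in logs]


# Hypothetical sample with one unobserved feature; the pseudo-count makes log(0) avoidable
with_zero = clr([0, 9, 99, 999])

# Without zeros no pseudo-count is needed, and sequencing depth cancels out
shallow = clr([1, 10, 100], pseudo_count=0.0)
deep = clr([2, 20, 200], pseudo_count=0.0)
```

CLR values always sum to zero within a sample, and the shallow and deep versions of the same community give the same result, which is what makes the transform insensitive to library size. The choice of pseudo-count does influence the values for rare features, so it should be reported alongside the method.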

Diagram 2: R & QIIME 2 Normalization Pipelines

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Microbiome Normalization Experiments

| Item | Function in Normalization Context | Example/Note |

|---|---|---|

| Mock Community Standards | Gold-standard for benchmarking normalization performance. Known composition allows accuracy assessment. | ZymoBIOMICS Microbial Community Standards (D6300/D6305/D6306). |

| Negative Extraction Controls | Identifies contaminant sequences, informing minimum thresholding pre-normalization. | Sterile water or buffer taken through extraction kit. |

| Positive Control (Spike-ins) | Evaluates technical variance and can inform batch correction normalization. | Known quantities of exogenous organisms (e.g., Salmonella bongori). |

| Standardized DNA Extraction Kits | Reduces batch-effect variance, simplifying downstream normalization needs. | Qiagen DNeasy PowerSoil Pro Kit, MoBio PowerLyzer. |

| Amplicon Sequence Variant (ASV) Caller | Generates the feature table for normalization. DADA2 and Deblur produce denoised tables. | DADA2 (R), Deblur (QIIME 2). |

| Normalization Software/Packages | Implementation vehicles for the mathematical techniques described. | phyloseq, DESeq2, metagenomeSeq (R); q2-composition (QIIME 2). |

Overcoming Common Pitfalls and Optimizing Your Normalization Strategy

In the systematic study of microbiome data normalization techniques, the preliminary diagnostic assessment of raw sequencing data is paramount. The choice of an appropriate normalization method—be it rarefaction, Total Sum Scaling (TSS), or more advanced techniques like DESeq2 or CSS—depends entirely on the intrinsic properties of the dataset: namely, its library size distribution and sparsity. This guide provides a technical framework for diagnosing these two critical characteristics, serving as the essential first step in any robust microbiome analysis pipeline. Without this assessment, normalization may inadvertently introduce bias or obscure true biological signal.

Core Concepts and Quantitative Benchmarks

Library Size

Library size, or sequencing depth, refers to the total number of reads (or counts) assigned to a sample. Variability in library size is a technical artifact that must be accounted for before comparative analysis.

Sparsity

Sparsity describes the proportion of zero counts (unobserved taxa) in the feature-by-sample matrix. High sparsity is inherent in microbiome data due to biological and technical reasons, posing challenges for many statistical models.

Table 1: Benchmark Ranges for Data Assessment

| Metric | Low/Moderate Range | High/Problematic Range | Typical Action |

|---|---|---|---|

| Library Size Coefficient of Variation (CV) | < 20% | > 50% | Low variation may permit TSS; high variation requires robust normalization (e.g., CSS, median of ratios). |

| Overall Sparsity (% of Zeros) | < 70% | > 80-90% | Consider zero-inflated models, careful use of prevalence filtering, or specific normalization (e.g., GMPR). |

| Skewness of Library Size Distribution | Absolute value < 1 | Absolute value > 1 | Strong positive skew indicates a few large libraries dominating; suggests non-parametric normalization. |

Experimental Protocol for Diagnostic Assessment

Protocol 3.1: Calculating Library Size Distribution

- Input: Raw count table (OTU/ASV table), dimensions m samples x n taxa.

- Step 1 - Compute Per-Sample Sums: For each sample i, calculate library size L_i = Σ_j count_ij.

- Step 2 - Compute Distribution Statistics:

- Mean & Median: Calculate mean and median of all L_i.

- Range & Coefficient of Variation (CV): CV = (standard deviation of L_i / mean of L_i) * 100.

- Skewness: Use Fisher-Pearson coefficient. A value > 0 indicates right skew.

- Step 3 - Visualize: Generate a histogram or boxplot of L_i.

- Output: Table of statistics and visualization to inform normalization choice.
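
Protocol 3.1 can be sketched with the Python standard library alone. The function name, the returned keys, and the toy table are hypothetical. The table is stored with samples as rows (m samples x n taxa), the CV uses the sample standard deviation, and skewness is the Fisher-Pearson coefficient computed from population moments.

```python
from statistics import mean, median, stdev


def library_size_summary(counts_by_sample):
    """Summarize library sizes for a samples-by-taxa count table."""
    sizes = [sum(row) for row in counts_by_sample]   # L_i for each sample
    mu = mean(sizes)
    # Population central moments for the Fisher-Pearson skewness g1 = m3 / m2^1.5
    m2 = sum((x - mu) ** 2 for x in sizes) / len(sizes)
    m3 = sum((x - mu) ** 3 for x in sizes) / len(sizes)
    return {
        "sizes": sizes,
        "mean": mu,
        "median": median(sizes),
        "range": (min(sizes), max(sizes)),
        "cv_percent": stdev(sizes) / mu * 100,
        "skewness": m3 / m2 ** 1.5 if m2 > 0 else 0.0,
    }


# Hypothetical table: three samples of 100 reads and one of 500 reads
table = [[60, 40, 0],
         [10, 90, 0],
         [50, 25, 25],
         [300, 150, 50]]

summary = library_size_summary(table)
```

Here the single deep library pushes the CV to 100% and the skewness above 1, both in the "high/problematic" range of Table 1, so simple proportional scaling would be a questionable choice for this toy dataset.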

Protocol 3.2: Assessing Data Sparsity

- Input: Raw count table.

- Step 1 - Calculate Global Sparsity: Sparsity = (Total number of zero entries) / (m * n) * 100.

- Step 2 - Calculate Per-Sample Sparsity: For each sample, compute % of zero counts.

- Step 3 - Calculate Per-Taxon Prevalence: For each taxon, compute % of samples in which it is observed (count > 0). Plot the distribution of prevalence.

- Step 4 - Visualize: Create a heatmap of the log-transformed count table (e.g., log(1 + count), so that zeros remain defined and visible), with samples ordered by library size and taxa ordered by prevalence.

- Output: Global and per-feature sparsity metrics, prevalence distribution plot, heatmap.
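
Steps 1 to 3 of Protocol 3.2 amount to counting zeros along different axes of the table. The sketch below shows one way to do this with plain Python lists; the function name `sparsity_metrics` and the toy matrix are illustrative assumptions, and in practice the same quantities would usually be computed with pandas or phyloseq.

```python
def sparsity_metrics(counts):
    """counts: list of per-sample rows (m samples x n taxa) of raw counts."""
    m, n = len(counts), len(counts[0])
    # Step 1: global sparsity = zero entries / (m * n) * 100
    zeros = sum(1 for row in counts for c in row if c == 0)
    global_sparsity = zeros / (m * n) * 100
    # Step 2: per-sample sparsity = % of taxa with a zero count in each sample
    per_sample = [sum(c == 0 for c in row) / n * 100 for row in counts]
    # Step 3: per-taxon prevalence = % of samples in which the taxon is observed
    prevalence = [sum(row[j] > 0 for row in counts) / m * 100 for j in range(n)]
    return global_sparsity, per_sample, prevalence

# Toy table (hypothetical numbers): 4 samples x 4 taxa
toy = [
    [10, 0, 0, 5],
    [0, 0, 3, 8],
    [7, 0, 0, 2],
    [0, 1, 0, 4],
]
global_sparsity, per_sample, prevalence = sparsity_metrics(toy)
print(global_sparsity)  # 50.0
print(per_sample)       # [50.0, 50.0, 50.0, 50.0]
print(prevalence)       # [50.0, 25.0, 25.0, 100.0]
```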

Diagram 1: Data Assessment Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for Microbiome Data Diagnostics

| Item | Function in Diagnostic Assessment |

|---|---|

| High-Quality DNA Extraction Kit | Ensures unbiased lysis of diverse community members; poor extraction increases technical zeros (spurious sparsity). |

| Mock Community Control | Defined mixture of microbial genomes; used to validate sequencing depth and detect technical dropouts affecting sparsity estimates. |

| Library Quantification Kit (Qubit/qPCR) | Accurate quantification prior to sequencing prevents extreme library size variation. |

| Sequencing Platform-specific Primers/Adapters | Choice of 16S rRNA gene region primers or shotgun adapters directly influences sparsity via amplification bias or genomic coverage. |

| Bioinformatics Pipeline | DADA2, QIIME 2, or mothur for generating count tables; parameter choices in denoising/clustering affect sparsity and perceived library size. |

| Statistical Software (R/Python) | Essential for computing diagnostic metrics (e.g., phyloseq, vegan in R; scikit-bio, pandas in Python). |

Interpretation and Pathway to Normalization

The diagnostics from Protocols 3.1 and 3.2 create a decision matrix for normalization.

Diagram 2: Normalization Decision Pathway

Table 3: Normalization Method Selection Based on Diagnostics

| Diagnostic Profile | Recommended Normalization | Rationale |

|---|---|---|

| Low library size variation, Moderate sparsity | Total Sum Scaling (TSS) | Simple proportional scaling is sufficient; minimal bias introduced. |

| Moderate variation, Any sparsity | Cumulative Sum Scaling (CSS) | Robust to uneven sampling depths and moderately sparse data. |

| High variation, Low/Moderate sparsity | DESeq2 Median of Ratios | Assumes most features are not differentially abundant; handles large size differences. |

| Any variation, Extreme sparsity | Geometric Mean of Pairwise Ratios (GMPR) | Specifically designed for zero-inflated, compositional data. |

| Exploratory, for diversity | Rarefaction | Subsampling to even depth for alpha/beta diversity comparisons only. |
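
The table above can be read as a simple rule set. The sketch below encodes one possible reading of it, using the CV cut-offs from the benchmark ranges (20% and 50%). Treating sparsity above 80% as "extreme", and checking sparsity before library size variation, are assumptions of this example rather than rules stated in the table. Rarefaction is left out because it is recommended only for diversity comparisons.

```python
def suggest_normalization(cv_percent, sparsity_percent):
    """Map diagnostic metrics to a candidate method (illustrative thresholds)."""
    if sparsity_percent > 80:          # assumed cut-off for "extreme" sparsity
        return "GMPR"
    if cv_percent < 20:                # low library size variation
        return "TSS"
    if cv_percent <= 50:               # moderate variation
        return "CSS"
    return "DESeq2 median of ratios"   # high variation, low/moderate sparsity

print(suggest_normalization(10, 60))   # TSS
print(suggest_normalization(35, 75))   # CSS
print(suggest_normalization(80, 50))   # DESeq2 median of ratios
print(suggest_normalization(10, 95))   # GMPR
```

A function like this is best treated as a starting point for discussion: borderline datasets should still be inspected visually before a method is fixed.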

A rigorous diagnostic assessment of library size distribution and sparsity is the foundation of microbiome data analysis, because it determines whether subsequent normalization and statistical inference are valid. By following the protocols and the decision framework above, researchers can select a normalization technique that mitigates technical artifacts while preserving biological signal.

Before any normalization can be chosen, the first and most consequential decision is the sequencing method itself. The choice between 16S rRNA gene sequencing, shotgun metagenomics, and metatranscriptomics dictates which biological questions can be addressed and, consequently, which normalization strategies are required downstream. This guide provides a technical comparison to inform researchers and drug development professionals.

Core Technologies Compared

Table 1: High-Level Comparison of Microbiome Profiling Methods

| Feature | 16S rRNA Gene Sequencing | Shotgun Metagenomics | Metatranscriptomics |

|---|---|---|---|

| Target | Hypervariable regions of the 16S rRNA gene | All genomic DNA | All expressed RNA (mRNA) |

| Primary Output | Operational Taxonomic Units (OTUs) or Amplicon Sequence Variants (ASVs) | Microbial taxa & functional gene catalog (e.g., KEGG, COG) | Gene expression profiles & active pathways |

| Taxonomic Resolution | Genus to species (rarely strain-level) | Species to strain-level | Species to strain-level for active members |

| Functional Insight | Inferred from reference databases | Direct measurement of genetic potential | Direct measurement of actively expressed functions |

| Typical Sequencing Depth | 50,000 - 100,000 reads/sample | 20 - 60 million reads/sample | 50 - 100 million reads/sample |

| Key Normalization Concerns | Library size (rarefaction), compositional bias, primer bias | Library size, genome size bias, horizontal gene transfer | Library size, RNA extraction efficiency, rRNA depletion efficiency, mRNA stability |

| Relative Cost (per sample) | $ | $$ | $$$ |

Table 2: Quantitative Data on Method Performance Metrics (Representative Values)

| Metric | 16S rRNA (V4 region) | Shotgun Metagenomics (Illumina NovaSeq) | Metatranscriptomics (rRNA-depleted) |

|---|---|---|---|

| Host DNA/RNA Reads | Typically 0% | 50-99% (host-rich sites) | >90% without prokaryotic enrichment |

| Bases per Sample | 0.03 - 0.05 Gb | 6 - 12 Gb | 10 - 20 Gb |

| Turnaround Time (Data Generation) | 1-2 days | 3-7 days | 5-10 days |

| Computational Storage (Raw Data) | ~50 MB/sample | ~40 GB/sample | ~60 GB/sample |

| Detection Limit (minimum relative abundance of detectable taxa) | >0.1% abundance | >0.01% abundance | Highly variable; depends on expression level |

Detailed Methodologies

16S rRNA Gene Sequencing Protocol (Illumina MiSeq, paired-end 2x300 bp)

Experimental Workflow:

- Genomic DNA Extraction: Use a bead-beating kit (e.g., Qiagen PowerSoil Pro) for mechanical lysis of diverse cell walls.

- PCR Amplification: Amplify hypervariable region(s) (e.g., V4) using barcoded primer pairs (e.g., 515F/806R). Include a negative control.

- Amplicon Purification: Clean PCR products with magnetic beads (e.g., AMPure XP) to remove primers and dimers.

- Library Quantification & Pooling: Quantify with fluorometry (e.g., Qubit), normalize concentrations, and pool equimolar amounts.

- Sequencing: Run on Illumina MiSeq with a 10-15% PhiX spike-in to compensate for the low base diversity of amplicon libraries.

- Bioinformatic Processing (QIIME 2, DADA2):

  - Trim primers, denoise, merge paired-end reads, and remove chimeras to create Amplicon Sequence Variants (ASVs).

  - Assign taxonomy using a reference database (e.g., SILVA 138 or Greengenes2).

  - Normalize by rarefaction to an even sampling depth before alpha/beta diversity analysis.
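
The rarefaction step at the end of this workflow subsamples each sample's reads, without replacement, down to a common depth. The following is a minimal sketch of that idea for a single sample, using only the standard library. The function name `rarefy`, the fixed seed, and the toy counts are assumptions for illustration. QIIME 2 and similar tools provide their own rarefaction routines, which should be preferred in a real pipeline.

```python
import random

def rarefy(sample_counts, depth, seed=0):
    """Subsample one sample's counts to `depth` reads without replacement."""
    if sum(sample_counts) < depth:
        return None                      # too shallow; such samples are usually dropped
    # Expand counts into a pool of reads, each labelled by its taxon index
    pool = [taxon for taxon, c in enumerate(sample_counts) for _ in range(c)]
    rng = random.Random(seed)            # seeded for reproducibility
    drawn = rng.sample(pool, depth)      # sampling without replacement
    rarefied = [0] * len(sample_counts)
    for taxon in drawn:
        rarefied[taxon] += 1
    return rarefied

original = [50, 30, 20, 0]               # hypothetical counts for 4 taxa (100 reads)
rarefied = rarefy(original, depth=40, seed=1)
print(rarefied, sum(rarefied))
```

Because the draw is random, rare taxa can drop to zero after rarefaction, which is one reason the method is reserved here for alpha/beta diversity comparisons.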

Shotgun Metagenomic Sequencing Protocol

Experimental Workflow:

- High-Quality DNA Extraction: Use a method that yields high-molecular-weight DNA (e.g., MoBio PowerMax Soil Kit). Quantify via Qubit and check fragment size on TapeStation.

- Library Preparation: Fragment DNA via sonication (e.g., Covaris). Perform end-repair, A-tailing, and adapter ligation (e.g., Illumina DNA Prep). Include internal control standards.

- Size Selection & PCR Enrichment: Select fragments (~350-550 bp) with beads. Perform limited-cycle PCR to index libraries.

- Sequencing: Pool libraries and sequence on a high-output platform (e.g., Illumina NovaSeq 6000) to achieve target depth.

- Bioinformatic Processing (MetaPhlAn 4, HUMAnN 3):

  - Perform quality trimming (Trimmomatic) and remove host reads (Bowtie2 against host genome).

  - For taxonomic profiling, align reads to marker gene databases (MetaPhlAn).

  - For functional profiling, align reads to protein family databases (HUMAnN via DIAMOND).

  - Normalize gene families to copies per million (CPM) or use a variance-stabilizing transformation.
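
The copies-per-million step is a sum-to-one-million rescaling within each sample. The sketch below shows only the arithmetic. It assumes the input abundances have already been length-normalized upstream (HUMAnN reports gene families in reads per kilobase), and the gene family names and values are hypothetical. HUMAnN ships its own renormalization utility, which is the appropriate tool in a real workflow.

```python
def to_cpm(abundances):
    """Rescale one sample's gene-family abundances so they sum to 1,000,000."""
    total = sum(abundances.values())
    return {family: value / total * 1_000_000 for family, value in abundances.items()}

# Hypothetical gene-family abundances for one sample
sample = {"famA": 30.0, "famB": 50.0, "famC": 20.0}
cpm = to_cpm(sample)
print(cpm)
```

After rescaling, values are comparable across samples of different depth, but they remain compositional: an increase in one family lowers the CPM of all others.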

Metatranscriptomic Sequencing Protocol

Experimental Workflow:

- RNA Preservation & Extraction: Immediately stabilize samples in RNAlater. Extract total RNA using a phenol-chloroform method with bead-beating. Treat with DNase I.

- RNA Quality Control: Assess RNA Integrity Number (RIN) on Bioanalyzer. Proceed only if RIN > 6.5 for microbial communities.

- rRNA Depletion: Deplete eukaryotic (e.g., human) and prokaryotic (e.g., bacterial/archaeal) rRNA using sequence-specific probes (e.g., Illumina Ribo-Zero Plus).

- Enriched mRNA Library Prep: Fragment enriched mRNA, synthesize cDNA, and prepare library (e.g., Illumina Stranded Total RNA Prep).

- Sequencing: Sequence on Illumina NovaSeq to achieve high depth for low-abundance transcripts.

- Bioinformatic Processing (KneadData, Salmon):

  - Trim adapters, remove low-quality bases, and deplete residual host and rRNA reads.

  - Perform pseudoalignment to a reference gene catalog (e.g., from matched metagenomes) for quantitation (Salmon).

  - Normalize transcript counts to Transcripts Per Million (TPM), accounting for gene length and sequencing depth.
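
TPM corrects for gene length first and sequencing depth second: counts are divided by gene length to give a per-kilobase rate, and the rates are then rescaled to sum to one million. The sketch below illustrates that order of operations with hypothetical gene names, counts, and lengths. Salmon reports TPM directly (using effective rather than nominal lengths), so this is only meant to make the calculation explicit.

```python
def to_tpm(counts, lengths_bp):
    """Convert read counts to TPM given gene lengths in base pairs."""
    # Step 1: length normalization -> reads per kilobase (RPK)
    rpk = {gene: counts[gene] / (lengths_bp[gene] / 1000) for gene in counts}
    # Step 2: depth normalization -> rescale so all values sum to 1,000,000
    scale = sum(rpk.values()) / 1_000_000
    return {gene: value / scale for gene, value in rpk.items()}

# Hypothetical example: equal read counts, but geneB is twice as long as geneA
counts = {"geneA": 100, "geneB": 100}
lengths_bp = {"geneA": 1000, "geneB": 2000}
tpm = to_tpm(counts, lengths_bp)
print(tpm)
```

With equal read counts, the shorter gene receives twice the TPM of the longer one, which is the length correction that plain depth scaling would miss.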

Visualized Workflows

Title: Comparative Workflow of Three Microbiome Sequencing Methods

Title: Decision Tree for Selecting a Microbiome Sequencing Method

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Microbiome Sequencing Experiments

| Item | Function | Example Product (for illustration) |

|---|---|---|

| Bead-Beating Tubes (Lysis Matrix) | Mechanical disruption of robust microbial cell walls (Gram-positive, spores) for unbiased extraction. | MP Biomedicals FastPrep Lysing Matrix E |

| RNAlater Stabilization Solution | Preserves in vivo RNA expression profiles at collection by inhibiting RNases. | Thermo Fisher Scientific RNAlater |

| Magnetic Bead Clean-up Kits | Size-selective purification of nucleic acids post-amplification or for library size selection. | Beckman Coulter AMPure XP |

| Indexed PCR Primers (16S) | Amplifies target hypervariable region while adding unique sample barcodes for multiplexing. | Illumina 16S V4 Primer Set (515F/806R) |

| Ribo-Zero/rRNA Depletion Kits | Removes abundant ribosomal RNA to increase mRNA sequencing depth in metatranscriptomics. | Illumina Ribo-Zero Plus Epidemiology |

| PhiX Control v3 | Provides a balanced nucleotide library as an internal control for Illumina sequencing runs. | Illumina PhiX Control Kit |

| Quant-iT PicoGreen dsDNA Assay | Fluorometric quantitation of low-concentration DNA libraries with high sensitivity. | Thermo Fisher Scientific PicoGreen |

| Bioanalyzer RNA Nano Chip | Assesses RNA integrity (RIN) critical for metatranscriptomic library success. | Agilent 2100 Bioanalyzer Chip |

| Mock Microbial Community (Control) | Defined mix of known genomes/strains used as a positive control for extraction and sequencing bias. | ZymoBIOMICS Microbial Community Standard |

| DNase/RNase-free Water | Prevents enzymatic degradation of sensitive nucleic acid samples during processing. | Invitrogen UltraPure DNase/RNase-Free Water |

Integrating normalization with batch correction is a critical and non-trivial step in microbiome data analysis. Microbiome sequencing data (e.g., from 16S rRNA or shotgun metagenomics) are inherently compositional, sparse, and high-dimensional. Batch effects, meaning systematic technical variation introduced by differing sequencing runs, laboratories, or DNA extraction kits, can confound biological signals and lead to spurious findings. Normalization aims to make samples comparable by addressing issues such as uneven sequencing depth, while batch correction aims to remove the remaining non-biological technical variation. Performing these steps in isolation or in the wrong order can introduce artifacts or remove genuine biological signal. This guide addresses how to integrate the two processes strategically for robust microbiome data analysis.

Core Concepts and Quantitative Challenges

Table 1: Common Microbiome Data Characteristics Requiring Attention

| Characteristic | Typical Range/Manifestation | Primary Tool to Address |

|---|---|---|

| Sequencing Depth (Library Size) | 10,000 - 200,000 reads/sample | Normalization |

| Sparsity (Zero Inflation) | 50-90% zeros in OTU/ASV table | Specialized Normalization/Batch Methods |

| Compositionality | Data sums to a constant (total reads) | Compositional Data Analysis (CoDA) |

| Batch Effect Strength | Can explain >20% of variance in PCA (Pots et al., 2019) | Batch Correction |

| Biological Signal of Interest | Often explains <5% of total variance | Careful Integration of Steps |

Table 2: Quantitative Impact of Batch Effect in Microbiome Studies (Summarized Literature)

| Study Reference (Example) | Technology | Reported Batch Variance (%) | Method Used for Assessment |

|---|---|---|---|

| Sinha et al., 2017 (Cell) | 16S rRNA Sequencing | 15-30% | PERMANOVA on PCoA |

| Gibbons et al., 2018 (mSystems) | Metagenomics | Up to 40% for extraction batches | Principal Variance Component Analysis |

| Recent Multi-Center Study (2023) | Shotgun Metagenomics | 10-25% (center-specific) | R² from Linear Model on PC1 |
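
The last row of the table reports batch variance as the R² from a linear model on PC1. For a single categorical batch variable, that R² reduces to the between-batch sum of squares divided by the total sum of squares. The sketch below computes it for a vector of ordination scores. The function name `batch_r2`, the toy PC1 scores, and the batch labels are all hypothetical, and the scores are assumed to come from an upstream PCA or PCoA that is not shown.

```python
def batch_r2(scores, batches):
    """Share of variance in `scores` explained by batch (one-way model R^2)."""
    grand_mean = sum(scores) / len(scores)
    ss_total = sum((s - grand_mean) ** 2 for s in scores)
    # Group scores by batch label
    groups = {}
    for score, batch in zip(scores, batches):
        groups.setdefault(batch, []).append(score)
    # Between-batch sum of squares: n_b * (batch mean - grand mean)^2
    ss_between = sum(
        len(vals) * (sum(vals) / len(vals) - grand_mean) ** 2
        for vals in groups.values()
    )
    return ss_between / ss_total

# Hypothetical PC1 scores for six samples processed in two batches
pc1 = [1.0, 1.2, 0.8, 3.0, 3.2, 2.8]
batch = ["A", "A", "A", "B", "B", "B"]
r2 = batch_r2(pc1, batch)
print(round(r2 * 100, 1), "% of PC1 variance explained by batch")
```

In this deliberately extreme toy case nearly all of the PC1 variance tracks the batch label. The percentages in the table above are much lower, but they are computed in the same spirit.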

Detailed Methodologies and Experimental Protocols