16S Amplicon Data Quality Control: A Comprehensive Guide to Best Practices for Microbiome Researchers

This article provides a detailed, step-by-step guide to 16S rRNA gene amplicon data quality control, tailored for researchers, scientists, and drug development professionals.

Abstract

This article provides a detailed, step-by-step guide to 16S rRNA gene amplicon data quality control, tailored for researchers, scientists, and drug development professionals. Covering foundational concepts through to advanced validation, it addresses critical intents: establishing the importance of QC for robust conclusions, detailing current methodological pipelines (including primer selection and bioinformatics tools), offering solutions for common pitfalls and data optimization, and guiding the validation of results against standards and complementary methods. The goal is to empower users to implement rigorous QC protocols that ensure the reliability and reproducibility of their microbiome data for biomedical and clinical applications.

Why 16S Data QC is Non-Negotiable: Understanding Errors, Bias, and Impact on Microbiome Science

Introduction to 16S Amplicon Sequencing and Its Inherent Vulnerabilities

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My sequencing run returned a very low number of reads. What are the primary causes?

- A: Low read counts typically originate from sample preparation or library quantification steps.

- Poor PCR Amplification: Inhibitors in the DNA extract or suboptimal primer-template matching can cause this. Troubleshooting: Re-quantify gDNA using fluorometry, dilute to reduce inhibitors, and verify primer specificity for your target community.

- Inefficient Library Ligation/Poor Quantification: Inaccurate quantification before pooling leads to under-clustering on the flow cell. Troubleshooting: Use qPCR-based library quantification (e.g., Kapa Biosystems kit) instead of fluorometry for precise molarity determination.

- Sequencer Flow Cell Issue: This is less common but possible. Troubleshooting: Check the sequencer’s quality control metrics and contact the sequencing facility.

Q2: My negative control (blank extraction) shows high read counts and diversity. What does this indicate and how should I proceed?

- A: This signals contamination, a critical vulnerability in 16S sequencing. It invalidates low-biomass results.

- Identify the Source: Review the experimental workflow. Common sources are contaminated reagents (e.g., polymerase, water), consumables, or the laboratory environment.

- Immediate Action: Discard the affected batch of reagents. Implement strict molecular-grade, dedicated reagents for microbiome work. Include multiple negative controls (extraction, PCR, library prep) to trace contamination.

- Data Remediation: In silico, you can subtract ASVs (Amplicon Sequence Variants) present in the negative controls from your samples using tools like `decontam` (R package) or `SourceTracker`. However, this is a correction, not a cure. The experiment should ideally be repeated under cleaner conditions.

Q3: I observe unexpected dominance of a single bacterial taxon across all my samples. Is this a biological result or an artifact?

- A: This is likely a technical artifact, such as primer bias or contamination.

- Primer Bias Investigation: In silico, check the primer binding regions of the dominant sequence for perfect matches, which can cause preferential amplification.

- Cross-Contamination Check: Was this taxon present in a high-biomass sample processed in the same batch? Review lab practices (separate pre- and post-PCR areas, use of UV hoods, dedicated pipettes).

- Protocol Verification: Ensure PCR cycle number was not excessively high, which can amplify minor contaminants or lead to chimera formation.

Q4: My analysis shows a high percentage of chimeric sequences. How can I minimize them experimentally?

- A: Chimeras are hybrid sequences formed during PCR and are a major vulnerability.

- Experimental Protocol to Minimize Chimeras:

- Template Integrity: Start with high-quality, non-degraded genomic DNA.

- Optimized PCR: Use a low number of PCR cycles (25-30 cycles). Employ a high-fidelity, proofreading polymerase.

- Modified Cycling Conditions: Implement a two-step PCR (e.g., 5-10 cycles of annealing/extension at a lower temperature, followed by 15-20 cycles at a higher temperature) or use "Touchdown" PCR to increase initial specificity.

Research Reagent Solutions Table

| Reagent / Material | Function in 16S Amplicon Workflow |

|---|---|

| Magnetic Bead-based Cleanup Kits | Size selection and purification of PCR amplicons and final libraries, removing primers, dimers, and contaminants. |

| PCR Bias-Reduction Polymerase | High-fidelity DNA polymerase (e.g., Q5, KAPA HiFi) to minimize amplification errors and chimera formation. |

| qPCR Library Quantification Kit | Enables accurate molar concentration of final libraries with adapters for precise, equitable pooling and optimal sequencer loading. |

| Mock Microbial Community | Defined mix of genomic DNA from known species. Serves as a positive control to evaluate bias, sensitivity, and accuracy of the entire workflow. |

| DNA LoBind Tubes/Plates | Reduce nonspecific adsorption of low-concentration DNA to plastic surfaces, improving yield and reproducibility. |

| UV-treated Laminar Flow Hood | Provides a sterile, nuclease-free workspace for pre-PCR steps to minimize environmental contamination. |

Summary of Common Data Quality Issues (Quantitative Data)

| Issue | Typical Impact on Data | Recommended QC Threshold |

|---|---|---|

| Chimeric Sequences | False diversity; erroneous OTUs/ASVs. | <1-3% of total reads post-filtering. |

| PCR/Sequencing Errors | Inflated diversity; spurious variants. | Denoising (DADA2, Deblur) recommended over clustering. |

| Contamination (in controls) | False positives, invalidates low-biomass data. | Negative control reads should be <0.1% of sample reads. |

| Primer/Amplification Bias | Skewed community composition. | Use mock community to quantify bias. |

| Low Sequencing Depth | Incomplete community representation. | Rarefaction curves must plateau for alpha diversity. |

Detailed Experimental Protocol: Mock Community Analysis for Workflow Validation

Purpose: To quantify the bias, sensitivity, and error rate of your specific 16S rRNA gene amplicon sequencing workflow.

Materials:

- Commercial mock community genomic DNA (e.g., ZymoBIOMICS Microbial Community Standard).

- All standard 16S library preparation reagents (primers, polymerase, etc.).

- Bioinformatic pipeline (QIIME 2, mothur).

Methodology:

- Processing: Include the mock community as a sample in your next sequencing run, processing it identically to your biological samples (extraction if needed, PCR, library prep, sequencing).

- Bioinformatic Analysis:

- Process raw reads through your standard pipeline (demultiplexing, quality filtering, denoising/OTU picking, taxonomy assignment).

- Generate an ASV/OTU table for the mock community sample.

- Validation Metrics Calculation:

- Recall/Sensitivity: Proportion of expected species that were detected. (Target: >95%).

- Specificity: Absence of species not in the mock community. (Target: 100%).

- Compositional Accuracy: Agreement between the expected and observed relative abundance of each species (e.g., calculate the Bray-Curtis dissimilarity between expected and observed profiles. Target: BC < 0.1).

- Error Rate: Percentage of reads assigned to incorrect taxa due to sequencing/PCR errors or database issues.
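The recall and compositional-accuracy metrics above can be sketched in a few lines of Python. This is a minimal illustration, not part of any pipeline: the taxon names and abundances are made up, and the function names `recall`, `unexpected_taxa`, and `bray_curtis` are hypothetical helpers introduced here.

```python
def recall(expected, observed):
    """Fraction of expected taxa that were detected (abundance > 0)."""
    detected = [t for t in expected if observed.get(t, 0.0) > 0]
    return len(detected) / len(expected)


def unexpected_taxa(expected, observed):
    """Taxa observed in the mock sample that are not part of the mock community."""
    return sorted(t for t, a in observed.items() if a > 0 and t not in expected)


def bray_curtis(expected, observed):
    """Bray-Curtis dissimilarity between two relative-abundance profiles."""
    taxa = set(expected) | set(observed)
    num = sum(abs(expected.get(t, 0.0) - observed.get(t, 0.0)) for t in taxa)
    den = sum(expected.get(t, 0.0) + observed.get(t, 0.0) for t in taxa)
    return num / den


# Hypothetical even mock community of four taxa, plus one unexpected taxon "X".
expected = {"A": 0.25, "B": 0.25, "C": 0.25, "D": 0.25}
observed = {"A": 0.30, "B": 0.20, "C": 0.25, "D": 0.20, "X": 0.05}

print(recall(expected, observed))           # all four expected taxa detected
print(unexpected_taxa(expected, observed))  # taxa that lower specificity
print(round(bray_curtis(expected, observed), 3))
```

In this made-up example the Bray-Curtis value sits right at the 0.1 target, so the profile would be borderline.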

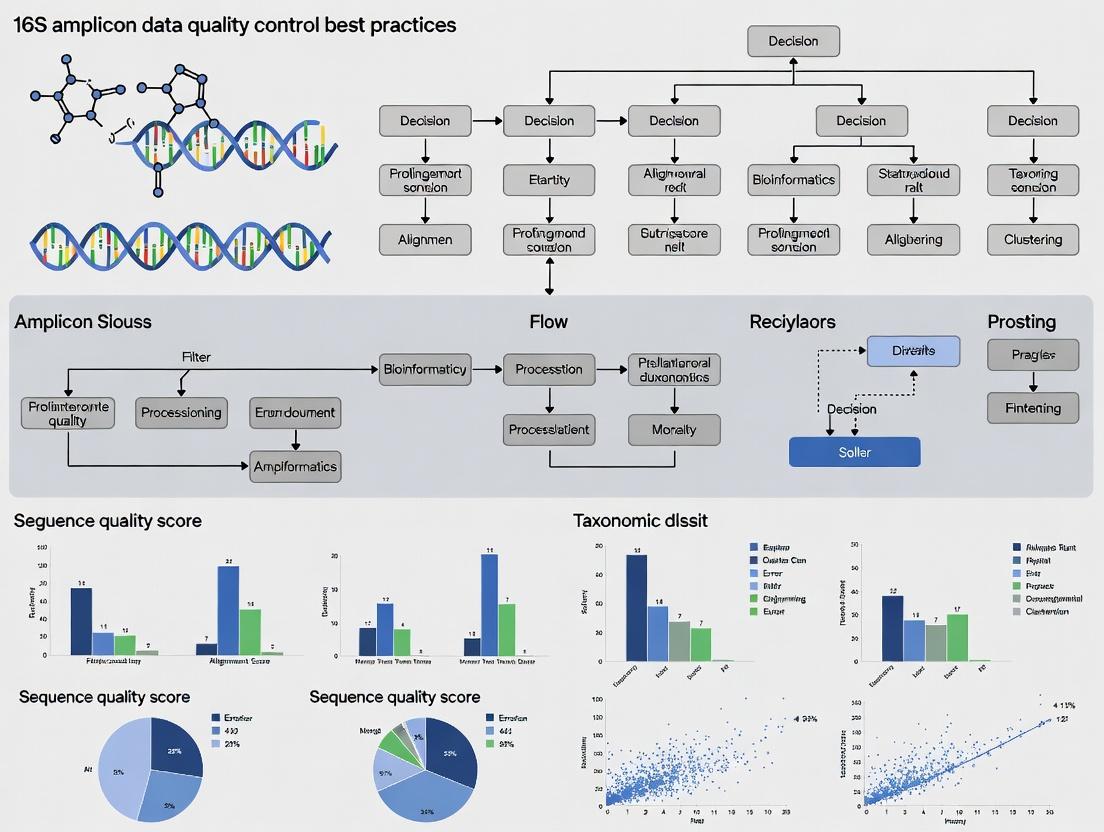

Workflow Diagram

Title: 16S Amplicon Workflow with Key Vulnerabilities

Bioinformatic QC & Filtering Logic Diagram

Title: Bioinformatic Filtering Decision Tree for 16S Data

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My alpha diversity (e.g., Shannon Index) shows unusually low values and high variability between replicates. What could be the cause? A: This is a classic symptom of inconsistent or insufficient sequence depth per sample, often due to poor library quantification or PCR inhibition. Low read counts skew diversity metrics, making rare taxa appear absent and inflating variability.

- Protocol: Library QC with Fluorometric Quantification

- Reagent: Use a dsDNA high-sensitivity assay kit (e.g., Qubit).

- Method: Quantify purified amplicon libraries according to kit instructions. Do not rely on spectrophotometer (e.g., Nanodrop) readings alone, as they overestimate dsDNA concentration: absorbance also picks up free nucleotides, single-stranded primers, and RNA.

- Normalization: Pool libraries based on fluorometric concentrations, not molarity from bioanalyzer traces alone.

- Verification: Run a pooled library on a bioanalyzer or tapestation to confirm uniform fragment size and the absence of a large primer-dimer peak (~100-150bp).
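When equal representation is the goal, a fluorometric concentration can be converted to molarity using the mean fragment size from the verification step. The sketch below assumes the standard approximation of 660 g/mol per base pair of dsDNA; the sample names, concentrations, and the helper names `molarity_nM` and `pooling_volumes` are illustrative only.

```python
def molarity_nM(conc_ng_per_ul, mean_length_bp, bp_mass=660.0):
    """Convert a dsDNA concentration (ng/uL) to nM.

    Assumes ~660 g/mol per base pair (a common approximation).
    """
    return conc_ng_per_ul / (bp_mass * mean_length_bp) * 1e6


def pooling_volumes(libraries, target_fmol):
    """Volume (uL) of each library needed to contribute target_fmol.

    1 nM equals 1 fmol/uL, so volume = fmol / nM.
    """
    return {
        name: target_fmol / molarity_nM(conc, length)
        for name, (conc, length) in libraries.items()
    }


# Hypothetical libraries: (Qubit concentration in ng/uL, mean fragment size in bp)
libs = {"S1": (2.97, 450), "S2": (5.94, 450)}
volumes = pooling_volumes(libs, target_fmol=20)
for name, vol in volumes.items():
    print(f"{name}: {vol:.2f} uL")
```

Libraries with twice the concentration at the same fragment size contribute half the volume, which is the behavior equimolar pooling relies on.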

Q2: I suspect contamination in my negative controls. How do I determine if it's affecting my results and what thresholds should I use? A: Contamination from reagents or cross-sample "bleed" can introduce non-biological signals. Systematic analysis of controls is mandatory.

- Protocol: Contamination Assessment & Filtering

- Sequence Controls: Include both an extraction blank (no template) and a PCR no-template control (NTC) in every batch.

- Data Processing: Process controls through the exact same bioinformatics pipeline as samples.

- Threshold Setting: Apply a prevalence-based filter. For example, remove any ASV/OTU that appears in less than 10% of your true samples but is present in any negative control. Alternatively, use a statistical package like `decontam` (R) in prevalence mode.

- Quantitative Filter: If controls have high read counts, consider subtracting the maximum count observed in any control from all samples.
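The simple prevalence rule described in this protocol (rare in true samples, present in any negative control) can be sketched as follows. This is a plain rule-based filter, not the statistical test that `decontam` performs; the table layout, sample IDs, and the function name `flag_contaminants` are assumptions made for illustration.

```python
def flag_contaminants(table, samples, controls, max_prevalence=0.10):
    """Return ASVs seen in any control AND in fewer than max_prevalence of samples.

    table: {asv_id: {sample_or_control_id: read_count}}
    """
    flagged = set()
    for asv, row in table.items():
        in_control = any(row.get(c, 0) > 0 for c in controls)
        prevalence = sum(1 for s in samples if row.get(s, 0) > 0) / len(samples)
        if in_control and prevalence < max_prevalence:
            flagged.add(asv)
    return flagged


# Hypothetical batch: 20 true samples and one no-template control.
samples = [f"S{i}" for i in range(1, 21)]
controls = ["NTC"]
table = {
    "asv_core": {**{s: 100 for s in samples}, "NTC": 3},  # widespread, kept
    "asv_reagent": {"S1": 4, "NTC": 250},                 # rare + in control
    "asv_rare": {"S2": 6},                                # rare, not in control
}
flagged = flag_contaminants(table, samples, controls)
print(sorted(flagged))
```

A widespread taxon that also shows a few reads in the control is kept under this rule, which is why the 10% prevalence threshold should be chosen with the study's biology in mind.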

Q3: My beta diversity PCoA plot shows strong batch effects clustering by sequencing run or extraction date. How can I diagnose and correct this? A: Technical variation from different reagent lots, personnel, or sequencing runs can overwhelm biological signal. This requires pre- and post-sequencing mitigation.

- Protocol: Batch Effect Mitigation

- Experimental Design: Include inter-run calibrators (the same sample library) across all sequencing batches.

- Bioinformatic Diagnosis: Perform PERMANOVA on your distance matrix using `run_date` or `batch` as a factor. A significant p-value confirms the batch effect.

- Correction: Use a batch-correction tool such as `ComBat` (from the `sva` R package) on the ASV/OTU count matrix (after centered log-ratio transformation) or on principal coordinates.
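The centered log-ratio (CLR) transformation mentioned for the correction step can be illustrated with a short Python sketch. The pseudocount of 0.5 used to handle zero counts is an assumption for this example; other zero-replacement strategies exist and may suit your data better.

```python
import math


def clr(counts, pseudocount=0.5):
    """Centered log-ratio transform of one sample's count vector.

    A pseudocount (an assumed value here) is added so zeros can be logged.
    """
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)
    return [value - mean_log for value in logs]


# Hypothetical ASV counts for a single sample.
sample_counts = [10, 0, 5, 85]
transformed = clr(sample_counts)
print([round(v, 3) for v in transformed])
```

CLR values for a sample always sum to zero, which is a quick sanity check before passing the matrix to a batch-correction tool.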

Q4: My positive control (mock community) shows unexpected taxa or imbalances. What does this indicate? A: This indicates bias in your wet-lab or analysis steps. A mock community with known, even abundances is the gold standard for assessing fidelity.

- Protocol: Mock Community Analysis

- Expected vs. Observed: Create a table comparing the theoretical composition to your observed composition.

- Calculate Metrics:

- Recall: % of expected taxa detected.

- Precision: % of detected taxa that were expected.

- Bias: Log-ratio of observed vs. expected abundance for each taxon.

- Action: Low recall suggests primer bias or loss during purification. Low precision indicates contamination. Systematic bias indicates PCR bias.
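Precision and the per-taxon log-ratio bias listed above can be computed as in this minimal sketch. The taxa and abundances are invented, and `precision` and `log2_bias` are hypothetical helper names; log base 2 is chosen here so that a value of -1 reads as "observed at half the expected abundance".

```python
import math


def precision(expected, observed):
    """Fraction of detected taxa that belong to the mock community."""
    detected = [t for t, a in observed.items() if a > 0]
    return sum(1 for t in detected if t in expected) / len(detected)


def log2_bias(expected, observed):
    """log2(observed / expected) for each expected taxon that was detected."""
    return {
        t: math.log2(observed[t] / expected[t])
        for t in expected
        if observed.get(t, 0) > 0
    }


# Hypothetical two-member mock community with one contaminant taxon "X".
expected = {"A": 0.5, "B": 0.5}
observed = {"A": 0.25, "B": 0.5, "X": 0.25}

print(round(precision(expected, observed), 3))
print(log2_bias(expected, observed))
```

Taxa that were expected but not detected are left out of the bias dictionary, since their log-ratio is undefined; they are already captured by recall.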

Table 1: Impact of Read Depth on Alpha Diversity Metrics

| Mean Reads/Sample | Shannon Index (Mean ± SD) | Observed ASVs (Mean ± SD) | Comment |

|---|---|---|---|

| >50,000 | 5.2 ± 0.3 | 250 ± 15 | Stable, reliable metrics. |

| 10,000 | 4.1 ± 0.8 | 180 ± 40 | Higher variability, rare taxa lost. |

| <5,000 | 3.0 ± 1.2 | 95 ± 55 | Metrics are unreliable and skewed. |
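To explore how depth affects these metrics on your own data, the Shannon index and a simple rarefaction (random subsampling without replacement) can be sketched as below. The counts and the fixed random seed are illustrative assumptions; production analyses would normally use an established package rather than this minimal version.

```python
import math
import random


def shannon(counts):
    """Shannon index (natural log) from a vector of taxon counts."""
    total = sum(counts)
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)


def rarefy(counts, depth, seed=0):
    """Subsample `depth` reads without replacement; returns new counts."""
    pool = [i for i, c in enumerate(counts) for _ in range(c)]
    picked = random.Random(seed).sample(pool, depth)
    out = [0] * len(counts)
    for i in picked:
        out[i] += 1
    return out


# Hypothetical sample: one dominant taxon and several rare ones.
counts = [9000, 600, 300, 60, 30, 10]
for depth in (5000, 500, 50):
    sub = rarefy(counts, depth)
    observed = sum(1 for c in sub if c > 0)
    print(depth, observed, round(shannon(sub), 3))
```

Running the loop at decreasing depths shows how rare taxa drop out first, which is the effect summarized in the table above.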

Table 2: Contamination Filtering Threshold Impact on Downstream Analysis

| Filtering Method | ASVs Remaining | % ASVs from Controls Removed | PERMANOVA R² (Condition) | PERMANOVA p-value (Batch) |

|---|---|---|---|---|

| No Filter | 1250 | 0% | 0.08 | 0.001 |

| Prevalence-Based | 980 | 12% | 0.15 | 0.003 |

| Prevalence + Quantitative | 875 | 18% | 0.22 | 0.120 |

Experimental Workflow Diagram

Diagram Title: 16S rRNA Amplicon Study Workflow with Critical QC Steps

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Rationale |

|---|---|

| Mock Microbial Community (e.g., ZymoBIOMICS) | Contains known, fixed ratios of microbial genomes. Serves as a positive control to quantify PCR/sequencing bias, compute recall/precision, and normalize across runs. |

| UltraPure Water/DNA Suspension Buffer | Certified nuclease-free and microbiome-free water for elution and PCR setup. Critical for reducing background contamination in negative controls. |

| High-Sensitivity Fluorometric DNA Assay Kit (e.g., Qubit) | Accurately quantifies double-stranded DNA without interference from primers, nucleotides, or RNA. Essential for equimolar library pooling. |

| Size-Selective Beads (e.g., AMPure XP) | For post-PCR clean-up to remove primer-dimers (<150bp) which consume sequencing reads and distort library quantification. |

| Phusion High-Fidelity DNA Polymerase | Polymerase with high fidelity and low GC bias to reduce amplification errors and compositional skewing during PCR. |

| Dual-Indexed Barcoded Primers (e.g., Nextera) | Unique barcodes for each sample to multiplex hundreds of samples per run while minimizing index-hopping crosstalk. |

| DNA LoBind Tubes | Reduce DNA adhesion to tube walls, improving yield and consistency, especially for low-biomass samples. |

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Our 16S amplicon sequencing shows a very low diversity in one sample batch, but high diversity in others. The protocol was identical. What primer-related issue could cause this? A1: This is a classic sign of primer mismatch bias. The conserved regions targeted by your primer pair may have sequence variants in the specific microbial communities in that batch. This inhibits amplification for certain taxa. Verify your primer sequences against updated databases like SILVA or Greengenes using tools like TestPrime. Consider using a primer set with broader degeneracy or a multi-primer approach.

Q2: We observe significant contamination with Pseudomonas sequences in our negative controls. What are the likely sources? A2: Pseudomonas is a common lab and reagent contaminant. Key sources include:

- DNA Extraction Kits: Some kits have demonstrated bacterial DNA carryover, including from Pseudomonas.

- Polymerase Enzymes: Taq polymerase derived from E. coli can contain traces of genomic DNA.

- Water and Buffers: Nuclease-free water is not always DNA-free.

- Laboratory Environment: Aerosols from high-titer cultures.

- Solution: Implement rigorous negative controls at every stage (extraction, PCR, sequencing). Use validated, "microbiome-grade" reagents that have been treated with DNA-damaging agents (e.g., DNase, UV irradiation). Replicate experiments and use bioinformatics tools (e.g., decontam R package) to identify and subtract contaminant sequences.

Q3: Our sequencing run had a high percentage of chimeric reads (>10%). How can we reduce this during library prep? A3: Chimeras form during PCR when an incomplete amplicon acts as a primer on a heterologous template. To minimize:

- Reduce PCR Cycle Count: Use the minimum number of cycles necessary for library construction (often 25-30, not 35+).

- Optimize Extension Time: Ensure extension time is sufficient for full-length amplicon synthesis.

- Use High-Fidelity Polymerase: Enzymes with 3'→5' exonuclease (proofreading) activity reduce mis-priming and incomplete extensions, though they may produce blunt ends. A blend of high-fidelity and standard Taq is often used.

- Employ Modified Primers: Primer pairs with 5' tags (adapters) can reduce chimera formation compared to primers where the adapter is added in a subsequent PCR.

- Post-Sequencing: Always use a robust chimera detection/removal tool (e.g., DADA2, UCHIME2) in your pipeline.

Q4: We get inconsistent community profiles between technical replicates. Could this be due to sequencing chemistry? A4: Yes, particularly if the inconsistency is in low-abundance taxa. Key factors are:

- Cluster Amplification Bias on the Flow Cell: During bridge amplification on Illumina platforms, some templates amplify more efficiently than others, leading to uneven representation.

- Phasing/Pre-Phasing Errors: As read length increases, a small percentage of molecules get out of sync, causing increased errors and lower quality scores toward the 3' end. This can lead to misassignment or loss of reads.

- Low Complexity Libraries: Amplicon pools have limited nucleotide diversity, especially at the start of reads, which can impair cluster detection and base calling on certain Illumina platforms (e.g., MiSeq v2 kits). Always spike-in with a high-diversity library (e.g., 10-20% PhiX) to correct for this.

Q5: What is the impact of different DNA polymerases on error rates and bias in 16S amplicon generation? A5: The polymerase choice critically impacts both fidelity and representation.

| Polymerase Type | Typical Error Rate (per bp) | Pros for 16S | Cons for 16S |

|---|---|---|---|

| Standard Taq | ~1.1 x 10⁻⁴ | Low cost, adds 3'A overhang for easy cloning. Can handle difficult templates. | Higher error rate, no proofreading, may increase chimeras. |

| High-Fidelity (e.g., Phusion, Q5) | ~4.4 x 10⁻⁷ | Very low error rate, reduces chimeras. | Blunt-end product, may exhibit bias against GC-rich or complex templates, slower. |

| "Microbiome-Optimized" Blends | ~5 x 10⁻⁶ | Engineered for fidelity and reduced bias, often includes Taq for A-tailing. | Higher cost, proprietary formulations. |

Detailed Experimental Protocol: Evaluating Primer Bias with Mock Communities

Objective: To quantify the bias introduced by different 16S rRNA gene primer sets.

Materials (Research Reagent Solutions):

| Item | Function |

|---|---|

| Genomic DNA from Mock Microbial Community (e.g., ZymoBIOMICS, BEI Resources) | Provides a known, stable standard of defined composition to measure bias against. |

| Candidate Primer Pairs (e.g., 27F/338R, 515F/806R, etc.) | Amplify the target hypervariable region(s). Must have Illumina adapter overhangs. |

| High-Fidelity PCR Master Mix | Reduces PCR-introduced errors that could confound bias assessment. |

| Magnetic Bead-based Cleanup System (e.g., AMPure XP) | For reproducible size selection and purification of amplicons. |

| High-Sensitivity DNA Assay Kit (e.g., Qubit, Bioanalyzer) | Accurate quantification for equimolar pooling. |

| Illumina Sequencing Reagents (e.g., MiSeq v3 600-cycle kit) | Provides sufficient read length for common amplicons. |

Methodology:

- Extraction Control: Extract DNA from the mock community according to its protocol. Include a negative extraction control.

- Amplification: For each primer set, perform triplicate 25µL PCR reactions.

- Template: 1-10ng mock community DNA.

- Cycles: 25-28 (avoid plateau phase).

- Include a no-template control (NTC) for each primer set.

- Purification: Clean amplicons with magnetic beads (0.8x ratio). Elute in nuclease-free water.

- Quantification & Pooling: Quantify each product fluorometrically. Pool equimolar amounts of amplicons from each primer set reaction.

- Sequencing: Sequence the pooled library on an Illumina platform with ≥20% PhiX spike-in.

- Bioinformatic Analysis:

- Process reads through a standardized pipeline (DADA2, QIIME2).

- Assign ASVs/OTUs against a curated reference database.

- Calculate Bias: Compare the observed proportions of each taxon in the data to the known proportions in the mock community for each primer set. Use metrics like Mean Absolute Error (MAE) or fold-change deviation.

Visualizations

Title: Experimental Workflow for Primer Bias Evaluation

Title: Major Bias and Error Sources Across 16S Workflow

This guide, part of a broader thesis on 16S rRNA amplicon sequencing quality control best practices, provides troubleshooting support for key data quality metrics.

FAQs & Troubleshooting Guides

Q1: What is a Q-score and what does a low score indicate? A: A Q-score (Phred quality score) is a per-base logarithmic measure of sequencing accuracy. A score of Q30 means a 1 in 1000 chance of an incorrect base call (99.9% accuracy). Low Q-scores at the 3' ends of reads are common due to signal decay.

Q2: Why is my read length shorter than expected after primer trimming? A: This is typically due to poor sample quality (degraded DNA) or issues during PCR amplification (inhibitors, suboptimal cycling conditions). It can also result from overly aggressive quality trimming.

Q3: My chimera rate is extremely high (>20%). What went wrong? A: High chimera rates are primarily caused by over-amplification during PCR (too many cycles) or using too little template DNA. Template reannealing during later PCR cycles leads to incomplete extensions, which then act as primers in subsequent cycles.

Q4: How do I interpret the summary table from my sequencing provider? A: Refer to the following table of benchmark values for 16S amplicon sequencing (e.g., V4 region, Illumina MiSeq):

| Metric | Good/Passing Range | Warning Range | Failure Range | Primary Cause of Failure |

|---|---|---|---|---|

| Q30 Score | ≥ 80% of bases | 70-79% | < 70% | Instrument issue, poor cluster generation |

| Mean Read Length | Within 10bp of expected* | 10-20bp shorter | >20bp shorter | Degraded DNA, PCR failure |

| Chimera Rate | < 5% | 5-10% | > 10% | Excessive PCR cycles, low template |

| Total Reads per Sample | ≥ 50,000 | 10,000 - 50,000 | < 10,000 | Quantification error, pooling issue |

*Example: For a 250bp V4 amplicon, expect ~250bp raw reads.
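The benchmark ranges in the table can be applied programmatically when screening many samples. The sketch below encodes the table's thresholds directly; the function name `qc_status` and its argument names are hypothetical, and values that fall between the table's stated ranges (for example a Q30 of 79.5%) are assigned to the warning band as a simplifying assumption.

```python
def qc_status(q30_pct, length_shortfall_bp, chimera_pct, reads):
    """Classify one sample against the benchmark table (pass / warning / fail)."""
    return {
        "q30": "pass" if q30_pct >= 80 else "warning" if q30_pct >= 70 else "fail",
        "read_length": (
            "pass" if length_shortfall_bp <= 10
            else "warning" if length_shortfall_bp <= 20
            else "fail"
        ),
        "chimera_rate": (
            "pass" if chimera_pct < 5 else "warning" if chimera_pct <= 10 else "fail"
        ),
        "reads": "pass" if reads >= 50_000 else "warning" if reads >= 10_000 else "fail",
    }


# Hypothetical sample summary from a sequencing provider.
status = qc_status(q30_pct=85, length_shortfall_bp=3, chimera_pct=7.5, reads=8_000)
print(status)
```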

Q5: Can I proceed with analysis if one metric fails? A: It depends. Low Q-scores can be filtered. Short reads may truncate the region. High chimeras must be removed prior to analysis, but if the rate is too high, it may irreparably reduce your sequence depth. Re-sequence if possible.

Detailed Protocols

Protocol 1: Calculating and Interpreting Q-scores from FASTQ Files

Method: Use a tool such as FastQC, or compute the scores directly in Python.

- For each base position in the read, the ASCII quality character is converted to a Phred score: `Q = ord(ascii_char) - 33` (for Sanger/Illumina 1.8+).

- Calculate the average Q-score per base position across all reads.

- Visualize the per-base sequence quality. Expect a drop in scores towards the 3' end.

- Apply a quality trim (e.g., using `Trimmomatic` or `cutadapt`) to remove bases below a threshold (e.g., Q20).
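The first two steps of this protocol can be sketched in plain Python. The example assumes uncompressed FASTQ with exactly four lines per record and a Phred+33 offset; the in-memory records are made up, and `per_position_mean_q` and `error_probability` are illustrative helper names.

```python
import io


def per_position_mean_q(handle, offset=33):
    """Mean Phred score at each read position from a 4-line-per-record FASTQ."""
    sums, counts = [], []
    for i, line in enumerate(handle):
        if i % 4 != 3:  # the quality string is the 4th line of each record
            continue
        for pos, ch in enumerate(line.rstrip("\n")):
            if pos == len(sums):
                sums.append(0)
                counts.append(0)
            sums[pos] += ord(ch) - offset
            counts[pos] += 1
    return [s / n for s, n in zip(sums, counts)]


def error_probability(q):
    """Probability of an incorrect base call for Phred score q."""
    return 10 ** (-q / 10)


# Two hypothetical reads; 'I' encodes Q40, '5' encodes Q20, '+' encodes Q10.
fastq = io.StringIO("@r1\nACGT\n+\nIIII\n@r2\nACG\n+\nI5+\n")
means = per_position_mean_q(fastq)
print(means)
print(error_probability(30))
```

For real files, replace the `io.StringIO` object with an opened file handle (or `gzip.open(path, "rt")` for compressed data).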

Protocol 2: Determining Chimera Rates with UCHIME or VSEARCH

Method: De novo chimera detection followed by filtering.

- Dereplicate sequences (`vsearch --derep_fulllength`).

- Sort by abundance (`vsearch --sortbysize`).

- Chimera detection: Run `vsearch --uchime_denovo` on the sorted, dereplicated sequences.

- Filter: Remove chimeric sequences from your main sequence file using the output chimera list.

- Calculate Rate: `(Number of chimeric sequences / Total sequences before filtering) * 100`.
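Because the sequences are dereplicated before chimera detection, counting unique sequences and counting reads can give very different rates. The sketch below computes a read-weighted rate, assuming the FASTA headers carry `;size=N` abundance annotations (as written when dereplicating with size output enabled). Headers without an annotation are counted as one read. The header names are invented and `chimera_rate` is a hypothetical helper.

```python
import re

SIZE_RE = re.compile(r";size=(\d+)")


def total_reads(fasta_lines):
    """Sum the ;size=N annotations over all FASTA headers (default 1 each)."""
    total = 0
    for line in fasta_lines:
        if line.startswith(">"):
            match = SIZE_RE.search(line)
            total += int(match.group(1)) if match else 1
    return total


def chimera_rate(chimera_lines, all_lines):
    """Read-weighted chimera rate in percent."""
    return 100.0 * total_reads(chimera_lines) / total_reads(all_lines)


# Hypothetical dereplicated input and the chimeras reported for it.
all_seqs = [">a;size=90", "ACGT", ">b;size=6", "ACGA", ">c;size=4", "ACGC"]
chimeras = [">c;size=4", "ACGC"]
print(chimera_rate(chimeras, all_seqs))
```

In this made-up case one of three unique sequences is chimeric (33%), but it accounts for only 4% of reads, so it is worth stating which definition a reported rate uses.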

Visualization: 16S Amplicon QC Workflow

Diagram 1: Core 16S Amplicon Data QC Workflow

Diagram 2: Chimera Formation via Incomplete PCR Extension

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in 16S Amplicon QC |

|---|---|

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Reduces PCR errors and chimera formation due to superior processivity and proofreading. |

| Validated Primer Set (e.g., 515F/806R for V4) | Ensures specific amplification of target region; reduces off-target products. |

| Quantitation Kit (Qubit dsDNA HS Assay) | Accurately measures DNA concentration for optimal template input into PCR. |

| PCR Purification or Size-Selection Beads (SPRI) | Removes primer dimers and non-specific products to ensure clean library preparation. |

| PhiX Control v3 (Illumina) | Balances diversity on flow cell for improved cluster detection and base calling. |

| DNeasy PowerSoil Pro Kit (Qiagen) | Standardized extraction for difficult samples; removes PCR inhibitors. |

The Critical Link Between Rigorous QC and Reproducible Microbiome Research

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our negative controls show high-amplitude 16S rRNA gene amplification. What are the likely causes and how can we resolve this? A: This indicates contamination, often from reagents or the lab environment. To resolve:

- Use Ultrapure Reagents: Employ commercially available, DNA-free, PCR-grade water and buffers certified for microbiome work.

- Include Rigorous Controls: Process multiple extraction blanks (no sample) and no-template PCR controls (NTCs) in every batch.

- Analyze Separately: Sequence controls alongside samples and apply bioinformatic contamination removal tools (e.g., `decontam` in R, prevalence or frequency method) before downstream analysis. If control reads exceed 1% of the average sample library size, the batch should be investigated and potentially re-run.

- Dedicated Workspace: Use a UV PCR hood for master mix preparation, separate from post-PCR and extraction areas.

Q2: We observe significant batch effects across different sequencing runs. How can we minimize and correct for this? A: Batch effects arise from technical variation. Mitigation strategies include:

- Experimental Design: Distribute samples from all experimental groups across multiple sequencing runs/lanes.

- Use of Technical Replicates: Include a homogenized sample or a mock microbial community as an inter-run calibrator in every batch.

- Bioinformatic Correction: Utilize tools designed for batch correction, such as `ComBat-seq` (part of the `sva` R package), which models batch effects and adjusts counts. Note: This should be applied after core microbiome processing (DADA2, decontamination) but before diversity metrics or differential abundance testing.
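The experimental-design point above (spreading every experimental group across runs) can be planned with a small script. This is one possible sketch using shuffled round-robin assignment within each group; the sample IDs, group labels, fixed seed, and the function name `assign_runs` are all illustrative assumptions.

```python
import random
from collections import defaultdict


def assign_runs(sample_groups, n_runs, seed=0):
    """Assign samples to runs so each group is spread evenly across runs.

    sample_groups: {sample_id: group_label}; returns {sample_id: run_index}.
    """
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample, group in sample_groups.items():
        by_group[group].append(sample)

    assignment = {}
    for group in sorted(by_group):
        members = sorted(by_group[group])
        rng.shuffle(members)  # randomize order within the group
        for i, sample in enumerate(members):
            assignment[sample] = i % n_runs  # round-robin across runs
    return assignment


# Hypothetical study: four cases and four controls over two sequencing runs.
groups = {
    "s1": "case", "s2": "case", "s3": "case", "s4": "case",
    "s5": "control", "s6": "control", "s7": "control", "s8": "control",
}
runs = assign_runs(groups, n_runs=2)
print(runs)
```

With this layout, run and condition are not confounded, so a batch term can later be estimated separately from the biological effect.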

Q3: Our replicate sample variability is higher than expected. What steps should we check in our wet lab protocol? A: High inter-replicate variability often stems from sample collection or early processing steps.

- Sample Homogenization: For solid samples (stool, soil), use a validated homogenization method (e.g., bead beating with defined bead size, speed, and time) to ensure a consistent microbial lysis across replicates.

- Standardized Input: Quantify input material by mass (for solids) or volume (for liquids) with high precision. Document any deviations.

- DNA Extraction Kit Lot Tracking: Record the lot numbers of all extraction and purification kits. Test new lots against old ones using a standard sample to identify kit-induced variability.

- PCR Cycle Number: Minimize PCR cycles (typically 25-30 cycles; avoid 35 or more) to reduce stochastic amplification bias and chimera formation. Use a polymerase with high fidelity.

Q4: After bioinformatic processing, our Positive Control (Mock Community) does not match the expected composition. What does this signify? A: This indicates bias or error in your wet-lab or computational pipeline.

- Check 1: Verify the expected composition of your mock community (e.g., ZymoBIOMICS, ATCC MSA-1000). Compare your observed relative abundances at the genus or species level.

- Check 2: Ensure your bioinformatics pipeline (from primer trimming to ASV/OTU clustering and taxonomy assignment) is using the correct reference database (e.g., SILVA, Greengenes) and version that matches your primer set (e.g., V4 region of 16S).

- Action: A significant deviation (e.g., a genus expected at 20% appearing at <5% or >40%) suggests PCR bias or database misalignment. You may need to optimize primer choice or use a mock-community-aware error correction in DADA2.

Experimental Protocols for Key QC Experiments

Protocol 1: Processing and QC of a Serial Dilution Mock Community

- Objective: To assess the sensitivity, limit of detection, and quantitative accuracy of the entire 16S amplicon pipeline.

- Materials: Defined mock community (e.g., ZymoBIOMICS Microbial Community Standard), DNA extraction kit, Qubit fluorometer, 16S rRNA gene primer set, polymerase.

- Method:

- Serially dilute the mock community genomic DNA across a 3-log range (e.g., from 1 ng/µL to 0.001 ng/µL).

- Process each dilution in triplicate through the identical PCR and sequencing protocol used for your samples.

- Sequence all replicates in the same run.

- Bioinformatic Analysis: Process data through your standard pipeline. For each dilution, calculate:

- Observed Richness: Number of ASVs/OTUs detected vs. expected.

- Relative Abundance Correlation: Pearson correlation between observed and expected relative abundances.

- Limit of Detection: The lowest dilution at which all expected community members are detected.
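The correlation and limit-of-detection calculations can be sketched as follows. The example uses a staggered (uneven) mock profile because Pearson correlation is undefined when the expected abundances are all identical. The dilution values, taxon names, and the helpers `pearson` and `limit_of_detection` are assumptions for illustration.

```python
import math


def pearson(x, y):
    """Pearson correlation; undefined (division by zero) if either input is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def limit_of_detection(detected_by_conc, expected_taxa):
    """Lowest input concentration at which every expected taxon was detected."""
    passing = [
        conc for conc, taxa in detected_by_conc.items()
        if set(expected_taxa) <= set(taxa)
    ]
    return min(passing) if passing else None


# Hypothetical staggered mock community and one observed profile.
expected = [0.60, 0.30, 0.10]
observed = [0.55, 0.33, 0.12]
print(round(pearson(expected, observed), 3))

# Hypothetical detections per input concentration (ng/uL).
detected = {1.0: {"A", "B", "C"}, 0.1: {"A", "B", "C"}, 0.01: {"A", "B"}, 0.001: {"A"}}
print(limit_of_detection(detected, {"A", "B", "C"}))
```

With triplicates, a stricter variant would require detection in all replicates at a given dilution before counting it as passing.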

Protocol 2: Inter-Batch Calibration Using a Homogenized Control Sample

- Objective: To monitor and correct for technical variation between sequencing runs or extraction batches.

- Materials: A large, homogenized biological sample (e.g., pooled stool, soil), aliquoted for long-term use.

- Method:

- Create Control Aliquots: From the homogenized material, create single-use aliquots for DNA extraction.

- Include in Every Batch: In every extraction batch (max 1-2 per week), include one control aliquot. Subsequently, include its extracted DNA in every PCR/sequencing run.

- Analysis: After processing, perform Principal Coordinates Analysis (PCoA) on a beta-diversity metric (e.g., Weighted UniFrac). The control samples should cluster tightly. Dispersion indicates batch effect strength. Use these controls as a stable reference for tools like `ComBat-seq`.

Data Presentation

Table 1: Impact of QC Steps on Data Reproducibility (Hypothetical Data from Mock Community Analysis)

| QC Step Implemented | Correlation (r) to Expected Composition* | Coefficient of Variation (CV) across Replicates* | ASVs Detected in NTCs* |

|---|---|---|---|

| No Specific QC (Baseline) | 0.65 | 25% | 15 |

| Ultrapure Reagents + Dedicated Hood | 0.78 | 18% | 3 |

| Baseline + Bioinformatic Decontamination | 0.80 | 22% | 0 |

| All Steps (Rigorous QC) | 0.95 | 8% | 0 |

*Data represent simulated averages based on common findings in recent literature (e.g., Microbiome, ISME J).

Table 2: Essential Research Reagent Solutions for 16S rRNA Gene Amplicon QC

| Item | Function | Example/Note |

|---|---|---|

| DNA-free Water | Serves as the elution and master mix component; critical for reducing background contamination. | Qiagen PCR Grade Water, Invitrogen UltraPure DNase/RNase-Free Water. |

| Certified Low-Biomass Extraction Kits | Optimized for maximal lysis with minimal contaminant DNA carryover. | Qiagen DNeasy PowerSoil Pro Kit, MoBio PowerLyzer PowerSoil Kit. |

| Defined Mock Community (gDNA) | Validates entire workflow from extraction to bioinformatics for accuracy and sensitivity. | ZymoBIOMICS Microbial Community Standard, ATCC MSA-1000. |

| High-Fidelity Polymerase | Reduces PCR errors and chimera formation, improving ASV accuracy. | Q5 Hot Start High-Fidelity (NEB), Phusion Plus (Thermo). |

| Quantification Standards | Accurately measures DNA concentration for standardized input. | Qubit dsDNA HS Assay Kit (preferred over UV absorbance). |

| Indexed Primers & Sequencing Kit | Enables multiplexing; kit quality affects read length and quality scores. | Illumina 16S Metagenomic Sequencing Library Prep, Nextera XT Index Kit. |

Mandatory Visualizations

Title: 16S Amplicon Workflow with Critical QC Checkpoints

Title: Balanced vs Confounded Batch Study Design

The Modern QC Pipeline: Step-by-Step Protocols from Raw Reads to Analysis-Ready Data

FAQs & Troubleshooting Guide

Q1: My FastQC report shows "Per base sequence quality" is a red 'FAIL' for my 16S amplicon reads (e.g., V3-V4 region). What does this mean and how do I fix it? A: A red 'FAIL' typically indicates a significant drop in median quality scores (often below Q20) towards the ends of reads. For 16S sequencing, this is common due to diminishing signal from sequencing cycles.

- Primary Cause: Sequencing chemistry artifacts or low-diversity library issues common in amplicon pools.

- Action:

- Trimming: Use a quality-aware trimmer like Trimmomatic or Cutadapt to remove low-quality bases from the 3' ends. A standard starting point is to trim where average quality drops below Q20.

- Review MultiQC: Check if the issue is systematic across all samples. If only one sample fails, it may be a library-specific issue.

- Confirm Adapter Content: Poor quality often coincides with adapter read-through. Ensure adapter trimming is performed.
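To make the trimming rule concrete, here is a simplified sketch of sliding-window quality trimming in Python. It cuts the read at the start of the first window whose mean quality falls below the threshold. This illustrates the idea only; Trimmomatic's own implementation differs in detail, and the function names are assumptions made for this example.

```python
def phred_scores(quality_string, offset=33):
    """Decode a FASTQ quality string (Phred+33 by default) into integers."""
    return [ord(char) - offset for char in quality_string]

def sliding_window_trim(seq, quals, window=4, min_mean_q=20):
    """Cut the read where the first low-quality window begins."""
    for start in range(len(seq) - window + 1):
        window_quals = quals[start:start + window]
        if sum(window_quals) / window < min_mean_q:
            return seq[:start], quals[:start]
    return seq, quals  # no window fell below the threshold

# Hypothetical read: six high-quality bases followed by four poor ones
seq = "ACGTACGTAC"
quals = [38] * 6 + [10] * 4
trimmed_seq, trimmed_quals = sliding_window_trim(seq, quals)
print(trimmed_seq)
```

Because the window averages over several bases, a single poor base inside an otherwise good region does not trigger a cut.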

Q2: After running FastQC on multiple samples, the volume of reports is overwhelming. How can I efficiently compare quality across my entire 16S dataset? A: This is the exact use case for MultiQC.

- Solution: Run MultiQC in the directory containing all your FastQC output (`multiqc .`). It will aggregate key metrics into a single, interactive HTML report.

- Troubleshooting MultiQC Output: If MultiQC fails to find reports, ensure the FastQC output files have the standard `.zip` or `_fastqc.html` suffix. Use `multiqc -f .` to force a re-run.

Q3: The "Per sequence GC content" module in FastQC shows a sharp, abnormal peak for my 16S amplicon data. Is this a problem? A: Not necessarily. A sharp, unimodal peak in GC content is expected for 16S amplicon data because you are sequencing a conserved, specific genomic region across all bacteria in the sample.

- Interpretation: A single, narrow peak is a "PASS" for amplicon studies, indicating high specificity and lack of contamination from organisms with vastly different GC content. A broad or multi-peak distribution would be a cause for concern, suggesting contamination or poor amplification specificity.

Q4: My "Sequence Duplication Levels" are very high (>50%). Does this mean I have over-sequenced my 16S library? A: High duplication levels are standard and expected in 16S amplicon sequencing due to the limited diversity of the starting template (PCR amplicons of the same region).

- Key Distinction: In whole-genome sequencing, high duplication often indicates PCR over-amplification or low library complexity. In 16S amplicon sequencing, it reflects the natural outcome of amplifying a conserved region. Rather than acting on the FastQC duplication warning, rely on the dereplication step in your DADA2 or Deblur pipeline, which collapses identical reads into unique sequences with abundance counts.
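The collapsing of identical reads can be illustrated with a short sketch. It shows exact-match dereplication only and is not how DADA2 or Deblur are implemented internally. Note also that FastQC estimates duplication differently from the simple fraction computed here.

```python
from collections import Counter

def dereplicate(reads):
    """Collapse identical reads into (sequence, abundance) pairs, most abundant first."""
    return Counter(reads).most_common()

def duplication_fraction(reads):
    """Fraction of reads that are repeats of an already-seen sequence."""
    return 1 - len(set(reads)) / len(reads)

# Hypothetical amplicon reads: a few unique sequences make up the whole sample
reads = ["ACGT"] * 6 + ["ACGA"] * 3 + ["TTTT"]
print(dereplicate(reads))
print(duplication_fraction(reads))
```

In this toy sample most reads are duplicates, yet the dereplicated output preserves all of the information a denoiser needs: each unique sequence and its abundance.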

Q5: How do I differentiate between a systematic sequencing run failure and a single bad sample from the FastQC/MultiQC reports? A: Use MultiQC's trend plots and compare samples across the run.

- Systematic Issue (e.g., Flow Cell Defect): All samples will show a similar pattern of quality drop at a specific cycle, or uniformly low scores. This may require discussion with your sequencing facility.

- Single Sample Issue: One sample will be an obvious outlier in multiple modules (Quality Scores, GC Content, Adapter Content, Total Sequences). This sample likely had a library preparation problem and may need to be excluded or reprocessed.

Key Metrics & Interpretation Table

The following table summarizes critical FastQC modules and their interpretation in the context of 16S amplicon sequencing.

| FastQC Module | Typical "Good" Result (WGS) | Typical 16S Amplicon Result | Reason for 16S Deviation | Recommended Action for 16S QC |

|---|---|---|---|---|

| Per Base Sequence Quality | High scores (Q>30) across all bases. | Quality drop at read ends. | Sequencing chemistry limits. | Quality-based trimming of 3' ends. |

| Per Sequence GC Content | Roughly normal distribution. | Sharp, single peak. | Low sequence diversity from amplicon. | None required. Confirm single peak. |

| Sequence Duplication Levels | Low percentage of duplicates. | Very high duplication (>50%). | PCR amplification of identical templates. | Use DADA2/Deblur dereplication; ignore the FastQC warning. |

| Overrepresented Sequences | Few to none. | Common (primers, adapters). | Known primer sequences are expected. | Must identify and trim adapters/primers. |

| Adapter Content | Low to zero. | May increase at read ends post-quality drop. | Read-through after amplicon sequence ends. | Trim adapters with a dedicated tool (Cutadapt). |

Experimental Protocol: Integrated Raw Read QC for 16S Data

This protocol outlines the steps from receiving sequencing data to generating a cleaned feature table, with embedded QC.

1. Initial Quality Assessment & Report Aggregation

2. Primer/Adapter Trimming & Quality Filtering

- Tool: Cutadapt (for primers) + Trimmomatic (for quality), or a single tool like fastp.

- Example (Cutadapt for V3-V4 primers):

- Example (Trimmomatic for quality):

3. Post-Cleaning QC Verification

4. Denoising & ASV/OTU Generation (with built-in QC)

- Tool: DADA2 (in R). This step inherently performs error modeling, read merging, and chimera removal.
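As an illustration of step 2, the sketch below assembles a paired-end Cutadapt command for V3-V4 primers in Python without executing it. It assumes the widely used 341F/805R primer pair; the file names and the chosen thresholds are hypothetical and should be replaced with values that suit your data.

```python
import shlex

# Assumed V3-V4 primer pair (341F / 805R); confirm against your own protocol
FWD_PRIMER = "CCTACGGGNGGCWGCAG"
REV_PRIMER = "GACTACHVGGGTATCTAATCC"

def cutadapt_command(r1, r2, out1, out2, error_rate=0.1, min_overlap=5, min_length=50):
    """Build (but do not run) a paired-end Cutadapt primer-trimming command."""
    return [
        "cutadapt",
        "-g", FWD_PRIMER,            # 5' primer expected on read 1
        "-G", REV_PRIMER,            # 5' primer expected on read 2
        "-e", str(error_rate),       # maximum error rate for a primer match
        "-O", str(min_overlap),      # minimum overlap required for a match
        "--minimum-length", str(min_length),
        "--discard-untrimmed",       # drop pairs in which no primer was found
        "-o", out1, "-p", out2,
        r1, r2,
    ]

# Hypothetical file names
cmd = cutadapt_command("sample_R1.fastq.gz", "sample_R2.fastq.gz",
                       "trimmed_R1.fastq.gz", "trimmed_R2.fastq.gz")
print(shlex.join(cmd))
```

The printed line can be pasted into a shell, or the list can be passed to `subprocess.run` once Cutadapt is installed. Re-running FastQC and MultiQC on the trimmed files corresponds to step 3.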

Workflow Diagram

Title: 16S Amplicon Raw Read QC & Processing Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in 16S Amplicon QC |

|---|---|

| Cutadapt | Software tool to find and remove primer/adapter sequences from raw reads. Critical for preventing false merges and downstream errors. |

| Trimmomatic / fastp | Quality filtering tools that remove low-quality bases from read ends and discard reads below a length threshold. |

| DADA2 | R package that models and corrects Illumina-sequencing errors, merges paired-end reads, removes chimeras, and infers Amplicon Sequence Variants (ASVs). |

| QIIME 2 | A comprehensive, plugin-based microbiome analysis platform that can encapsulate the entire QC and processing pipeline (using plugins for demux, cutadapt, DADA2, etc.). |

| Phenol:Chloroform:Isoamyl Alcohol | Used in manual DNA extraction protocols to separate proteins and lipids from nucleic acids, providing high-quality template DNA for PCR. |

| Magnetic Bead-based Cleanup Kits | (e.g., AMPure XP). Used for PCR product purification to remove primers, dimers, and salts before library quantification and pooling. Essential for even sequencing depth. |

| Quant-iT PicoGreen dsDNA Assay | A fluorescent dye used to accurately quantify double-stranded DNA library concentration after cleanup, ensuring optimal loading onto the sequencer. |

| PhiX Control v3 | A spike-in control for Illumina runs. Adds sequence diversity to low-diversity amplicon libraries, improving cluster identification and base calling accuracy. |

Welcome to the Technical Support Center for Amplicon Sequence Quality Control. This resource, developed as part of a doctoral thesis on 16S amplicon data quality control best practices, provides targeted troubleshooting for primer and adapter trimming.

Frequently Asked Questions & Troubleshooting Guides

Q1: My post-trimming sequence length is much shorter than expected. What are the primary causes? A: This is often due to over-trimming. Common causes and solutions:

- Cause 1: Overlapping Primer/Adapter Sequences. If your adapter sequence partially matches your primer or gene region, the trimmer may remove valid sequence.

- Solution: Use the `--overlap` (`-O`) parameter in Cutadapt to require a minimum overlap (e.g., 3-5 bp) before a match is trimmed. This increases specificity. In Trimmomatic, the clip thresholds of the ILLUMINACLIP step play the comparable role.

- Cause 2: Low Quality Bases Within the Amplicon. Aggressive quality trimming can remove internal bases.

- Solution: Perform quality trimming before primer/adapter removal. Use a sliding window approach (e.g., Trimmomatic's `SLIDINGWINDOW:4:20`) that trims only when average quality drops below a threshold within the window.

- Cause 3: Incorrect Primer Sequence Provided. A single nucleotide mismatch can prevent trimming, leading to retention of the full primer and skewed length reports.

- Solution: Verify your primer sequence files for typos. Consider allowing 1-2 mismatches (`-e 0.1` in Cutadapt) to account for synthesis errors or minor sequence variants.

Q2: Should I allow mismatches when specifying primer sequences, and if so, how many? A: Yes, allowing a small number of mismatches is a recommended best practice to account for sequencing errors and natural variation. However, the value must be balanced to avoid non-specific trimming.

- Recommendation: Start with a 10-15% error rate (e.g., `-e 0.1` in Cutadapt, which permits 1 mismatch in a 10 bp overlap). For longer primer matches (>20 bp), you can be more stringent (e.g., `-e 0.05`).

- Critical Parameter: Always pair mismatch allowance with a minimum overlap requirement (`-O` or `--overlap`) to ensure the match is meaningful. A typical setting is `-e 0.1 -O 5`.
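The relationship between error rate and tolerated mismatches can be checked with simple arithmetic. The sketch below assumes the number of allowed errors is the matched length multiplied by the error rate, rounded down, which is how the Cutadapt documentation describes it; the helper name is illustrative.

```python
import math

def allowed_errors(match_length, error_rate):
    """Errors tolerated for a match of the given length (rounded down)."""
    # The small epsilon guards against floating-point products such as 28.999999
    return math.floor(match_length * error_rate + 1e-9)

for length in (5, 9, 10, 17, 21):
    print(length, allowed_errors(length, 0.1))
```

With `-e 0.1`, matches shorter than 10 bp must be exact, while a full-length 17-21 nt primer tolerates one or two errors. This is why a minimum overlap matters: very short matches carry no mismatch allowance and can still occur by chance.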

Q3: What is the difference between "trimming" and "cutting" primers, and which should I use? A: This distinction is crucial for downstream analysis.

- Trimming: Removes the primer/adapter sequence only if it is found. If not found, the read is kept unchanged. This is the standard mode in tools like Cutadapt.

- Cutting (or Hard-Trimming): Removes a fixed number of bases from the start and/or end of every read, regardless of sequence. Use this when you are absolutely certain of your amplicon length and primer position (e.g., for standardized mock communities).

- Recommendation: For environmental 16S studies with length variation, use sequence-based trimming. Reserve cutting for controlled quality assessment experiments.

Q4: How do I handle paired-end reads where only one read contains the adapter? A: Unbalanced trimming in paired-end reads can cause them to be discarded during merging, drastically reducing data yield.

- Solution: Process both files in a single Cutadapt run so the pairs stay synchronized, and control filtering with the `--pair-filter` option. The setting `--pair-filter=any` discards a pair if either read meets a filtering criterion (e.g., falls below the minimum length); `--pair-filter=both` is more lenient and discards a pair only when both reads do. Specify adapters for read 2 with the `-A`/`-G`/`-B` options, the read 2 counterparts of `-a`/`-g`/`-b`, so that adapters present on only one mate are still removed.

Quantitative Tool Comparison

The following table summarizes key parameters and performance metrics for common trimming tools, as benchmarked in recent literature.

Table 1: Comparison of Primer/Adapter Trimming Tools for 16S Amplicon Data

| Tool | Primary Use | Key Strength for 16S | Critical Parameter for Specificity | Typical Runtime (1M PE reads)* |

|---|---|---|---|---|

| Cutadapt | Adapter/Primer Removal | Precise sequence matching, flexible error tolerance | -O (min overlap), -e (error rate) | 2-3 minutes |

| Trimmomatic | General Quality & Adapter Trimming | Integrated quality control in one step | LEADING, TRAILING, SLIDINGWINDOW | 3-5 minutes |

| fastp | All-in-one QC | Ultra-fast, integrated adapter & poly-G trimming | --detect_adapter_for_pe, --trim_poly_g | <1 minute |

| Atropos | Adapter/Primer Removal | Supports multiple alignment algorithms | -a, -A, --aligner | 4-6 minutes |

*Runtime benchmarks are approximate and depend on system specifications.

Detailed Experimental Protocol: Validating Trimming Efficiency

Protocol: Spike-in Control for Trimming Accuracy

This protocol is designed to empirically measure primer/adapter trimming performance within a 16S sequencing run.

Objective: To quantify the false-negative (missed trim) rate of your trimming parameters.

Materials: See "Research Reagent Solutions" below.

Methodology:

- Spike-in Oligo Design: Synthesize a 300 bp dsDNA fragment that is phylogenetically neutral (does not match your target sample) but contains your exact forward and reverse primer sequences at its 5' and 3' ends, respectively.

- Library Preparation: Spike this control fragment into your genomic DNA extract at a known molar ratio (e.g., 1% of total DNA) prior to PCR amplification.

- Sequencing & Processing: Sequence the library normally. Process the raw data through your standard trimming pipeline.

- Analysis:

- Map all post-trimmed reads to the reference spike-in sequence.

- Calculate the percentage of spike-in reads that retain any primer sequence (≥ 5 bp match) after trimming. This is your false-negative rate.

- Examine a subset of your genuine 16S reads to check for over-trimming (loss of conserved region bases).
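The false-negative calculation in the analysis step could be sketched as follows. This is a simplified illustration that assumes the spike-in reads have already been identified by mapping; it searches the start of each trimmed read for any 5 bp stretch of the primer, expanding IUPAC codes. The function names and the 30 bp search window are assumptions, and short k-mers can match by chance, so the spike-in insert should be designed to avoid them.

```python
import re

IUPAC = {"A": "A", "C": "C", "G": "G", "T": "T", "R": "[AG]", "Y": "[CT]",
         "S": "[CG]", "W": "[AT]", "K": "[GT]", "M": "[AC]", "B": "[CGT]",
         "D": "[AGT]", "H": "[ACT]", "V": "[ACG]", "N": "[ACGT]"}

def primer_kmer_patterns(primer, k=5):
    """One regex per k-mer of the primer, with degenerate bases expanded."""
    return [re.compile("".join(IUPAC[base] for base in primer[i:i + k]))
            for i in range(len(primer) - k + 1)]

def retains_primer(read, patterns, search_window=30):
    """True if any primer k-mer is still present near the 5' end of the read."""
    head = read[:search_window]
    return any(pattern.search(head) for pattern in patterns)

def false_negative_rate(trimmed_spikein_reads, primer, k=5):
    """Share of spike-in reads that still carry at least k bases of primer."""
    patterns = primer_kmer_patterns(primer, k)
    missed = sum(retains_primer(read, patterns) for read in trimmed_spikein_reads)
    return missed / len(trimmed_spikein_reads)

# Hypothetical trimmed reads; the first still carries the tail of the primer
reads = ["GGCAGCAG" + "T" * 40, "T" * 50, "A" * 50, "T" * 50]
print(false_negative_rate(reads, "CCTACGGGNGGCWGCAG"))
```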

Visualization of Workflows

Diagram 1: Decision Workflow for Trimming Parameter Selection

Diagram 2: Amplicon Read Processing Stages

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Trimming Validation Experiments

| Item | Function in Validation | Example/Note |

|---|---|---|

| Synthetic Spike-in DNA Control | Contains known primer sequences to empirically measure trimming efficiency. | Custom 300 bp gBlock or dsDNA fragment. Must differ from sample background. |

| Quantitative PCR (qPCR) Assay | Precisely quantifies spike-in DNA concentration for accurate spiking. | Assay specific to the spike-in fragment sequence. |

| Mock Microbial Community (DNA) | Provides a known truth set for evaluating over-trimming impact on community structure. | ZymoBIOMICS or ATCC Mock Community Standards. |

| Benchmarking Software | Automates calculation of precision/recall for trimming. | seqkit for sequence stats, custom Python/R scripts for analysis. |

| High-Fidelity Polymerase | Minimizes PCR errors in spike-in and mock community amplicons. | Q5, KAPA HiFi, or Phusion. Critical for accurate controls. |

Technical Support Center: Troubleshooting & FAQs

Q1: During DADA2 denoising, I receive the error "Error in dada(...) : Sequence abundances do not agree with the denoised output." What does this mean and how do I resolve it?

A1: This error typically indicates sample inference failure due to an insufficient number of reads after quality filtering or a severe drop in quality. First, inspect your quality profiles using plotQualityProfile(). Ensure your truncation parameters (truncLen) are appropriate and that you are not trimming into low-quality regions too aggressively. Increase the maxEE parameter to allow more expected errors per read. Also, verify that you have not accidentally swapped forward and reverse read files.
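To see how `truncLen` and `maxEE` interact, it helps to compute expected errors by hand. The sketch below uses the standard definition, the sum of per-base error probabilities 10^(-Q/10), and mirrors the filtering logic only in outline: reads shorter than the truncation length are dropped, and the remainder are truncated before the expected-error check. It is an illustration in Python, not DADA2 code.

```python
def expected_errors(quals):
    """Sum of per-base error probabilities implied by Phred scores."""
    return sum(10 ** (-q / 10) for q in quals)

def passes_max_ee(quality_string, trunc_len, max_ee=2.0, offset=33):
    """Truncate to trunc_len, then test expected errors against max_ee."""
    if len(quality_string) < trunc_len:
        return False  # reads shorter than the truncation length are discarded
    quals = [ord(char) - offset for char in quality_string[:trunc_len]]
    return expected_errors(quals) <= max_ee

# Hypothetical read of 250 bases, all at Q20 ("5" in Phred+33)
read_quals = "5" * 250
print(passes_max_ee(read_quals, trunc_len=250))  # about 2.5 expected errors
print(passes_max_ee(read_quals, trunc_len=150))  # about 1.5 expected errors
```

Shortening the truncation length removes low-quality tail bases and lowers expected errors, which is often a better first adjustment than raising `maxEE`.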

Q2: When running Deblur, the process is extremely slow on my large dataset. Are there parameters to improve performance?

A2: Yes. Deblur can be computationally intensive. Use the --jobs-to-start parameter to parallelize across multiple cores. For 16S data, ensure you are using the appropriate reference positive seeds (e.g., 88_otus.fasta for 88% OTU clustering reference) to reduce the search space. Pre-filtering your sequences to remove those with ambiguous bases (N) and very low-quality reads using tools like quality-filter before input into Deblur can significantly speed up the workflow.

Q3: In traditional OTU clustering with VSEARCH/UPARSE, I get very few OTUs compared to expected diversity. What could be the issue?

A3: This is often caused by overly aggressive chimera removal or clustering threshold mismatch. First, check the chimera detection step. Consider using a reference database (like SILVA) for chimera checking instead of de novo. Ensure the clustering identity threshold (--id) matches your region (e.g., 97% for full-length 16S). Also, check for low sequence count samples that may be discarded during singleton or low-count filtering; you may need to adjust the --minsize parameter.

Q4: After running any pipeline, my final feature table has samples with zero reads. Why did this happen?

A4: This is a sample drop-out issue. It commonly occurs during stringent quality filtering or denoising when all reads from a sample are removed. Diagnose by checking read counts after each step (trimming, filtering, denoising/merging). Loosen filtering criteria (maxEE, truncQ) for the affected samples in a separate run. Ensure your sample metadata file matches the sequence file names exactly. Batch effects from sequencing runs can also cause this; process problematic samples separately if needed.
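Checking read counts after each step is easy to automate. A minimal sketch, assuming per-step counts have been collected into a dictionary (for example from a DADA2 read-tracking table); the step names and sample data are hypothetical.

```python
def find_dropout_step(read_counts, steps, min_reads=1):
    """Return, for each failing sample, the first step with fewer than min_reads reads."""
    dropouts = {}
    for sample, counts in read_counts.items():
        for step in steps:
            if counts.get(step, 0) < min_reads:
                dropouts[sample] = step
                break
    return dropouts

# Hypothetical per-step read counts
steps = ["raw", "trimmed", "filtered", "denoised", "merged"]
read_counts = {
    "gut_01":   {"raw": 52000, "trimmed": 50100, "filtered": 41000, "denoised": 40200, "merged": 36500},
    "blank_01": {"raw": 310, "trimmed": 280, "filtered": 0, "denoised": 0, "merged": 0},
}
print(find_dropout_step(read_counts, steps))
```

Knowing the step at which a sample reaches zero points to the parameter to revisit, such as `maxEE` or `truncQ` when the loss occurs at filtering.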

Q5: How do I choose between DADA2's pool = "pseudo" and pool = FALSE options?

A5: Use pool = FALSE (independent sample inference) for large datasets (>100 samples) or when computational resources are limited. Use pool = "pseudo" for smaller datasets or when you have low-biomass samples with very few unique sequences; pseudo-pooling improves sensitivity to rare variants by sharing information between samples. Do not use pool = TRUE (full pooling) on large datasets due to excessive memory use.

Q6: I see "WARNING: Read ... too short after truncation." in my Deblur log. Should I be concerned?

A6: This warning indicates some reads were shorter than your specified trim length. If the number of such warnings is low (<1% of reads), it is generally not a problem. If high, revisit your trim length setting. Note that the --mean-error parameter sets the per-nucleotide error rate assumed by the denoising model and does not affect truncation, so changing it will not resolve this warning. Ensure your input sequences have been properly trimmed of primers and adapters prior to Deblur.

Quantitative Comparison Table

| Feature | DADA2 | Deblur | Traditional OTU Clustering (VSEARCH/UPARSE) |

|---|---|---|---|

| Core Algorithm | Parametric error model & sample inference. | Error profile based on positive filters & a greedy heuristic. | Distance-based clustering (e.g., at 97% identity). |

| Output Unit | Amplicon Sequence Variant (ASV). | Amplicon Sequence Variant (ASV). | Operational Taxonomic Unit (OTU). |

| Resolution | Single-nucleotide difference. | Single-nucleotide difference. | Defined by clustering threshold (e.g., 97%). |

| Chimera Removal | Integrated, based on consensus method. | Integrated, via positive filter alignment. | Separate step (e.g., uchime_denovo or uchime_ref). |

| Handles Indels | Yes (via alignment in core algorithm). | Yes (via sequence alignment to positive filter). | No, typically treats indels as mismatches. |

| Typical Run Time | Medium to High. | Low to Medium (after initial quality filtering). | Low. |

| Key Parameter | maxEE, truncLen, pool. | trim_length, mean_error. | --id (clustering %), --maxaccepts. |

| Denoises Sequencing Errors | Yes. | Yes. | No, errors can inflate OTU counts. |

| Requires Parameter Tuning | High (per-dataset quality inspection). | Medium (mainly trim length). | Low. |

Experimental Protocol: Benchmarking Denoising/Clustering Methods

Objective: To compare the performance of DADA2, Deblur, and Traditional OTU Clustering on a mock community 16S rRNA gene amplicon dataset.

Materials:

- Mock community FASTQ files (forward and reverse reads).

- Known reference sequences and composition for the mock community.

- Computing environment with QIIME 2, DADA2, and VSEARCH installed.

Procedure:

- Data Preparation: Import paired-end reads into QIIME 2 using `qiime tools import`. Create a sample metadata file.

- Primer Trimming: Trim primers using `qiime cutadapt trim-paired`.

- Method-Specific Processing:

- DADA2: Run `qiime dada2 denoise-paired` with parameters set based on `plotQualityProfile` output (e.g., `--p-trunc-len-f 240 --p-trunc-len-r 200 --p-max-ee-f 2 --p-max-ee-r 2`). Output: ASV table and representative sequences.

- Deblur: First, join paired reads using `qiime vsearch join-pairs`. Then, quality filter with `qiime quality-filter q-score`. Run `qiime deblur denoise-16S` with `--p-trim-length 400`.

- Traditional OTU Clustering: Use the DADA2-denoised but non-chimera-removed sequences as input. Cluster at 97% identity using `qiime vsearch cluster-features-de-novo` with `--p-perc-identity 0.97`.

- Evaluation: For each output table (ASV/OTU), compare to the known mock community truth set using `qiime fragment-insertion sepp` and `qiime diversity beta-correlation`, or calculate recall (sensitivity) and precision (positive predictive value) of taxa identification.
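The recall and precision calculation in the evaluation step can be sketched in a few lines. This assumes each method's output has been reduced to a set of taxon labels at a common rank; the genus names below are hypothetical placeholders for a mock community.

```python
def recall_precision(observed_taxa, expected_taxa):
    """Recall and precision of detected taxa against a known mock community."""
    observed, expected = set(observed_taxa), set(expected_taxa)
    true_positives = len(observed & expected)
    recall = true_positives / len(expected) if expected else 0.0
    precision = true_positives / len(observed) if observed else 0.0
    return recall, precision

# Hypothetical truth set and pipeline output
expected = {"Bacillus", "Listeria", "Escherichia", "Salmonella"}
observed = {"Bacillus", "Listeria", "Escherichia", "Ralstonia", "Pseudomonas"}
print(recall_precision(observed, expected))
```

Running the same function on the DADA2, Deblur, and OTU outputs gives directly comparable numbers: missed mock members lower recall, while spurious taxa (often contaminants or uncorrected errors) lower precision.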

Workflow Diagrams

Diagram 1: Comparative Analysis Workflow

Diagram 2: DADA2 Sample Inference Algorithm

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function in 16S rRNA Amplicon QC & Analysis |

|---|---|

| Mock Community Genomic DNA | Positive control containing known bacterial sequences at defined ratios. Critical for benchmarking pipeline accuracy (recall/precision). |

| Nuclease-free Water | Used for PCR and library preparation dilutions. Prevents sample degradation and contamination. |

| High-Fidelity DNA Polymerase | Reduces PCR errors during initial amplification, providing more accurate starting sequences for denoising algorithms. |

| Dual-Indexed PCR Primers | Allows multiplexing of samples. Correct trimming of these indices is essential for demultiplexing before denoising. |

| AMPure XP Beads | For post-PCR cleanup and size selection. Ensures removal of primer dimers and non-target fragments, improving read quality. |

| PhiX Control v3 | Spiked into sequencing runs for quality monitoring and error rate calibration, indirectly supporting denoising. |

| Qubit dsDNA HS Assay Kit | Accurately quantifies DNA library concentration before sequencing to ensure balanced sample representation. |

| Bioanalyzer DNA High Sensitivity Kit | Assesses library fragment size distribution and quality, crucial for determining trim length parameters. |

| SILVA or Greengenes Database | Reference databases used for taxonomy assignment, chimera checking, and evaluation of results. |

Troubleshooting Guides & FAQs

Q1: After running UCHIME in de novo mode, an extremely high percentage of my sequences are flagged as chimeric. Is this expected? A: This can be normal for certain complex communities or datasets with high sequencing depth. The de novo mode is sensitive. First, verify your input data quality. High rates often indicate issues upstream:

- Primer/Adapter Contamination: Ensure these have been properly trimmed.

- Poor Quality Reads: Apply strict length and quality filters (e.g., maxEE=1.0, min length=200bp) before chimera checking.

- Overly Aggressive Settings: The default `-abskew` parameter is 2.0. For your data, try increasing it to 3.0 or 4.0, which makes the algorithm more conservative. Re-run and compare the number of chimeras detected.

Q2: I get "Alignment too short" or "No candidates" errors in VSEARCH when using the --uchime_ref option. What does this mean?

A: This indicates the reference database sequences do not sufficiently align to your query sequences.

- Cause 1: Database Mismatch. You are likely using an inappropriate reference database (e.g., using a generalist like Greengenes/SILVA for a specialized fungal ITS study). Ensure your database matches your target gene region and taxonomy.

- Cause 2: Poor Sequence Quality. Input sequences may be too short, contain ambiguous bases, or be of very low quality. Re-inspect your filtering and trimming steps.

- Solution: Use the `--dbmask none` and `--qmask none` options to disable masking and allow full alignments for diagnosis. Always use the same version of a database for training classifiers and chimera checking.

Q3: Should I use UCHIME (de novo), reference-based, or both methods for optimal results in my 16S analysis pipeline? A: Best practice, as established in recent methodology papers, is to use a combined approach. The consensus is that reference-based methods perform better when a high-quality, curated database is available, but de novo methods catch novel chimeras not in databases. The recommended workflow is to run both and take the union of the identified chimeric sequences for removal. Studies show this hybrid approach yields the highest sensitivity without disproportionate loss of biological diversity.

Q4: How do I choose between the "gold" and "specific" reference databases in UCHIME/VSEARCH?

A: The `--db` argument requires a specifically formatted database.

- Gold Standard Databases (e.g., `gold.fa`): Used for evaluating the chimera detection algorithm itself, not for routine analysis.

- Taxonomy-Specific Databases (e.g., SILVA, UNITE, RDP): Used for actual analysis. You must download the reference sequence file (e.g., `silva.nr_v138.align`) and format it for use with VSEARCH (`--uchime_ref`). For user experiments, always use the taxonomy-specific databases.

Q5: Does the order of quality filtering and chimera checking matter? A: Absolutely. For OTU-based workflows, chimera detection must be performed AFTER rigorous quality control but BEFORE clustering or OTU picking. The standard pipeline order is: 1) Primer/Adapter removal, 2) Quality filtering & merging (for paired-end reads), 3) Chimera detection & removal, 4) Clustering, 5) Taxonomy assignment. Denoising pipelines such as DADA2 differ: they remove chimeras after sample inference and merging.

Experimental Protocols & Data

Protocol 1: Standard Chimera Detection Workflow using VSEARCH

This protocol is designed for 16S rRNA gene amplicon data post quality-filtering and merging.

- Input: Quality-filtered FASTA file (`seqs.clean.fasta`).

- Dereplicate: Sort sequences by abundance.

- Chimera Detection (de novo):

- Chimera Detection (Reference-based): Download and format the SILVA reference database.

- Final Output: `final_nonchimeras.fasta` is used for downstream OTU clustering or ASV analysis.
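The commands for this protocol could look like the following sketch, which assembles three VSEARCH invocations in Python without running them. The input and final output names come from the protocol; the intermediate file names and the reference database path are hypothetical. Running the reference-based check on the survivors of the de novo check removes sequences flagged by either method.

```python
import shlex

def chimera_commands(clean_fasta="seqs.clean.fasta",
                     reference_db="silva_reference.fasta"):  # hypothetical path
    """Build (but do not run) dereplication and two-stage chimera removal commands."""
    derep = ["vsearch", "--derep_fulllength", clean_fasta,
             "--sizeout",                       # record abundances in the headers
             "--output", "derep.fasta"]
    denovo = ["vsearch", "--uchime_denovo", "derep.fasta",
              "--nonchimeras", "denovo_nonchimeras.fasta"]
    reference = ["vsearch", "--uchime_ref", "denovo_nonchimeras.fasta",
                 "--db", reference_db,
                 "--nonchimeras", "final_nonchimeras.fasta"]
    return [derep, denovo, reference]

for command in chimera_commands():
    print(shlex.join(command))
```

The abundance annotations written by `--sizeout` matter because de novo detection treats more abundant sequences as candidate parents of less abundant ones.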

Protocol 2: Comparative Evaluation of Chimera Detection Tools

A cited methodology for benchmarking chimera tools within a thesis on QC best practices.

- Sample Data: Generate an in silico mock community dataset with a known proportion (e.g., 20%) of simulated chimeras using tools like BELLEROPHON or MetaSim.

- Tool Execution: Process the mock data through three pipelines: UCHIME (de novo), VSEARCH (uchime_ref), and a hybrid union approach.

- Metrics Calculation: For each pipeline, calculate:

- Sensitivity: (True Chimeras Detected) / (Total Chimeras in Mock).

- Precision: (True Chimeras Detected) / (Total Sequences Flagged as Chimeric).

- False Positive Rate: (Biological Sequences Incorrectly Flagged) / (Total Biological Sequences).

- Analysis: Compare metrics across tools (see Table 1) and statistically evaluate performance using McNemar's test.
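The three metrics defined above follow directly from set operations on sequence identifiers. A minimal sketch, assuming the identifiers of flagged sequences, true chimeras, and all input sequences are available; the identifiers below are made up for illustration.

```python
def chimera_metrics(flagged, true_chimeras, all_sequences):
    """Sensitivity, precision, and false positive rate for one detection method."""
    flagged, true_chimeras = set(flagged), set(true_chimeras)
    biological = set(all_sequences) - true_chimeras
    true_positives = len(flagged & true_chimeras)
    false_positives = len(flagged & biological)
    return {
        "sensitivity": true_positives / len(true_chimeras),
        "precision": true_positives / len(flagged) if flagged else 0.0,
        "false_positive_rate": false_positives / len(biological),
    }

# Hypothetical toy dataset: 10 sequences, 2 of them simulated chimeras
all_ids = [f"seq{i}" for i in range(10)]
truth = {"seq0", "seq1"}
denovo_flags = {"seq0", "seq5"}
print(chimera_metrics(denovo_flags, truth, all_ids))
```

For the hybrid approach, pass the union of the two methods' flagged sets. Because a union can only add flagged sequences, its sensitivity and false positive rate are each at least as high as those of either method alone.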

Table 1: Performance Metrics of Chimera Detection Methods on an In Silico Mock Community (n=50,000 sequences, 20% chimeras)

| Method | Reference Database | Sensitivity (%) | Precision (%) | False Positive Rate (%) | Runtime (min) |

|---|---|---|---|---|---|

| UCHIME (de novo) | Not Applicable | 92.1 | 85.3 | 0.8 | 12 |

| VSEARCH (uchime_ref) | SILVA v138 | 88.7 | 96.5 | 0.2 | 8 |

| Hybrid (Union) | SILVA v138 | 95.4 | 90.1 | 0.5 | 20 |

Visualizations

Title: 16S Amplicon QC Workflow with Chimera Detection

Title: Reference-Based Chimera Detection Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Chimera Detection & Removal |

|---|---|

| Curated Reference Database (e.g., SILVA, RDP, UNITE) | Provides a set of verified, high-quality biological sequences used as a baseline to identify anomalous (chimeric) sequences by alignment and comparison. Essential for reference-based methods. |

| Gold Standard Chimera Database (gold.fa) | A controlled set of known chimeric and non-chimeric sequences used exclusively for benchmarking and validating the performance of chimera detection algorithms, not for routine analysis. |

| Quality-Filtered & Dereplicated FASTA File | The primary input reagent. Sequences must be cleaned of errors and duplicates to prevent false positives and reduce computational load during the chimera search. |

| Bioinformatics Tool Suite (VSEARCH/UCHIME) | The core software "reagent" that executes the chimera detection algorithm, performing pairwise alignments, statistical tests, and generating the output classifications. |

| In Silico Mock Community Data | A simulated dataset with a known composition, including artificially generated chimeras. Serves as a critical positive control for tuning parameters and validating pipeline accuracy. |

Contaminant Identification and Mitigation with Tools like Decontam and Source Tracking

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Decontam's isContaminant function returns an error: "Error in colSums(x > 0) : 'x' must be an array of at least two dimensions." What does this mean and how do I fix it?

A: This error indicates your input data is not in the correct format. The function expects a phyloseq object or a feature (ASV/OTU) abundance matrix in which rows are samples and columns are features. Ensure your data object is not a vector or a single-column dataframe.

- Protocol: 1) Re-import your ASV table and ensure it's a matrix or dataframe. 2) If using `phyloseq`, verify the `otu_table()` slot is present using `otu_table(physeq)`. 3) For a matrix `df`, check dimensions with `dim(df)`. It must have at least 2 rows and 2 columns.

Q2: I've run SourceTracker2, but the results show almost 100% "Unknown" source for my sink samples. What are the likely causes? A: A high "Unknown" proportion suggests your source environments are not well-represented in your source feature files.

- Protocol for Mitigation:

- Expand Source Libraries: Include more technical control samples (extraction blanks, PCR negatives, sequencing kit reagents) and sample-type specific negative controls in your source data.

- Review Metadata: Ensure source and sink samples were processed in the same batch (same extraction kits, sequencing run) to share contaminant profiles.

- Parameter Adjustment: Increase the `--alpha1` parameter (default 0.001) to allow for more flexible source-sink matching. Test values like 0.01 or 0.1.

- Rarefaction: Rarefy both source and sink data to the same sequencing depth before running SourceTracker2 to avoid depth bias.

Q3: How do I choose between Decontam's prevalence (method="prevalence") and frequency (method="frequency") methods?

A: The choice depends on your experimental design and the nature of your negative controls.

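- Frequency Method: Use if you have a quantitative DNA concentration for each sample (e.g., fluorometric readings taken before library pooling) and few or no negative controls. It flags features whose relative frequency is inversely related to total DNA concentration, the pattern expected of contaminants.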

- Prevalence Method: Use if you have multiple, lower-depth negative controls (standard practice). It identifies contaminants more prevalent in negative controls than in true samples. This is generally the recommended starting point.

Table 1: Method Selection Guide for Decontam

| Method | Best For | Key Input Requirement | Threshold Guidance |

|---|---|---|---|

| Prevalence | Multiple negative controls across batches. | A logical vector (is.neg) defining control samples. | Start with threshold=0.5. Increase (e.g., to 0.6) for stricter filtering. |

| Frequency | Samples with a measured DNA concentration; negative controls are not required. | The quantitative DNA concentration from each sample. | Start with threshold=0.1. Adjust based on contaminant signal strength. |
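The intuition behind the prevalence approach can be illustrated with a one-sided Fisher's exact test on presence/absence counts. The sketch below is a simplified stand-in written in Python, not Decontam's implementation, and the function name and example counts are assumptions. A small score means the feature is found in negative controls more often than chance would explain.

```python
from math import comb

def prevalence_score(present_in_controls, n_controls, present_in_samples, n_samples):
    """One-sided Fisher's exact p-value for enrichment in negative controls."""
    total = n_controls + n_samples
    present = present_in_controls + present_in_samples
    upper = min(n_controls, present)
    # Hypergeometric tail: probability of seeing the feature in at least this
    # many controls if presence were unrelated to control status
    tail = sum(comb(n_controls, k) * comb(n_samples, present - k)
               for k in range(present_in_controls, upper + 1))
    return tail / comb(total, present)

# Hypothetical features in a study with 4 negative controls and 20 samples
print(prevalence_score(4, 4, 0, 20))    # found only in controls: very small score
print(prevalence_score(0, 4, 20, 20))   # found only in samples: score of 1.0
```

Features scoring below the chosen threshold would be flagged, so raising the threshold flags more features and lowering it flags fewer.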

Q4: Can I use Decontam and SourceTracker2 together in a workflow, and what is the recommended order? A: Yes. The standard best-practice pipeline applies Decontam first for identification, then SourceTracker2 for quantification and attribution.

Diagram Title: Contaminant QC Workflow for 16S Data

Q5: My negative controls have very low sequencing depth (<100 reads). Will Decontam still work? A: It is challenging but possible. The prevalence method is more robust than frequency in this scenario.

- Protocol for Low-Depth Controls:

- Pool Controls: If you have multiple low-depth controls from the same batch/kit, create an in-silico pooled control by summing their counts.

- Adjust Threshold: Tune the `threshold` argument in `isContaminant()`. Features scoring below the threshold are called contaminants, so lowering it (e.g., from 0.5 to 0.3-0.4) makes calls more conservative, while raising it increases sensitivity. With sparse controls, choose the value after inspecting the score distribution.

- Manual Inspection: Always manually inspect the `p.prev` or `p.freq` values and the raw prevalence/abundance plots generated by `plot_frequency()` to confirm the algorithm's call.
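The in-silico pooling step above amounts to summing feature counts across controls from one batch. A minimal sketch in plain Python, assuming a hypothetical layout in which each control is a dictionary of feature counts:

```python
from collections import Counter


def pool_controls(control_counts):
    """Sum per-feature counts across several low-depth controls.

    control_counts: {control_id: {feature_id: read_count}}
    Returns a single {feature_id: pooled_count} dictionary.
    """
    pooled = Counter()
    for row in control_counts.values():
        pooled.update(row)  # Counter.update adds counts rather than replacing
    return dict(pooled)


# Toy example (hypothetical control and feature names).
batch_blanks = {
    "blank_kitA_1": {"asv_a": 30, "asv_b": 5},
    "blank_kitA_2": {"asv_a": 12, "asv_c": 8},
}
pooled_blank = pool_controls(batch_blanks)
print(pooled_blank, sum(pooled_blank.values()))
```

Pooling trades the number of controls for depth, so it is worth keeping the unpooled table available for the manual inspection step.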

Q6: SourceTracker2 fails with a "MemoryError" on large datasets. How can I optimize it? A: SourceTracker2 uses a Bayesian approach that can be memory-intensive.

- Optimization Protocol:

- Aggregate Features: Perform taxonomic aggregation at the Genus level before analysis to drastically reduce the number of features.

- Subsample: Rarefy all samples to a uniform, lower depth (e.g., 5,000-10,000 reads per sample).

- Limit Sources: Include only the most relevant source environments (e.g., specific kit controls, not all possible samples).

- Job Parameters: Run on a server with increased RAM. Use the `--jobs` parameter for parallel processing to reduce runtime.
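The feature-aggregation step in the optimization protocol can be sketched as a simple collapse of ASV counts onto their genus labels. The data layout, the function name, and the "Unassigned" bucket are assumptions for illustration; in practice this is usually done with the taxonomy-collapsing function of whichever pipeline produced the table.

```python
from collections import defaultdict


def collapse_to_genus(counts, genus_of, unassigned="Unassigned"):
    """Sum ASV counts per genus within each sample.

    counts:   {sample_id: {asv_id: read_count}}
    genus_of: {asv_id: genus name, or None when unclassified at genus level}
    """
    collapsed = {}
    for sample, row in counts.items():
        agg = defaultdict(int)
        for asv, n in row.items():
            # ASVs without a genus label share one bucket.
            agg[genus_of.get(asv) or unassigned] += n
        collapsed[sample] = dict(agg)
    return collapsed


# Toy example (hypothetical ASV identifiers).
asv_counts = {"s1": {"asv1": 10, "asv2": 5, "asv3": 2}}
asv_genus = {"asv1": "Bacteroides", "asv2": "Bacteroides", "asv3": None}
genus_counts = collapse_to_genus(asv_counts, asv_genus)
print(genus_counts)
```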

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Contaminant Control Experiments

| Item | Function in Contaminant Research |
|---|---|
| Molecular Grade Water | Used as a negative control substrate for extractions and PCR to identify reagent-borne contaminants. |
| DNA Extraction Kit Blanks | Kit-specific negative controls processed alongside samples to profile kit-specific contaminant signatures. |
| Mock Microbial Community (e.g., ZymoBIOMICS) | Known composition standard used to validate sequencing accuracy and differentiate true signal from contamination. |
| PCR Grade Nucleotide Mix (dNTPs) | High-purity dNTPs minimize microbial DNA background in reagents. |
| UltraPure BSA or Skim Milk | Additives to buffer PCR reactions and improve amplification of low-biomass samples without introducing contaminants. |
| UV-treated PCR Plates/Tubes | Laboratory consumables irradiated to fragment any contaminating DNA present in plasticware. |
| Dedicated Low-Biomass PCR Hood | A UV-equipped, sterile workspace for setting up extraction and PCR reactions to prevent airborne contamination. |
| High-Fidelity, Low-DNA Taq Polymerase | Polymerase formulations designed to minimize amplification of contaminating bacterial DNA from enzyme production. |

Troubleshooting Guides and FAQs

Q1: After denoising with DADA2, my ASV table has an extremely high number of features (>10,000). Is this normal and how can I reduce potential noise? A: An initially high ASV count is common and often indicates contaminant DNA, index-hopping artifacts, or non-target amplicons. We recommend applying a prevalence-based filtering step. A standard protocol is to filter out ASVs that appear in fewer than 5% of your samples; for a 96-sample run (5% = 4.8 samples), retain only features present in ≥5 samples. This removes rare artifacts while retaining rare taxa that recur across samples.
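The 5% prevalence rule can be sketched as follows. This is a minimal illustration in plain Python, assuming a hypothetical nested-dictionary count table; the function name and the integer-percent argument are choices made here, not part of any package.

```python
def prevalence_filter(counts, min_percent=5):
    """Keep features detected in at least min_percent of samples.

    counts: {sample_id: {feature_id: read_count}}
    Returns (filtered_counts, min_samples_required).
    """
    n_samples = len(counts)
    # Integer ceiling of n_samples * min_percent / 100 (4.8 -> 5 for 96 samples).
    min_samples = (n_samples * min_percent + 99) // 100
    prevalence = {}
    for row in counts.values():
        for feature, n in row.items():
            if n > 0:
                prevalence[feature] = prevalence.get(feature, 0) + 1
    keep = {f for f, k in prevalence.items() if k >= min_samples}
    filtered = {s: {f: n for f, n in row.items() if f in keep}
                for s, row in counts.items()}
    return filtered, min_samples


# Toy 96-sample table: "edge" is present in 5 samples, "rare" in only 4.
toy_table = {
    f"s{i}": {"common": 10, "edge": 1 if i < 5 else 0, "rare": 1 if i < 4 else 0}
    for i in range(96)
}
filtered_table, required = prevalence_filter(toy_table, min_percent=5)
print(required, sorted(filtered_table["s0"]))
```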

Q2: My negative control samples contain ASVs with non-trivial read counts. How should I decontaminate my feature table? A: Contamination in negative controls is a critical QC issue. Follow this protocol:

- Identify Contaminants: Use the `decontam` package in R (`method="prevalence"`), which statistically identifies contaminants based on their higher prevalence in negative controls vs. real samples.

- Manual Curation: Create a "contaminant blacklist" of ASVs where the mean relative abundance in negative controls is >10% of their mean abundance in true samples.

- Subtraction: Remove blacklisted ASVs from all samples. Do NOT perform rarefaction before this step.
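The manual curation rule above (mean relative abundance in controls greater than 10% of that in true samples) can be sketched as below. The data layout and function names are hypothetical, and the sketch covers only the ratio rule, not the statistical call made by decontam.

```python
def relative_abundance(row):
    """Convert one sample's counts to fractions of its total."""
    total = sum(row.values())
    return {f: (n / total if total else 0.0) for f, n in row.items()}


def contaminant_blacklist(counts, is_neg, ratio_cutoff=0.10):
    """Flag features whose mean relative abundance in controls exceeds
    ratio_cutoff times their mean relative abundance in true samples."""
    neg = [relative_abundance(r) for s, r in counts.items() if is_neg[s]]
    pos = [relative_abundance(r) for s, r in counts.items() if not is_neg[s]]
    if not neg or not pos:
        raise ValueError("need at least one control and one true sample")
    features = {f for r in counts.values() for f in r}
    blacklist = set()
    for f in features:
        mean_neg = sum(r.get(f, 0.0) for r in neg) / len(neg)
        mean_pos = sum(r.get(f, 0.0) for r in pos) / len(pos)
        # Features absent from every control are never flagged.
        if mean_neg > 0 and mean_neg > ratio_cutoff * mean_pos:
            blacklist.add(f)
    return blacklist


# Toy data (hypothetical names).
qc_counts = {
    "blank": {"asv_a": 95, "asv_b": 5},
    "s1": {"asv_a": 10, "asv_b": 990},
    "s2": {"asv_a": 0, "asv_b": 1000},
}
qc_is_neg = {"blank": True, "s1": False, "s2": False}
flagged = contaminant_blacklist(qc_counts, qc_is_neg)
cleaned = {s: {f: n for f, n in row.items() if f not in flagged}
           for s, row in qc_counts.items()}
print(flagged, cleaned["s1"])
```

Because relative abundances in a low-depth blank are easily inflated by a handful of reads, flagged features are worth reviewing by eye before removal.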

Q3: When merging paired-end reads, a significant percentage of my reads were lost. What are the main causes and solutions? A: High read loss during merging (>30%) typically indicates poor overlap due to:

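- Cause 1: Amplicon length approaching or exceeding the combined read length (e.g., 2x250 bp reads cannot span a ~550 bp amplicon; the V4 region, at roughly 250-290 bp, is not affected).

- Solution: Truncate less aggressively in the DADA2 filtering step so that the forward and reverse truncation lengths together exceed the amplicon length by at least 20 bp. If the amplicon is longer than the combined read length, no truncation setting can rescue the merge; use longer reads or a shorter target region.

- Cause 2: Mismatches within the overlap region, usually from low-quality read ends, which cause pairs to be rejected.

- Solution: Improve quality trimming before merging rather than loosening the merge itself. In DADA2's `mergePairs`, `maxMismatch=0` is the default and `minOverlap=20` is stricter than the default of 12; strict settings limit spurious merges but do not recover lost reads.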
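Whether a given truncation setting leaves enough overlap is simple arithmetic: the two truncated read lengths minus the amplicon length. A minimal sketch, assuming the amplicon length is measured the same way as the reads (with or without primers) and using a hypothetical helper name:

```python
def expected_overlap(trunc_len_f, trunc_len_r, amplicon_len):
    """Bases shared by the forward and reverse reads after truncation.

    A negative value means the reads cannot reach each other at all.
    """
    return trunc_len_f + trunc_len_r - amplicon_len


def can_merge(trunc_len_f, trunc_len_r, amplicon_len, min_overlap=20):
    """True when the expected overlap meets the required minimum."""
    return expected_overlap(trunc_len_f, trunc_len_r, amplicon_len) >= min_overlap


# 2x250 bp reads against a 550 bp amplicon leave a 50 bp gap.
print(expected_overlap(250, 250, 550), can_merge(250, 250, 550))
# Truncating to 240/200 against a 420 bp amplicon leaves exactly 20 bp.
print(expected_overlap(240, 200, 420), can_merge(240, 200, 420))
```

Natural length variation in the amplicon means it is safer to budget for the longest expected amplicon rather than the average.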

Q4: How do I handle samples with very low total read counts after chimera removal? A: Samples with read depths below your chosen rarefaction depth must be addressed.

- Evaluate: Calculate the median read depth across all samples.

- Decision Threshold: Set a minimum sample depth threshold at 10% of the median depth. For a median of 50,000 reads, the threshold is 5,000.

- Action: Remove samples below this threshold entirely from the analysis. Do not attempt to "salvage" them by lowering the rarefaction depth for the whole dataset, as very shallow samples skew beta-diversity metrics.
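The decision rule above (minimum depth set at 10% of the median library size) can be sketched with the standard library. The function name and the sample identifiers are hypothetical.

```python
from statistics import median


def split_by_depth(depths, min_percent=10):
    """Split samples at min_percent of the median read depth.

    depths: {sample_id: total_reads_after_chimera_removal}
    Returns (cutoff, kept_sample_ids, dropped_sample_ids).
    """
    cutoff = median(depths.values()) * min_percent / 100
    kept = sorted(s for s, d in depths.items() if d >= cutoff)
    dropped = sorted(s for s, d in depths.items() if d < cutoff)
    return cutoff, kept, dropped


# Toy depths with a median of 50,000 reads, giving a 5,000-read cutoff.
toy_depths = {"s1": 48000, "s2": 50000, "s3": 61000, "s4": 4200, "s5": 52000}
cutoff, kept, dropped = split_by_depth(toy_depths)
print(cutoff, kept, dropped)
```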

Q5: Should I normalize my count matrix using rarefaction or a proportional/relative abundance transformation? A: The choice depends on your downstream analysis goal. See the table below for a comparison.

| Normalization Method | Key Principle | Best For | Major Caveat |
|---|---|---|---|
| Rarefaction | Subsamples all samples to an equal sequencing depth. | Beta-diversity analyses (e.g., PCoA, PERMANOVA) where dissimilarity metrics (UniFrac, Bray-Curtis) are sensitive to library size. | Discards valid data; can increase variance. Use a depth that discards the fewest samples. |
| Proportional (Relative Abundance) | Converts counts to fractions of the total sample library size. | Taxonomic profiling and visualization of community composition. Count-based differential abundance tools (e.g., DESeq2, edgeR) expect raw counts and apply their own normalization, so do not feed them proportions. | Compositional nature distorts between-sample comparisons. |

Protocol for Rarefaction:

- Generate a library size distribution plot.

- Choose a rarefaction depth that retains >95% of your samples. Use the `rarefy_even_depth` function from `phyloseq` (R) with a fixed integer `rngseed` (e.g., `rngseed=711`) for reproducibility.

- Perform rarefaction AFTER all contaminant and low-count feature filtering, but BEFORE downstream ecological analysis.
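The rarefaction protocol above can be sketched in two parts: picking the deepest depth that still retains a target share of samples, and subsampling each sample with a fixed seed. This is an illustration in plain Python under stated assumptions: the helper names are hypothetical, the retention target is treated as "at least 95%", and reads are drawn without replacement, which may differ from the defaults of a given R function.

```python
import random


def depth_retaining(depths, min_percent=95):
    """Largest depth at which at least min_percent of samples are retained.

    depths: non-empty list of per-sample read totals.
    """
    ordered = sorted(depths, reverse=True)
    n_keep = (len(ordered) * min_percent + 99) // 100  # integer ceiling
    return ordered[n_keep - 1]


def rarefy(row, depth, rng):
    """Subsample one sample to `depth` reads without replacement.

    Returns None when the sample is shallower than `depth`.
    """
    pool = [f for f, n in row.items() for _ in range(n)]
    if len(pool) < depth:
        return None
    out = {}
    for f in rng.sample(pool, depth):
        out[f] = out.get(f, 0) + 1
    return out


# Toy library sizes: 19 healthy libraries and one failed library.
library_sizes = [10000 + 100 * i for i in range(19)] + [500]
chosen_depth = depth_retaining(library_sizes, min_percent=95)

toy_row = {"asv_a": 700, "asv_b": 250, "asv_c": 50}
first = rarefy(toy_row, 100, random.Random(711))
second = rarefy(toy_row, 100, random.Random(711))  # same seed, same draw
print(chosen_depth, first == second)
```

Expanding every read into a list is fine for a sketch but memory-hungry for real tables, where a dedicated implementation is preferable.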

Experimental Protocol: Generating a Curated Feature Table

Title: Protocol for Curation of 16S rRNA Gene Amplicon Feature Table

Objective: To generate a high-quality, biologically interpretable Amplicon Sequence Variant (ASV) or Operational Taxonomic Unit (OTU) count matrix from raw sequencing reads.

Materials & Reagents:

- Demultiplexed paired-end FASTQ files.

- High-performance computing cluster or workstation (≥16 GB RAM).

- QIIME 2 (2024.5 or later), DADA2 (R), or mothur pipeline.

- Sample metadata file (CSV format) including negative control and positive control (mock community) identifiers.

- Reference database (e.g., SILVA 138.1, Greengenes 13_8) for taxonomy assignment.

Procedure:

- Initial Quality Assessment: Use `FastQC` or `dada2::plotQualityProfile` to visualize per-base sequence quality. Record average Phred scores.

- Denoising & ASV Inference (DADA2 Workflow):

a. Filter and trim reads: `filterAndTrim(truncLen=c(240,200), maxN=0, maxEE=c(2,2), truncQ=2)`

b. Learn error rates: `learnErrors(multithread=TRUE)`

c. Dereplicate: `derepFastq()`

d. Core sample inference: `dada(derep, err=learned_error_rates, pool="pseudo", multithread=TRUE)`

e. Merge paired ends: `mergePairs(dadaF, derepF, dadaR, derepR, minOverlap=12)`

f. Construct sequence table: `makeSequenceTable(merged)`

g. Remove chimeras: `removeBimeraDenovo(seqtab, method="consensus")`

- Filtering & Curation:

a. Remove non-target sequences: Assign taxonomy using `assignTaxonomy()` against the SILVA database. Filter out Mitochondria, Chloroplast, and Eukaryota.

b. Prevalence Filtering: Remove features with a total count < 10 across all samples OR present in <2% of samples.

c. Control-based Decontamination: Subtract ASVs where (Mean abundance in negative controls) / (Mean abundance in test samples) > 0.01.

d. Low-Depth Sample Removal: Discard samples with a total count below your established threshold (e.g., 5,000 reads).

- Normalization: For beta-diversity analyses, rarefy to a depth that retains >95% of samples (rarefying to the median depth would discard roughly half of them), OR convert to relative abundance for taxonomic profiling.
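The non-target removal in the Filtering & Curation step can be sketched as a scan of each ASV's assigned lineage for the three excluded labels. The lineage tuples, ASV identifiers, and function name below are hypothetical toy values; the labels are checked at every rank because they can sit at different taxonomic levels depending on the reference database.

```python
NON_TARGET = {"mitochondria", "chloroplast", "eukaryota"}


def is_non_target(lineage):
    """True when any rank in the lineage matches an excluded label.

    lineage: sequence of rank names from kingdom downward; unassigned
    ranks may be None.
    """
    return any(rank is not None and rank.lower() in NON_TARGET
               for rank in lineage)


# Toy taxonomy assignments (hypothetical ASV identifiers).
taxonomy = {
    "asv1": ("Bacteria", "Firmicutes", "Bacilli", "Lactobacillales",
             "Lactobacillaceae", "Lactobacillus"),
    "asv2": ("Bacteria", "Cyanobacteria", "Cyanobacteriia", "Chloroplast",
             None, None),
    "asv3": ("Bacteria", "Proteobacteria", "Alphaproteobacteria",
             "Rickettsiales", "Mitochondria", None),
    "asv4": ("Eukaryota", None, None, None, None, None),
}
target_asvs = sorted(a for a, lin in taxonomy.items() if not is_non_target(lin))
print(target_asvs)
```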

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Feature Table Curation |
|---|---|
| DADA2 (R Package) | A model-based method for correcting Illumina-sequenced amplicon errors, inferring exact Amplicon Sequence Variants (ASVs). |
| decontam (R Package) | Statistical tool to identify and remove contaminant DNA sequences based on their prevalence in negative controls versus true samples. |
| SILVA SSU Ref NR Database | A comprehensive, curated database of aligned ribosomal RNA sequences used for high-quality taxonomic classification of ASVs. |
| ZymoBIOMICS Microbial Community Standard | A defined mock community with known composition and abundance, used as a positive control to validate sequencing accuracy, chimera rate, and taxonomy assignment. |
| QIIME 2 | A reproducible, scalable, and extensible pipeline for performing microbiome analysis from raw sequencing data to statistical visualization. |
| phyloseq (R Package) | An R package providing data structures and tools for handling and analyzing high-throughput microbiome census data, integrating OTU/ASV table, taxonomy, sample data, and phylogenetic tree. |

Workflow Diagram

Title: 16S Amplicon Data Curation to Final Feature Table

| Curation Step | Typical Threshold | Rationale & Impact |
|---|---|---|
| Minimum Sample Read Depth | 10% of Median Library Size | Removes failed libraries that add noise to diversity analyses. |
| ASV Prevalence Filter | Present in ≥2-5% of samples | Eliminates rare, likely spurious sequences while preserving rare biosphere. |
| Negative Control Contaminant Removal | Abundance in control > 1% of abundance in samples | Flags and removes laboratory/kit contaminants. |
| Chimera Removal Rate | Expected 5-20% of sequences | Higher rates may indicate poor PCR optimization or primer choice. |
| Mock Community Recovery (Positive Control) | ≥95% expected genera identified | Validates overall pipeline accuracy from sequencing to classification. |
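The mock community criterion in the table (≥95% of expected genera identified) reduces to a set comparison. A minimal sketch, using placeholder genus names rather than the composition of any real standard:

```python
def genus_recovery(expected_genera, observed_genera):
    """Fraction of expected genera that were observed, plus the missing ones."""
    expected = set(expected_genera)
    if not expected:
        raise ValueError("expected_genera must not be empty")
    found = expected & set(observed_genera)
    return len(found) / len(expected), sorted(expected - found)


# Placeholder names: 20 expected genera, 19 recovered, plus one unexpected.
expected = [f"genus_{i:02d}" for i in range(1, 21)]
observed = expected[:19] + ["unexpected_genus"]
recovery, missing = genus_recovery(expected, observed)
passes_qc = recovery >= 0.95
print(recovery, missing, passes_qc)
```

Genera observed but not expected (here "unexpected_genus") do not affect the recovery fraction and are worth reviewing separately as possible contaminants or misclassifications.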

Diagnosing and Solving Common 16S QC Problems: A Troubleshooter's Handbook

Troubleshooting Guides & FAQs

Q1: My 16S amplicon sequencing run shows an abnormally high number of chimeric sequences. What is the primary cause and how can I fix it? A: Excessive chimera formation is predominantly caused by incomplete extension during PCR, especially with degraded or low-concentration DNA templates. This allows truncated amplicons to act as primers in subsequent cycles. Corrective actions include:

- Optimizing PCR cycle number: Reduce to the minimum necessary (often 25-30 cycles).

- Using a high-fidelity, proofreading polymerase mix.

- Ensuring template DNA integrity via gel electrophoresis or Fragment Analyzer.

- Applying post-sequencing chimera removal tools (e.g., DADA2, USEARCH, VSEARCH).

Q2: My samples yield very short read lengths after sequencing, suggesting primer dimer or off-target amplification. How do I diagnose and prevent this? A: This indicates poor PCR specificity, often from degraded DNA or suboptimal primer design.

- Diagnosis: Run post-PCR products on a high-sensitivity gel or Bioanalyzer. A smear or low-molecular-weight band confirms the issue.

- Corrective Actions:

- Template QC: Use fluorometric quantification (e.g., Qubit) and assess degradation (e.g., DIN). See Table 1.

- PCR Optimization: Increase annealing temperature, use touchdown PCR, and include a no-template control (NTC).

- Primer Validation: Use in-silico PCR checks against databases like TestPrime (SILVA) and perform empirical testing.

Q3: I suspect PCR errors are introducing false rare OTUs/ASVs. What experimental and bioinformatic steps are mandatory for control? A: PCR errors and index switching (misassignment) can create artificial rare variants.

- Experimental Protocol:

- Include negative controls (extraction blank, PCR NTC) and positive controls (mock community with known composition) in every run.

- Use dual-unique indexing to minimize index misassignment.

- Perform technical replicates to distinguish true signal from noise.

- Bioinformatic Protocol:

- Sequence Quality Filtering: Use Trimmomatic or Cutadapt to remove low-quality bases and primers.

- Error Rate Modeling: Apply a pipeline like DADA2 or USEARCH -unoise3, which models and corrects Illumina amplicon errors, rather than traditional clustering.

- Control Subtraction: Remove sequences identified as contaminants from the negative controls from all samples, using a tool like `decontam` (R package).

Data Presentation

Table 1: Quantitative Impact of DNA Input Quality on 16S Library Metrics

| DNA Quality Metric | Optimal Range | Sub-Optimal Range | Observed Effect on 16S Data |
|---|---|---|---|
| DNA Integrity Number (DIN) | 7.0 - 10.0 | < 3.0 | Read length ↓ by >30%; Chimera rate ↑ >15% |
| DNA Concentration (Qubit) | 1-10 ng/µL | < 0.1 ng/µL | PCR cycles required ↑, Error rate ↑ exponentially |