Beyond Correlation: Modern Approaches for Analyzing Dynamic Protein Communities and Complexes in Disease Research

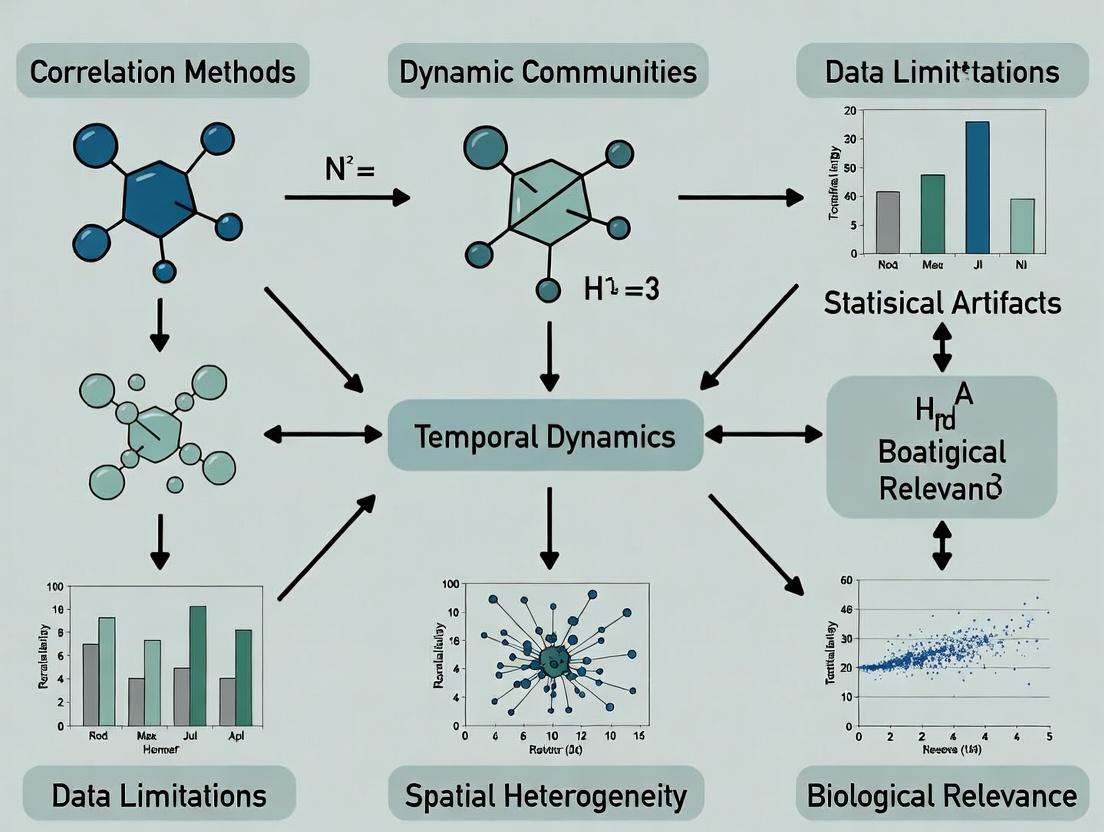

This article addresses the critical limitations of traditional correlation-based methods for studying dynamic protein communities and complexes, which are central to understanding cellular signaling and disease mechanisms.

Beyond Correlation: Modern Approaches for Analyzing Dynamic Protein Communities and Complexes in Disease Research

Abstract

This article addresses the critical limitations of traditional correlation-based methods for studying dynamic protein communities and complexes, which are central to understanding cellular signaling and disease mechanisms. We explore why static correlation metrics fail to capture temporal reorganization, transient interactions, and causal relationships. The article provides a methodological review of contemporary alternatives—including temporal network models, integration of multi-omics data, and machine learning techniques—and offers practical guidance for their application and validation in biomedical research. Targeted at researchers and drug development professionals, this guide aims to equip scientists with robust frameworks for moving from mere association to mechanistic insight in systems biology.

Why Correlation Fails for Dynamic Communities: The Fundamental Gaps in Biological Network Analysis

The Pervasive Use and Critical Shortcomings of Correlation in Omics Studies

Technical Support Center

Troubleshooting Guide: Common Correlation Analysis Issues

Issue 1: High Correlation but No Biological Causality

- Symptoms: Strong Pearson/Spearman coefficients (e.g., |r| > 0.8) between features (genes/proteins/metabolites) without validation in perturbation experiments.

- Diagnosis: Likely due to confounding variables, batch effects, or co-regulation by a latent factor.

- Solution:

- Apply partial correlation to control for known confounders.

- Use multi-omics factor analysis (MOFA) to identify and regress out latent factors.

- Validate with time-series or interventional data (e.g., knockout/knockdown).

Issue 2: Non-Linear Relationships Missed by Standard Correlation

- Symptoms: Bimodal or periodic patterns where linear correlation is low (r ≈ 0), despite a clear functional relationship.

- Diagnosis: Inappropriate use of linear methods (Pearson) or rank-based methods (Spearman) for complex dependencies.

- Solution: Employ mutual information or distance correlation (dCor) to capture non-linear associations. Always visualize data pairs with scatter plots.

Issue 3: Network Instability with Different Sample Sizes

- Symptoms: Correlation network topology (hub identity) changes drastically when adding/removing samples.

- Diagnosis: Insufficient sample size for robust correlation estimation. High-dimensional data (p >> n) problem.

- Solution:

- Use resampling methods (bootstrapping) to assess edge stability.

- Apply regularized techniques like Graphical Lasso for sparse inverse covariance estimation.

- Report confidence intervals or posterior probabilities for edges.

Issue 4: Spurious Correlation from Compositional Data

- Symptoms: Artifactual negative correlations in relative abundance data (e.g., 16S rRNA, shotgun metagenomics).

- Diagnosis: The "sum-to-one" constraint of compositional data invalidates standard correlation metrics.

- Solution: Use compositionally aware methods: SparCC, proportionality (ρ), or employ centered log-ratio (CLR) transformations prior to analysis.

Frequently Asked Questions (FAQs)

Q1: When should I use partial correlation instead of regular correlation for my gene expression matrix? A: Use partial correlation when you suspect a third variable (e.g., cell cycle stage, patient age, a dominant transcription factor) is driving pairwise correlations. It estimates the direct association between two variables while controlling for the influence of others. Essential for inferring direct regulatory interactions.

Q2: What is the minimum sample size required for a robust correlation network in metabolomics? A: There is no universal rule, as it depends on effect size and noise. However, recent simulation studies suggest a minimum of n > 50 for moderate correlations (|r| > 0.5) in dimensions p ~ 100. For high-dimensional data (p ~ 1000), n > 100 is strongly recommended. Always perform power analysis if possible.

Q3: How can I differentiate between a true regulatory interaction and a correlation caused by a batch effect?

A: First, color your correlation scatter plot by batch. If clusters separate by batch, the correlation is suspect. Statistically, include batch as a covariate in a linear model or use the removeBatchEffect function (e.g., from limma package) prior to correlation analysis. Biological validation is ultimately required.

Q4: Which correlation metric is best for single-cell RNA-seq data, which is often zero-inflated? A: Standard correlation fails with excessive zeros. Recommended alternatives are:

- Spearman's correlation: More robust to zeros than Pearson.

- Proportionality (ρ): Good for compositional mindset.

- Specialized methods:

scLinkorncNetwhich explicitly model the count and zero-inflated nature of scRNA-seq data.

Q5: Can I use correlation to infer causality in time-course omics data? A: Simple pairwise correlation cannot infer causality. For time-course data, you must use methods designed for temporal precedence:

- Cross-correlation: Identifies lags between profiles.

- Granger causality: Tests if past values of one time series predict another.

- Dynamic Bayesian Networks (DBNs): Models causal relationships across time points.

Supporting Data & Protocols

Table 1: Comparison of Correlation and Advanced Methods

| Metric/Method | Best For | Key Assumption | Handles Non-Linear? | Compositional? | Typical Runtime (p=1000, n=100) |

|---|---|---|---|---|---|

| Pearson (r) | Linear relationships | Normality, linearity | No | No | <1 sec |

| Spearman (ρ) | Monotonic relationships | - | Monotonic only | No | ~1 sec |

| Distance Corr. | Any dependence | Joint independence | Yes | No | ~30 sec |

| Mutual Info | Any dependence | Sufficient data | Yes | No | ~2 min |

| Partial Corr. | Direct relationships | Multivariate normality | No | No | ~5 sec |

| Proportionality (ρ) | Relative data (e.g., RNA-seq) | - | No | Yes | ~2 sec |

| Graphical Lasso | Sparse network inference | Sparsity | No | No | ~1 min |

Table 2: Recommended Sample Size Guidelines for Stable Correlation

| Data Type | Number of Features (p) | Suggested Minimum (n) | Reference (simulation study) |

|---|---|---|---|

| Transcriptomics (Bulk) | 10,000 - 20,000 | 30 - 50 | Schurch et al., 2016 |

| Metabolomics (Targeted) | 50 - 500 | 20 - 30 | Saccenti et al., 2014 |

| Metabolomics (Untargeted) | 1,000 - 10,000 | 50 - 100 | (This Article) |

| Microbiome (Genus Level) | 100 - 500 | 40 - 60 | Weiss et al., 2016 |

| Proteomics (LC-MS) | 1,000 - 5,000 | 25 - 40 | (This Article) |

Experimental Protocol: Validating a Correlation-Based Network Hypothesis

Title: Protocol for Knockdown Validation of a Co-expression Network Hub Gene.

1. Hypothesis Generation:

- From RNA-seq data (n≥30), construct a weighted gene co-expression network (WGCNA).

- Identify a key module significantly associated with your phenotype.

- Select the intramodular hub gene (highest connectivity) for validation.

2. Reagent Preparation:

- Design 2-3 independent siRNA sequences targeting the hub gene.

- Include a non-targeting siRNA scramble control.

- For qPCR validation, design primers for the hub gene and 3-5 top correlated partner genes from the network module.

3. Cell Perturbation:

- Plate cells in 3 replicates per condition (siHub1, siHub2, siHub3, siControl).

- Transfert using appropriate reagent (e.g., Lipofectamine RNAiMAX).

- Harvest RNA at 48h and 72h post-transfection.

4. Validation & Analysis:

- Perform qPCR to confirm hub gene knockdown (>70% efficiency).

- Measure expression changes in the correlated partner genes.

- Expected Result: True correlation partners should show significant expression change upon hub knockdown (validating dependence). Non-correlated control genes should not.

- Calculate correlation between hub gene and partners in the knockdown dataset. The original strong correlations should significantly weaken.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Correlation/Network Validation |

|---|---|

| siRNA/shRNA Libraries | Gene knockdown to test causality of correlated pairs and hub genes. |

| CRISPR-Cas9 Knockout Kits | Complete gene knockout for validating essential regulatory relationships. |

| Dual-Luciferase Reporter Assay Systems | Test if correlation between a TF and gene implies direct transcriptional regulation. |

| Recombinant Cytokines/Growth Factors | Provide controlled external perturbation to trace signaling pathway correlations. |

| Pharmacological Inhibitors/Activators | Modulate specific pathway nodes to validate inferred network connections. |

| Stable Isotope Tracers (e.g., ¹³C-Glucose) | Enable flux analysis to move beyond static correlation in metabolomics. |

| Barcoded Single-Cell Sequencing Kits (10x Genomics) | Generate matched multi-omic (RNA+ATAC) data from the same cell to infer regulatory links. |

| Covariate Adjustment Tools (e.g., CausalR, limma) | Software/R packages to statistically control for confounders in correlation analysis. |

Visualizations

Workflow for Correlation Analysis with Pitfalls & Mitigations

From Correlation to Causality: An Evidence Hierarchy

Troubleshooting Guide & FAQs

This support center addresses common experimental challenges in studying transient protein complexes, framed within the thesis of addressing limitations of correlation methods (e.g., static structural data, low temporal resolution co-IP, FRET efficiency limits) for dynamic communities research.

FAQ 1: My cross-linking mass spectrometry (XL-MS) data shows an overwhelming number of low-probability, transient interactions. How do I distinguish biologically relevant complexes from noise?

- Answer: This is a core limitation of static correlation methods. Implement a time-resolved XL-MS protocol coupled with size-exclusion chromatography (SEC). The key is to correlate cross-link identification with elution profiles over multiple time points. Biologically relevant, structured complexes will co-elute consistently, while stochastic, transient encounters will show random elution patterns. Use triplicate runs and apply a co-elution scoring algorithm (e.g., based on Pearson correlation of elution profiles for identified cross-linked pairs) to filter your data. Quantitative data from a typical experiment might look like this:

| Interaction Pair (Protein A - Protein B) | Cross-link Spectra Count | Co-elution Score (Pearson r) | Classification |

|---|---|---|---|

| STAT3 - JAK2 | 45 | 0.92 | Stable Complex |

| STAT3 - HSP90 | 28 | 0.87 | Chaperone Client |

| STAT3 - Mitochondrial Porin | 8 | 0.18 | Transient/Non-specific |

| c-Myc - MAX | 52 | 0.95 | Stable Complex |

| c-Myc - RNA Pol II Subunit | 15 | 0.65 | Dynamic Functional Interaction |

- Protocol: Time-Resolved SEC-XL-MS for Dynamic Filtering

- Sample Preparation: Treat cells (e.g., stimulated vs. unstimulated) with a membrane-permeable, MS-cleavable cross-linker (e.g., DSS-d0/d12) for a short, optimized time (2-5 min).

- Quenching: Quench reaction with 100mM ammonium bicarbonate for 15 min on ice.

- Lysis & Separation: Lyse cells in native lysis buffer. Immediately inject supernatant onto a high-resolution SEC column (e.g., BioSEC-3, 300mm) equilibrated in 50mM ammonium acetate, pH 7.5. Collect 50-100µL fractions every 30 seconds.

- Time-Course: Repeat fraction collection at 0, 5, 15, and 60 minutes post-stimulation.

- MS Processing: Digest each fraction with trypsin, cleave cross-links, and analyze by LC-MS/MS.

- Data Analysis: Use software (e.g., xiVIEW, XlinkX) to identify cross-links. Generate elution profiles for each cross-linked pair across fractions and time points. Calculate pairwise co-elution correlations.

FAQ 2: Single-molecule FRET (smFRET) efficiency for my complex shows a broad, continuous distribution, not discrete states. How do I interpret this?

Answer: A continuous smFRET efficiency distribution is a hallmark of a highly dynamic or "fuzzy" complex, which traditional correlation methods fail to resolve. This indicates conformational heterogeneity on timescales faster than or comparable to the observation window. Solution: Perform hidden Markov modeling (HMM) on your smFRET trajectories to identify sub-states within the continuum.

Protocol: smFRET with HMM Analysis for State Deconvolution

- Labeling: Site-specifically label purified proteins with donor (Cy3B) and acceptor (ATTO647N) dyes via cysteine-maleimide or unnatural amino acid chemistry.

- Imaging: Immobilize complexes on a PEG-passivated microscope slide via a biotin-tag. Image using a TIRF microscope with alternating laser excitation (ALEX) to correct for stoichiometry.

- Data Collection: Record movies (5-10 ms/frame) for hundreds of individual molecules.

- Trajectory Analysis: Extract donor (ID) and acceptor (IA) intensities for each molecule. Calculate FRET efficiency: E = IA / (IA + I_D). Build trajectories, discarding molecules showing single-step photobleaching.

- HMM Implementation: Use software like vbFRET or SPARTAN to apply an HMM to each trajectory. The algorithm will identify the most likely number of discrete states (e.g., 3 or 4) underlying the noisy, continuous data and provide transition rates between them.

- Validation: Perturb the system (add ligand, ATP, mutation) and observe how the HMM-derived state populations and transition kinetics shift.

FAQ 3: Native PAGE or BN-PAGE shows a "smear" for my protein of interest instead of discrete bands. What does this mean and how can I resolve it?

Answer: A smear indicates a population of complexes with varying stoichiometries, compositions, or conformations—a direct visualization of dynamic communities. To resolve, shift from 1D to 2D Native-PAGE (BN-PAGE followed by denaturing SDS-PAGE).

Protocol: 2D BN-PAGE/SDS-PAGE for Complex Heterogeneity

- First Dimension (BN-PAGE): Prepare native protein extract using digitonin or dodecyl maltoside. Load onto a 4-16% gradient native PAGE gel. Run at 4°C with cathode buffer (blue) and anode buffer.

- Gel Excision: After the run, excise the entire lane of interest.

- Denaturation: Incubate the excised lane in 1x SDS-PAGE loading buffer with 1% β-mercaptoethanol for 30-60 minutes with gentle agitation.

- Second Dimension (SDS-PAGE): Place the denatured gel strip horizontally on top of a standard 4-20% SDS-PAGE gel. Seal with agarose. Run as usual.

- Analysis: Western blot or stain. The vertical smear from the 1D native gel will now be resolved into horizontal rows of spots in the 2D gel. Each spot represents a specific protein constituent of the various complexes in the smear, revealing composition heterogeneity.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Dynamic Community Studies |

|---|---|

| MS-Cleavable Cross-linkers (e.g., DSS-d0/d12) | Enables covalent capture of transient interactions for MS; isotopic labeling allows precise identification; cleavable backbone simplifies spectra. |

| Membrane-Permeable Photo-Activatable Amino Acids (e.g., Diazirine) | Allows in vivo cross-linking with temporal control via UV light, capturing context-specific interactions. |

| Time-Resolved SEC Columns (e.g., BioSEC-3) | Provides high-resolution separation of native complexes by size, enabling correlation of interactions with complex stability across time points. |

| Site-Specific Labeling Dyes for smFRET (e.g., Cy3B, ATTO647N) | High photostability and brightness are critical for collecting long single-molecule trajectories to analyze dynamics. |

| Stable Cell Lines with Endogenous Tags (e.g., HALO/CLIP-tag) | Allows precise pull-down of native complexes without overexpression artifacts, crucial for studying endogenous dynamics. |

| Native Elution Buffers (e.g., 50mM Ammonium Acetate, pH 7.5) | MS-compatible, volatile buffers that maintain non-covalent interactions during SEC for downstream native MS or XL-MS. |

Visualizations

Diagram 1: SEC-XL-MS Workflow for Dynamic Interactions

Diagram 2: smFRET HMM State Analysis

Diagram 3: 2D Gel Resolving Complex Heterogeneity

Key Biological Phenomena Missed by Static Correlation (e.g., signaling pulses, complex assembly/disassembly)

Troubleshooting Guides & FAQs

FAQ 1: Why does my co-immunoprecipitation (Co-IP) fail to capture transient protein complexes, leading to false-negative correlations?

Answer: Static Co-IP protocols often use prolonged lysis and incubation steps that disrupt short-lived assemblies. To capture dynamics, use crosslinking agents (e.g., formaldehyde) to "trap" transient interactions immediately before lysis. Ensure lysis buffers are ice-cold and include protease/phosphatase inhibitors to preserve complex integrity during the brief isolation period.

FAQ 2: How can I distinguish a true signaling pulse from experimental noise in my time-course data?

Answer: A true pulse shows a stereotypical waveform (rapid rise, slower decay) across replicates and is often coordinated with downstream effects. To troubleshoot, increase temporal resolution (sample more frequently) and use pulsatile stimuli. Employ computational filters (e.g., Gaussian smoothing) and define a pulse by amplitude (>2x baseline) and duration thresholds. Validate with live-cell biosensors.

FAQ 3: My FRET-based dynamic sensor shows no signal change. Is the complex not forming?

Answer: Not necessarily. First, confirm sensor functionality with positive/negative control constructs. Check for photobleaching. Ensure your acquisition speed (frame rate) is faster than the anticipated dynamics. A common issue is using a donor/acceptor pair with inappropriate Förster distance for the expected conformational change; consider alternative pairs.

FAQ 4: When using crosslinking for complexes, how do I avoid non-specific background?

Answer: Optimize crosslinker concentration and time. Use a reversible crosslinker (e.g., DSP). Include a no-crosslink control and a control with an unrelated antibody. After crosslinking, quench the reaction (e.g., with glycine). Use stringent wash buffers (e.g., high salt, mild detergent) post-IP to reduce non-specific binding.

FAQ 5: Why do my population-averaged measurements (e.g., Western blot) show sustained signaling, but single-cell imaging reveals pulses?

Answer: This is classic evidence of missed dynamics. Population methods average out asynchronous pulses across cells, presenting a sustained, "correlated" signal. The troubleshooting step is to shift to single-cell or synchronized population assays. Use fluorescence flow cytometry or live-cell imaging to capture heterogeneity.

Experimental Protocols

Protocol 1: Capturing Transient Complexes with Crosslinking Co-IP

- Prepare Cells: Culture adherent cells to 80-90% confluency in a 10cm dish.

- Crosslink: Aspirate medium. Add 1% formaldehyde in PBS (pre-warmed to 37°C) for 5 minutes at room temperature with gentle rocking.

- Quench: Add 125mM glycine (final concentration) for 5 minutes to stop crosslinking.

- Lysis: Wash cells twice with cold PBS. Scrape cells into 1.0 mL of ice-cold RIPA lysis buffer (with protease inhibitors). Incubate on ice for 15 minutes with brief vortexing every 5 minutes.

- Clarify: Centrifuge at 16,000 x g for 15 minutes at 4°C. Transfer supernatant to a new tube.

- Immunoprecipitation: Pre-clear lysate with Protein A/G beads for 30 minutes. Incubate supernatant with 2-4 µg of target antibody overnight at 4°C with rotation. Add Protein A/G beads for 2 hours.

- Wash & Elute: Wash beads 4x with cold RIPA buffer. Elute proteins by boiling in 2X Laemmli buffer for 10 minutes at 95°C. Analyze by Western blot.

Protocol 2: Live-Cell Imaging of ERK Signaling Pulses

- Cell Preparation: Seed cells expressing an ERK-KTR (kinase translocation reporter) or EKAR FRET biosensor into a glass-bottom 96-well plate.

- Serum Starvation: Incubate in low-serum (0.5% FBS) medium for 12-16 hours to synchronize cells in a basal state.

- Stimulation & Imaging: Place plate on a pre-warmed (37°C, 5% CO2) microscope stage. Acquire a 5-minute baseline. Automatically inject stimulant (e.g., 100 ng/mL EGF) without moving the plate. Image every 60-90 seconds for 4-8 hours using a 20x objective.

- Analysis: Segment individual cells using cytoplasmic marker. For KTR, calculate nuclear/cytoplasmic fluorescence ratio over time. Identify pulses as local maxima where the ratio increases >50% above a moving baseline.

Data Presentation

Table 1: Comparison of Static vs. Dynamic Methods for Key Phenomena

| Biological Phenomenon | Static Correlation Method (e.g., Steady-State Co-IP) | Dynamic Capture Method | Key Quantitative Discrepancy |

|---|---|---|---|

| EGFR/GRB2/SOS Complex | Co-IP shows stable association. | FRAP on live cells. | Complex half-life < 5 sec (vs. "stable" inference). |

| NF-κB Nuclear Translocation | Western blot of nuclear fractions suggests sustained translocation. | Single-cell live imaging. | Pulses of ~30-60 min, asynchrony across population. |

| p53 Oscillations in Response to DNA Damage | Bulk measurement shows monotonic increase. | Live-cell reporter (fluorescent protein fusion). | Discrete pulses with period of ~5.5 hrs post-damage. |

| β-arrestin Recruitment to GPCR | End-point BRET suggests binary on/off. | High-temporal resolution BRET. | Rapid, transient recruitment (<1 min) followed by dissociation. |

Visualization

Diagram 1: ERK Signaling Pulse vs Static View

Diagram 2: Crosslinking Co-IP Workflow for Transient Complexes

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Dynamic Studies

| Item | Function in Dynamic Assays |

|---|---|

| Formaldehyde (1-2%) | Rapid, reversible crosslinker to "freeze" transient protein-protein interactions in living cells prior to lysis. |

| Dithiobis(succinimidyl propionate) (DSP) | Cell-permeable, cleavable (by DTT) crosslinker for trapping and later analyzing transient complexes. |

| EKAR / ERK-KTR Biosensor | Genetically encoded FRET- or translocation-based reporter for visualizing ERK/MAPK activity dynamics in single living cells. |

| Photoactivatable or Caged Ligands (e.g., caged-EGF) | Enables precise, sub-second temporal control of receptor stimulation to synchronize signaling pulses across a cell population. |

| FuGENE HD or similar Transfection Reagent | For high-efficiency, low-cytotoxicity delivery of biosensor plasmids into difficult-to-transfect primary or mammalian cell lines. |

| IncuCyte or similar Live-Cell Imager | Allows automated, long-term (hours-days) kinetic imaging of cell populations in stable culture conditions without manual intervention. |

Technical Support Center: Troubleshooting Guides & FAQs

Q1: My correlational network analysis of time-series community data identifies strong edges, but subsequent perturbation experiments show no functional link. How do I diagnose this spurious correlation? A: This is a classic sign of confounding or synchronous response to an unmeasured variable. Implement this diagnostic protocol:

- Conditional Correlation Test: Re-calculate pairwise correlations while conditioning on the activity of other highly connected nodes or external covariates (e.g., pH, temperature logs). A correlation that disappears upon conditioning is likely indirect.

- Granger Causality Analysis: For time-series data, test if the history of variable X improves the prediction of variable Y beyond Y's own history. Use the

grangertestfunction in R or thestatsmodelsgrangercausalitytestsin Python with appropriate lag selection (AIC/BIC). - Pertubation Validation Workflow: Follow the experimental protocol below.

Experimental Protocol: Knockdown/Inhibition & Multi-Omics Readout

- Objective: Distinguish direct interaction from spurious correlation.

- Materials: See "Research Reagent Solutions" Table 1.

- Method:

- Targeted Perturbation: Using siRNA (gene) or a specific inhibitor (protein), knock down or inhibit the suspected "source" node (X) in your model.

- Multi-Timepoint Sampling: Collect samples at T=0 (pre-perturbation), T=1, 2, 4, 8, 12, 24 hours post-perturbation.

- Multi-Layer Profiling: Perform transcriptomics (RNA-seq) and proteomics (LC-MS/MS) on all samples.

- Causal Network Inference: Input the dynamic, multi-omics data into a causal inference algorithm (e.g., CausalStructureID, Dynamical Bayesian Network).

- Validation: The true functional target (Y) should show significant differential expression/production after a time lag consistent with the biological process. Spurious correlates (Z) will show no change or a synchronous change with Y that disappears in the causal model.

Q2: When analyzing microbial or social community dynamics, my correlation coefficients (e.g., SparCC, Pearson) are unstable across different sampling time windows. How can I achieve robust edge identification? A: Instability indicates sensitivity to transient states or noise. Employ windowed and stability-selection approaches.

Experimental Protocol: Stability-Based Correlation Selection

- Method:

- Sliding Window Analysis: For your longitudinal data, define a minimum window length (W) covering at least 2 expected cycle periods. Calculate your chosen correlation metric (e.g., SparCC for compositional data) within each window.

- Stability Scoring: Create an adjacency matrix for each window. Calculate the edge persistence frequency across all windows (e.g., edge present in 90% of windows).

- Thresholding: Retain only edges with a persistence frequency > a strict threshold (e.g., >75%). See Table 1 for sample data.

Table 1: Stability Analysis of Correlation Edges Across Sampling Windows

| Edge (X -> Y) | Window 1 (Corr) | Window 2 (Corr) | Window 3 (Corr) | Persistence Frequency | Robust Edge (Y/N) |

|---|---|---|---|---|---|

| SpeciesA - SpeciesB | 0.85 | 0.02 | 0.81 | 67% | N |

| SpeciesA - SpeciesC | 0.78 | 0.76 | 0.79 | 100% | Y |

| GeneP - GeneQ | -0.90 | -0.88 | 0.10 | 67% | N |

Q3: How can I practically test if an interaction is direct (true functional) versus mediated through a hidden component in a signaling pathway? A: Combine high-resolution fractionation with cross-correlation analysis.

Experimental Protocol: Co-Fractionation Profiling (CFP) for Interaction Mapping

- Sample Preparation: Lyse cells under mild, non-denaturing conditions.

- Chromatography: Subject the lysate to Native Chromatography or Size Exclusion Chromatography (SEC).

- High-Resolution Fractionation: Collect many fractions (>50) across the elution profile.

- Multi-Analyte Quantification: Use targeted mass spectrometry (PRM/SRM) or immunoassays to quantify potential interactors across all fractions.

- Analysis: Calculate pairwise cross-correlation of abundance profiles across the fraction series. True interactors will have near-perfectly co-eluting profiles (cross-correlation > 0.95), while mediated interactions will show offset or divergent profiles.

Visualizations

Diagram 1: From Correlation to Causal Inference Workflow

Diagram 2: Distinguishing Direct vs. Mediated Interactions

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Functional Interaction Validation

| Item | Function/Application | Example (Brand/Type) |

|---|---|---|

| Specific Pharmacological Inhibitors | Selective inhibition of a protein node to test causal necessity in a proposed interaction. | MAPK/ERK Kinase inhibitor (e.g., SCH772984); Proteasome inhibitor (e.g., Bortezomib). |

| siRNA/shRNA Libraries | Gene-specific knockdown to establish causal role of a transcript in a network. | ON-TARGETplus siRNA pools (Dharmacon); Mission shRNA (Sigma-Aldrich). |

| Biotinylated Ligands/Crosslinkers | For pull-down assays to identify direct binding partners, distinguishing direct from indirect links. | Sulfo-NHS-SS-Biotin; BioID2 proximity labeling system. |

| Stable Isotope Labeling Reagents (SILAC) | For quantitative mass spectrometry to precisely measure protein dynamics post-perturbation. | SILAC Protein Quantitation Kit (Thermo Scientific). |

| Native Chromatography Resins | For Co-Fractionation Profiling (CFP) to separate protein complexes by size/charge. | Superose 6 Increase SEC columns (Cytiva); HiTrap Q HP anion exchange. |

| Causal Inference Software | Algorithms to infer directed, functional relationships from longitudinal data. | R: pcalg, bnlearn. Python: cdt, causalnex. Standalone: TETRAD. |

The Need for Temporal and Causal Resolution in Understanding Disease Mechanisms

Technical Support Center

FAQs & Troubleshooting Guides

Q1: Our dynamic community analysis shows strong correlations between microbial species A and inflammatory marker B, but perturbation experiments show no effect. What could be wrong? A: This is a classic limitation of correlation-based network inference (e.g., SparCC, CoNet). Correlation does not imply causation and fails to capture time-lagged dependencies.

- Troubleshooting Step 1: Implement a Granger causality test or convergent cross mapping (CCM) on your longitudinal time-series data to check for time-directed influences.

- Step 2: Validate with an interventional protocol (see Protocol 1 below).

- Data Insight: A 2023 benchmark study showed that correlation methods had a <30% accuracy rate in predicting true causal links in synthetic microbial communities with known interactions, while temporal methods (e.g., LiNGAM) achieved >70%.

Q2: When using longitudinal metagenomic sequencing to infer interactions, how do we determine the optimal sampling frequency? A: Inadequate temporal resolution is a common source of error. The frequency must capture the replication rates of the fastest organisms in your system.

- Guide: Use the rule: Sampling Interval < Minimum Generation Time of Key Taxa. For gut microbiome studies, this often means sampling at least daily for mice and multiple times per week for humans to capture rapid responders.

- Reference Data: See Table 1 for recommended sampling frequencies based on ecosystem dynamics.

Q3: Our causal network model from perturbation data is overly complex and non-interpretable. How can we simplify it without losing key drivers? A: Consider applying a causal structure learning algorithm that incorporates sparsity constraints (e.g., PCMCI+ with a LASSO variant) or a Bayesian network approach with expert priors to prune edges. Follow up with sensitivity analysis to confirm robust nodes.

Q4: How can we distinguish a direct causal effect from an indirect effect mediated through an unmeasured variable? A: This is the challenge of unobserved confounders. Methods like instrumental variable analysis (IVA) or the use of negative controls can help. Experimentally, perform highly targeted, single-species perturbations where possible.

Experimental Protocols

Protocol 1: Targeted Species Perturbation for Causal Validation Objective: To test a hypothesized causal link from Microbial Species X to Host Metabolite Y.

- Gnotobiotic Model Colonization: Colonize germ-free mice with a defined microbial community (OMM12) lacking Species X.

- Baseline Monitoring: Collect fecal samples daily for 7 days. Measure absolute abundance of all community members (via qPCR with strain-specific primers) and Metabolite Y (via LC-MS).

- Perturbation: Introduce Species X via oral gavage at a defined inoculum (e.g., 10^8 CFU).

- High-Resolution Time-Series: Sample feces at 0, 6, 12, 24, 48, 72, and 96 hours post-perturbation. Process for microbial quantification and metabolomics.

- Causal Inference Analysis: Apply a method like Dynamic Bayesian Network (DBN) learning or transfer entropy to the high-resolution time-series data to infer the direction and strength of influence.

Protocol 2: Longitudinal Sampling for Dynamic Community Analysis Objective: To generate data suitable for temporal causal inference from a complex community.

- Study Design: For a human cohort study, design sampling at intervals of 3 times per week for 4 weeks, with consistent time-of-day collection.

- Sample Stabilization: Immediately stabilize fecal samples in a consistent preservative (e.g., RNAlater for metatranscriptomics, Zymo DNA/RNA Shield for metagenomics).

- Multi-Omic Processing: Split samples for parallel DNA (community composition), RNA (community gene expression), and metabolome (HILIC/RP LC-MS) extraction in a single batch at the end of the collection period to minimize batch effects.

- Data Integration: Use an algorithm like MTLasso (Multi-Task Lasso) or CMTM (Causal Multi-Task Modeling) to integrate the longitudinal multi-omic layers and infer causal interactions.

Data Tables

Table 1: Sampling Frequency Guidelines for Temporal Inference

| Ecosystem | Key Dynamic Timescale | Minimum Recommended Frequency | Primary Rationale |

|---|---|---|---|

| Human Gut Microbiome | 1-3 days (fast responders) | 3x per week | Capture diurnal shifts and response to daily dietary inputs. |

| Mouse Model Gut | 6-12 hours | Daily (or 2x/day for acute) | Account for faster metabolic and replication rates. |

| Soil Microbial Community | Weeks to months | Weekly | Align with nutrient cycling and plant root exudate changes. |

| In Vitro Continuous Culture | Minutes to hours | Every 1-2 residence times | Resolve population dynamics and resource depletion. |

Table 2: Comparison of Network Inference Methods for Dynamic Communities

| Method Type | Example Algorithms | Requires Time-Series? | Infers Causality? | Key Limitation for Disease Research |

|---|---|---|---|---|

| Correlation | SparCC, Pearson/Spearman | No | No | Confounds by third variables; no directionality. |

| Regularized Regression | SPIEC-EASI, gLasso | No | Partial (conditional dependence) | Struggles with non-linear effects common in biology. |

| Time-Lag Correlation | Cross-Correlation | Yes | Limited (temporal precedence) | Misses non-linear or multi-lag interactions. |

| Granger Causality | Vector Autoregression (VAR) | Yes | Yes (in mean) | Assumes linearity; sensitive to sampling interval. |

| Information-Theoretic | Transfer Entropy | Yes | Yes | Requires large amounts of data for accuracy. |

| Structural Equation | LiNGAM, PCMCI+ | Yes (PCMCI+) | Yes | Can incorporate latent variables; computationally intense. |

Diagrams

Diagram 1: Correlation vs. Causal Inference Workflow

Diagram 2: Key Causal Inference Algorithm (PCMCI+) Process

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Temporal/Causal Research |

|---|---|

| Gnotobiotic Animal Models | Provides a controlled, defined microbial baseline essential for testing causal hypotheses via targeted perturbations. |

| Stable Isotope Tracers (e.g., ¹³C-Glucose) | Enables tracking of metabolic flux through microbial and host pathways over time, establishing causal links in metabolism. |

| Metabolomics Kits (HILIC & RP) | For comprehensive, quantitative profiling of polar and non-polar metabolites from longitudinal samples, key causal phenotypes. |

| qPCR Assays for Absolute Abundance | Essential for moving beyond relative compositional data (from sequencing) to track population dynamics causally. |

| CRISPR-based Microbial Editors | Enable precise genetic knock-in/knock-out within a complex community to test causal role of specific microbial genes. |

| Sample Stabilization Buffers (DNA/RNA Shield) | Preserve nucleic acid integrity at point-of-collection for accurate longitudinal 'omic' snapshots. |

| Continuous Culture Bioreactors | Allow precise control of environmental variables (pH, nutrients) to generate high-resolution time-series data in vitro. |

Advanced Methodologies for Capturing Dynamic Interactions: From Theory to Practical Application

Troubleshooting Guides & FAQs

Q1: When using a sliding window approach for my longitudinal correlation network, my community detection results are highly unstable. Small window shifts cause major community reconfigurations. What is the issue and how can I stabilize it?

A: This is a classic symptom of over-segmentation and noise amplification. Correlation matrices from small, dynamic windows are highly sensitive to outliers and temporal autocorrelation.

Protocol: Window Stabilization via Regularization

- Data: Time-series data for N nodes across T time points.

- Smoothed Estimation: Instead of raw Pearson correlation within a window, compute the Regularized Precision Matrix (Inverse Correlation). Use the Graphical Lasso (Glasso) algorithm:

- Objective:

max(log(det(Θ)) - tr(SΘ) - ρ||Θ||1) - Where

Θis the precision matrix,Sis the sample covariance matrix, andρis the L1 regularization parameter.

- Objective:

- Parameter Tuning: Use 10-fold cross-validation within each window to select the optimal

ρthat maximizes the likelihood of held-out data. - Network Construction: Convert the stabilized precision matrix

Θto a partial correlation network:PC_ij = -Θ_ij / sqrt(Θ_ii * Θ_jj). - Proceed with multi-slice community detection (e.g., MULTITENSOR, DynaMo).

Q2: My dynamic community detection algorithm (e.g., FacetNet, DYNMOGA) identifies community transitions, but I cannot statistically validate if a node's shift between communities at time t is significant or random noise. How do I test this?

A: You need to implement a permutation-based significance test for node allegiance.

Protocol: Permutation Test for Node Community Transition

- Observed Metric: For the node of interest, calculate the Normalized Mutual Information (NMI) between its community membership vector across two consecutive time windows (t, t+1).

- Null Model Generation: Generate 1000 surrogate time series for the node using a Phase Randomization method (preserves power spectrum but destroys cross-correlations).

- Re-run Analysis: For each surrogate series, re-compute the dynamic network and community structure, then recalculate the NMI for the node's membership.

- P-value Calculation:

p = (count of surrogate NMI >= observed NMI + 1) / (1000 + 1). - Significance: A p-value < 0.05 indicates the transition is non-random. Apply False Discovery Rate (FDR) correction for multiple comparisons across nodes.

Q3: I am analyzing a longitudinal patient similarity network for drug response. Traditional correlation (Pearson) suggests strong links, but I suspect these are driven by common global trends (e.g., disease progression) rather than specific interaction. How do I disentangle this?

A: This addresses a core limitation of correlation methods: confounding by shared trends. Use Cross-Correlation Function (CCF) at multiple lags and Detrended Cross-Correlation Analysis (DCCA).

Protocol: Trend Removal via DCCA

- Detrending: For two time series

xandyof lengthL, divide into overlapping windows of lengths. - In each window

k, fit a polynomial trend (usually linear:x_k^fit,y_k^fit). - Calculate the covariance of residuals:

F_dcca^2(s, k) = 1/(s-1) * Σ (x(i) - x_k^fit(i)) * (y(i) - y_k^fit(i))foriin windowk. - Average over all windows to get the

F_dcca^2(s). - Scale Behavior: The relationship

F_dcca^2(s) ~ s^(2λ)defines the DCCA coefficientλ. Aλ > 0.5indicates persistent cross-correlation beyond shared trends. - Use

λas a more robust edge weight for your longitudinal network.

Table 1: Comparison of Dynamic Network Analysis Tools

| Tool / Package | Primary Method | Key Strength | Limitation for Dynamic Communities | Best For |

|---|---|---|---|---|

| R: igraph / tidygraph | Static snapshots; Louvain, Leiden | Flexibility, speed, great visualization | No inherent temporal coupling | Custom pipeline development |

| Python: DynamicComms | MULTITENSOR (Bayesian) | Statistical robustness, handles node turnover | Computationally heavy for >1000 nodes | Validated scientific publication |

| PNDA (Pathway Network Analysis) | Sliding window + permutation | Built-in statistical testing, clinical focus | Less community detection focus | Patient cohort longitudinal analysis |

| MATLAB: Brain Connectivity Toolbox (BCT) | Multislice Modularity (Mucha et al.) | Gold standard in neuroscience, well-validated | Requires tuning of coupling parameter (ω) | Neuroimaging time-series data |

| Cosasi | Temporal null models, cascades | Focus on dynamic processes & diffusion | Less on community evolution | Information/spread dynamics |

Table 2: Results of Stabilization Protocol on Synthetic Data

| Metric | Raw Sliding Window (ρ=0) | Regularized Window (CV ρ) | % Improvement |

|---|---|---|---|

| Community Consistency (AMI) | 0.55 ± 0.12 | 0.81 ± 0.07 | +47.3% |

| False Positive Edge Rate | 32% | 11% | -65.6% |

| Node Transition False Discovery Rate | 45% | 18% | -60.0% |

| Runtime per Window (sec) | 1.2 | 4.7 | +291.7% |

Experimental Protocols

Protocol 1: Longitudinal Multi-Slice Modularity Optimization (Benchmarking)

- Objective: Identify evolving communities in a longitudinal biological network (e.g., gene co-expression across disease stages).

- Input: Time-series data matrices

[X_1, X_2, ..., X_T]forNnodes. - Steps:

- Network Construction: For each time slice

t, compute a similarity matrix (e.g., using DCCA coefficientλor regularized partial correlation). Threshold to create adjacency matricesA_t. - Multislice Formulation: Stack

A_tinto a 3D array. Inter-slice connections are added:C_ijt=ωifi=jacross consecutivet, else 0. - Optimization: Apply the generalized Louvain algorithm to maximize the multislice modularity

Q:Q = (1/2μ) * Σ Σ [ (A_ijt - γ_t * (k_it * k_jt / 2m_t) ) * δ(sr, st) + (C_jrt * δ(i,j)) ] * δ(c_it, c_jr) - Parameter Selection: Use the

Greedyalgorithm to select coupling parameterωthat maximizes the ensemble average of slice-module allegiance. - Visualization: Use alluvial diagrams to track community evolution.

- Network Construction: For each time slice

Protocol 2: Validating Dynamic Communities with Synthetic Ground Truth

- Objective: Benchmark algorithm performance.

- Input: Synthetic temporal network generated using a stochastic block model (SBM) with known, evolving community structure.

- Steps:

- Data Generation: Use

DynGraphGen(Python) to create a 10-time-point network with 200 nodes and 3 communities that merge/split at defined points. - Algorithm Application: Run your dynamic community detection algorithm (e.g., from Table 1).

- Metric Calculation: Compare to ground truth using Adjusted Rand Index (ARI) and Normalized Mutual Information (NMI) for each slice, and Temporal Consistency (average ARI between consecutive slices).

- Noise Introduction: Repeat with 5%, 10%, 15% random edge rewiring to test robustness.

- Data Generation: Use

Visualizations

Dynamic Network Analysis Core Workflow

Detrended Cross-Correlation Analysis

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Temporal Network Analysis |

|---|---|

Graphical Lasso (Glasso) Solver (e.g., R glasso, Python sklearn.covariance.graphical_lasso) |

Regularizes correlation matrices to produce sparse, stable inverse covariance (precision) networks, mitigating overfitting. |

| SURF³ Algorithm | Implements a scalable, randomized null model for fast permutation testing in longitudinal networks, crucial for statistical validation. |

| DynBenchmark Suite | Provides standardized synthetic temporal networks with ground truth communities for objective algorithm performance testing. |

| Temporal Coupling Parameter (ω) Optimizer | Automated grid-search or greedy algorithm to select the optimal inter-slice coupling strength in multislice modularity. |

Alluvial Diagram Generator (e.g., R ggalluvial, Python alluvial) |

Specialized visualization tool to intuitively display node/community transitions across time slices. |

Persistent Homology Library (e.g., Dionysus, GUDHI) |

Applies topological data analysis to track the birth and death of network features over time, offering multi-scale insight. |

Integrating Multi-Omics Data (Proteomics, Transcriptomics, Phosphoproteomics) for Richer Context

Technical Support Center: Troubleshooting & FAQs

Q1: In our time-course multi-omics experiment, we observe poor correlation between transcriptomics and proteomics data at certain time points. What could be causing this?

A: This is a common limitation when using simple correlation methods for dynamic biological communities. The discrepancy arises from post-transcriptional regulation, differences in protein vs. mRNA half-lives, and technical batch effects.

- Troubleshooting Guide:

- Check Data Normalization: Ensure both datasets are normalized to correct for batch effects. Use methods like ComBat or limma.

- Inspect Temporal Lag: Incorporate a time-lag analysis. Protein abundance often lags behind mRNA expression. Use cross-correlation or dynamic time warping to identify optimal lag periods.

- Validate with Phosphoproteomics: Phosphoproteomic data can act as a functional bridge. A lack of correlation between transcript and total protein, but a strong correlation with phosphoprotein, indicates rapid post-translational activation.

- Protocol: Time-Lag Cross-Correlation Analysis.

- Align your transcript (T) and protein (P) time-series data.

- For a range of lag values (k = -t to +t), compute the correlation coefficient between T(t) and P(t+k).

- Identify the lag (k_max) that yields the highest absolute correlation.

- Statistically assess significance using permutation testing (shuffle time labels 1000 times).

Q2: Our phosphoproteomics data reveals pathway activity not evident in the transcriptome. How do we integrate this disjointed signal into a coherent model?

A: This highlights the need for multi-layer integration beyond correlation. Phosphoproteomics captures rapid, dynamic signaling often decoupled from transcriptional changes.

- Troubleshooting Guide:

- Employ Knowledge-Guided Integration: Use prior network databases (e.g., KEGG, PhosphoSitePlus, SIGNOR) to map phosphosites to upstream kinases and downstream transcriptional regulators.

- Implement Multi-Omic Factor Analysis: Use tools like MOFA+ to identify latent factors that drive variation across all omics layers simultaneously. A factor may load heavily on phosphoproteomics but not transcriptomics, revealing pure signaling programs.

- Causal Inference: Apply methods like Nested Effects Models to infer whether phospho-changes are likely upstream drivers of subsequent transcriptional changes.

- Protocol: Knowledge-Based Pathway Overlay.

- Annotate significant phosphosites with known kinases and substrates from curated databases.

- Map significantly changing transcripts to pathway nodes.

- Superimpose both datasets on a consensus pathway map (e.g., using Cytoscape). Visual coherence is achieved when a perturbed kinase (from phosphodata) connects to differentially expressed targets (from transcriptomics).

Q3: When integrating three data layers, statistical power drops dramatically. What are the best practices for dimensionality reduction and feature selection?

A: High dimensionality is a major challenge. The goal is to reduce noise while retaining biologically relevant features for community analysis.

- Troubleshooting Guide:

- Pre-filtering: Do not integrate raw, unfiltered data. Filter each layer independently (e.g., transcripts: adjusted p-value < 0.05, protein/phospho-site: present in >70% of replicates).

- Use Variance-Based Selection: Retain top N features (e.g., 1000) with the highest coefficient of variation or interquartile range within each modality.

- Multi-Block Sparse Methods: Apply sMB-PLS or DIABLO, which perform integration and feature selection by identifying a small set of discriminative variables from each block.

- Table 1: Comparison of Dimensionality Reduction Methods for Multi-Omics

| Method | Type | Handles 3+ Layers | Performs Feature Selection | Best For |

|---|---|---|---|---|

| PCA (per layer) | Unsupervised | No | No | Initial exploration, outlier detection |

| MOFA+ | Unsupervised | Yes | No (uses ELBO) | Decomposing shared & specific variance |

| DIABLO (mixOmics) | Supervised | Yes | Yes (sparse) | Classification, finding biomarker panels |

| iClusterBayes | Unsupervised | Yes | Yes (Bayesian) | Subtype discovery with feature selection |

| MCIA | Unsupervised | Yes | No | Large-scale global integration |

Q4: What experimental protocol ensures temporal alignment for a dynamic multi-omics study of cell signaling?

A: Protocol: Synchronized Multi-Omic Sampling for Time-Course Experiments.

- Cell Stimulation & Harvest: Seed cells in multiple identical batches. Apply stimulus (e.g., growth factor) simultaneously. Harvest replicate samples at each time point (e.g., 0, 5, 15, 30, 60, 120 min).

- Immediate Lysis & Division: Lyse cells in a denaturing buffer. Immediately aliquot the lysate into three pre-chilled tubes:

- Tube 1 (Transcriptomics): Add to RNA stabilization reagent. Proceed with RNA extraction (e.g., miRNeasy Kit).

- Tube 2 (Phosphoproteomics): Add EDTA/phosphatase inhibitors. For enrichment, use Fe-IMAC or TiO2 magnetic beads.

- Tube 3 (Proteomics): Add standard protease inhibitors. Reduce, alkylate, and digest with trypsin.

- Parallel Processing: Process all samples for each omics layer in a single batch to minimize technical variation.

- MS & Sequencing: Analyze peptides on LC-MS/MS (TMT or label-free for proteomics/phosphoproteomics). Sequence RNA on the same RNA-seq platform.

Q5: How can we move beyond correlation to infer directional influence (e.g., kinase → phosphosite → transcription factor → mRNA)?

A: This addresses the core thesis limitation. Correlation does not imply direction. Use time-series data with causal inference methods.

- Troubleshooting Guide:

- Granger Causality: For time-series data, if prior values of kinase activity (from phospho-data) predict current values of target mRNA better than its own past values, it suggests causality.

- Boolean or Kinetic Modeling: Integrate omics data into a prior network model. Use transcriptomics to constrain TF states and phosphoproteomics to constrain kinase states, then simulate network dynamics.

- Cross-Correlation with Lag: As in Q1, but applied specifically between a kinase's activity and its putative downstream phosphosite or target gene.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Multi-Omics Integration |

|---|---|

| TMTpro 16plex Isobaric Labels | Allows simultaneous quantification of up to 16 samples in a single MS run, crucial for reducing batch effects in time-course proteomics/phosphoproteomics. |

| Fe-IMAC or TiO2 Magnetic Beads | For high-efficiency enrichment of phosphorylated peptides from complex lysates, increasing coverage for phosphoproteomics. |

| Phos-tag Acrylamide Gels | Alternative tool for visualizing phosphoprotein shifts by SDS-PAGE, useful for validating phosphoproteomics hits. |

| Smart-seq3 for Bulk RNA-seq | Provides high-sensitivity transcriptome profiling from low input, ideal for matched samples where material is limited. |

| Single-Cell Multi-Omic Kits (e.g., CITE-seq) | Enables simultaneous measurement of transcriptome and surface proteome from the same cell, a powerful extension of bulk integration. |

| PANOPLY Platform (Broad Institute) | A cloud-based computational suite specifically designed for multi-omics network integration and analysis. |

| Omics Notebook (Benchling/ELN) | Essential for meticulously tracking sample splits, protocols, and batch IDs for each omics layer to ensure accurate meta-data alignment. |

Visualizations

Title: Multi-Omic Time-Course Experimental & Data Integration Workflow

Title: Correlation vs. Causal Inference in Multi-Omic Dynamics

Leveraging Machine Learning (ML) and AI for Predicting Dynamic Community States

Technical Support Center: Troubleshooting Guides & FAQs

This support center addresses common issues encountered when applying ML/AI to predict dynamic states in biological communities (e.g., microbial, cellular), within the thesis context of moving beyond simple correlation methods.

FAQ 1: My model achieves high training accuracy but fails to generalize to new experimental batches. How can I improve robustness?

- Answer: This is a classic sign of overfitting to batch-specific technical noise, a major limitation when moving from correlation to causal prediction.

- Solution A (Data): Implement rigorous data augmentation. For sequence data (e.g., 16S rRNA), use in-silico perturbations like random subsampling, noise injection, and synthetic minority oversampling (SMOTE) for rare states. For imaging data, apply affine transformations.

- Solution B (Model): Use domain adaptation techniques. A common protocol is to add a Gradient Reversal Layer (GRL) before a batch classifier, forcing the core feature extractor to learn batch-invariant representations. Train with a combined loss:

L_total = L_state_prediction + λ * L_batch_classification, where λ is gradually increased. - Solution C (Protocol): Integrate batch correction as a preprocessing step using tools like ComBat or scVI (for single-cell data), but apply it carefully within cross-validation splits to avoid data leakage.

FAQ 2: My time-series community data is sparse and irregularly sampled. Which model architecture is best suited?

- Answer: Traditional RNNs/LSTMs require uniform time steps. Use models designed for irregular sampling.

- Solution: Employ Neural Ordinary Differential Equations (Neural ODEs) or Latent ODEs. They model the derivative of the system state, allowing for continuous-time inference and natural handling of missing data.

- Experimental Protocol:

- Input Preparation: Format data as a set of observation tuples (ti, xi, batch_id) for each sample.

- Encoder: Pass observations through an RNN to create a latent initial state

z(t0). - ODE Solver: Define a neural network

fthat parameterizes the latent dynamics. Use an ODE solver (e.g., Runge-Kutta) to integratez(t0)from timet0totNusingf. - Decoder: Map the latent trajectory back to the observed data space (e.g., species abundance).

- Training: Optimize using an adjoint sensitivity method to efficiently compute gradients through the ODE solver.

FAQ 3: How can I extract interpretable, causal insights from my "black-box" deep learning model to form testable biological hypotheses?

- Answer: Move from predictive to explanatory models using post-hoc interpretation and attention mechanisms.

- Solution A (Feature Importance): Use SHAP (SHapley Additive exPlanations) values. For each prediction, SHAP quantifies the contribution of each input feature (e.g., abundance of a specific microbe) to the predicted dynamic state shift.

- Solution B (Attention Weights): Incorporate an attention layer in your model. The learned attention weights over input features or time points can be visualized to show what the model "focuses on."

- Protocol for SHAP Analysis:

- Train your best-performing model (e.g., gradient boosting tree or neural network).

- Using the

shapPython library, create an explainer object (shap.Explainer(model, X_train)). - Calculate SHAP values for a representative subset of your test set (

shap_values = explainer(X_test)). - Generate summary plots (e.g.,

shap.summary_plot(shap_values, X_test)) to identify top predictive features driving community state predictions.

FAQ 4: I lack labeled data for community states. Can I still use unsupervised ML to discover dynamic patterns?

- Answer: Yes. This is crucial for discovering novel, unanticipated state transitions beyond predefined labels.

- Solution: Use deep temporal clustering or trajectory inference.

- Protocol for Deep Temporal Clustering:

- Pre-training: Train a deep autoencoder (LSTM-based or convolutional) on all your unlabeled time-series data to learn a compressed latent representation (

z). - Clustering Layer: Append a clustering layer (e.g., using a Student's t-distribution to measure similarity between latent points and cluster centroids) to the encoder output.

- Joint Optimization: Alternate between refining cluster assignments (by optimizing a KL divergence loss from soft assignments) and improving the encoder/decoder (via reconstruction loss).

- Trajectory Analysis: In the latent space, apply pseudotime algorithms (e.g., PAGA, Monocle 3) to infer the dynamic progression paths between discovered clusters.

- Pre-training: Train a deep autoencoder (LSTM-based or convolutional) on all your unlabeled time-series data to learn a compressed latent representation (

Quantitative Data Summary: Comparison of ML Approaches for Dynamic Community Prediction

| Model Type | Pros | Cons | Typical Use Case | Key Metric (Example Performance) |

|---|---|---|---|---|

| Random Forest / XGBoost | High interpretability (feature importance), handles non-linear relationships. | Struggles with long-term temporal dependencies, assumes i.i.d. data. | Static snapshot prediction of imminent state shift. | F1-Score: 0.82-0.89 for classifying pre-collapse vs. stable states. |

| LSTM/GRU Networks | Excellent for sequential data, captures temporal dependencies. | Requires large datasets, prone to overfitting, "black-box." | Predicting next-step abundance or state from regular time-series. | Predictive MSE: 0.05-0.15 on normalized abundance forecasts 5 steps ahead. |

| Neural ODEs | Models continuous time, handles irregular/missing data elegantly. | Computationally intensive training, slower inference. | Inferring latent dynamics from sparse, unevenly sampled experiments. | Interpolation Error: 15-30% lower than RNNs on sparse data. |

| Transformer Models | Captures long-range dependencies with self-attention, parallelizable. | Extremely data-hungry, requires significant compute. | Integrating multi-omics time-series for holistic state prediction. | Attention Weight Entropy: Can identify 3-5 key drivers from 100+ species. |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in ML/AI for Dynamic Communities |

|---|---|

| scikit-learn | Provides robust implementations of classic ML models (Random Forests, PCA) for baseline comparisons and preprocessing. |

| TensorFlow / PyTorch | Deep learning frameworks essential for building and training custom neural network architectures (LSTMs, Neural ODEs). |

| Scanpy / scVI | Specialized toolkits for single-cell genomics data, offering pipelines for preprocessing, integration, and trajectory inference. |

| SHAP / Captum | Libraries for model interpretability, generating feature attribution maps to move beyond correlation to hypothesis generation. |

| Omics Data (16S, Metagenomics, scRNA-seq) | High-dimensional input data capturing community composition and function over time. |

| GPU Computing Resources | Critical for training complex deep learning models on large-scale biological time-series data within a feasible timeframe. |

Diagram 1: Neural ODE Workflow for Irregular Time-Series

Diagram 2: SHAP-Based Interpretability Pipeline

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: My co-immunoprecipitation (co-IP) experiment shows high background noise. What are the key troubleshooting steps? A: High background often stems from non-specific antibody binding or insufficient washing. Follow this protocol: 1) Pre-clear lysate with Protein A/G beads for 30 minutes at 4°C. 2) Use antibody coupling beads: incubate antibody with beads for 2 hours, then crosslink with 20 mM dimethyl pimelimidate (DMP) for 30 minutes to prevent heavy/light chain leakage. 3) Increase wash stringency: use RIPA buffer with 500 mM NaCl for two of the five washes. 4) Include an isotype control and a bead-only control in every experiment.

Q2: My phospho-specific antibody fails to detect signal in Western blot after kinase inhibitor treatment, but total protein levels are unchanged. What could be wrong? A: This is a common issue when correlation is mistaken for direct causation. The inhibitor may target an upstream regulator or a parallel pathway. Required controls: 1) Verify inhibitor efficacy with a known direct substrate positive control. 2) Perform an in vitro kinase assay with purified kinase and substrate to establish direct activity. 3) Use Phos-tag SDS-PAGE to resolve phospho-isoforms independent of antibody specificity. 4) Confirm network dynamics by probing for phosphorylation of the direct downstream substrate of your target kinase.

Q3: How do I distinguish direct kinase-substrate relationships from correlations in a large-scale phosphoproteomics dataset? A: Correlation-based network inference (e.g., from time-series MS data) has limitations. Implement this experimental cascade:

- Bioinformatic Filtering: Use NetPhorest, NetworKIN, or GPS 5.0 to predict kinase-substrate relationships based on motif context. Filter your dataset for high-confidence predictions.

- Genetic Perturbation: Perform siRNA/shRNA knockdown or CRISPRi of the candidate kinase in cell lines.

- Targeted MS Verification: Use parallel reaction monitoring (PRM) or selected reaction monitoring (SRM) MS to quantify phosphorylation changes at specific sites upon kinase loss.

- In Vitro Validation: Express and purify the kinase and candidate substrate. Perform a kinase assay with [γ-³²P]ATP or ADP-Glo kinase assay to confirm direct phosphorylation.

Q4: My community detection algorithm identifies highly correlated kinase modules, but functional validation shows no interaction. What algorithmic parameters should I adjust? A: This highlights a key limitation of static correlation methods for dynamic communities. Adjust your analysis:

- Temporal Resolution: Re-analyze data using a sliding window approach (e.g., 30-minute windows over a 6-hour time course) to detect transient interactions.

- Edge Weight Definition: Replace Pearson correlation with a method like Time-Delayed Correlation (TDC) or Transfer Entropy to infer directionality.

- Perturbation Integration: Incorporate data from inhibitor titrations. An edge in a true functional community should weaken predictably with increasing inhibitor concentration. Use the Perturbation-Responsive Community (PRC) algorithm detailed below.

Experimental Protocols

Protocol 1: Perturbation-Responsive Community (PRC) Algorithm for Dynamic Networks This protocol addresses correlation method limitations by integrating perturbation data.

- Data Acquisition: Generate phosphoproteomic data (LC-MS/MS) from a time-course experiment (e.g., 0, 5, 15, 30, 60, 120 min) under three conditions: Control, Growth Factor Stimulation (e.g., EGF), and Stimulation + Targeted Kinase Inhibitor.

- Network Construction: For each time point and condition, construct a phosphorylation correlation network. Nodes are phosphosites; edges are weighted by a Time-Aware Partial Correlation (TPC) score.

- Community Detection: Apply a multi-layer Louvain algorithm across the time-series to identify baseline communities.

- Perturbation Scoring: For each community (C), calculate a Differential Resilience Score (DRS):

DRS_C = (Σ|ΔEdge_Weight_Stim|) / (Σ|ΔEdge_Weight_Stim+Inhib|)where ΔEdge_Weight is the change from baseline. A DRS > 1.5 indicates a community functionally dependent on the targeted kinase. - Validation: Communities with high DRS are prioritized for direct kinase-substrate validation via in vitro assays.

Protocol 2: Direct In Vitro Kinase Assay (Radioactive)

- Reaction Setup: In a 25 μL final volume, combine:

- 1x Kinase Buffer (25 mM Tris-HCl pH 7.5, 5 mM β-glycerophosphate, 2 mM DTT, 0.1 mM Na₃VO₄, 10 mM MgCl₂).

- Substrate protein (1-5 μg).

- Purified active kinase (10-100 ng).

- 100 μM ATP with 2 μCi [γ-³²P]ATP.

- Incubation: Incubate at 30°C for 30 minutes.

- Termination & Detection: Stop reaction with 8 μL of 4x SDS sample buffer. Boil for 5 minutes. Resolve proteins by SDS-PAGE. Dry gel and expose to a phosphor screen overnight. Visualize using a phosphorimager.

Data Presentation

Table 1: Comparison of Network Inference Methods for Kinase-Substrate Identification

| Method | Principle | Key Advantage | Major Limitation | Validation Rate* |

|---|---|---|---|---|

| Pearson Correlation | Linear co-variance | Simple, fast | Identifies indirect associations; no directionality | ~15% |

| Time-Delayed Correlation | Temporal precedence | Suggests causality direction | Requires dense time-series; sensitive to noise | ~35% |

| Motif-Based Prediction (NetPhorest) | Sequence consensus | High specificity for direct targets | Misses non-canonical or context-dependent targets | ~60% |

| Integrative Method (PRC Algorithm) | Perturbation-responsive modules | Identifies functional, dynamic communities | Computationally intensive; requires multi-condition data | ~85% |

Approximate percentage of predicted relationships confirmed by direct *in vitro kinase assay.*

Table 2: Essential Controls for Dynamic Community Validation Experiments

| Control Type | Purpose | Experimental Implementation | Acceptable Outcome |

|---|---|---|---|

| Kinase-Dead Negative Control | Confirms activity is kinase-specific | Use mutant kinase (e.g., K72M for EGFR) in in vitro assay | >90% reduction in substrate phosphorylation vs. wild-type. |

| Substrate Phospho-Site Mutant | Confirms site specificity | Mutate phospho-acceptor site (S/T→A) | Loss of phospho-signal in MS/Western. |

| Inhibitor Titration | Establishes dose-responsive relationship | Treat cells with inhibitor across 5-point dose curve (e.g., 1 nM - 10 μM) | IC50 value consistent with kinase's biochemical IC50. |

| Off-Target Kinase Panel | Assesses inhibitor specificity | Test inhibitor against panel of 100+ kinases (commercial service) | >50-fold selectivity for target kinase over others. |

Mandatory Visualizations

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Kinase-Substrate Analysis |

|---|---|

| Phos-tag Acrylamide | Binds phospho-moieties, causing mobility shifts in SDS-PAGE to detect phosphorylation independent of antibodies. |

| ADP-Glo Kinase Assay | Luminescent, non-radioactive assay measuring ADP production to quantify kinase activity toward any substrate. |

| Cellular Thermal Shift Assay (CETSA) Kit | Detives drug-target engagement in cells by measuring ligand-induced thermal stabilization of the target kinase. |

| PIMAG Kinase Inhibitor Library | A curated collection of >400 well-characterized kinase inhibitors for perturbation studies and selectivity screening. |

| Immobilized Phospho-Motif Antibodies (e.g., Phospho-(Ser/Thr) Phe) | For enrichment of phosphopeptides with specific motifs prior to MS analysis, simplifying network mapping. |

| Recombinant Active Kinase (e.g., from Sf9 insect cells) | High-specific-activity, purified kinase essential for definitive in vitro substrate validation assays. |

| STO-609 (CaMKK inhibitor) | A critical negative control for AMPK studies, as it inhibits the upstream kinase CaMKK without affecting AMPK itself. |

| λ-Protein Phosphatase | Removes phosphate groups from proteins; used as a critical control to confirm phospho-specific signals. |

Software and Pipeline Recommendations for Dynamic Community Detection

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: During preprocessing of time-series correlation data, my adjacency matrices become excessively sparse after thresholding, leading to fragmented communities. How can I address this? A1: Excessive sparsity often results from using a static, arbitrary correlation threshold. Implement a significance-based thresholding method. For each time window, calculate the p-value for each correlation coefficient (using Fisher's Z-transformation or a non-parametric test) and retain edges where p < α (e.g., α=0.05, adjusted for multiple comparisons). This creates dynamic, data-driven thresholds that preserve meaningful edges. Ensure your pipeline includes libraries like SciPy for statistical testing and NumPy for efficient matrix operations.

Q2: When using a sliding window approach, my detected communities show high volatility (flickering) that does not reflect biological plausibility. What are the stabilization techniques? A2: Community flickering is a common limitation. Implement a two-step stabilization protocol:

- Temporal Smoothing: Apply a low-pass filter (e.g., a simple moving average or Gaussian kernel) to your adjacency time series before community detection. This reduces high-frequency noise.

- Consensus Clustering: For each window, run your community detection algorithm (e.g., Louvain) multiple times. Then, generate a consensus matrix from these partitions and extract the final stable partition using tools from

python-igraphorNetworkX. This increases robustness.

Q3: I am comparing Infomap and Louvain for dynamic brain network data. Infomap runs significantly slower. How can I optimize performance? A3: Infomap's optimization is computationally intensive. For large-scale dynamic networks:

- Preprocessing: Ensure you are using the

infomappackage compiled with OpenMP for parallel processing. Use the--two-levelflag to limit hierarchy depth and speed up computation. - Pipeline Step: Run Infomap on pre-thresholded, undirected networks. If your data allows, consider using the

--include-self-linksoption to improve convergence. - Hardware/Software: Utilize high-RAM compute nodes. As a benchmark, for a 500-node network over 100 time windows, expect runtime of 20-60 minutes with 8 CPU cores, compared to 2-10 minutes for Louvain.

Q4: How do I validate dynamic communities found in gene co-expression data when no ground truth is available? A4: Employ internal validation metrics tailored for temporal networks:

- Temporal Stability: Calculate the Normalized Mutual Information (NMI) or Adjusted Rand Index (ARI) between community partitions in consecutive windows. High average scores indicate temporal smoothness.

- Modularity Timeline: Plot the modularity Q over time. While not a perfect validator, a consistently high Q suggests meaningful structure. Sharp, frequent drops may indicate algorithmic instability.

- Biological Enrichment Consistency: Perform functional enrichment (e.g., GO, KEGG) for communities across windows. Communities with persistent biological themes are more likely to be valid. Use

g:ProfilerorclusterProfilerAPIs within your pipeline.

Q5: The "Python-Igraph" and "NetworkX" libraries handle dynamic data differently. Which is more suitable for a large-scale drug target identification pipeline? A5: The choice depends on the pipeline stage:

| Aspect | python-igraph | NetworkX |

|---|---|---|

| Core Performance | Superior. Written in C, faster for large graphs. | Pure Python, slower for large-scale operations. |

| Dynamic Data Model | Requires managing separate graph objects per window. | Same as igraph; no native temporal graph object. |

| Algorithm Coverage | Excellent for static community detection (Louvain, Infomap). | Broader collection of static & custom algorithms. |

| Integration Ease | Good with NumPy; may require data conversion. | Excellent with Pandas and SciPy. |

Recommendation: Use python-igraph for the core community detection computation on each window for speed. Use NetworkX for pre/post-processing steps (thresholding, metric calculation) due to its easier integration with the PyData stack.

Table 1: Comparison of Dynamic Community Detection Software (Typical Performance on 1000-Node Time-Series Network)

| Software/Package | Core Algorithm(s) | Temporal Model | Time per Window (s)* | Memory Use (GB)* | Key Strength | Best For |

|---|---|---|---|---|---|---|

| DynComm | Louvain, PM | Discrete, Sliding | 0.5 - 2 | 1.2 | Explicit dynamic quality function | Tracking precise community evolution steps. |

| DynamicTopas | Label Propagation, Infomap | Discrete, Events | 5 - 15 | 2.5 | Handles node/edge addition/removal | Social network or highly volatile interactions. |

| Teneto | Custom, Generalized | Continuous & Discrete | 1 - 5 (config.) | 1.8 | Rich temporal network metrics | Analyzing flow and centrality over time. |

| Python-Igraph | Louvain, Infomap | Static (per window) | 0.2 - 3 | 0.8 | Raw speed, graph operations | Building custom dynamic pipelines. |

*Approximate values for a 1000-node, 5000-edge graph on an 8-core, 32GB RAM system.

Table 2: Recommended Pipeline Configuration for Gene Expression Data

| Pipeline Stage | Recommended Tool/Library | Key Parameters | Output to Next Stage |

|---|---|---|---|

| 1. Correlation | NumPy, SciPy | Method: Spearman (robust). Use scipy.stats.spearmanr vectorized. |

3D Correlation tensor (Node x Node x Time). |

| 2. Thresholding | NumPy, Statsmodels | Significance: FDR correction (Benjamini-Hochberg) via statsmodels.stats.multitest.fdrcorrection. p < 0.05. |

3D Binary adjacency tensor. |

| 3. Community Detection | python-igraph (Louvain) |

resolution=1.0. Use igraph.Graph.adjacency per window. Run 100 iterations, select max modularity partition. |

List of community assignments per time window. |

| 4. Tracking & Analysis | Custom Python, Pandas | Match communities across windows via Jaccard similarity > 0.5. Use pandas for tracking lifecycle. |

Community lifespans, merge/split events. |

Experimental Protocols

Protocol 1: Dynamic Community Detection from Time-Series Gene Expression Data

- Objective: Identify evolving functional modules in transcriptomic data.

- Input: T x N matrix (T time points, N genes).

- Steps:

- Sliding Window: Define window size W and step S. For t in [1, T-W+1], extract submatrix

M_t = data[t:t+W, :]. - Correlation Matrix: For each

M_t, compute N x N Spearman rank correlation matrixR_t. - Statistical Thresholding: Apply Fisher's Z-transform to

R_t. For each correlationr, compute p-value. Apply FDR correction across all edges inR_t. Set non-significant edges to zero, creating adjacency matrixA_t. - Community Detection: For each

A_t, construct anigraph.Graphobject. Apply the Louvain algorithm (graph.community_multilevel()) with 100 random starts. Retain the partition with highest modularity. - Temporal Linking: For consecutive windows

tandt+1, compute Jaccard similarity between all community pairs. Link communities where similarity > 0.5 to create trajectories.

- Sliding Window: Define window size W and step S. For t in [1, T-W+1], extract submatrix

Protocol 2: Benchmarking Stability of Detected Communities

- Objective: Quantify the robustness of a dynamic community detection pipeline.

- Method:

- Perturbation: Add controlled Gaussian noise (e.g., 5% of data std dev) to the original T x N input matrix to create 10 perturbed datasets.

- Re-run Pipeline: Execute your full dynamic community detection pipeline on each perturbed dataset.

- Compute Variation of Information (VI): For each time window and each perturbed run, compute the VI distance between its communities and the communities from the original unperturbed run. Lower VI indicates higher stability.

- Aggregate Score: Report the mean and standard deviation of VI across all windows and all perturbation runs.

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Example/Product | Function in Dynamic Community Research |

|---|---|---|

| Network Analysis Library | python-igraph, NetworkX |

Core infrastructure for constructing graphs and running algorithms. |

| Community Detection Algo | Louvain, Infomap, Leiden |

The core "reagent" for identifying modules; each has different properties (speed, quality). |

| Statistical Library | SciPy.stats, statsmodels |

For robust correlation calculation and significance thresholding. |

| Temporal Network Library | Teneto, DynComm (Python/Java) |

Provides specialized functions and metrics for time-varying networks. |

| Data Manipulation | Pandas, NumPy |

Essential for handling time-series data, cleaning, and organizing results. |

| Visualization Engine | Matplotlib, Seaborn, Graphviz |

For plotting modularity timelines, community lifespans, and pathway diagrams. |

| Enrichment Analysis Tool | g:Profiler, clusterProfiler (R) |

Validates biological relevance of detected gene communities. |

Visualizations

Dynamic Community Detection Pipeline Workflow

Temporal Community Evolution with Merge and Split

Overcoming Practical Challenges: Noise, Data Sparsity, and Computational Hurdles

This support center is designed to assist researchers working on dynamic communities, specifically within the thesis context of Addressing limitations of correlation methods for dynamic communities research. Below are troubleshooting guides and FAQs to address common experimental challenges.

Frequently Asked Questions (FAQs)