Co-Occurrence Networks Demystified: From Core Algorithms to Biomedical Applications in Omics Research

This comprehensive guide explains the fundamental principles and algorithmic workings of co-occurrence networks, a pivotal tool in systems biology and drug discovery.


Abstract

This comprehensive guide explains the fundamental principles and algorithmic workings of co-occurrence networks, a pivotal tool in systems biology and drug discovery. Tailored for researchers and drug development professionals, it systematically explores the core concepts, construction methodologies, key algorithms (correlation-based, mutual-information, and probabilistic models), and critical validation techniques. The article addresses common pitfalls in parameter selection and data preprocessing, compares network inference tools, and demonstrates practical applications in identifying disease modules, drug targets, and biomarkers from high-throughput biological data.

What Are Co-Occurrence Networks? Core Concepts and Biological Rationale for Researchers

Within the broader thesis on "How do co-occurrence network algorithms work: basic principles research", this whitepaper addresses a critical conceptual and methodological progression. Co-occurrence, in computational biology, refers to the non-random joint presence or abundance of biological entities (such as genes, proteins, species, or metabolites) across a set of samples or conditions. While foundational algorithms often infer co-occurrence from correlation metrics (e.g., Pearson, Spearman), true biological interaction (e.g., physical binding, metabolic exchange, regulatory influence) represents a more specific, mechanistic subset. This guide delineates the pathway from detecting statistical associations to inferring causal, functional interactions, a process central to target discovery and systems biology in drug development.

From Correlation to Interaction: Core Algorithms & Principles

Co-occurrence network construction begins with a matrix (entities x samples). Basic algorithms apply similarity or correlation measures, followed by thresholding to create an undirected network where nodes are entities and edges represent significant co-occurrence.

Table 1: Core Co-Occurrence Metrics & Their Biological Interpretability

| Metric | Formula (Simplified) | Handles Non-linearity? | Robust to Compositional Data? | Prone to Spurious Correlation? | Typical Use Case |

|---|---|---|---|---|---|

| Pearson Correlation | \( r = \frac{\sum_i (x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_i (x_i - \bar{x})^2 \sum_i (y_i - \bar{y})^2}} \) | No | No | High (due to noise, outliers) | Normalized abundance data |

| Spearman Rank Correlation | \( \rho = 1 - \frac{6\sum_i d_i^2}{n(n^2-1)} \) (no ties; \( d_i \) is the rank difference for sample i) | Monotonic relationships only | Moderate | Moderate | Ordinal or non-normal data |

| SparCC | Iterative log-ratio variance estimation | Yes | Yes (designed for it) | Lower (for sparse data) | Microbiome (16S amplicon) data |

| Proportionality (ρp) | \( \rho_p = 1 - \frac{\mathrm{var}(\log(x/y))}{\mathrm{var}(\log x) + \mathrm{var}(\log y)} \) | Yes | Yes | Low | Metabolomics, RNA-seq |

| Mutual Information (MI) | \( I(X;Y) = \sum_{y \in Y} \sum_{x \in X} p(x,y) \log\frac{p(x,y)}{p(x)\,p(y)} \) | Yes | Yes | Medium (requires large n) | Any data, detects complex patterns |
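The basic construction described above (pairwise similarity on an entities x samples matrix, then thresholding) can be sketched as follows. This is a minimal illustration, not a reference implementation: the function name `correlation_network`, the 0.8 cutoff, and the toy data are assumptions made for the example, and a real analysis would choose the threshold by significance testing.

```python
import numpy as np
from scipy.stats import rankdata


def correlation_network(data, threshold=0.8, method="pearson"):
    """Return (correlation matrix, boolean adjacency) for an entities x samples matrix."""
    data = np.asarray(data, dtype=float)
    if method == "spearman":
        # Spearman correlation is Pearson correlation computed on per-entity ranks.
        data = rankdata(data, axis=1)
    elif method != "pearson":
        raise ValueError("method must be 'pearson' or 'spearman'")
    corr = np.corrcoef(data)                 # rows are entities
    adjacency = np.abs(corr) >= threshold    # undirected edge if |r| is large enough
    np.fill_diagonal(adjacency, False)       # no self-loops
    return corr, adjacency


# Toy data (made up): 4 entities measured across 10 samples.
x = np.arange(10, dtype=float)
toy = np.vstack([
    x,                                   # entity 0
    2 * x + 1,                           # entity 1: perfectly correlated with entity 0
    -x,                                  # entity 2: perfectly anti-correlated with entity 0
    np.where(x % 2 == 0, 1.0, -1.0),     # entity 3: alternating signal, weakly related
])

corr, adjacency = correlation_network(toy, threshold=0.8)
edges = [(i, j) for i in range(4) for j in range(i + 1, 4) if adjacency[i, j]]
print(edges)
```

Thresholding on the absolute value keeps strong negative associations as edges; whether that is appropriate depends on the biological question.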

To infer true biological interaction, correlation-based networks must be refined using context-aware algorithms.

Table 2: Advanced Algorithms for Inferring Biological Interaction

| Algorithm | Core Principle | Input Data | Output | Key Strength | Key Limitation |

|---|---|---|---|---|---|

| ARACNe (MI-based) | Information theory, Data Processing Inequality | Gene expression matrix | Transcriptional regulatory network | Effective at removing indirect edges | Requires many samples (>100) |

| SPIEC-EASI | Graphical model inference via sparse inverse covariance estimation | Microbial abundance matrix | Microbial interaction network | Models conditional independence (direct effects) | Sensitive to parameter selection |

| MENAP (for Metagenomics) | Random Matrix Theory-based thresholding | Species abundance matrix | Co-occurrence network | Robust null model for significance testing | Computationally intensive |

| PIDC | Partial Information Decomposition | High-dimensional omics data | Information-theoretic network | Quantifies unique, redundant, synergistic info | Interpretability of synergy scores |

| LIONESS | Sample-specific network inference | Omics data across samples | Single-sample networks | Enables analysis of network dynamics | Network comparisons are non-trivial |
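The Data Processing Inequality (DPI) step listed for ARACNe in Table 2 can be illustrated with a short sketch. It is not the ARACNe implementation: it assumes a precomputed symmetric mutual-information matrix, and the names `dpi_prune` and `tolerance` are chosen here for illustration. For every closed triplet, the weakest edge is treated as a likely indirect association and removed.

```python
from itertools import combinations

import numpy as np


def dpi_prune(mi, tolerance=0.0):
    """Zero out the weakest edge of every closed triplet in a symmetric MI matrix."""
    mi = np.asarray(mi, dtype=float)
    n = mi.shape[0]
    remove = np.zeros_like(mi, dtype=bool)
    # Decisions are made on the original matrix, then applied all at once.
    for i, j, k in combinations(range(n), 3):
        triplet = [(i, j), (i, k), (j, k)]
        weights = [mi[a, b] for a, b in triplet]
        if min(weights) <= 0:
            continue  # not a closed triangle, nothing to prune
        order = np.argsort(weights)
        weakest = triplet[order[0]]
        # Remove the weakest edge only if it is clearly below the next weakest.
        if weights[order[0]] < weights[order[1]] * (1.0 - tolerance):
            remove[weakest] = True
            remove[weakest[::-1]] = True
    pruned = mi.copy()
    pruned[remove] = 0.0
    return pruned


# Toy MI matrix (made up): A-B and B-C are strong, A-C is weaker and plausibly indirect.
mi = np.array([
    [0.0, 0.9, 0.5],
    [0.9, 0.0, 0.8],
    [0.5, 0.8, 0.0],
])
pruned = dpi_prune(mi)
print(pruned)
```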

Experimental Protocols for Validation

Correlative co-occurrence must be validated through targeted experiments to confirm biological interaction.

Protocol 3.1: Validating Protein-Protein Interaction (from co-expression)

Objective: Confirm a computationally predicted protein-protein interaction.

Materials: See Scientist's Toolkit.

Method:

- Cloning & Tagging: Clone ORFs of target genes (A & B) into mammalian two-hybrid (M2H) vectors (e.g., pBIND, which carries the GAL4 DNA-binding domain, and pACT, which carries the VP16 activation domain).

- Co-transfection: Co-transfect HEK293T cells in triplicate with: (i) pBIND-A + pACT-B (test), (ii) pBIND-A + pACT (empty), (iii) pBIND + pACT-B (empty), (iv) pBIND + pACT (negative control).

- Reporter Assay: After 48h, lyse cells and measure Firefly luciferase (reporter) and Renilla luciferase (transfection control) activity using a dual-luciferase assay kit.

- Data Analysis: Normalize Firefly to Renilla luminescence. A statistically significant increase (e.g., p<0.01, t-test) in the test condition vs. all controls indicates interaction.

- Secondary Validation: Perform co-immunoprecipitation (Co-IP) with differentially tagged (e.g., FLAG-tag A, HA-tag B) proteins in a relevant cell line, followed by western blot.
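The data analysis step of Protocol 3.1 (normalize Firefly to Renilla, then compare the test condition with each control) can be sketched as below. All luminescence readings are made-up placeholder numbers, and the use of Welch's t-test is an assumption; the protocol only specifies "t-test".

```python
import numpy as np
from scipy.stats import ttest_ind


def normalized_ratio(firefly, renilla):
    """Firefly signal divided by Renilla signal, per replicate well."""
    return np.asarray(firefly, dtype=float) / np.asarray(renilla, dtype=float)


# Hypothetical triplicate readings (arbitrary luminescence units).
test_ratio = normalized_ratio([5200, 4900, 5500], [1000, 980, 1050])
control_ratios = {
    "pBIND-A + pACT": normalized_ratio([510, 495, 530], [1000, 990, 1040]),
    "pBIND + pACT-B": normalized_ratio([480, 520, 505], [990, 1010, 1000]),
    "pBIND + pACT": normalized_ratio([450, 470, 440], [1000, 1020, 980]),
}

p_values = {}
for name, ratio in control_ratios.items():
    # Welch's t-test: does not assume equal variances between groups.
    _, p = ttest_ind(test_ratio, ratio, equal_var=False)
    p_values[name] = p
    fold = test_ratio.mean() / ratio.mean()
    print(f"{name}: fold change {fold:.1f}, p = {p:.4g}")

# The interaction is supported only if the test beats every control.
supported = all(p < 0.01 for p in p_values.values())
print("Interaction supported:", supported)
```

With only three replicates per condition the test has little power, so a large fold change matters as much as the p-value.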

Protocol 3.2: Validating Microbial Metabolic Interaction (from co-abundance)

Objective: Confirm a predicted cross-feeding interaction between two bacterial species.

Materials: Defined minimal media, anaerobic chamber, HPLC/MS.

Method:

- Mono-culture & Co-culture: Grow Species A (predicted donor) and Species B (predicted recipient) in defined minimal media with and without a key metabolite (M). Establish co-culture A+B in media containing only M.

- Growth Monitoring: Measure OD600 and sample supernatant every 2-4 hours over 24-48h.

- Metabolite Profiling: Analyze supernatants via targeted HPLC/MS to quantify the depletion of M and the appearance of predicted metabolic byproduct (P).

- Analysis: Co-culture should show sustained growth of B, concurrent depletion of M, and production of P, which are not observed in B's mono-culture without M. Genome-scale metabolic modeling (GEM) can support the mechanism.

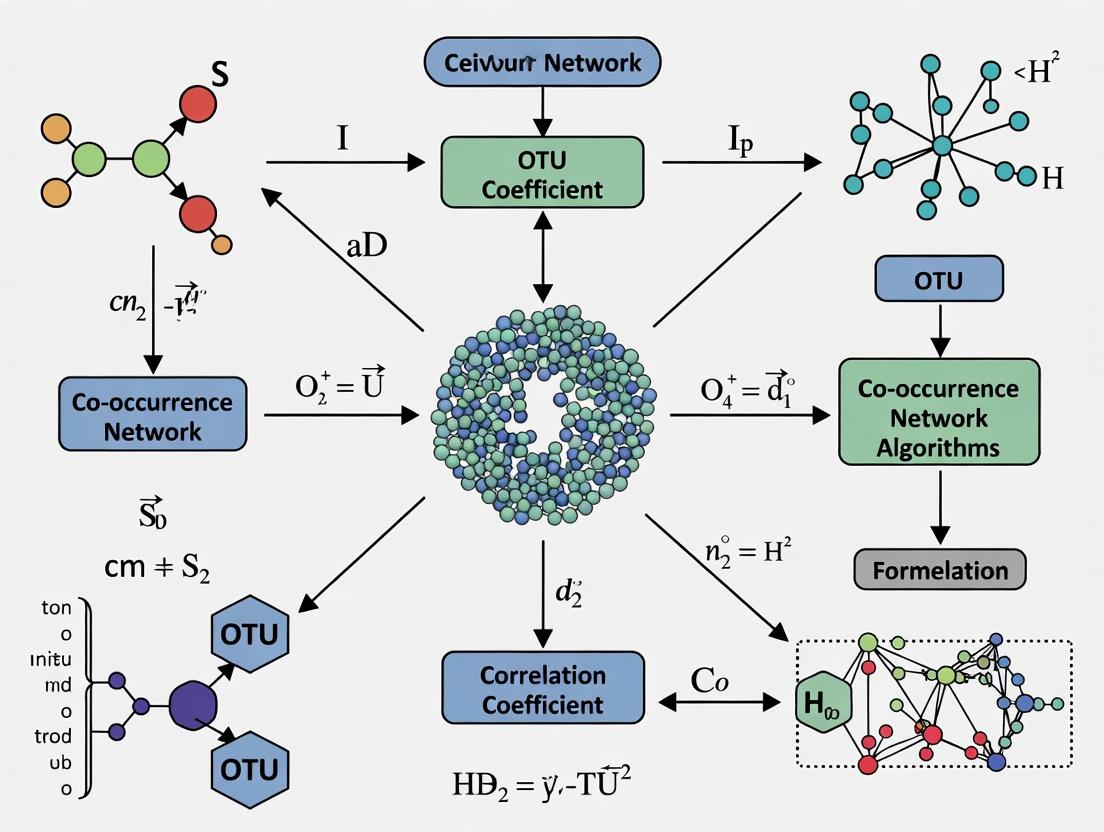

Visualizing the Inference Pathway

Figure 1: From Data to Biological Interaction

Figure 2: Transcriptional Regulation & PPI Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Interaction Validation Experiments

| Item (Supplier Examples) | Function in Validation | Key Application |

|---|---|---|

| Mammalian Two-Hybrid System (Promega CheckMate, Takara) | Detects protein-protein interactions in vivo via reconstituted transcription factor activity. | Validating predicted PPIs from co-expression networks. |

| Lenti/Retroviral ORF Expression Clones (Dharmacon, Sigma MISSION) | Enables stable, tunable expression of tagged genes in diverse cell lines. | Functional follow-up studies in relevant biological systems. |

| Co-IP Validated Antibodies (Cell Signaling Tech, Abcam) | Immunoprecipitation of endogenous or tagged proteins with high specificity. | Confirming physical interactions in native cellular contexts. |

| Defined Microbial Media Kits (ATCC, Hycult) | Provides controlled nutrient environment to test metabolic dependencies. | Validating putative cross-feeding interactions in microbiomes. |

| Dual-Luciferase Reporter Assay (Promega) | Quantifies transcriptional activity by normalizing reporter signal to control. | Measuring strength of regulatory interactions (e.g., TF -> gene). |

| Proximity Ligation Assay (PLA) Kits (Sigma Duolink) | Visualizes endogenous protein interactions in situ via amplified fluorescence. | Validating PPIs with spatial context in fixed cells/tissues. |

| CRISPRa/i Screening Libraries (Horizon Discovery) | Enables genome-wide perturbation of gene expression. | Causally testing network hub gene function and dependencies. |

Within the broader thesis on "How do co-occurrence network algorithms work: basic principles research", the network paradigm provides the fundamental abstraction for analyzing complex biological systems. Co-occurrence algorithms, whether applied to species abundance data, gene expression patterns, or protein interactions, transform raw observational or experimental data into a graph structure defined by nodes (biological entities), edges (statistical associations or inferred interactions), and an emergent topology (the architecture of the network). This guide details the technical implementation and biological interpretation of these components.

Core Components: Definitions & Biological Instances

| Component | Technical Definition | Biological Instances |

|---|---|---|

| Node | A discrete entity within the network. | Protein, gene, microbial taxon (OTU/ASV), metabolite, cell. |

| Edge | A link representing a relationship or interaction between two nodes. | Physical binding (e.g., PPI), regulatory influence, statistical co-occurrence/correlation, metabolic exchange. |

| Topology | The arrangement of nodes and edges, describing the network's global and local structural properties. | Architecture of a protein-protein interaction (PPI) network, structure of a microbial co-occurrence network, hierarchy of a gene regulatory network. |

Quantitative Topological Metrics & Biological Interpretation

Topological metrics quantify network architecture, offering insights into biological function and robustness.

| Metric | Formula/Description | Biological Interpretation |

|---|---|---|

| Degree (k) | Number of edges incident to a node. | Hub proteins (high k) are often essential; keystone species have high connectivity. |

| Clustering Coefficient (C) | C_i = 2e_i / (k_i(k_i - 1)), where e_i is the number of edges between neighbors of i. | Measures modularity; high C indicates functional modules (e.g., protein complexes). |

| Betweenness Centrality | Proportion of all shortest paths that pass through a node. | Identifies bottleneck nodes critical for information/signal flow (e.g., signaling gatekeepers). |

| Average Path Length (L) | Mean of shortest paths between all node pairs. | Indicator of network efficiency; biological networks often show small L (small-world property). |
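The four metrics in the table can be computed with NetworkX as in the sketch below. The five-node graph is a made-up toy example (a triangle A-B-C with a tail C-D-E), chosen so the values are easy to check by hand.

```python
import networkx as nx

# Toy undirected network: triangle A-B-C plus a chain C-D-E.
G = nx.Graph()
G.add_edges_from([("A", "B"), ("A", "C"), ("B", "C"), ("C", "D"), ("D", "E")])

degree = dict(G.degree())                        # k: number of incident edges
clustering = nx.clustering(G)                    # C_i = 2 e_i / (k_i (k_i - 1))
betweenness = nx.betweenness_centrality(G)       # normalized share of shortest paths
avg_path = nx.average_shortest_path_length(G)    # L: mean shortest-path length

for node in G.nodes:
    print(node, degree[node], round(clustering[node], 3), round(betweenness[node], 3))
print("Average path length:", avg_path)
```

Here node C has the highest degree and betweenness because every path between the triangle and the tail passes through it, while its clustering coefficient is lower than that of A and B because only one of its three neighbor pairs is connected.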

Experimental Protocols for Network Construction & Validation

Protocol: Constructing a Microbial Co-occurrence Network from 16S rRNA Amplicon Data

- Input: OTU/ASV abundance table (samples x taxa).

- Correlation Calculation: Compute pairwise associations with a compositionality-aware method (e.g., SparCC, SPIEC-EASI, or PRODESCO) to infer edges. These methods are designed to reduce the artifacts that arise when correlating relative abundances.

- SparCC Workflow Example:

  - Transform: Apply a log-ratio transformation to the abundance data (SparCC works with the variances of pairwise log-ratios).

  - Iterate: Repeatedly estimate basis variances and correlations.

  - Threshold: Apply a statistical (p-value) threshold (e.g., p < 0.01) to filter spurious edges.

- Network File Generation: Output a graph file (e.g., .graphml, .gml) containing nodes (taxa) and edges (significant correlations).
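The overall shape of this protocol (transform, correlate, threshold, export) can be sketched as follows. This is a simplified stand-in and not SparCC or SPIEC-EASI: it applies a CLR transform followed by Pearson correlation, and it thresholds on correlation magnitude rather than on a p-value. The function names, the pseudocount, the 0.6 cutoff, and the count table are all assumptions made for the example.

```python
import networkx as nx
import numpy as np


def clr(counts, pseudocount=1.0):
    """Centered log-ratio transform of a samples x taxa count table."""
    log_counts = np.log(np.asarray(counts, dtype=float) + pseudocount)
    return log_counts - log_counts.mean(axis=1, keepdims=True)


def build_network(counts, taxa, min_abs_corr=0.6):
    """Connect taxa whose CLR-transformed abundances are strongly correlated."""
    corr = np.corrcoef(clr(counts), rowvar=False)   # taxa x taxa
    graph = nx.Graph()
    graph.add_nodes_from(taxa)
    for i in range(len(taxa)):
        for j in range(i + 1, len(taxa)):
            if abs(corr[i, j]) >= min_abs_corr:
                graph.add_edge(taxa[i], taxa[j], weight=float(corr[i, j]))
    return graph


# Made-up ASV table: 6 samples (rows) x 4 taxa (columns).
taxa = ["ASV1", "ASV2", "ASV3", "ASV4"]
counts = np.array([
    [120, 100, 5, 40],
    [30, 25, 60, 45],
    [200, 180, 2, 38],
    [15, 10, 90, 50],
    [80, 70, 20, 42],
    [10, 12, 110, 47],
])

graph = build_network(counts, taxa)
graphml_text = "\n".join(nx.generate_graphml(graph))   # GraphML as a string
print(graph.edges(data=True))
```

To write the network to disk instead, `nx.write_graphml(graph, "network.graphml")` produces a file that Cytoscape can open.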

Protocol: Yeast Two-Hybrid (Y2H) Screening for PPI Networks

- Principle: A transcription factor's DNA-Binding Domain (DBD) and Activation Domain (AD) are fused to "Bait" and "Prey" proteins. Interaction reconstitutes the transcription factor, activating reporter genes.

- Workflow:

- Clone: Fuse bait gene to DBD in a vector; fuse prey library genes to AD in a different vector.

- Co-transform: Introduce both vectors into a suitable yeast reporter strain (e.g., AH109).

- Plate: Plate on selective media lacking specific nutrients (e.g., -Leu/-Trp/-His/-Ade) to select for co-transformants with interacting proteins.

- Validate: Positive colonies are assayed for secondary reporters (e.g., β-galactosidase). Retest to eliminate false positives.

Visualizing Key Concepts: Pathways and Workflows

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Reagent | Function in Network-Based Research |

|---|---|

| SparCC Algorithm | Statistical tool for inferring robust correlation networks from compositional (e.g., microbiome) data. |

| Cytoscape Software | Open-source platform for visualizing, analyzing, and annotating molecular interaction networks. |

| STRING Database | Resource of known and predicted Protein-Protein Interactions (PPIs), including co-expression data. |

| Yeast Two-Hybrid System | Classic experimental method for high-throughput detection of binary PPIs. |

| BioGRID Database | A curated repository of PPIs, genetic interactions, and post-translational modifications. |

| MCL Algorithm | Graph clustering algorithm (Markov Clustering) used to detect functional modules in networks. |

| 16S rRNA Sequencing | Standard method for profiling microbial communities to generate node data for co-occurrence networks. |

| Co-Immunoprecipitation (Co-IP) Kits | Experimental validation of PPIs using antibodies to pull down protein complexes. |

Within the broader thesis on the basic principles of co-occurrence network algorithms, this guide examines the foundational biological data layers that serve as their primary inputs. Network construction begins with raw, high-dimensional biological data, which must be accurately measured, normalized, and contextualized. This document provides a technical guide for generating and preparing the three key data types—gene expression, metabolite abundance, and microbial taxonomic abundance—for integration into co-occurrence network analysis, a critical tool for understanding complex system dynamics in host-microbiome interactions and drug discovery.

Foundational Data Types and Their Measurement

Gene Expression Profiling

Gene expression quantifies the transcriptional activity of thousands of genes, providing a snapshot of cellular function. Modern techniques move beyond bulk RNA-Seq to offer cellular resolution.

Table 1: Quantitative Comparison of Key Gene Expression Profiling Technologies

| Technology | Throughput (Cells/Reaction) | Genes Detected | Key Advantage | Typical Cost per Sample |

|---|---|---|---|---|

| Bulk RNA-Seq | Population-level | ~20,000 | Whole transcriptome, splicing variants | $500 - $1,500 |

| Single-Cell RNA-Seq (10x Genomics) | 1 - 10,000 | 1,000 - 10,000 | Cellular heterogeneity resolution | $2,000 - $5,000 |

| Spatial Transcriptomics (Visium) | Tissue section | ~20,000 | Histology-linked expression data | $3,000 - $6,000 |

| Nanostring nCounter | Population-level | Up to 800 | Direct digital counting, no amplification | $300 - $800 |

Experimental Protocol: Library Preparation for 3’ Single-Cell RNA-Seq (10x Genomics)

- Cell Suspension Preparation: Viable single-cell suspension is prepared with >90% viability. Cell concentration is adjusted to 700-1,200 cells/µL.

- Gel Bead-in-Emulsion (GEM) Generation: The cell suspension is combined with Master Mix and Gel Beads containing barcoded oligos in a Chromium chip. Each cell is encapsulated in a GEM with a unique barcode.

- Reverse Transcription: Within each GEM, poly-adenylated RNA is reverse-transcribed. The resulting cDNA incorporates the unique cell barcode and a Unique Molecular Identifier (UMI).

- cDNA Amplification & Library Construction: GEMs are broken, and barcoded cDNA is pooled and amplified via PCR. Enzymatic fragmentation and size selection are performed. Sample index PCR adds sample-specific indices.

- Quality Control & Sequencing: Libraries are quantified (qPCR) and sized (Bioanalyzer). Sequencing is performed on an Illumina platform (e.g., NovaSeq) to a minimum depth of 20,000 reads per cell.

Metabolite Abundance Profiling

Metabolomics captures the small-molecule end-products of cellular processes, offering a direct functional readout.

Table 2: Quantitative Comparison of Metabolomics Platforms

| Platform | Analytes Targeted | Detection Limit | Dynamic Range | Throughput (Samples/Day) |

|---|---|---|---|---|

| LC-MS/MS (Targeted) | 50 - 300 metabolites | Low amol - fmol | 4 - 6 orders of magnitude | 50 - 200 |

| GC-MS (Untargeted) | 200 - 500 compounds | pM - nM | 3 - 5 orders of magnitude | 30 - 100 |

| NMR Spectroscopy | 50 - 100 metabolites | µM - mM | 3 - 4 orders of magnitude | 20 - 50 |

| Flow Injection-MS (High-Throughput) | 100+ metabolites | nM | 2 - 3 orders of magnitude | 500+ |

Experimental Protocol: Untargeted Metabolomics via LC-HRMS

- Sample Extraction: 50 µL of serum or 10 mg of tissue is mixed with 200 µL of cold methanol:acetonitrile (1:1, v/v) containing internal standards. Vortex, sonicate (10 min, 4°C), and incubate (-20°C, 1 hr).

- Protein Precipitation: Centrifuge at 21,000 x g for 15 min at 4°C.

- Supernatant Collection & Evaporation: Transfer supernatant to a new tube. Dry under a gentle nitrogen stream.

- Reconstitution: Reconstitute dried extract in 100 µL of water:acetonitrile (1:1, v/v).

- LC-HRMS Analysis: Inject 5 µL onto a C18 column (e.g., 2.1 x 100 mm, 1.7 µm). Use gradient elution (mobile phase A: 0.1% formic acid in water; B: 0.1% formic acid in acetonitrile) over 15 min. Acquire data in both positive and negative electrospray ionization modes on a high-resolution mass spectrometer (e.g., Q-Exactive) with a mass range of m/z 70-1050.

Microbial Taxonomic Abundance

This data type characterizes the composition and relative abundance of microbial communities, typically via 16S rRNA gene amplicon sequencing or shotgun metagenomics.

Table 3: Quantitative Comparison of Microbial Profiling Methods

| Method | Target Region | Read Depth per Sample | Taxonomic Resolution | Functional Inference |

|---|---|---|---|---|

| 16S rRNA Amplicon (V4) | 16S rRNA gene (V4 region) | 50,000 - 100,000 reads | Genus-level (sometimes species) | Limited (via PICRUSt2) |

| Shotgun Metagenomics | All genomic DNA | 10 - 50 million reads | Species to strain-level | Direct (via gene content) |

| Metatranscriptomics | Total RNA | 20 - 100 million reads | Species-level + activity | Direct functional activity |

Experimental Protocol: 16S rRNA Gene Amplicon Sequencing (Illumina MiSeq)

- DNA Extraction: Extract genomic DNA from fecal or tissue samples using a validated kit (e.g., Qiagen DNeasy PowerSoil Pro). Include extraction controls.

- PCR Amplification: Amplify the V4 hypervariable region of the 16S rRNA gene using primers 515F (GTGYCAGCMGCCGCGGTAA) and 806R (GGACTACNVGGGTWTCTAAT). Use a high-fidelity polymerase. Reactions include sample-specific dual-index barcodes.

- Amplicon Purification & Quantification: Clean PCR products with magnetic beads (e.g., AMPure XP). Quantify using a fluorescent assay (e.g., PicoGreen).

- Library Pooling & Sequencing: Pool equimolar amounts of each amplicon. Denature and dilute the pool to 4-6 pM, then load onto an Illumina MiSeq with a 2x250 bp v2 reagent kit.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials for Integrated Multi-Omics Studies

| Item | Function & Application | Example Product |

|---|---|---|

| TRIzol / TRI Reagent | Simultaneous extraction of RNA, DNA, and proteins from a single sample, preserving co-variation. | Invitrogen TRIzol Reagent |

| ZymoBIOMICS Spike-in Controls | Defined microbial community added pre-extraction to monitor technical variability and batch effects. | Zymo Research D6300 |

| CIL/CIL-labeled Internal Standards | Stable isotope-labeled metabolite standards for absolute quantification and recovery monitoring in LC-MS. | Cambridge Isotope Laboratories |

| ERCC RNA Spike-In Mix | Synthetic RNA controls added prior to RNA-Seq library prep for normalization and sensitivity assessment. | Thermo Fisher Scientific 4456740 |

| Cell Hash Tag Antibodies | Antibody-oligo conjugates for multiplexing samples in single-cell RNA-Seq, reducing costs and batch effects. | BioLegend TotalSeq-A |

| BEADanking Barcodes | Barcoded beads for physically separating and tagging single cells, enabling high-throughput analysis. | DNAdigest BEADanking |

| KAPA HiFi HotStart ReadyMix | High-fidelity polymerase for accurate amplification of 16S rRNA genes or metagenomic libraries. | Roche 7958935001 |

| NextSeq 1000/2000 P2 Reagents | High-output flow cells for shallow sequencing of many samples (e.g., 16S) or deep sequencing (metagenomics). | Illumina 20040558 |

From Raw Data to Network Nodes: Critical Preprocessing Steps

Before network construction, each data type requires specific computational preprocessing to generate the reliable "nodes" for the network.

Figure 1: Multi-omic data preprocessing workflow for network node generation.

Integrating Data Layers for Network Construction

The prepared data matrices become the n x m feature tables (n samples, m features) that serve as direct input to co-occurrence network algorithms.

Table 5: Input Data Structure for Network Algorithms

| Data Type | Typical Feature (Node) Count (m) | Recommended Normalization for Networks | Common Association Measure |

|---|---|---|---|

| Gene Expression | 15,000 - 25,000 genes | Variance stabilizing transformation (VST) or log2(CPM+1) | Spearman / Pearson Correlation |

| Metabolite Abundance | 200 - 1,000 metabolites | Probabilistic Quotient Normalization (PQN), log10 transformation | Spearman Correlation |

| Microbial Taxa (ASVs/OTUs) | 500 - 5,000 taxa | Centered Log-Ratio (CLR) transformation | Sparse Correlations for Compositional Data (SparCC), Proportionality (ρp) |
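Two of the normalizations named in Table 5 are simple enough to sketch directly. The code below is illustrative only: the function names are invented here, the inputs are samples x features matrices as described above, and the PQN variant uses the per-feature median across samples as the reference profile, which is one common choice among several.

```python
import numpy as np


def log2_cpm(counts):
    """log2(CPM + 1) for a samples x genes count matrix."""
    counts = np.asarray(counts, dtype=float)
    library_size = counts.sum(axis=1, keepdims=True)
    return np.log2(counts / library_size * 1e6 + 1.0)


def pqn(intensities):
    """Probabilistic Quotient Normalization for a samples x metabolites matrix."""
    x = np.asarray(intensities, dtype=float)
    reference = np.median(x, axis=0)                         # reference profile
    quotients = x / reference                                # per-feature quotients
    dilution = np.median(quotients, axis=1, keepdims=True)   # one factor per sample
    return x / dilution


# Made-up metabolite table: sample 2 is a two-fold concentrated copy of sample 1.
metabolites = np.array([
    [10.0, 20.0, 30.0],
    [20.0, 40.0, 60.0],
    [10.0, 20.0, 30.0],
])
normalized = pqn(metabolites)
print(normalized)

# Made-up gene counts: one sample, two genes.
expression = log2_cpm([[1, 3]])
print(expression)
```

PQN removes the two-fold dilution difference, so the three normalized rows become identical; a log transformation would typically follow before computing correlations.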

Figure 2: Association network construction from processed multi-omic data.

Within the broader thesis research on "How do co-occurrence network algorithms work: basic principles", a fundamental biological question arises: why does the statistical co-occurrence of biological entities (such as genes, proteins, metabolites, or microbial species) often predict a direct functional relationship? This whitepaper provides a biological and technical justification, asserting that co-occurrence patterns are not mere statistical artifacts but often reflect underlying evolutionary, ecological, and mechanistic constraints. For researchers and drug development professionals, understanding this justification is critical for interpreting network-based discoveries and prioritizing functional validation experiments.

Biological Mechanisms Underlying Co-Occurrence Patterns

Co-occurrence implies a non-random association between entities across multiple observations (e.g., samples, conditions, genomes). The following biological principles explain these associations.

2.1. Evolutionary Conservation of Gene Clusters

Functionally related genes, particularly those involved in a common pathway (e.g., biosynthesis, stress response), are often physically linked in prokaryotic genomes (operons) and sometimes conserved in eukaryotes (gene neighborhoods). This selective pressure for co-localization leads to their co-occurrence across genomes or metagenomic samples.

2.2. Protein-Protein Interaction (PPI) Complexes

Proteins that form stable complexes must be present simultaneously for the complex to function. Their expression levels across different tissues or experimental conditions are therefore correlated, leading to co-occurrence in transcriptomic or proteomic datasets.

2.3. Metabolic Pathway Dependency

Enzymes catalyzing sequential steps in a metabolic pathway are co-regulated to ensure metabolic flux. Their genes co-occur across genomes (as they are often acquired together) and their expression profiles co-vary across conditions.

2.4. Ecological Interactions and Cross-Feeding

In microbial communities, the presence of one species often depends on metabolites produced by another (syntrophy). This creates obligate or facultative co-occurrence patterns observable in 16S rRNA amplicon or metagenomic surveys.

2.5. Coordinated Cellular Responses

Genes responding to the same transcriptional regulator or environmental cue will show correlated expression patterns, resulting in co-occurrence in gene expression matrices.

Experimental Methodologies for Validating Co-Occurrence Based Predictions

The following protocols detail key experiments to transition from in silico co-occurrence predictions to validated functional relationships.

3.1. Protocol for Validating Predicted Protein-Protein Interactions (Yeast Two-Hybrid)

- Objective: Test physical interaction between two proteins predicted to co-occur.

- Workflow:

- Clone gene for Protein A into pGBKT7 (DNA-Binding Domain vector).

- Clone gene for Protein B into pGADT7 (Activation Domain vector).

- Co-transform both plasmids into competent S. cerevisiae strain AH109.

- Plate transformants on synthetic dropout (SD) media lacking Trp, Leu, His, and Ade (quadruple dropout).

- Incubate at 30°C for 3-5 days.

- Positive Control: Known interacting pair.

- Negative Control: Empty vectors or non-interacting proteins.

- Readout: Colony growth on quadruple dropout media indicates that the interaction has activated the reporter genes (HIS3, ADE2).

3.2. Protocol for Testing Genetic Interaction (Synthetic Lethality Screen)

- Objective: Determine if two co-occurring genes share a redundant essential function.

- Workflow (in S. cerevisiae):

- Generate deletion mutants for Gene A (∆A) and Gene B (∆B) in a haploid background.

- Mate ∆A and ∆B strains to create a diploid heterozygous for both deletions.

- Sporulate the diploid and dissect tetrads to obtain haploid progeny.

- Genotype progeny on selective media to identify double mutant ∆A∆B.

- Compare growth of single and double mutants on rich and stress media.

- Interpretation: Severe growth defect or inviability of the double mutant, but not singles, indicates synthetic sickness/lethality and functional relatedness.
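One common way to put a number on the comparison in the interpretation step is an interaction score under a multiplicative null model, in which the expected double-mutant fitness is the product of the two single-mutant fitnesses. The sketch below assumes that model, which the protocol itself does not specify. The fitness values and the -0.2 cutoff are made up for illustration.

```python
def interaction_score(fitness_a, fitness_b, fitness_ab):
    """Observed minus expected double-mutant fitness (multiplicative null model)."""
    expected = fitness_a * fitness_b
    return fitness_ab - expected


# Hypothetical growth relative to wild type (wild type = 1.0).
score = interaction_score(fitness_a=0.90, fitness_b=0.85, fitness_ab=0.10)

# Illustrative cutoff: strongly negative scores suggest synthetic sickness/lethality.
is_negative_interaction = score < -0.2
print(round(score, 3), is_negative_interaction)
```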

3.3. Protocol for Validating Metabolic Cross-Feeding (Microbial Co-Culture)

- Objective: Test if co-occurring microbial species exhibit interdependent growth.

- Workflow:

- Isolate Species A and B from environmental samples or culture collections.

- Culture each axenically in minimal media, identifying essential nutrients for each.

- Establish co-culture in minimal media lacking a nutrient required by Species B but which can be supplied by Species A's metabolism.

- Monitor co-culture and monoculture growth via optical density (OD600) and species-specific qPCR over 72 hours.

- Analyze supernatant of Species A monoculture via LC-MS for predicted metabolite.

- Validation: Sustained growth of Species B in co-culture only, coupled with metabolite detection, confirms cross-feeding.

Data Synthesis and Quantitative Analysis

Table 1: Validation Rates of Co-Occurrence Predictions from Selected Studies

| Study (Year) | Biological Context | Co-Occurrence Metric | Predicted Pairs | Experimentally Tested | Validated | Validation Rate |

|---|---|---|---|---|---|---|

| Hu et al. (2021) | Human Gut Microbiome | Sparse Correlations for Compositional Data (SparCC) | 150 | 30 (Cross-feeding assays) | 24 | 80% |

| Wang et al. (2022) | Cancer Cell Lines (Transcriptomics) | Weighted Gene Co-expression Network Analysis (WGCNA) | 50 (module hubs) | 15 (CRISPR co-essentiality) | 12 | 80% |

| Bacterial Genomic Island Prediction (2023) | Prokaryotic Genomes | Co-localization Frequency | 200 (gene pairs) | 40 (Functional complementation) | 32 | 80% |

Table 2: Research Reagent Solutions Toolkit

| Reagent / Material | Function in Validation Experiments |

|---|---|

| pGBKT7 & pGADT7 Vectors | Yeast Two-Hybrid system plasmids for fusion protein expression. |

| S. cerevisiae Strain AH109 | Reporter yeast strain with HIS3, ADE2 under GAL4-responsive promoters. |

| Synthetic Dropout Media Mixes | Selective media for yeast transformation and interaction screening. |

| CRISPR/Cas9 Knockout Libraries | For high-throughput genetic interaction screens in mammalian cells. |

| Defined Minimal Media (for microbes) | Enables precise control of nutrients to test cross-feeding hypotheses. |

| Species-Specific 16S rRNA qPCR Primers | Quantifies abundance of individual species in a co-culture. |

| LC-MS (Liquid Chromatography-Mass Spectrometry) | Identifies and quantifies metabolites in culture supernatants. |

Visualizing Relationships and Workflows

Diagram 1: Biological Justification for Co-Occurrence Networks

Diagram 2: Yeast Two-Hybrid Validation Workflow

This whitepaper provides an in-depth technical guide to the essential terminology of adjacency matrices, weights, and sparsity, framed within the core research thesis of understanding co-occurrence network algorithms. These mathematical constructs form the foundational layer upon which network algorithms operate, enabling the analysis of complex relational data prevalent in biomedical research, drug discovery, and systems biology.

Within the research thesis "How do co-occurrence network algorithms work: basic principles," the representation of network structure is paramount. Co-occurrence networks model relationships (e.g., gene co-expression, protein-protein interactions, disease-symptom associations) as graphs. The adjacency matrix serves as the primary computational representation of these graphs, with the concepts of weight and sparsity critically influencing algorithm selection, performance, and interpretability.

Core Terminology and Mathematical Foundations

The Adjacency Matrix

An adjacency matrix A is a square n × n matrix (where n is the number of nodes in the graph) used to represent a finite graph. Element Aᵢⱼ indicates the connection status between node i and node j.

For simple, unweighted graphs: Aᵢⱼ = 1 if an edge exists from node i to node j. Aᵢⱼ = 0 if no edge exists.

Key Property: For undirected graphs, the adjacency matrix is symmetric (A = Aᵀ). For directed graphs (digraphs), it is not necessarily symmetric.
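A small example makes the definition and the symmetry property concrete. The node names and edges below are made up; the sketch builds the same edge list once as an undirected graph and once as a directed graph.

```python
import numpy as np

nodes = ["geneA", "geneB", "geneC", "geneD"]
edges = [("geneA", "geneB"), ("geneB", "geneC")]
index = {name: i for i, name in enumerate(nodes)}

# Undirected graph: each edge sets both A[i, j] and A[j, i].
undirected = np.zeros((len(nodes), len(nodes)), dtype=int)
for u, v in edges:
    undirected[index[u], index[v]] = 1
    undirected[index[v], index[u]] = 1

# Directed graph: each edge sets only A[i, j] (from u to v).
directed = np.zeros_like(undirected)
for u, v in edges:
    directed[index[u], index[v]] = 1

print(undirected)
print("Undirected is symmetric:", np.array_equal(undirected, undirected.T))
print("Directed is symmetric:", np.array_equal(directed, directed.T))
```

Note that geneD has no edges, so its row and column contain only zeros; isolated nodes still occupy a row and column in the matrix.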

Weights

A weighted adjacency matrix extends the binary representation to capture the strength, capacity, or intensity of a relationship. In co-occurrence networks, this weight often quantifies the strength of association (e.g., correlation coefficient, mutual information), its statistical significance (e.g., a transformed p-value), or the frequency of co-occurrence.

- Aᵢⱼ = wᵢⱼ, where wᵢⱼ is a real number representing the weight of the edge from i to j.

- A weight of zero typically implies no edge.

Sparsity

Sparsity is a measure of the proportion of zero-valued elements in the adjacency matrix. Most real-world co-occurrence networks (e.g., gene regulatory networks, patient-diagnosis networks) are sparse, meaning the number of possible connections (n²) vastly exceeds the number of actual connections (m).

Sparsity Ratio (ρ): ρ = 1 - (m / n²)

A network is considered sparse if m << n², leading to ρ ≈ 1.
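The sparsity ratio can be computed directly from a stored matrix, as in the sketch below. One assumption is worth stating: the function counts nonzero matrix entries as m, so an undirected edge stored symmetrically contributes two entries. The function name and the toy network are invented for the example.

```python
import numpy as np
from scipy import sparse


def sparsity_ratio(adjacency):
    """Return 1 - (nonzero entries / total entries) for a dense or sparse matrix."""
    n_rows, n_cols = adjacency.shape
    if sparse.issparse(adjacency):
        nonzero = adjacency.nnz
    else:
        nonzero = np.count_nonzero(adjacency)
    return 1.0 - nonzero / (n_rows * n_cols)


# Toy network: 1,000 nodes and 3 undirected edges, stored symmetrically.
n = 1000
rows = np.array([0, 1, 2, 1, 2, 3])
cols = np.array([1, 2, 3, 0, 1, 2])
data = np.ones(len(rows))
adjacency = sparse.csr_matrix((data, (rows, cols)), shape=(n, n))

rho = sparsity_ratio(adjacency)
print(rho)
```

Storing this matrix in a sparse format keeps only the six nonzero entries instead of one million cells, which is what makes large co-occurrence networks tractable in memory.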

Quantitative Data in Co-occurrence Network Research

Table 1: Sparsity and Matrix Density in Common Biomedical Networks

| Network Type | Typical Node Count (n) | Typical Edge Count (m) | Matrix Density (m/n²) | Sparsity (1 - Density) | Typical Data Source |

|---|---|---|---|---|---|

| Protein-Protein Interaction (Human) | ~20,000 | ~400,000 | 0.001 | 0.999 | BioPlex, STRING DB |

| Gene Co-expression (Tissue-specific) | ~15,000 | ~100,000 - 1,000,000 | 0.00044 - 0.0044 | 0.9956 - 0.99956 | RNA-seq Datasets (GTEx) |

| Patient-Disease Comorbidity | ~10,000 diseases | ~50,000 associations | 0.0005 | 0.9995 | EHR Databases (2023) |

| Drug-Target Interaction | ~4,000 drugs, ~2,000 targets | ~15,000 interactions | 0.001875 (bipartite: m / (drugs × targets)) | 0.998125 | ChEMBL, DrugBank |

Table 2: Impact of Matrix Representation on Algorithmic Complexity

| Algorithm | Dense Matrix Complexity | Sparse Matrix Complexity | Key Implication for Co-occurrence Networks |

|---|---|---|---|

| Matrix-Vector Multiplication (per iteration) | O(n²) | O(m) | Enables scalable analysis of large, sparse networks. |

| Eigenvalue Calculation (Power Method) | O(kn²) for k iterations | O(km) for k iterations | Feasibility of spectral analysis on networks with >10⁵ nodes. |

| Full Matrix Inversion | O(n³) | O(n^1.5) to O(n²) approx. | Sparse solvers allow approximate community detection. |

| Breadth-First Search (BFS) | O(n²) | O(n + m) | Efficient traversal crucial for pathway finding. |

Experimental Protocols for Network Construction

Protocol: Constructing a Weighted Gene Co-occurrence Network from RNA-seq Data

Objective: To generate a gene co-expression network from transcriptomic data for downstream analysis (module detection, hub gene identification).

Materials: RNA-seq count matrix (genes × samples), high-performance computing environment.

Procedure:

- Preprocessing: Normalize raw count data (e.g., using DESeq2's median of ratios or edgeR's TMM).

- Similarity Calculation: Compute pairwise similarity between all genes (n) across all samples (p). Common metrics include:

- Pearson Correlation: rᵢⱼ = cov(i, j) / (σᵢ σⱼ). Produces weights from -1 to 1.

- Spearman's Rank Correlation: Non-parametric, robust to outliers.

- Mutual Information: Captures non-linear dependencies.

- Thresholding: Apply a statistical or empirical threshold to the similarity matrix to create an adjacency matrix.

- Hard Thresholding: Set Aᵢⱼ = sᵢⱼ if |sᵢⱼ| > τ, else 0. τ is chosen based on scale-free topology criteria or permutation testing.

- Soft Thresholding (Power Law): Aᵢⱼ = |sᵢⱼ|^β. β is chosen to promote scale-free topology (β > 1 amplifies strong correlations, dampens weak ones).

- Sparsity Assessment: Calculate the sparsity ratio ρ of the final adjacency matrix. Validate that it aligns with biological expectations (very high sparsity, e.g., >0.99).

- Formatting for Analysis: Export the symmetric, weighted, sparse adjacency matrix in a format suitable for network analysis packages (e.g., CSV, .mtx, or graph-specific formats like .graphml).
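The similarity, hard-thresholding, and sparsity-assessment steps above can be sketched in a few lines. This is an illustration on simulated data, not a full pipeline: the function name `coexpression_adjacency`, the threshold τ = 0.8, and the planted gene pair are all assumptions made for the example, and normalization is assumed to have been done already.

```python
import numpy as np
from scipy import sparse

def coexpression_adjacency(expr, tau=0.8):
    """expr: genes x samples array of normalized expression values.
    Returns a sparse weighted adjacency: A_ij = s_ij if |s_ij| > tau, else 0."""
    r = np.corrcoef(expr)                     # Pearson correlation between genes (rows)
    np.fill_diagonal(r, 0.0)                  # remove self-loops
    adj = np.where(np.abs(r) > tau, r, 0.0)   # hard threshold on |s_ij|
    return sparse.csr_matrix(adj)

# Simulated data (assumption): 50 genes, 30 samples, one planted co-expressed pair.
rng = np.random.default_rng(0)
n_genes, n_samples = 50, 30
expr = rng.normal(size=(n_genes, n_samples))
expr[1] = expr[0] + 0.05 * rng.normal(size=n_samples)

A = coexpression_adjacency(expr, tau=0.8)
rho = 1.0 - A.count_nonzero() / n_genes ** 2   # sparsity ratio of the result
```

For export, `scipy.io.mmwrite` can write a sparse matrix to Matrix Market (.mtx) format.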

Protocol: Benchmarking Sparsity-Aware Algorithms

Objective: To compare the runtime and memory efficiency of dense vs. sparse matrix implementations for a common network algorithm (e.g., PageRank).

Materials: Sparse adjacency matrix from the preceding network construction protocol, software libraries (SciPy for sparse, NumPy for dense).

Procedure:

- Generate Test Matrices: Use the adjacency matrix from the preceding network construction protocol. Create a dense version by explicitly storing all n² elements.

- Implement Algorithm: Apply the same iterative PageRank algorithm to both matrix representations. The update rule is R = α · A · R + (1 - α) · E, where A is the normalized adjacency matrix, R is the rank vector, α is the damping factor, and E is the teleportation vector (uniform unless personalized).

- Metrics: Measure for k=100 iterations:

- Memory Footprint: Size in RAM of the matrix object.

- Average Iteration Time: Time to compute A * R.

- Convergence Check: Ensure both implementations yield the same final rank vector (within numerical tolerance).

- Scale Analysis: Repeat for subnetworks of increasing size (e.g., top 1000, 5000, 10000 genes) to plot time/memory vs. n.
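A minimal sketch of this benchmark is shown below. It assumes a randomly generated symmetric matrix in place of a real co-expression network, a uniform teleportation vector E, and α = 0.85; the function name `pagerank` and the self-loops added to avoid empty columns are choices made for the example only.

```python
import time
import numpy as np
from scipy import sparse

def pagerank(A_norm, alpha=0.85, n_iter=100):
    """Power iteration R = alpha * A_norm @ R + (1 - alpha) * E, uniform E."""
    n = A_norm.shape[0]
    E = np.full(n, 1.0 / n)
    R = E.copy()
    for _ in range(n_iter):
        R = alpha * (A_norm @ R) + (1 - alpha) * E
    return R

# Random stand-in network (assumption): 2000 nodes, roughly 1% density.
n = 2000
A = sparse.random(n, n, density=0.005, random_state=1, format="csr")
A = A + A.T                                    # symmetrize
A = A + sparse.identity(n, format="csr")       # self-loops so no column sums to zero
col_sums = np.asarray(A.sum(axis=0)).ravel()
A_sparse = (A @ sparse.diags(1.0 / col_sums)).tocsr()  # column-normalized
A_dense = A_sparse.toarray()                   # explicit n^2 storage

t0 = time.perf_counter()
R_sparse = pagerank(A_sparse)
t_sparse = time.perf_counter() - t0

t0 = time.perf_counter()
R_dense = pagerank(A_dense)
t_dense = time.perf_counter() - t0

# Memory footprint of each representation in bytes.
sparse_bytes = A_sparse.data.nbytes + A_sparse.indices.nbytes + A_sparse.indptr.nbytes
dense_bytes = A_dense.nbytes
```

Timings depend on hardware and BLAS configuration, so `t_sparse` and `t_dense` should be averaged over repeated runs before drawing conclusions.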

Visualizing Concepts and Workflows

Title: Co-occurrence Network Construction and Analysis Workflow

Title: Graph and Its Weighted Adjacency Matrix Representation

Title: Dense vs. Sparse (CSR) Matrix Storage Comparison

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Co-occurrence Network Analysis

| Item / Solution | Function in Network Analysis | Example / Note |

|---|---|---|

| High-Throughput Omics Data | Primary input for constructing biological co-occurrence networks. Provides the raw n × p data matrix. | RNA-seq (bulk/single-cell), Mass Spectrometry (proteomics), 16S rRNA sequencing (microbiome). |

| Statistical Computing Environment | Platform for data preprocessing, similarity calculation, and thresholding. | R (WGCNA package), Python (SciPy, NumPy, pandas). |

| Sparse Matrix Library | Enables memory-efficient storage and high-performance computation on adjacency matrices. | SciPy Sparse (Python), Matrix / igraph (R), SuiteSparse (C/C++). |

| Network Analysis & Visualization Suite | Implements graph algorithms (community detection, centrality) and provides visualization. | Cytoscape, Gephi, NetworkX (Python), igraph (R/Python/C). |

| High-Performance Computing (HPC) Cluster | Essential for calculating similarity matrices (O(n²p) operations) for large n (>10,000). | Cloud-based (AWS, GCP) or institutional HPC resources with parallel processing (MPI, Spark). |

| Permutation Testing Framework | Generates null distributions for edge weights to establish statistical significance thresholds. | Custom scripts reshuffling data labels to assess false discovery rates (FDR). |

| Curated Interaction Database | Provides gold-standard networks for validation and prior knowledge integration. | STRING (protein interactions), KEGG (pathways), GWAS Catalog (disease-trait). |

Building Robust Networks: A Step-by-Step Guide to Key Algorithms and Biomedical Use Cases

Omics data, including transcriptomics, proteomics, and metabolomics, inherently contain systematic technical variations introduced during sample collection, preparation, sequencing, and mass spectrometry. These non-biological variances obscure true biological signals, directly impeding the accurate construction of co-occurrence networks. Network algorithms, such as WGCNA (Weighted Gene Co-expression Network Analysis) or SPIEC-EASI for microbial data, infer connections based on statistical dependencies (e.g., correlation, partial correlation). Without rigorous preprocessing, networks reflect technical artifacts rather than true biological interactions, leading to spurious module identification and erroneous inference of hub genes or molecules.

Quantitative summaries of major noise sources are cataloged below.

Table 1: Common Technical Variances in Major Omics Platforms

| Omics Type | Primary Platform | Key Variance Sources | Typical Magnitude of Effect |

|---|---|---|---|

| Transcriptomics | RNA-Seq | Library size (sequencing depth), GC content, batch effects, rRNA depletion efficiency. | Library size can vary by 10-100 million reads between samples. |

| Proteomics | LC-MS/MS | Sample loading variance, ionization efficiency, column performance drift, batch effects. | Signal intensity can drift >20% across a single LC-MS run. |

| Metabolomics | NMR/LC-MS | Spectral calibration, pH effects (NMR), matrix effects (MS), batch-to-batch variation. | Peak area variation can exceed 30% for technical replicates. |

| Microbiomics | 16S rRNA Seq | Variable sequencing depth, PCR amplification bias, primer efficiency, DNA extraction yield. | Total read count per sample can range from 10k to 100k. |

Core Preprocessing & Normalization Methodologies

Read Count Normalization for Transcriptomics (RNA-Seq)

Protocol: DESeq2's Median of Ratios Method

- Input: Raw gene count matrix (genes x samples).

- Step 1 - Geometric Mean Calculation: For each gene, calculate the geometric mean of counts across all samples.

- Step 2 - Ratio Calculation: For each sample, divide each gene's count by the gene's geometric mean, creating a ratio.

- Step 3 - Size Factor Derivation: For each sample, the size factor is the median of all gene ratios for that sample, excluding genes whose geometric mean is zero (i.e., genes with a zero count in at least one sample).

- Step 4 - Normalization: Divide the raw count of each gene in a sample by the sample's calculated size factor. These normalized counts are used for downstream co-occurrence analysis.
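The four steps can be sketched with NumPy as follows. This is an illustrative re-implementation of the median-of-ratios idea on a toy matrix, not the DESeq2 code itself; the function name `median_of_ratios_size_factors` is hypothetical.

```python
import numpy as np

def median_of_ratios_size_factors(counts):
    """counts: genes x samples array of raw counts. Returns one size factor per sample."""
    counts = np.asarray(counts, dtype=float)
    # Genes with a zero count in any sample have geometric mean zero; exclude them.
    keep = np.all(counts > 0, axis=1)
    log_counts = np.log(counts[keep])
    log_geo_mean = log_counts.mean(axis=1, keepdims=True)  # Step 1, on the log scale
    log_ratios = log_counts - log_geo_mean                 # Step 2
    return np.exp(np.median(log_ratios, axis=0))           # Step 3

# Toy data (assumption): sample 2 is sequenced 2x and sample 3 4x as deeply as sample 1.
counts = np.array([[10, 20, 40],
                   [100, 200, 400],
                   [5, 10, 20],
                   [0, 3, 7]])
size_factors = median_of_ratios_size_factors(counts)
normalized = counts / size_factors                         # Step 4
```

In this toy case the size factors recover the depth differences exactly, so the first three genes have identical normalized counts across samples.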

Variance Stabilization for Proteomics/Metabolomics

Protocol: Probabilistic Quotient Normalization (PQN)

- Input: Peak intensity matrix (features x samples).

- Step 1 - Reference Selection: Create a reference profile (e.g., median spectrum) by taking the median intensity of each feature across all control samples or all samples.

- Step 2 - Quotient Calculation: For each sample, calculate the quotient between the intensity of each feature and the corresponding reference intensity.

- Step 3 - Median Quotient: For each sample, determine the median of all calculated quotients.

- Step 4 - Normalization: Divide all feature intensities in the sample by its median quotient. This corrects for dilution/concentration effects.
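A compact sketch of PQN under simplifying assumptions: all intensities are strictly positive, the reference profile is the per-feature median over all samples, and no prior integral normalization is applied. The function name `pqn_normalize` is illustrative.

```python
import numpy as np

def pqn_normalize(intensities):
    """intensities: features x samples array of positive peak intensities."""
    X = np.asarray(intensities, dtype=float)
    reference = np.median(X, axis=1, keepdims=True)  # Step 1: median profile
    quotients = X / reference                        # Step 2
    dilution = np.median(quotients, axis=0)          # Step 3: one factor per sample
    return X / dilution, dilution                    # Step 4

# Toy data (assumption): the same profile measured at 0.5x, 1x, and 2x dilution.
base = np.array([100.0, 50.0, 10.0, 200.0, 80.0])
X = np.column_stack([base * 0.5, base * 1.0, base * 2.0])
X_norm, dilution = pqn_normalize(X)
```

Because the median quotient is used rather than the total signal, a few strongly changing features have little influence on the estimated dilution factor.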

Compositional Data Correction for Microbiomics

Protocol: Centered Log-Ratio (CLR) Transformation

- Input: Absolute or relative abundance matrix (taxa x samples).

- Step 1 - Imputation: Replace any zero values with a sensible small value (e.g., using a multiplicative replacement strategy) to allow log transformation.

- Step 2 - Geometric Mean Calculation: For each sample, calculate the geometric mean of all taxon abundances.

- Step 3 - Transformation: For each taxon in a sample, apply the transformation:

CLR(x) = log( x / geometric_mean(sample) ). This transforms data to a Euclidean space suitable for correlation-based network inference.
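The transformation can be sketched as below. For brevity this example replaces zeros by adding a uniform pseudocount of 0.5 to every entry, which is a simplification and not the multiplicative replacement strategy mentioned in Step 1; the function name `clr_transform` is illustrative.

```python
import numpy as np

def clr_transform(abundance, pseudocount=0.5):
    """abundance: taxa x samples array. Returns CLR-transformed values.
    A uniform pseudocount stands in for a proper zero-replacement method."""
    X = np.asarray(abundance, dtype=float) + pseudocount
    log_X = np.log(X)
    # log(x / geometric_mean(sample)) = log(x) - mean(log(x)) within each sample
    return log_X - log_X.mean(axis=0, keepdims=True)

# Toy count table (assumption): 3 taxa x 3 samples, including one zero.
counts = np.array([[10, 0, 5],
                   [20, 30, 5],
                   [70, 70, 90]])
clr = clr_transform(counts)
```

Each sample's CLR values sum to zero, which is why the covariance matrix of CLR data is singular and why regularized estimators are typically used downstream.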

Title: Data Preprocessing Core Workflow

Impact on Co-occurrence Network Inference

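Correlation-based network algorithms implicitly assume that the input is free of strong mean-variance dependence and compositional constraints. Appropriate normalization brings the data closer to these assumptions, which in turn changes the inferred network.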

Table 2: Effect of Normalization on Network Metrics

| Network Metric | Without Normalization (Artifactual) | With Proper Normalization (Biological) |

|---|---|---|

| Network Density | Inflated by batch-specific correlations. | Reflects true biological coordination. |

| Hub Identification | Hubs are often technical (e.g., highly abundant, variable genes). | Hubs are functionally relevant key regulators. |

| Module Composition | Modules cluster by technical batch. | Modules align with biological pathways/cell types. |

| Stability | Poor reproducibility across studies/platforms. | High reproducibility and biological validation rate. |

Title: Network Topology: Raw vs Normalized Data

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents & Tools for Preprocessing Experiments

| Item | Function in Preprocessing Context | Example Product/Platform |

|---|---|---|

| External RNA Controls (ERCC) | Spike-in synthetic RNAs used to calibrate and normalize for technical variation in RNA-Seq, enabling absolute quantification. | ERCC Spike-In Mix (Thermo Fisher) |

| Quantitative Proteomics Standards | Labeled peptide/protein standards (e.g., SIL, TMT) added to samples to correct for LC-MS/MS run variability and enable cross-sample comparison. | TMTpro 16plex (Thermo Fisher) |

| Internal Standards for Metabolomics | Stable isotope-labeled metabolites spiked into samples pre-extraction to correct for matrix effects and ionization efficiency variance in MS. | MSK-CUSTOM-1 (Cambridge Isotope Labs) |

| Mock Microbial Communities | Defined genomic DNA mixtures of known microbial strains used to benchmark and correct for biases in 16S rRNA sequencing and bioinformatic pipelines. | ZymoBIOMICS Microbial Community Standard |

| UMI Adapters (RNA-Seq) | Unique Molecular Identifiers (UMIs) incorporated during library prep to tag original molecules, enabling accurate PCR duplicate removal and precise digital counting. | NEBNext UMI Adapters (NEB) |

Within the broader thesis on How do co-occurrence network algorithms work: basic principles research, this guide provides a foundational and technical examination of three core methodologies for inferring ecological interaction networks from microbial abundance data. These algorithms form the computational backbone for translating high-dimensional, compositional sequencing data (e.g., 16S rRNA) into interpretable networks of putative microbial associations, a critical step in drug development for microbiome-related diseases.

Core Algorithmic Principles

Pearson and Spearman Correlation

These are linear (Pearson) and monotonic (Spearman rank) measures of dependence between two random variables. In microbial co-occurrence analysis, they estimate pairwise associations between the observed abundances of operational taxonomic units (OTUs) or taxa.

- Pearson Correlation Coefficient (r): Measures the strength and direction of a linear relationship.

- Formula: \( r_{xy} = \frac{\sum_{i=1}^{n}(x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n}(x_i - \bar{x})^2}\sqrt{\sum_{i=1}^{n}(y_i - \bar{y})^2}} \)

- Spearman's Rank Correlation Coefficient (ρ): Measures the strength and direction of a monotonic relationship based on ranked data.

- Formula: \( \rho_{xy} = 1 - \frac{6\sum d_i^2}{n(n^2 - 1)} \), where \( d_i \) is the difference between the ranks of \( x_i \) and \( y_i \).

Limitations in Microbiome Context: Both measures are sensitive to compositionality (the data sums to a constant, e.g., relative abundance) and spurious correlations arising from the presence of highly abundant taxa.

Mutual Information (MI)

A non-parametric measure from information theory that quantifies the mutual dependence between two variables, capturing both linear and non-linear associations. It is based on the concept of entropy.

- Formula: \( I(X;Y) = \sum_{y \in Y} \sum_{x \in X} p(x,y) \log\left(\frac{p(x,y)}{p(x)\,p(y)}\right) \)

- Application: Higher MI suggests stronger statistical dependence. For continuous microbiome data, MI is typically estimated after discretization (binning) or via kernel density estimation. While more general than correlation, it remains susceptible to compositionality effects and requires careful parameter selection for estimation.
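To make the discretization route concrete, here is a minimal histogram-based MI estimate. It assumes equal-width binning with 8 bins per variable, simulated data, and natural-log units; the function name `mutual_information` is illustrative, and plug-in estimates like this one are biased upward for small samples.

```python
import numpy as np

def mutual_information(x, y, bins=8):
    """Plug-in MI estimate (in nats) from a 2-D histogram of x and y."""
    joint, _, _ = np.histogram2d(x, y, bins=bins)
    p_xy = joint / joint.sum()
    p_x = p_xy.sum(axis=1, keepdims=True)   # marginal of x, shape (bins, 1)
    p_y = p_xy.sum(axis=0, keepdims=True)   # marginal of y, shape (1, bins)
    nz = p_xy > 0                           # skip empty cells (0 * log 0 = 0)
    return float(np.sum(p_xy[nz] * np.log(p_xy[nz] / (p_x @ p_y)[nz])))

# Simulated example (assumption): a symmetric non-linear dependence y = x^2 + noise.
rng = np.random.default_rng(0)
x = rng.uniform(-1, 1, 2000)
y_dep = x ** 2 + 0.05 * rng.normal(size=2000)
y_ind = rng.uniform(-1, 1, 2000)

mi_dep = mutual_information(x, y_dep)    # clearly above zero
mi_ind = mutual_information(x, y_ind)    # near zero, up to estimation bias
r_dep = np.corrcoef(x, y_dep)[0, 1]      # Pearson r is close to zero here
```

The dependent pair has near-zero Pearson correlation but substantial MI, which is the kind of relationship correlation-based networks miss.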

SPIEC-EASI (Sparse Inverse Covariance Estimation for Ecological Association Inference)

A two-step framework designed specifically to address the compositionality and high dimensionality of microbiome data.

- Compositionality Adjustment: Applies a centered log-ratio (CLR) transformation to the abundance data. This maps the compositional data from a simplex to a real-space, reducing the "closure" artifact.

- Sparse Graph Inference: Uses a Sparse Inverse Covariance Selection method (like Graphical Lasso or MB) on the CLR-transformed data. The resulting inverse covariance matrix (precision matrix) encodes conditional dependencies. A zero entry indicates independence between two taxa conditional on all other taxa in the network, making it a more robust estimator of direct ecological interactions.
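The link between precision-matrix entries and conditional dependence can be illustrated with a small simulation. This sketch is not SPIEC-EASI: it uses plain Gaussian data, no CLR transform, and an unregularized matrix inverse, and is meant only to show why a near-zero precision entry corresponds to an indirect association. The chain X → Y → Z is an assumption of the example.

```python
import numpy as np

# Simulated chain (assumption): X drives Y, Y drives Z, no direct X-Z link.
rng = np.random.default_rng(0)
n = 5000
x = rng.normal(size=n)
y = x + 0.5 * rng.normal(size=n)
z = y + 0.5 * rng.normal(size=n)
data = np.column_stack([x, y, z])

# Marginal (unconditional) correlations.
marginal_corr = np.corrcoef(data, rowvar=False)

# Partial correlations from the precision matrix P:
# rho_ij|rest = -P_ij / sqrt(P_ii * P_jj)
cov = np.cov(data, rowvar=False)
precision = np.linalg.inv(cov)
d = np.sqrt(np.diag(precision))
partial_corr = -precision / np.outer(d, d)
np.fill_diagonal(partial_corr, 1.0)
```

Here X and Z are strongly correlated marginally, yet their partial correlation given Y is close to zero, so a conditional-dependence network would omit the X-Z edge that a correlation network would draw.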

Quantitative Algorithm Comparison

Table 1: Core Characteristics of Network Inference Algorithms

| Feature | Pearson/Spearman Correlation | Mutual Information (MI) | SPIEC-EASI |

|---|---|---|---|

| Relationship Type | Linear (Pearson) or Monotonic (Spearman) | Linear & Non-Linear | Conditional (Linear after CLR) |

| Handles Compositionality | No | No | Yes (via CLR transform) |

| Graph Type | Unconditional Association Network | Unconditional Association Network | Conditional Dependency Network |

| Interpretation | Gross correlation, potentially spurious | Total statistical dependence | Direct interaction, less prone to spurious edges |

| Computational Complexity | Low | Moderate to High (estimation) | High (optimization) |

| Key Hyperparameter | Significance threshold (p-value) | Binning method / kernel bandwidth | Sparsity/regularization parameter (λ) |

Table 2: Typical Performance Metrics from Benchmarking Studies*

| Algorithm | Precision | Recall | F1-Score | Notes |

|---|---|---|---|---|

| Pearson Correlation | 0.15 - 0.30 | 0.60 - 0.80 | 0.24 - 0.43 | High false positive rate due to compositionality. |

| Spearman Correlation | 0.18 - 0.35 | 0.55 - 0.75 | 0.27 - 0.47 | Slightly more robust to outliers than Pearson. |

| Mutual Information | 0.20 - 0.40 | 0.50 - 0.70 | 0.29 - 0.50 | Captures non-linearities but still compositionally confounded. |

| SPIEC-EASI (MB/Glasso) | 0.40 - 0.70 | 0.30 - 0.60 | 0.36 - 0.63 | Higher precision, lower recall; better identifies direct edges. |

*Ranges synthesized from simulation benchmarks using known ground-truth networks (e.g., SPIEC-EASI publication, GLV simulations). Performance varies drastically with data sparsity, sample size, and noise.

Experimental Protocol for Network Inference & Validation

A standard workflow for applying and validating these algorithms in a research setting.

Protocol: Microbial Co-occurrence Network Inference and Analysis

Objective: To reconstruct and compare microbial association networks from 16S rRNA gene amplicon sequencing data using Pearson, Spearman, MI, and SPIEC-EASI algorithms.

Input: OTU/ASV abundance table (counts), sample metadata.

Step 1: Data Preprocessing

- Filtering: Remove OTUs with prevalence < 10% across samples.

- Normalization: For correlation and MI, convert counts to relative abundances (or use rarefied counts). For SPIEC-EASI, use raw counts.

- Transformations: Apply log or sqrt transforms for correlation/MI if needed. SPIEC-EASI performs its own CLR transform internally.

Step 2: Network Inference

- Pearson/Spearman: Compute pairwise correlation matrix. Apply a p-value cutoff (e.g., 0.05 after FDR correction) and a minimum correlation strength threshold (e.g., |r| > 0.6).

- MI: Estimate MI matrix using the `minet` package in R or `sklearn.feature_selection.mutual_info_regression` in Python with appropriate discretization. Threshold using permutation tests or MST-based algorithms.

- SPIEC-EASI: Use the `SpiecEasi` package in R. Run with both `method='mb'` (Meinshausen-Bühlmann) and `method='glasso'` (Graphical Lasso). Select the optimal sparsity parameter (λ) via the Stability Approach to Regularization Selection (StARS) for reproducibility.

Step 3: Network Analysis & Validation

- Topology Metrics: Calculate degree distribution, clustering coefficient, betweenness centrality, etc., for each inferred network.

- Cross-Method Comparison: Compare edge overlap between methods using Jaccard index.

- Stability Assessment: Use subsampling or bootstrapping to assess edge confidence.

- Biological Validation: Correlate network modules (e.g., via Fast-Greedy clustering) with host phenotypes or environmental metadata. Use external knowledge (e.g., known syntrophic relationships) for ground-truth validation where possible.
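The cross-method comparison step reduces to a set operation on edge lists. A minimal sketch, assuming two undirected networks given as binary adjacency matrices over the same node ordering; the helper names `edge_set` and `jaccard` and the toy matrices are illustrative.

```python
import numpy as np

def edge_set(adj):
    """Undirected edges of a symmetric adjacency matrix as (i, j) pairs with i < j."""
    i, j = np.nonzero(np.triu(adj, k=1))
    return set(zip(i.tolist(), j.tolist()))

def jaccard(edges_a, edges_b):
    """|A ∩ B| / |A ∪ B|; defined as 1.0 when both edge sets are empty."""
    union = edges_a | edges_b
    return len(edges_a & edges_b) / len(union) if union else 1.0

# Toy networks (assumption): e.g., one from Spearman and one from SPIEC-EASI.
A = np.array([[0, 1, 1, 0],
              [1, 0, 0, 1],
              [1, 0, 0, 0],
              [0, 1, 0, 0]])
B = np.array([[0, 1, 0, 0],
              [1, 0, 1, 1],
              [0, 1, 0, 0],
              [0, 1, 0, 0]])
score = jaccard(edge_set(A), edge_set(B))  # 2 shared edges out of 4 distinct edges
```

The same function can be applied to networks inferred from bootstrap resamples to quantify edge stability.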

Title: Microbial Network Inference Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Co-occurrence Network Research

| Item/Category | Function & Relevance in Research |

|---|---|

| QIIME 2 / DADA2 | Standardized pipelines for processing raw 16S sequencing reads into an Amplicon Sequence Variant (ASV) or OTU table—the primary input for all inference algorithms. |

| R SpiecEasi Package | The dedicated implementation of the SPIEC-EASI framework, including data transformation, sparse inverse covariance selection, and stability-based model selection. |

| R minet / Python sklearn | Packages providing robust implementations for Mutual Information estimation from high-dimensional biological data. |

| R igraph / Python NetworkX | Fundamental libraries for network analysis, enabling calculation of topological metrics, visualization, and module detection. |

| Synthetic Microbial Community Data (e.g., from gLV simulations) | Crucial benchmark reagents with known interaction ground truth for validating and comparing algorithm performance in silico. |

| Mock Microbial Community Standards (e.g., ZymoBIOMICS) | Physical control communities with defined composition, used to validate wet-lab protocols and assess technical noise prior to inference. |

| Stability Approach to Regularization Selection (StARS) | A methodological "reagent" for objectively selecting the sparsity parameter (λ) in SPIEC-EASI, ensuring a stable, reproducible network. |

Title: Algorithm Relationship & Limitations Hierarchy

This whitepaper examines thresholding strategies, a critical component in the construction and analysis of co-occurrence networks. Within the broader thesis on "How do co-occurrence network algorithms work: basic principles research", thresholding serves as the decisive step that transforms a matrix of pairwise association scores (e.g., correlations, mutual information) into a discrete network topology. The choice between hard and soft thresholding, and its interplay with statistical significance testing, directly influences the network's architecture, its identified hubs, and, consequently, the biological or pharmacological inferences drawn—such as identifying key disease genes or drug targets from high-throughput omics data.

Core Principles: Hard vs. Soft Thresholding

Hard Thresholding applies a strict cutoff. All edge weights (e.g., correlation coefficients |r|) above a chosen threshold τ are retained, often set to 1, and all others are set to 0, resulting in an unweighted network.

Edge Weight (A_{ij}) = 1 if |r_{ij}| ≥ τ, else 0

Soft Thresholding (e.g., via a power function) transforms all edge weights continuously, suppressing noise while preserving gradient information, resulting in a weighted network.

Edge Weight (A_{ij}) = |r_{ij}|^β (where β is the power, often ≥ 1)

The primary distinction is the treatment of weak associations: hard thresholding discards them entirely, while soft thresholding shrinks them toward zero as a power of |r|. For example, with β = 6, |r| = 0.3 becomes about 0.0007 while |r| = 0.9 becomes about 0.53.

Table 1: Comparative Analysis of Hard and Soft Thresholding

| Feature | Hard Thresholding | Soft Thresholding |

|---|---|---|

| Network Type | Unweighted | Weighted |

| Topology Sensitivity | High; small τ changes alter connectivity drastically. | Low; topology changes more gradually with β. |

| Noise Suppression | Abrupt; weak signals are eliminated. | Gradual; weak signals are down-weighted. |

| Heterogeneity | Can create "rich-get-richer" scale-free properties. | Preserves a more continuous hierarchy of connections. |

| Common Use Case | Simplifying visualization and analysis of strong links. | Weighted Gene Co-expression Network Analysis (WGCNA). |

| Statistical Testing | Directly applied to the threshold value (τ). | Applied to the original correlations before transformation. |

Integrating Statistical Significance Testing

Threshold selection must be principled to avoid arbitrary networks. Significance testing provides a framework.

- For Hard Thresholding: The threshold τ can be set based on the statistical significance (p-value) of the association measure. For Pearson correlation, the null distribution can be derived. A protocol is outlined below.

- For Soft Thresholding: Significance testing typically validates the existence of a non-zero association before applying the power transformation. The soft threshold parameter β is often chosen via a scale-free topology criterion, aiming for a network where the node degree distribution follows a power law (approximately P(k) ~ k^-γ).

Table 2: Common Threshold Selection Criteria & Metrics

| Criterion | Method | Target Metric | Typical Value/Range |

|---|---|---|---|

| P-value Cutoff | Significance testing of correlation. | Adjusted p-value < 0.05 or 0.01. | τ corresponding to p < 0.01. |

| False Discovery Rate (FDR) | Benjamini-Hochberg procedure on p-values. | FDR (q-value) < 0.05. | τ defined by max correlation where q < 0.05. |

| Scale-Free Fit (R²) | Regress log(P(k)) on log(k). | Signed R² of linear model. | Choose β where R² > 0.80-0.90. |

| Mean Connectivity | Ensure network is not too sparse/dense. | Average number of connections per node. | Often chosen empirically (e.g., 5-20). |

Experimental Protocols

Protocol 4.1: Establishing a Significance-Based Hard Threshold for a Correlation Network

Objective: To construct an unweighted co-occurrence network where all edges represent statistically significant correlations after multiple testing correction.

Input: An n x m data matrix (n samples, m variables). Gene expression data are often stored as genes (m) x samples (n); transpose such a matrix first so that rows are samples.

Procedure:

- Compute Association Matrix: Calculate all pairwise Pearson correlations `r_ij` for `m` variables, resulting in an `m x m` correlation matrix `R`.

- Calculate p-values: For each `r_ij`, compute the corresponding p-value under the null hypothesis `r=0`. For Pearson, use the t-statistic: `t = r * sqrt((n-2)/(1-r^2))` with `df = n-2`.

- Apply Multiple Testing Correction: Apply the Benjamini-Hochberg procedure to all `m*(m-1)/2` p-values to control the False Discovery Rate (FDR) at, e.g., 5%. This yields a set of q-values.

- Determine Threshold τ: Identify the minimum absolute correlation value among all pairs with `q-value < 0.05`. This value becomes your significance-derived threshold `τ_sig`.

- Alternative: Set a fixed p-value threshold (e.g., 0.001) if FDR is too stringent, but note increased false positives.

- Apply Hard Threshold: Generate the adjacency matrix `A`: `A_{ij} = 1 if |r_ij| ≥ τ_sig and q_{ij} < 0.05, else 0`.

- Network Analysis: Use `A` for downstream topological analysis (degree, clustering, module detection).
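The procedure can be sketched end to end with NumPy and SciPy. This is an illustration on simulated data under stated assumptions: input rows are samples, the Benjamini-Hochberg step is written out by hand in the hypothetical helper `bh_qvalues`, and correlations are clipped slightly below 1 to keep the t-statistic finite.

```python
import numpy as np
from scipy import stats

def bh_qvalues(pvals):
    """Benjamini-Hochberg adjusted p-values (q-values) for a 1-D array."""
    p = np.asarray(pvals, dtype=float)
    order = np.argsort(p)
    ranked = p[order] * len(p) / np.arange(1, len(p) + 1)
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]  # enforce monotonicity
    q = np.empty_like(p)
    q[order] = np.minimum(ranked, 1.0)
    return q

def significance_network(data, fdr=0.05):
    """data: samples (n) x variables (m). Returns (adjacency, tau_sig)."""
    n, m = data.shape
    r = np.corrcoef(data, rowvar=False)
    iu = np.triu_indices(m, k=1)                        # the m*(m-1)/2 unique pairs
    r_pairs = np.clip(r[iu], -0.999999, 0.999999)       # avoid division by zero
    t = r_pairs * np.sqrt((n - 2) / (1 - r_pairs ** 2))
    p = 2 * stats.t.sf(np.abs(t), df=n - 2)             # two-sided p-values
    sig = bh_qvalues(p) < fdr
    A = np.zeros((m, m), dtype=int)
    A[iu[0][sig], iu[1][sig]] = 1
    A = A + A.T                                         # symmetric, unweighted
    tau_sig = float(np.abs(r_pairs[sig]).min()) if sig.any() else None
    return A, tau_sig

# Simulated data (assumption): 40 samples, 20 variables, one planted correlated pair.
rng = np.random.default_rng(0)
data = rng.normal(size=(40, 20))
data[:, 1] = data[:, 0] + 0.3 * rng.normal(size=40)
A, tau_sig = significance_network(data)
```

Because the p-value is monotone in |r| for fixed n, keeping pairs with q < 0.05 and keeping pairs with |r| ≥ τ_sig select the same edges.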

Protocol 4.2: Selecting a Soft Threshold Power (β) via Scale-Free Topology

Objective: To choose an appropriate soft thresholding power β for constructing a weighted co-occurrence network that exhibits approximate scale-free topology, enhancing biological interpretability.

Input: Correlation matrix R from m variables.

Procedure:

- Define a Candidate Power Range: Typically, `β ∈ {1, 2, 3, ... 20}` for unsigned networks (using `|r|`).

- For each candidate β:

a. Compute Adjacency: `A_{ij} = |r_{ij}|^β`.

b. Calculate Network Connectivity: `k_i = Σ_{j≠i} A_{ij}` for each node i.

c. Estimate the Probability Distribution: `p(k)` = (number of nodes with connectivity k) / m.

d. Fit a Linear Model: Perform linear regression of `log10(p(k))` against `log10(k)` for `k > 0`.

e. Record Model Fit: Calculate the squared correlation coefficient `R²` between `log10(p(k))` and the fitted values.

- Plot & Choose β: Plot `R²` vs. `β` and mean connectivity vs. `β`. Choose the smallest `β` where the `R²` curve flattens above a desired level (e.g., 0.85). This balances scale-free topology and network connectivity.

- Construct Final Network: Use the chosen `β` to compute the final weighted adjacency matrix.
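The β scan can be sketched as follows. This is a simplified illustration, not the WGCNA implementation: weighted connectivity is continuous, so the example bins k into 10 equal-width bins (an assumption) before estimating p(k), it reports the unsigned R², and it runs on uncorrelated simulated data where no β is expected to reach the 0.85 target. The function name `scale_free_fit` is hypothetical.

```python
import numpy as np

def scale_free_fit(r, beta, n_bins=10):
    """Return (R^2 of the log-log fit, mean connectivity) for A = |r|^beta."""
    A = np.abs(r) ** beta
    np.fill_diagonal(A, 0.0)                   # k_i sums over j != i
    k = A.sum(axis=1)
    counts, edges = np.histogram(k, bins=n_bins)
    centers = 0.5 * (edges[:-1] + edges[1:])
    keep = (counts > 0) & (centers > 0)        # log10 needs positive values
    if keep.sum() < 3:
        return 0.0, float(k.mean())
    log_k = np.log10(centers[keep])
    log_p = np.log10(counts[keep] / counts.sum())
    slope, intercept = np.polyfit(log_k, log_p, 1)
    ss_res = np.sum((log_p - (slope * log_k + intercept)) ** 2)
    ss_tot = np.sum((log_p - log_p.mean()) ** 2)
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else 0.0
    return float(r2), float(k.mean())

# Simulated data (assumption): 200 uncorrelated variables, 25 samples.
rng = np.random.default_rng(0)
expr = rng.normal(size=(200, 25))
r = np.corrcoef(expr)

results = {beta: scale_free_fit(r, beta) for beta in range(1, 21)}
# Smallest beta reaching the target fit, or None if no candidate qualifies.
chosen = next((b for b in sorted(results) if results[b][0] >= 0.85), None)
```

Mean connectivity falls monotonically as β increases, which is why the protocol inspects it alongside R² rather than maximizing R² alone.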

Visualizations

Hard vs Soft Thresholding Process

Significance Testing Workflow for Networks

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Packages for Thresholding Network Analysis

| Tool/Reagent | Function | Typical Use |

|---|---|---|

| WGCNA R Package | Provides comprehensive functions for soft thresholding (scale-free topology fit), network construction, and module detection. | The standard for weighted gene co-expression network analysis. |

| igraph (R/Python) | A network analysis library for computing topological properties (degree, betweenness) and visualizing networks post-thresholding. | Analyzing and visualizing the structure of the thresholded network. |

| NumPy/SciPy (Python) | Libraries for efficient matrix operations, correlation calculations, and statistical tests (e.g., scipy.stats.pearsonr, scipy.stats.false_discovery_control). | Pre-processing data, computing association matrices, and basic significance testing. |

| Statsmodels (Python) | Provides advanced statistical modeling, including precise p-value calculations and multiple testing corrections. | Implementing rigorous FDR control for hard thresholding. |

| Cytoscape | Open-source platform for visualizing complex networks. Integrates with statistical results for node/edge coloring by significance. | Visualizing and sharing the final thresholded network with biological annotations. |

| High-Performance Computing (HPC) Cluster | Essential for computing all-pairs correlations and permutations for large datasets (e.g., >20,000 genes). | Handling the O(m²) computational complexity of large-scale network construction. |

This technical guide exists within the research thesis: How do co-occurrence network algorithms work: basic principles research. A foundational step in this inquiry is the transformation of raw, quantitative association data (a matrix) into an interpretable network structure. This document details the methodology for that transformation, its visualization, and the initial extraction of biological meaning, with a focus on applications in biomedicine and drug discovery.

The Algorithmic Pipeline: From Matrix to Graph

The core process involves converting a symmetric N x N similarity or correlation matrix (e.g., gene co-expression, protein-protein interaction confidence scores, drug-target affinity scores) into a network G(V, E), where V is a set of nodes (e.g., genes) and E is a set of edges representing significant associations.

Key Experimental Protocol: Network Construction

- Input Matrix Generation: Start with a data matrix M. For gene co-occurrence, this is often a co-expression matrix computed from transcriptomic data (RNA-seq, microarray) using Pearson or Spearman correlation across samples.

- Thresholding: Apply a statistically justified threshold to filter insignificant edges.

- Hard Thresholding: Retain edges where the absolute correlation |r| > T. T is chosen based on statistical significance (p-value < 0.05 after multiple-testing correction) or an arbitrary but consistent cutoff (e.g., |r| > 0.7).

- Soft Thresholding (Weighted Network): Transform correlations using a power function: weight = |r|^β. This emphasizes stronger correlations while preserving continuous information.

- Network Representation: The thresholded matrix is the adjacency matrix A for the network. Each non-zero entry A[i][j] becomes an edge between node i and node j.

Quantitative Data Summary

Table 1: Common Thresholding Strategies & Outcomes

| Strategy | Parameter | Network Type | Typical Use Case | Pros/Cons |

|---|---|---|---|---|

| Hard Threshold | Significance (p<0.01) | Unweighted, Sparse | Topological analysis, module detection | Simple; sensitive to threshold choice. |

| Hard Threshold | Absolute Value (\|r\| > 0.8) | Unweighted, Dense | High-confidence interactions | Clear interpretation; may lose weak signals. |

| Soft Threshold | Power β (e.g., β=6) | Weighted, Continuous | Gene co-expression (WGCNA) | Preserves gradient; less arbitrary. |

Workflow: Constructing a Network from a Data Matrix

Initial Interpretation: Centrality and Community

Once the network is built, initial interpretation focuses on identifying key players and functional subgroups.

Experimental Protocol: Network Topology Analysis

- Centrality Calculation: Compute centrality metrics for all nodes. Common metrics include:

- Degree: Number of connections. High-degree nodes are "hubs."

- Betweenness Centrality: Number of shortest paths passing through a node. Identifies bridges.

- Closeness Centrality: Inverse of the average shortest path length to all other nodes. Identifies influential spreaders.

- Community Detection (Clustering): Use algorithms to find densely connected subgroups (modules).

- Method: Apply the Louvain or Leiden algorithm for modularity optimization.

- Visual Validation: Overlay centrality (node size/color) and community (node color/grouping) results on the network layout.
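Degree and closeness can be computed directly from an adjacency matrix. The sketch below assumes a small connected, unweighted toy graph (two triangles joined through a bridge node) and uses the usual convention that closeness is the reciprocal of the mean shortest-path distance; betweenness and community detection are left to dedicated libraries.

```python
import numpy as np
from scipy.sparse.csgraph import shortest_path

# Toy graph (assumption): triangle 0-1-2, triangle 4-5-6, node 3 bridges 2 and 4.
edges = [(0, 1), (0, 2), (1, 2), (2, 3), (3, 4), (4, 5), (4, 6), (5, 6)]
n = 7
A = np.zeros((n, n))
for i, j in edges:
    A[i, j] = A[j, i] = 1.0

# Degree: number of connections per node.
degree = A.sum(axis=1)

# Closeness: (n - 1) / sum of shortest-path distances to all other nodes.
dist = shortest_path(A, method="D", directed=False, unweighted=True)
closeness = (n - 1) / dist.sum(axis=1)

most_central = int(np.argmax(closeness))
```

In this graph the bridge node has only two connections yet the highest closeness, showing that degree and closeness can rank nodes differently.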

Quantitative Data Summary

Table 2: Key Network Metrics for Biological Interpretation

| Metric | Mathematical Definition | Biological Analogy | High Value Indicates |

|---|---|---|---|

| Degree (k) | k_i = Σ_j A_{ij} | Promiscuity | Essential protein, master regulator. |

| Betweenness | BC(v) = Σ_{s≠v≠t} (σ_{st}(v)/σ_{st}) | Broker, bridge | Pathway connector, critical signal mediator. |

| Modularity (Q) | Q ∝ Σ_{ij} [A_{ij} - (k_i k_j)/(2m)] δ(c_i, c_j) | Functional compartment | Quality of community division. |
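As a worked example of the modularity definition, the sketch below evaluates Q for a given partition using the standard normalization Q = (1/2m) Σ_{ij} [A_{ij} - k_i k_j/(2m)] δ(c_i, c_j). The toy graph and the function name `modularity` are assumptions of the example; the code scores a partition and does not search for the optimal one.

```python
import numpy as np

def modularity(A, communities):
    """Newman modularity of a partition of an undirected graph."""
    A = np.asarray(A, dtype=float)
    k = A.sum(axis=1)                         # node degrees k_i
    two_m = A.sum()                           # 2m: each edge is counted twice
    c = np.asarray(communities)
    same = c[:, None] == c[None, :]           # delta(c_i, c_j)
    return float(((A - np.outer(k, k) / two_m) * same).sum() / two_m)

# Toy graph (assumption): two triangles (0-1-2 and 3-4-5) joined by the edge 2-3.
edges = [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]
A = np.zeros((6, 6))
for i, j in edges:
    A[i, j] = A[j, i] = 1.0

Q_split = modularity(A, [0, 0, 0, 1, 1, 1])   # one community per triangle: 5/14
Q_single = modularity(A, [0, 0, 0, 0, 0, 0])  # everything in one community: 0
```

Algorithms such as Louvain or Leiden search over partitions to maximize this quantity.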

Network: Communities, Hubs, and a Bridge Node

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Co-occurrence Network Analysis

| Item / Solution | Function & Explanation |

|---|---|

| R with igraph/ tidygraph | Primary software environment for network construction, analysis, and statistical computation. |

| Cytoscape | Open-source platform for advanced network visualization and manual exploration/annotation. |

| WGCNA R Package | Specialized tool for weighted gene co-expression network construction and module detection. |

| STRING Database | Source of pre-computed protein-protein association networks for validation and integration. |

| NetworkX (Python) | Python library for the creation, manipulation, and study of complex networks. |

| Gephi | Interactive visualization and exploration software for all types of networks. |

| Benjamini-Hochberg Procedure | Statistical method for correcting p-values during edge thresholding to control false discovery rate (FDR). |

This guide provides applied methodologies within the overarching research question of how co-occurrence network algorithms work. Co-expression and microbial co-occurrence networks are specific implementations of correlation-based co-occurrence algorithms. The core principle involves calculating pairwise association metrics (e.g., Pearson/Spearman correlation, SparCC, proportionality) between features (genes, taxa) across samples to infer potential functional relationships or ecological interactions. These networks are then analyzed for topology to extract biologically and clinically meaningful insights.

Constructing Gene Co-Expression Networks for Cancer Subtyping

Core Algorithm Principle: Weighted Gene Co-Expression Network Analysis (WGCNA) uses a soft-thresholding power (β) to transform a matrix of pairwise Pearson correlations ($S_{ij} = \mathrm{cor}(x_i, x_j)$) into an adjacency matrix ($A_{ij} = |S_{ij}|^{\beta}$), emphasizing strong correlations. A Topological Overlap Matrix (TOM) is then computed to measure network interconnectedness.
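The two transformations named in this principle can be sketched as follows. This is a minimal standard-library illustration, not the WGCNA package's code: it assumes an unsigned network, a zero diagonal in the adjacency matrix, and the commonly cited unsigned topological overlap formula TOM_ij = (l_ij + a_ij) / (min(k_i, k_j) + 1 - a_ij), where l_ij sums a_iu * a_uj over shared neighbors u. The expression values and β = 6 are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def soft_threshold_adjacency(expr, beta):
    """Unsigned adjacency A_ij = |cor(x_i, x_j)|**beta with a zero diagonal."""
    n = len(expr)
    A = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            A[i][j] = A[j][i] = abs(pearson(expr[i], expr[j])) ** beta
    return A


def topological_overlap(A):
    """Unsigned TOM: shared-neighbor strength plus the direct link,
    normalized by the smaller connectivity."""
    n = len(A)
    k = [sum(row) for row in A]  # connectivity (diagonal is zero)
    T = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            shared = sum(A[i][u] * A[u][j]
                         for u in range(n) if u != i and u != j)
            T[i][j] = T[j][i] = (shared + A[i][j]) / (min(k[i], k[j]) + 1.0 - A[i][j])
    return T


# Hypothetical expression matrix: 4 genes (rows) x 6 samples (columns).
expr = [
    [1, 2, 3, 4, 5, 6],
    [2, 4, 6, 8, 10, 12],   # perfectly correlated with gene 0
    [6, 5, 4, 3, 2, 1],     # perfectly anti-correlated with gene 0
    [3, 1, 4, 1, 5, 9],     # moderately correlated with gene 0
]

A = soft_threshold_adjacency(expr, beta=6)
TOM = topological_overlap(A)
dissimilarity = [[1.0 - t for t in row] for row in TOM]  # input to clustering
```

Raising a moderate correlation to the power 6 pushes it toward zero while leaving near-perfect correlations almost unchanged, which is the "emphasizing strong correlations" effect. Because the network is unsigned, the anti-correlated gene receives the same adjacency as the positively correlated one.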

Detailed Experimental Protocol:

- Data Preprocessing: Obtain RNA-seq or microarray data from public repositories (e.g., TCGA, GEO). Perform normalization (e.g., TPM for RNA-seq, RMA for microarrays), log2 transformation, and batch correction (e.g., using ComBat).

- Gene Filtering: Filter out low-variance genes (e.g., remove genes in the bottom 20% of variance across samples) to reduce noise.

- Network Construction:

- Calculate pairwise Pearson correlations between all genes.

- Choose a soft-thresholding power (β) that achieves approximate scale-free topology (scale-free topology fit R² > 0.85). This is determined empirically.

- Compute the adjacency and TOM matrices.

- Module Detection: Perform hierarchical clustering on the TOM-based dissimilarity (1-TOM). Dynamically cut the dendrogram to identify modules (clusters) of highly co-expressed genes. Assign each module a unique color label (e.g., "blue", "brown"); the corresponding module eigengenes are then named with an "ME" prefix (e.g., "MEblue", "MEbrown").

- Relating Modules to Cancer Subtypes:

- Calculate module eigengenes (MEs), the first principal component of each module's expression matrix.

- Correlate MEs with clinical traits (e.g., cancer subtype labels, survival status, tumor grade). High correlation identifies trait-relevant modules.

- Downstream Analysis: Perform functional enrichment (GO, KEGG) on module genes. Identify intra-modular "hub genes" (genes with high intramodular connectivity, kWithin) as potential key drivers.
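The hub-gene step at the end of this protocol reduces to summing each gene's adjacencies to the other genes in its own module. The sketch below shows that calculation under simple assumptions: the adjacency values, gene identifiers, and module labels are all hypothetical placeholders, and ties are resolved in favor of the first gene encountered.

```python
def intramodular_connectivity(adjacency, modules):
    """kWithin: sum of a gene's adjacencies to other genes in the same module."""
    n = len(adjacency)
    return [
        sum(adjacency[i][j] for j in range(n)
            if j != i and modules[j] == modules[i])
        for i in range(n)
    ]


def top_hub_per_module(genes, adjacency, modules):
    """Return the gene with the highest kWithin in each module."""
    k_within = intramodular_connectivity(adjacency, modules)
    best = {}
    for i, module in enumerate(modules):
        if module not in best or k_within[i] > k_within[best[module]]:
            best[module] = i
    return {module: genes[i] for module, i in best.items()}, k_within


# Hypothetical inputs: 5 genes, a symmetric adjacency matrix, module labels.
genes = ["gene_a", "gene_b", "gene_c", "gene_d", "gene_e"]
modules = ["blue", "blue", "blue", "brown", "brown"]
adjacency = [
    [0.0, 0.8, 0.6, 0.1, 0.05],
    [0.8, 0.0, 0.3, 0.2, 0.1],
    [0.6, 0.3, 0.0, 0.05, 0.1],
    [0.1, 0.2, 0.05, 0.0, 0.7],
    [0.05, 0.1, 0.1, 0.7, 0.0],
]

hubs, k_within = top_hub_per_module(genes, adjacency, modules)
print(hubs)
```

Only within-module adjacencies contribute, so a gene with many weak links to other modules does not outrank a gene that is tightly connected inside its own module.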

Quantitative Data Summary: Table 1: Example WGCNA Output from a TCGA Breast Cancer Study

| Module (Color) | Number of Genes | Correlation with Basal Subtype (r) | Top Hub Gene (Symbol) | Enriched Pathway (FDR <0.05) |

|---|---|---|---|---|

| MEblue | 1,250 | 0.92 | FOXM1 | Cell cycle (hsa04110) |

| MEbrown | 850 | -0.87 | GATA3 | Estrogen signaling |

| MEyellow | 420 | 0.45 | EGFR | PI3K-Akt signaling |

WGCNA Workflow for Cancer Subtyping

Constructing Microbial Interaction Networks for Dysbiosis Studies

Core Algorithm Principle: Microbial co-occurrence networks infer potential ecological interactions from species/taxa abundance tables (e.g., 16S rRNA data). The principle involves calculating robust pairwise associations (controlling for compositionality) and applying a significance threshold. Common metrics include SparCC (for compositionality) and proportionality metrics (e.g., $\rho_p$).

Detailed Experimental Protocol:

- Data Input & Normalization: Start with an OTU/ASV table (counts) and a taxonomic assignment table. Rarefy data to an even sampling depth or apply a compositionally aware transformation (e.g., centered log-ratio after pseudo-count addition).

- Association Measure Calculation: For compositional data, use SparCC or proportionality (e.g., propr package in R). For each taxon pair (i, j), compute the association measure.

- Statistical Validation & Thresholding: Generate bootstrapped or permuted datasets to estimate p-values for each correlation. Apply a multiple testing correction (Benjamini-Hochberg). Retain only edges with FDR < 0.05 and absolute correlation > 0.6 (thresholds are study-dependent).

- Network Construction & Analysis: Create an adjacency matrix from significant edges. Analyze topological properties: average degree, clustering coefficient, betweenness centrality. Flag candidate keystone taxa from centrality scores (e.g., high betweenness centrality with low degree; criteria vary between studies).

- Dysbiosis Comparison: Construct separate networks for healthy vs. diseased cohorts (e.g., IBD vs. control). Compare network topology: density, modularity, or identify differential connections specific to the dysbiotic state.
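The statistical validation and thresholding step of this protocol can be illustrated with a short sketch. It implements the Benjamini-Hochberg adjustment directly and then applies the two example cut-offs from the protocol (FDR < 0.05 and absolute correlation > 0.6). The taxon names, correlations, and p-values are hypothetical, and the p-values are assumed to have come from a prior permutation or bootstrap step.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical candidate edges: (taxon_i, taxon_j, correlation, raw p-value).
candidates = [
    ("T1", "T2", 0.82, 0.001),
    ("T1", "T3", -0.71, 0.008),
    ("T2", "T4", 0.45, 0.012),
    ("T3", "T4", 0.66, 0.041),
    ("T4", "T5", 0.10, 0.60),
]

q_values = benjamini_hochberg([p for _, _, _, p in candidates])

FDR_CUTOFF = 0.05   # example value from the protocol; study-dependent
MIN_ABS_R = 0.6     # example value from the protocol; study-dependent

kept = [
    (a, b) for (a, b, r, _), q in zip(candidates, q_values)
    if q < FDR_CUTOFF and abs(r) > MIN_ABS_R
]
print(kept)
```

In this example the T2-T4 pair passes the FDR filter but fails the effect-size filter, while T3-T4 has a strong correlation but does not survive multiple-testing correction, so both criteria remove different edges.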

Quantitative Data Summary: Table 2: Example Network Properties from a Healthy vs. IBD Gut Microbiota Study

| Network Property | Healthy Cohort (n=50) | IBD Cohort (n=50) | Interpretation |

|---|---|---|---|

| Number of Nodes (Taxa) | 150 | 120 | Reduced diversity in IBD |

| Number of Edges | 850 | 320 | Reduced connectivity in IBD |

| Average Degree | 11.33 | 5.33 | Less interconnected community |

| Average Path Length | 2.8 | 4.1 | Less efficient information flow |

| Modularity | 0.35 | 0.62 | More fragmented, niche-driven |
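The average degree values in this table follow directly from the node and edge counts (2E/N for an undirected network). The short check below reproduces them and also reports density, which is useful when comparing networks of different sizes; the function names are illustrative only.

```python
def average_degree(n_nodes, n_edges):
    """Mean number of edges per node in an undirected network: 2E / N."""
    return 2 * n_edges / n_nodes


def density(n_nodes, n_edges):
    """Fraction of possible undirected edges that are present."""
    return 2 * n_edges / (n_nodes * (n_nodes - 1))


# Node and edge counts taken from the example table above.
cohorts = {"healthy": (150, 850), "ibd": (120, 320)}

summary = {
    name: {
        "average_degree": round(average_degree(n, e), 2),
        "density": round(density(n, e), 4),
    }
    for name, (n, e) in cohorts.items()
}
print(summary)
```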

Comparative Microbial Networks: Healthy vs. Dysbiosis

The Scientist's Toolkit: Research Reagent & Resource Solutions

Table 3: Essential Tools for Constructing Co-Occurrence Networks

| Item / Resource | Function / Purpose | Example (Vendor/Package) |

|---|---|---|

| Normalized Gene Expression Data | Input for WGCNA. Ensures comparability across samples. | TCGA Pan-Cancer Atlas, GEO Datasets. |

| Processed 16S/ITS OTU Table | Input for microbial networks. Contains taxon counts per sample. | Output from QIIME 2, mothur, or DADA2 pipelines. |

| WGCNA R Package | Comprehensive toolkit for all steps of weighted co-expression network analysis. | CRAN: WGCNA (v1.72-5+) |

| SparCC Algorithm | Calculates correlation from compositional data (microbiome). | Python: pysparcc or R implementation. |

| propr / SPIEC-EASI R Packages | Alternative robust proportionality (propr) or conditional dependency (SPIEC-EASI) measures for microbiome data. | CRAN: propr; GitHub: SPIEC-EASI. |

| Cytoscape with CytoHubba | Network visualization and advanced topological analysis (e.g., identifying hub nodes). | Cytoscape Consortium (v3.10+). |

| igraph / NetworkX Libraries | Backend engines for graph theory calculations and network property derivation. | R: igraph; Python: NetworkX. |

| High-Performance Computing (HPC) Cluster | Essential for correlation calculations on large datasets (e.g., >20,000 genes). | AWS EC2, Google Cloud, or local HPC. |

Overcoming Common Pitfalls: Parameter Selection, Sparsity, and Computational Optimization

Within the broader question of how co-occurrence network algorithms work, a critical operational challenge is the selection of edges to construct biologically meaningful networks from high-dimensional data. This guide addresses the core dilemma: applying thresholds to correlation or similarity matrices to create sparse networks. A stringent threshold yields high-specificity networks (few false edges) but risks missing true biological interactions (low sensitivity). A lenient threshold captures more true interactions (high sensitivity) but includes spurious edges (low specificity), obscuring true signal with noise. This balance is paramount for researchers and drug development professionals seeking to identify novel targets and pathways from omics data.

Core Principles and Quantitative Metrics

The edge selection process typically begins with a similarity matrix (e.g., Pearson correlation, Spearman rank, mutual information) computed from entity co-occurrence or co-expression profiles. A threshold (τ) is applied to this matrix to create an adjacency matrix A, where A_{ij} = 1 if similarity ≥ τ, and 0 otherwise.

Key metrics for evaluating threshold impact are summarized below:

Table 1: Quantitative Metrics for Edge Selection Performance

| Metric | Formula | Interpretation in Network Context |

|---|---|---|

| Sensitivity (Recall) | TP / (TP + FN) | Proportion of true biological interactions correctly included as edges. |

| Specificity | TN / (TN + FP) | Proportion of true non-interactions correctly excluded as non-edges. |

| Precision | TP / (TP + FP) | Proportion of selected edges that are true biological interactions. |

| F1-Score | 2 * (Precision * Recall) / (Precision + Recall) | Harmonic mean of Precision and Sensitivity. |

| Network Density | (2 * #Edges) / [N * (N-1)] | Fraction of possible edges present; increases with lower τ. |
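The trade-off described above can be made concrete by sweeping τ over a small similarity matrix and scoring the resulting edges against a reference set. The sketch below is illustrative only: the similarity values, entity names, and "gold standard" interactions are hypothetical, and in practice the reference set would come from a curated resource.

```python
def threshold_edges(similarity, names, tau):
    """Edges (i < j) whose similarity is at least tau."""
    edges = set()
    for i in range(len(names)):
        for j in range(i + 1, len(names)):
            if similarity[i][j] >= tau:
                edges.add((names[i], names[j]))
    return edges


def edge_metrics(predicted, gold, n_nodes):
    """Confusion-matrix metrics over all possible undirected node pairs."""
    possible = n_nodes * (n_nodes - 1) // 2
    tp = len(predicted & gold)
    fp = len(predicted - gold)
    fn = len(gold - predicted)
    tn = possible - tp - fp - fn
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    f1 = (2 * precision * sensitivity / (precision + sensitivity)
          if precision + sensitivity else 0.0)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
        "density": 2 * len(predicted) / (n_nodes * (n_nodes - 1)),
    }


# Hypothetical similarity matrix for five entities.
names = ["e1", "e2", "e3", "e4", "e5"]
S = [
    [1.0, 0.9, 0.8, 0.3, 0.1],
    [0.9, 1.0, 0.7, 0.5, 0.2],
    [0.8, 0.7, 1.0, 0.6, 0.4],
    [0.3, 0.5, 0.6, 1.0, 0.75],
    [0.1, 0.2, 0.4, 0.75, 1.0],
]
# Hypothetical reference interactions.
gold = {("e1", "e2"), ("e1", "e3"), ("e2", "e3"), ("e2", "e4")}

results = {
    tau: edge_metrics(threshold_edges(S, names, tau), gold, len(names))
    for tau in (0.85, 0.65, 0.45)
}
for tau, metrics in results.items():
    print(tau, {key: round(value, 3) for key, value in metrics.items()})
```

Lowering τ from 0.85 to 0.45 raises sensitivity from 0.25 to 1.0 in this toy case while specificity falls from 1.0 to about 0.67 and density increases, which is the pattern the metrics table is designed to track.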

Methodological Approaches and Experimental Protocols

Permutation-Based Thresholding

This method estimates the null distribution of similarity scores to control the false positive rate.

- Protocol:

- Calculate the observed similarity matrix $S_{obs}$ from the original N x M data matrix (N entities, M samples).

- Generate P random permutations (e.g., P=1000) by shuffling sample labels independently for each entity.

- For each permutation p, calculate the similarity matrix $S_{perm}^{(p)}$.

- Pool all off-diagonal elements from all $S_{perm}^{(p)}$ to form a null distribution.

- Set threshold τ at the 100(1-α)th percentile of the null distribution for a desired significance level α (e.g., the 95th percentile for α = 0.05).

- Edges in $S_{obs}$ exceeding τ are retained.
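The permutation protocol can be sketched with the standard library as follows. Several choices here are assumptions made for illustration: absolute Pearson correlation serves as the similarity measure, only 200 permutations are used to keep the example fast, and the data are synthetic, with one correlated pair planted among otherwise independent entities.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either input has zero variance."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy) if sxx > 0 and syy > 0 else 0.0


def pairwise_similarity(data):
    """Absolute Pearson correlation for every entity pair (i < j)."""
    n = len(data)
    return {(i, j): abs(pearson(data[i], data[j]))
            for i in range(n) for j in range(i + 1, n)}


def permutation_threshold(data, n_perm=200, alpha=0.05, seed=0):
    """Pool similarities from row-wise shuffled data and return the
    100*(1 - alpha)th percentile of that null distribution."""
    rng = random.Random(seed)
    null = []
    for _ in range(n_perm):
        # Shuffle sample order independently for each entity.
        shuffled = [rng.sample(row, len(row)) for row in data]
        null.extend(pairwise_similarity(shuffled).values())
    null.sort()
    idx = min(len(null) - 1, math.ceil((1 - alpha) * len(null)) - 1)
    return null[idx]


# Synthetic data: 6 entities x 30 samples, with entity 1 tracking entity 0.
rng = random.Random(42)
data = [[rng.gauss(0, 1) for _ in range(30)] for _ in range(6)]
data[1] = [x + 0.3 * rng.gauss(0, 1) for x in data[0]]

observed = pairwise_similarity(data)
tau = permutation_threshold(data)
retained = {pair for pair, s in observed.items() if s > tau}
print(round(tau, 3), sorted(retained))
```

Because the null distribution is pooled across all pairs, a single global τ is obtained; with α = 0.05, roughly one in twenty truly unrelated pairs is still expected to exceed it, which is why a multiple-testing correction is often applied afterwards.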

Scale-Free Topology Criterion

Many biological networks approximate a scale-free topology. This method selects τ that maximizes the linearity of the network's degree distribution on a log-log scale.

- Protocol:

- Construct a series of networks across a range of candidate thresholds (τ₁, τ₂, ..., τₖ).

- For each network, calculate the degree (k) of each node.

- Plot the log of the probability P(k) versus log(k). Fit a linear model: log(P(k)) ~ -γ * log(k) + c.

- Calculate the R² of the linear fit for each network.

- Select the threshold τ that yields the highest R², indicating the best fit to a scale-free model.
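The fit at the heart of this protocol is an ordinary least-squares regression of log P(k) on log k. The sketch below shows that calculation and the selection of the best candidate threshold. It is a simplified illustration that omits refinements such as degree binning, and the two degree sequences are hypothetical stand-ins for networks built at two candidate thresholds.

```python
import math
from collections import Counter


def scale_free_fit(degrees):
    """Regress log10 P(k) on log10 k; return (R squared, slope).
    Zero-degree nodes are ignored because log(0) is undefined."""
    counts = Counter(d for d in degrees if d > 0)
    if len(counts) < 3:
        return 0.0, 0.0  # too few distinct degrees for a meaningful fit
    n = sum(counts.values())
    xs = [math.log10(k) for k in counts]
    ys = [math.log10(c / n) for c in counts.values()]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    if sxx == 0 or syy == 0:
        return 0.0, 0.0
    return sxy ** 2 / (sxx * syy), sxy / sxx


def select_threshold(degrees_by_tau):
    """Pick the candidate threshold whose network has the highest R squared."""
    fits = {tau: scale_free_fit(deg)[0] for tau, deg in degrees_by_tau.items()}
    return max(fits, key=fits.get), fits


# Hypothetical degree sequences for networks built at two thresholds.
degrees_by_tau = {
    0.5: [3] * 10 + [4] * 30 + [5] * 36 + [6] * 10,   # hump-shaped
    0.7: [1] * 64 + [2] * 16 + [4] * 4 + [8] * 1,     # P(k) proportional to k**-2
}

best_tau, fits = select_threshold(degrees_by_tau)
r2, slope = scale_free_fit(degrees_by_tau[0.7])
print(best_tau, round(r2, 3), round(slope, 3))
```

A high R² alone does not guarantee a decaying distribution, so it is worth also checking that the fitted slope is negative, as it is (γ = 2) for the power-law-like sequence here.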

Stability-Based Selection (Edge Consensus)

This approach prioritizes edges that are robust to data perturbation, enhancing reproducibility.

- Protocol:

- Generate B bootstrap resamples (or subsamples) of the original M samples.

- Construct a network on each resample at a fixed, lenient preliminary threshold.

- Compute the edge consensus matrix EC, where $EC_{ij}$ = (frequency of edge i-j across all B networks).

- Apply a final consensus threshold (e.g., $EC_{ij}$ ≥ 0.8) to select edges stable across resamples.
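A minimal sketch of this consensus procedure is given below. The assumptions are illustrative: absolute Pearson correlation as the similarity, a preliminary threshold of 0.3, B = 100 bootstrap resamples, and synthetic data with one planted correlated pair.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either input has zero variance."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy) if sxx > 0 and syy > 0 else 0.0


def network_edges(data, sample_idx, tau):
    """Edges whose absolute correlation on the chosen samples is >= tau."""
    n = len(data)
    cols = [[row[s] for s in sample_idx] for row in data]
    return {(i, j) for i in range(n) for j in range(i + 1, n)
            if abs(pearson(cols[i], cols[j])) >= tau}


def edge_consensus(data, n_boot=100, prelim_tau=0.3, seed=0):
    """Fraction of bootstrap networks in which each edge appears."""
    rng = random.Random(seed)
    m = len(data[0])
    counts = {}
    for _ in range(n_boot):
        idx = [rng.randrange(m) for _ in range(m)]  # resample with replacement
        for edge in network_edges(data, idx, prelim_tau):
            counts[edge] = counts.get(edge, 0) + 1
    return {edge: c / n_boot for edge, c in counts.items()}


# Synthetic data: 6 entities x 30 samples, with entity 1 tracking entity 0.
rng = random.Random(42)
data = [[rng.gauss(0, 1) for _ in range(30)] for _ in range(6)]
data[1] = [x + 0.3 * rng.gauss(0, 1) for x in data[0]]

consensus = edge_consensus(data)
stable = {edge for edge, freq in consensus.items() if freq >= 0.8}
print(sorted(stable))
```

Edges driven by a few influential samples tend to appear in only some resamples and so receive a low consensus frequency, whereas the planted pair is recovered in every bootstrap network.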

Table 2: Comparison of Thresholding Methodologies

| Method | Primary Goal | Advantages | Disadvantages |

|---|---|---|---|

| Permutation-Based | Control statistical false positives. | Strong statistical foundation, controls Type I error. | Computationally intensive; may be overly conservative. |

| Scale-Free Criterion | Produce biologically plausible topology. | Leverages known biological network property. | Assumption may not hold for all data types; can be noisy. |

| Stability Selection | Enhance reproducibility and robustness. | Reduces variance, identifies high-confidence edges. | Computationally very intensive; requires choice of two thresholds. |

Visualization of Workflows and Relationships

Figure: Threshold Selection Methodologies Workflow

Figure: Threshold Impact on Network Characteristics

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Co-occurrence Network Analysis & Validation

| Tool/Reagent Category | Specific Example/Package | Primary Function |

|---|---|---|

| Network Construction & Analysis | WGCNA R package, igraph (Python/R), Cytoscape | Compute similarity matrices, apply thresholds, perform network topology analysis, and visualize graphs. |

| High-Performance Computing | AWS/GCP Cloud, Slurm HPC Cluster | Provide computational resources for permutation testing, bootstrapping, and large-scale network analysis. |

| Benchmark Validation Datasets | STRING database, KEGG pathway maps, BioGRID | Provide gold-standard sets of known biological interactions for calculating sensitivity/specificity metrics. |

| Experimental Validation - Proximity Ligation | Duolink PLA Assay Kits (Sigma-Aldrich) | In situ detection of protein-protein interactions predicted by network edges for wet-lab confirmation. |

| Experimental Validation - Pull Down/MS | Pierce Anti-HA Magnetic Beads, Streptavidin Agarose | Isolate protein complexes centered on a putative hub protein identified by the network for mass spectrometry analysis. |