Co-Occurrence Networks for New Researchers: A 2024 Guide to Inference Algorithms, Tools, and Best Practices


Abstract

This comprehensive guide demystifies co-occurrence network inference for new researchers in biomedical and drug discovery fields. It begins with foundational concepts and core use cases, then delves into a detailed comparison of key algorithms (e.g., SPIEC-EASI, SparCC, CoNet) and their practical implementation. The guide addresses common pitfalls, optimization strategies for noisy biological data, and critical validation and benchmarking methodologies. By synthesizing theory, application, and evaluation, this article provides a clear roadmap for researchers to confidently construct, analyze, and interpret biological networks from high-throughput omics data.

What Are Co-Occurrence Networks? Core Concepts and Research Applications Explained

Defining Co-Occurrence vs. Correlation Networks in Biological Contexts

Within the broader framework of a Guide to Co-Occurrence Network Inference Algorithms for New Researchers, a foundational and often nuanced distinction lies between co-occurrence and correlation networks. This technical guide clarifies these concepts, their methodologies, and their applications in biological research, such as microbiome ecology, gene expression analysis, and drug target discovery.

Core Conceptual Distinctions

Co-occurrence and correlation networks are both association networks but are derived from different principles and answer different biological questions.

Co-Occurrence Networks describe the non-random presence/absence or abundance patterns of entities (e.g., microbial taxa, genes) across multiple samples. They infer potential ecological or functional relationships, such as symbiosis, competition, or niche sharing, often from compositional count data.

Correlation Networks (typically correlation-based association networks) quantify the degree of linear or monotonic dependence between the quantitative abundance or activity levels of entities across conditions. They infer potential functional interactions, co-regulation, or pathways.

Table 1: Conceptual and Methodological Comparison

| Aspect | Co-Occurrence Networks | Correlation Networks |

|---|---|---|

| Core Question | Do entities appear together more often than by chance? | How do the quantitative levels of entities vary together? |

| Primary Data | Often presence/absence or count data (e.g., OTU tables). | Continuous measurement data (e.g., gene expression, metabolite concentration). |

| Typical Metric | Probabilistic (e.g., pairwise score), joint occurrence count. | Pearson, Spearman, or other correlation coefficients. |

| Null Model | Crucial; randomizes occurrence to assess significance. | Often based on permutation or theoretical distributions. |

| Handling Compositionality | Explicit methods exist (e.g., SPRING, REBACCA). | Requires special techniques (e.g., SparCC, CCLasso). |

| Biological Inference | Habitat preference, ecological guilds, potential interactions. | Functional relationships, co-regulation, pathway activity. |

Methodological Protocols

Protocol for Co-Occurrence Network Inference (Microbiome Example)

Aim: To construct a network of microbial taxa based on their significant co-occurrence across patient samples.

Workflow:

- Data Preprocessing: Input is an Operational Taxonomic Unit (OTU) or Amplicon Sequence Variant (ASV) table (samples x taxa). Apply prevalence filtering (e.g., retain taxa present in >10% of samples) and optional rarefaction.

- Association Calculation: Choose an appropriate measure:

- Joint Occurrence & Probabilistic Scoring: For presence/absence data, calculate the observed co-occurrence count. Compare it to a null distribution generated by randomizing the occurrence matrix while preserving row (sample total) and/or column (taxon frequency) sums; preserving both is the Fixed-Fixed model. The pairwise score is: (Observed co-occurrence - Mean of Null) / Standard Deviation of Null.

- Compositionality-Aware Methods: For relative abundance data, use algorithms like SPRING (Semi-Parametric Rank-based approach for INference in Graphical model) or REBACCA (Regularized Estimation of the BAsis Covariance based on Compositional dAta), which account for the compositional nature of sequencing data.

- Statistical Thresholding: Apply a significance cutoff (e.g., |pairwise score| > 2 approximates p < 0.05) and a minimum co-occurrence count filter to reduce spurious edges.

- Network Construction: Nodes represent taxa. An edge is drawn between two nodes if their association meets the significance and strength thresholds. Edge weight can represent the association strength.

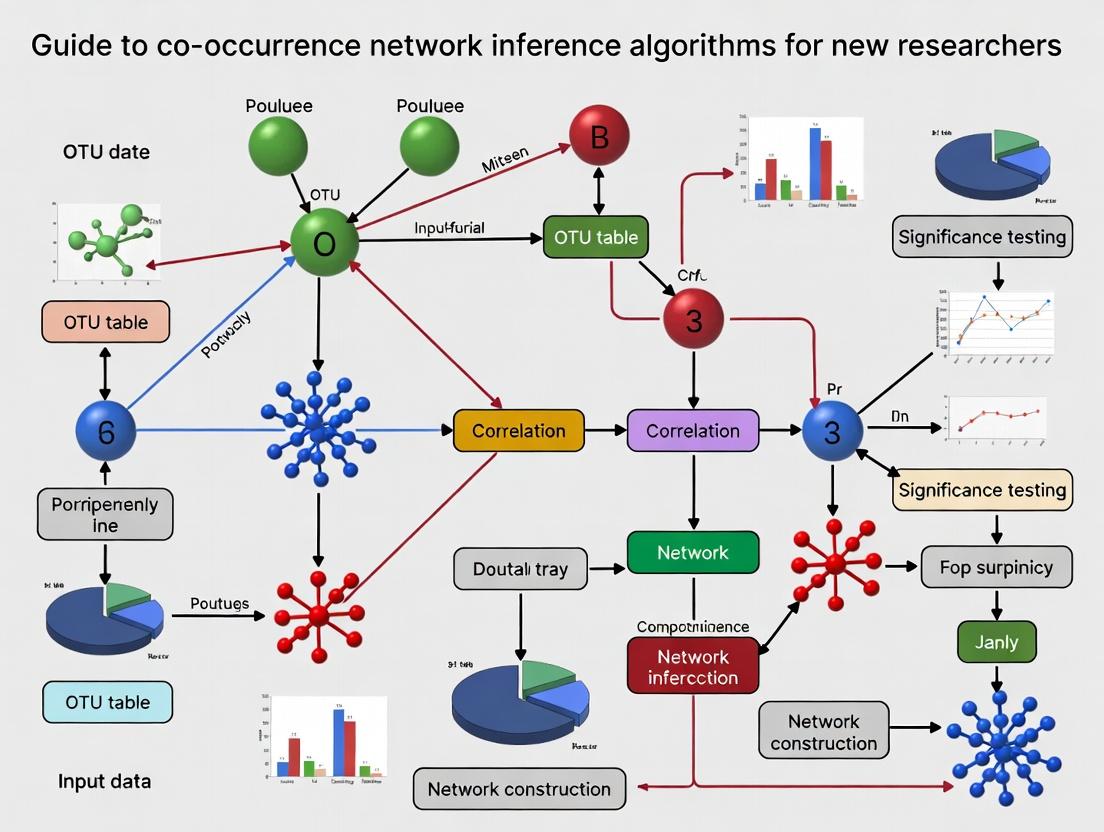

Title: Co-occurrence network inference workflow.
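The scoring step above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names (`cooccurrence_zscores`, `significant_pairs`) and the toy data are hypothetical, and the null model used here is a simplification that shuffles each taxon's column independently, which preserves taxon frequencies but not per-sample totals (so it is not the Fixed-Fixed model).

```python
import numpy as np

def cooccurrence_zscores(presence, n_null=200, seed=0):
    """Z-score observed pairwise co-occurrence counts against a shuffle null.

    presence: (samples x taxa) array of 0/1 values.
    Assumption: the null permutes each taxon column independently, keeping
    column sums (taxon frequency) fixed but not row sums (sample totals).
    """
    rng = np.random.default_rng(seed)
    presence = np.asarray(presence, dtype=float)
    observed = presence.T @ presence  # taxa x taxa joint occurrence counts
    null = np.empty((n_null,) + observed.shape)
    for k in range(n_null):
        shuffled = np.column_stack([rng.permutation(col) for col in presence.T])
        null[k] = shuffled.T @ shuffled
    mean, sd = null.mean(axis=0), null.std(axis=0)
    with np.errstate(divide="ignore", invalid="ignore"):
        z = (observed - mean) / sd
    z[~np.isfinite(z)] = 0.0  # score is undefined where the null has zero variance
    np.fill_diagonal(z, 0.0)
    return observed, z

def significant_pairs(z, z_cut=2.0):
    """Return (taxon_i, taxon_j, z) for pairs with |z| above the cutoff."""
    i, j = np.triu_indices_from(z, k=1)
    keep = np.abs(z[i, j]) > z_cut
    return [(int(a), int(b), float(z[a, b])) for a, b in zip(i[keep], j[keep])]

# Toy data: taxon 1 mirrors taxon 0, taxon 2 excludes taxon 0, taxon 3 is random.
a = np.array([1, 0] * 20)
random_taxon = (np.random.default_rng(1).random(40) < 0.5).astype(int)
presence = np.column_stack([a, a, 1 - a, random_taxon])

observed, z = cooccurrence_zscores(presence)
pairs = significant_pairs(z)
```

In this toy matrix the pair (0, 1) receives a strongly positive score and the pair (0, 2) a strongly negative one. A minimum co-occurrence count filter, as described in the thresholding step, could be added on top of `observed`.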

Protocol for Correlation Network Inference (Gene Expression Example)

Aim: To construct a network of genes based on correlated expression patterns across experimental conditions or patients.

Workflow:

- Data Preprocessing: Input is a gene expression matrix (samples x genes). Normalize (e.g., TPM, FPKM for RNA-seq; RMA for microarrays). Filter lowly expressed genes. Optionally, transform data (e.g., log2).

- Correlation Calculation: Compute all pairwise correlation coefficients.

- Pearson Correlation: Measures linear dependence. Sensitive to outliers.

- Spearman Rank Correlation: Measures monotonic dependence. More robust to outliers.

- Significance Assessment & Multiple Testing Correction: Calculate p-value for each correlation (using t-distribution for Pearson, or permutation). Apply correction (e.g., Benjamini-Hochberg FDR) to control false discoveries.

- Thresholding & Network Construction: Retain correlations with |r| > threshold (e.g., 0.7) and FDR-adjusted p-value < 0.05. Construct a network where nodes are genes and edges are significant correlations.

Title: Correlation network inference workflow.
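As a rough sketch of the correlation workflow above, the following Python computes Pearson correlations, approximate p-values, Benjamini-Hochberg adjusted values, and the resulting edge list. Two assumptions are worth flagging: p-values come from the Fisher z-transform with a normal approximation (a stand-in for the t-distribution test mentioned in the protocol), and the function names and simulated data are purely illustrative.

```python
import math
import numpy as np

def benjamini_hochberg(pvals):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    ranked = p[order] * m / np.arange(1, m + 1)
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]  # enforce monotonicity
    adjusted = np.empty(m)
    adjusted[order] = np.clip(ranked, 0.0, 1.0)
    return adjusted

def correlation_edges(expr, r_min=0.7, alpha=0.05):
    """expr: (samples x genes). Returns (gene_i, gene_j, r) for retained edges."""
    n, p = expr.shape
    r = np.corrcoef(expr, rowvar=False)
    iu = np.triu_indices(p, k=1)
    r_pairs = np.clip(r[iu], -0.999999, 0.999999)  # keep arctanh finite
    # Assumption: Fisher z approximation, z ~ N(0, 1) under the null.
    z = np.arctanh(r_pairs) * math.sqrt(n - 3)
    pvals = np.array([math.erfc(abs(v) / math.sqrt(2)) for v in z])
    q = benjamini_hochberg(pvals)
    keep = (np.abs(r_pairs) > r_min) & (q < alpha)
    return [(int(i), int(j), float(rv))
            for i, j, rv, k in zip(iu[0], iu[1], r_pairs, keep) if k]

# Simulated expression: gene 1 tracks gene 0, gene 2 is independent.
rng = np.random.default_rng(0)
g0 = rng.normal(size=50)
g1 = g0 + 0.1 * rng.normal(size=50)
g2 = rng.normal(size=50)
expr = np.column_stack([g0, g1, g2])

edges = correlation_edges(expr)
bh_example = benjamini_hochberg([0.01, 0.04, 0.03, 0.005])
```

Swapping in a rank transform of each column before `np.corrcoef` would give the Spearman variant described in the protocol.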

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Network Inference Studies

| Item | Function in Context |

|---|---|

| 16S rRNA Gene Sequencing Kits (e.g., Illumina 16S Metagenomic Kit) | Provides raw abundance data for microbial taxa from environmental or host samples. Foundation for microbiome co-occurrence networks. |

| RNA/DNA Extraction Kits (e.g., Qiagen RNeasy, MoBio PowerSoil) | High-quality nucleic acid isolation is critical for generating accurate count or expression matrices for network nodes. |

| Whole Transcriptome Amplification Kits (e.g., SMART-Seq v4) | Enables gene expression profiling from low-input samples (e.g., single cells), a common scenario in network studies. |

| Spike-in Control RNAs (e.g., ERCC RNA Spike-In Mix) | Used to normalize technical variation in sequencing depth, improving the accuracy of correlation estimates in expression networks. |

| Statistical Software/Packages: igraph, WGCNA, SPRING, SpiecEasi, CoNet | Essential computational tools for calculating associations, performing null model tests, and visualizing resulting networks. |

| High-Performance Computing (HPC) Cluster Access | Pairwise calculations across thousands of entities are computationally intensive and often require parallel processing. |

This guide examines the pivotal applications of co-occurrence network inference algorithms across three critical domains. Framed within the broader thesis of A Guide to Co-occurrence Network Inference Algorithms for New Researchers, this technical whitepaper focuses on translating network topology into actionable biological and clinical insights. The construction of robust association networks from high-dimensional 'omics data serves as a unifying computational scaffold, enabling hypothesis generation and experimental validation.

Core Applications

Microbiome Ecology and Dysbiosis Detection

Network inference is fundamental for moving beyond taxonomic inventories to understanding microbial community dynamics. Co-occurrence networks reveal keystone species, functional guilds, and competitive or syntrophic relationships, which are essential for defining ecosystem stability and dysbiosis.

Experimental Protocol for 16S rRNA Amplicon-Based Network Inference & Validation:

- Sample Collection & Sequencing: Collect environmental or host-associated samples (e.g., stool, soil). Extract genomic DNA and amplify the 16S rRNA gene V4 region via PCR (primers 515F/806R). Perform paired-end sequencing on an Illumina MiSeq platform.

- Bioinformatic Processing: Process raw FASTQ files using QIIME2 or DADA2. Denoise, dereplicate, and remove chimeras to generate Amplicon Sequence Variant (ASV) tables. Assign taxonomy using a reference database (e.g., SILVA or Greengenes). Rarefy the ASV table to an even sampling depth.

- Network Inference: Input the normalized count matrix into SpiecEasi (using the MB or glasso method) or FlashWeave (for handling compositionality). Set appropriate parameters (e.g., lambda.min.ratio=1e-2, nlambda=20 for SpiecEasi).

- Network Analysis: Calculate topological metrics (degree, betweenness centrality, clustering coefficient) using igraph. Detect modules via the Louvain algorithm.

- Validation: Perform cross-validation by network reconstruction on random data subsets. Validate putative keystone taxa via targeted qPCR (using taxon-specific primers) across sample cohorts or through controlled in-vitro co-culture experiments.

Diagram: Microbiome Network Analysis Workflow

Gene Regulatory Network (GRN) Inference

GRNs model causal interactions between transcription factors (TFs) and target genes. Co-expression network algorithms, particularly those leveraging single-cell RNA-seq data, are instrumental in deciphering the regulatory logic underlying cell states and differentiation.

Experimental Protocol for Single-Cell GRN Inference:

- Single-Cell Library Preparation & Sequencing: Prepare single-cell suspensions. Construct libraries using the 10x Genomics Chromium platform (3' or 5' gene expression kit). Sequence on an Illumina NovaSeq to a minimum depth of 50,000 reads per cell.

- Preprocessing & Clustering: Process Cell Ranger output with Seurat or Scanpy. Filter cells (min.genes > 200, max.genes < 2500, mitochondrial percent < 10%). Normalize using SCTransform or log-normalization. Perform PCA, UMAP/t-SNE embedding, and graph-based clustering to identify cell populations.

- GRN Inference: For each cell cluster, run SCENIC (pySCENIC).

- Step 1: Identify co-expression modules between TFs and potential targets using GRNBoost2 or GENIE3.

- Step 2: Prune modules using cis-regulatory motif analysis (RcisTarget database) to identify direct targets.

- Step 3: Calculate regulon activity (AUC) per cell.

- Validation: Perform chromatin immunoprecipitation sequencing (ChIP-seq) for predicted TFs on sorted cell populations to confirm binding at promoter regions of target genes. Use CRISPRi/a to perturb predicted TF and measure target gene expression changes via RT-qPCR.

Diagram: Single-Cell GRN Inference with SCENIC

Drug Target Discovery and Mechanism of Action

Network pharmacology uses disease-specific co-expression networks to identify dysregulated modules. "Guilt-by-association" and network proximity analyses can pinpoint novel drug targets and repurpose existing drugs.

Experimental Protocol for Network-Based Drug Target Identification:

- Disease Network Construction: Obtain disease vs. control transcriptomic data (RNA-seq) from public repositories (e.g., GEO). Process and normalize data using a standard pipeline (e.g., DESeq2 for bulk RNA-seq). Construct a condition-specific co-expression network using WGCNA. Identify disease-associated modules via correlation with clinical traits.

- Target Prioritization: Map genes from the dysregulated module to a protein-protein interaction (PPI) network (e.g., STRING, HuRI). Calculate network centrality measures. Prioritize genes that are: a) high-degree hubs in the disease module, b) differentially expressed, and c) located upstream in signaling pathways.

- Drug Connectivity Mapping: Use the LINCS L1000 database and tools like CLUE or igraph to connect the disease signature (differentially expressed genes) to drug-induced gene expression profiles. Compute connectivity scores (e.g., Tau score) to rank compounds with opposing signatures.

- Experimental Validation: Perform in vitro knockdown/knockout of the prioritized target gene in a relevant cell line using siRNA or CRISPR-Cas9. Measure phenotypic outcomes (e.g., proliferation, apoptosis). For a predicted compound, conduct dose-response assays and confirm on-target activity via downstream phospho-proteomics or reporter assays.

Diagram: Drug Target Discovery Pipeline

Table 1: Comparison of Key Network Inference Tools Across Applications

| Application Domain | Primary Tool/Algorithm | Input Data Type | Key Output | Typical Network Size (Nodes) | Common Validation Method |

|---|---|---|---|---|---|

| Microbiome Ecology | SpiecEasi (MB/glasso), FlashWeave | 16S rRNA ASV Table (Counts) | Microbial Association Network | 100 - 10,000 | qPCR, Co-culture, Cross-Validation |

| Gene Regulatory Networks | SCENIC (GRNBoost2/GENIE3) | scRNA-seq Count Matrix | Regulons (TF → Target) | 1,000 - 20,000 | ChIP-seq, CRISPR Perturbation |

| Drug Target Discovery | WGCNA, LINCS Connectivity Map | Bulk RNA-seq (FPKM/TPM) | Co-expression Modules, Drug Signatures | 5,000 - 25,000 | In vitro Knockdown, Phenotypic Assay |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Network Inference & Validation Experiments

| Item | Function | Example Product/Catalog |

|---|---|---|

| 16S rRNA Primer Set (515F/806R) | Amplifies the V4 hypervariable region for microbiome profiling. | Illumina (Cat# 15044223 Rev. B) |

| 10x Genomics Chromium Chip & Kit | Enables high-throughput single-cell partitioning and barcoding for scRNA-seq. | 10x Genomics, Chromium Next GEM Single Cell 3' Kit v3.1 |

| RNeasy Mini Kit | Purifies high-quality total RNA from tissues or cells for transcriptomics. | Qiagen (Cat# 74104) |

| TruSeq Stranded mRNA Library Prep Kit | Prepares strand-specific RNA-seq libraries from poly-A selected mRNA. | Illumina (Cat# 20020594) |

| Lipofectamine RNAiMAX | Transfects siRNA or miRNA molecules into mammalian cells for gene knockdown validation. | Thermo Fisher Scientific (Cat# 13778075) |

| Alt-R S.p. HiFi Cas9 Nuclease V3 | Provides high-fidelity Cas9 enzyme for precise CRISPR-Cas9 genome editing validation experiments. | Integrated DNA Technologies (Cat# 1081060) |

| CellTiter-Glo Luminescent Cell Viability Assay | Measures cell viability and proliferation for phenotypic validation of drug/target effects. | Promega (Cat# G7570) |

This guide serves as a foundational chapter within the broader thesis, A Guide to Co-occurrence Network Inference Algorithms for New Researchers. Understanding the core terminology of network science is the critical first step before one can competently evaluate, select, and apply algorithms for inferring biological networks from omics data, a task central to modern drug discovery and systems biology.

Core Terminology and Definitions

Nodes and Edges

A network (or graph) G is formally defined as a pair G = (V, E), where:

- Nodes (V, Vertices): Represent individual entities. In co-occurrence networks, a node is typically a biological element (e.g., a gene, protein, metabolite, species). In a drug-protein interaction network, a node could be a chemical compound or a target protein.

- Edges (E, Links): Represent pairwise relationships or interactions between nodes. An edge between node i and node j can be:

- Undirected: Indicating a mutual association (e.g., co-expression, functional similarity).

- Directed: Indicating a causal or temporal relationship (e.g., a transcription factor regulating a gene).

- Weighted: Assigned a numerical value (weight) indicating the strength, confidence, or magnitude of the interaction (e.g., correlation coefficient, mutual information score).

Adjacency Matrix

The adjacency matrix A is a mathematical representation of a network. For a network with n nodes, A is an n x n square matrix.

- For unweighted networks: A[i][j] = 1 if an edge exists between node i and node j; otherwise, A[i][j] = 0.

- For weighted networks: A[i][j] = w_ij, where w_ij is the weight of the edge.

- For undirected networks: The matrix is symmetric (A[i][j] = A[j][i]).

Example Adjacency Matrices

| Network Type | Matrix Representation (3 nodes) | Description |

|---|---|---|

| Undirected, Unweighted | [[0,1,0], [1,0,1], [0,1,0]] | Node 1 connected to Node 2. Node 2 connected to Node 3. |

| Directed, Unweighted | [[0,1,0], [0,0,1], [0,0,0]] | Edge from Node 1→2, and from Node 2→3. |

| Undirected, Weighted | [[0,0.8,0], [0.8,0,0.2], [0,0.2,0]] | Weighted edges between 1-2 (0.8) and 2-3 (0.2). |
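The three example matrices can be written down directly with NumPy to check the symmetry property and recover their edge lists. The helper names below (`is_symmetric`, `edge_list`) are illustrative only, and nodes are reported with 1-based labels to match the table.

```python
import numpy as np

undirected = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]])
directed = np.array([[0, 1, 0], [0, 0, 1], [0, 0, 0]])
weighted = np.array([[0, 0.8, 0], [0.8, 0, 0.2], [0, 0.2, 0]])

def is_symmetric(adj):
    """An undirected network has A[i][j] == A[j][i] for every pair."""
    return np.array_equal(adj, adj.T)

def edge_list(adj, is_directed=False):
    """List (source, target, weight) using 1-based node labels.

    For undirected graphs only the upper triangle is read, so each edge
    is reported once.
    """
    view = adj if is_directed else np.triu(adj)
    rows, cols = np.nonzero(view)
    return [(int(i) + 1, int(j) + 1, float(adj[i, j])) for i, j in zip(rows, cols)]

undirected_edges = edge_list(undirected)
directed_edges = edge_list(directed, is_directed=True)
weighted_edges = edge_list(weighted)
```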

Network Topology

Topology refers to the structural architecture and connectivity patterns of a network. Key topological measures include:

- Degree (k): The number of edges incident to a node. In directed networks, split into in-degree and out-degree.

- Path Length: The number of edges in the shortest path between two nodes.

- Clustering Coefficient: A measure of the degree to which nodes tend to cluster together (i.e., the density of triangles in the neighborhood).

- Centrality Measures: Identify the most "important" nodes (e.g., high-degree "hubs," high betweenness nodes that bridge communities).
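To make these measures concrete, here is a small sketch that computes degree, local clustering coefficient, and shortest path length from an unweighted, undirected adjacency matrix. The four-node graph (a triangle with one pendant node) and the function names are made up for illustration; in practice a library such as igraph or NetworkX would normally be used.

```python
from collections import deque
import numpy as np

def degrees(adj):
    """Degree k of each node: number of incident edges."""
    return adj.sum(axis=1)

def clustering_coefficients(adj):
    """Local clustering: closed triangles / possible triangles per node."""
    k = adj.sum(axis=1)
    # The diagonal of A^3 counts closed walks of length 3 (two per triangle).
    closed_walks = np.diag(np.linalg.matrix_power(adj, 3))
    possible = k * (k - 1)
    out = np.zeros(len(k), dtype=float)
    mask = possible > 0
    out[mask] = closed_walks[mask] / possible[mask]
    return out

def shortest_path_length(adj, source, target):
    """Breadth-first search; returns None if target is unreachable."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        if node == target:
            return dist[node]
        for nb in np.flatnonzero(adj[node]):
            nb = int(nb)
            if nb not in dist:
                dist[nb] = dist[node] + 1
                queue.append(nb)
    return None

# Triangle 0-1-2 with a pendant node 3 attached to node 2.
adj = np.array([
    [0, 1, 1, 0],
    [1, 0, 1, 0],
    [1, 1, 0, 1],
    [0, 0, 1, 0],
])

k = degrees(adj)
cc = clustering_coefficients(adj)
d03 = shortest_path_length(adj, 0, 3)
```

Node 2 has the highest degree and sits on every path to node 3, which is the kind of "hub" or bridging role that centrality measures are meant to expose.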

Table: Common Network Topologies and Their Properties

| Topology Type | Description | Key Property | Biological Analogy |

|---|---|---|---|

| Random (Erdős–Rényi) | Edges placed randomly between nodes. | Low avg. path length, low clustering. | Rare in biology. |

| Scale-Free | Degree distribution follows a power law (few hubs, many low-degree nodes). | High robustness to random failure, vulnerable to targeted attack. | Protein-protein interaction networks, metabolic networks. |

| Small-World | High clustering (like a regular lattice) but short path lengths (like a random graph). | Efficient information/propagation flow. | Neural networks, genetic regulatory networks. |

| Modular/Community | Dense connections within groups, sparse connections between groups. | High modularity score. | Functional protein complexes, ecological guilds. |

Application in Co-occurrence Network Inference

Inference algorithms construct a network from observed data (e.g., gene expression across samples). The output is typically an adjacency matrix, which is then analyzed for its topology to derive biological insights.

Generalized Experimental Protocol for Co-occurrence Network Inference:

- Data Collection: Obtain a high-dimensional dataset (e.g., RNA-Seq count matrix: m genes x n samples).

- Preprocessing & Normalization: Apply appropriate normalization (e.g., TPM for RNA-Seq, rarefaction for microbiome data) and filtering (remove low-variance features).

- Association Estimation: Calculate a pairwise association measure between all features (nodes).

- Common Measures: Pearson/Spearman Correlation (linear/monotonic), Mutual Information (non-linear), SparCC (for compositional microbiome data).

- Adjacency Matrix Formation: Apply a threshold or statistical test to the association matrix to create a binary or weighted adjacency matrix.

- Hard Thresholding: Keep |correlation| > 0.7.

- Soft Thresholding (Power Law): Used in WGCNA to emphasize strong correlations.

- Statistical Significance: Keep edges with p-value < adjusted alpha.

- Network Construction & Topological Analysis: Import the adjacency matrix into network analysis software (e.g., igraph, Cytoscape) to calculate topological metrics and visualize the network.

- Validation & Interpretation: Validate hubs/modules via functional enrichment analysis (GO, KEGG) or against known interaction databases (STRING, KEGG PATHWAY).

Diagram Title: Co-occurrence Network Inference Workflow
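The difference between hard and soft thresholding in the adjacency-matrix step can be illustrated as follows. The soft-threshold form used here, adjacency = |correlation|^β, is the unsigned power adjacency used in WGCNA; the value β = 6 and the small correlation matrix are illustrative assumptions, and in a real analysis β would be chosen from the data (e.g., via a scale-free topology criterion).

```python
import numpy as np

def hard_threshold(corr, cutoff=0.7):
    """Binary adjacency: 1 where |correlation| exceeds the cutoff."""
    adj = (np.abs(corr) > cutoff).astype(int)
    np.fill_diagonal(adj, 0)  # no self-loops
    return adj

def soft_threshold(corr, beta=6):
    """Weighted adjacency |r|^beta: strong correlations are kept close to
    their original scale, weak ones are pushed toward zero."""
    adj = np.abs(corr) ** beta
    np.fill_diagonal(adj, 0.0)
    return adj

corr = np.array([
    [1.0, 0.9, 0.5],
    [0.9, 1.0, -0.8],
    [0.5, -0.8, 1.0],
])

binary_adj = hard_threshold(corr)
weighted_adj = soft_threshold(corr)
```

Hard thresholding discards the 0.5 correlation entirely, whereas soft thresholding keeps it as a very small weight (0.5^6 ≈ 0.016), so no edge decision hinges on a single cutoff.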

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Co-occurrence Network Studies

| Item/Category | Function in Network Inference | Example/Note |

|---|---|---|

| High-Throughput Sequencing Platform | Generates the raw omics data (nodes). | Illumina NovaSeq for RNA-Seq; PacBio for full-length 16S. |

| Bioinformatics Pipeline Software | Performs preprocessing, normalization, and quality control. | nf-core/rnaseq, QIIME 2, DADA2. |

| Statistical Computing Environment | Implements association calculations and adjacency matrix formation. | R (with WGCNA, psych, SpiecEasi packages) or Python (with scikit-learn, NetworkX). |

| Network Analysis & Visualization Tool | Constructs the graph, computes topology, and enables visualization. | Cytoscape (desktop GUI), igraph (R/Python library), Gephi. |

| Functional Annotation Database | Provides biological context for interpreting network modules/hubs. | Gene Ontology (GO), Kyoto Encyclopedia of Genes and Genomes (KEGG). |

| Reference Interaction Database | Serves as a benchmark for validating inferred edges. | STRING (protein interactions), KEGG PATHWAY (signaling/metabolism). |

| High-Performance Computing (HPC) Cluster | Provides computational power for large pairwise calculations (O(n²) complexity). | Essential for datasets with thousands of features (e.g., metagenomics). |

Visualizing Key Relationships

Diagram Title: Common Network Topology Types

Mastering the language of nodes, edges, adjacency matrices, and topology is non-negotiable for researchers embarking on co-occurrence network inference. This lexicon forms the basis for comparing algorithmic outputs, interpreting the resulting biological networks, and ultimately generating testable hypotheses in systems pharmacology and drug development. The subsequent chapters of this thesis will build upon this foundation, delving into the specific algorithms that transform data into these fundamental structures.

In the broader investigation of co-occurrence network inference algorithms, the initial and most critical step is the generation of a robust, high-quality count or abundance matrix. This matrix, where rows represent features (e.g., microbial taxa, genes, transcripts) and columns represent samples, forms the foundational data layer upon which all subsequent network analysis—calculating correlations, applying statistical filters, and inferring ecological relationships—is built. Any bias, noise, or artifact introduced during data processing will propagate directly into the inferred network structure, potentially leading to erroneous biological conclusions. This whitepaper provides an in-depth technical guide to constructing this essential matrix from raw sequencing data, detailing the methodologies, quality controls, and critical decision points for three primary omics approaches: 16S rRNA gene amplicon sequencing, shotgun metagenomics, and RNA-seq (metatranscriptomics).

Raw Data Acquisition & Initial Quality Assessment

All pipelines begin with raw sequencing output, typically in FASTQ format. The first universal step is a quality control (QC) check.

Table 1: Common Sequencing Platforms and Output Characteristics

| Platform | Typical Omics Use | Raw Data Format | Key QC Metric |

|---|---|---|---|

| Illumina MiSeq/NovaSeq | 16S, Metagenomics, RNA-seq | Paired-end FASTQ | Q-Score (≥Q30 for >75% bases), read length (e.g., 2x250bp, 2x150bp) |

| Oxford Nanopore | Metagenomics, RNA-seq | FAST5/FASTQ | Mean Q-Score (≥Q10), read length (long, variable) |

| PacBio HiFi | Metagenomics | FASTQ | Read length (long, ~10-25kb), accuracy (>99.9%) |
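The Q-score thresholds in the table follow the Phred definition, Q = -10 log10(P_error), so Q30 corresponds to an error probability of 1 in 1,000 and Q10 to 1 in 10. The short sketch below decodes a FASTQ quality line and reports the fraction of bases at or above Q30. It assumes the Phred+33 ASCII encoding used by current Illumina output; the quality string and function names are invented for illustration.

```python
def phred_to_error_prob(q):
    """Convert a Phred quality score to a base-call error probability."""
    return 10 ** (-q / 10)

def quality_scores(quality_line, offset=33):
    """Decode a FASTQ quality line (assumed Phred+33) into integer Q-scores."""
    return [ord(ch) - offset for ch in quality_line]

def fraction_at_least(quality_line, threshold=30, offset=33):
    """Fraction of bases whose Q-score is at or above the threshold."""
    scores = quality_scores(quality_line, offset)
    return sum(s >= threshold for s in scores) / len(scores)

example_quality = "IIII####"  # 'I' decodes to Q40, '#' decodes to Q2
scores = quality_scores(example_quality)
frac_q30 = fraction_at_least(example_quality)
```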

Experimental Protocol 1: Initial Quality Control with FastQC & MultiQC

- Tool: FastQC (v0.12.1) for individual files; MultiQC (v1.14) for aggregated reporting.

- Command:

- Output Analysis: Assess per-base sequence quality, sequence duplication levels, adapter content, and overrepresented sequences. The presence of adapters or low-quality 3' ends necessitates trimming.

- Aggregation: Run multiqc ./qc_report/ to compile results across all samples.

Diagram 1: Universal Initial QC and Preprocessing Workflow

Methodology-Specific Processing Pipelines

16S rRNA Gene Amplicon Pipeline

The goal is to cluster sequencing reads into Amplicon Sequence Variants (ASVs) or Operational Taxonomic Units (OTUs) to create a taxon abundance matrix.

Experimental Protocol 2: DADA2 Pipeline for ASV Inference (R-based)

- Filter & Trim: Based on FastQC report, truncate reads where quality drops.

- Learn Error Rates: Model sequencing error profiles.

- Dereplication & Sample Inference: Identify unique sequences and infer ASVs.

- Merge Paired Reads & Construct Table: Merge forward/reverse reads and create count table.

- Remove Chimeras: Eliminate PCR artifacts.

- Taxonomic Assignment: Classify ASVs using a reference database (e.g., SILVA, Greengenes).

Shotgun Metagenomics Pipeline

The goal is to quantify the abundance of genes or taxonomic groups from whole-genome sequencing data.

Experimental Protocol 3: Taxonomic Profiling with KneadData & MetaPhlAn

- Host Read Removal & QC (KneadData): Remove contaminant (e.g., human) reads.

- Profiling (MetaPhlAn): Map reads to clade-specific marker genes.

- Merge Tables: Create a unified abundance matrix across samples.

Diagram 2: Metagenomics & RNA-seq Functional/Taxonomic Profiling

RNA-seq (Metatranscriptomics) Pipeline

The goal is to quantify gene expression levels, often involving assembly and mapping.

Experimental Protocol 4: Reference-Based RNA-seq Quantification with Salmon

- Transcriptome Indexing: Build an index from a reference transcriptome (genome-based or de novo assembled).

- Pseudoalignment & Quantification: Rapid, alignment-free quantification.

- Aggregate Counts: Compile the quant.sf files from all samples into a matrix using tools like tximport in R.

Table 2: Core Bioinformatics Tools for Each Omics Pipeline

| Pipeline Step | 16S rRNA | Shotgun Metagenomics | RNA-seq |

|---|---|---|---|

| Primary QC/Trimming | Trimmomatic, cutadapt | KneadData, Trimmomatic | Trimmomatic, fastp |

| Core Processing | DADA2, QIIME2, mothur | MetaPhlAn, Kraken2, HUMAnN | Salmon, Kallisto, STAR |

| Clustering/Assembly | DADA2 (ASVs), VSEARCH (OTUs) | MEGAHIT, metaSPAdes | Trinity, rnaSPAdes |

| Abundance Output | ASV/OTU Count Table | Taxonomic/Functional Profile Table | Transcript/Gene Count Table |

| Key Database | SILVA, Greengenes | GTDB, eggNOG, UniRef | RefSeq, custom genome |

Final Matrix Construction and Normalization

The output of each pipeline is a raw count table. For network inference, normalization is essential to correct for technical variation (e.g., sequencing depth) before calculating co-occurrence statistics.

Table 3: Common Normalization Methods for Co-occurrence Analysis

| Method | Formula (for feature i, sample j) | Use Case / Rationale |

|---|---|---|

| Total Sum Scaling (TSS) | \( C_{ij} / \text{TotalReads}_j \) | Simple, but sensitive to compositionality. |

| Cumulative Sum Scaling (CSS) | \( C_{ij} / \text{CSSPercentile}_j \) | Used in MetagenomeSeq, robust to outliers. |

| Relative Abundance (%) | \( (C_{ij} / \text{TotalReads}_j) \times 100 \) | Intuitive for community composition. |

| Centered Log-Ratio (CLR) | \( \ln[C_{ij} / g(\mathbf{C}_{*j})] \), where \( g \) is the geometric mean of sample j. | Addresses compositionality; applied internally by SPIEC-EASI (SparCC works with related log-ratio variances). |

| Trimmed Mean of M-values (TMM) | (Implemented in edgeR) | For RNA-seq, assumes most features not differentially abundant. |
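The TSS and CLR rows of the table can be sketched in a few lines of Python for a features x samples count matrix. Because raw counts usually contain zeros and the logarithm of zero is undefined, this sketch adds a pseudocount of 1 before the CLR; that choice, the function names, and the toy counts are assumptions made for illustration, and other zero-handling strategies exist.

```python
import numpy as np

def tss(counts):
    """Total Sum Scaling: divide each count by its sample (column) total."""
    return counts / counts.sum(axis=0, keepdims=True)

def clr(counts, pseudocount=1.0):
    """Centered log-ratio per sample.

    ln(C_ij / g(C_j)) equals ln(C_ij) minus the mean of ln(C_j), since the
    log of the geometric mean is the mean of the logs.
    Assumption: a pseudocount is added so that zero counts are defined.
    """
    logged = np.log(counts + pseudocount)
    return logged - logged.mean(axis=0, keepdims=True)

# Rows are features (taxa), columns are samples.
counts = np.array([
    [10.0, 0.0],
    [30.0, 5.0],
    [60.0, 15.0],
])

relative = tss(counts)
clr_values = clr(counts)
```

Each TSS column sums to 1, while each CLR column sums to 0, which is the centering that gives the transform its name.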

Experimental Protocol 5: Constructing a Normalized Matrix in R

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 4: Essential Materials and Tools for the Omics Data Pipeline

| Item | Function/Description | Example Product/Software |

|---|---|---|

| High-Fidelity PCR Mix | For 16S library prep, minimizes amplification bias. | Q5 Hot Start High-Fidelity 2X Master Mix (NEB) |

| RNA Stabilization Reagent | Preserves microbial transcriptomes immediately upon sampling. | RNAlater Stabilization Solution (Thermo Fisher) |

| DNA/RNA Extraction Kit | Co-isolation of nucleic acids from complex samples (soil, stool). | AllPrep PowerFecal DNA/RNA Kit (QIAGEN) |

| Library Prep Kit | Prepares sequencing-ready libraries from purified DNA/RNA. | Nextera XT DNA Library Prep Kit (Illumina) |

| Bioinformatics Suite | Integrated platform for analysis pipelines. | QIIME 2 (16S), nf-core (metagenomics/RNA-seq) |

| Reference Database | For taxonomic classification or functional annotation. | SILVA (16S), GTDB (genomes), eggNOG (functions) |

| High-Performance Computing | Cloud or cluster for computationally intensive steps. | Amazon EC2, Google Cloud Platform, local SLURM cluster |

The construction of a reliable count/abundance matrix is a non-trivial, methodology-dependent process requiring careful execution of quality control, read processing, and normalization steps. For the researcher aiming to infer co-occurrence networks, the choices made in this initial pipeline—from ASV inference algorithm (DADA2 vs. OTU clustering) to normalization method (CSS vs. CLR)—profoundly impact the input data's structure and noise profile. A rigorously constructed matrix provides a solid foundation for applying network inference algorithms like SPIEC-EASI, SparCC, or CoNet, moving from raw sequencing data toward meaningful ecological interaction hypotheses.

Within the broader research on A Guide to Co-occurrence Network Inference Algorithms for New Researchers, this whitepaper addresses a foundational question: why is statistical and computational inference not merely helpful but necessary for reconstructing biological networks from observational data? Observational data, such as gene expression counts from RNA-seq, metabolite concentrations, or protein abundances, captures system states but does not reveal causal interactions. The core challenge is that correlation—often measured by simple co-occurrence—does not imply causation or direct interaction. High-dimensional data (many molecules, few samples), noise, and hidden confounders make direct observation of the network impossible. Inference provides the mathematical framework to deduce the most plausible network structures that could generate the observed data.

The Fundamental Challenge: From Correlations to Causality

Observational datasets are typically represented as an n x p matrix, with n samples (observations) and p features (e.g., genes). The sheer number of potential pairwise interactions scales with p², making exhaustive experimental validation infeasible. Furthermore, indirect correlations are pervasive: if Gene A regulates Gene B, and Gene B regulates Gene C, then A and C will correlate without a direct edge existing between them. Distinguishing these direct from indirect links requires inference.

Quantitative Data on Network Complexity and Data Scales

Table 1: Scale of the Network Inference Problem in Model Organisms

| Organism | Approximate Protein-Coding Genes | Possible Undirected Interactions | Experimentally Validated Interactions (STRING DB v12.0, 2024) |

|---|---|---|---|

| Escherichia coli | ~4,300 | ~9.2 million | ~326,000 |

| Saccharomyces cerevisiae | ~6,000 | ~18 million | ~1.8 million |

| Homo sapiens | ~19,000 | ~180 million | ~12.5 million |
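The "Possible Undirected Interactions" column is simply the number of unordered gene pairs, p(p - 1)/2, evaluated at the approximate gene counts in the table. A quick check in Python (the dictionary below just restates those approximate counts):

```python
from math import comb

# Approximate protein-coding gene counts taken from the table above.
gene_counts = {
    "Escherichia coli": 4300,
    "Saccharomyces cerevisiae": 6000,
    "Homo sapiens": 19000,
}

# Number of unordered pairs: p choose 2 = p * (p - 1) / 2.
possible_pairs = {name: comb(p, 2) for name, p in gene_counts.items()}

for name, pairs in possible_pairs.items():
    print(f"{name}: {pairs:,} possible undirected interactions")
```

The quadratic growth is the practical point: going from roughly 4,300 to 19,000 genes multiplies the candidate edge set by about twenty.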

Table 2: Typical Observational Data Constraints in Omics Studies

| Data Type | Sample Size (n) Range | Feature Size (p) Range | n << p Typical? |

|---|---|---|---|

| Bulk RNA-seq (TCGA) | 100 - 1,000 | 20,000 - 60,000 | Yes |

| Single-cell RNA-seq | 1,000 - 1,000,000 | 20,000 - 30,000 | No (n > p common) |

| Metabolomics (untargeted) | 50 - 500 | 500 - 5,000 | Often |

| Proteomics (mass spec) | 20 - 200 | 3,000 - 10,000 | Yes |

Core Inference Methodologies: Protocols and Workflows

Protocol for Correlation-Based Network Inference (Baseline)

Objective: Construct an undirected co-expression network from gene expression data. Input: Normalized gene expression matrix (genes x samples). Steps:

- Similarity Computation: Calculate all pairwise similarity scores (e.g., Pearson correlation, Spearman rank correlation) for p genes.

- Thresholding: Apply a hard threshold (e.g., |r| > 0.8) or a soft threshold (e.g., scale-free topology criterion) to create an adjacency matrix.

- Network Creation: Represent genes as nodes and significant correlations as undirected edges.

Limitation: Produces dense networks with many spurious edges from indirect relationships.
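The baseline protocol above can be sketched in a few lines of plain Python. This is an illustrative toy, not a production pipeline: the gene names, expression values, and the `correlation_network` helper are all hypothetical, and only the hard-threshold variant is shown.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def correlation_network(expr, threshold=0.8):
    """Return undirected edges (gene_i, gene_j, r) with |r| > threshold.

    expr maps a gene name to its expression values across samples.
    """
    genes = sorted(expr)
    edges = []
    for i, g1 in enumerate(genes):
        for g2 in genes[i + 1:]:
            r = pearson(expr[g1], expr[g2])
            if abs(r) > threshold:
                edges.append((g1, g2, round(r, 3)))
    return edges

# Hypothetical toy data: 3 genes measured in 6 samples
toy_expr = {
    "geneA": [1.0, 2.0, 3.0, 4.0, 5.0, 6.0],
    "geneB": [1.1, 2.3, 2.9, 4.2, 4.8, 6.1],  # tracks geneA closely
    "geneC": [3.0, 1.0, 4.0, 1.0, 5.0, 2.0],  # unrelated pattern
}
edges = correlation_network(toy_expr, threshold=0.8)
print(edges)
```

With real data the double loop visits p(p - 1)/2 pairs, so vectorized libraries are normally preferred; the loop is kept here only to make each step explicit.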

Protocol for Partial Correlation-Based Inference (Addressing Indirect Links)

Objective: Infer a Conditional Dependency Network (Gaussian Graphical Model). Input: Normalized, approximately Gaussian-distributed expression matrix. Steps:

- Covariance Matrix Estimation: Compute the p x p sample covariance matrix Σ.

- Inverse Covariance (Precision Matrix) Calculation: Compute Θ = Σ⁻¹. The off-diagonal elements θ_ij of Θ determine the partial correlation between features i and j, conditional on all other features: ρ_ij|rest = -θ_ij / sqrt(θ_ii θ_jj). When n < p, the sample covariance matrix is singular and cannot be inverted directly, which motivates the next step.

- Sparsity Constraint: Use L1-regularization (graphical lasso) to force many elements of Θ to zero, addressing the n << p problem.

- Hypothesis Testing: Perform statistical tests on non-zero θ_ij to create a sparse adjacency network of direct conditional dependencies.

Advantage: Reduces edges mediated by a common neighbor.
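To see why conditioning removes indirect edges, the sketch below simulates the chain A -> B -> C described earlier and compares the marginal A-C correlation with the partial correlation given B. It uses the closed-form first-order partial correlation for three variables rather than a graphical lasso, and the noise levels and sample size are arbitrary assumptions.

```python
import math
import random

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def partial_corr(r_xy, r_xz, r_yz):
    """First-order partial correlation of x and y given z."""
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))

random.seed(0)
n = 2000
# Simulated chain A -> B -> C with no direct A-C link
a = [random.gauss(0, 1) for _ in range(n)]
b = [ai + random.gauss(0, 0.5) for ai in a]
c = [bi + random.gauss(0, 0.5) for bi in b]

r_ab, r_bc, r_ac = pearson(a, b), pearson(b, c), pearson(a, c)
r_ac_given_b = partial_corr(r_ac, r_ab, r_bc)

print(f"marginal corr(A, C)    = {r_ac:.3f}")
print(f"partial corr(A, C | B) = {r_ac_given_b:.3f}")
```

The marginal correlation is strong even though A and C never interact directly, while the partial correlation is close to zero, which is the behavior a Gaussian graphical model exploits.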

Protocol for Bayesian Network Inference (Directional Hypotheses)

Objective: Infer a directed acyclic graph (DAG) suggesting potential causal flow. Input: Pre-processed observational data, assumed to be from a stationary process. Steps:

- Structure Learning:

- Constraint-based: Use conditional independence tests (e.g., PC algorithm) to orient edges.

- Score-based: Search the space of DAGs to maximize a score (e.g., BIC, BDeu).

- Parameter Learning: Estimate the conditional probability distributions for each node given its parents.

- Model Averaging: Often, many equally scoring DAGs exist; averaging across a high-probability set yields more robust edges.

Critical Note: True causality requires interventional data; BN edges suggest probabilistic dependency ordering.

Visualizing Inference Concepts and Workflows

Diagram 1: Inference pathways from data to network models

Diagram 2: Direct vs. indirect relationships in a network

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Reagents & Tools for Experimental Network Validation

| Item | Function in Validation | Example Product/Catalog |

|---|---|---|

| siRNA/shRNA Library | Targeted gene knockdown to test node necessity in inferred network. | Dharmacon SMARTpool siRNA libraries, MISSION TRC shRNA. |

| CRISPR-Cas9 Knockout/Knockin Kits | Permanent gene editing to validate causal edges and network robustness. | Synthego CRISPR kits, IDT Alt-R CRISPR-Cas9 system. |

| Dual-Luciferase Reporter Assay | Quantifying transcriptional regulation between inferred TF-target pairs. | Promega Dual-Luciferase Reporter Assay System (E1910). |

| Co-Immunoprecipitation (Co-IP) Kits | Validating physical protein-protein interaction edges. | Thermo Fisher Pierce Co-IP Kit (26149). |

| Phospho-Specific Antibodies | Detecting activity states in signaling pathways inferred from phosphoproteomics. | Cell Signaling Technology Phospho-Antibody Sampler Kits. |

| Metabolite Standards & LC-MS Kits | Absolute quantification for validating metabolomic co-occurrence networks. | IROA Technologies Mass Spectrometry Metabolite Library. |

Reconstructing networks from observational data is an ill-posed inverse problem with no unique solution. Inference is necessary to navigate the vast space of possible networks and propose parsimonious, testable models that explain the data. While co-occurrence provides a starting point, advanced inference algorithms—partial correlation, Bayesian networks, and others—are essential to control for confounders and propose direct interactions and directionality. The ultimate output is not a ground-truth map but a set of high-confidence hypotheses requiring rigorous experimental validation, as outlined in the Scientist's Toolkit. This iterative cycle of computational inference and experimental validation drives discovery in systems biology and targeted drug development.

Step-by-Step Guide: Comparing and Applying Key Inference Algorithms

This guide serves as the first installment in a comprehensive series on co-occurrence network inference algorithms for new researchers. Within the field of systems biology and drug development, constructing networks from high-throughput data (e.g., gene expression, protein abundance) is foundational for identifying functional modules, key regulators, and therapeutic targets. Correlation-based methods, primarily Pearson and Spearman coefficients, are the most ubiquitous starting point for such inference due to their conceptual simplicity and computational efficiency. This whitepaper provides a rigorous technical dissection of these methods, their application protocols, and—critically—their limitations, setting the stage for more advanced algorithms covered in subsequent guides.

Core Algorithmic Foundations

Pearson Correlation Coefficient (r): Measures the linear relationship between two continuous variables, \(X\) and \(Y\). It is the covariance of the two variables divided by the product of their standard deviations.

\[ r_{XY} = \frac{\sum_{i=1}^{n}(X_i - \bar{X})(Y_i - \bar{Y})}{\sqrt{\sum_{i=1}^{n}(X_i - \bar{X})^2}\sqrt{\sum_{i=1}^{n}(Y_i - \bar{Y})^2}} \]

Spearman's Rank Correlation Coefficient (ρ): Assesses monotonic relationships by calculating the Pearson correlation between the rank-transformed variables.

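\[ \rho_{XY} = 1 - \frac{6 \sum_{i=1}^{n} d_i^2}{n(n^2 - 1)} \]

where \(d_i\) is the difference between the ranks of the two variables for sample \(i\). This shortcut formula holds only when there are no tied ranks; with ties, compute the Pearson correlation of the (average) ranks directly.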
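The difference between the two coefficients is easiest to see on a monotonic but non-linear relationship. The following sketch implements both definitions in plain Python (Spearman as the Pearson correlation of average ranks, which also handles ties) and applies them to y = exp(x); the data are illustrative.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def ranks(values):
    """Ranks starting at 1; tied values receive the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg_rank
        i = j + 1
    return result

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the rank-transformed data."""
    return pearson(ranks(x), ranks(y))

x = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
y = [math.exp(v) for v in x]  # monotonic but strongly non-linear

r = pearson(x, y)
rho = spearman(x, y)
print(f"Pearson r = {r:.3f}, Spearman rho = {rho:.3f}")
```

Spearman reports a perfect monotonic association, whereas Pearson is pulled below 1 by the curvature.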

Table 1: Comparison of Pearson vs. Spearman Correlation

| Feature | Pearson (r) | Spearman (ρ) |

|---|---|---|

| Relationship Type | Linear | Monotonic (Linear or Non-Linear) |

| Data Assumption | Interval/Ratio, Bivariate Normal | Ordinal, Interval, or Ratio |

| Robustness to Outliers | Low | High |

| Sensitivity | To linear trends | To rank order |

| Typical Use Case | Co-expression of genes in linear regimes | Gene expression with potential non-linear saturation |

| Computational Complexity | O(n) | O(n log n) due to ranking |

Standard Experimental Protocol for Network Inference

The following workflow is standard in -omics studies for constructing initial correlation networks.

Diagram Title: Standard Correlation Network Inference Workflow

Critical Limitations and Pitfalls

- Spurious Correlation: Measures statistical association, not causation. Confounded by latent variables (e.g., batch effects, cellular heterogeneity).

- Sensitivity to Distribution: Pearson assumes normality and homoscedasticity. Real biological data often violates these assumptions.

- Linear/Monotonic Constraint: Fails to capture complex, non-monotonic relationships (e.g., oscillatory or sinusoidal patterns common in signaling).

- Sample Size Dependency: Reliable estimation requires many independent samples; often scarce in clinical or niche model organism studies.

- Dense Network Output: Produces a fully connected graph, necessitating often-arbitrary thresholding to obtain sparse networks for interpretation.

Table 2: Impact of Limitations on Network Inference

| Limitation | Consequence for Inference | Typical Mitigation Strategy |

|---|---|---|

| Spurious Correlation | High false-positive edge rate; networks reflect noise or batch effects. | Careful experimental design, covariate adjustment, permutation testing. |

| Non-Linear Relationships | True biological interactions are missed, leading to false negatives. | Use of mutual information, GENIE3, or other non-linear models. |

| Thresholding Arbitrariness | Network topology and key hubs change drastically with threshold choice. | Stability-based approaches (e.g., bootstrap) or weighted network analysis. |

Advanced Considerations: Partial Correlation

Partial correlation attempts to address direct vs. indirect association by measuring the relationship between two variables while controlling for the effect of one or more other variables. It is a step towards causality but remains limited.

Diagram Title: Direct vs. Indirect Correlation Controlled by Partial Correlation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Correlation-Based Network Studies

| Item / Reagent | Function in Protocol |

|---|---|

| RNA-seq Library Prep Kit | Converts extracted RNA into sequencing-ready cDNA libraries. Quality dictates input data fidelity. |

| Normalization Software | (e.g., DESeq2, edgeR). Corrects for technical variation (library size, composition) prior to correlation. |

| Statistical Computing Environment | (e.g., R with cor(), Hmisc; Python with SciPy, pandas). Core platforms for calculation. |

| High-Performance Computing (HPC) Cluster | Enables the O(p²) pairwise calculations required across tens of thousands of features (genes/proteins). |

| Network Visualization Tool | (e.g., Cytoscape, Gephi). Renders and explores the resulting correlation network graphically. |

| P-Value Adjustment Tool | (e.g., Benjamini-Hochberg FDR correction). Addresses multiple testing for correlation p-values. |

| Synthetic Benchmark Datasets | (e.g., DREAM Challenge networks). Provides gold standards for algorithm validation. |
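Because a correlation network tests every pair of features, the multiple-testing adjustment listed in the table matters in practice. Below is a minimal sketch of the Benjamini-Hochberg procedure in plain Python; the p-values are made up for illustration, and established implementations (for example `p.adjust` in R) should be preferred for real analyses.

```python
def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values (q-values) in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

# Hypothetical p-values for five candidate edges
pvals = [0.001, 0.008, 0.039, 0.041, 0.20]
qvals = benjamini_hochberg(pvals)
significant = [q < 0.05 for q in qvals]

for p, q, keep in zip(pvals, qvals, significant):
    print(f"p = {p:.3f}  q = {q:.4f}  keep edge: {keep}")
```

Note that two edges with raw p < 0.05 are dropped after adjustment, which is the intended effect when many pairs are tested.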

This article is part of a broader thesis, A Guide to Co-occurrence Network Inference Algorithms for New Researchers, focusing on methods designed to address the unique challenges of compositional data. Microbiome sequencing data, such as 16S rRNA amplicon or metagenomic counts, is inherently compositional. The total read count per sample (library size) is an arbitrary constraint imposed by sequencing depth, not a true reflection of biological abundance. This means the data conveys only relative abundance information. Analyzing such data with standard correlation measures (e.g., Pearson, Spearman) leads to spurious correlations due to the closure effect, where an increase in one taxon's proportion necessarily causes a decrease in others. This article provides an in-depth technical guide to three pivotal algorithms—SparCC, SPRING, and FastSpar—developed specifically for robust microbial network inference from compositional data.

Core Mathematical & Algorithmic Principles

The Compositional Data Problem

For a sample containing \( D \) taxa, the observed data is a vector of counts \( [x_1, x_2, \ldots, x_D] \) transformed to proportions or relative abundances \( [y_1, y_2, \ldots, y_D] \) where \( \sum_{i=1}^{D} y_i = 1 \). This sum constraint induces a negative bias in correlation estimates.
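The negative bias can be demonstrated with a small simulation. In this sketch three taxa have statistically independent absolute abundances (the uniform range and sample size are arbitrary assumptions), yet their proportions become clearly negatively correlated once each sample is closed to sum to 1.

```python
import math
import random

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def column(matrix, j):
    """Extract column j from a list-of-rows matrix."""
    return [row[j] for row in matrix]

random.seed(1)
n_samples, n_taxa = 2000, 3

# Independent absolute abundances: no true association between taxa
absolute = [[random.uniform(50, 150) for _ in range(n_taxa)]
            for _ in range(n_samples)]

# Closure: convert each sample to proportions that sum to 1
proportions = [[x / sum(row) for x in row] for row in absolute]

r_abs = pearson(column(absolute, 0), column(absolute, 1))
r_prop = pearson(column(proportions, 0), column(proportions, 1))

print(f"correlation of absolute abundances: {r_abs:.3f}")
print(f"correlation of proportions:         {r_prop:.3f}")
```

For D exchangeable components the induced correlation is about -1/(D - 1), so the bias is most severe in low-diversity communities and shrinks, without vanishing, as D grows.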

SparCC (Sparse Correlations for Compositional Data)

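SparCC, introduced by Friedman & Alm (2012), is foundational. It operates on the key insight that the log-ratio variance \( v_{ij} = \text{Var}(\log \frac{x_i}{x_j}) \) can be computed directly from compositional data, because the ratio of two proportions equals the ratio of the underlying abundances (\( y_i / y_j = x_i / x_j \)), and that it is related to the underlying (unobserved) absolute abundance covariances \( T_{ij} \). Assuming that most taxa pairs are uncorrelated (sparsity) is what makes these covariances recoverable.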

- Core Iterative Algorithm:

- Estimate Basis Variance: From the observed compositional data, compute all pairwise variances \( v_{ij} \) of \( \log(y_i / y_j) \).

- Solve for Component Variances: Approximate the variance of the log-transformed absolute abundance of each component \( i \) (\( \omega_i \)) by solving: \[ v_{ij} \approx \omega_i + \omega_j - 2T_{ij} \] assuming most \( T_{ij} = 0 \) (sparsity).

- Infer Basis Covariance: Compute the covariance matrix \( \Omega \) for the log-transformed absolute abundances: \[ \Omega_{ij} = T_{ij} = (\omega_i + \omega_j - v_{ij}) / 2 \]

- Calculate Correlation: Derive the correlation matrix: \[ \rho_{ij} = \frac{T_{ij}}{\sqrt{\omega_i \omega_j}} \]

- Iterative Refinement: Exclude pairs with correlations exceeding a threshold (e.g., |ρ| > 0.1) as "likely correlated," recompute variances using only the remaining pairs, and repeat.
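The first four steps can be sketched as a single, non-iterative pass. This is a simplified illustration and not the reference SparCC implementation: it omits the iterative exclusion step and the resampling over Dirichlet draws used in practice, and the function name and simulated data are assumptions. Summing \( v_{ij} \approx \omega_i + \omega_j - 2T_{ij} \) over \( j \neq i \) and assuming the covariances sum to roughly zero gives a linear system for the \( \omega_i \) that has a closed-form solution.

```python
import math
import random

def variance(values):
    """Unbiased sample variance."""
    m = sum(values) / len(values)
    return sum((v - m) ** 2 for v in values) / (len(values) - 1)

def sparcc_basic(props):
    """Single-pass SparCC-style estimate from a samples x taxa proportion matrix.

    Solves v_ij ~ w_i + w_j - 2*T_ij for the variances w under the sparsity
    assumption sum_{j != i} T_ij ~ 0, then returns the correlation matrix.
    """
    d = len(props[0])
    logs = [[math.log(y) for y in row] for row in props]
    # v[i][j] = Var(log(y_i / y_j)) across samples
    v = [[0.0] * d for _ in range(d)]
    for i in range(d):
        for j in range(i + 1, d):
            v[i][j] = v[j][i] = variance([row[i] - row[j] for row in logs])
    t = [sum(v[i]) for i in range(d)]            # t_i = sum_j v_ij
    total = sum(t) / (2 * (d - 1))               # estimate of sum_i w_i
    w = [(t_i - total) / (d - 2) for t_i in t]   # component variances w_i
    rho = [[1.0] * d for _ in range(d)]
    for i in range(d):
        for j in range(i + 1, d):
            t_ij = (w[i] + w[j] - v[i][j]) / 2   # basis covariance T_ij
            rho[i][j] = rho[j][i] = t_ij / math.sqrt(w[i] * w[j])
    return rho

# Simulated community: taxa 0 and 1 correlated (0.8), the rest independent
random.seed(2)
d, n = 10, 1000
props = []
for _ in range(n):
    z = [random.gauss(0, 1) for _ in range(d)]
    z[1] = 0.8 * z[0] + 0.6 * z[1]        # keeps unit variance, corr = 0.8
    x = [math.exp(val) for val in z]      # log-normal absolute abundances
    s = sum(x)
    props.append([val / s for val in x])  # closure to proportions

rho = sparcc_basic(props)
print(f"estimated corr(taxon 0, taxon 1) = {rho[0][1]:.2f}")
print(f"estimated corr(taxon 2, taxon 3) = {rho[2][3]:.2f}")
```

Even from proportions alone the correlated pair is recovered approximately, while uncorrelated pairs stay near zero. The estimate is slightly biased because the sparsity assumption is only approximately true, which is what the iterative refinement step is meant to reduce.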

SPRING (Semi-Parametric Rank-based approach for INference in Graphical model)

SPRING, developed by Yoon et al. (2019), extends the concept by incorporating a semi-parametric Gaussian copula model and enabling direct estimation of conditional independence (partial correlation).

- Core Algorithm:

- Rank-Based Quantile Matching: The relative abundance \( y_i^s \) for taxon \( i \) in sample \( s \) is transformed via a rank-based matching to a standard normal variable: \[ z_i^s = \Phi^{-1}\left( \frac{\text{rank}(y_i^s) - 0.5}{n} \right) \] where \( \Phi^{-1} \) is the inverse CDF of a standard normal distribution. This handles non-normality.

- Compositional Adjustment: The transformed data matrix \( Z \) is centered using a compositional-adjusted mean (e.g., geometric mean).

- Sparse Partial Correlation Estimation: A sparse inverse covariance matrix (precision matrix, \( \Theta \)) is estimated directly from \( Z \) using the Graphical Lasso or CLIME, which penalizes the L1-norm of \( \Theta \) to encourage sparsity (conditional independence). The partial correlation \( \rho_{ij|\text{rest}} \) between taxon \( i \) and \( j \) is: \[ \rho_{ij|\text{rest}} = -\frac{\Theta_{ij}}{\sqrt{\Theta_{ii}\Theta_{jj}}} \]

- Model Selection: Stability selection or information criteria (e.g., EBIC) are used to choose the optimal regularization parameter \( \lambda \).
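Two of the formulas above are simple enough to illustrate directly: the rank-based normal-score transform and the conversion of a precision matrix into partial correlations. The sketch below covers only these two formulas, not SPRING's estimation procedure; the helper names, the toy abundances, and the 3-taxon precision matrix are hypothetical.

```python
import math
from statistics import NormalDist

def normal_scores(values):
    """Map values to z = Phi^{-1}((rank - 0.5) / n); assumes no tied values."""
    n = len(values)
    order = sorted(range(n), key=lambda i: values[i])
    z = [0.0] * n
    for rank, idx in enumerate(order, start=1):
        z[idx] = NormalDist().inv_cdf((rank - 0.5) / n)
    return z

def partial_correlations(theta):
    """Convert a precision matrix into a matrix of partial correlations."""
    p = len(theta)
    return [[1.0 if i == j
             else -theta[i][j] / math.sqrt(theta[i][i] * theta[j][j])
             for j in range(p)] for i in range(p)]

# Relative abundances of one taxon across four samples
z = normal_scores([0.10, 0.40, 0.20, 0.30])
print([round(v, 3) for v in z])

# Hypothetical precision matrix: taxa 0 and 1 linked, taxon 2 isolated
theta = [[2.0, -1.0, 0.0],
         [-1.0, 2.0, 0.0],
         [0.0, 0.0, 1.0]]
pcor = partial_correlations(theta)
print(pcor[0][1], pcor[0][2])
```

A negative off-diagonal precision entry corresponds to a positive partial correlation, and a zero entry means the two taxa are conditionally independent given the rest.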

FastSpar

FastSpar, by Watts et al. (2019), is a rapid, parallelized implementation of the SparCC methodology with the addition of robust p-value estimation via bootstrap and/or permutation.

- Core Optimizations:

- Vectorized & Parallel Computation: All log-ratio variance calculations are vectorized across taxa pairs and distributed across CPU cores.

- Efficient Iteration: The exclusion of strong correlations is implemented efficiently.

- Inference via Resampling:

- Bootstrap: Data is resampled with replacement to create many bootstrapped datasets. FastSpar runs on each, generating a distribution of correlation values. The p-value is the proportion of bootstrap correlations more extreme than the observed.

- Permutation: The abundance vector for each taxon is shuffled independently across samples to break all associations. FastSpar runs on many permuted datasets to create a null distribution of correlations.
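The permutation idea can be illustrated independently of FastSpar itself. In this sketch the Pearson correlation stands in for the SparCC correlation (a deliberate simplification), one vector is shuffled to break the association, and the p-value is the add-one-corrected fraction of permuted correlations at least as extreme as the observed one. The function name, sample size, and number of permutations are assumptions.

```python
import math
import random

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def permutation_pvalue(x, y, n_perm=999, seed=0):
    """Two-sided permutation p-value for the correlation between x and y."""
    rng = random.Random(seed)
    observed = abs(pearson(x, y))
    y_perm = list(y)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(y_perm)  # break any x-y association
        if abs(pearson(x, y_perm)) >= observed:
            extreme += 1
    # Add-one correction keeps the p-value strictly positive
    return (extreme + 1) / (n_perm + 1)

rng = random.Random(42)
x = [rng.gauss(0, 1) for _ in range(50)]
y_assoc = [xi + rng.gauss(0, 0.5) for xi in x]  # associated with x
y_indep = [rng.gauss(0, 1) for _ in range(50)]  # independent of x

p_assoc = permutation_pvalue(x, y_assoc)
p_indep = permutation_pvalue(x, y_indep)
print(f"associated pair:  p = {p_assoc:.3f}")
print(f"independent pair: p = {p_indep:.3f}")
```

The smallest attainable p-value is 1/(n_perm + 1), so the number of permutations sets the resolution; with thousands of taxa pairs, the resulting p-values still need multiple-testing adjustment.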

Table 1: Core Algorithmic Comparison

| Feature | SparCC | SPRING | FastSpar |

|---|---|---|---|

| Primary Output | Sparse Correlation Network | Sparse Partial Correlation Network (Conditional Independence) | Sparse Correlation Network |

| Core Method | Log-ratio variance decomposition | Semi-parametric Gaussian Copula + Graphical Lasso | Optimized, parallel SparCC implementation |

| Inference | Heuristic iterative exclusion | Regularized likelihood optimization (L1-penalty) | Bootstrap / Permutation testing |

| Key Assumption | Community sparsity (many true correlations are zero) | Underlying latent variables follow a multivariate Gaussian after transformation | Same as SparCC |

| Computational Speed | Moderate | Slow (depends on regularization path search) | Very Fast (parallelized, C++ backend) |

| Uniqueness | Foundational method | Estimates direct interactions via partial correlation | Modern, fast standard with robust p-values |

Table 2: Typical Performance Metrics (Synthetic Dataset with 200 Taxa, 500 Samples)

| Metric | SparCC | SPRING | FastSpar |

|---|---|---|---|

| Precision (Positive Predictive Value) | 0.75 | 0.92 | 0.76 |

| Recall (Sensitivity) | 0.60 | 0.55 | 0.62 |

| F1-Score | 0.67 | 0.69 | 0.68 |

| Run Time (Minutes) | ~45 | ~120 | ~5 |

| Memory Usage (GB) | ~2.1 | ~4.5 | ~1.8 |

Experimental Protocols for Validation

Protocol 1: Benchmarking on Synthetic Data (Gold Standard)

- Data Generation: Use a realistic data simulator such as the SPIEC-EASI or compositions R package.

- Generate a ground-truth sparse precision matrix \( \Theta_{\text{true}} \) for \( D=100 \) taxa.

- Draw \( n=500 \) samples from a multivariate log-normal distribution: \( \log(X) \sim \mathcal{N}(0, \Theta_{\text{true}}^{-1}) \).

- Convert absolute abundances \( X \) to compositional data \( Y \) by applying a closure operation (dividing by total per sample).

- Network Inference: Apply SparCC, SPRING, and FastSpar to \( Y \). For SPRING, use EBIC for model selection. For FastSpar, run with 1000 bootstraps.

- Evaluation: Compare inferred edges (e.g., correlation > threshold, p < 0.01) to the non-zero off-diagonal entries of \( \Theta_{\text{true}} \). Calculate Precision, Recall, F1-score, and Area Under the Precision-Recall Curve (AUPR).
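The evaluation step reduces to comparing two sets of undirected edges. A minimal sketch, assuming edges are given as pairs of taxon labels (the labels and the `edge_metrics` helper are hypothetical):

```python
def edge_metrics(true_edges, inferred_edges):
    """Precision, recall and F1 for undirected edge sets."""
    # frozenset makes ("a", "b") and ("b", "a") the same edge
    truth = {frozenset(e) for e in true_edges}
    inferred = {frozenset(e) for e in inferred_edges}
    tp = len(truth & inferred)
    precision = tp / len(inferred) if inferred else 0.0
    recall = tp / len(truth) if truth else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if tp else 0.0
    return precision, recall, f1

true_edges = [("t1", "t2"), ("t2", "t3"), ("t3", "t4"), ("t4", "t5")]
inferred_edges = [("t2", "t1"), ("t2", "t3"), ("t1", "t5")]

precision, recall, f1 = edge_metrics(true_edges, inferred_edges)
print(f"precision = {precision:.3f}, recall = {recall:.3f}, F1 = {f1:.3f}")
```

F1 is the harmonic mean of precision and recall, so a method cannot score well by being only very conservative or only very permissive. AUPR additionally requires ranking edges by score and sweeping the threshold, which is not shown here.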

Protocol 2: Application to Real Microbiome Data with Perturbation

- Dataset: Obtain a publicly available time-series or case-control dataset (e.g., from antibiotic perturbation studies in mice or humans).

- Preprocessing: Perform standard bioinformatics: quality filtering, ASV/OTU clustering, chimera removal, and rarefaction or CSS normalization. Filter low-prevalence taxa (<10% of samples).

- Network Inference: Run FastSpar (for speed and p-values) and SPRING (for conditional independence) on the entire dataset or per group (e.g., pre- vs post-antibiotic).

- Analysis:

- Compare global network properties (modularity, degree distribution).

- Identify keystone taxa (high betweenness centrality) in each network.

- Validate edges using cross-validation stability or by checking against known metabolic cross-feeding pathways from literature/KEGG.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Packages

| Item (Tool/Package) | Function & Explanation |

|---|---|

| FastSpar C++/CLI Tool | The primary, high-performance software for running the FastSpar algorithm. Used for robust correlation inference with p-values via bootstrap. |

| SPRING R Package | The R implementation of the SPRING algorithm. Essential for inferring conditional dependence networks via the Gaussian copula graphical model. |

| QIIME 2 (q2-sparcc plugin) | A bioinformatics pipeline plugin that wraps SparCC/FastSpar for integrated microbiome analysis from sequences to networks. |

| NetCoMi R Package | A comprehensive network analysis and comparison toolbox. Can interface with various inference methods, including SparCC, for stability calculation and differential network analysis. |

| SPIEC-EASI R Package | Provides sparse inverse covariance network inference on CLR-transformed data, along with tools for synthetic data generation, which are crucial for method benchmarking and validation. |

| igraph (R/Python) / Cytoscape | Network visualization and topological analysis suites. Used to visualize inferred networks, calculate centrality metrics, and identify modules. |

| GMPR / CSS Normalization Scripts | While not part of the algorithms themselves, proper normalization (e.g., CSS in metagenomeSeq, GMPR) before analysis is often a critical pre-processing step for uneven sequencing depth. |

This guide is the third installment in a comprehensive thesis, A Guide to Co-occurrence Network Inference Algorithms for New Researchers. Having previously examined correlation-based and distance-based methods, we now delve into probabilistic graphical models. These approaches, notably SPIEC-EASI and gCoda, model microbial interactions as conditional dependence relationships within a mathematical framework, offering a more robust statistical foundation for inferring ecological networks from compositional count data.

Core Concepts & Theoretical Foundation

Microbial abundance data from high-throughput sequencing is compositional, meaning the total count per sample is arbitrary and carries no biological information. This introduces a spurious correlation (the closure effect), making standard correlation measures invalid. Graphical model approaches address this by modeling the underlying (unobserved) absolute abundances.

The core idea is to infer an undirected graphical model (or Markov Random Field) where:

- Nodes represent microbial taxa (e.g., OTUs, ASVs).

- Edges represent conditional dependencies between taxa.

- The absence of an edge implies the two taxa are conditionally independent given all other taxa in the network.

The inferred graph is characterized by a sparse inverse covariance matrix (precision matrix, Ω), where non-zero off-diagonal elements correspond to edges in the network.

Detailed Algorithmic Breakdown

SPIEC-EASI (Sparse InversE Covariance Estimation for Ecological Association Inference)

SPIEC-EASI combines a compositional data transformation with a sparse inverse covariance estimation method.

Workflow:

- Input: Compositional count matrix \( Y \) (samples x taxa).

- Centered Log-Ratio (CLR) Transformation: \( \text{clr}(Y) = \log(Y / g(Y)) \), where \( g(Y) \) is the geometric mean of the sample. This transforms data from the simplex to real space, but because each CLR-transformed sample sums to zero, the resulting covariance matrix is singular.

- Covariance Estimation: Uses a pseudoinverse approach or relies on the subsequent sparse estimation to handle singularity.

- Sparse Inverse Covariance Selection:

- EASI 1 (MB): Applies the Meinshausen-Bühlmann (MB) method, performing neighborhood selection for each node via L1-penalized (lasso) regression.

- EASI 2 (GL): Uses the Graphical Lasso to directly estimate the sparse precision matrix Ω by maximizing the penalized log-likelihood: \( \log \det \Omega - \text{tr}(S \Omega) - \lambda \|\Omega\|_1 \), where \( S \) is the sample covariance matrix of the CLR-transformed data.

- Output: A sparse precision matrix, interpreted as the microbial interaction network.
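The CLR step in the workflow above is straightforward to write out. This sketch transforms a single sample in plain Python; the pseudocount of 1.0 used to avoid log(0) is an illustrative assumption, not a recommendation, and the counts are made up.

```python
import math

def clr(counts, pseudocount=1.0):
    """Centered log-ratio transform of one sample (a list of counts).

    A pseudocount is added because log(0) is undefined.
    """
    logs = [math.log(c + pseudocount) for c in counts]
    log_gmean = sum(logs) / len(logs)  # log of the geometric mean
    return [v - log_gmean for v in logs]

sample = [120, 30, 0, 850]  # hypothetical counts for four taxa
clr_sample = clr(sample)

print([round(v, 3) for v in clr_sample])
print(f"sum of CLR values: {sum(clr_sample):.2e}")
```

The transformed values always sum to zero within a sample. This linear constraint is why the covariance matrix of CLR data is singular and why SPIEC-EASI relies on penalized estimation rather than direct inversion.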

Diagram Title: SPIEC-EASI Dual-Path Inference Workflow

gCoda (Compositional Graphical Lasso via Convex Optimization)

gCoda directly incorporates the compositional constraint into the graphical model optimization, avoiding explicit transformation.

Model: Assumes the underlying absolute abundances \( X \) follow a multivariate log-normal distribution. The observed proportions \( Y \) are \( Y_{ij} = X_{ij} / \sum_{k} X_{ik} \).

Optimization: gCoda maximizes a penalized log-likelihood based on the multinomial logit model, which inherently accounts for compositionality. The objective function is: \[ \ell(\Theta) = \frac{1}{N} \sum_{i=1}^{N} \left[ \sum_{j=1}^{p} y_{ij} \left( \theta_j - \log \sum_{k=1}^{p} \exp(\theta_k) \right) \right] - \lambda \|\Theta\|_1 \] Here, \( \Theta \) is related to the underlying precision structure, and the L1 penalty induces sparsity. It solves this convex problem using an efficient optimization algorithm.

Diagram Title: gCoda Direct Compositional Optimization

Comparative Analysis & Experimental Data

Table 1: Algorithmic Comparison of SPIEC-EASI and gCoda

| Feature | SPIEC-EASI | gCoda |

|---|---|---|

| Core Principle | CLR transform + Sparse Inverse Covariance | Direct penalized likelihood on compositional data |

| Handles Compositionality | Via CLR transformation | Inherently in likelihood model |

| Primary Method | Graphical Lasso (GL) or Meinshausen-Bühlmann (MB) | Convex optimization (Gradient descent) |

| Key Hyperparameter | Sparsity penalty λ (for GL/MB) | Sparsity penalty λ |

| Computational Complexity | Moderate to High (depends on method) | High (custom optimization required) |

| Primary Output | Sparse Precision Matrix (Ω) | Sparse Parameter Matrix (Θ) |

| Model Assumptions | Underlying abundances are transformed to real space. | Underlying abundances follow a log-normal distribution. |

Table 2: Performance Summary from Benchmarking Studies (Synthetic Data)

| Metric (Mean) | SPIEC-EASI (MB) | SPIEC-EASI (GL) | gCoda | Random Guess |

|---|---|---|---|---|

| Precision (PPV) | 0.68 | 0.71 | 0.75 | 0.05 |

| Recall (TPR) | 0.65 | 0.60 | 0.69 | 0.05 |

| F1-Score | 0.66 | 0.65 | 0.72 | 0.05 |

| AUPR | 0.67 | 0.66 | 0.74 | 0.05 |

Note: Example data synthesized from benchmark results in Kurtz et al. (2015) and Fang et al. (2017). Performance varies heavily with network topology, sample size, and sparsity.

Detailed Experimental Protocol for Validation

A standard protocol for benchmarking these algorithms involves using synthetic data with known ground-truth networks.

Title: Protocol for Validating Graphical Model Inference Algorithms

Objective: To evaluate the accuracy (Precision, Recall) of SPIEC-EASI and gCoda in recovering known microbial interaction networks from simulated compositional count data.

Materials:

- High-performance computing cluster (R/Python environment).

- Ground-truth network files (e.g., adjacency matrices).

- SpiecEasi R package and gCoda implementation (MATLAB/R).

Procedure:

Network Simulation:

- Generate 10 distinct random network topologies (e.g., Scale-Free, Erdős-Rényi) with p=50 nodes.

- For each topology, create a positive-definite precision matrix Ω by assigning random positive/negative values to edges and ensuring diagonal dominance.

- Invert Ω to obtain covariance matrix Σ.

Data Generation:

- For each Σ, draw n=100 multivariate normal samples and exponentiate them to create a strictly positive (log-normal) absolute abundance matrix \( X_{\text{abs}} \).

- Convert \( X_{\text{abs}} \) to a compositional matrix \( Y \) by dividing each row by its sum: \( Y_{ij} = X_{\text{abs}, ij} / \sum_k X_{\text{abs}, ik} \).

- Multiply \( Y \) by a random library size (e.g., 10^4 to 10^5) and round to generate integer count data.
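The data-generation steps can be sketched without external libraries. The example assumes that the multivariate normal draws are exponentiated so abundances are positive (consistent with the log-normal model stated for gCoda), samples correlated normals through a Cholesky factor, and uses a hypothetical 3-taxon covariance matrix in place of an inverted precision matrix.

```python
import math
import random

def cholesky(matrix):
    """Lower-triangular L with L * L^T = matrix (must be positive definite)."""
    n = len(matrix)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                low[i][j] = math.sqrt(matrix[i][i] - s)
            else:
                low[i][j] = (matrix[i][j] - s) / low[j][j]
    return low

def simulate_counts(sigma, n_samples, rng, depth_range=(10_000, 100_000)):
    """Simulate integer count rows from a log-normal abundance model."""
    p = len(sigma)
    low = cholesky(sigma)
    counts = []
    for _ in range(n_samples):
        z = [rng.gauss(0, 1) for _ in range(p)]
        # Correlated normal draw: L @ z has covariance sigma
        log_x = [sum(low[i][k] * z[k] for k in range(i + 1)) for i in range(p)]
        x_abs = [math.exp(v) for v in log_x]   # positive absolute abundances
        total = sum(x_abs)
        depth = rng.randint(*depth_range)      # random library size
        counts.append([round(depth * x / total) for x in x_abs])
    return counts

# Hypothetical covariance: taxa 0 and 1 positively associated, taxon 2 independent
sigma = [[1.0, 0.6, 0.0],
         [0.6, 1.0, 0.0],
         [0.0, 0.0, 1.0]]
counts = simulate_counts(sigma, n_samples=100, rng=random.Random(3))
print(counts[:3])
```

Rounding means each row sums to the library size only approximately. Real benchmarks often add a count-sampling step (for example multinomial draws) to mimic sequencing noise, which is omitted here.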

Network Inference:

- Apply SPIEC-EASI (GL & MB) to the count matrix \( Y \). Use StARS or another stability-based method to select the optimal sparsity parameter λ. Run with 100 subsamples.

- Apply gCoda to the same \( Y \). Use its default or cross-validation method for λ selection.

- Binarize output precision matrices (non-zero = edge).

Evaluation:

- Compare each inferred adjacency matrix to the ground-truth matrix.

- Calculate Precision, Recall, F1-score, and AUPR for each run.

- Aggregate results across the 10 different network topologies.

Analysis:

- Perform paired statistical tests (e.g., Wilcoxon signed-rank) on F1-scores across methods.

- Visualize results using box plots and precision-recall curves.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Graphical Model-Based Network Inference

| Item (Software/Package) | Primary Function | Application in Protocol |

|---|---|---|

| SpiecEasi R Package | Implements the full SPIEC-EASI pipeline (CLR + MB/GL). | Core tool for SPIEC-EASI inference and stability selection. |

| gCoda (MATLAB/R) | Implements the gCoda algorithm for direct compositional inference. | Core tool for gCoda network inference. |

| huge R Package | Provides high-dimensional undirected graph estimation (MB, GL). | Used internally by SpiecEasi; can be used for custom pipelines. |

| igraph / network | Network analysis and visualization libraries. | For analyzing and visualizing the structure of inferred networks. |

| POT/ROCR | Computing Precision-Recall curves and AUPR. | Critical for quantitative evaluation against ground truth. |

| compositions R Package | Tools for compositional data analysis (CLR transform). | For preliminary data transformation if building custom workflows. |

| Synthetic Data Generator (e.g., seqtime) | Simulates microbial count data from known network models. | For creating rigorous benchmark datasets (Step 1 & 2 of protocol). |

| High-Performance Computing (HPC) Cluster | Parallel processing environment. | Essential for running stability selections (StARS) and cross-validation, which are computationally intensive. |

Within the broader research on "Guide to co-occurrence network inference algorithms for new researchers," a critical evolution is the application of machine learning (ML) and ensemble methods. Traditional correlation-based and statistical inference methods often possess limitations in handling compositional, high-dimensional, and noisy microbial or molecular data. This guide delves into two advanced approaches that represent this paradigm shift: CoNet (an ensemble method) and MENA (Molecular Ecological Network Analysis). These algorithms leverage ML principles to improve the robustness, accuracy, and biological interpretability of inferred co-occurrence networks, a crucial step for researchers and drug development professionals in identifying key interacting entities for therapeutic targeting.

Core Algorithmic Frameworks

CoNet: An Ensemble Inference Engine

CoNet integrates multiple dissimilarity and similarity measures, moving beyond single-metric inference. It applies an ensemble of methods—including Pearson and Spearman correlation, Bray-Curtis dissimilarity, and Kullback-Leibler divergence—and combines results using a consensus-based approach to generate more reliable edges.

Key Workflow:

- Base Measure Calculation: Compute pairwise scores for all node pairs using multiple measures.

- Permutation & Bootstrap: For each measure, generate null distributions via data permutation (row shuffling) and bootstrap distributions via column resampling.

- P-value & Confidence Interval: Calculate p-values from the permutation null and confidence intervals from the bootstrap distribution for each edge score.

- Ensemble Thresholding: Apply multiple pre-selected thresholds (e.g., score, p-value, bootstrap CI) across all measures.

- Consensus Edge Selection: An edge is retained only if it passes the threshold criteria for a majority (user-definable) of the base measures.
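The final consensus step amounts to a vote across measures. The sketch below is a schematic of that idea only, not CoNet's actual code or API: it assumes each base measure has already been reduced to the set of edges that passed its score, p-value, and bootstrap filters, and all names are hypothetical.

```python
from collections import Counter

def consensus_edges(passing_by_measure, min_fraction=0.5):
    """Keep edges that pass in more than min_fraction of the base measures.

    passing_by_measure maps a measure name to the set of edges that already
    passed that measure's score, p-value and bootstrap filters.
    """
    votes = Counter()
    for edges in passing_by_measure.values():
        # frozenset treats (a, b) and (b, a) as the same undirected edge
        votes.update({frozenset(e) for e in edges})
    n_measures = len(passing_by_measure)
    return {edge for edge, count in votes.items()
            if count / n_measures > min_fraction}

# Hypothetical per-measure results for four base measures
passing = {
    "pearson": {("otu1", "otu2"), ("otu2", "otu3")},
    "spearman": {("otu1", "otu2"), ("otu3", "otu4")},
    "bray_curtis": {("otu2", "otu1"), ("otu2", "otu3")},
    "kullback_leibler": {("otu1", "otu2")},
}
consensus = consensus_edges(passing)
print(sorted(tuple(sorted(edge)) for edge in consensus))
```

Here only the otu1-otu2 edge survives: it is supported by all four measures, whereas otu2-otu3 is supported by exactly half and therefore fails a strict majority rule.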

MENA: Network Construction & Topological Analysis

MENA is a comprehensive pipeline (hosted on the Molecular Ecological Network Analysis Pipeline website) specifically designed for high-throughput sequencing data. It employs a Random Matrix Theory (RMT)-based approach to automatically identify a correlation threshold for network construction, avoiding arbitrary cut-offs.

Key Workflow:

- Correlation Matrix Construction: Calculate all pairwise Pearson or Spearman correlations.

- RMT Threshold Detection: Analyze the eigenvalue distribution of the correlation matrix. The optimal similarity threshold is identified where the eigenvalue distribution of the observed data deviates from the distribution derived from a random matrix.

- Network Generation: Build an adjacency matrix using the RMT-derived threshold.

- Topological Analysis: Calculate a suite of network properties (modularity, centrality, etc.) and perform module-based analyses.

- Robustness Assessment: Evaluate network stability using random node removal.

Table 1: Quantitative Comparison of CoNet & MENA Features

| Feature | CoNet | MENA |

|---|---|---|

| Core Approach | Ensemble of multiple measures | Random Matrix Theory (RMT) |

| Primary Input | Abundance matrix (OTU, gene, metabolite) | Abundance matrix (typically OTU/ASV) |

| Threshold Strategy | Consensus across measures & statistical filtering | Data-driven, automatic via RMT |

| Key Output | Consensus network with edge weights | Network with topological metrics & modules |

| Null Model | Row-wise permutation & bootstrap | Randomized matrix based on RMT |

| Typical Domain | General ecological/molecular associations | Microbial ecology (16S rRNA, metagenomics) |

| Handles Compositionality | Yes, via included measures (e.g., Bray-Curtis) | Indirectly via correlation choice & log-transform |

Table 2: Typical Topological Metrics Reported in MENA Analysis

| Metric | Description | Biological Insight |

|---|---|---|

| Average Degree | Average number of connections per node | Overall connectivity of the community |

| Average Path Length | Average shortest distance between nodes | Efficiency with which information or perturbations propagate through the network |

| Modularity | Strength of division into modules (sub-communities) | Functional or ecological niches |

| Avg. Clustering Coefficient | Measure of local interconnectedness | Resilience of local groups |

| Centralization | Degree to which network is centered on key nodes | Top-down control or keystone species |
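Two of the metrics in the table, average degree and average clustering coefficient, can be computed directly from an edge list. This is a minimal sketch on a hypothetical four-node graph; it only considers nodes that appear in at least one edge, and dedicated libraries such as igraph are preferable for real networks.

```python
def network_metrics(edges):
    """Average degree and average clustering coefficient of an undirected graph."""
    neighbors = {}
    for u, v in edges:
        neighbors.setdefault(u, set()).add(v)
        neighbors.setdefault(v, set()).add(u)
    n = len(neighbors)
    avg_degree = sum(len(nb) for nb in neighbors.values()) / n
    coeffs = []
    for node, nb in neighbors.items():
        k = len(nb)
        if k < 2:
            coeffs.append(0.0)  # clustering is taken as 0 for degree < 2
            continue
        nb_list = sorted(nb)
        # Count links among the neighbors of this node
        links = sum(1 for i, a in enumerate(nb_list)
                    for b in nb_list[i + 1:] if b in neighbors[a])
        coeffs.append(2 * links / (k * (k - 1)))
    return avg_degree, sum(coeffs) / n

# Hypothetical network: a triangle (a, b, c) with a pendant node d
edges = [("a", "b"), ("b", "c"), ("a", "c"), ("c", "d")]
avg_degree, avg_clustering = network_metrics(edges)
print(f"average degree = {avg_degree:.2f}")
print(f"average clustering coefficient = {avg_clustering:.3f}")
```

Nodes a and b sit in a closed triangle (coefficient 1), node c has only one of three possible links among its neighbors (1/3), and the pendant node d contributes 0.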

Detailed Experimental Protocols

Protocol 1: Implementing CoNet for Microbial Association Network Inference

Materials: Abundance table (e.g., OTU/ASV counts), metadata, R environment, CoNet plugin for Cytoscape or standalone R scripts.

Methodology:

- Preprocessing: Filter low-abundance features. Apply a centered log-ratio (CLR) or other appropriate transformation to address compositionality.

- Parameter Configuration: In the CoNet interface (e.g., in Cytoscape), select at least four base measures (e.g., Pearson, Spearman, Bray-Curtis, Kullback-Leibler). Set the number of permutations (e.g., 1000) for null model generation and bootstrap iterations (e.g., 1000).

- Threshold Setting: Define initial edge selection thresholds: score threshold (e.g., >0.5 or <-0.5), p-value threshold (e.g., <0.05), and bootstrap confidence threshold (e.g., >0.0). Set the consensus rule (e.g., an edge must pass thresholds for >50% of measures).

- Execution: Run the CoNet algorithm. The tool will generate the consensus network and provide edge tables with combined scores, p-values, and bootstrap support.

- Post-processing: Import the edge and node lists into network analysis software (e.g., Gephi, Cytoscape) for visualization and further topological analysis. Validate key edges against known biological knowledge or databases.
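The ensemble logic of this protocol can be sketched in a few lines of NumPy. This is a simplified illustration, not the CoNet implementation: it uses only two measures (Pearson and Spearman on CLR-transformed data), a plain column-shuffling permutation test, and no bootstrap step. All function names, defaults, and the synthetic data are assumptions made for the example.

```python
import numpy as np

def clr(counts, pseudocount=1.0):
    """Centered log-ratio transform; samples in rows, features in columns."""
    logx = np.log(counts + pseudocount)
    return logx - logx.mean(axis=1, keepdims=True)

def pearson(x):
    return np.corrcoef(x, rowvar=False)

def spearman(x):
    # Rank each feature across samples (ties broken arbitrarily), then correlate ranks.
    ranks = np.argsort(np.argsort(x, axis=0), axis=0).astype(float)
    return np.corrcoef(ranks, rowvar=False)

def permutation_pvalues(x, measure, n_perm, rng):
    """P-value per pair: how often a shuffled score is at least as extreme as observed."""
    observed = np.abs(measure(x))
    exceed = np.zeros_like(observed)
    for _ in range(n_perm):
        shuffled = np.column_stack([rng.permutation(col) for col in x.T])
        exceed += np.abs(measure(shuffled)) >= observed
    return (exceed + 1) / (n_perm + 1)

def consensus_edges(counts, score_cut=0.5, p_cut=0.05, n_perm=200, seed=0):
    """Keep an edge only if more than half of the measures pass both thresholds."""
    rng = np.random.default_rng(seed)
    x = clr(counts)
    measures = (pearson, spearman)
    votes = np.zeros((x.shape[1], x.shape[1]))
    for measure in measures:
        score = measure(x)
        pvals = permutation_pvalues(x, measure, n_perm, rng)
        votes += (np.abs(score) > score_cut) & (pvals < p_cut)
    keep = votes / len(measures) > 0.5
    i, j = np.where(np.triu(keep, k=1))               # upper triangle: each pair once
    return list(zip(i.tolist(), j.tolist()))

# Synthetic demo: feature 1 tracks feature 0; the remaining six are independent noise.
rng = np.random.default_rng(1)
counts = rng.poisson(50, size=(60, 8)).astype(float)
driver = rng.integers(30, 120, size=60)
counts[:, 0] = driver
counts[:, 1] = driver + rng.poisson(5, size=60)
edges = consensus_edges(counts)
print(edges)
```

With only two measures, the ">50% of measures" rule means both must agree; adding further measures relaxes this to a majority vote.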

Protocol 2: Constructing a Network using the MENA Pipeline

Materials: Normalized OTU/ASV abundance table, environmental factor data (optional), access to the MENA website or local software.

Methodology:

- Data Upload & Formatting: Prepare the abundance matrix as a tab-delimited file (samples in columns, OTUs in rows). Log-transform the data (usually Ln(x+1)) as recommended.

- Correlation Selection: Choose the correlation method (Pearson or Spearman). For compositional data, this step is critical and may require prior transformation.

- Automatic Threshold Detection: Initiate the RMT-based analysis. The pipeline will analyze the eigenvalue spacing distribution to determine the optimal similarity threshold (St) that separates signal from noise.

- Network Construction: The pipeline creates the adjacency matrix using the calculated St. Only correlations whose absolute value exceeds St are retained as edges.

- Topological & Modular Analysis: Run the built-in algorithms to calculate global network properties (Table 2) and identify modules (clusters of densely connected OTUs).

- Zi-Pi Analysis: For each node, calculate within-module connectivity (Zi) and among-module connectivity (Pi) to categorize nodes as module hubs, connectors, network hubs, or peripherals. This identifies potential keystone taxa.

- Robustness Test: Use the "random removal" function to assess network stability, typically plotting the proportion of remaining nodes versus connectivity.
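The Zi-Pi step can be made concrete with a short sketch. It assumes the standard definitions: Zi is the z-score of a node's within-module degree, and Pi is one minus the sum of squared fractions of its links going to each module. The cut-offs Zi = 2.5 and Pi = 0.62 are commonly used defaults and are treated here as assumptions; the function names and toy network are hypothetical.

```python
import numpy as np

def zi_pi(adj, modules):
    """Within-module connectivity (Zi) and among-module connectivity (Pi) per node."""
    a = (np.asarray(adj) != 0).astype(float)          # binarise; works on a copy
    np.fill_diagonal(a, 0.0)
    modules = np.asarray(modules)
    labels = np.unique(modules)
    # k_im[i, m] = number of links from node i into module m
    k_im = np.column_stack([a[:, modules == m].sum(axis=1) for m in labels])
    k = k_im.sum(axis=1)
    own = np.searchsorted(labels, modules)            # column index of each node's own module
    k_own = k_im[np.arange(modules.size), own]
    zi = np.zeros(modules.size)
    for m in labels:
        members = modules == m
        sd = k_own[members].std()
        if sd > 0:                                    # leave Zi at 0 when all members tie
            zi[members] = (k_own[members] - k_own[members].mean()) / sd
    safe_k = np.where(k > 0, k, 1.0)                  # avoid division by zero for isolates
    pi = np.where(k > 0, 1.0 - (k_im ** 2).sum(axis=1) / safe_k ** 2, 0.0)
    return zi, pi

def classify_roles(zi, pi, z_cut=2.5, p_cut=0.62):
    roles = np.full(zi.shape, "peripheral", dtype=object)
    roles[(zi >= z_cut) & (pi < p_cut)] = "module hub"
    roles[(zi < z_cut) & (pi >= p_cut)] = "connector"
    roles[(zi >= z_cut) & (pi >= p_cut)] = "network hub"
    return roles

# Toy network: two triangles (modules 0 and 1) joined by a single bridge edge 2-3.
adj = np.zeros((6, 6))
for u, v in [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]:
    adj[u, v] = adj[v, u] = 1
zi, pi = zi_pi(adj, [0, 0, 0, 1, 1, 1])
roles = classify_roles(zi, pi)
print(np.round(pi, 3), roles)
```

In this toy network the bridge nodes have the highest Pi but still fall below the connector cut-off, which shows why the thresholds are best read as conventions rather than hard biological boundaries.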

Visualizing Workflows & Relationships

Title: CoNet Ensemble Inference Pipeline

Title: MENA Network Construction and Analysis

Title: Algorithm Taxonomy in Thesis on Network Inference

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for CoNet & MENA-based Research

| Item / Resource | Function / Purpose | Example / Note |

|---|---|---|

| High-Throughput Sequencer | Generate raw abundance data (OTUs, genes). | Illumina MiSeq/HiSeq for 16S rRNA; NovaSeq for metagenomics. |

| Bioinformatics Pipeline | Process raw sequences into an abundance matrix. | QIIME 2, MOTHUR, DADA2 for 16S; HUMAnN3 for metagenomics. |

| Normalization & Transform Tools | Mitigate compositionality and variance. | R packages: compositions (CLR), DESeq2 (median of ratios). |

| CoNet Implementation | Execute the ensemble network inference. | Cytoscape plugin (CoNet app) or custom R scripts using co-occurrence packages. |

| MENA Web Platform | Perform RMT-based network construction and analysis. | Publicly accessible at http://ieg4.rccc.ou.edu/mena/. |

| Network Visualization Software | Visualize and analyze graph structure. | Gephi, Cytoscape, R (igraph, network packages). |

| Statistical Environment | Data manipulation, preprocessing, and custom analysis. | R (preferred) or Python with pandas, numpy, scikit-learn. |

| Reference Databases | Validate inferred biological interactions. | KEGG, MetaCyc (pathways); STRING, SPIKE (protein interactions). |

This section provides a foundational workflow for choosing a co-occurrence network inference algorithm. The choice is not arbitrary; it is dictated by your data type, its underlying distribution, and your research question. What follows is a structured, hands-on approach to this critical decision.

The Core Decision Framework

The initial choice of a network inference algorithm is governed by three pillars: the Data Type, the underlying Data Distribution, and the precise Research Question. The following diagram illustrates this foundational logic.

Title: Algorithm Choice Logic: Data Type to Research Question.

Quantitative Algorithm Comparison Table

The following table summarizes key performance metrics and characteristics of prevalent algorithm families, based on recent benchmarking studies.

Table 1: Co-occurrence Network Algorithm Benchmarking Summary

| Algorithm Family | Example Algorithm | Optimal Data Type | Typical Computational Cost | Key Strength | Key Limitation |

|---|---|---|---|---|---|

| Correlation | SparCC, Spearman | Compositional, Continuous | Low | Intuitive, fast for initial hypothesis generation. | Spearman on relative abundances is prone to spurious correlations from compositionality; SparCC mitigates this but assumes a sparse underlying network. |

| Regularized Regression | gLasso, SPIEC-EASI (MB) | Continuous, Compositional | Medium | Accounts for conditional dependencies; good specificity. | Requires careful hyperparameter tuning (λ). |

| Conditional Dependence | SPIEC-EASI (GL) | Compositional | High | Directly models compositional data; robust. | Computationally intensive; slower on very large datasets. |

| Bayesian/Probabilistic | MPLN, BAnOCC | Compositional, Count-based | Very High | Quantifies uncertainty; robust to noise and compositionality. | Extremely high computational demand; complex interpretation. |

| Information Theory | MIDAS, MI (with BC/CR correction) | Compositional (microbiome) | Medium | Non-linear; designed for microbial count data. | High variance with low sample size; requires careful discretization. |

| Machine Learning | GENIE3, FLORAL | Continuous (e.g., gene expression) | High | Infers directed networks; captures non-linearities. | High risk of overfitting; requires very large sample sizes. |

Detailed Experimental Protocols for Key Methods

Protocol 1: SPIEC-EASI Workflow for Microbiome Data (Compositional)

Objective: Infer a microbial association network from 16S rRNA OTU count data while addressing compositionality and sparsity.

- Input Data Preparation: Start with an OTU table (samples x OTUs). Handle zero counts first, using multiplicative replacement or a pseudo-count, because the logarithm of zero is undefined. Then apply a centered log-ratio (CLR) transformation to each sample.

- Algorithm Selection: Choose between:

- SPIEC-EASI (MB): Neighborhood selection (Meinshausen-Bühlmann). More scalable.

- SPIEC-EASI (GL): Graphical Lasso. More stable for dense networks.

- Stability Selection: Subsample data (e.g., 80% of samples) 100+ times. Run the chosen algorithm on each subset with a range of regularization parameters (λ).

- Network Reconstruction: Calculate the empirical probability for each edge (presence across subsamples). Select edges with probability > a threshold (e.g., 0.8). The final network is the consensus graph.

- Visualization & Analysis: Import the adjacency matrix into igraph (R) or NetworkX (Python) for topology calculation (degree, betweenness centrality) and visualization.
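The workflow above can be sketched in NumPy to show how the pieces fit together. This is not the SpiecEasi package: it assumes a hand-written coordinate-descent lasso for the neighborhood-selection (MB) step, a single fixed regularization value instead of a scanned λ path, and synthetic counts. All names and defaults are illustrative.

```python
import numpy as np

def clr(counts, pseudocount=1.0):
    """Pseudo-count for zeros, then centered log-ratio per sample (rows)."""
    logx = np.log(counts + pseudocount)
    return logx - logx.mean(axis=1, keepdims=True)

def lasso_cd(X, y, lam, n_iter=50):
    """Coordinate descent for min (1/2n)||y - Xb||^2 + lam * ||b||_1."""
    n, p = X.shape
    beta = np.zeros(p)
    col_sq = (X ** 2).sum(axis=0) / n
    resid = y.copy()
    for _ in range(n_iter):
        for j in range(p):
            if col_sq[j] == 0:
                continue
            resid += X[:, j] * beta[j]                # remove feature j's current contribution
            rho = X[:, j] @ resid / n
            beta[j] = np.sign(rho) * max(abs(rho) - lam, 0.0) / col_sq[j]
            resid -= X[:, j] * beta[j]
    return beta

def mb_adjacency(Z, lam):
    """Neighborhood selection: regress each feature on all others, keep nonzero coefficients."""
    p = Z.shape[1]
    nonzero = np.zeros((p, p), dtype=bool)
    for j in range(p):
        others = np.delete(np.arange(p), j)
        nonzero[j, others] = lasso_cd(Z[:, others], Z[:, j], lam) != 0
    return nonzero | nonzero.T                        # "OR" rule to symmetrise

def edge_stability(counts, lam=0.2, n_subsamples=100, frac=0.8, seed=0):
    """Fraction of subsamples in which each edge is selected."""
    rng = np.random.default_rng(seed)
    X = clr(counts)
    n, p = X.shape
    freq = np.zeros((p, p))
    for _ in range(n_subsamples):
        sub = X[rng.choice(n, size=int(frac * n), replace=False)]
        sd = sub.std(axis=0)
        Z = (sub - sub.mean(axis=0)) / np.where(sd > 0, sd, 1.0)
        freq += mb_adjacency(Z, lam)
    return freq / n_subsamples

# Synthetic demo: OTU 1 tracks OTU 0; the other six OTUs are independent noise.
rng = np.random.default_rng(1)
counts = rng.poisson(50, size=(60, 8)).astype(float)
driver = rng.integers(30, 120, size=60)
counts[:, 0] = driver
counts[:, 1] = driver + rng.poisson(5, size=60)
freq = edge_stability(counts, n_subsamples=20)
consensus = freq > 0.8                                # edges kept in the final network
print(np.argwhere(np.triu(consensus, k=1)))
```

In practice the regularization strength would be chosen over a range of λ values rather than fixed, and the SpiecEasi package handles that selection for you.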

Protocol 2: GENIE3 for Directed Transcriptional Network Inference

Objective: Infer a directed regulatory network from a gene expression matrix (continuous).

- Data Preprocessing: Input is a matrix of normalized expression values (e.g., TPM, FPKM) across conditions/time points. Log-transform if necessary.

- Tree-Based Ensembles: For each gene i (target):

- Set its expression profile as the response variable Y.

- Set the expression profiles of all other genes as the predictor matrix X.

- Train a tree-based ensemble (e.g., Random Forest, Gradient Boosting) to predict Y from X.

- Feature Importance Scoring: Extract a feature importance score (e.g., Mean Decrease in Impurity) for every predictor gene j. This score indicates the putative regulatory influence of j on i.

- Aggregate & Threshold: Compile all importance scores into a weighted, directed adjacency matrix. Apply a threshold (e.g., top 100,000 edges or based on permutation testing) to obtain the final directed network.

- Validation: Compare inferred regulatory edges against known databases (e.g., RegulonDB for E. coli) to calculate precision/recall.
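The per-gene regression loop can be sketched with scikit-learn's `RandomForestRegressor`. This is an illustration of the idea rather than the reference GENIE3 code: the function names, the small number of trees, and the synthetic expression matrix are assumptions, and the impurity-based `feature_importances_` attribute stands in for the importance score.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

def genie3_scores(expr, n_trees=100, seed=0):
    """expr: samples x genes. W[j, i] scores gene j as a putative regulator of gene i."""
    n_genes = expr.shape[1]
    W = np.zeros((n_genes, n_genes))
    for i in range(n_genes):
        predictors = np.delete(np.arange(n_genes), i)     # every gene except the target
        forest = RandomForestRegressor(
            n_estimators=n_trees, max_features="sqrt", random_state=seed
        )
        forest.fit(expr[:, predictors], expr[:, i])
        # Mean decrease in impurity, normalised by scikit-learn to sum to 1 per target.
        W[predictors, i] = forest.feature_importances_
    return W

def top_edges(W, k):
    """Return the k highest-scoring directed edges as (regulator, target, score)."""
    flat = np.argsort(W, axis=None)[::-1][:k]
    regulators, targets = np.unravel_index(flat, W.shape)
    return [(int(j), int(i), float(W[j, i])) for j, i in zip(regulators, targets)]

# Synthetic demo: gene 1 is driven by gene 0; genes 2-4 are independent noise.
rng = np.random.default_rng(0)
expr = rng.normal(size=(80, 5))
expr[:, 1] = 2.0 * expr[:, 0] + 0.1 * rng.normal(size=80)
W = genie3_scores(expr, n_trees=50)
print(top_edges(W, 3))
```

Note that in this toy example both directions (0 to 1 and 1 to 0) score highly, because expression alone cannot distinguish them. In practice the predictor set is often restricted to known transcription factors to make the directions meaningful.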

Title: GENIE3 Directed Network Inference Workflow.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools & Packages

| Tool/Resource | Function | Primary Use Case |

|---|---|---|

| SPIEC-EASI (R) | Statistical inference for compositionally-correct networks. | Primary analysis for 16S rRNA or metagenomic count data. |

| SpiecEasi (Python Port) | Python implementation of SPIEC-EASI. | Integrating network inference into Python-based bioinformatics pipelines. |

| NetCoMi (R) | Comprehensive network construction, comparison, and analysis. | Comparing microbial networks across conditions (e.g., healthy vs. disease). |

| FlashWeave (Julia) | Fast, adaptive network inference for heterogeneous data. | Integrating microbial and continuous host data (multi-omics). |

| MEGENA (R) | Multiscale Embedded Gene Co-expression Network Analysis. | Identifying multi-scale modules in large gene expression networks. |

| Graphia (Desktop App) | High-performance network visualization and analysis. | Visualizing and exploring large, complex networks post-inference. |

| Cytoscape | Open-source platform for network visualization and analysis. | Manual curation, advanced visualization, and plugin-based analysis. |

| igraph (R/Python) | Core library for network analysis and graph theory metrics. | Calculating centrality, modularity, and custom network statistics. |

This guide provides a technical overview of essential software tools for inferring and analyzing co-occurrence networks in microbial ecology and related fields, framed within a broader thesis on methodologies for new researchers.

Co-occurrence network inference is a critical step in understanding complex microbial community interactions. The process typically involves data preprocessing, statistical inference of associations, network construction, and visualization/analysis. Different software ecosystems offer specialized tools for each stage.

Core Software Ecosystems

R Environment

R is a statistical programming language with extensive packages for microbial bioinformatics.

- SpiecEasi (Sparse Inverse Covariance Estimation for Ecological Association Inference): A premier package for inferring microbial association networks using sparse inverse covariance selection methods. It is designed to handle compositional, sparse, and high-dimensional microbiome data.

- microbiome R Package: A comprehensive toolkit for microbiome data analysis, often used upstream of network inference for normalization, transformation, and community profiling.

Python Environment

Python offers flexible, general-purpose programming with strong data science libraries.

- NetworkX: A fundamental Python library for the creation, manipulation, and study of the structure, dynamics, and functions of complex networks. It is commonly used to analyze networks inferred from tools like SpiecEasi.

User-Friendly Graphical Platforms

These platforms provide intuitive graphical interfaces for network visualization and exploration.

- Cytoscape: An open-source platform for visualizing complex networks and integrating them with any type of attribute data. It supports extensive plugins for network analysis.

- Gephi: An interactive visualization and exploration platform for all kinds of networks and complex systems, dynamic and hierarchical graphs.

Quantitative Tool Comparison

Table 1: Core Software Tool Characteristics

| Tool / Platform | Primary Language | Key Strength | Typical Input | Typical Output | Best For |

|---|---|---|---|---|---|

| SpiecEasi (R) | R | Robust statistical inference for compositional data | OTU/ASV table (counts) | Association matrix, edge list | Network Inference from microbiome data |

| microbiome (R) | R | Data preprocessing & community analysis | Raw OTU/ASV table, metadata | Normalized/transformed tables, alpha/beta diversity | Data Preparation & preliminary analysis |