High-Performance Computing for Microbiome Analysis: Optimizing Workflows for Large-Scale Biomedical Datasets

Abstract

This article provides a comprehensive guide for researchers and drug development professionals facing computational bottlenecks in microbiome analysis. We explore the foundational challenges posed by large-scale amplicon and metagenomic sequencing data, detailing scalable methodologies from preprocessing to statistical inference. We present practical troubleshooting strategies for common performance issues, evaluate current software and hardware solutions, and benchmark computational efficiency. The guide synthesizes best practices for accelerating discovery pipelines while maintaining analytical rigor, directly impacting biomarker identification and therapeutic development.

The Big Data Challenge: Understanding the Computational Bottlenecks in Modern Microbiome Studies

Troubleshooting Guides & FAQs

Q1: Our analysis server runs out of memory during 16S rRNA gene sequence clustering (e.g., with VSEARCH). What are the primary strategies to resolve this?

A: Memory use and runtime during clustering grow steeply with the number of unique sequences, because every additional unique sequence adds candidate pairwise comparisons. Implement the following:

- Pre-filtering: Dereplicate first, then aggressively remove low-abundance unique sequences (e.g., singletons and doubletons, using VSEARCH's --minuniquesize option). This drastically reduces the number of unique sequences for pairwise comparison.

- Subsampling (Rarefaction): For alpha/beta diversity analysis, subsample all samples to an even depth before clustering. Use the minimum reasonable depth across your cohort.

- Cluster in Batches: If possible, split your sample cohort by metadata (e.g., treatment group) and cluster each batch separately before merging OTU tables, though this requires post-hoc curation.

- Hardware/Cloud Scaling: For large cohorts (>1000 samples), use high-memory compute instances (e.g., 64GB+ RAM). Consider cloud-native clustering tools optimized for horizontal scaling.

Q2: When performing differential abundance testing on a cohort of 500+ samples, the statistical model (e.g., DESeq2, MaAsLin2) fails to converge or takes days to run. How can we improve computational efficiency?

A: This is a common issue with high-dimensional, sparse microbiome data.

- Feature Aggregation: Prior to testing, aggregate low-variance features at a higher taxonomic rank (e.g., Genus instead of ASV). This reduces the number of statistical tests and model parameters.

- Optimized Data Structures: Ensure your count table is stored as a sparse matrix object (e.g., in R, with the `Matrix` package) to minimize memory usage.

- Parallelization: Utilize the parallel computing options built into these tools. For DESeq2, use the `BiocParallel` package.

- Model Simplification: Reduce the complexity of your model formula. Remove non-essential covariates that are not part of your primary hypothesis to speed up fitting.

Q3: Storage costs for raw FASTQ files from a longitudinal study with deep metagenomic sequencing are becoming prohibitive. What is the recommended data lifecycle management strategy?

A: Adopt a tiered storage and deletion policy aligned with reproducibility needs.

- Immediate Post-Processing: After QC, generate intermediate files (trimmed reads, host-filtered reads) and store alongside raw FASTQ.

- Post-Abundance Table Generation: Once final, analysis-ready feature tables (genus/LGT/species, pathway) and quality metrics are confirmed, compress and archive raw FASTQ files to a "cold" storage tier (e.g., AWS Glacier, Google Coldline).

- Retention Policy: Define a lab/department policy. Example: Retain raw FASTQ on primary storage for 6 months post-publication, then move to archive. Delete intermediate BAM and SAM files after 1 month. Permanently retain final processed abundance matrices and sample metadata.

Q4: Our metagenomic assembly pipeline for hundreds of deep-sequenced samples fails due to excessive disk I/O and runtime. What workflow adjustments are critical?

A: Metagenomic assembly is computationally intensive. Consider co-assembly or batch strategies.

- Co-assembly by Group: For case-control studies, perform co-assembly separately for major metadata groups (e.g., all controls together, all cases together). This reduces per-sample overhead while still capturing group-specific variation.

- Batch Assembly: Divide the cohort into random, smaller batches (e.g., 50-100 samples each), assemble each batch, and then merge and dereplicate the batch-level contigs into a final set of contigs.

- Resource Allocation: Use compute-optimized instances with fast local SSDs for assembly, then transfer results to network storage.

Experimental Protocols

Protocol 1: Efficient Sparse Matrix Creation for Large Cohort Analysis

Purpose: To store and manipulate large, sparse microbiome count tables (e.g., ASV x Sample) memory-efficiently in R. Steps:

- Load a comma-separated count table into R using `data.table::fread()` for speed.

- Convert the `data.frame` to a sparse matrix using the `Matrix` package (for example, by passing the numeric count columns, converted with `as.matrix()`, to `Matrix::Matrix(..., sparse = TRUE)`).

- Use this `sparse_count_matrix` as input to packages like `phyloseq` or for custom statistical modeling, ensuring all downstream operations use sparse-aware functions.
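The idea behind this protocol, storing only the non-zero counts, can also be illustrated outside R. The sketch below is a minimal standard-library Python illustration, not the protocol's own code: it reads a small, hypothetical ASV-by-sample CSV and keeps non-zero entries in a dictionary keyed by (feature, sample). The names `to_sparse` and `DEMO_CSV` are assumptions made for this example.

```python
import csv
import io

# Hypothetical ASV x sample count table; a real table would be read from disk.
DEMO_CSV = """feature,S1,S2,S3
ASV1,0,12,0
ASV2,5,0,0
ASV3,0,0,0
ASV4,0,3,7
"""

def to_sparse(handle):
    """Return (samples, n_features, {(feature, sample): count}) with zeros dropped."""
    reader = csv.reader(handle)
    header = next(reader)
    samples = header[1:]
    entries = {}
    n_features = 0
    for row in reader:
        n_features += 1
        feature, values = row[0], row[1:]
        for sample, value in zip(samples, values):
            count = int(value)
            if count != 0:
                # Only non-zero cells are stored.
                entries[(feature, sample)] = count
    return samples, n_features, entries

samples, n_features, sparse_counts = to_sparse(io.StringIO(DEMO_CSV))
n_cells = n_features * len(samples)
print(f"stored {len(sparse_counts)} of {n_cells} cells")
```

For real cohorts a dedicated sparse matrix library is the better choice; the dictionary here only makes the memory argument concrete, since zero cells cost nothing.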

Protocol 2: Systematic Subsampling (Rarefaction) for Diversity Analysis

Purpose: To standardize sequencing depth across a large cohort prior to calculating alpha and beta diversity metrics, minimizing bias from uneven sampling. Steps:

- Determine the minimum sequencing depth that retains >90% of your samples and maintains sufficient per-sample diversity. Use alpha rarefaction curves to guide this.

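- Using the `phyloseq` package in R, subsample every sample to the chosen depth (for example, with `rarefy_even_depth()`).

- Note: A single random draw is noisy. Perform rarefaction multiple times (e.g., 100 iterations) and average the resulting distance matrices, or use an alternative normalization such as SRS (scaling with ranked subsampling).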
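To make the subsampling step concrete, here is a minimal Python sketch of rarefaction without replacement, repeated over several iterations. It is an illustration under stated assumptions, not the phyloseq implementation: the function names and the example counts are hypothetical, and for brevity it averages rarefied counts rather than distance matrices.

```python
import random

def rarefy(counts, depth, rng):
    """Subsample a {feature: count} dict to `depth` reads without replacement.

    Returns None when the sample has fewer than `depth` reads, mirroring the
    usual practice of dropping under-sequenced samples.
    """
    if sum(counts.values()) < depth:
        return None
    # Expand counts into one entry per read, then draw without replacement.
    reads = [feature for feature, n in counts.items() for _ in range(n)]
    rarefied = {}
    for feature in rng.sample(reads, depth):
        rarefied[feature] = rarefied.get(feature, 0) + 1
    return rarefied

def mean_rarefied(counts, depth, iterations=100, seed=0):
    """Average per-feature counts over repeated rarefactions."""
    rng = random.Random(seed)
    sums = {feature: 0 for feature in counts}
    for _ in range(iterations):
        draw = rarefy(counts, depth, rng)
        if draw is None:
            return None
        for feature, n in draw.items():
            sums[feature] += n
    return {feature: total / iterations for feature, total in sums.items()}

sample = {"ASV1": 600, "ASV2": 300, "ASV3": 100}  # hypothetical counts
one_draw = rarefy(sample, 200, random.Random(42))
averaged = mean_rarefied(sample, 200)
print(one_draw)
print(averaged)
```

Fixing the seed keeps the subsampling reproducible, which matters when the rarefied table feeds into published diversity estimates.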

Table 1: Data Volume Scaling with Sequencing Depth and Cohort Size

| Cohort Size | Sequencing Depth (Reads per Sample) | Approx. Raw FASTQ per Sample | Total Project Data (Pre-processing) |

|---|---|---|---|

| 100 | 50,000 (16S) | 0.3 GB | 30 GB |

| 500 | 100,000 (Shallow Metagenomics) | 3 GB | 1.5 TB |

| 1,000 | 50,000 (16S) | 0.3 GB | 300 GB |

| 1,000 | 200 million (Deep Metagenomics) | 60 GB | 60 TB |

| 10,000 | 50,000 (16S) | 0.3 GB | 3 TB |
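The totals in Table 1 follow from multiplying cohort size by the per-sample FASTQ size. A short Python sketch of that arithmetic, using the table's own figures and decimal units (1 TB = 1000 GB); the helper names are assumptions for this example:

```python
def total_project_gb(cohort_size, gb_per_sample):
    """Total raw FASTQ volume in GB for a cohort."""
    return cohort_size * gb_per_sample

def human_size(gb):
    """Format a size in GB as GB or TB using decimal units."""
    return f"{gb / 1000:g} TB" if gb >= 1000 else f"{gb:g} GB"

# (cohort size, approx. raw FASTQ per sample in GB), taken from Table 1.
rows = [(100, 0.3), (500, 3), (1000, 0.3), (1000, 60), (10000, 0.3)]
for cohort, per_sample in rows:
    print(cohort, human_size(total_project_gb(cohort, per_sample)))
```

Plugging in your own per-sample sizes before sequencing starts gives a quick estimate of the storage to budget for.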

Table 2: Computational Resource Requirements for Common Tasks (1,000 Sample Cohort)

| Analysis Step | Typical Tool | Approx. Memory Requirement | Approx. Runtime (CPU Hours) | Recommended Strategy |

|---|---|---|---|---|

| 16S Clustering (97% OTUs) | VSEARCH | 64-128 GB | 24-48 | Pre-filter singletons; use --sizein |

| Taxonomic Profiling (Metagenomic) | Kraken2 | 100 GB (for large DB) | 5-10 | Use pre-built database; run in parallel |

| Metagenomic Assembly | MEGAHIT | 500+ GB | 200+ | Co-assembly by group; use batch strategy |

| Differential Abundance (DESeq2) | DESeq2 (in R) | 32 GB | 4-8 | Use sparse matrix; filter low-count features |

Visualizations

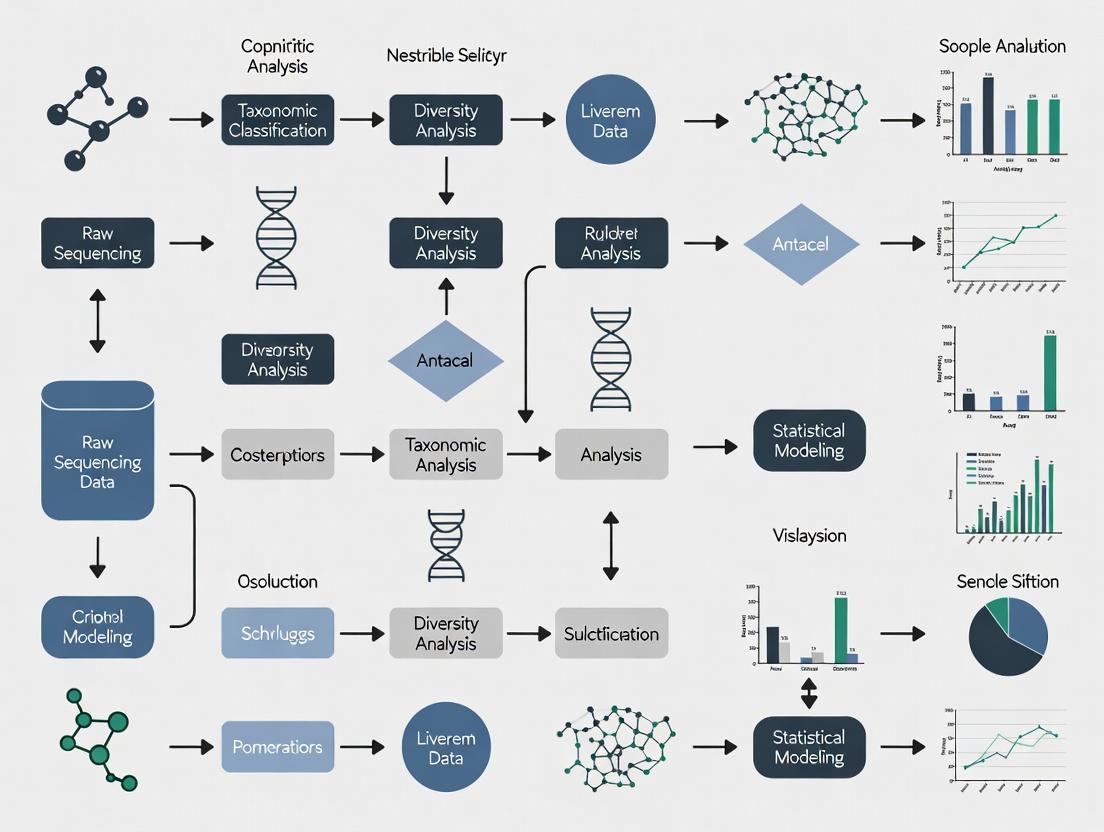

Title: Data Processing Workflow for Microbiome Analysis

Title: The Scaling Problem: Factors Impacting Data Volume

The Scientist's Toolkit: Research Reagent & Computational Solutions

| Item Category | Specific Tool/Reagent | Function/Explanation |

|---|---|---|

| Sequencing Kit | Illumina NovaSeq 6000 S4 Reagent Kit | Provides the chemistry for deep, high-throughput sequencing, directly determining raw data output per run. |

| Database | GTDB (Genome Taxonomy Database) Release | A standardized microbial genome database used for accurate taxonomic classification of metagenomic reads. |

| Analysis Software | QIIME 2, mothur | Integrated platforms for processing raw 16S rRNA sequence data into analyzed results. |

| Statistical Tool | MaAsLin 2 (Microbiome Multivariable Associations) | A specifically designed tool for finding multivariable associations between microbiome features and metadata in large cohorts. |

| Programming Language | R with phyloseq, MicrobiomeStat packages | The statistical computing environment with specialized libraries for microbiome data analysis and visualization. |

| Containerization | Docker/Singularity Image for AnADAMA2, bioBakery workflows | Ensures computational reproducibility and ease of pipeline deployment across different HPC/cloud systems. |

| Cloud Service | Google Cloud Life Sciences API, AWS Batch | Managed services for orchestrating and scaling batch processing pipelines on cloud infrastructure. |

Frequently Asked Questions (FAQs)

Q1: My demultiplexing step using bcl2fastq is reporting a very high percentage of unmatched barcodes (>30%). What could be the cause and how can I resolve this?

A: High unmatched barcode rates often indicate poor sequencing quality in the index cycles or index hopping in multiplexed libraries. First, check the quality scores for the index reads in your InterOp or FASTQ files. If quality is low, consider using bcl2fastq's --barcode-mismatches 0 to enforce exact matches or use a more robust demultiplexing tool like deindexer or Leviathan. For single-cell or certain protocols, index hopping can be mitigated by using unique dual indexes (UDIs). Re-running the base calling and demultiplexing with the --create-fastq-for-index-reads option can help diagnose the issue.

Q2: After merging paired-end reads with DADA2, my amplicon sequence variant (ASV) table has an unusually high proportion of chimeras (>25%). How should I proceed?

A: Excessive chimera rates typically point to issues in PCR amplification or to untrimmed primers. First, verify your primer sequences are correctly trimmed. If using the DADA2 pipeline, ensure you are applying strict quality filtering (truncLen, maxEE) before merging. For detection, DADA2's removeBimeraDenovo function with method="consensus" performs de novo chimera removal; a reference-based check (e.g., UCHIME against a curated database, as in VSEARCH's --uchime_ref) can be used as a second opinion. If the problem persists, re-assess your PCR cycling conditions (reduce cycle number if possible) and template concentration.

Q3: My taxonomic classification with QIIME 2 and the Silva database is assigning a large fraction of my reads to "Unassigned" or low-confidence taxa. What steps can improve assignment?

A: This is common when using generic databases for specialized or novel microbiomes. First, confirm you are using the correct classifier (e.g., feature-classifier fit-classifier-naive-bayes) trained on the appropriate region of the 16S gene. Consider using a more specific reference database (like Greengenes for gut, or a custom database built from your environment). Lower the confidence threshold (--p-confidence) in q2-feature-classifier cautiously from the default 0.7 to 0.5, but validate results. Alternatively, use k-mer-based tools like Kraken2/Bracken with a curated database, which can offer better sensitivity for novel lineages.

Q4: During beta-diversity analysis (e.g., generating a PCoA plot), my PERMANOVA test shows a significant batch effect. How can I computationally correct for this?

A: Batch effects are a major challenge in large-scale studies. You can apply batch correction methods before diversity analysis. For ASV/OTU tables, use tools like ComBat (from the sva R package) in conjunction with a variance-stabilizing transformation (e.g., DESeq2's varianceStabilizingTransformation). For phylogenetic metrics, consider MMUPHin, which is designed for microbiome data. Always validate correction by visualizing the data before and after using PCoA, coloring points by batch.

Q5: I am encountering "Out of Memory" (OOM) errors when running MetaPhlAn4 on a large number of metagenomic samples. How can I optimize resource usage?

A: MetaPhlAn4 can be memory-intensive for large sample sets. Run samples individually or in small batches using the --add_viruses and --add_fungi flags only if needed, as they increase memory load. Use the --stat_q flag to set a quantile for reporting (default 0.2). Process samples using a workflow manager (Nextflow, Snakemake) to control parallelization. Ensure you are using the latest version and consider profiling memory usage. For very large datasets, alternative tools like HUMAnN3 in stratified mode or Kraken2 with Bracken may be more memory-efficient for profiling.

Troubleshooting Guide: Common Errors & Solutions

| Error / Symptom | Likely Cause | Recommended Solution |

|---|---|---|

| cutadapt fails with "IndexError: list index out of range" | Primer file is formatted incorrectly (likely not in FASTA format). | Ensure your primer file is a standard FASTA file with each primer on a single line after the header. Use -g file:primer.fasta for forward and -G file:primer.fasta for reverse. |

| FastQC reports "Per base sequence content" failure for initial bases | Expected for amplicon data due to conserved primer sequences. | This is normal for 16S/ITS amplicon sequencing. Proceed with quality trimming and ignore this specific warning. |

| QIIME 2 artifacts fail to load with "This does not appear to be a QIIME 2 artifact" | File corruption or incorrect file type. Artifacts are zip archives (.qza/.qzv), not plain data files or directories. | Use qiime tools validate on the artifact. If corrupted, regenerate it from the previous step. Always use .qza/.qzv extensions for clarity. |

| DESeq2 differential abundance analysis yields all NA p-values | Insufficient replication, zero counts, or extreme dispersion. | Filter features with fewer than 10 total counts across all samples. Use a zero-inflated model like ZINB-WaVE (via phyloseq & DESeq2) or a compositionally aware method (ALDEx2, ANCOM-BC). |

| PICRUSt2 or BugBase predictions show high NSTI values and poor validation | The reference genome database does not adequately represent your microbiome's phylogeny. | Consider using a tool like PanFP that relies on marker genes rather than full genomes, or switch to shotgun metagenomics for functional profiling. |

Experimental Protocols

Protocol 1: DADA2 Pipeline for 16S rRNA Amplicon Data (R)

Objective: Generate high-resolution Amplicon Sequence Variants (ASVs) from raw paired-end FASTQ files.

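- Quality Control & Filtering (`filterAndTrim()`)

- Learn Error Rates & Dereplicate (`learnErrors()`, `derepFastq()`)

- Sample Inference & Merge Pairs (`dada()`, `mergePairs()`)

- Construct Sequence Table & Remove Chimeras (`makeSequenceTable()`, `removeBimeraDenovo()`)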

Protocol 2: MetaPhlAn4 for Taxonomic Profiling of Shotgun Metagenomes

Objective: Obtain species-level taxonomic profiles from whole-genome sequencing (WGS) reads.

- Database Preparation (One-time)

- Run Taxonomic Profiling (Per Sample)

- Merge Individual Profiles

- Stratified Functional Profiling with HUMAnN 3.0

Visualizations

Diagram 1: Core Computational Pipeline for Microbiome Analysis

Diagram 2: Troubleshooting Logic for Failed Classification

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Computational Workflow | Key Consideration for Efficiency |

|---|---|---|

| Reference Database (e.g., SILVA, Greengenes, GTDB) | Provides taxonomic labels for sequences. Choice impacts resolution and novelty detection. | Use a version-controlled, specific database. For large-scale studies, a custom, curated subset reduces memory and time. |

| Cluster/Cloud Computing Credits | Enables parallel processing of hundreds of samples for alignment, assembly, etc. | Implement workflow managers (Nextflow/Snakemake) for cost-effective, reproducible scaling. Use spot/Preemptible instances. |

| BIOM Format File | The standardized, HDF5-based (BIOM 2.x) table format for storing biological sample/feature matrices. | Essential for interoperability between QIIME 2, phyloseq, and other tools. More efficient than TSV for large tables. |

| Conda/Mamba Environment .yml File | Specifies exact software versions (DADA2, QIIME2, etc.) to ensure computational reproducibility. | Critical for avoiding "works on my machine" issues in collaborative or longitudinal projects. |

| Sample Metadata (TSV) | Contains experimental design variables (treatment, timepoint, batch) for statistical testing and visualization. | Must comply with MIxS standards. Accurate metadata is the linchpin of correct biological interpretation. |

Table 1: Performance Comparison of Common Taxonomic Classifiers

| Classifier | Tool Used | Average Runtime (per 1M reads) | Memory Footprint (GB) | Accuracy* (%) on Mock Community | Key Strength |

|---|---|---|---|---|---|

| Naive Bayes | QIIME2 (feature-classifier) | ~15 min | 4-8 | 85-92 | Integrated pipeline, easy use. |

| k-mer based | Kraken2/Bracken | ~5 min | 70-100 (for full DB) | 88-95 | Extremely fast, sensitive to novel strains. |

| Alignment-based | MetaPhlAn4 | ~20 min | 16-32 | 90-96 | Species/strain-level resolution, curated markers. |

| LCA-based | MEGAN (DIAMOND) | ~45 min | 8-12 | 82-90 | Powerful for functional + tax. annotation. |

*Accuracy depends on database and read quality.

Table 2: Computational Demands for 100-Sample 16S Dataset

| Processing Stage | Typical Software | CPU Cores Used | Wall Clock Time (approx.) | Output Size (approx.) |

|---|---|---|---|---|

| Quality Filtering | DADA2 (filterAndTrim) | 8 | 1-2 hours | 50-70 GB |

| ASV Inference | DADA2 (dada, mergePairs) | 8 | 3-5 hours | 1-2 GB (seq table) |

| Chimera Removal | DADA2 (removeBimeraDenovo) | 8 | 30 min | 0.5-1 GB |

| Taxonomy Assignment | QIIME2 + Silva | 4 | 1 hour | < 0.1 GB |

| Diversity Metrics | QIIME2 (core-metrics-phylogenetic) | 2 | 30 min | 0.5 GB |

Troubleshooting Guides & FAQs

FAQ: Why is my microbiome taxonomic classification tool (e.g., Kraken2, MetaPhlAn) running extremely slowly or crashing?

- Answer: This is typically a memory (RAM) limitation. Classifiers load large reference databases (>100 GB) into RAM. Insufficient memory forces the system to use disk swap, crippling performance.

- Solution:

- Check the tool's documentation for the RAM requirement of your specific database.

- Profile your job's memory usage using commands like `top`, `htop`, or `free -h`.

- Allocate more RAM per node in your job scheduler (e.g., Slurm's `--mem` flag) or reduce the database size by selecting a specific genomic kingdom.

FAQ: My parallelized genome assembly (e.g., using SPAdes, MEGAHIT) is not scaling well across many CPU cores. What's the bottleneck?

- Answer: After a certain point, the bottleneck shifts from CPU to I/O (Input/Output). Assembly involves intensive read-and-write operations for intermediate k-mer graphs. Many processes competing for disk access saturates I/O bandwidth.

- Solution:

- Use a local scratch storage (fast SSD/NVMe) on the compute node for intermediate files, if available.

- Limit the number of cores per assembly job (often 16-32 is optimal) and run multiple assemblies concurrently.

- Consider using a filesystem designed for high parallel I/O (e.g., Lustre, BeeGFS) and ensure your data is striped across multiple storage servers.

FAQ: I am running out of storage space while processing large-scale metagenomic sequencing runs. How can I manage this?

- Answer: Raw FASTQ files and intermediate alignment files (e.g., BAM) are the primary consumers. A single HiSeq run can produce several terabytes of data.

- Solution:

- Implement a data lifecycle policy: compress FASTQ files (`.gz`), delete intermediate files after successful downstream analysis, and archive only final results.

- Use efficient, compressed formats for analysis-ready data (e.g., CRAM instead of BAM for alignments).

- For active projects, leverage high-performance, scalable storage tiers. Archive inactive data to cheaper, cold storage.

FAQ: My R/Python script for statistical analysis of microbiome count tables is taking days to complete. How can I speed it up?

- Answer: This is often a CPU efficiency issue. Scripts not written for performance may use loops instead of vectorized operations, run on a single core, or load entire datasets into memory repeatedly.

- Solution:

- Use optimized libraries (e.g., `data.table`, `NumPy`, `pandas`) and vectorized operations.

- Parallelize tasks using libraries like `multiprocessing` in Python or `parallel` in R.

- Profile your code (e.g., with `cProfile` in Python) to identify and rewrite the slowest functions.
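As a small illustration of the profiling advice, the sketch below uses Python's built-in `cProfile` and `pstats` modules to compare a deliberately wasteful Shannon index function with a corrected one. The two functions and the input counts are hypothetical examples, not code from any microbiome package.

```python
import cProfile
import io
import math
import pstats

def shannon_slow(counts):
    """Naive Shannon index: recomputes the total inside the loop."""
    h = 0.0
    for c in counts:
        total = sum(counts)  # repeated O(n) work on every iteration
        p = c / total
        h -= p * math.log(p)
    return h

def shannon_fast(counts):
    """Same index with the total computed once."""
    total = sum(counts)
    h = 0.0
    for c in counts:
        p = c / total
        h -= p * math.log(p)
    return h

counts = [(i % 7) + 1 for i in range(2000)]  # hypothetical positive counts

profiler = cProfile.Profile()
profiler.enable()
slow = shannon_slow(counts)
fast = shannon_fast(counts)
profiler.disable()

# Collect the five most expensive entries by cumulative time.
report = io.StringIO()
pstats.Stats(profiler, stream=report).sort_stats("cumulative").print_stats(5)
print(report.getvalue())
```

In the report, `shannon_slow` and the built-in `sum` should dominate cumulative time, which points directly at the line worth rewriting.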

Table 1: Typical Resource Demands for Common Microbiome Analysis Tools

| Tool/Step | Primary Demand | Typical Scale (Per Sample) | Notes |

|---|---|---|---|

| Quality Control (FastQC, Trimmomatic) | CPU, I/O | 4-8 CPU cores, < 10 GB RAM, 50-100 GB I/O | Easily parallelized. High I/O from reading/writing FASTQ. |

| Metagenomic Assembly (MEGAHIT) | Memory, CPU | 32-128 GB RAM, 16-32 CPU cores, High I/O on /tmp | Memory scales with sample complexity and sequencing depth. |

| Taxonomic Profiling (Kraken2) | Memory | 100 GB+ RAM for standard database, 8-16 CPU cores | Database must be loaded into RAM. Ultimate limiting factor. |

| Functional Profiling (HUMAnN3) | CPU, I/O | 16-24 CPU cores, 32 GB RAM, Very High I/O | Multiple serial and parallel steps; uses protein search databases. |

| Differential Abundance (DESeq2, in R) | Memory, CPU | 16-32 GB RAM for large tables, 4-8 CPU cores (if parallelized) | Memory usage scales with number of features (species/genes) and samples. |

Table 2: Storage Requirements for Data Types

| Data Type | Size Range (Per Sample) | Compression Advice |

|---|---|---|

| Raw Paired-end FASTQ | 1 - 10 GB | Always keep gzipped (.fastq.gz). Can reduce by 60-70%. |

| Processed/Filtered Reads | 0.5 - 7 GB | Keep gzipped. Consider deletion after final alignment/assembly. |

| Metagenomic Assembly (FASTA) | 0.1 - 5 GB | Use gzip. |

| Alignment File (BAM/CRAM) | 5 - 50 GB | Use CRAM format. Can be 40-60% smaller than BAM. |

| Feature Tables (TSV/CSV) | 1 MB - 1 GB | Text compression (gzip) is effective. |

Experimental Protocols

Protocol 1: Profiling Memory Usage for a Classification Job

Objective: Determine the peak memory requirement for running Kraken2 with a specific database on your metagenomic reads. Methodology:

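- Tool: Use the `/usr/bin/time -v` command on Linux systems (GNU time), which reports the maximum resident set size (RSS).

- Command: Prefix your usual Kraken2 invocation with `/usr/bin/time -v`; the resource report is written to standard error when the job finishes.

- Key Metric: In the output, locate the "Maximum resident set size (kbytes)" line. Convert kB to GB by dividing by 1,048,576 (1024 × 1024, so strictly GiB).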

- Interpretation: This is the peak physical memory used. Allocate 10-20% more in your job script to ensure stability.
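The conversion and headroom steps can be scripted. The following Python sketch parses the relevant line from GNU `time -v` output and applies a 20% margin; the function names and the sample output string are assumptions for illustration only.

```python
import re

KB_PER_GB = 1_048_576  # 1024 * 1024, so the result is strictly in GiB

def peak_rss_gb(time_output):
    """Extract peak RSS from `/usr/bin/time -v` output and convert kB to GB."""
    match = re.search(r"Maximum resident set size \(kbytes\):\s*(\d+)", time_output)
    if match is None:
        raise ValueError("no 'Maximum resident set size' line found")
    return int(match.group(1)) / KB_PER_GB

def recommended_request_gb(peak_gb, headroom=0.2):
    """Add a safety margin (10-20% is suggested above) to the measured peak."""
    return peak_gb * (1 + headroom)

# Hypothetical excerpt of the report written to standard error.
demo_output = "\tMaximum resident set size (kbytes): 73400320\n"
peak = peak_rss_gb(demo_output)
print(f"peak {peak:.1f} GB, request {recommended_request_gb(peak):.1f} GB")
```

The resulting figure can be passed to the scheduler, for example as the value of Slurm's `--mem` option.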

Protocol 2: Assessing I/O Bottlenecks in a Workflow

Objective: Identify if disk speed is limiting a multi-step analysis pipeline. Methodology:

- Tool: Use `iostat` (from the `sysstat` package) during job execution.

- Command: `iostat -dx 5` (shows extended device statistics every 5 seconds).

- Key Metrics:

  - `%util`: Percentage of elapsed time during which I/O requests were issued to the device. Consistently >80% indicates a saturated device.

  - `await`: Average wait time (ms) for I/O requests, reported as `r_await` and `w_await` in recent sysstat versions. High values (>50ms for SSD, >200ms for HDD) indicate slowdown.

- Mitigation Experiment: Run the same pipeline on local SSD scratch space versus network-attached storage. Compare `await` time and total job runtime.

Diagrams

Diagram Title: Microbiome Analysis Pipeline Bottlenecks

Diagram Title: Data Storage Tier Lifecycle Strategy

The Scientist's Toolkit: Research Reagent Solutions

| Resource / "Reagent" | Function / Purpose | Example/Notes |

|---|---|---|

| High-Memory Compute Nodes | Provides the RAM necessary to load large reference genomes or databases for classification/assembly. | Nodes with 512GB - 2TB RAM. Essential for tools like Kraken2, MetaPhlAn. |

| High-Throughput Storage | Provides fast, parallel read/write access for concurrent jobs processing large sequencing files. | Lustre or BeeGFS filesystems. Critical for preventing I/O bottlenecks. |

| Local Scratch Storage (NVMe) | Ultra-fast temporary storage on compute nodes for I/O-heavy intermediate files. | Node-local NVMe drives. Dramatically speeds up assembly and alignment steps. |

| Job Scheduler | Manages and allocates compute resources (CPU, RAM, time) across a shared cluster for many users. | Slurm, PBS Pro, or IBM Spectrum LSF. Required for efficient resource utilization. |

| Containerization Platform | Ensures software environment (tools, versions, dependencies) is reproducible and portable. | Docker or Singularity/Apptainer. Packages complex pipelines like QIIME2, HUMAnN. |

| Reference Database (Curated) | A high-quality, non-redundant set of genomes used for taxonomic or functional profiling. | GTDB, RefSeq, UniRef90. The quality dictates analysis accuracy. |

| Version-Controlled Code Repo | Tracks changes to analysis scripts, ensuring reproducibility and collaboration. | Git repositories (GitHub, GitLab). Maintains a history of all analytical choices. |

Technical Support Center: Troubleshooting Guides & FAQs

DADA2 Pipeline

FAQ 1: Why is the sample inference step (learning error rates, dereplication) taking an extremely long time, and how can I speed it up?

Answer: This step is computationally intensive as it builds error models from your data. Slowdowns are often due to high sequencing depth or many samples.

- Troubleshooting: Reduce the `nbases` parameter in the `learnErrors()` function for a faster, though slightly less accurate, error model. Ensure you are using multi-threading by setting `multithread=TRUE`. Pre-filter your reads to remove low-quality sequences.

- Protocol for Optimization:

  - Use `filterAndTrim()` to aggressively remove low-quality reads.

  - Run `learnErrors()` with a reduced `nbases` (e.g., 1e7, i.e., 10 million bases, instead of the default 1e8) for a preliminary check.

  - Utilize a high-performance computing (HPC) cluster and submit jobs in parallel per sample batch.

FAQ 2: My mergePairs step is failing or has a very low merging percentage. What is wrong?

Answer: Low merging efficiency is commonly caused by reads overlapping in variable regions, poor read quality at the ends, or overly strict alignment parameters.

- Troubleshooting: Visualize the quality profiles (`plotQualityProfile`) to ensure the ends of your reads are of sufficient quality. Adjust the `minOverlap` and `maxMismatch` parameters in the `mergePairs()` function. Consider trimming more low-quality bases from the read ends before merging, while keeping enough length for the forward and reverse reads to still overlap.

QIIME 2 Pipeline

FAQ 1: The feature table construction step (denoising with Deblur or DADA2 plugin) is my major bottleneck. How can I manage this for 100+ samples?

Answer: Denoising is inherently compute-heavy. QIIME 2's default is to run samples serially.

- Troubleshooting: Use the `--p-n-threads` parameter to utilize multiple cores per job. For very large studies, split your sequence data into multiple manifest files and run denoising in parallel batches using a job array on an HPC system. Consider using the `q2-dada2` plugin over `q2-deblur` for faster execution on some systems.

- Protocol for Batch Processing:

  - Split your `manifest.csv` into 4 batch files (e.g., `batch1.csv`).

  - Write a shell script to submit separate QIIME 2 `dada2 denoise-paired` jobs for each batch.

  - After all batches complete, merge the resulting feature tables and representative sequences using `qiime feature-table merge` and `qiime feature-table merge-seqs`.
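The manifest-splitting step can be sketched in Python as follows. This is an illustrative helper, not part of QIIME 2: the function name `split_manifest` and the file paths are hypothetical, and the column names assume a CSV manifest with `sample-id`, `absolute-filepath`, and `direction` columns. Samples are assigned to batches round-robin so that the forward and reverse reads of one sample always land in the same batch.

```python
import csv
import io

# Hypothetical paired-end manifest; paths are placeholders.
DEMO_MANIFEST = """sample-id,absolute-filepath,direction
S1,/data/S1_R1.fastq.gz,forward
S1,/data/S1_R2.fastq.gz,reverse
S2,/data/S2_R1.fastq.gz,forward
S2,/data/S2_R2.fastq.gz,reverse
S3,/data/S3_R1.fastq.gz,forward
S3,/data/S3_R2.fastq.gz,reverse
S4,/data/S4_R1.fastq.gz,forward
S4,/data/S4_R2.fastq.gz,reverse
S5,/data/S5_R1.fastq.gz,forward
S5,/data/S5_R2.fastq.gz,reverse
"""

def split_manifest(text, n_batches):
    """Return n_batches CSV strings; all rows of a sample stay in one batch."""
    reader = csv.DictReader(io.StringIO(text))
    fieldnames = reader.fieldnames
    by_sample = {}
    for row in reader:
        by_sample.setdefault(row["sample-id"], []).append(row)
    outputs = []
    for batch_index in range(n_batches):
        buffer = io.StringIO()
        writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
        writer.writeheader()
        for i, rows in enumerate(by_sample.values()):
            if i % n_batches == batch_index:
                writer.writerows(rows)
        outputs.append(buffer.getvalue())
    return outputs

batches = split_manifest(DEMO_MANIFEST, 4)
for number, content in enumerate(batches, start=1):
    print(f"batch{number}.csv has {content.count(chr(10)) - 1} read files")
```

If the samples come from several sequencing runs, grouping batches by run rather than round-robin is usually preferable, because DADA2 learns its error model per batch.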

FAQ 2: The phylogenetic tree generation (alignment with MAFFT, tree building with FastTree) is slow. Are there alternatives?

Answer: Yes. While MAFFT is accurate, it is slow for large alignments (>100k sequences).

- Troubleshooting: Use the `q2-fragment-insertion` plugin for phylogenetic placement as an alternative. If a tree is required, substitute MAFFT with `q2-clustalo` for a faster multiple sequence alignment, or use the `--p-n-threads` parameter with MAFFT. For FastTree, ensure you are using the `--p-double-precision` flag for better accuracy at a slight speed cost.

MOTHUR Pipeline

FAQ 1: The align.seqs command (aligning to the SILVA database) consumes massive memory and time. How can I optimize this?

Answer: Aligning a large number of unique sequences to a comprehensive reference is demanding.

- Troubleshooting: Pre-filter your sequences by length and complexity before alignment. Use the `flip=T` parameter to check the reverse complement. Increase the `processors` option. Consider performing alignment on a subset of unique sequences, then re-aligning the full set using the `align.check` and `reorient.seqs` workflow.

- Protocol for Efficient Alignment:

  - `screen.seqs(fasta=, minlength=, maxlength=, maxambig=)`

  - `unique.seqs()`

  - `align.seqs(fasta=, reference=silva.v4.align, processors=24, flip=T)`

  - `summary.seqs()` to check alignment success.

FAQ 2: The classify.seqs step is slow. Any tips to accelerate taxonomy assignment?

Answer: The native RDP classifier in Mothur can be slow for large datasets.

- Troubleshooting: Use the `probs=F` option to skip confidence score calculation for a significant speedup. Alternatively, use the `wang` method instead of `knn`. Pre-cluster sequences (`pre.cluster`) to reduce the number of sequences to classify. Consider using a smaller, targeted reference taxonomy file if applicable.

Table 1: Comparative Time Consumption of Key Pipeline Steps (Approximate for 100 Samples, 100k Reads/Sample)

| Pipeline | Step | Primary Bottleneck | Estimated Time (Standard) | Estimated Time (Optimized) | Key Optimization Parameter |

|---|---|---|---|---|---|

| DADA2 | learnErrors(), derepFastq() | CPU: Error Model Learning | 4-6 hours | 1-2 hours | nbases, multithread |

| DADA2 | mergePairs() | Algorithm: Read Overlap | 1 hour | 20 mins | minOverlap, maxMismatch |

| QIIME 2 | dada2 denoise-paired | CPU: Sample Inference | 5-8 hours | 1.5-3 hours (batch parallel) | --p-n-threads, batch processing |

| QIIME 2 | alignment mafft | CPU: Multiple Sequence Alignment | 3-5 hours | 2-4 hours | --p-n-threads, use clustalo |

| MOTHUR | align.seqs | Memory & CPU: Sequence Alignment | 6-10 hours | 3-6 hours | processors, pre-filtering |

| MOTHUR | classify.seqs | CPU: Taxonomy Assignment | 2-4 hours | 30-60 mins | probs=F, pre.cluster |

The Scientist's Toolkit: Research Reagent & Computational Solutions

Table 2: Essential Resources for Efficient Microbiome Analysis

| Item | Function | Example/Note |

|---|---|---|

| High-Performance Computing (HPC) Cluster | Provides parallel processing, high memory nodes, and job scheduling for large-scale data. | Essential for batch processing in DADA2/QIIME 2. Use SLURM or PBS job scripts. |

| Conda/Bioconda Environment | Manages isolated, reproducible software installations with correct dependencies for each pipeline. | Prevents version conflicts between QIIME 2, MOTHUR, and DADA2. |

| Reference Databases (Trimmed) | Smaller, targeted databases speed up alignment and classification. | Use SILVA V4/V4-V5 region alignment files for Mothur. Use trimmed 16S classifiers for QIIME 2. |

| Quality Control Tools (FastQC, MultiQC) | Provides initial visualization of read quality to inform trimming/filtering parameters. | Critical for diagnosing issues before they enter the core pipeline. |

| Metadata Management File | A well-formatted sample sheet (manifest) is crucial for error-free batch processing. | Required by QIIME 2 and for organizing samples in DADA2 scripts. |

Workflow and Bottleneck Visualization

Diagram 1: Core 16S rRNA Amplicon Pipeline with Key Bottlenecks

Diagram 2: Optimization Pathways for Denoising Step

This technical support center provides guidance on common challenges in measuring computational performance within microbiome research, with a focus on optimizing computational efficiency for large-scale microbiome datasets.

Troubleshooting Guides & FAQs

Q1: My 16S rRNA pipeline is taking days to run. Which metrics should I profile first to identify bottlenecks?

A: The primary metrics are Time-to-Solution and Resource Utilization. First, measure Wall-clock Time (total elapsed time) for each pipeline stage (e.g., quality filtering, ASV inference). Then, profile CPU Utilization (%): if it's consistently below ~80% on a multi-core system, your workflow may not be effectively parallelized. Memory usage that approaches your node's RAM will cause swapping, drastically slowing performance. Use tools like /usr/bin/time -v on Linux or workflow-level benchmarking (e.g., Snakemake's benchmark directive, which records runtime and memory per rule).

Q2: How do I accurately compare the cost-efficiency of running my analysis on my local HPC versus a cloud provider? A: You must normalize performance to a unit cost. The standard metric is Cost-to-Solution. First, establish a baseline:

- Run your analysis on your local HPC and record the Wall-clock Time.

- Calculate your local cost using an Approximate Cost-Per-Hour (e.g., prorated hardware depreciation + electricity + maintenance).

- For the cloud, select a comparable instance type (vCPU, RAM). Run the same analysis and record the time.

- Use the cloud provider's hourly rate.

Compare using the following table:

| Metric | Local HPC (Baseline) | Cloud Provider A (c5n.2xlarge) | Cloud Provider B (Standard_H8) |

|---|---|---|---|

| Wall-clock Time (hrs) | 24.0 | 18.5 | 16.0 |

| Resource Cost Per Hour | $0.85 (estimated) | $0.432 | $0.78 |

| Total Cost-to-Solution | $20.40 | $7.99 | $12.48 |

| Relative Cost Efficiency | 1.0x | 2.55x | 1.63x |

Note: Table data is illustrative. Perform your own benchmark.
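The arithmetic behind the table can be reproduced with a short script. This is a sketch using the illustrative figures from the table; the function names and platform labels are assumptions made for this example.

```python
def cost_to_solution(wall_hours, rate_per_hour):
    """Total cost of one run: elapsed hours times the hourly resource rate."""
    return wall_hours * rate_per_hour


def compare_platforms(platforms, baseline):
    """Return cost and cost efficiency relative to the baseline platform."""
    base_cost = cost_to_solution(*platforms[baseline])
    summary = {}
    for name, (hours, rate) in platforms.items():
        cost = cost_to_solution(hours, rate)
        summary[name] = {
            "cost": round(cost, 2),
            "relative_efficiency": round(base_cost / cost, 2),
        }
    return summary


# (wall-clock hours, cost per hour) taken from the illustrative table.
platforms = {
    "local_hpc": (24.0, 0.85),
    "cloud_a": (18.5, 0.432),
    "cloud_b": (16.0, 0.78),
}

summary = compare_platforms(platforms, baseline="local_hpc")
for name, row in summary.items():
    print(name, row)
```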

Q3: My metagenomic assembly job failed with an "Out of Memory" error. How do I estimate memory needs for future runs? A: Memory requirements for assembly scale with dataset size and complexity. Use a scaling experiment:

- Protocol: Randomly subsample your sequencing reads (e.g., using `seqtk sample`) at different fractions (10%, 25%, 50%).

- Run your assembler (e.g., MEGAHIT, SPAdes) on each subset while tracking Peak Memory Usage (with `/usr/bin/time -v` or `ps`).

- Plot Peak Memory against the number of base pairs or reads processed. If the relationship is approximately linear, extrapolate it to estimate the memory needed for the full dataset, and add a safety margin because the fit is only an approximation.
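The extrapolation step can be sketched with an ordinary least-squares line fit. The measurements below are made-up placeholders, and the 20% safety margin is an assumption for illustration; substitute your own subsampling results.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def predict_peak_memory(fractions, peak_gb, target_fraction=1.0, margin=0.2):
    """Extrapolate peak memory to target_fraction and add a safety margin."""
    slope, intercept = fit_line(fractions, peak_gb)
    estimate = slope * target_fraction + intercept
    return estimate, estimate * (1.0 + margin)


# Hypothetical measurements: subsample fraction vs. observed peak memory (GB).
fractions = [0.10, 0.25, 0.50]
peak_gb = [6.0, 13.5, 26.0]

estimate, request = predict_peak_memory(fractions, peak_gb)
print(f"estimated peak: {estimate:.1f} GB, suggested request: {request:.1f} GB")
```

If the plotted points bend visibly away from a straight line, a linear fit is not appropriate and the estimate should not be trusted.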

Q4: What are "FLOPs" and are they a useful metric for my microbiome sequence alignment tasks?

A: FLOPs (Floating-Point Operations) measure raw computational work. They are less useful for most microbiome bioinformatics, which is often I/O-bound (waiting to read/write large sequence files) or integer-operation-heavy (string matching, hashing). Focus on Throughput-based metrics like Reads Processed Per Second or Gigabases Aligned Per Hour. These directly relate to your research output. Profiling I/O wait time (%wa in top or iostat) is often more insightful.

Q5: How can I visualize the trade-offs between accuracy, speed, and cost for different taxonomic classifiers? A: Design a controlled benchmarking experiment:

- Protocol: Use a standardized, validated mock community dataset with a known ground truth composition.

- Run multiple classifiers (e.g., Kraken2, Bracken, MetaPhlAn, a naive BLAST search) on the same hardware.

- Measure for each: Execution Time (speed), Peak Memory (resource use), CPU Hours (cost proxy), and Taxonomic Accuracy (e.g., F1-score vs. ground truth).

- Summarize in a multi-axis comparison table.

| Classifier | Time (min) | CPU Hrs | Peak RAM (GB) | Accuracy (F1-Score) | Est. Cost per 1M Reads* |

|---|---|---|---|---|---|

| Kraken2 | 15 | 2.0 | 70 | 0.89 | $0.08 |

| MetaPhlAn4 | 5 | 0.3 | 16 | 0.92 | $0.01 |

| BLAST (nt) | 420 | 168.0 | 8 | 0.95 | $6.72 |

*Cost estimate based on a generic cloud compute rate of $0.04 per CPU hour.
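Two of the columns above can be computed directly from benchmark output. The sketch below scores accuracy as presence/absence F1 against the mock community's known members and derives the cost proxy from CPU hours; the taxon names are placeholders, and abundance-weighted scoring would need a different metric.

```python
def detection_f1(predicted_taxa, true_taxa):
    """F1-score for presence/absence detection against a known ground truth."""
    predicted, truth = set(predicted_taxa), set(true_taxa)
    true_positives = len(predicted & truth)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def estimated_cost(cpu_hours, rate_per_cpu_hour=0.04):
    """Cost proxy: CPU hours multiplied by a generic per-CPU-hour rate."""
    return cpu_hours * rate_per_cpu_hour


# Hypothetical mock community and classifier output.
ground_truth = {"Escherichia", "Bacteroides", "Lactobacillus", "Staphylococcus"}
classifier_calls = {"Escherichia", "Bacteroides", "Lactobacillus", "Shigella"}

f1 = detection_f1(classifier_calls, ground_truth)
print(f"F1 = {f1:.2f}, cost = ${estimated_cost(2.0):.2f}")
```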

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Computational Experiments |

|---|---|

| Snakemake / Nextflow | Workflow Management Systems. They automate, reproduce, and parallelize complex pipelines across different compute environments, directly improving efficiency metrics. |

| Conda / Bioconda | Package & Environment Manager. Ensures reproducible software installations with correct dependencies, eliminating "works on my machine" errors and setup time. |

| Singularity / Docker | Containerization Platforms. Packages an entire software environment (OS, tools, libraries) into a single, portable image, guaranteeing consistency from local to cloud/HPC. |

| Benchmarking Mock Communities | Standardized in silico or physical DNA samples with known composition. The critical reagent for calibrating and benchmarking classifier accuracy vs. speed/cost. |

| Time & Profiling Commands | Core system tools (/usr/bin/time, perf, htop, gpustat) and workflow profilers. They are the "measuring cylinders" for collecting raw efficiency metrics. |

Visualizations

Diagram 1: Computational Efficiency Metric Hierarchy

Diagram 2: Benchmarking Protocol for Classifiers

Building Scalable Pipelines: Efficient Algorithms and Tools for Mega-Analyses

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: My metagenomic assembly is failing or is extremely slow with a large, complex dataset. What are my primary algorithmic options to improve efficiency? A: The primary strategies involve reducing data size before assembly. Use k-mer sketching (e.g., with Mash or Dashing) to estimate sequence similarity and filter highly redundant reads. Alternatively, apply uniform subsampling based on read counts to process a manageable, representative subset. For assembly itself, employ a memory-efficient k-mer counting algorithm (e.g., Jellyfish 2, KMC3) that uses disk-based sorting or probabilistic data structures to handle the vast k-mer space.

Q2: When using k-mer-based tools for sequence comparison (like Mash distance), my results vary significantly when I change the k-mer size (k) or sketch size (s). How do I choose stable parameters? A: Parameter stability is crucial for reproducible research. Increase `k` until distances plateau, typically between 21 and 31 for microbial genomes. The sketch size `s` should be large enough to minimize variance; Mash defaults to 1,000, a size of 10,000 is a common choice for better accuracy, and 50,000 or 100,000 gives finer resolution in large-scale studies. Always report the exact parameters (`k`, `s`, sketch hash seed) with your results.

Q3: After subsampling my microbiome samples to an even sequencing depth, I am concerned about losing rare but biologically important taxa. How can I justify or mitigate this? A: Subsampling (rarefaction) is a trade-off for comparative beta-diversity analysis. Justify your depth by generating a rarefaction curve of observed species vs. sequencing depth. Choose a depth where the curve for most samples begins to asymptote. For downstream analysis, always couple results with a sensitivity analysis: repeat the analysis at multiple subsampling depths (e.g., 90%, 100%, 110% of your chosen depth) to confirm trends for core findings are robust.

Q4: I am getting inconsistent results when sketching genomes from different assemblers (e.g., metaSPAdes vs. Megahit). Is the sketch sensitive to assembly quality? A: Yes. Sketching algorithms operate on the sequence data provided. Different assemblers produce contigs of varying lengths, completeness, and error profiles. A fragmented assembly will yield a sketch based on many short sequences, potentially missing longer-range k-mer contexts. For consistent comparative genomics, always sketch finished, high-quality genomes or use a standardized assembly pipeline for all datasets before sketching.

Q5: What is the practical difference between a MinHash sketch (e.g., Mash) and a HyperLogLog sketch (e.g., Dashing) for estimating genomic distances? A: Both are probabilistic data structures that can be used to estimate set similarity (Jaccard index), but they differ in mechanism and optimal use cases. See the table below for a comparison.

Table 1: Comparison of MinHash and HyperLogLog Sketching Methods

| Feature | MinHash Sketch (e.g., Mash) | HyperLogLog Sketch (e.g., Dashing, Kmer-db) |

|---|---|---|

| Core Function | Samples the smallest hashed k-mers. | Estimates unique k-mer count via maximum leading-zero count in hashes. |

| Primary Output | Jaccard Index estimate, converted to distance. | Cardinality (unique count) and union/intersection size estimates. |

| Memory Efficiency | Good; fixed sketch size s. | Excellent; often more memory-efficient for a given error rate. |

| Speed | Very fast for distance calculation. | Extremely fast for sketch creation and comparison. |

| Typical Use Case | Genome distance, clustering, search. | Massive-scale all-vs-all comparison, containment estimation. |
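To make the MinHash column concrete, here is a simplified bottom-s sketch in Python. It is a teaching sketch under stated assumptions, not Mash's implementation: it hashes with BLAKE2b from the standard library and skips canonical (strand-independent) k-mers. The distance conversion follows the Mash distance formula, D = -(1/k) ln(2j / (1 + j)).

```python
import hashlib
import math


def kmers(seq, k):
    """All distinct k-mers of a sequence (no reverse-complement handling)."""
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def hash_kmer(kmer):
    """Map a k-mer to a 64-bit integer."""
    digest = hashlib.blake2b(kmer.encode(), digest_size=8).digest()
    return int.from_bytes(digest, "big")


def bottom_sketch(seq, k, s):
    """Keep the s smallest hash values: the MinHash 'bottom sketch'."""
    return sorted(hash_kmer(km) for km in kmers(seq, k))[:s]


def jaccard_estimate(sketch_a, sketch_b, s):
    """Estimate Jaccard from the s smallest hashes of the merged sketches."""
    set_a, set_b = set(sketch_a), set(sketch_b)
    merged = sorted(set_a | set_b)[:s]
    if not merged:
        return 0.0
    shared = sum(1 for h in merged if h in set_a and h in set_b)
    return shared / len(merged)


def mash_distance(jaccard, k):
    """Convert a Jaccard estimate into a Mash-style distance."""
    if jaccard <= 0:
        return 1.0
    return max(0.0, -math.log(2 * jaccard / (1 + jaccard)) / k)


seq_a = "ACGTACGTTGCAACGTTAGCTAGCTAGGATCCGATCGATCGTACGATCG"
seq_b = "ACGTACGTTGCAACGTTAGCTAGCTAGGATCCGATCGATCGTACGTTCG"
k, s = 11, 20
j = jaccard_estimate(bottom_sketch(seq_a, k, s), bottom_sketch(seq_b, k, s), s)
print(f"Jaccard estimate {j:.2f}, distance {mash_distance(j, k):.4f}")
```

A larger `s` samples more hashes and therefore reduces the variance of the estimate, which is the stability effect discussed in Q2.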

Troubleshooting Guides

Issue: Extremely High Memory Usage during K-mer Counting.

- Symptom: Process is killed or stalls on a large node (>100GB RAM).

- Diagnosis: The canonical k-mer counting approach is holding all distinct k-mers in memory.

- Solution:

- Switch Algorithm: Use a disk-based k-mer counter (e.g., KMC3, DSK). They trade speed for dramatically lower RAM.

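- Reconsider K-mer Size: The number of distinct k-mers generally grows with `k` (the space of possible k-mers is 4^k, and each sequencing error corrupts more k-mers at larger `k`), so use the smallest `k` your analysis tolerates to reduce the number of observed unique k-mers.

- Use a Counting Filter: Apply a minimum abundance filter (e.g., the `-ci` flag in KMC or `-L` in Jellyfish) to exclude likely erroneous low-count k-mers.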

Issue: Sketched Distances Do Not Match Expected Biological Relationships.

- Symptom: Technical replicates cluster separately, or known related strains appear distant.

- Diagnosis: Parameter or input inconsistency.

- Solution Workflow:

- Verify Input Uniformity: Ensure all input files are the same type (all assemblies or all raw reads). Mixed types invalidate distances.

- Check k-mer Size: Re-compute sketches with a higher `k` value to increase specificity.

- Increase Sketch Size: Re-compute with a larger sketch size (e.g., from 1,000 to 10,000) to reduce stochastic noise.

- Validate with a Control: Include a positive control (e.g., sequencing the same sample twice) in your sketch analysis. The distance between controls should be near zero.

Issue: Subsampling Leads to Loss of Statistical Power in Differential Abundance Testing.

- Symptom: After rarefaction, a differential abundance test (e.g., ANCOM-BC, DESeq2) finds no significant features, whereas pre-subsampling analysis did.

- Diagnosis: Overly aggressive subsampling discarded signal.

- Solution Protocol:

- Pre-filtering: Prior to subsampling, remove low-abundance taxa (e.g., those with < 10 total counts across all samples) that contribute mostly to noise.

- Iterate Depth: Systematically test a range of subsampling depths. Use the deepest possible depth that retains >90% of your samples (you may discard a few low-depth outliers).

- Complement with Model-Based Methods: Use subsampling for diversity metrics, but switch to a model-based differential abundance tool (like those listed above) that uses the full count data without rarefaction for the final statistical test. Report results from both approaches.
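The depth-selection and subsampling steps can be sketched as follows. This is an illustrative helper, assuming per-sample counts are held as plain dictionaries; the 90% retention threshold mirrors the protocol, and the function names are invented for this example.

```python
import math
import random


def choose_depth(sample_totals, min_retained=0.9):
    """Deepest depth that still keeps at least min_retained of the samples."""
    totals = sorted(sample_totals, reverse=True)
    n_keep = max(1, math.ceil(min_retained * len(totals) - 1e-9))
    return totals[n_keep - 1]


def rarefy(counts, depth, seed=0):
    """Subsample one sample to `depth` reads without replacement.

    Returns None when the sample has fewer than `depth` reads (discarded).
    """
    if sum(counts.values()) < depth:
        return None
    pool = [taxon for taxon, count in counts.items() for _ in range(count)]
    picked = random.Random(seed).sample(pool, depth)
    rarefied = {}
    for taxon in picked:
        rarefied[taxon] = rarefied.get(taxon, 0) + 1
    return rarefied


# Hypothetical per-sample read totals, including one low-depth outlier.
totals = [500, 12000, 15000, 9000, 20000, 11000, 10000, 18000, 14000, 13000]
depth = choose_depth(totals)

sample = {"Bacteroides": 6000, "Prevotella": 3500, "Akkermansia": 500}
rarefied = rarefy(sample, depth=1000, seed=42)
print(depth, rarefied)
```

Fixing the seed makes the subsample reproducible; repeating with several seeds gives a quick check on how much the random draw itself affects results.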

Experimental Protocol: Large-Scale Metagenome Clustering via Sketching

Objective: To efficiently cluster thousands of metagenomic assemblies or samples based on sequence composition for downstream analysis.

Materials & Reagents (The Scientist's Toolkit)

| Item | Function/Explanation |

|---|---|

| High-Performance Computing (HPC) Cluster | Essential for parallel sketch creation and all-vs-all comparisons. |

| Mash (v2.0+) or Dashing (v2.0+) | Software for creating MinHash or HyperLogLog sketches and calculating pairwise distances. |

| FastK or KMC3 | For rapid, low-memory k-mer counting as a pre-processing step for very large datasets. |

| Custom Python/R Scripts | For parsing sketch output, generating distance matrices, and controlling workflow. |

| Phylogenomic Classification Tools (e.g., GTDB-Tk) | Used for detailed phylogenetic analysis on representative clusters identified by sketching. |

Methodology:

- Data Preparation: Place all input FASTA files (metagenome-assembled genomes or raw read files) in a single directory.

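- Sketch Creation: Run the sketching tool on each file. Example Mash command: `mash sketch -k 31 -s 10000 -o ./sketches/sample.msh sample.fasta`

- All-vs-All Distance Calculation: Compute the pairwise distance between all sketches. Because `mash dist` treats its first argument as the reference and the remaining arguments as queries, first combine the sketches and then compare the combined sketch against itself. Example: `mash paste all_sketches ./sketches/*.msh` followed by `mash dist all_sketches.msh all_sketches.msh > distances.tab`

- Matrix Construction: Parse the `.tab` output into a symmetric distance matrix.

- Clustering: Apply a clustering algorithm (e.g., hierarchical clustering with average linkage, also known as UPGMA) to the distance matrix to group related samples.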

- Validation: Assess cluster quality by checking if known technical replicates cluster together and if clusters correspond to known biological categories (e.g., body site, disease state).
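The matrix construction step can be sketched with the standard library alone. It assumes the tab-separated `mash dist` layout (reference ID, query ID, distance, p-value, matching hashes); the sample names and values below are invented for illustration.

```python
def parse_mash_dist(lines):
    """Turn `mash dist` rows into a sorted ID list and a symmetric matrix."""
    ids = set()
    distances = {}
    for line in lines:
        line = line.strip()
        if not line:
            continue
        reference, query, distance = line.split("\t")[:3]
        ids.update((reference, query))
        # Store both orientations so the matrix is symmetric by construction.
        distances[(reference, query)] = float(distance)
        distances[(query, reference)] = float(distance)
    names = sorted(ids)
    matrix = [
        [0.0 if a == b else distances.get((a, b), float("nan")) for b in names]
        for a in names
    ]
    return names, matrix


# Invented example rows in the five-column `mash dist` layout.
example = """a.fa\ta.fa\t0\t0\t1000/1000
a.fa\tb.fa\t0.05\t0\t300/1000
a.fa\tc.fa\t0.20\t1e-30\t12/1000
b.fa\tc.fa\t0.18\t1e-35\t15/1000"""

names, matrix = parse_mash_dist(example.splitlines())
for name, row in zip(names, matrix):
    print(name, row)
```

If SciPy is available, the resulting matrix can be condensed with `scipy.spatial.distance.squareform` and clustered with `scipy.cluster.hierarchy.linkage(..., method="average")` for the average-linkage step.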

Visualizations

Diagram 1: Workflow for Efficient Large-Scale Metagenomic Analysis

Diagram 2: Logical Relationship Between k, Sketch Size, and Result Stability

Technical Support Center: Troubleshooting Guides & FAQs

General Parallelization Issues

Q1: My multi-threaded application shows no speedup or even slows down on a multi-core server. What are the primary causes? A: This is typically caused by:

- Resource Contention: Threads competing for shared resources (memory bandwidth, cache, I/O).

- False Sharing: Multiple threads modifying variables that reside on the same CPU cache line, causing invalidations.

- Excessive Synchronization: Overuse of locks or barriers, forcing threads to wait.

- Insufficient Workload Granularity: The overhead of creating/managing threads outweighs the parallel work done.

Diagnostic Protocol:

- Profile with `perf stat` (Linux) or VTune to identify cache misses and high lock contention.

- Use thread sanitizers (e.g., `-fsanitize=thread` in GCC/Clang) to detect data races.

- Restructure code to ensure data accessed by one thread is padded and aligned to cache line boundaries (typically 64 bytes).

Q2: When moving a microbiome genome assembly pipeline from a local HPC to a cloud environment, my I/O performance collapses. How can I mitigate this? A: Cloud object storage (e.g., AWS S3, GCS) has different performance characteristics than an HPC's parallel file system.

Mitigation Protocol:

- Intermediate Data: Use attached cloud block storage (e.g., AWS EBS, GCP Persistent Disk) or local instance SSDs for scratch space during computation.

- Data Staging: Implement a staged workflow. Bulk input and final output use object storage, but all intermediate files for a single node's job must be on local, block, or high-performance Lustre/EFS storage.

- Optimize File Patterns: Bundle thousands of small microbiome sequence files into larger archives (`.tar`) before transfer and processing to reduce request overhead.

HPC-Specific Issues

Q3: My MPI job for metagenomic read mapping fails with "out of memory" on some nodes but not others, causing the entire job to fail. A: This indicates workload imbalance. Some MPI ranks receive or generate more data than others, often due to skewed input data distribution.

Troubleshooting Protocol:

- Load Balancing: Implement dynamic work scheduling. Instead of statically assigning input files by rank, use a manager-worker model where ranks request new work upon completion.

- Checkpoint/Restart: Modify code to write periodic checkpoints. Restart the job with more resources or a better balanced input list.

- Memory Profiling: Launch each rank under `mpirun` with a memory-tracking wrapper (e.g., Massif from Valgrind, or `/usr/bin/time -v`) to identify the high-water mark per rank.

Q4: How do I choose between MPI and OpenMP for aligning large microbiome sequencing batches? A: The choice is dictated by memory architecture and problem structure.

| Criterion | MPI (Distributed Memory) | OpenMP (Shared Memory) |

|---|---|---|

| Problem Scale | Large-scale, >1 node (100s-1000s of cores) | Single multi-core node (2-128 cores) |

| Data Dependence | Loosely coupled tasks (e.g., independent samples) | Fine-grained, loop-level parallelism |

| Memory Model | Each process has separate memory address space | All threads share a common memory address space |

| Best for Microbiome | Processing thousands of samples in parallel | Processing a single, large sample with multi-threaded tools (Bowtie2, BWA) |

| Primary Challenge | Explicit data communication overhead | Risk of race conditions and false sharing |

Selection Protocol:

- If your workflow processes many independent samples, use MPI to assign different samples to different MPI ranks.

- If you are accelerating a single tool on a large, multi-core node, use OpenMP (if the tool supports it) or combine MPI+OpenMP (hybrid model).

Cloud-Specific Issues

Q5: My cloud-based batch processing for 16S rRNA classification is costlier than anticipated. How can I optimize costs? A: Costs often inflate due to over-provisioning and data transfer fees.

Cost Optimization Protocol:

- Right-Sizing: Profile a single job's peak CPU and memory usage using cloud monitoring tools. Choose instance types that match (e.g., memory-optimized for database searches, compute-optimized for assembly).

- Spot Instances: Use preemptible/spot instances for fault-tolerant batch jobs. Implement checkpointing to save progress.

- Data Locality: Ensure compute instances and storage buckets are in the same cloud region to avoid egress charges.

Q6: Containerized microbiome tools (in Docker/Singularity) have high startup latency on my cloud batch system, slowing down short tasks. A: The image pull time dominates short job execution.

Optimization Protocol:

- Use Cached Images: Pre-pull the container image to the VM's local disk at node startup, not at job launch.

- Minimize Image Size: Create layered images. Use a small base OS (Alpine) and only install essential packages.

- Consider Package Managers: For very short tasks, use conda/bioconda on a pre-built machine image instead of containers, if security policy allows.

Multi-threading & Performance

Q7: The number of optimal threads for my analysis varies unpredictably between different microbiome datasets on the same machine.

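A: The optimal thread count (O) depends on both the computational workload and the machine architecture. A rough heuristic is O ≈ (# of Physical Cores) / (1 - B), where B is the fraction of time spent on memory-bound operations. Datasets with different characteristics alter B, and the estimate grows without bound as B approaches 1, so treat it only as a starting point and confirm the value empirically.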

Experimental Tuning Protocol:

- Perform a strong scaling test. Run your tool on a fixed, representative dataset, varying threads from 1 to `# of cores * 2`.

- Measure wall-clock time and compute speedup. Plot threads vs. speedup.

- The point where the curve significantly flattens is the optimal thread count for that dataset/machine combo. Integrate this auto-tuning step into your workflow's setup phase.
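One way to automate "the point where the curve flattens" is to stop increasing threads once an extra step no longer buys a minimum relative time reduction. The 10% threshold and the timings below are assumptions for illustration, not recommended values.

```python
def pick_thread_count(timings, min_gain=0.10):
    """Pick the largest thread count that still gave a worthwhile improvement.

    timings maps thread count -> wall-clock seconds from a strong scaling test.
    A step counts as worthwhile if it cuts wall time by at least min_gain
    relative to the previous (smaller) thread count.
    """
    thread_counts = sorted(timings)
    best = thread_counts[0]
    for previous, current in zip(thread_counts, thread_counts[1:]):
        gain = (timings[previous] - timings[current]) / timings[previous]
        if gain < min_gain:
            break
        best = current
    return best


# Hypothetical wall-clock seconds measured on one dataset and machine.
timings = {1: 1000.0, 2: 520.0, 4: 280.0, 8: 170.0, 16: 150.0, 32: 155.0}

print("chosen threads:", pick_thread_count(timings))
```

Raising `min_gain` favors fewer threads per job, which can be the better trade when many samples run concurrently on the same node.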

Q8: My Python pipeline for microbiome statistics (using pandas, NumPy) does not utilize all CPU cores despite using multi-threaded libraries. A: The Global Interpreter Lock (GIL) in CPython prevents true multi-threading for pure Python code.

Parallelization Protocol:

- Use Native Library Functions: Ensure you are using vectorized NumPy/pandas operations (which release the GIL internally).

- Multi-processing: Use `concurrent.futures.ProcessPoolExecutor` or the `multiprocessing` module to bypass the GIL for embarrassingly parallel tasks such as computing alpha diversity for many samples.

- Compiled Extensions: Consider writing performance-critical steps in Cython or Numba, which can release the GIL in compiled sections. Note that PyPy also has a GIL, so switching to it does not by itself enable multi-core threading.
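The multi-processing approach can be sketched for per-sample Shannon diversity. This is a minimal example with invented sample counts; the function names are assumptions, and the `if __name__ == "__main__":` guard is needed on platforms that start worker processes by re-importing the script.

```python
import math
from concurrent.futures import ProcessPoolExecutor


def shannon_index(counts):
    """Shannon diversity (natural log) for one sample's taxon counts."""
    total = sum(counts)
    if total == 0:
        return 0.0
    proportions = [c / total for c in counts if c > 0]
    return -sum(p * math.log(p) for p in proportions)


def shannon_for_all(samples, max_workers=None):
    """Compute Shannon diversity for every sample in separate processes."""
    names = list(samples)
    with ProcessPoolExecutor(max_workers=max_workers) as pool:
        values = list(pool.map(shannon_index, (samples[n] for n in names)))
    return dict(zip(names, values))


if __name__ == "__main__":
    # Hypothetical count vectors, one list of taxon counts per sample.
    samples = {
        "sample_A": [120, 30, 50, 0, 10],
        "sample_B": [10, 10, 10, 10, 10],
        "sample_C": [200, 0, 0, 0, 0],
    }
    for name, value in shannon_for_all(samples, max_workers=2).items():
        print(f"{name}: {value:.3f}")
```

For work this small the process start-up cost outweighs the gain; the pattern pays off when each task is substantial, such as rarefying and scoring thousands of samples.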

Table 1: Performance Comparison of Parallelization Strategies for Metagenomic Assembly (MEGAHIT). Data sourced from benchmark studies (2023-2024).

| Platform / Strategy | Avg. Wall-clock Time (1 TB Reads) | Relative Cost ($) | Max Scalability (Cores) | Best For |

|---|---|---|---|---|

| Local HPC (MPI) | 4.2 hours | N/A | 512 | Large, fixed datasets; low latency I/O |

| Cloud Batch (Spot VMs) | 5.1 hours | 85 | 1024 | Bursty, variable workloads |

| Single Node (OpenMP) | 28 hours | (Reference) | 64 | Small batches or prototype |

| Hybrid (MPI+OpenMP) | 3.8 hours | 110 (cloud) | 768 | Extremely large, complex assemblies |

Table 2: Common Microbiome Tools & Their Native Parallel Support

| Tool | Task | Primary Model | Typical Speedup (32 cores) | Key Limitation |

|---|---|---|---|---|

| QIIME 2 | General Pipeline | Plugin-specific | 8-12x | Workflow-level, not tool-level |

| Kraken2 | Taxonomic Classification | Multi-threading | 18-22x | Memory-intensive for large DBs |

| MetaPhlAn | Profiling | Multi-threading | 10-15x | Database search step is sequential |

| SPAdes | Genome Assembly | Multi-threading | 20-25x | Diminishing returns after ~32 threads |

| DESeq2 (R) | Differential Abundance | Multi-core (BLAS) | 4-6x | Limited by R's memory model |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Reagents for Large-Scale Microbiome Analysis

| Item / Solution | Function / Purpose | Example / Note |

|---|---|---|

| Workflow Manager | Automates, reproduces, and scales multi-step analyses across different platforms. | Nextflow, Snakemake, CWL. Critical for combining HPC/cloud/multi-threaded steps. |

| Containerization Platform | Ensures tool version and dependency consistency across HPC and cloud. | Docker (for development), Singularity/Apptainer (for HPC). |

| MPI Library | Enables distributed-memory parallelization across nodes in a cluster. | OpenMPI, MPICH. Used for sample-level parallelism. |

| Threading Building Blocks (TBB) | A C++ template library for efficient loop-level parallelization on multi-core CPUs. | Often used within bioinformatics tools for fine-grained task scheduling. |

| Optimized BLAS/LAPACK Library | Accelerates linear algebra operations in R/Python (e.g., PCA, stats). | OpenBLAS, Intel MKL. Can provide 5-10x speedup for statistical steps. |

| Parallel Filesystem Client | Provides high-throughput I/O for data-intensive applications on HPC or cloud. | Lustre client, Spectrum Scale. Essential for concurrent access to large sequence files. |

| Cloud SDK & CLI | Programmatically manages cloud resources (VM creation, job submission, data transfer). | AWS CLI and boto3 (Python SDK), Google Cloud SDK (gcloud). |

Experimental & Diagnostic Protocols

Protocol 1: Benchmarking Parallel Scaling Efficiency Objective: Determine the strong and weak scaling characteristics of a microbiome analysis tool.

- Strong Scaling: Fix the input dataset (e.g., 10 million reads). Run the tool using `P` = [1, 2, 4, 8, 16, 32, 64] processor cores/threads.

- Measure: Record wall-clock time `T(P)` for each run.

- Calculate: Parallel efficiency `E(P) = T(1) / (P * T(P))`.

- Weak Scaling: Increase the problem size proportionally with `P`. For `P` cores, use a dataset `P` times larger than the base.

- Calculate: Maintained problem size per unit time. The goal is for `T(P)` to remain constant.

- Visualize: Plot `E(P)` vs. `P`. A declining curve (efficiency falling well below 1) indicates scaling limits.
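The calculations in this protocol can be sketched in a few lines. The timings are invented; the strong scaling formula is the E(P) defined above, and the weak scaling ratio T(1) / T(P) is the usual way to express "T(P) stays constant" as a number between 0 and 1.

```python
def strong_scaling(timings):
    """Speedup T(1)/T(P) and efficiency T(1)/(P*T(P)) for each core count P."""
    t1 = timings[1]
    return {
        p: {"speedup": t1 / t, "efficiency": t1 / (p * t)}
        for p, t in sorted(timings.items())
    }


def weak_scaling_efficiency(timings):
    """Weak scaling efficiency T(1)/T(P); 1.0 means wall time stayed constant."""
    t1 = timings[1]
    return {p: t1 / t for p, t in sorted(timings.items())}


# Hypothetical wall-clock seconds. Strong: fixed dataset. Weak: dataset grows with P.
strong_timings = {1: 100.0, 2: 50.0, 4: 40.0}
weak_timings = {1: 100.0, 2: 105.0, 4: 125.0}

for p, row in strong_scaling(strong_timings).items():
    print(f"P={p}: speedup {row['speedup']:.2f}, efficiency {row['efficiency']:.2f}")
print(weak_scaling_efficiency(weak_timings))
```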

Protocol 2: Diagnosing I/O Bottlenecks in a Cloud Workflow

- Instrumentation: Add timestamps to your workflow code to log the start and end of major read/write phases.

- Cloud Monitoring: Use native tools (e.g., AWS CloudWatch, GCP Operations Suite) to monitor VM disk I/O, network bandwidth, and CPU idle time during job execution.

- Correlation: Correlate high CPU idle periods with disk read/wait metrics. If idle CPU coincides with high disk wait, I/O is the bottleneck.

- Remediation Test: Stage a copy of the input data on local SSD and re-run a single job. A significant time reduction confirms a storage I/O bottleneck.

Workflow & Logical Relationship Diagrams

Title: Microbiome Analysis Parallelization Decision Workflow

Title: HPC Hybrid MPI+OpenMP Data Flow for Large Dataset

Technical Support Center

Troubleshooting Guides & FAQs

General Performance & Computational Issues

Q1: My metagenomic classification job with Kraken2 is running out of memory on a large dataset. What are my options? A: Kraken2's memory usage is primarily determined by the size of its database. Consider these solutions:

- Use a smaller, tailored database: Instead of the full "standard" or "plus" databases, build or download a database specific to your expected taxa (e.g., human gut, soil).

- Employ a memory-efficient alternative: Tools like metalign are designed for low-memory, high-throughput classification by using lightweight reference sketches instead of full genomes.

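- Leverage Bracken for post-processing: If you must use Kraken2, run it with the `--report` output and then use Bracken to re-estimate abundances; this can make a smaller, size-capped Kraken2 database acceptable for abundance profiling. Kraken2's `--memory-mapping` option also avoids loading the whole database into RAM, at a substantial cost in speed.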

Q2: sourmash gather is reporting very low overlap between my sample and a reference genome, even though I expect a high match. Why? A: This is often due to parameter mismatch in sketch creation. Ensure consistency in:

- Scaled value (`scaled=` in the `-p` parameter string of `sourmash sketch`): Use the same scaled value for the sample and the database sketches where possible, so the signatures are directly comparable.

- k-mer size (`k=` in the `-p` parameter string): k-mer sizes must match exactly. For bacterial genomes, `k=31` is common; `k=21` is more sensitive to distant matches and `k=51` is more specific for closely related strains.

- Check contamination: Low overlap can also indicate your sample is highly contaminated or the reference is not present. Use `sourmash compare` first for an overall similarity check.

Q3: Are there modern, more computationally efficient alternatives to LEfSe for biomarker discovery? A: Yes, LEfSe (LDA Effect Size) can be slow and memory-intensive on large feature tables. Consider these alternatives:

- ANCOM-BC: A statistically rigorous method that controls for false discovery rate and is more efficient with large sample sizes.

- MaAsLin2: A flexible multivariate linear modeling framework that is optimized for performance on high-dimensional microbiome data.

- ALDEx2: Uses compositional data analysis (Monte Carlo sampling from a Dirichlet distribution followed by a centered log-ratio transform); efficient for differential abundance testing in high-throughput sequencing data.

Q4: I get "Kmer/LCA database incomplete" errors with metalign. How do I resolve this? A: This indicates the precomputed index for your chosen database and k-mer size is missing.

- Download the complete database: Ensure you downloaded both the `references_sketches` and the `*_index` directories for your specific k (e.g., `k=31`).

- Rebuild the index: Use the `metalign build` command with the `--keep-kmers` flag to generate the required LCA database files from your reference sketches.

Q5: How can I validate that my Kraken2/minimap2/metalign results are consistent? A: Implement a consensus/sanity-check protocol:

- Run a small subset of your samples through 2-3 different classifiers (e.g., Kraken2, metalign, and sourmash+gather).

- Aggregate results at the phylum/genus level and compare relative abundances.

- Calculate a correlation metric (e.g., Spearman correlation) between the outputs for major taxa. High correlation (>0.85) suggests robust findings, while large discrepancies require investigation into tool-specific biases.
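The correlation step can be sketched without external libraries. This is an illustrative implementation of Spearman correlation (Pearson correlation of average ranks, which handles ties); the genus-level profiles are invented, and taxa missing from one tool are treated as zero abundance, which is an assumption of this sketch.

```python
import math


def average_ranks(values):
    """1-based ranks, with tied values sharing the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for index in order[i:j + 1]:
            ranks[index] = shared_rank
        i = j + 1
    return ranks


def pearson(xs, ys):
    """Pearson correlation; returns NaN if either input is constant."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return float("nan")
    return cov / math.sqrt(var_x * var_y)


def spearman_for_profiles(profile_a, profile_b):
    """Spearman correlation across the union of taxa in two profiles."""
    taxa = sorted(set(profile_a) | set(profile_b))
    a = [profile_a.get(t, 0.0) for t in taxa]
    b = [profile_b.get(t, 0.0) for t in taxa]
    return pearson(average_ranks(a), average_ranks(b))


# Hypothetical genus-level relative abundances from two classifiers.
tool_one = {"Bacteroides": 0.40, "Prevotella": 0.30, "Faecalibacterium": 0.20, "Akkermansia": 0.10}
tool_two = {"Bacteroides": 0.35, "Prevotella": 0.33, "Faecalibacterium": 0.22, "Akkermansia": 0.10}

rho = spearman_for_profiles(tool_one, tool_two)
print(f"Spearman rho = {rho:.2f}")
```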

Table 1: Comparison of Metagenomic Classification Tools

| Tool | Core Method | Memory Efficiency | Speed | Primary Output | Best Use Case |

|---|---|---|---|---|---|

| Kraken2 | Exact k-mer matching, LCA | Low (depends on DB) | Very Fast | Read-level classification | High-precision taxonomy, clinical pathogen detection |

| metalign | Minimizer-based sketching, LCA | Very High | Fast | Abundance profiles (EC, species) | Large-scale cohort studies, low-memory environments |

| sourmash | MinHash sketching, containment | High | Moderate | Similarity, containment, gather | Metagenome comparison, strain-level analysis, provenance |

| minimap2 | Spliced/unspliced alignment | Moderate | Fast | Sequence alignment (SAM) | Mapping reads to genomes/MAGs, nucleotide-level accuracy |

Table 2: Alternatives to LEfSe for Differential Abundance

| Tool | Statistical Approach | Handles Compositionality? | Speed (vs. LEfSe) | Output Format |

|---|---|---|---|---|

| LEfSe | LDA + Kruskal-Wallis | No | Baseline (1x) | Biomarker scores, LDA score |

| ANCOM-BC | Linear model with bias correction | Yes | Faster | Log-fold changes, p-values, q-values |

| MaAsLin2 | General linear/ mixed models | Yes (transformations) | Much Faster | Effect sizes, p-values, q-values |

| ALDEx2 | Dirichlet Monte Carlo sampling, CLR | Yes | Similar/Slightly Faster | Effect sizes, p-values, Benjamini-Hochberg-adjusted p-values |

Experimental Protocols

Protocol 1: Low-Memory Metagenomic Profiling with metalign

Objective: Generate taxonomic and functional (EC) abundance profiles from raw metagenomic reads with minimal memory footprint.

Database Preparation:

Run Classification:

Output Interpretation:

- Primary abundance files are found in `./sample_results/abundances/` (e.g., `species.tsv`, `ecs.tsv`).

- These are TSV files with columns for taxon/EC ID, name, and estimated abundance.

Protocol 2: Cross-Tool Validation Pipeline

Objective: Ensure consistency of taxonomic profiles from different classifiers.

- Uniform Pre-processing: Trim and quality-filter all reads with `fastp` using identical parameters.

- Parallel Classification:

- Run Kraken2 against a standard database (e.g., Standard-2024).

- Run metalign using its corresponding database.

- Run sourmash gather against the GenBank sketch collection.

- Aggregation: Use `kraken-biom` and custom scripts to generate genus-level abundance tables for each tool.

- Analysis: In R, calculate pairwise Spearman correlations between the abundance vectors of the top 50 genera across all tools. Visualize with a heatmap.

Mandatory Visualizations

Tool Selection Workflow for Metagenomic Thesis

Metalign Protocol: From Reads to Results

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational "Reagents" for Efficient Microbiome Analysis

| Item | Function / Description | Example Source / Command |

|---|---|---|

| Pre-processed Reference Database | Curated, indexed genome collections for classification. Saves weeks of compute time. | Kraken2: kraken2-build --download-library; metalign: metalign download mp10 |

| MinHash Sketch Collections | Compressed, comparable representations of genomes/metagenomes. Enables massive scale comparison. | sourmash: sourmash sketch dna; RefSeq sketch collection from the sourmash tutorials. |

| Containerized Software (Docker/Singularity) | Ensures reproducibility and eliminates "works on my machine" issues. | BioContainers on Quay.io: docker pull quay.io/biocontainers/kraken2:<tag> (pin an explicit version tag rather than relying on latest) |

| Workflow Management Scripts (Snakemake/Nextflow) | Automates multi-step analysis, manages software environments, and enables parallel execution. | Snakemake rule for running fastp, then metalign on all samples. |

| Standardized Reporting Templates (RMarkdown/Jupyter) | Integrates code, results, and narrative for reproducible thesis chapters and publications. | RMarkdown template with pre-set chunks for phyloseq and ggplot2. |

Technical Support Center

FAQs & Troubleshooting Guides

Q1: When running my Dockerized microbiome analysis pipeline, I encounter the error: "Bind mount failed: source path does not exist." What is the cause and solution?

A: This is a common path resolution error. Docker commands are executed from your host machine's filesystem context. Ensure the local directory path you are trying to mount (with the -v flag) is correct and exists. Use absolute paths for clarity. For example, use /home/user/project/data instead of ./data.

Q2: My Singularity container cannot write output files to the host filesystem, even with the --bind option. What should I check?

A: Singularity, by default, runs containers with the user's permissions. First, verify the bind mount syntax: singularity exec --bind /host/dir:/container/dir image.sif my_command. If the command inside the container runs as a non-root user (typical), ensure your host user has write permissions to the bound host directory (/host/dir).

Q3: How do I manage Docker disk space usage, as images and containers consume significant storage during large-scale 16S rRNA analysis? A: Use Docker's prune commands and monitor disk usage.

- `docker system df` shows disk usage.

- `docker image prune -a` removes all unused images.

- `docker system prune -a` removes all unused data (containers, networks, images). Use with caution.

Q4: I receive a "FATAL: kernel too old" error when trying to run a Singularity container on my HPC cluster. What does this mean? A: Containers do not ship their own kernel; they share the host's. This error comes from the C library (glibc) inside the container, which requires a newer Linux kernel than the one running on your cluster. The solution is to rebuild the container from an older base OS image whose glibc supports your cluster's kernel, or use a pre-built container verified for compatibility with older kernels.

Q5: How can I ensure my containerized workflow uses multiple CPUs for parallelizable tasks like MetaPhlAn3 or HUMAnN3?

A: Set the container's CPU allocation where the runtime limits it, and always tell the tool itself how many threads to use.

- Docker: Use the `--cpus` flag (e.g., `--cpus=8`) or `--cpuset-cpus`. For older versions, use CPU shares (`-c`).

- Singularity: The container automatically sees all host CPUs. You must configure the tool inside the container to use them, typically by setting an environment variable like `OMP_NUM_THREADS` or a thread-count option in the tool's command-line arguments (e.g., `--nproc` for MetaPhlAn, `--threads` for HUMAnN) when you execute it.
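A sketch of how the thread count might be chosen and passed to the tool, assuming a Slurm-managed cluster (where `SLURM_CPUS_PER_TASK` is set when CPUs per task are requested) and MetaPhlAn's `--nproc` option. The image and file names are placeholders, and `thread_count` and `metaphlan_command` are hypothetical helpers.

```python
import os


def thread_count(default: int = 1) -> int:
    """Prefer the scheduler's CPU allocation, then fall back to the visible CPUs."""
    slurm = os.environ.get("SLURM_CPUS_PER_TASK", "")
    if slurm.isdigit() and int(slurm) > 0:
        return int(slurm)
    return os.cpu_count() or default


def metaphlan_command(image: str, reads: str, output: str, threads: int) -> list[str]:
    """Build a 'singularity exec' argument list that passes the thread count explicitly."""
    return [
        "singularity", "exec", image,
        "metaphlan", reads,
        "--input_type", "fastq",
        "--nproc", str(threads),
        "-o", output,
    ]


if __name__ == "__main__":
    # Placeholder image and file names, for illustration only.
    print(" ".join(metaphlan_command("metaphlan.sif", "reads.fastq",
                                     "profile.txt", thread_count())))
```

Using the scheduler's allocation rather than every visible CPU avoids oversubscribing a shared node when the job was granted only part of it.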

Q6: What is the best practice for versioning and sharing Docker/Singularity images for publication alongside a microbiome research paper?

A: Always push your images to a public repository.

- Docker: Push to Docker Hub, Google Container Registry (GCR), or Amazon ECR. Provide the exact image tag (e.g., `username/metaphlan3:v3.0`) in your manuscript's methods section.

- Singularity: While you can push Singularity images to repositories, the best practice for maximum reproducibility is to provide the Docker image URI and the exact `singularity pull` command used to create the Singularity Image File (SIF). This documents the exact source.
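One way to keep the reported command and the image tag in sync is to generate both from a single string. The sketch below is illustrative only: `singularity_pull_command` is a hypothetical helper, and the `<name>_<tag>.sif` naming is a convention chosen here and passed explicitly as the output file, not something the tool requires.

```python
def singularity_pull_command(docker_image: str) -> tuple[list[str], str]:
    """Return (argument list, SIF file name) for a tagged Docker image.

    docker_image looks like 'username/metaphlan3:v3.0'.
    """
    name_and_tag = docker_image.rsplit("/", 1)[-1]   # drop registry and namespace
    name, _, tag = name_and_tag.partition(":")
    sif_name = f"{name}_{tag or 'latest'}.sif"
    # Passing the output file explicitly avoids relying on default naming.
    command = ["singularity", "pull", sif_name, f"docker://{docker_image}"]
    return command, sif_name


if __name__ == "__main__":
    cmd, sif = singularity_pull_command("username/metaphlan3:v3.0")
    print(" ".join(cmd))  # the exact line to quote in a methods section
```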

Table 1: Comparative Analysis of Containerization Platforms for HPC Microbiome Research

| Feature | Docker | Singularity |
|---|---|---|
| Primary Use Case | Development, Microservices | High-Performance Computing (HPC), Scientific Workflows |
| Root Privileges Required | Yes (for management) | No (for execution) |
| Portability of Images | High (Docker Hub) | High (Can pull from Docker Hub, Singularity Library) |
| Default Security Model | Kernel namespace and cgroup isolation; daemon runs as root | Runs as user, no root escalation inside container |
| Filesystem Integration | Explicit volume mounts (`-v`) | Bind mounts to host, integrates with shared storage (e.g., NFS) |
| Best Suited For | Local development, CI/CD pipelines | Cluster/Shared environments, multi-user systems |

Table 2: Impact of Containerization on Computational Efficiency in a Large-Scale Meta-analysis (Simulated Data)

| Metric | Native Execution (Baseline) | Docker Container | Singularity Container |
|---|---|---|---|
| 16S rRNA DADA2 Pipeline Runtime (1000 samples) | 10.5 hours | 10.7 hours (+1.9%) | 10.6 hours (+1.0%) |
| Memory Overhead | 0 GB (Reference) | ~0.15 GB | ~0.05 GB |
| Result Bit-for-Bit Reproducibility | N/A | 100% (with pinned versions) | 100% (with pinned versions) |
| Environment Setup Time | 2-4 hours (manual) | ~10 minutes (pull image) | ~10 minutes (pull image) |

Experimental Protocols

Protocol 1: Building a Reproducible Docker Image for a QIIME2 Microbiome Analysis Workflow

- Create a `Dockerfile`.

- Build the Image: Execute `docker build -t mylab/qiime2:2023.5 .` in the directory containing the Dockerfile.

- Test the Container: Run `docker run --rm -it -v $(pwd)/microbiome_data:/data mylab/qiime2:2023.5 qiime --help`.

Protocol 2: Converting a Docker Image to Singularity for HPC Deployment

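- On a system with Singularity installed (or on your HPC if allowed), pull the Docker image, e.g., `singularity pull docker://mylab/qiime2:2023.5`. This creates a file `qiime2_2023.5.sif`.

- Execute an analysis inside the Singularity container, binding your data directory with `--bind`, following the form `singularity exec --bind /host/dir:/container/dir qiime2_2023.5.sif my_command`.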

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Containerized Microbiome Research |
|---|---|
| Dockerfile | A text script defining the build process for a Docker image, ensuring a consistent, versioned software environment. |
| Singularity Definition File | A build recipe for Singularity images, offering fine-grained control over image construction, especially in HPC contexts. |
| Docker Hub / Biocontainers | Public registries hosting pre-built, often community-verified, scientific containers for tools like QIIME2, MetaPhlAn, and HUMAnN. |
| Conda/Pip requirements.txt | Lists of explicit software versions used inside the container, critical for documenting the computational "reagent" versions. |
| Host Data Bind Mount (`-v` / `--bind`) | The mechanism to connect the immutable container to mutable host data and results directories, enabling analysis. |
| Singularity Image File (SIF) | The immutable, secure, and portable binary file containing the entire containerized environment, ready for execution on HPC. |

Diagrams

Title: Docker and Singularity Workflow Comparison for Researchers

Title: Containerization Ensures Reproducible Computational Research

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During a large microbiome 16S rRNA sequencing data batch analysis, my cloud-based batch job fails with an "Insufficient memory" error after processing the first 100 samples on AWS. How can I resolve this without manually resizing instances?

A: This indicates that your container or compute instance is hitting a memory limit. Implement an Auto Scaling strategy based on custom CloudWatch metrics.

AWS Protocol: For an AWS Batch job using Fargate:

- Navigate to the CloudWatch Console.

- Create a custom metric filter for your job's log group to track "OutOfMemoryError" or memory utilization >85%.

- Create a CloudWatch Alarm that triggers when this metric is observed.

- Configure an EventBridge rule that is triggered by this alarm. The rule's target should be an AWS Batch job queue.

- The EventBridge rule should submit a new batch job with modified job definition parameters (e.g., increased `memory` in MiB for Fargate resources). You can use an input transformer to dynamically set memory based on the failed job's ID and metadata.

- Ensure your job logic includes checkpointing, allowing the new job to resume from the last successfully processed sample.

Key Check: Verify that your Docker container's memory request (`--memory-reservation`) is aligned with the Fargate task definition. Set the memory limit (`--memory`) approximately 20% higher than the reservation to allow the kernel to manage spikes.
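The checkpointing requirement in the protocol can be illustrated with a small, cloud-agnostic sketch. It assumes samples are identified by string IDs and that a JSON file on persistent storage records which ones finished; `process_samples` and the checkpoint layout are hypothetical, and no AWS API is involved.

```python
import json
from pathlib import Path


def load_done(checkpoint: Path) -> set[str]:
    """Read the set of already processed sample IDs (empty if no checkpoint yet)."""
    if checkpoint.exists():
        return set(json.loads(checkpoint.read_text()))
    return set()


def process_samples(sample_ids, process_one, checkpoint: Path) -> list[str]:
    """Process samples in order, skipping those recorded in the checkpoint.

    The checkpoint is rewritten after every successful sample, so a job that
    dies partway (for example from an out-of-memory kill) can be resubmitted
    with more memory and resume where it stopped.
    """
    done = load_done(checkpoint)
    processed_now = []
    for sample_id in sample_ids:
        if sample_id in done:
            continue
        process_one(sample_id)          # may raise; the checkpoint stays valid
        done.add(sample_id)
        tmp = checkpoint.with_suffix(".tmp")
        tmp.write_text(json.dumps(sorted(done)))
        tmp.replace(checkpoint)         # swap in the new file in one step
        processed_now.append(sample_id)
    return processed_now
```

In a cloud batch job the checkpoint would need to live on storage that outlasts the container, such as a mounted volume or an object synced to a bucket.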

Q2: My genomic alignment pipeline on Google Cloud Life Sciences API experiences significant slowdown and increased cost when scaling beyond 50 concurrent VMs. What architecture adjustments can improve computational efficiency?

A: The bottleneck is likely in shared resource access (input/output). Move from a monolithic workflow to a decoupled, event-driven architecture.

GCP Protocol:

- Decompose the Pipeline: Split the alignment pipeline into discrete, idempotent steps (e.g., `quality-check`, `alignment`, `post-processing`). Package each as a separate Docker container.

- Use Cloud Pub/Sub for Orchestration: Have the completion of each step publish a message to a Pub/Sub topic. The next step's service subscribes to this topic and pulls messages.

- Implement Cloud Functions/Cloud Run: Use event-driven compute like Cloud Functions (for light tasks) or Cloud Run (for heavier containers) to execute each step. They scale to zero when idle, improving cost efficiency.

- Leverage Filestore: For shared reference genomes, mount a high-performance Filestore instance (NFS) to all workers to prevent redundant downloads and ensure consistent I/O speed.

- Monitor with Cloud Monitoring: Create a dashboard tracking Pub/Sub backlog, function invocation latency, and Filestore operations. Auto-scale workers based on Pub/Sub queue depth.
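To show why idempotent steps matter in this design, the sketch below simulates the three steps with in-memory queues standing in for Pub/Sub topics. It does not use the Google Cloud client library; `run_pipeline` and the `redeliver` switch are assumptions made for illustration, the latter mimicking a message being delivered more than once.

```python
import queue

STEPS = ["quality-check", "alignment", "post-processing"]


def run_pipeline(sample_ids, handlers, redeliver=False):
    """Push samples through STEPS; each step's completion feeds the next topic.

    handlers maps a step name to a function taking a sample ID.
    Returns the set of (step, sample_id) pairs that were executed.
    """
    topics = {step: queue.Queue() for step in STEPS}
    completed = set()
    for sid in sample_ids:
        topics[STEPS[0]].put(sid)
        if redeliver:
            topics[STEPS[0]].put(sid)      # simulate a duplicate delivery
    for index, step in enumerate(STEPS):
        topic = topics[step]
        while not topic.empty():
            sid = topic.get()
            if (step, sid) in completed:
                continue                   # idempotency: duplicate is a no-op
            handlers[step](sid)
            completed.add((step, sid))
            if index + 1 < len(STEPS):
                topics[STEPS[index + 1]].put(sid)   # "publish" completion
    return completed
```

In a real deployment the `completed` set would be replaced by a durable check, for example whether the step's output object already exists in storage.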


Q3: When using Azure Kubernetes Service (AKS) to run a machine learning model on microbiome data, the Horizontal Pod Autoscaler (HPA) fails to scale out during prediction bursts, causing timeouts. How do I diagnose and fix this?

A: This is often due to the HPA relying on default CPU metrics that don't reflect the actual workload bottleneck.

Azure Protocol:

- Enable Custom Metrics: Deploy the Azure Monitor for containers agent (if not already running). Instrument your application to emit a custom metric, such as `prediction_queue_length` or `request_duration_seconds`, to Azure Monitor.

- Install KEDA: Rather than wiring up a Prometheus Adapter, use the KEDA (Kubernetes Event-driven Autoscaling) add-on for AKS, which is simpler and more powerful for event-driven scaling.

- Create a ScaledObject: Define a KEDA `ScaledObject` for your deployment in place of a hand-written HPA. KEDA creates and manages an HPA for the target itself, so a second HPA on the same deployment would conflict.

- Test: Use a load testing tool (e.g., `vegeta`) to simulate a prediction burst and observe KEDA scaling the pods based on the actual application metric.
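The scaling decision driven by a queue-length metric can be approximated with a simple ratio rule, similar in spirit to how the Kubernetes HPA handles average-value targets. This is a simplified sketch: `desired_replicas` and its parameters are hypothetical, and real KEDA behaviour also involves polling intervals, cooldown periods, and stabilization windows that are not modelled here.

```python
import math


def desired_replicas(queue_length: int, target_per_replica: int,
                     min_replicas: int = 0, max_replicas: int = 10) -> int:
    """Replicas needed so each pod handles about target_per_replica queued items."""
    if target_per_replica <= 0:
        raise ValueError("target_per_replica must be positive")
    wanted = math.ceil(queue_length / target_per_replica)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    for backlog in (0, 12, 1000):
        print(backlog, desired_replicas(backlog, target_per_replica=5))
```

Working through a few backlog values by hand in this way helps choose a target and replica bounds before running the load test.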


Comparative Quantitative Data: Scaling Performance & Cost

Table 1: Managed Kubernetes Auto-scaling Latency (Pod from 0 to Ready)

| Cloud Provider | Service | Average Scale-Out Latency (Cold Start) | Key Influencing Factor |
|---|---|---|---|
| AWS | EKS with Fargate | 60-90 seconds | Image pull & ENI attachment |
| GCP | GKE with Cloud Run for Anthos | 20-45 seconds | Cached container images |
| Azure | AKS with KEDA & Container Instances | 10-30 seconds | Event trigger speed & container pool warm-up |

Table 2: Cost Efficiency for Batch Genomic Processing (Per 10,000 Samples)

| Architecture Pattern | Estimated Compute Cost (USD) | Estimated Data Transfer Cost (USD) | Optimal For |
|---|---|---|---|
| AWS Batch (Spot) + S3 | $120 - $180 | $4.50 | Fault-tolerant, non-urgent workloads |
| GCP Life Sciences API + Preemptible VMs + Cloud Storage | $105 - $160 | $5.00 | Short-duration, massively parallel tasks |
| Azure Batch + Low-Priority VMs + Blob Storage | $130 - $200 | $3.75 | Hybrid workloads with Azure dependencies |

Experimental Protocol: Benchmarking Elastic Scaling Efficiency for Metagenomic Assembly

Objective: To measure the time-to-completion and cost efficiency of a standard metagenomic assembly pipeline (using MEGAHIT) under dynamically scaling cloud architectures.

Methodology:

- Dataset: The publicly available Tara Oceans microbiome dataset (sample: ERR599031).

- Containerization: Package the MEGAHIT assembler v1.2.9 and dependencies into identical Docker images for AWS, GCP, and Azure.

- Orchestration Setup:

  - AWS: Configure an AWS Batch Compute Environment with a mix of Fargate (for small jobs) and EC2 Spot queues (for assembly). Use a CloudWatch Events rule to shift jobs to EC2 if Fargate fails.

  - GCP: Set up a Cloud Life Sciences pipeline with an auto-scaling MIG (Managed Instance Group) of preemptible VMs. Use Cloud Monitoring (formerly Stackdriver) to trigger scaling.

  - Azure: Configure an AKS cluster with KEDA. Create a `ScaledObject` that scales the MEGAHIT job pods based on the number of messages in an Azure Storage Queue containing sequencing file IDs.

- Execution: Submit the same job (assembly of 10x subsampled reads) 20 times on each platform during different times of day.

- Metrics: Log for each run: `start_time`, `end_time`, `compute_unit_seconds`, `cost_estimate`, and `success_flag`.
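The logged fields lend themselves to a small per-platform summary. The sketch below assumes each run is stored as a record with exactly the five fields named in the protocol; the `Run` class, the `summarize` function, and the choice of summary statistics are illustrative and not part of the original benchmark.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass
class Run:
    start_time: datetime
    end_time: datetime
    compute_unit_seconds: float
    cost_estimate: float      # USD
    success_flag: bool


def summarize(runs: list[Run]) -> dict:
    """Aggregate one platform's runs; time and cost means use successful runs only."""
    if not runs:
        raise ValueError("no runs to summarize")
    ok = [r for r in runs if r.success_flag]
    return {
        "success_rate": len(ok) / len(runs),
        "mean_wall_seconds": mean(
            (r.end_time - r.start_time).total_seconds() for r in ok
        ) if ok else None,
        "mean_cost_usd": mean(r.cost_estimate for r in ok) if ok else None,
        # Failed runs still consume compute, so they count toward the total.
        "total_compute_unit_seconds": sum(r.compute_unit_seconds for r in runs),
    }
```

Reporting failed runs separately from the means keeps preempted or interrupted attempts from skewing time-to-completion while still charging their compute to the platform.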

Diagrams

Title: Cloud Provider Selection Logic for Elastic Scaling

Title: Troubleshooting Scaling Issues Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Cloud-Native "Reagents" for Large-Scale Microbiome Analysis

| Item (Cloud Service) | Function in the "Experiment" | Key Configuration Parameter |
|---|---|---|
| Container Registry (ECR, GCR, ACR) | Stores and versions Docker images containing analysis tools (QIIME2, MetaPhlAn, etc.). | Image scan on push, lifecycle policies. |
| Object Storage (S3, Cloud Storage, Blob) | Holds raw sequencing files (.fastq), intermediate outputs, and final results. Immutable and scalable. | Storage class (e.g., Standard vs. Archive), access tiers. |
| Managed Kubernetes Service (EKS, GKE, AKS) | The "petri dish" where containerized workflows interact, scale, and are observed. | Node pool composition (spot/preemptible). |
| Workflow Orchestrator (AWS Step Functions, Cloud Composer, Logic Apps) | Defines the sequence of pipeline steps (QC, assembly, annotation) and handles failures. | State management, retry policies. |
| Observability Suite (CloudWatch, Operations Suite, Monitor) | The "microscope" – provides logs, metrics, and traces to diagnose pipeline health and efficiency. | Custom metric definitions, alert thresholds. |

Debugging and Accelerating Your Analysis: Practical Fixes for Common Performance Issues