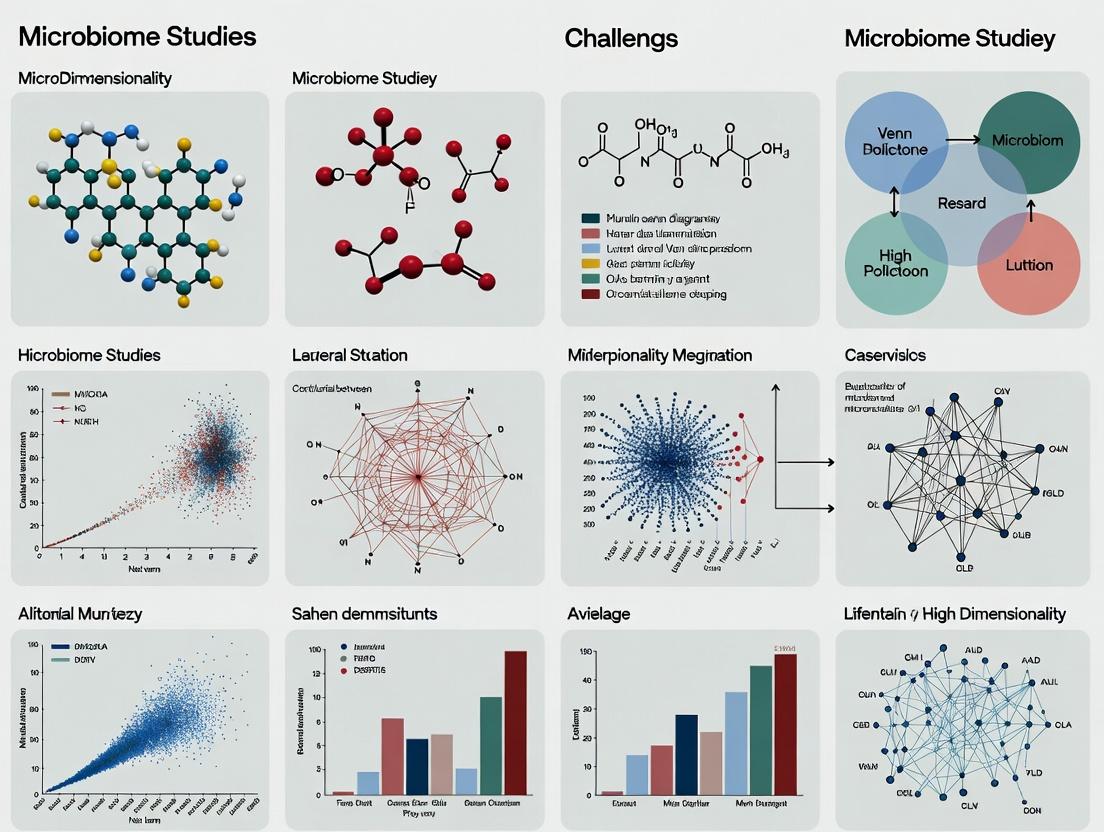

Navigating the p>>n Problem in Microbiome Research: Strategies for High-Dimensional Data Analysis



Abstract

Microbiome studies inherently face the 'curse of dimensionality,' where the number of microbial features (p) vastly exceeds the number of samples (n). This p>>n paradigm presents formidable statistical and computational challenges, including overfitting, model instability, and spurious correlations. This article provides a comprehensive guide for researchers and drug development professionals, addressing the foundational nature of the problem, reviewing specialized methodological approaches, offering practical troubleshooting and optimization strategies, and evaluating validation frameworks. By synthesizing current best practices, we aim to equip scientists with the tools to derive robust, biologically meaningful insights from complex, high-dimensional microbial datasets and translate them into clinical and therapeutic applications.

Understanding the p>>n Paradigm: Why Microbiome Data is Inherently High-Dimensional

The "p>>n" problem, where the number of features (p) vastly exceeds the number of samples (n), is a fundamental and pervasive challenge in modern microbiome research. This high-dimensional data landscape introduces significant statistical and computational hurdles for reliable biological inference. This whitepaper, framed within a broader thesis on the challenges of high dimensionality in microbiome studies, provides an in-depth technical analysis of the p>>n problem as it manifests in the two primary microbial profiling techniques: 16S rRNA gene sequencing and shotgun metagenomics. We examine the origins, scale, and consequences of this dimensionality issue for researchers, scientists, and drug development professionals.

The Dimensionality Landscape: A Quantitative Comparison

The scale of the p>>n problem differs dramatically between 16S rRNA and shotgun metagenomics approaches, primarily due to their resolution.

Table 1: Dimensionality Scale in Typical Microbiome Studies

| Aspect | 16S rRNA Gene Sequencing | Shotgun Metagenomics |
|---|---|---|
| Feature Type | Amplicon Sequence Variants (ASVs) or Operational Taxonomic Units (OTUs) | Microbial Genes (e.g., from MGnify, UniRef90 clusters) |
| Typical p (Features) | 100 – 10,000 ASVs/OTUs per study | 1 – 10 million gene families per study |
| Typical n (Samples) | 10 – 1,000 (often <200 in cohort studies) | 10 – 1,000 (similar scale to 16S) |
| Dimensionality Ratio (p/n) | ~1 to 100 | ~1,000 to 100,000+ |
| Primary Source of High p | Taxonomic diversity within and across samples | Functional gene diversity across the microbial pangenome |
| Data Sparsity | High (many zero counts) | Extreme (majority of genes absent in most samples) |
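The p/n ratio and sparsity rows of the table above can be computed directly from any feature table. The minimal Python sketch below assumes a plain list-of-lists layout, with features as rows and samples as columns. The function name and the toy counts are hypothetical.

```python
def dimensionality_summary(table):
    """Summarize a feature table given as p rows (features) by n columns (samples)."""
    p = len(table)
    n = len(table[0]) if p else 0
    cells = p * n
    zeros = sum(1 for row in table for count in row if count == 0)
    return {
        "p": p,
        "n": n,
        "p_over_n": p / n if n else float("inf"),
        "sparsity": zeros / cells if cells else 0.0,  # fraction of zero entries
    }

# Hypothetical ASV x sample counts: 6 features measured in 3 samples.
toy_table = [
    [120, 0, 35],
    [0, 0, 4],
    [15, 80, 0],
    [0, 2, 0],
    [0, 0, 0],
    [7, 0, 1],
]
print(dimensionality_summary(toy_table))
```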

Experimental Protocols Contributing to High p

16S rRNA Gene Sequencing Protocol (Key Steps)

- DNA Extraction & PCR Amplification: Genomic DNA is extracted from samples (e.g., stool, swab). The hypervariable regions (e.g., V4) of the 16S rRNA gene are amplified using universal bacterial/archaeal primers.

- Library Preparation & Sequencing: Amplified products are indexed, pooled, and sequenced on platforms like Illumina MiSeq or NovaSeq, generating millions of paired-end reads.

- Bioinformatic Processing (DADA2 pipeline example):

  - Quality Filtering & Denoising: Reads are trimmed, filtered for quality, and error-corrected to infer exact Amplicon Sequence Variants (ASVs).

  - Chimera Removal: Artifactual sequences are identified and removed.

  - Taxonomy Assignment: ASVs are classified against a reference database (e.g., SILVA, Greengenes) using a naive Bayesian classifier.

- Output: A feature table (ASV x Sample count matrix) and taxonomy map.

Shotgun Metagenomic Sequencing Protocol (Key Steps)

- DNA Extraction & Fragmentation: Community DNA is extracted and mechanically or enzymatically fragmented.

- Library Preparation & Sequencing: Fragments are size-selected, adaptor-ligated, and sequenced on short-read (Illumina) or long-read (PacBio, Oxford Nanopore) platforms without target amplification.

- Bioinformatic Processing (MetaPhlAn & HUMAnN3 pipeline example):

  - Host DNA Removal: Reads aligning to a host reference genome (e.g., human) are filtered out.

  - Taxonomic Profiling: Reads are aligned to clade-specific marker databases (MetaPhlAn).

  - Functional Profiling: Reads are mapped to species pangenomes with `bowtie2`. Reads that remain unmapped are then searched against a comprehensive protein database (e.g., UniRef90) with DIAMOND. Alternatively, reads can be assembled and gene families quantified with tools like `salmon`. Pathways are reconstructed via HUMAnN3 using the MinPath algorithm.

- Output: Taxonomic profile, gene family abundance table (Gene x Sample), and pathway abundance table (Pathway x Sample).

Statistical Consequences of p>>n

The p>>n regime leads to several critical challenges:

- Curse of Dimensionality: In high-dimensional space, samples become sparse, making distance metrics less meaningful and reducing statistical power.

- Overfitting: With far more features than samples, models can fit the n training samples perfectly by capitalizing on random noise in the p-dimensional feature space, yet fail to generalize to new samples. The risk is extreme in shotgun data.

- Multiple Testing: Correcting for false discoveries (e.g., using FDR) across millions of tests (shotgun) requires extremely small p-values, drastically reducing power.

- Collinearity & Non-Independence: Microbial features (taxa, genes) are highly correlated due to ecology and evolution, violating assumptions of many statistical models.

- Compositionality: Data are relative abundances (sum-constrained), not absolute counts, creating spurious correlations.
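The compositionality effect can be checked with a small simulation. In the sketch below, three taxa have absolute abundances drawn independently, so their true correlation is zero. Converting each sample to proportions (closure) then induces a clearly negative correlation between taxa A and B, typically near -0.5 for three equally variable taxa. The taxa, value ranges, sample count, and seed are arbitrary assumptions chosen for illustration.

```python
import random

def closure(row):
    """Convert absolute abundances to proportions that sum to 1."""
    total = sum(row)
    return [x / total for x in row]

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

random.seed(1)
# Three taxa whose absolute abundances are independent: no true association.
absolute = [[random.uniform(50, 150) for _ in range(3)] for _ in range(5000)]
relative = [closure(sample) for sample in absolute]

r_absolute = pearson([s[0] for s in absolute], [s[1] for s in absolute])
r_relative = pearson([s[0] for s in relative], [s[1] for s in relative])
print(f"taxon A vs B, absolute abundances: {r_absolute:+.2f}")
print(f"taxon A vs B, relative abundances: {r_relative:+.2f}")
```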

The Scientist's Toolkit: Research Reagent & Computational Solutions

Table 2: Essential Toolkit for Addressing p>>n in Microbiome Studies

| Category | Item/Reagent/Tool | Function in Addressing p>>n |
|---|---|---|
| Wet-Lab & Reagents | ZymoBIOMICS Spike-in Controls (e.g., Log Distribution) | Quantifies technical variation and enables data normalization, mitigating batch effects that exacerbate dimensionality issues. |
| | Standardized DNA Extraction Kits (e.g., magnetic-bead MagAttract or spin-column DNeasy PowerSoil) | Provides reproducible, high-yield DNA extraction, reducing technical noise that contributes to spurious high-dimensional variance. |
| | Unique Molecular Identifiers (UMIs) | Tags individual DNA molecules pre-PCR to correct for amplification bias, improving accuracy of feature counts. |
| Bioinformatic & Statistical Tools | ALDEx2, ANCOM-BC | Statistical models designed for compositional data to identify differentially abundant features while controlling for false positives. |
| | DESeq2 (with modifications), edgeR | Negative binomial-based differential abundance tools, adapted for sparse microbiome data after careful filtering. |
| | SparCC, SPIEC-EASI, FlashWeave | Methods to infer microbial association networks that account for compositionality and sparsity. |
| | PERMANOVA (adonis2) | Non-parametric multivariate test for assessing group differences in community structure, robust to high p. |
| | glmnet, sPLS-DA (mixOmics) | Regularized regression (Lasso, Elastic Net) and sparse partial least squares for predictive modeling and feature selection in p>>n settings. |
| Reference Databases | SILVA, GTDB | High-quality taxonomic databases for 16S classification, reducing feature misclassification. |
| | UniRef, KEGG, EggNOG | Curated functional databases for shotgun annotation, enabling aggregation of genes into fewer, meaningful functional units. |

Mitigation Strategies: A Technical Guide

Table 3: Strategy Comparison for Mitigating p>>n Challenges

| Strategy | Application to 16S Data | Application to Shotgun Data | Primary Benefit |
|---|---|---|---|
| Dimensionality Reduction | Phylogeny-based (UniFrac) or count-based (Bray-Curtis) ordination (PCoA). | PCoA on functional distance (e.g., Bray-Curtis on pathway abundance). | Visualizes and tests community differences in lower-dimensional space. |
| Feature Aggregation | Roll up ASVs to Genus or Family level. | Aggregate genes into MetaCyc pathways or Enzyme Commission numbers. | Reduces p by using biologically relevant, less sparse units. |
| Regularization | Use LASSO regression to select predictive taxa for a phenotype. | Apply elastic net to identify key microbial genes associated with a disease state. | Performs automated feature selection to prevent overfitting. |
| Increased Sample Size | Multi-study integration via meta-analysis or federated learning. | Leverage large public repositories (e.g., MG-RAST, EBI Metagenomics). | Directly increases n to balance the p/n ratio. |
| Causal Inference & Validation | Animal model colonization experiments with identified taxa. | Culture-based assays or genetic manipulation of implicated microbial functions. | Moves beyond correlation to establish mechanistic proof, overcoming limitations of high-dimensional observational data. |
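Feature aggregation (the second strategy in the table) amounts to summing counts over features that share a taxonomic label. The minimal sketch below assumes counts keyed by ASV ID and a separate ASV-to-genus lookup; both are hypothetical. ASVs without a genus assignment are pooled into a single "Unassigned" bin. That is one design choice among several: such ASVs could also be dropped or kept at a higher rank.

```python
from collections import defaultdict

def aggregate_by_rank(counts, labels, unassigned="Unassigned"):
    """Sum per-sample counts of features sharing a label (e.g., genus).

    counts: {feature_id: [count per sample]}
    labels: {feature_id: label}; features missing from labels go to `unassigned`.
    """
    n_samples = len(next(iter(counts.values())))
    grouped = defaultdict(lambda: [0] * n_samples)
    for feature, row in counts.items():
        key = labels.get(feature) or unassigned
        grouped[key] = [a + b for a, b in zip(grouped[key], row)]
    return dict(grouped)

# Hypothetical ASV counts across three samples and a partial genus assignment.
asv_counts = {
    "ASV1": [10, 0, 5],
    "ASV2": [0, 3, 0],
    "ASV3": [4, 4, 0],
    "ASV4": [0, 0, 7],
}
asv_genus = {"ASV1": "Bacteroides", "ASV2": "Bacteroides", "ASV3": "Faecalibacterium"}
genus_counts = aggregate_by_rank(asv_counts, asv_genus)
print(genus_counts)
```

In this toy case p drops from 4 ASVs to 3 genus-level features, and the merged Bacteroides row is less sparse than either of its constituent ASVs.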

Within microbiome research, the "p>>n" problem—where the number of features (p; e.g., microbial taxa, genes) vastly exceeds the number of samples (n)—presents a fundamental analytical challenge. This high-dimensionality is not an artifact but a direct consequence of two converging forces: relentless technological advances in sequencing and multi-omics profiling, and the inherent, staggering biological complexity of microbial communities. This whitepaper dissects these root causes, detailing how they drive dimensionality and create both opportunities and significant methodological hurdles in statistical inference, biomarker discovery, and causal interpretation in drug development and translational research.

Technological Drivers of Dimensionality

Next-generation sequencing (NGS) and subsequent technological leaps have exponentially increased the measurable feature space.

Evolution of Sequencing Depth and Multi-Omics Layers

Recent platforms enable deep, cost-effective profiling, moving beyond 16S rRNA gene sequencing to whole-metagenome shotgun (WMS) and meta-transcriptomics, which capture millions of microbial genes and their expression.

Diagram Title: Tech Advances Expanding Feature Space

Table 1: Quantitative Leap in Features from Sequencing Technologies

| Technology | Typical Features Measured | Approximate Features (p) per Sample | Key Driver of Dimensionality |
|---|---|---|---|
| 16S rRNA (V4 region) | Operational Taxonomic Units (OTUs) / ASVs | 10² - 10³ | Hypervariable region sequencing depth |
| Shotgun Metagenomics | Microbial Gene Families (e.g., UniRef90 clusters) | 10⁵ - 10⁶ | Sequencing depth (Gbp/sample), assembly methods |
| Metatranscriptomics | Expressed Transcripts | 10⁵ - 10⁶ | RNA-seq depth, host RNA depletion efficiency |
| Integrated Multi-Omics | Genes, Transcripts, Proteins, Metabolites | 10⁶ - 10⁷+ | Data fusion from multiple platforms |

Experimental Protocol: Deep Shotgun Metagenomic Sequencing

- Sample Preparation: Microbial DNA extraction using bead-beating and column-based purification kits (e.g., QIAamp PowerFecal Pro DNA Kit). Include internal spike-in controls for quantification.

- Library Construction: Use Illumina DNA Prep kit for tagmentation-based library prep. Size selection (typically ~350-550bp insert size) is performed using magnetic beads.

- Sequencing: Run on Illumina NovaSeq X Plus platform targeting 5-10 Gb of paired-end (2x150 bp) data per sample for adequate depth in complex communities.

- Bioinformatic Processing: (1) Quality trimming with Trimmomatic. (2) Host read subtraction using KneadData against human reference. (3) De novo assembly with MEGAHIT or metaSPAdes. (4) Gene prediction and annotation using Prokka or alignment to integrated gene catalogs like the Unified Human Gastrointestinal Genome (UHGG) collection.

Biological Drivers of Dimensionality

The technological capacity is matched by the intrinsic complexity of the microbiome itself.

Microbial Diversity and Functional Redundancy

A single human gut sample can contain thousands of bacterial species, each with thousands of genes, many of which are functionally overlapping but phylogenetically distinct.

Multi-Kingdom Interactions and Host Modulation

The feature space expands beyond bacteria to include archaea, viruses (virome), fungi (mycobiome), and host-microbe interaction molecules (e.g., immune receptors, metabolites).

Diagram Title: Biological Complexity from Multi-Kingdom Interactions

Table 2: Biological Contributors to High-Dimensional Feature Space

| Biological Component | Estimated Features Added | Nature of Complexity | Impact on p>>n |
|---|---|---|---|
| Bacterial Strain Diversity | 10³ - 10⁴ strains per gut | Single-nucleotide variants (SNVs), mobile genetic elements | Massive increase in p at sub-species level |
| Viral Particles (Virome) | 10⁴ - 10⁵ viral contigs | High mutation rates, strain-specific phage-bacteria links | Adds an orthogonal, high-dimensional layer |
| Microbial Metabolites | 10³ - 10⁴ measurable compounds | Products of microbial metabolism, diet interaction | Functional readout, but adds correlated features |
| Host Gene Expression Response | 10⁴ - 2×10⁴ human genes | Individual-specific immune and mucosal response | Integrative models dramatically increase p |

Experimental Protocol: Multi-Omics Sample Processing for Host-Microbe Studies

- Concurrent Sampling: From a single biopsy or fecal sample, aliquot material for DNA (WMS), RNA (host transcriptomics & metatranscriptomics), and metabolites.

- Metabolite Profiling: (1) Perform liquid chromatography-mass spectrometry (LC-MS). Use reversed-phase and HILIC columns for broad polar/non-polar coverage. (2) Standards: Include internal deuterated standards for quantification. (3) Data Processing: Use XCMS for peak picking, alignment, and annotation against libraries like METLIN.

- Host Transcriptomics: (1) RNA extraction with poly-A selection for mRNA enrichment. (2) Library prep using Illumina Stranded mRNA Prep. (3) Sequence to depth of 30-50 million reads per sample. (4) Map to human reference genome (GRCh38) using STAR aligner.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for High-Dimensional Microbiome Research

| Item Name | Vendor Examples | Function in Context of High-Dimensionality |
|---|---|---|
| Bead-Beating Tubes (0.1mm & 0.5mm beads) | MP Biomedicals, Qiagen | Mechanical lysis of diverse microbial cell walls (Gram+, Gram-, spores) for unbiased DNA/RNA extraction. |
| Host Depletion Kits (RNA/DNA) | NEBNext Microbiome DNA Enrichment Kit, QIAseq FastSelect | Deplete host (human) nucleic acids to increase sequencing depth on microbial targets, improving feature detection. |
| Mock Microbial Community Standards (DNA) | ATCC MSA-1000, ZymoBIOMICS | Quantitative controls for benchmarking sequencing platform performance, bioinformatic pipelines, and estimating false discovery rates in high-dimensional data. |
| Stable Isotope-Labeled Internal Standards (for Metabolomics) | Cambridge Isotope Laboratories, Sigma-Isotopes | Enable absolute quantification of microbial metabolites in complex LC-MS runs, critical for integrating metabolomic data into multi-omics models. |
| Unique Molecular Identifiers (UMI) Adapter Kits | Illumina TruSeq UMI, Oxford Nanopore | Tag individual molecules pre-amplification to correct for PCR bias and sequencing errors, improving accuracy of gene/transcript abundance estimates. |
| High-Performance Computing Cluster with >1TB RAM & SLURM | AWS, Google Cloud, On-premise | Essential for processing and storing terabyte-scale multi-omics datasets and for running dimensionality reduction (e.g., PLS), batch correction and meta-analysis (e.g., MMUPHin), or regularized regressions. |

In microbiome studies, the fundamental statistical challenge is the high-dimensional setting where the number of features (p; e.g., microbial taxa, genes, metabolites) vastly exceeds the number of samples (n). This p>>n paradigm renders many classical statistical methods invalid and amplifies three core challenges: overfitting, multicollinearity, and the curse of dimensionality. This guide examines these challenges within the context of modern microbiome research, which seeks to link microbial communities to host phenotypes, disease states, and therapeutic interventions.

The Dimensionality Problem in Microbiome Data

Microbiome data, typically generated via 16S rRNA amplicon sequencing or shotgun metagenomics, is characterized by extreme sparsity and high dimensionality. A single study may profile thousands of Operational Taxonomic Units (OTUs) or microbial genes across fewer than one hundred human hosts.

Table 1: Characteristic Scale of Dimensionality in Microbiome Studies

| Data Type | Typical Number of Features (p) | Typical Sample Size (n) | p/n Ratio |
|---|---|---|---|
| 16S rRNA (Genus Level) | 500 - 1,000 | 50 - 200 | 2.5 - 20 |
| Shotgun Metagenomics (KEGG Orthologs) | 3,000 - 10,000 | 100 - 500 | 6 - 100 |
| Metatranscriptomics | 10,000 - 50,000+ | 20 - 100 | 100 - 2,500 |

Core Challenge 1: Overfitting

Overfitting occurs when a model learns not only the underlying signal but also the noise specific to the training dataset, leading to poor performance on new, unseen data. In p>>n scenarios, the risk is extreme.
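The sketch below shows why the risk is extreme. With as many features as samples (p = n), an ordinary least-squares fit reproduces even pure-noise labels exactly, yet on fresh samples it performs no better than chance. It uses only the Python standard library, simulated Gaussian features, and random ±1 labels. All sizes and the seed are arbitrary choices for illustration. When p > n, infinitely many such exact fits exist, so the problem only gets worse.

```python
import random

def solve(a, b):
    """Solve the square system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [row[:] + [bi] for row, bi in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def predict(rows, w):
    return [sum(xi * wi for xi, wi in zip(row, w)) for row in rows]

def mse(pred, y):
    return sum((yhat - t) ** 2 for yhat, t in zip(pred, y)) / len(y)

random.seed(0)
n = p = 20  # as many features as samples
X_train = [[random.gauss(0, 1) for _ in range(p)] for _ in range(n)]
y_train = [random.choice([-1.0, 1.0]) for _ in range(n)]  # labels are pure noise
w = solve(X_train, y_train)  # coefficients that interpolate the noise exactly

X_test = [[random.gauss(0, 1) for _ in range(p)] for _ in range(1000)]
y_test = [random.choice([-1.0, 1.0]) for _ in range(1000)]

train_mse = mse(predict(X_train, w), y_train)
test_mse = mse(predict(X_test, w), y_test)
print(f"training MSE: {train_mse:.2e}   test MSE: {test_mse:.2f}")
```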

Experimental Protocol: Assessing Overfitting via Cross-Validation

- Objective: To train a classifier (e.g., Random Forest, LASSO logistic regression) to distinguish healthy from diseased gut microbiomes and evaluate its generalizability.

- Protocol:

  - Data Preprocessing: Rarefy sequencing data to an even depth. Apply a log-ratio transformation suited to compositional data (e.g., the centered log-ratio, CLR).

  - Data Splitting: Split data into a training set (e.g., 70%) and a hold-out test set (30%). The test set is locked away until final evaluation.

  - Internal Validation: Perform k-fold (e.g., 5-fold) cross-validation only on the training set to tune hyperparameters (e.g., regularization strength λ in LASSO). Any data-driven feature filtering or selection must be repeated within each training fold to avoid information leakage.

  - Final Evaluation: Train the final model with the optimal hyperparameters on the entire training set. Evaluate its performance (AUC, accuracy) on the untouched hold-out test set.

  - Overfitting Diagnostic: A large discrepancy between cross-validation accuracy (e.g., 95%) and hold-out test accuracy (e.g., 65%) indicates severe overfitting.
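The splitting logic of this protocol can be sketched in a few lines. This is a hypothetical, non-stratified version; with imbalanced case/control groups, stratifying by class is usually preferable. The key property is that the k folds are drawn only from the training indices, so the hold-out samples never influence tuning. Function names, the 100-sample size, and the seeds are illustrative assumptions.

```python
import random

def holdout_split(n_samples, test_fraction=0.3, seed=42):
    """Shuffle sample indices once and lock away a test set; returns (train, test)."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    n_test = round(n_samples * test_fraction)
    return sorted(idx[n_test:]), sorted(idx[:n_test])

def kfold(train_indices, k=5, seed=42):
    """Yield (fit, validate) index pairs drawn only from the training set."""
    idx = list(train_indices)
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i in range(k):
        fit = sorted(j for m, fold in enumerate(folds) if m != i for j in fold)
        yield fit, sorted(folds[i])

train_idx, test_idx = holdout_split(100)
for fit_idx, val_idx in kfold(train_idx, k=5):
    # Tune hyperparameters (e.g., LASSO lambda) on fit_idx and score on val_idx.
    print(len(fit_idx), len(val_idx))
# Only after tuning: refit on train_idx and evaluate once on test_idx.
```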

Diagram Title: Workflow for Diagnosing Model Overfitting

Core Challenge 2: Multicollinearity

Microbial taxa exist in ecological networks, leading to strong co-occurrence or mutual exclusion patterns. This multicollinearity—high correlation among predictor variables—inflates variance of coefficient estimates, making them unstable and uninterpretable.

Table 2: Impact of Multicollinearity on Model Stability

| Statistical Method | Consequence of High Multicollinearity (Microbiome Context) | Mitigation Strategy |
|---|---|---|
| Multiple Linear Regression | Coefficients become unstable; small data changes cause large coefficient swings. | Use Regularization (Ridge, Elastic Net). |
| Logistic Regression | Standard errors inflate, p-values lose meaning, variable selection is arbitrary. | Apply LASSO for feature selection. |
| Principal Component Analysis (PCA) | Works effectively to derive uncorrelated components from correlated taxa. | Use PC scores as new features in models. |

Protocol: Diagnosing Multicollinearity with Variance Inflation Factor (VIF)

- Fit a standard linear regression model with one microbial feature as the response and all others as predictors.

- Calculate the R² from this model.

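- Compute VIF = 1 / (1 - R²). A VIF > 10 is a common rule-of-thumb threshold for problematic multicollinearity in that feature.

- In p>>n settings, VIF cannot be computed directly. Once p ≥ n, regressing one feature on the remaining p - 1 features (plus an intercept) generically fits it exactly, so R² = 1 and VIF is undefined. The feature set must first be reduced below n (e.g., by prevalence filtering, taxonomic aggregation, or feature selection). Principal component scores are uncorrelated by construction, so their VIFs are trivially 1.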
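For a feature set already reduced below the sample size, the VIF of every feature can be obtained at once from the identity VIF_j = [R⁻¹]_jj, where R is the correlation matrix of the features. This is equivalent to fitting the per-feature regressions (with intercept) described above. The pure-Python sketch below assumes features are given as equal-length columns. The simulated data, including one feature built as a noisy copy of another, are hypothetical.

```python
import random

def correlation_matrix(columns):
    """Pearson correlation matrix of equal-length feature columns."""
    n = len(columns[0])
    def standardize(col):
        mu = sum(col) / n
        sd = (sum((v - mu) ** 2 for v in col) / (n - 1)) ** 0.5
        return [(v - mu) / sd for v in col]
    z = [standardize(c) for c in columns]
    return [[sum(a * b for a, b in zip(zi, zj)) / (n - 1) for zj in z] for zi in z]

def invert(matrix):
    """Gauss-Jordan matrix inversion with partial pivoting."""
    size = len(matrix)
    aug = [row[:] + [1.0 if i == j else 0.0 for j in range(size)] for i, row in enumerate(matrix)]
    for col in range(size):
        pivot = max(range(col, size), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pv = aug[col][col]
        aug[col] = [v / pv for v in aug[col]]
        for r in range(size):
            if r != col:
                f = aug[r][col]
                aug[r] = [a - f * b for a, b in zip(aug[r], aug[col])]
    return [row[size:] for row in aug]

def vif(columns):
    """VIF_j is the j-th diagonal element of the inverse correlation matrix."""
    if len(columns) >= len(columns[0]):
        raise ValueError("VIF requires fewer features than samples (p < n)")
    inv = invert(correlation_matrix(columns))
    return [inv[j][j] for j in range(len(columns))]

rng = random.Random(7)
n = 500
x1 = [rng.gauss(0, 1) for _ in range(n)]
x2 = [rng.gauss(0, 1) for _ in range(n)]
x3 = [a + 0.1 * rng.gauss(0, 1) for a in x1]  # near-duplicate of x1
x4 = [rng.gauss(0, 1) for _ in range(n)]
print([round(v, 1) for v in vif([x1, x2, x3, x4])])
```

The collinear pair (x1, x3) gets VIFs far above the rule-of-thumb threshold of 10, while the independent features stay close to 1.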

Core Challenge 3: The Curse of Dimensionality

As the number of dimensions (p) increases, the volume of the feature space grows exponentially, making data points exceedingly sparse. Distance metrics lose contrast, and models that rely on density estimation or nearest-neighbor distances degrade sharply.

Protocol: Demonstrating Distance Concentration

- Simulate Data: Generate random data points in increasing dimensions (d=10, 100, 1000) from a uniform distribution.

- Calculate Distances: Compute pairwise Euclidean distances between all points.

- Analyze: Observe the ratio of the standard deviation to the mean of these distances. This ratio shrinks toward zero as d grows, showing that all pairwise distances become similar, which cripples distance-based classifiers (e.g., k-NN) and clustering.
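A minimal simulation of this protocol in pure Python follows. The number of points (50) and the seed are arbitrary assumptions. The reported ratio is the standard deviation divided by the mean of all pairwise Euclidean distances, and under these assumptions it should shrink roughly in proportion to 1/√d.

```python
import itertools
import math
import random
import statistics

def distance_cv(n_points, dim, rng):
    """SD / mean of pairwise Euclidean distances between uniform random points in [0, 1]^dim."""
    points = [[rng.random() for _ in range(dim)] for _ in range(n_points)]
    dists = [math.dist(a, b) for a, b in itertools.combinations(points, 2)]
    return statistics.pstdev(dists) / statistics.mean(dists)

rng = random.Random(0)
for dim in (10, 100, 1000):
    print(f"d = {dim:4d}: SD/mean of distances = {distance_cv(50, dim, rng):.3f}")
```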

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Analytical Tools for High-Dimensional Microbiome Data

| Tool/Reagent | Function in Addressing p>>n Challenges | Example/Note |
|---|---|---|
| Regularized Regression (LASSO/Elastic Net) | Performs simultaneous feature selection & regularization to combat overfitting & multicollinearity. | glmnet R package; critical for deriving sparse microbial signatures. |
| Compositional Data Analysis (CoDA) Tools | Correctly handles relative abundance data (closed-sum constraint). | compositions or robCompositions R packages; uses log-ratio transforms. |
| Permutational Multivariate ANOVA (PERMANOVA) | Tests group differences in microbial community structure using distance matrices, robust to high p. | vegan::adonis2; primary method for beta-diversity analysis. |
| Singular Value Decomposition (SVD) / PCA | Reduces dimensionality, creates uncorrelated components, mitigates the curse. | Foundation for ordination (PCoA) and preprocessing for downstream models. |
| SparCC / SPIEC-EASI | Infers microbial association networks from compositional data, accounting for sparsity. | Provides insights into ecological multicollinearity structure. |
| Stability Selection | Improves feature selection reliability by combining results from multiple subsampled datasets. | c060 R package; increases confidence in selected microbial biomarkers. |
| Bayesian Graphical Models | Models uncertainty explicitly and can incorporate prior knowledge to improve inference in high dimensions. | BDgraph R package for structure learning in microbial networks. |
| Phylogenetic Trees | Provides structured, informative regularization (phylogenetic penalty) in models. | Used in ridgeTree or phylofactor to group related taxa. |

Integrated Analysis Workflow

A robust analytical pipeline for p>>n microbiome data must sequentially address these challenges.

Diagram Title: Integrated Pipeline for High-Dimensional Microbiome Analysis

The p>>n landscape of microbiome research necessitates a paradigm shift from classical statistics to high-dimensional machine learning and careful causal inference. Overfitting is managed through rigorous validation, multicollinearity through regularization or projection, and the curse of dimensionality through feature engineering or modeling assumptions that exploit inherent structure (e.g., phylogeny, compositionality). Success lies in the judicious application of the tools and protocols outlined above, always prioritizing biological interpretability and generalizability over mere algorithmic performance on training data.

1. Introduction

In microbiome studies, the "curse of dimensionality" is a central challenge, characterized by the paradigm where the number of features (p) – such as microbial taxa or functional genes – vastly exceeds the number of samples (n). This p>>n problem is intrinsic to high-throughput sequencing and is compounded at every analytical stage: from 16S rRNA gene-based Operational Taxonomic Units (OTUs) or Amplicon Sequence Variants (ASVs) to shotgun metagenomics-derived functional pathways. The core statistical consequence is the multiple testing burden, where thousands of simultaneous hypotheses are tested, dramatically increasing the likelihood of false positives (Type I errors). This whitepaper provides a technical guide to understanding, quantifying, and mitigating this burden across the microbiome data analysis pipeline.
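To put the burden in numbers, consider a hypothetical 16S study that tests m = 10,000 ASVs at α = 0.05. Write V for the number of false positives and m₀ for the number of true null hypotheses. The family-wise error rate (FWER) expression assumes independent tests with all nulls true:

```latex
\begin{aligned}
\mathbb{E}[V] &= m_0\,\alpha \;\le\; m\,\alpha = 10{,}000 \times 0.05 = 500,\\
\mathrm{FWER} &= 1-(1-\alpha)^{m} = 1-0.95^{10{,}000} \approx 1,\\
\alpha_{\mathrm{Bonf}} &= \alpha/m = 0.05/10{,}000 = 5\times 10^{-6}.
\end{aligned}
```

Up to 500 false positives can therefore be expected by chance alone, and at least one false positive is essentially certain. A Bonferroni-corrected per-test threshold falls to 5 × 10⁻⁶.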

2. The Burden Across Analytical Layers

The multiplicity problem is not monolithic; its scale and nature evolve with data type.

Table 1: Scale of Multiple Testing in Typical Microbiome Studies

| Data Type | Typical Feature Number (p) | Common Tests Performed | Primary Correction Challenge |
|---|---|---|---|
| 16S rRNA (ASVs/OTUs) | 1,000 – 10,000 taxa | Differential abundance (e.g., DESeq2, edgeR, ANCOM-BC), alpha/beta diversity associations. | Sparse count data, compositionality, phylogenetic relatedness. |
| Shotgun Metagenomics (Species/Genes) | 10,000 – 100,000+ microbial genes or MAGs | Differential abundance, co-abundance networks, case-control associations. | Extreme sparsity, functional redundancy, vast feature space. |
| Functional Pathways (e.g., MetaCyc, KEGG) | 300 – 1,000 pathways | Pathway abundance/activity comparisons, multi-omics integration. | Hierarchical structure (genes → pathways), correlated outcomes. |

3. Core Experimental Protocols & Methodologies

Protocol 1: Standard 16S rRNA Amplicon Sequencing & ASV Analysis

- DNA Extraction & PCR: Use a standardized kit (e.g., DNeasy PowerSoil Pro) with bead-beating. Amplify the V4 region using dual-indexed primers (515F/806R).

- Sequencing: Perform 2x250 bp paired-end sequencing on an Illumina MiSeq or NovaSeq platform.

- Bioinformatics (DADA2 Pipeline):

  - Filter & Trim: `filterAndTrim()` with `maxN=0, maxEE=c(2,2), truncQ=2`.

  - Learn Error Rates: `learnErrors()` using a subset of data.

  - Dereplication & ASV Inference: `derepFastq()` followed by `dada()` to resolve exact sequence variants.

  - Merge Pairs & Construct Table: `mergePairs()` and `makeSequenceTable()`.

  - Remove Chimeras: `removeBimeraDenovo()` using the "consensus" method.

  - Taxonomy Assignment: Assign using `assignTaxonomy()` against the SILVA v138 database.

- Statistical Differential Testing: Apply a method like ANCOM-BC which models sample-wise sampling fractions to address compositionality while testing each ASV.

Protocol 2: Shotgun Metagenomic Functional Profiling via HUMAnN 3.0

- Library Preparation & Sequencing: Fragment genomic DNA, prepare Illumina-compatible libraries (insert size ~350bp), sequence with 2x150 bp reads.

- Quality Control & Host Filtering: Use `fastp` for adapter trimming and quality filtering. Align reads to a host genome (e.g., GRCh38) with `Bowtie2` and retain non-aligned reads.

- Metagenomic Assembly & Gene Calling: Co-assemble all quality-controlled reads using `MEGAHIT`. Predict open reading frames on contigs with `Prodigal`.

- Functional Profiling with HUMAnN 3:

  - Step A – Taxonomic Profiling: Run MetaPhlAn 3 against its clade-specific marker database to identify the species present in each sample.

  - Step B – Custom Nucleotide Search: Per sample, map reads with `Bowtie2` to the ChocoPhlAn pangenomes of the species detected in Step A.

  - Step C – Translated Search: Align reads left unmapped in Step B to the UniRef90 protein database using `diamond`.

  - Step D – Pathway Reconstruction: Regroup gene families into MetaCyc pathways via `minpath` to compute pathway abundance and coverage.

4. Statistical Mitigation Strategies & Visualization

Standard corrections like Bonferroni are overly conservative for correlated microbiome data. Hierarchical and correlation-aware methods are preferred.

Table 2: Statistical Methods for Multiple Test Correction

| Method | Principle | Best Applied To | Tool/Implementation |
|---|---|---|---|
| Benjamini-Hochberg (FDR) | Controls the False Discovery Rate. Less conservative than FWER methods. | Initial broad screening of differential ASVs/genes. | p.adjust(method="BH") in R. |
| q-value | Estimates the proportion of true null hypotheses from the p-value distribution. | Large-scale metagenomic association studies. | qvalue package in R/Bioconductor. |
| Independent Hypothesis Weighting (IHW) | Uses a covariate (e.g., mean abundance) to weight hypothesis tests, improving power. | Differential abundance testing with a covariate that is informative of power but independent of the p-values under the null. | IHW package in R/Bioconductor. |
| Correlation-adjusted gene set testing (e.g., CAMERA) | Accounts for inter-gene correlation in competitive gene set tests. | Pathway enrichment analysis from gene-level stats. | camera() in the limma R package. |
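The Benjamini-Hochberg adjustment is short enough to write out. This sketch follows the standard step-up definition, which R's p.adjust(method="BH") also uses. It sorts the p-values, scales each by m/rank, then takes a running minimum from the largest rank downward so the adjusted values stay monotone and capped at 1. The input p-values are made up for illustration.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the original input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])  # indices from smallest to largest p
    adjusted = [0.0] * m
    running_min = 1.0  # starting at 1 also caps adjusted values at 1
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

raw = [0.01, 0.04, 0.03, 0.005]
print([round(q, 3) for q in benjamini_hochberg(raw)])  # prints [0.02, 0.04, 0.04, 0.02]
```

For comparison, Bonferroni would multiply every p-value by m = 4, giving [0.04, 0.16, 0.12, 0.02]. That gap widens as m grows into the thousands.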

Diagram 1: Microbiome Analysis Flow & Multiple Test Points

Diagram 2: Hierarchical Testing Strategy for Pathways

5. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Kits for Featured Protocols

| Item Name | Provider/Example | Function in Workflow |
|---|---|---|
| Bead-Beating, Spin-Column DNA Extraction Kit | DNeasy PowerSoil Pro Kit (Qiagen) | Efficient lysis of microbial cells and inhibitor removal for consistent yield from complex samples (feces, soil). |
| 16S rRNA Gene Amplification Primers | 515F (Parada) / 806R (Apprill) | Target the V4 hypervariable region for high-fidelity amplification and minimal bias in bacterial/archaeal community profiling. |
| Shotgun Metagenomic Library Prep Kit | Nextera XT DNA Library Prep Kit (Illumina) | Uses transposase-based tagmentation to fragment DNA and attach adapter sequences in one step, followed by limited-cycle PCR to add indexes for Illumina sequencing. |
| Functional Reference Database | UniRef90 (for HUMAnN3) | A clustered non-redundant protein database enabling fast and comprehensive translated search of metagenomic reads. |
| Pathway Reference Database | MetaCyc | A curated database of metabolic pathways used for accurate pathway abundance inference and coverage analysis. |
| Positive Control Mock Community | ZymoBIOMICS Microbial Community Standard | A defined mix of bacterial/fungal cells used to evaluate extraction, sequencing, and bioinformatics pipeline performance. |

In microbiome studies, the challenge of high dimensionality, where the number of features (p; microbial taxa, genes, pathways) vastly exceeds the number of samples (n), is a fundamental obstacle. This whitepaper deconstructs this "p>>n" problem through the intertwined lenses of sparsity, compositionality, and biological variance. We provide a technical guide for distinguishing true biological signal from statistical and technical noise, offering current methodologies, experimental protocols, and analytical toolkits for robust research and drug development.

The p>>n Paradigm in Microbiome Research

Microbiome data is intrinsically high-dimensional. A typical 16S rRNA gene sequencing study may yield hundreds to thousands of Amplicon Sequence Variants (ASVs) per sample, while shotgun metagenomics can generate data on millions of microbial genes. Sample sizes (n), constrained by cost, recruitment, and processing throughput, often number in the tens to hundreds. This p>>n scenario violates classical statistical assumptions, inflates false discovery rates, and complicates predictive modeling.

Table 1: Characteristic Dimensions in Microbiome Studies

| Study Type | Typical Features (p) | Typical Samples (n) | p/n Ratio | Primary Data Type |
|---|---|---|---|---|
| 16S rRNA Amplicon | 500 - 10,000 ASVs | 50 - 500 | 1 - 200 | Compositional Counts |
| Shotgun Metagenomics | 1M - 10M Genes | 100 - 1000 | 1000 - 100,000 | Compositional Counts |
| Metatranscriptomics | 1M - 10M Transcripts | 20 - 200 | 5000 - 500,000 | Compositional Counts |
| Metabolomics (Host & Microbial) | 500 - 10,000 Metabolites | 50 - 300 | ~2 - 200 | Continuous Abundance |

Core Conceptual Challenges

Sparsity

Microbial count data is sparse, with a majority of zeros. These zeros can represent true biological absence (structural zero) or undersampling due to limited sequencing depth (sampling zero).

Table 2: Sources and Implications of Data Sparsity

| Source of Zero | Description | Implication for p>>n Analysis |
|---|---|---|
| Structural Zero | Taxon is genuinely absent from the niche. | Represents true biological signal; can inform niche specialization. |
| Sampling Zero | Taxon is present but undetected due to finite sequencing depth. | Major source of noise; leads to biased diversity estimates and inflated beta-dispersion. |
| Technical Zero | Artifact of DNA extraction, PCR dropout, or sequencing error. | Pure noise; can create spurious correlations and batch effects. |

Compositionality

Microbiome sequencing data provides relative, not absolute, abundance. The total count per sample (library size) is an arbitrary constraint imposed by sequencing technology. This compositionality induces a negative bias in correlation estimates and confounds differential abundance testing.
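The negative bias can be seen in a small simulation. The sketch below (Python with NumPy; the data are simulated and the `closure` helper is hypothetical) draws independent absolute abundances, converts them to proportions, and checks an algebraic consequence of the unit-sum constraint: each row of the proportions' covariance matrix sums to zero, so every taxon's covariances with the other taxa must sum to minus its own variance, regardless of the underlying biology.

```python
import numpy as np


def closure(counts):
    """Rescale each sample (row) to sum to 1, as sequencing effectively does."""
    counts = np.asarray(counts, dtype=float)
    return counts / counts.sum(axis=1, keepdims=True)


rng = np.random.default_rng(0)
# Simulated absolute abundances: 200 samples x 5 taxa, drawn independently,
# so the true between-taxon covariances are zero.
absolute = rng.lognormal(mean=3.0, sigma=1.0, size=(200, 5))
props = closure(absolute)

cov_prop = np.cov(props, rowvar=False)

# cov(p_i, sum_j p_j) = cov(p_i, 1) = 0, so every row of cov_prop sums to ~0 ...
row_sums = cov_prop.sum(axis=1)
# ... which forces each taxon's summed covariance with the other taxa to equal
# minus its own variance, i.e. to be negative.
off_diag_sums = row_sums - np.diag(cov_prop)

print(np.round(row_sums, 12))
print(off_diag_sums)
```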

Biological Variance

Intrinsic biological variation—from host genetics, diet, environment, and temporal dynamics—is often large. In p>>n settings, disentangling this true biological variance from noise associated with sparsity and compositionality is paramount.

Methodological Framework and Experimental Protocols

Protocol for Controlled Spike-Ins to Quantify Technical Noise

Objective: To distinguish technical zeros from biological zeros and calibrate abundance estimates. Reagents: Defined microbial communities (e.g., ZymoBIOMICS Microbial Community Standards) or synthetic nucleic-acid spikes (e.g., External RNA Controls Consortium (ERCC) RNA spikes for metatranscriptomic workflows). Procedure:

- Spike-in Design: Introduce a known quantity of foreign cells or DNA sequences not found in the native sample across a dilution series.

- Sample Processing: Split each biological sample into technical replicates. Add spike-ins at the lysis step.

- Sequencing: Process all samples in a single sequencing run to minimize batch effects.

- Analysis: Model the relationship between observed read count and expected spike-in abundance. Use this model to infer the limit of detection and classify zeros in the native data.
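A minimal sketch of the analysis step, in Python with NumPy. It assumes a dilution series with technical replicates, fits a log-log calibration line to the non-zero spike-in counts, and defines the limit of detection (LOD) as the lowest input level detected in every replicate. That LOD definition, the function names, and the example numbers are illustrative assumptions rather than a standard.

```python
import numpy as np


def calibrate_spikeins(expected, observed, min_detect_frac=1.0):
    """Fit log10(reads) = slope * log10(input) + intercept on detected spike-ins.

    expected: input amount per dilution level (e.g. cells or copies added).
    observed: read counts, shape (levels, technical replicates).
    Returns (slope, intercept, lod), where lod is the lowest input level
    detected in at least `min_detect_frac` of the replicates.
    """
    expected = np.asarray(expected, dtype=float)
    observed = np.asarray(observed, dtype=float)
    detect_frac = (observed > 0).mean(axis=1)
    reliable = detect_frac >= min_detect_frac
    lod = expected[reliable].min() if reliable.any() else np.inf

    # One x value per replicate observation (row-major order matches ravel()).
    x = np.repeat(np.log10(expected), observed.shape[1])
    y = observed.ravel()
    detected = y > 0                      # zeros cannot be log-transformed
    slope, intercept = np.polyfit(x[detected], np.log10(y[detected]), 1)
    return slope, intercept, lod


def estimate_input(counts, slope, intercept):
    """Invert the calibration; zeros stay NaN because they only imply 'below LOD'."""
    counts = np.asarray(counts, dtype=float)
    est = np.full(counts.shape, np.nan)
    pos = counts > 0
    est[pos] = 10 ** ((np.log10(counts[pos]) - intercept) / slope)
    return est


# Hypothetical dilution series: 4 input levels x 3 technical replicates.
expected = [10, 100, 1_000, 10_000]
observed = [[0, 3, 0], [20, 18, 22], [200, 210, 190], [2000, 1950, 2050]]
slope, intercept, lod = calibrate_spikeins(expected, observed)
native_estimates = estimate_input([0, 200], slope, intercept)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, LOD={lod:g}")
print(native_estimates)
```

A zero in the native data cannot be resolved further by this calibration: it is consistent with true absence or with an abundance below the LOD.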

Protocol for Longitudinal Sampling to Decipher Biological Variance

Objective: To model within-subject (temporal) vs. between-subject variance. Procedure:

- Cohort Design: Recruit subjects and collect samples at high frequency (e.g., daily, weekly) over an extended period.

- Metadata Collection: Record detailed host metadata (diet, medication, health status) at each time point.

- Sequencing: Use a balanced block design to sequence samples from all time points for a single subject together to control for batch effects.

- Analysis: Apply multivariate time-series models (e.g., Bayesian Gaussian processes, multivariate autoregressive models) or variance partitioning tools (e.g., vegan::varpart in R) to quantify variance components.
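As an illustration of the variance-partitioning idea (not of vegan::varpart itself), the sketch below splits one taxon's transformed abundance into between-subject and within-subject components with a balanced one-way random-effects ANOVA (method of moments). The balanced design, the function name, and the toy values are assumptions.

```python
import numpy as np


def variance_components(values, subject_ids):
    """One-way random-effects ANOVA (method of moments), balanced design.

    Returns (between-subject variance, within-subject variance, ICC), where
    ICC = between / (between + within) is the share of variance due to stable
    differences between subjects rather than temporal fluctuation.
    """
    values = np.asarray(values, dtype=float)
    subject_ids = np.asarray(subject_ids)
    groups = [values[subject_ids == s] for s in np.unique(subject_ids)]
    k, m = len(groups), len(groups[0])
    if any(len(g) != m for g in groups):
        raise ValueError("this sketch assumes the same number of time points per subject")
    grand_mean = values.mean()
    ms_between = m * sum((g.mean() - grand_mean) ** 2 for g in groups) / (k - 1)
    ms_within = sum(((g - g.mean()) ** 2).sum() for g in groups) / (k * (m - 1))
    var_between = max((ms_between - ms_within) / m, 0.0)  # truncate negative estimates at 0
    var_within = ms_within
    icc = var_between / (var_between + var_within)
    return var_between, var_within, icc


# Hypothetical CLR values of one taxon: 3 subjects x 4 weekly samples.
values = [1.2, 1.0, 1.4, 1.1, -0.5, -0.2, -0.4, -0.6, 0.3, 0.6, 0.2, 0.4]
subjects = ["s1"] * 4 + ["s2"] * 4 + ["s3"] * 4
print(variance_components(values, subjects))
```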

Analytical Workflow for Sparse, Compositional Data

The following diagram outlines a core analytical pipeline for addressing p>>n challenges.

Diagram Title: Analytical workflow for high-dimensional microbiome data.

Signaling Pathway Inference from Sparse Metagenomic Data

Inferring host-microbiome interactions often involves predicting microbial modulation of host signaling pathways from sparse metagenomic or metabolomic data.

Diagram Title: From sparse metagenomics to host signaling pathways.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagent Solutions for Microbiome p>>n Studies

| Reagent / Material | Function in Addressing p>>n Challenges | Example Product / Source |
|---|---|---|
| Mock Microbial Community Standards | Quantifies technical variance and sparsity. Provides ground truth for algorithm validation. | ZymoBIOMICS Microbial Community Standards, ATCC MSA-1003 |
| Synthetic Spike-in Oligonucleotides | Controls for compositionality and normalization. Enables absolute abundance calibration. | ERCC (External RNA Controls Consortium) spikes, SeqControl synthetic sequences |
| Stable Isotope-Labeled Probes (SIP) | Resolves biological activity vs. presence. Reduces noise by linking phylogeny to function. | 13C- or 15N-labeled substrates for Stable Isotope Probing |
| Gnotobiotic Animal Models | Reduces confounding biological variance. Allows causal testing of high-dimensional microbial signatures. | Germ-free mice/rats colonized with defined microbial consortia (e.g., Oligo-MM12) |
| Duplex Sequencing Tags | Mitigates PCR/sequencing errors that inflate feature count (p), substantially reducing technical noise. | Unique Molecular Identifiers (UMIs), duplex UMI kits |
| Modular Transparent Reporting Templates | Standardizes reporting to separate signal from noise in published literature. | MIxS (Minimum Information about any (x) Sequence) standards, STORMS checklist |

Advanced Statistical & Computational Approaches

Table 4: Comparative Analysis of Sparse, Compositional Regression Methods

| Method | Underlying Algorithm | Handles Compositionality? | Handles Sparsity? | Software/Package |
|---|---|---|---|---|
| ANCOM-BC | Linear model with bias correction & log-ratio transformation. | Yes (via log-ratio) | Moderate | ANCOMBC (R) |
| MaAsLin 2 | Generalized linear models with multiple covariate adjustment. | Partially (CLR option; TSS alone does not remove compositional effects) | Yes (through filtering) | MaAsLin2 (R) |
| selbal | Balance selection via lasso-penalized regression on log-ratios. | Yes (core method) | Yes (via balances) | selbal (R) |
| MicrobiomeNet | Graph-constrained regression incorporating microbial networks. | Can integrate CLR | Yes (via network sparsity) | Custom (Python/R) |
| ZINB-Based Models (e.g., GLMMadaptive) | Zero-inflated negative binomial mixed models. | No (requires careful normalization) | Yes (explicit zero model) | GLMMadaptive (R) |

Navigating the p>>n landscape in microbiome research demands a rigorous, multi-faceted approach. By explicitly modeling sparsity, respecting compositionality, and carefully quantifying biological variance, researchers can transform high-dimensional data into robust, reproducible biological insights. The integration of controlled experimental designs, standardized reagents, and sophisticated analytical frameworks is essential for advancing microbiome-based therapeutics and diagnostics.

Analytical Arsenal for p>>n: Key Methods and Their Applications in Microbiome Studies

The analysis of microbiome data presents a canonical "large p, small n" problem, where the number of measured features (p; e.g., microbial taxa or operational taxonomic units) far exceeds the number of samples (n). This high-dimensionality introduces challenges including multicollinearity, overfitting, the curse of dimensionality, and computational bottlenecks. Dimensionality reduction techniques are essential for transforming these complex, sparse datasets into lower-dimensional representations that preserve meaningful biological signal, facilitate visualization, and enable downstream statistical inference. This whitepaper details three foundational methods—Principal Component Analysis (PCA), Principal Coordinates Analysis (PCoA), and Non-metric Multidimensional Scaling (NMDS)—within the specific context of microbiome research challenges.

Core Methodologies: A Comparative Technical Guide

Principal Component Analysis (PCA)

Objective: To find orthogonal axes (principal components) of maximum variance in a multidimensional dataset, assuming linear relationships. Key Assumption: Data is continuous, linearly related, and Euclidean distances are meaningful. Microbiome Application: Best suited for transformed (e.g., centered log-ratio transformation to address compositionality) and normalized abundance data.

Protocol for Microbiome Data:

- Preprocessing: Perform a centered log-ratio (CLR) transformation on the taxa count matrix to address compositionality.

- Covariance Matrix: Compute the covariance matrix of the transformed data.

- Eigen Decomposition: Calculate the eigenvectors and eigenvalues of the covariance matrix.

- Projection: Project the centered, CLR-transformed data onto the leading eigenvectors (PCs) to obtain sample coordinates (scores).
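The four steps map onto a few lines of NumPy. This is a sketch under stated assumptions: a pseudocount of 0.5 is added before the log (the CLR is undefined for zeros), the data are simulated, and the function names are hypothetical. When p >> n it is usually cheaper to take an SVD of the centered data than to build the p x p covariance matrix, but the eigen-decomposition route mirrors the protocol.

```python
import numpy as np


def clr(counts, pseudocount=0.5):
    """Centered log-ratio: log abundances minus each sample's mean log abundance."""
    logx = np.log(np.asarray(counts, dtype=float) + pseudocount)
    return logx - logx.mean(axis=1, keepdims=True)


def pca_eig(Z, n_components=2):
    """PCA by eigen-decomposition of the covariance matrix of Z (samples x features)."""
    Zc = Z - Z.mean(axis=0)                   # center each feature
    cov = np.cov(Zc, rowvar=False)            # features x features
    eigvals, eigvecs = np.linalg.eigh(cov)    # eigh: cov is symmetric
    order = np.argsort(eigvals)[::-1]         # sort by decreasing variance
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scores = Zc @ eigvecs[:, :n_components]   # project samples onto leading PCs
    explained = eigvals[:n_components] / eigvals.sum()
    return scores, eigvals[:n_components], explained


rng = np.random.default_rng(42)
counts = rng.poisson(lam=5.0, size=(30, 50))  # simulated 30 samples x 50 taxa
scores, top_eigvals, explained = pca_eig(clr(counts), n_components=2)
print(scores.shape, np.round(explained, 3))
```

The variance of each score column equals its eigenvalue, which is why eigenvalues can be read directly as "variance explained".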

Principal Coordinates Analysis (PCoA / Metric MDS)

Objective: To produce a low-dimensional representation where the pairwise distances between points approximate a user-defined distance matrix. Key Assumption: The input distances are reproduced exactly only when they are Euclidean-embeddable; semimetric dissimilarities such as Bray-Curtis can produce negative eigenvalues, which are typically reported or handled with a correction (e.g., Lingoes or Cailliez). Microbiome Application: Extensively used with ecological distance metrics (e.g., Bray-Curtis, UniFrac) that capture community dissimilarity.

Protocol for Microbiome Data:

- Distance Matrix Calculation: Compute a pairwise dissimilarity matrix $D$ for all samples using an appropriate beta-diversity metric (e.g., weighted UniFrac).

- Double-Centering: Apply the double-centering transformation to $D$ to obtain the Gram matrix $B = -\tfrac{1}{2} J D^{(2)} J$, where $D^{(2)}$ holds the squared dissimilarities and $J = I - \tfrac{1}{n}\mathbf{1}\mathbf{1}^\top$ is the centering matrix.

- Eigen Decomposition: Perform eigen decomposition on the Gram matrix.

- Coordinate Extraction: Use the eigenvectors scaled by the square root of their corresponding positive eigenvalues to obtain sample coordinates.
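A minimal NumPy sketch of these steps, assuming a symmetric dissimilarity matrix as input; the function names are hypothetical. Negative eigenvalues are simply clipped here rather than corrected, and the proportion explained is computed relative to the sum of positive eigenvalues, which is one convention among several.

```python
import numpy as np


def pcoa(D, n_components=2):
    """Classical (metric) MDS / PCoA of a symmetric dissimilarity matrix D."""
    D = np.asarray(D, dtype=float)
    n = D.shape[0]
    J = np.eye(n) - np.ones((n, n)) / n           # centering matrix
    B = -0.5 * J @ (D ** 2) @ J                   # double-centered Gram matrix
    eigvals, eigvecs = np.linalg.eigh(B)
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    # Non-Euclidean dissimilarities (e.g. Bray-Curtis) can give negative
    # eigenvalues; this sketch simply keeps only the positive part.
    kept = np.clip(eigvals[:n_components], 0.0, None)
    coords = eigvecs[:, :n_components] * np.sqrt(kept)
    explained = eigvals / eigvals[eigvals > 0].sum()
    return coords, explained


def axes_needed(explained, target=0.70):
    """Smallest number of leading axes whose cumulative share reaches `target`."""
    return int(np.argmax(np.cumsum(explained) >= target) + 1)


# Check: PCoA of Euclidean distances recovers the original geometry.
pts = np.array([[0.0, 0.0], [3.0, 0.0], [0.0, 4.0], [1.0, 1.0], [2.0, 3.0]])
D = np.sqrt(((pts[:, None, :] - pts[None, :, :]) ** 2).sum(axis=2))
coords, explained = pcoa(D, n_components=2)
D_hat = np.sqrt(((coords[:, None, :] - coords[None, :, :]) ** 2).sum(axis=2))
print(np.allclose(D, D_hat), axes_needed(explained))
```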

Non-metric Multidimensional Scaling (NMDS)

Objective: To arrange samples in low-dimensional space such that the rank order of inter-point distances matches the rank order of the original dissimilarities as closely as possible (stress minimization). Key Assumption: Only the rank-order of dissimilarities carries information (non-parametric). Microbiome Application: Ideal for noisy, non-linear data where only dissimilarity ranks are trusted, such as with many ecological distance measures.

Protocol for Microbiome Data:

- Distance Matrix: Compute a dissimilarity matrix (e.g., Bray-Curtis).

- Initial Configuration: Choose a random starting configuration of points in k-dimensions.

- Stress Optimization: Iteratively adjust point positions to minimize a stress function (e.g., Kruskal's Stress-1) using a gradient descent algorithm.

- Assessment: Run from multiple random starts to avoid local minima. Assess final stress value.
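The assessment step can be made concrete by computing Kruskal's Stress-1 for a given configuration. The sketch below (function names hypothetical) fits monotone disparities with the pool-adjacent-violators algorithm and, as a simplification, breaks ties in the dissimilarities by a stable ordering. It only evaluates stress for one configuration; it does not implement the iterative optimizer that tools such as vegan::metaMDS run.

```python
import numpy as np


def pava(y):
    """Pool-adjacent-violators: least-squares non-decreasing fit to the sequence y."""
    blocks = []                                   # each block is [sum, count]
    for v in y:
        blocks.append([float(v), 1])
        # Merge backwards while a block's mean exceeds the next block's mean.
        while len(blocks) > 1 and blocks[-2][0] / blocks[-2][1] > blocks[-1][0] / blocks[-1][1]:
            s, c = blocks.pop()
            blocks[-1][0] += s
            blocks[-1][1] += c
    return np.concatenate([np.full(c, s / c) for s, c in blocks])


def kruskal_stress1(diss, coords):
    """Stress-1 of a configuration `coords` (n x k) against dissimilarities `diss` (n x n)."""
    diss = np.asarray(diss, dtype=float)
    coords = np.asarray(coords, dtype=float)
    iu = np.triu_indices(diss.shape[0], k=1)
    delta = diss[iu]                                          # original dissimilarities
    d = np.sqrt(((coords[:, None, :] - coords[None, :, :]) ** 2).sum(axis=2))[iu]
    order = np.argsort(delta, kind="stable")                  # rank order of dissimilarities
    d_hat = np.empty_like(d)
    d_hat[order] = pava(d[order])                             # monotone disparities
    return float(np.sqrt(((d - d_hat) ** 2).sum() / (d ** 2).sum()))


# A configuration whose distances are a monotone transform of the
# dissimilarities has zero stress.
pts = np.array([[0.0], [1.0], [3.0], [6.0]])
print(kruskal_stress1(np.abs(pts - pts.T) ** 2, pts))
```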

Table 1: Core Characteristics of Dimensionality Reduction Methods in Microbiome Studies

| Feature | PCA | PCoA | NMDS |
|---|---|---|---|
| Input Data | Raw/Transformed Abundance Matrix | Pairwise Distance Matrix | Pairwise Distance Matrix |
| Distance Preserved | Euclidean (implicitly) | Any dissimilarity (Bray-Curtis, UniFrac, etc.); exact only for Euclidean-embeddable distances | Rank-order of any dissimilarity |
| Optimization Criterion | Maximize Variance | Preserve Original Distances | Minimize Ordinal Stress |
| Linearity Assumption | Strong | Depends on distance metric | None (Non-metric) |
| Output Axes | Orthogonal, Ranked by Variance | Orthogonal, Ranked by Variance | Not necessarily orthogonal |
| Variance Explained | Quantifiable (Eigenvalues) | Quantifiable (eigenvalues; negative eigenvalues possible for non-Euclidean inputs) | Not directly quantifiable |
| Microbiome-Specific Utility | CLR-transformed data | Direct use of beta-diversity metrics | Robust to noisy, non-linear relationships |

Table 2: Common Distance Metrics for PCoA/NMDS in Microbiome Analysis

| Metric | Type | Sensitive to | Recommended For |
|---|---|---|---|
| Bray-Curtis | Abundance-based, Non-Euclidean | Composition & Abundance | General community profiling |
| Jaccard | Presence/Absence, Non-Euclidean | Taxon Turnover | Deeply sequenced, rare biosphere |
| Weighted UniFrac | Phylogenetic & Abundance | Abundant, phylogeny-related shifts | Functional & phylogenetic studies |
| Unweighted UniFrac | Phylogenetic & Presence/Absence | Lineage presence, robust to abundance | Detecting phylogenetic turnover |

Visualizing the Method Selection Workflow

Dimensionality Reduction Method Selection for Microbiome Data

Table 3: Key Software Packages & Analysis Resources

| Item | Function | Primary Application |
|---|---|---|
| QIIME 2 (2024.5) | End-to-end microbiome analysis platform. Plugins (deicode for RPCA, emperor for visualization) integrate PCA/PCoA. | Pipeline for distance calculation, PCoA, and visualization. |
| R phyloseq/vegan | Core R packages for ecological data. phyloseq::ordinate() wraps PCA, PCoA, NMDS; vegan::metaMDS() performs NMDS. | Flexible, script-based analysis and custom plotting. |
| Python scikit-bio | Provides the skbio.stats.ordination module (e.g., pcoa, ca, rda) for integration into Python ML pipelines. | Integrating ordination into machine learning workflows. |
| GUniFrac Library | Efficiently calculates both weighted and unweighted UniFrac distance matrices, critical input for PCoA/NMDS. | Generating phylogenetically informed dissimilarity matrices. |
| Centered Log-Ratio (CLR) Transform | A transformation (e.g., via compositions::clr() in R) that makes PCA appropriate for compositional microbiome data. | Preprocessing for PCA to address the unit-sum constraint. |
| PERMANOVA (vegan::adonis2) | Permutational multivariate analysis of variance; tests group differences directly on the distance matrix underlying a PCoA/NMDS ordination. | Statistical testing of group separation in ordination space. |

Experimental Protocol: A Standard 16S rRNA Gene Sequencing Analysis Workflow Featuring PCoA

Objective: To compare microbial community structures between two treatment groups using 16S rRNA gene amplicon data.

Microbiome Analysis Workflow from Sequencing to PCoA

Detailed Protocol Steps:

- Wet-lab Processing: Extract genomic DNA from samples (e.g., stool, soil). Amplify the V4 region of the 16S rRNA gene using barcoded primers. Sequence on an Illumina MiSeq platform (2x250 bp).

- Bioinformatic Processing: Process raw FASTQ files using a denoising pipeline (QIIME 2 with DADA2 plugin, or mothur). Generate an Amplicon Sequence Variant (ASV) table, taxonomy assignments, and a phylogenetic tree.

- Normalization: Apply a standardization method to correct for uneven sequencing depth. Options include rarefaction (subsampling to an even depth) or Cumulative Sum Scaling (CSS).

- Distance Calculation: Using the normalized ASV table (and phylogenetic tree if needed), compute a pairwise dissimilarity matrix for all samples. A common choice is the Bray-Curtis dissimilarity:

$$d_{jk} = \frac{\sum_i |x_{ij} - x_{ik}|}{\sum_i (x_{ij} + x_{ik})}$$

where $x_{ij}$ is the abundance of feature $i$ in sample $j$.

- PCoA Execution: Input the distance matrix into a PCoA implementation (skbio.stats.ordination.pcoa in Python, ape::pcoa() in R, or qiime diversity pcoa). Retain enough principal coordinates to explain >70% of total variance (judged from a scree plot).

- Visualization & Inference: Plot samples in 2D/3D space using the first 2-3 principal coordinates, coloring points by treatment group. Statistically assess group separation using PERMANOVA (e.g., vegan::adonis2 with 9999 permutations).
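The Bray-Curtis formula translates directly into vectorized NumPy. This sketch (function name hypothetical) expects a non-negative abundance table with samples as rows and, by assumption, returns 0 for a pair of empty samples instead of an undefined value; production pipelines would typically call an established implementation such as vegan::vegdist or scikit-bio instead.

```python
import numpy as np


def bray_curtis(X):
    """Pairwise Bray-Curtis dissimilarity between the rows (samples) of X."""
    X = np.asarray(X, dtype=float)
    num = np.abs(X[:, None, :] - X[None, :, :]).sum(axis=2)   # sum_i |x_ij - x_ik|
    den = (X[:, None, :] + X[None, :, :]).sum(axis=2)         # sum_i (x_ij + x_ik)
    # Avoid 0/0 for two all-zero samples (assumed dissimilarity 0).
    return np.divide(num, den, out=np.zeros_like(num), where=den > 0)


X = [[1, 0, 3],
     [0, 2, 1],
     [1, 0, 3]]
print(np.round(bray_curtis(X), 3))
```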

Microbiome studies, particularly those utilizing high-throughput 16S rRNA or shotgun metagenomic sequencing, epitomize the "large p, small n" (p >> n) problem. Here, n represents the number of samples (often in the tens to hundreds), while p represents the number of features (operational taxonomic units (OTUs), amplicon sequence variants (ASVs), or functional gene pathways), which can number in the thousands or millions. This dimensionality leads to non-identifiable models, severe overfitting, and unreliable biological inference. Regularization techniques, which impose constraints on model coefficients, are essential for deriving sparse, interpretable, and generalizable models from such data.

Mathematical Foundations of Regularization

Ordinary Least Squares (OLS) regression minimizes the residual sum of squares (RSS). Regularized regression modifies this objective function by adding a penalty term $P(\alpha, \beta)$ that constrains the magnitude of the coefficients.

The general penalized objective function is:

$$\min_{\beta_0, \beta} \left\{ \frac{1}{2N} \sum_{i=1}^{N} \left(y_i - \beta_0 - x_i^\top \beta\right)^2 + \lambda P(\alpha, \beta) \right\}$$

where:

- $N$: Number of samples.

- $y_i$: Response variable (e.g., disease status, metabolite level).

- $x_i$: Vector of $p$ features for the $i$-th sample.

- $\beta_0, \beta$: Intercept and coefficient vector.

- $\lambda$: Overall regularization strength (tuning parameter).

- $\alpha$: Mixing parameter between LASSO and Ridge penalties.

Ridge Regression (L2 Penalty)

Ridge regression uses an L2-norm penalty, which shrinks coefficients towards zero but does not set them exactly to zero:

$$P(\alpha, \beta) = (1 - \alpha)\,\tfrac{1}{2}\lVert\beta\rVert_2^2 \quad \text{with} \quad \alpha = 0$$

Primary Effect: Reduces model complexity and the variance inflation caused by multicollinearity by penalizing large coefficients, improving prediction accuracy when predictors are highly correlated.

LASSO Regression (L1 Penalty)

The Least Absolute Shrinkage and Selection Operator (LASSO) uses an L1-norm penalty, which can drive coefficients to exactly zero:

$$P(\alpha, \beta) = \alpha \lVert\beta\rVert_1 \quad \text{with} \quad \alpha = 1$$

Primary Effect: Performs automatic feature selection, yielding sparse, interpretable models, a critical property for identifying key microbial drivers from thousands of taxa.

Elastic Net (Combined L1 & L2 Penalty)

Elastic Net combines both penalties, controlled by the mixing parameter $\alpha$ (where $0 < \alpha < 1$):

$$P(\alpha, \beta) = \alpha \lVert\beta\rVert_1 + (1 - \alpha)\,\tfrac{1}{2}\lVert\beta\rVert_2^2$$

Primary Effect: Balances the feature selection capability of LASSO with the grouping effect of Ridge, which is useful when features (e.g., correlated bacterial taxa within a functional guild) are highly correlated.
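To make the objective concrete, the following sketch minimizes it by cyclic coordinate descent with soft-thresholding, the same general strategy glmnet uses, although this is a plain NumPy illustration and not glmnet's implementation. Assumptions: features are on comparable scales (glmnet standardizes by default; this sketch does not), the intercept is left unpenalized by centering, and the function names and simulated data are hypothetical. Setting alpha=1 gives LASSO and alpha=0 gives Ridge.

```python
import numpy as np


def soft_threshold(z, gamma):
    """S(z, gamma) = sign(z) * max(|z| - gamma, 0): the proximal step for the L1 penalty."""
    return np.sign(z) * max(abs(z) - gamma, 0.0)


def elastic_net_cd(X, y, lam, alpha=0.5, max_iter=1000, tol=1e-8):
    """Minimize (1/2N)||y - b0 - X b||^2 + lam * (alpha*||b||_1 + (1-alpha)/2 * ||b||_2^2)."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, p = X.shape
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean          # centering removes the unpenalized intercept
    col_sq = (Xc ** 2).sum(axis=0) / n       # (1/N) x_j^T x_j
    beta = np.zeros(p)
    resid = yc.copy()                        # current residual yc - Xc @ beta
    for _ in range(max_iter):
        max_step = 0.0
        for j in range(p):
            if col_sq[j] == 0.0:
                continue                     # constant feature carries no information
            old = beta[j]
            rho = Xc[:, j] @ resid / n + col_sq[j] * old   # (1/N) x_j^T (partial residual)
            new = soft_threshold(rho, lam * alpha) / (col_sq[j] + lam * (1.0 - alpha))
            if new != old:
                resid -= Xc[:, j] * (new - old)
                beta[j] = new
                max_step = max(max_step, abs(new - old))
        if max_step < tol:
            break
    return y_mean - x_mean @ beta, beta


rng = np.random.default_rng(1)
X = rng.normal(size=(50, 200))                 # p = 200 features, n = 50 samples
y = 3 * X[:, 0] - 2 * X[:, 1] + 0.1 * rng.normal(size=50)
b0, b_lasso = elastic_net_cd(X, y, lam=0.1, alpha=1.0)
print("non-zero LASSO coefficients:", np.flatnonzero(b_lasso))
```

With alpha=1 most of the 200 coefficients are exactly zero even though p > n; rerunning with alpha=0 shrinks all coefficients but sets none of them to zero.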

Quantitative Comparison of Regularization Techniques

The following table summarizes the core properties and applications of each method in the context of microbiome data analysis.

Table 1: Comparison of Regularization Techniques for Microbiome Data (p >> n)

| Aspect | Ridge Regression (L2) | LASSO (L1) | Elastic Net |
|---|---|---|---|
| Penalty Term | $\tfrac{1}{2}\lVert\beta\rVert_2^2$ | $\lVert\beta\rVert_1$ | $\alpha\lVert\beta\rVert_1 + \tfrac{1-\alpha}{2}\lVert\beta\rVert_2^2$ |
| Feature Selection | No (Keeps all features) | Yes (Produces sparse models) | Yes (Controlled sparsity) |
| Handles Multicollinearity | Excellent | Poor (Selects one from group) | Good (Selects/weights groups) |
| Best Use Case | Prediction-focused, all features may be relevant | Interpretability-focused, identifying key drivers | Correlated features expected, seeking stable selection |
| Microbiome Example | Predicting a continuous outcome from all OTUs | Identifying 5-10 signature taxa for a disease | Identifying co-abundant gene pathways linked to a phenotype |

Experimental Protocol: A Standardized Analysis Workflow

This protocol outlines a complete pipeline for applying regularized regression to a typical microbiome case-control dataset.

Objective: Identify microbial taxa associated with disease status (binary outcome) while controlling for host covariates (e.g., age, BMI).

Step 1: Data Preprocessing & Normalization

- Feature Table: Start with an OTU/ASV table (samples x taxa). Apply a prevalence filter (e.g., retain taxa present in >10% of samples).

- Normalization: Normalize raw counts, e.g., to relative abundances (TSS), with CSS, or with a CLR transform. For count-based models (e.g., glmmLasso), use raw counts with an appropriate offset.

- Covariates: Center and scale continuous covariates (age, BMI). Code categorical covariates as factors.

- Outcome: Code disease status as 0/1.

Step 2: Model Training & Tuning Parameter Selection

- Split Data: Partition into training (70-80%) and hold-out test (20-30%) sets. Never use the test set for parameter tuning.

- Define Hyperparameter Grid:

  - For LASSO/Ridge: Create a sequence of $\lambda$ values (e.g., 10^seq(4, -2, length=100) in R).
  - For Elastic Net: Also define a grid for $\alpha$ (e.g., c(0.1, 0.5, 0.9)).

- Perform k-Fold Cross-Validation (CV): On the training set, use 5- or 10-fold CV to evaluate model performance (using deviance or AUC) for each $(\lambda, \alpha)$ combination.

- Select Optimal Parameters: Choose the $\lambda$ that gives the minimum CV error (lambda.min) or the most regularized model within one standard error of the minimum (lambda.1se), which yields a sparser model.
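The two selection rules fit in a few lines. This sketch assumes you already have the mean cross-validated error and its standard error for each $\lambda$ (for example, the cvm and cvsd components returned by cv.glmnet); the function name and the example numbers are hypothetical. lambda.1se is taken as the largest $\lambda$ whose mean error lies within one standard error of the minimum.

```python
import numpy as np


def select_lambda(lambdas, cv_mean, cv_se):
    """Return (lambda_min, lambda_1se) from per-lambda CV error and its standard error."""
    lambdas = np.asarray(lambdas, dtype=float)
    cv_mean = np.asarray(cv_mean, dtype=float)
    cv_se = np.asarray(cv_se, dtype=float)
    i_min = int(np.argmin(cv_mean))
    lambda_min = lambdas[i_min]
    # Larger lambda = stronger penalty = sparser model; keep the largest
    # lambda whose CV error is within one SE of the minimum.
    within_1se = cv_mean <= cv_mean[i_min] + cv_se[i_min]
    lambda_1se = lambdas[within_1se].max()
    return lambda_min, lambda_1se


# Hypothetical CV results on a decreasing lambda path.
lambdas = [1.0, 0.5, 0.1, 0.05]
cv_mean = [0.90, 0.62, 0.60, 0.65]
cv_se = [0.05, 0.05, 0.05, 0.05]
print(select_lambda(lambdas, cv_mean, cv_se))
```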

Step 3: Model Evaluation & Interpretation

- Final Model: Fit the final model on the entire training set using the optimal $(\lambda, \alpha)$.

- Test Set Evaluation: Predict on the untouched test set. Calculate performance metrics: AUC-ROC for classification, RMSE for regression.

- Inference: Extract non-zero coefficients from LASSO/Elastic Net models. Caution: standard p-values do not apply after selection. Use methods such as bootstrapping or the selectiveInference package for valid confidence intervals.

- Stability Analysis: Perform repeated subsampling to check whether the selected features are robust.

Title: Regularized Regression Workflow for Microbiome Data

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Research Reagent Solutions for Microbiome Regularization Studies

| Item | Function / Role | Example / Note |
|---|---|---|
| High-Throughput Sequencer | Generates raw feature count data (p >> n matrix). | Illumina MiSeq/NovaSeq for 16S rRNA; HiSeq for shotgun metagenomics. |
| Bioinformatics Pipeline | Processes raw sequences into analyzable feature tables. | QIIME 2, DADA2 (for ASVs), or MOTHUR for 16S; HUMAnN3 for pathways. |
| Normalization Tool | Mitigates compositionality and variance in count data. | metagenomeSeq (CSS), microbiome R package (CLR), DESeq2 (median-of-ratios). |
| Regularization Software | Implements LASSO, Ridge, and Elastic Net algorithms. | glmnet R package (fast, standard), scikit-learn in Python. |
| Model Evaluation Suite | Assesses prediction accuracy and model calibration. | pROC (AUC), caret/mlr3 (cross-validation, performance metrics). |
| Inference Package | Provides valid statistical inference post-selection. | selectiveInference R package for constructing confidence intervals. |
| Visualization Library | Creates coefficient paths and performance plots. | ggplot2 (R), matplotlib/seaborn (Python). |

Title: Decision Path for Selecting a Regularization Technique

Advanced Considerations & Recent Developments

- Compositional Data: Microbiome data is compositional (sum-constrained). The log-contrast framework with a zero-sum constraint on coefficients is often necessary for valid inference.

- Integrated Regularization: Tools such as MMUPHin correct for batch and study effects across cohorts, which allows regularized models to be fit to pooled multi-study data.

- Network-Informed Regularization: Methods such as Graph-constrained Elastic Net incorporate microbial co-occurrence network information to guide the selection of biologically coherent taxa.

- Software: The mixOmics package provides sparse PLS-DA, a related dimension-reduction and regularization method popular for multi-omics integration.

In the high-dimensional landscape of microbiome research, regularization is not merely a statistical refinement but a fundamental necessity. Ridge regression offers stable prediction, LASSO enables parsimonious feature selection, and Elastic Net provides a flexible compromise. The choice depends on the study's primary goal: accurate prediction or interpretable feature selection. A rigorous protocol involving careful preprocessing, cross-validated tuning, and validated inference is critical to deriving robust, biologically meaningful insights from complex microbial communities.

High-dimensional data, where the number of features (p) vastly exceeds the number of samples (n) (p>>n), is a central challenge in microbiome research. This paradigm complicates statistical analysis, risking overfitting, model instability, and spurious findings. Within this thesis on "Challenges of high dimensionality p>>n in microbiome studies," we examine specialized computational models designed to navigate this complexity. This guide details Partial Least Squares Discriminant Analysis (PLS-DA), its sparse variant (sPLS-DA), and algorithms developed specifically for microbiome data, providing a technical framework for researchers and drug development professionals.

Core Models and Methodologies

Partial Least Squares Discriminant Analysis (PLS-DA)

PLS-DA is a supervised classification method adapted from the Partial Least Squares regression framework. It projects the high-dimensional data into a lower-dimensional space of orthogonal latent components (also called latent variables). These components maximize the covariance between the feature matrix X (e.g., OTU/species abundances) and the response matrix Y (a binary or dummy-coded class assignment).

Experimental Protocol:

- Input: A normalized feature matrix X (n x p) and a response matrix Y (n x K) for K classes.

- Preprocessing: Center (and often scale) the columns of X. Y is coded using dummy variables.

- Component Extraction: Iteratively compute latent components t = Xw, where weight vector w is derived to maximize cov(t, Y).

- Regression: Perform linear regression of Y on the extracted components T.

- Prediction: For a new sample, project its data onto the component space and apply the regression model to predict class probabilities.

- Validation: Use cross-validation (e.g., k-fold, leave-one-out) to determine the optimal number of components and assess model performance (e.g., Balanced Accuracy, AUC).
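A compact NumPy sketch of the training and prediction steps, using the NIPALS algorithm for PLS2 on dummy-coded classes. Assumptions: X is centered but not scaled, the cross-validation used to choose the number of components is left out, the data are simulated, and the function names are hypothetical; in practice mixOmics::plsda() handles these details.

```python
import numpy as np


def plsda_fit(X, labels, n_comp=2, max_iter=500, tol=1e-10):
    """PLS-DA via NIPALS (PLS2) on a dummy-coded class matrix Y."""
    X = np.asarray(X, dtype=float)
    classes, y_idx = np.unique(labels, return_inverse=True)
    Y = np.eye(len(classes))[y_idx]            # n x K dummy coding
    x_mean, y_mean = X.mean(axis=0), Y.mean(axis=0)
    Xk, Yk = X - x_mean, Y - y_mean            # center X and Y (scaling omitted here)
    W, P, C = [], [], []
    for _ in range(n_comp):
        u = Yk[:, 0].copy()
        t_old = np.zeros(X.shape[0])
        for _ in range(max_iter):
            w = Xk.T @ u
            w /= np.linalg.norm(w)             # X weights (direction maximizing covariance)
            t = Xk @ w                         # latent component (scores)
            c = Yk.T @ t / (t @ t)             # Y loadings
            u = Yk @ c / (c @ c)               # Y scores
            if np.linalg.norm(t - t_old) < tol * np.linalg.norm(t):
                break
            t_old = t
        p = Xk.T @ t / (t @ t)                 # X loadings used for deflation
        Xk = Xk - np.outer(t, p)
        Yk = Yk - np.outer(t, c)
        W.append(w); P.append(p); C.append(c)
    W, P, C = np.column_stack(W), np.column_stack(P), np.column_stack(C)
    B = W @ np.linalg.inv(P.T @ W) @ C.T       # p x K regression coefficients
    return {"classes": classes, "x_mean": x_mean, "y_mean": y_mean, "B": B}


def plsda_predict(model, X_new):
    """Predict the class with the largest fitted dummy-variable value."""
    Y_hat = (np.asarray(X_new, dtype=float) - model["x_mean"]) @ model["B"] + model["y_mean"]
    return model["classes"][np.argmax(Y_hat, axis=1)]


# Simulated example: 40 training samples, 100 features, 10 informative.
rng = np.random.default_rng(2)

def simulate(n):
    labels = np.repeat(["ctrl", "case"], n // 2)
    X = rng.normal(size=(n, 100))
    X[labels == "case", :10] += 3.0
    return X, labels

X_train, y_train = simulate(40)
X_test, y_test = simulate(40)
model = plsda_fit(X_train, y_train, n_comp=2)
accuracy = np.mean(plsda_predict(model, X_test) == y_test)
print(f"held-out accuracy: {accuracy:.2f}")
```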

Sparse PLS-DA (sPLS-DA)

sPLS-DA introduces L1 (lasso) penalization on the weight vectors w during component extraction. This penalty forces the weights of non-informative features to zero, performing variable selection directly within the discriminant analysis.

Experimental Protocol:

- Follow the PLS-DA input and preprocessing steps above.

- Sparse Component Extraction: Solve an optimization problem that includes a lasso penalty on w: max{cov(Xw, Y) - λ||w||₁}, where λ is a tuning parameter.

- Parameter Tuning: Use cross-validation to optimize both the number of components and the sparsity parameter (λ or the number of features to keep per component).

- Interpretation: The resulting model yields a shortlist of selected, discriminative features for each component.
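The sparse component extraction can be illustrated with a sparse-SVD style iteration on the cross-product matrix XᵀY, soft-thresholding the weights so that exactly keep_x features remain, which is one common way a keepX-style sparsity parameter is implemented. This sketch covers the first component only; the function name and simulated data are hypothetical, and it is not mixOmics' own code.

```python
import numpy as np


def sparse_pls_weight(X, Y, keep_x, n_iter=100):
    """First sparse PLS weight vector with exactly `keep_x` non-zero entries (no ties assumed)."""
    Xc = X - X.mean(axis=0)
    Yc = Y - Y.mean(axis=0)
    M = Xc.T @ Yc                              # p x K cross-product matrix
    _, _, vt = np.linalg.svd(M, full_matrices=False)
    c = vt[0]                                  # start from the leading right singular vector
    for _ in range(n_iter):
        z = M @ c
        if keep_x < z.size:
            thr = np.sort(np.abs(z))[::-1][keep_x]   # (keep_x + 1)-th largest |z|
            z = np.sign(z) * np.maximum(np.abs(z) - thr, 0.0)   # L1 soft-thresholding
        w = z / np.linalg.norm(z)
        c = M.T @ w
        c /= np.linalg.norm(c)
    return w


rng = np.random.default_rng(3)
labels = np.repeat(["ctrl", "case"], 20)
X = rng.normal(size=(40, 100))
X[labels == "case", :10] += 3.0                  # features 0-9 are informative
Y = (labels[:, None] == np.array(["case", "ctrl"])).astype(float)  # dummy coding
w = sparse_pls_weight(X, Y, keep_x=5)
print("selected features:", np.flatnonzero(w))
```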

Microbiome-Specific Algorithms

These algorithms incorporate the unique characteristics of microbiome data: compositionality, sparsity, phylogenetic structure, and heterogeneous variance.

- ANCOM-BC: (Analysis of Compositions of Microbiomes with Bias Correction) Models observed abundances as a function of sample characteristics, correcting for sample-specific sampling fractions and bias.

- LOCOM: (Logistic regression for COmpositional Microbiome data) A non-parametric, compositionally-aware logistic regression framework for testing the association of individual taxa with a binary outcome.

- LinDA: (Linear model for Differential Abundance analysis) A fast method that fits linear models to CLR-transformed abundances and corrects the resulting compositional bias, improving power while controlling the false discovery rate.

Generalized Experimental Protocol for Differential Abundance (e.g., ANCOM-BC):

- Input: Raw count table X (n x p), metadata (e.g., group labels).

- Bias Estimation: Estimate sample-specific sampling fractions (biases) using a log-linear model on reference taxa.

- Model Fitting: Fit a linear model on bias-corrected log-transformed abundances: $\log(O_{ij}) = \beta_j + \mathbf{x}_i^\top \boldsymbol{\alpha}_j + \log(\hat{d}_i)$, where $O_{ij}$ is the observed abundance, $\beta_j$ is the intercept, $\mathbf{x}_i$ is the covariate vector, $\boldsymbol{\alpha}_j$ is the coefficient vector, and $\hat{d}_i$ is the estimated bias.

- Hypothesis Testing: Perform a significance test (e.g., Wald test) on the coefficients $\boldsymbol{\alpha}_j$.

- FDR Control: Apply multiple testing correction (e.g., Benjamini-Hochberg) to p-values.
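The FDR step is short enough to show in full. The sketch below implements the Benjamini-Hochberg step-up adjustment in NumPy (the function name is hypothetical); in R the same adjustment is available as p.adjust(p, method = "BH").

```python
import numpy as np


def benjamini_hochberg(pvals):
    """Benjamini-Hochberg adjusted p-values (step-up procedure)."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    ranked = p[order] * m / np.arange(1, m + 1)          # p_(k) * m / k
    # Enforce monotonicity from the largest p-value downwards.
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]
    adjusted = np.empty(m)
    adjusted[order] = np.minimum(ranked, 1.0)
    return adjusted


pvals = [0.01, 0.04, 0.03, 0.2]
print(benjamini_hochberg(pvals))
```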

Data Presentation: Model Comparison

Table 1: Comparison of High-Dimensional Models for Microbiome Analysis

| Model | Key Feature | Handles Compositionality? | Performs Feature Selection? | Primary Use Case | Typical Software/Package |
|---|---|---|---|---|---|
| PLS-DA | Maximizes covariance between X and Y | No (requires pre-processing) | No (uses all features) | Discriminant analysis, classification | mixOmics (R), scikit-learn PLSRegression on dummy-coded Y (Python) |
| sPLS-DA | L1 penalty for sparse component weights | No (requires pre-processing) | Yes (integrated selection) | Discriminant analysis with biomarker ID | mixOmics (R) |
| ANCOM-BC | Bias correction for sampling fraction | Yes (inherently models it) | No (tests all features) | Differential abundance testing | ANCOMBC (R) |
| LOCOM | Non-parametric, compositionally aware | Yes (uses compositionally robust test) | No (tests all features) | Association testing (binary outcome) | LOCOM (R) |
| LinDA | Linear models on CLR-transformed data with bias correction | Yes (CLR plus bias correction) | No (tests all features) | Differential abundance testing | LinDA (R) |

Table 2: Example Performance Metrics from a Simulated p>>n Study (n=50, p=1000)

| Model | Balanced Accuracy (Mean ± SD) | AUC-ROC | Number of Features Selected | False Discovery Rate (FDR) |
|---|---|---|---|---|
| PLS-DA (2 comp.) | 0.82 ± 0.07 | 0.89 | 1000 (all) | Not Applicable |
| sPLS-DA (2 comp.) | 0.88 ± 0.05 | 0.93 | 45 ± 8 | 0.10 |
| Reference: Random Forest | 0.85 ± 0.06 | 0.91 | Varies (importance) | Not Applicable |

Visualizations

Title: PLS-DA Model Training and Validation Workflow

Title: Microbiome Data Analysis Pipeline with Model Options

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for High-Dimensional Microbiome Analysis

| Item / Reagent | Function / Purpose | Example / Specification |
|---|---|---|
| QIIME 2 / MOTHUR | End-to-end microbiome analysis pipeline from raw sequences to ecological statistics. Provides reproducible workflows. | QIIME2 (version 2024.5), plugins for diversity, composition, and phylogeny. |
| R phyloseq Object | Central data structure for organizing OTU/ASV table, taxonomy, sample metadata, and phylogenetic tree. Enables integrative analysis. | phyloseq class in R, inputs from DADA2, Deblur, or QIIME2. |
| Centered Log-Ratio (CLR) Transform | Normalization technique for compositional data. Mitigates the unit-sum constraint, allowing use of standard statistical methods. | Implemented via compositions::clr() or microbiome::transform(). |
| mixOmics R Package | Primary toolkit for applying PLS-DA, sPLS-DA, and related multivariate methods to 'omics data. | Version 6.26.0, includes functions plsda(), splsda(), and performance diagnostics. |
| ANCOMBC R Package | Implements the ANCOM-BC algorithm for differential abundance testing with bias correction. | Version 2.2.0, function ancombc2() for flexible model formulation. |
| High-Performance Computing (HPC) Cluster Access | Enables permutation testing, repeated cross-validation, and large-scale meta-analyses that are computationally intensive. | Slurm or similar job scheduler, with adequate RAM (>64GB) for p>>n problems. |
| Permutation Test Framework | Non-parametric method for assessing the statistical significance of model performance metrics (e.g., classification accuracy). | 1000+ permutations of the response label Y to generate a null distribution. |

Microbiome research is fundamentally a "p >> n" problem, where the number of measured features (p - microbial taxa, often hundreds to thousands) vastly exceeds the number of samples (n - typically tens to hundreds). This high-dimensional setting invalidates classical statistical methods and introduces severe challenges:

- Covariance Matrix Estimation: The sample covariance matrix is singular and cannot be inverted.

- Overfitting: Models with more parameters than samples will fit noise.

- Spurious Correlations: Many apparent associations are statistical artifacts.
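The first point is easy to verify numerically. In this simulated example (dimensions chosen for illustration), the p x p sample covariance of n = 20 samples and p = 100 features has rank at most n - 1 = 19, so it has no inverse. This is why sparse methods estimate a penalized precision matrix instead of inverting the sample covariance.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p = 20, 100                            # far more features than samples
X = rng.normal(size=(n, p))
S = np.cov(X, rowvar=False)               # p x p sample covariance
rank = np.linalg.matrix_rank(S)
print(S.shape, rank)                      # rank is at most n - 1
eigvals = np.linalg.eigvalsh(S)
print("smallest eigenvalue:", eigvals.min())  # numerically ~0 (possibly slightly negative)
```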

Network inference provides a framework to move beyond differential abundance to understand microbial community structure and ecological interactions. Graphical models represent these interactions, where nodes are taxa and edges represent conditional dependencies.

The SPIEC-EASI Framework: Theory and Implementation

SPIEC-EASI (Sparse Inverse Covariance Estimation for Ecological Association Inference) is a two-step pipeline designed specifically for compositional, high-dimensional microbiome data.

Step 1: Compositional Data Transformation. Microbiome data (e.g., 16S rRNA gene amplicon sequencing) provides relative abundances, which reside in a simplex. Applying standard correlation measures (e.g., Pearson) directly leads to false positives. SPIEC-EASI employs the Centered Log-Ratio (CLR) transformation:

$$\text{CLR}(x) = \left[ \log\frac{x_1}{g(x)}, \ldots, \log\frac{x_p}{g(x)} \right], \quad g(x) = \left( \prod_{i=1}^{p} x_i \right)^{1/p}$$

where $x$ is the compositional vector and $g(x)$ is its geometric mean. This transformation moves data from the simplex to a $(p-1)$-dimensional Euclidean space.

Step 2: Sparse Inverse Covariance Selection

After CLR transformation, SPIEC-EASI infers the underlying microbial interaction network by estimating the sparse inverse covariance (precision) matrix, \(\Theta = \Sigma^{-1}\). A non-zero entry \(\Theta_{ij}\) indicates a conditional dependence between taxa \(i\) and \(j\), given all other taxa. Sparsity is induced via one of two methods:

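- GLASSO (Graphical Lasso): Maximizes the \(\ell_1\)-penalized Gaussian log-likelihood, \(\log\det\Theta - \operatorname{tr}(S\Theta) - \lambda\|\Theta\|_1\), where \(S\) is the sample covariance of the CLR-transformed data.

- MB (Meinshausen-Bühlmann neighborhood selection): Regresses each taxon on all others with the Lasso; the non-zero coefficients define that node's neighborhood.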
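The two estimators can be sketched in Python with scikit-learn, used here as a stand-in for the SpiecEasi R package. The data are synthetic (taxon 1 is made to depend on taxon 0), and the penalties `alpha=0.2` and `alpha=0.1` are arbitrary illustrative choices rather than StARS-selected values.

```python
import numpy as np
from sklearn.covariance import GraphicalLasso
from sklearn.linear_model import Lasso

# Synthetic stand-in for CLR-transformed data: taxon 1 depends on taxon 0.
rng = np.random.default_rng(1)
n, p = 60, 10
Z = rng.normal(size=(n, p))
Z[:, 1] += 0.9 * Z[:, 0]
Z = (Z - Z.mean(axis=0)) / Z.std(axis=0)    # put all taxa on a common scale

# GLASSO: l1-penalized maximum-likelihood estimate of the precision matrix.
Theta = GraphicalLasso(alpha=0.2).fit(Z).precision_
glasso_edges = {(i, j) for i in range(p) for j in range(i + 1, p)
                if abs(Theta[i, j]) > 1e-8}

# MB: Lasso-regress each taxon on all others; non-zero coefficients are neighbors.
B = np.zeros((p, p))
for j in range(p):
    others = np.delete(np.arange(p), j)
    B[j, others] = Lasso(alpha=0.1).fit(Z[:, others], Z[:, j]).coef_

# Symmetrize with the "OR" rule: keep i-j if either regression selected the other.
mb_edges = {(i, j) for i in range(p) for j in range(i + 1, p)
            if B[i, j] != 0 or B[j, i] != 0}

print(sorted(glasso_edges), sorted(mb_edges))
```

The "OR" rule is one of two common symmetrization choices for MB; an "AND" rule (both regressions must select the pair) gives sparser, more conservative graphs.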

Key Experimental Protocol: SPIEC-EASI Network Inference

- Input: An \(n \times p\) count or relative abundance matrix (samples × taxa). Pre-filter to a manageable number of prevalent taxa (e.g., >10% prevalence).

- Preprocessing: Optionally rarefy (a controversial practice) or convert counts to proportions. Add a pseudo-count (e.g., 0.5) so that zero values can be log-transformed.

- CLR Transformation: Compute the geometric mean of each sample and take the log of each taxon's ratio to it.

- Sparse Inverse Covariance Estimation:

  - Choose `method='glasso'` or `method='mb'`.

  - Use StARS (`pulsar` package) or another stability-based criterion to select the regularization parameter \(\lambda\) that yields the most stable edge set.

  - Fit the model to obtain the precision matrix \(\Theta\).

- Network Construction: The \(\ell_1\) penalty at the selected \(\lambda\) already shrinks most entries of the precision matrix to exactly zero, so no separate threshold is needed. The non-zero off-diagonal entries become undirected edges in the network.

- Validation: Use cross-validation, edge stability assessment, or comparison against synthetic data with a known ground truth (e.g., simulations built with SPIEC-EASI's `SpiecEasi::makeGraph`).
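The protocol can be condensed into a short Python sketch. This is not the SpiecEasi or pulsar implementation: it uses scikit-learn's `GraphicalLasso` as the estimator and a simplified StARS-style rule (subsample, record how often each edge is selected, and choose the smallest penalty whose monotonized instability stays at or below β = 0.05). The count table, penalty grid, number of subsamples, and subsample-size heuristic are all illustrative assumptions, as is standardizing the CLR data so the penalty acts on a correlation scale.

```python
import numpy as np
from sklearn.covariance import GraphicalLasso

def clr(counts, pseudocount=0.5):
    logx = np.log(np.asarray(counts, dtype=float) + pseudocount)
    return logx - logx.mean(axis=1, keepdims=True)

def edge_matrix(Z, alpha):
    """Boolean upper-triangular adjacency from a GLASSO fit at penalty alpha."""
    Theta = GraphicalLasso(alpha=alpha, max_iter=200).fit(Z).precision_
    return np.triu(np.abs(Theta) > 1e-8, k=1)

def stars_select(Z, alphas, n_sub=10, beta=0.05, seed=0):
    """StARS-style choice: the smallest penalty whose edge instability stays <= beta."""
    rng = np.random.default_rng(seed)
    n, p = Z.shape
    b = int(min(10 * np.sqrt(n), 0.8 * n))          # subsample size (heuristic)
    n_pairs = p * (p - 1) / 2
    chosen, instability = max(alphas), 0.0
    for alpha in sorted(alphas, reverse=True):      # from sparse to dense graphs
        freq = np.zeros((p, p))
        for _ in range(n_sub):
            idx = rng.choice(n, size=b, replace=False)
            freq += edge_matrix(Z[idx], alpha)
        theta = freq / n_sub                         # edge selection frequency
        D = np.sum(2 * theta * (1 - theta)) / n_pairs
        instability = max(instability, D)            # monotonized, as in StARS
        if instability > beta:
            break
        chosen = alpha
    return chosen

# Hypothetical count table: n = 80 samples x p = 12 taxa; taxon 11 is rare.
rng = np.random.default_rng(2)
counts = rng.poisson(lam=20, size=(80, 12))
counts[5:, 11] = 0

keep = (counts > 0).mean(axis=0) > 0.10              # prevalence filter (>10%)
Z = clr(counts[:, keep])                             # pseudo-count + CLR
Z = (Z - Z.mean(axis=0)) / Z.std(axis=0)             # correlation scale (sketch choice)

alphas = [0.4, 0.3, 0.2, 0.1]
best_alpha = stars_select(Z, alphas)
edges = np.argwhere(edge_matrix(Z, best_alpha))      # (i, j) pairs = network edges
print(keep.sum(), best_alpha, len(edges))
```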

Diagram 1: SPIEC-EASI workflow for network inference.

Quantitative Data & Performance

Table 1: Comparison of Network Inference Methods in High-Dimensional (p>>n) Simulations

| Method | Data Type Assumption | Core Algorithm | Key Strength | Key Limitation (p>>n context) | Typical F1-Score* (p=200, n=100) |
|---|---|---|---|---|---|
| SPIEC-EASI (MB) | Compositional | Sparse Regression | Fast, good control of false discoveries | Sensitive to tuning parameter choice | 0.72 |
| SPIEC-EASI (GLASSO) | Compositional | Penalized Likelihood | Stable, single convex optimization | Computationally intensive for very large p | 0.68 |
| SparCC | Compositional | Variance Decomposition | Designed for sparse compositions | Assumes low average correlation; can be biased | 0.55 |
| CCLasso | Compositional | Penalized Likelihood | Handles zeros via covariance correction | Performance degrades with high zero proportion | 0.62 |
| Pearson Correlation | Arbitrary | Covariance | Simple, fast | Ignores compositionality; many spurious edges | 0.38 |
| MIC | Arbitrary | Information Theory | Captures non-linear relationships | Extremely high false-positive rate when p>>n | 0.41 |

*F1-Score (harmonic mean of Precision & Recall) based on benchmark studies using synthetic microbial data with known network structure (e.g., SPIEC-EASI publications). Scores are illustrative.
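The edge-recovery scores in benchmarks like this one compare an inferred edge set against the known simulated network. A minimal Python sketch of that comparison follows; the edge lists are hypothetical, and edges are treated as unordered pairs because the networks are undirected.

```python
def edge_f1(true_edges, inferred_edges):
    """Precision, recall and F1 for undirected edge sets (order of a pair is ignored)."""
    true_set = {frozenset(e) for e in true_edges}
    pred_set = {frozenset(e) for e in inferred_edges}
    tp = len(true_set & pred_set)                       # correctly recovered edges
    precision = tp / len(pred_set) if pred_set else 0.0
    recall = tp / len(true_set) if true_set else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return precision, recall, f1

truth = [(0, 1), (1, 2), (2, 3), (3, 4)]   # hypothetical ground-truth chain
inferred = [(1, 0), (2, 3), (0, 4)]        # (1, 0) matches (0, 1)
prec, rec, f1 = edge_f1(truth, inferred)
print(prec, rec, f1)  # precision 2/3, recall 1/2, F1 = 4/7
```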

Table 2: Impact of Dimensionality (p/n ratio) on Network Inference Accuracy

| p (Features) | n (Samples) | p/n Ratio | Average Node Degree Recovered | Precision (SPIEC-EASI MB) | Recall (SPIEC-EASI MB) | Computational Time (min)* |
|---|---|---|---|---|---|---|
| 50 | 100 | 0.5 | 4.8 | 0.92 | 0.85 | <1 |
| 200 | 100 | 2.0 | 3.2 | 0.81 | 0.66 | ~5 |
| 500 | 100 | 5.0 | 1.5 | 0.73 | 0.41 | ~30 |
| 1000 | 100 | 10.0 | 0.7 | 0.65 | 0.22 | ~120 |

*Benchmarked on a standard 8-core workstation. Time includes StARS stability selection.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for Microbial Network Analysis

| Item Name / Solution | Function & Relevance in High-Dimensional Inference |
|---|---|
| QIIME 2 / mothur / DADA2 | Primary pipelines for processing raw sequencing reads into an Amplicon Sequence Variant (ASV) or OTU table, the foundational n × p data matrix. |
| SpiecEasi R Package | Core implementation of the SPIEC-EASI pipeline, including CLR transformation, GLASSO/MB, and StARS stability selection. |
| NetCoMi R Package | Comprehensive toolbox for network construction (including SPIEC-EASI), comparison, differential network analysis, and visualization. |
| gRbase / igraph / qgraph R Packages | Libraries for manipulating and visualizing graphical models and networks (igraph), and modeling probabilistic graphical structures (gRbase). |
| pulsar R Package | Implements the StARS (Stability Approach to Regularization Selection) method for robust hyperparameter (λ) tuning in high-dimensional settings. |
| Synthetic Data with Known Network (e.g., SPIEC-EASI makeGraph, seqtime R package) | Critical for method validation and benchmarking in the absence of a biological ground truth, especially under p>>n conditions. |
| High-Performance Computing (HPC) Cluster Access | Necessary for computationally intensive steps (StARS, bootstrapping) with large p or for running multiple method comparisons. |
| Compositional Data Analysis (CoDA) Libraries (compositions, robCompositions R packages) | Provide alternative transformations and robust methods for handling zeros and outliers in compositional data prior to inference. |

Advanced Considerations and Protocol Extensions

Differential Network Analysis: To compare networks between two conditions (e.g., Healthy vs. Disease), a common protocol is:

- Infer networks separately for each group using SPIEC-EASI.

- Use the NetCoMi package's `netCompare` function to statistically test global (e.g., edge weight correlation, robustness) and local (e.g., node centrality, specific edge differences) properties via permutation tests.

- Validate differential edges by examining their stability across bootstrap replicates.
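The permutation logic behind such comparisons can be sketched in Python. This is a simplified analogue, not NetCoMi's `netCompare`: each group's network is a GLASSO partial-correlation matrix (scikit-learn, arbitrary `alpha=0.2`), the test statistic is a hypothetical global distance (sum of absolute partial-correlation differences), and group labels are shuffled to build the null distribution. The two-group data are synthetic, with one edge present only in the "disease" group.

```python
import numpy as np
from sklearn.covariance import GraphicalLasso

def partial_corr(Z, alpha=0.2):
    """Partial correlations -Theta_ij / sqrt(Theta_ii * Theta_jj), diagonal set to 0."""
    Theta = GraphicalLasso(alpha=alpha).fit(Z).precision_
    d = np.sqrt(np.diag(Theta))
    P = -Theta / np.outer(d, d)
    np.fill_diagonal(P, 0.0)
    return P

def network_distance(Z, labels):
    """Hypothetical global statistic: total absolute difference between group networks."""
    return np.abs(partial_corr(Z[labels == 0]) - partial_corr(Z[labels == 1])).sum()

def permutation_test(Z, labels, n_perm=99, seed=0):
    rng = np.random.default_rng(seed)
    observed = network_distance(Z, labels)
    null = [network_distance(Z, rng.permutation(labels)) for _ in range(n_perm)]
    # Add-one correction so the p-value is never exactly zero.
    p_value = (1 + sum(s >= observed for s in null)) / (n_perm + 1)
    return observed, p_value

# Synthetic groups: taxa 0 and 1 are linked only in the "disease" group.
rng = np.random.default_rng(3)
n, p = 50, 6
healthy = rng.normal(size=(n, p))
disease = rng.normal(size=(n, p))
disease[:, 1] = 0.8 * disease[:, 0] + 0.6 * rng.normal(size=n)
Z = np.vstack([healthy, disease])
labels = np.repeat([0, 1], n)

observed, p_value = permutation_test(Z, labels)
print(round(observed, 3), p_value)
```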

Handling Zeros: The zero problem in sequencing data is exacerbated in p>>n settings. A detailed protocol involves:

- Simple Replacement: Add a uniform pseudo-count (e.g., 0.5) to all counts.

- Multiplicative Replacement: Use the `zCompositions` R package for more sophisticated imputation of zeros.

- Model-Based Approach: Use a Bayesian-multiplicative replacement or a hurdle model (as in the `microbial` R package) before CLR transformation.
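The idea behind multiplicative replacement can be sketched in a few lines of Python. This is not the `zCompositions` implementation: it assumes a single fixed replacement value δ per row, with a default of 1/p² (a commonly used choice, treated here as an assumption). Zeros become δ and the non-zero parts are scaled by (1 - total δ added), so each row still sums to 1 and the ratios among observed taxa are preserved.

```python
import numpy as np

def multiplicative_replacement(counts, delta=None):
    """Replace zeros with delta and rescale non-zero parts so rows still sum to 1."""
    x = np.asarray(counts, dtype=float)
    x = x / x.sum(axis=1, keepdims=True)        # closure to proportions
    p = x.shape[1]
    if delta is None:
        delta = 1.0 / p**2                      # assumed default for this sketch
    is_zero = x == 0
    n_zero = is_zero.sum(axis=1, keepdims=True)
    # Non-zero parts shrink by the total mass handed to the zeros in that row.
    return np.where(is_zero, delta, x * (1 - n_zero * delta))

R = multiplicative_replacement([[0, 5, 15], [10, 0, 0]])
print(R)
print(R.sum(axis=1))  # each row sums to 1
```

Unlike a uniform pseudo-count added to raw counts, this keeps the ratio between any two observed taxa unchanged (15/5 = 3 in the first row), which matters because CLR is built entirely from such ratios.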

Diagram 2: Core challenges and solutions for p>>n inference.

Within the thesis on the challenges of p >> n in microbiome research, SPIEC-EASI represents a principled statistical solution that directly addresses both the dimensionality crisis through sparse inverse covariance estimation and the compositional nature of the data via the CLR transformation. While not a panacea, its stability-driven framework provides a robust foundation for generating testable hypotheses about microbial ecological interactions from high-dimensional, low-sample-size data. Future directions involve integrating phylogenetic information, multi-omic data layers (metabolomics, metatranscriptomics), and developing more powerful methods for differential network analysis in this challenging context.

Microbiome research, particularly in therapeutic development, is characterized by the "large p, small n" problem, where the number of features (p; e.g., microbial taxa, genes) vastly exceeds the number of samples (n). This high-dimensional data landscape, often with p>>n, presents significant challenges: increased risk of overfitting, multicollinearity, and computational complexity. This technical guide examines three robust machine learning (ML) algorithms—Random Forests, Support Vector Machines (SVMs), and XGBoost—that are particularly suited for constructing predictive models from such complex, sparse biological data.

Core Algorithmic Frameworks & Suitability for Microbiome Data

Random Forests (RF)

An ensemble method that constructs many decision trees during training, each on a bootstrap sample of the data. It is comparatively robust to high dimensionality because only a random subset of features is considered at each split, which decorrelates the trees and reduces overfitting.

Key Mechanism for p>>n: The mtry parameter controls the number of randomly selected features considered for splitting a node (typically √p for classification). This random subspace method is critical for high-dimensional data.
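In scikit-learn (used here in place of R's randomForest), `mtry` corresponds to `max_features`, and `max_features="sqrt"` gives the √p default for classification. The sketch below is illustrative: the data are random with only two informative features, and the tree count is arbitrary. It also reports the out-of-bag accuracy, a bootstrap-based estimate that requires no separate validation split.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

# Hypothetical p >> n data: 500 features, 60 samples, two informative features.
rng = np.random.default_rng(0)
n, p = 60, 500
X = rng.normal(size=(n, p))
y = (X[:, 0] + X[:, 1] > 0).astype(int)

rf = RandomForestClassifier(
    n_estimators=300,
    max_features="sqrt",   # features tried per split: sqrt(500), about 22
    oob_score=True,        # accuracy on samples left out of each bootstrap
    random_state=0,
).fit(X, y)

# Top features by mean decrease in impurity (Gini importance).
top5 = np.argsort(rf.feature_importances_)[::-1][:5]
print(round(rf.oob_score_, 2), top5)
```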

Support Vector Machines (SVM)

SVMs identify a hyperplane that maximizes the margin between classes in a transformed feature space. Kernel functions (e.g., linear, radial basis function) allow efficient computation in high-dimensional spaces without explicit transformation.

Key Mechanism for p>>n: Regularization parameter (C) controls the trade-off between achieving a low error on training data and minimizing model complexity, which is vital to prevent overfitting when n is small. For very high p, linear kernels often perform well.
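A minimal scikit-learn sketch of tuning C for a linear-kernel SVM on synthetic p >> n data. The three C values and the data are illustrative assumptions. Standardization sits inside the pipeline so it is re-fitted within each cross-validation fold rather than on the full dataset.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Hypothetical data: 1,000 features, 80 samples, label driven by 5 features.
rng = np.random.default_rng(1)
n, p = 80, 1000
X = rng.normal(size=(n, p))
y = (X[:, :5].sum(axis=1) > 0).astype(int)

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
results = {}
for C in (0.001, 0.1, 10.0):
    # Small C: wider margin, stronger regularization; large C: fits training data harder.
    model = make_pipeline(StandardScaler(), SVC(kernel="linear", C=C))
    results[C] = cross_val_score(model, X, y, cv=cv, scoring="roc_auc").mean()

for C, auc in results.items():
    print(C, round(auc, 2))
```

When p > n the training data are almost always linearly separable, so beyond some value of C the solution stops changing; the useful part of the search range is usually toward the small-C end.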

XGBoost (eXtreme Gradient Boosting)

A gradient-boosting framework that builds trees sequentially, where each new tree corrects errors of the ensemble. It incorporates advanced regularization (L1/L2) to control model complexity.

Key Mechanism for p>>n: Regularization terms in the objective function (L1/L2 penalties on leaf weights and a penalty on the number of leaves) constrain model complexity. Its built-in feature importance scores (e.g., gain) help identify the informative features among the redundant or irrelevant ones common in microbiome datasets.

Recent benchmark studies on microbiome datasets (e.g., 16S rRNA amplicon, metagenomic shotgun) comparing these algorithms yield the following insights:

Table 1: Comparative Performance of ML Algorithms on Microbiome Classification Tasks (p>>n)

| Metric / Algorithm | Random Forest | Support Vector Machine (RBF Kernel) | XGBoost |
|---|---|---|---|
| Avg. Accuracy (CV) | 0.82 (±0.05) | 0.79 (±0.07) | 0.85 (±0.04) |
| Avg. AUC-ROC | 0.88 (±0.04) | 0.85 (±0.06) | 0.90 (±0.03) |
| Feature Selection Capability | High (Impurity-based) | Low (Requires pre-filtering) | Very High (Gain-based) |
| Interpretability | Moderate | Low | Moderate |
| Training Time (Relative) | Medium | High (for large n) | Medium-High |
| Robustness to Noise | High | Medium | Medium |

Table 2: Typical Hyperparameter Ranges for Microbiome Data (p >> n)

| Algorithm | Critical Hyperparameter | Recommended Search Range (p>>n context) | Primary Effect |
|---|---|---|---|
| Random Forest | mtry (features per split) | [√p, p/3] | Controls decorrelation |
| | n_estimators | [500, 2000] | Number of trees |
| SVM | C (regularization) | [1e-3, 1e3] (log scale) | Margin hardness |
| | gamma (RBF kernel) | [1e-5, 1e-1] (log scale) | Kernel influence |
| XGBoost | max_depth | [3, 6] | Tree complexity |
| | learning_rate (eta) | [0.01, 0.1] | Step-size shrinkage |
| | subsample | [0.7, 0.9] | Prevents overfitting |
| | colsample_bytree | [0.5, 0.8] | Feature subsampling |

Detailed Experimental Protocol for Benchmarking

Objective: To compare the classification performance of RF, SVM, and XGBoost on a microbiome dataset with a case-control phenotype.

Materials & Data:

- Dataset: Fecal microbiome 16S rRNA data (e.g., from an IBD study). Dimensions: n=150 samples, p=5,000 OTUs (Operational Taxonomic Units).

- Phenotype: Binary classification (e.g., Healthy vs. Crohn's Disease).

Step-by-Step Methodology:

Preprocessing & Feature Filtering:

- Normalization: Convert OTU counts to relative abundances (or apply a CSS or CLR transformation).

- Low Variance Filter: Remove OTUs present in <10% of samples or with near-zero variance.

- Address Sparsity: Apply a prevalence filter or consider zero-inflated Gaussian models as an alternative preprocessing step.

- Train-Test Split: 70/30 stratified split to preserve class distribution.
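The preprocessing steps can be sketched in Python with NumPy and scikit-learn. The OTU table is simulated with the protocol's dimensions (n = 150, p = 5,000) and taxon-specific prevalences; the phenotype labels are placeholders. Thresholds follow the text (keep OTUs present in at least 10% of samples, drop near-constant ones).

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Hypothetical sparse OTU table with the protocol's dimensions.
rng = np.random.default_rng(3)
n, p = 150, 5000
presence = rng.random((n, p)) < rng.beta(0.5, 3.0, size=p)   # taxon-specific prevalence
counts = rng.poisson(50, size=(n, p)) * presence
y = rng.integers(0, 2, size=n)                                # placeholder case/control labels

# Normalization: relative abundance per sample.
rel = counts / counts.sum(axis=1, keepdims=True)

# Prevalence / low-variance filter: present in >= 10% of samples and not constant.
prevalence = (counts > 0).mean(axis=0)
keep = (prevalence >= 0.10) & (rel.var(axis=0) > 1e-12)
X = rel[:, keep]

# 70/30 split, stratified so both parts keep the case/control ratio.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)
print(X.shape, X_train.shape, X_test.shape)
```

Both filters here ignore the labels, so applying them before the split does not leak outcome information; label-dependent selection is a different matter and belongs inside the training folds.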

Dimensionality Reduction (Optional but Common):

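- Apply a univariate filter (e.g., a differential-abundance method such as ANCOM-BC or DESeq2) to select the top ~500-1000 discriminatory features. Alternatively, use PCA on CLR-transformed data, retaining the components that explain 80% of the variance. Because both steps are learned from the data (and the filter also uses the labels), fit them within each training fold only; applying them to the full dataset before cross-validation leaks information and inflates performance estimates.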
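A leakage-safe way to express either option in scikit-learn is to put the reduction step inside a `Pipeline`, so it is re-fitted on each training fold. In this sketch `SelectKBest(f_classif)` is a simple univariate F-test standing in for ANCOM-BC or DESeq2 (which are R tools), and `PCA(n_components=0.80)` keeps enough components to explain 80% of the variance. The data and labels are random, so an honest cross-validated AUC should hover around 0.5; a clearly higher value on data like this is a warning sign of leakage.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import FunctionTransformer

def clr(X, pseudocount=0.5):
    logx = np.log(X + pseudocount)
    return logx - logx.mean(axis=1, keepdims=True)

# Hypothetical counts with random labels (no real signal).
rng = np.random.default_rng(4)
X = rng.poisson(5, size=(100, 800)).astype(float)
y = rng.integers(0, 2, size=100)

pca_model = make_pipeline(
    FunctionTransformer(clr),        # per-sample transform, no fitted state
    PCA(n_components=0.80),          # fitted on each training fold only
    RandomForestClassifier(n_estimators=200, random_state=0),
)
filter_model = make_pipeline(
    FunctionTransformer(clr),
    SelectKBest(f_classif, k=500),   # label-dependent, so it must stay inside CV
    RandomForestClassifier(n_estimators=200, random_state=0),
)

scores = {}
for name, model in [("pca", pca_model), ("filter", filter_model)]:
    scores[name] = cross_val_score(model, X, y, cv=5, scoring="roc_auc").mean()
print({k: round(v, 2) for k, v in scores.items()})
```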

Model Training with Nested Cross-Validation:

- Outer Loop: 5-fold CV for performance estimation.

- Inner Loop: 3-fold CV within each training fold for hyperparameter tuning via grid/random search (see Table 2 for ranges).

- Class Imbalance: Apply `class_weight='balanced'` (RF, SVM) or `scale_pos_weight` (XGBoost).
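Nested cross-validation maps directly onto scikit-learn by wrapping a `GridSearchCV` (inner 3-fold loop) inside `cross_val_score` (outer 5-fold loop). The sketch below uses a Random Forest with `class_weight="balanced"` and the √p and p/3 endpoints of the `mtry` range from Table 2. The synthetic data (imbalanced, roughly 1 case to 3 controls) and the reduced `n_estimators`, chosen for speed, are assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score

# Hypothetical training data with an imbalanced binary outcome.
rng = np.random.default_rng(5)
n, p = 105, 300
X = rng.normal(size=(n, p))
y = (X[:, 0] + 0.5 * rng.normal(size=n) > 0.8).astype(int)

inner = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)   # tuning loop
outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)   # performance loop

# mtry endpoints from Table 2; n_estimators kept small for speed.
param_grid = {"max_features": [int(np.sqrt(p)), p // 3], "n_estimators": [100]}
tuned_rf = GridSearchCV(
    RandomForestClassifier(class_weight="balanced", random_state=0),
    param_grid, cv=inner, scoring="roc_auc")

# Each outer fold re-runs the whole inner search on its own training portion only.
outer_auc = cross_val_score(tuned_rf, X, y, cv=outer, scoring="roc_auc")
print(round(outer_auc.mean(), 2), round(outer_auc.std(), 2))
```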

Model Evaluation:

- Primary Metrics: Calculate Accuracy, AUC-ROC, Precision, Recall, F1-Score on the held-out test set.

- Statistical Significance: Use DeLong's test to compare AUC-ROC values between algorithms.

- Feature Importance: Extract and compare top 20 discriminatory features from RF (mean decrease Gini) and XGBoost (gain).
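The evaluation step can be sketched with scikit-learn metrics on a held-out 30% test set, followed by extraction of the top 20 features by Random Forest impurity importance (mean decrease in Gini). The data are synthetic placeholders. DeLong's test is not part of scikit-learn and is not covered here; in R it is available, for example, through the pROC package.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import (accuracy_score, f1_score, precision_score,
                             recall_score, roc_auc_score)
from sklearn.model_selection import train_test_split

# Hypothetical data: label driven by features 3 and 7 plus noise.
rng = np.random.default_rng(6)
X = rng.normal(size=(150, 400))
y = (X[:, 3] - X[:, 7] + 0.5 * rng.normal(size=150) > 0).astype(int)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

rf = RandomForestClassifier(n_estimators=300, class_weight="balanced",
                            random_state=0).fit(X_train, y_train)
y_pred = rf.predict(X_test)
y_score = rf.predict_proba(X_test)[:, 1]   # probability of the positive class, for AUC

metrics = {
    "accuracy": accuracy_score(y_test, y_pred),
    "auc_roc": roc_auc_score(y_test, y_score),
    "precision": precision_score(y_test, y_pred, zero_division=0),
    "recall": recall_score(y_test, y_pred, zero_division=0),
    "f1": f1_score(y_test, y_pred, zero_division=0),
}
# Top 20 features by mean decrease in impurity (Gini importance).
top20 = np.argsort(rf.feature_importances_)[::-1][:20]
print({k: round(v, 2) for k, v in metrics.items()}, top20[:5])
```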

Validation:

- Perform external validation on a completely independent cohort if available.

Visualizing Model Workflows and Decision Logic

Title: Random Forest Training Workflow for High-Dimensional Data

Title: SVM Kernel Trick for High-Dimensional Microbiome Data