Taming the Microbial Jungle: A Strategic Guide to Feature Selection for High-Dimensional Microbiome Data


Abstract

High-dimensional microbiome datasets present a formidable challenge for researchers seeking to identify true biological signals. This article provides a comprehensive guide to feature selection, a critical step for robust biomarker discovery and predictive modeling. We first establish the core challenges of microbiome data, including sparsity, compositionality, and noise. We then detail and compare modern methodological approaches, from traditional statistical filters to advanced machine learning wrappers and embedded methods. A dedicated troubleshooting section addresses common pitfalls like overfitting and batch effects, offering practical optimization strategies. Finally, we discuss rigorous validation protocols, comparative performance metrics, and best practices for translating microbial features into reliable biological insights for clinical and pharmaceutical applications.

Why Feature Selection is Your First, Most Critical Step in Microbiome Analysis

Troubleshooting Guides & FAQs

Q1: After 16S rRNA sequencing and processing with DADA2, my feature table contains over 10,000 ASVs. How do I begin reducing this dimensionality for meaningful statistical analysis without losing biological signal?

A: Initial dimensionality reduction should be multi-step. First, apply prevalence and variance filtering.

- Protocol: Filter out ASVs present in fewer than 10% of samples (prevalence) or with a total count across all samples below a threshold (e.g., 20 reads). This removes rare, potentially spurious features. Then transform the filtered counts, either with a variance-stabilizing transformation (VST) such as DESeq2's `varianceStabilizingTransformation` or with a centered log-ratio (CLR) transformation after adding a pseudocount. The VST mainly mitigates heteroscedasticity; the CLR works in log-ratio space and so also addresses compositionality. Subsequent Principal Coordinates Analysis (PCoA) on a robust distance metric (e.g., Aitchison) will reveal whether major sample clusters exist.
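The filtering, CLR, and Aitchison-distance steps above can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the DADA2 or DESeq2 implementation: the function names, the 1-read pseudocount, and the samples-as-rows layout are all choices made for the example.

```python
import math

def filter_features(counts, min_prevalence=0.10, min_total=20):
    """Keep features present (count > 0) in at least `min_prevalence` of samples
    AND with at least `min_total` reads summed over all samples.
    `counts` is a list of samples, each a list of per-feature read counts."""
    n_samples = len(counts)
    keep = []
    for j in range(len(counts[0])):
        column = [row[j] for row in counts]
        prevalence = sum(1 for c in column if c > 0) / n_samples
        if prevalence >= min_prevalence and sum(column) >= min_total:
            keep.append(j)
    filtered = [[row[j] for j in keep] for row in counts]
    return keep, filtered

def clr(sample, pseudocount=1.0):
    """Centered log-ratio: log(count + pseudocount) minus the sample's mean log."""
    logs = [math.log(c + pseudocount) for c in sample]
    mean_log = sum(logs) / len(logs)
    return [v - mean_log for v in logs]

def aitchison(a, b, pseudocount=1.0):
    """Aitchison distance: Euclidean distance between the CLR vectors of two samples."""
    ca, cb = clr(a, pseudocount), clr(b, pseudocount)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(ca, cb)))

# Hypothetical toy table: 3 samples x 4 ASVs
table = [[10, 0, 5, 0], [20, 0, 3, 1], [15, 1, 0, 0]]
kept, filtered = filter_features(table, min_prevalence=0.5, min_total=5)
print(kept)  # [0, 2]
```

Because CLR subtracts each sample's mean log, the Aitchison distance ignores overall sequencing depth: without a pseudocount, the samples `[1, 2, 4]` and `[2, 4, 8]` are at distance 0.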

Q2: My PERMANOVA results on beta-diversity are significant, but I cannot identify which specific ASVs are driving the separation between my treatment and control groups. What feature selection methods are robust for microbiome count data?

A: For high-dimensional microbiome data, regularized regression models are effective.

- Protocol: LASSO-Penalized Logistic Regression.

- Data Preparation: Aggregate ASVs to genus level to reduce sparsity, then apply a CLR transformation. Create a phenotype vector (e.g., Treatment=1, Control=0).

- Model Fitting: Use the `glmnet` package in R with `family = "binomial"`, because the response is the binary phenotype and the taxa are the predictors. Set `alpha = 1` for the LASSO penalty. Negative binomial (count) models apply only when a taxon's counts are the response variable.

- Cross-Validation: Perform 10-fold cross-validation (`cv.glmnet`) to identify the optimal lambda value (λ) that minimizes the deviance.

- Feature Extraction: Extract the non-zero coefficients from the model fitted with `lambda.min` or `lambda.1se` (the sparser choice). These ASVs/genera are the selected discriminative features.
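For a binary phenotype, the LASSO step amounts to L1-penalized logistic regression. The sketch below swaps in proximal gradient descent (ISTA) for the coordinate descent that `glmnet` uses, so its coefficients approximate `glmnet` output but will not match it exactly. The step size, iteration count, function names, and toy data are assumptions; predictors are assumed to be already transformed (e.g., CLR) and standardized.

```python
import math

def soft_threshold(z, t):
    """Proximal operator of the L1 penalty: shrink z toward 0 by t."""
    if z > t:
        return z - t
    if z < -t:
        return z + t
    return 0.0

def lasso_logistic(X, y, lam, step=0.1, n_iter=2000):
    """Minimize mean logistic loss + lam * sum(|beta_j|) with proximal gradient steps.
    X: list of samples (rows); y: list of 0/1 labels. The intercept is not penalized."""
    n, p = len(X), len(X[0])
    b0, beta = 0.0, [0.0] * p
    for _ in range(n_iter):
        g0, g = 0.0, [0.0] * p
        for xi, yi in zip(X, y):
            eta = b0 + sum(bj * xj for bj, xj in zip(beta, xi))
            resid = 1.0 / (1.0 + math.exp(-eta)) - yi  # predicted probability minus label
            g0 += resid / n
            for j in range(p):
                g[j] += resid * xi[j] / n
        b0 -= step * g0  # plain gradient step for the unpenalized intercept
        beta = [soft_threshold(beta[j] - step * g[j], step * lam) for j in range(p)]
    return b0, beta

def selected(beta, tol=1e-8):
    """Indices of non-zero coefficients, i.e. the selected features."""
    return [j for j, b in enumerate(beta) if abs(b) > tol]

# Hypothetical toy data: feature 0 separates the groups, feature 1 is noise
X = [[1.0, 0.5], [1.0, -0.5], [-1.0, 0.5], [-1.0, -0.5]]
y = [1, 1, 0, 0]
_, beta_small = lasso_logistic(X, y, lam=0.05)
_, beta_large = lasso_logistic(X, y, lam=1.0)
print(selected(beta_small), selected(beta_large))  # [0] []
```

With a small penalty the noise feature is dropped and the informative one kept; with a large penalty every coefficient is shrunk to zero. Cross-validation chooses λ between these extremes.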

Q3: I suspect my intervention affects a known metabolic pathway. How can I perform feature selection that incorporates prior biological knowledge (e.g., KEGG pathways)?

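A: Knowledge-informed feature selection can be approached in two ways: select a compact microbial signature (e.g., with SELBAL) and then interpret it functionally against pathway databases, or run pathway enrichment on pre-selected features.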

- Protocol: Balance Selection with SELBAL.

- Input: ASV (or genus-level) count table and metadata with groups. Supply counts rather than CLR-transformed values; selbal performs its own zero replacement and log-ratio computation.

- Execution: Use the `selbal` package (e.g., `selbal.cv()`). The algorithm identifies a balance (a log-ratio between two groups of ASVs) that best discriminates your conditions.

- Output: The result is not a single ASV but a microbial signature (balance) composed of a positively weighted (numerator) and a negatively weighted (denominator) group of ASVs. These groups can be mapped to KEGG modules via tools like PICRUSt2 or Tax4Fun2 for functional interpretation.
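The quantity SELBAL searches over can be written down directly. The sketch below computes the standard isometric log-ratio (ilr) balance between a numerator group and a denominator group of taxa, sqrt(r·s/(r+s)) · ln(geometric mean of numerator / geometric mean of denominator). Whether selbal applies exactly this normalizing constant internally is an assumption, the example signature and abundances are hypothetical, and the input must be zero-free (e.g., after zero replacement).

```python
import math

def balance(sample, num_idx, den_idx):
    """ilr balance: sqrt(r*s/(r+s)) * ln(geometric mean of numerator taxa /
    geometric mean of denominator taxa). `sample` must contain no zeros."""
    r, s = len(num_idx), len(den_idx)
    mean_log_num = sum(math.log(sample[i]) for i in num_idx) / r
    mean_log_den = sum(math.log(sample[i]) for i in den_idx) / s
    return math.sqrt(r * s / (r + s)) * (mean_log_num - mean_log_den)

# Hypothetical signature: taxon 0 in the numerator, taxa 1 and 2 in the denominator
group_a = [[40, 5, 5], [30, 4, 6]]
group_b = [[5, 20, 25], [6, 30, 20]]
scores_a = [balance(x, [0], [1, 2]) for x in group_a]
scores_b = [balance(x, [0], [1, 2]) for x in group_b]
print(min(scores_a) > max(scores_b))  # True: this balance separates the groups
```

Because a balance is a log-ratio, multiplying a whole sample by a constant (e.g., deeper sequencing) leaves its value unchanged.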

Q4: My dataset has multiple confounding factors (age, BMI, batch). How can I select features associated primarily with the disease state?

A: Use a model that can adjust for covariates.

- Protocol: Multivariable Association Model with MaAsLin2.

- Setup: Prepare your ASV table (recommended: TSS normalized then log-transformed) and metadata file.

- Model Specification: In MaAsLin2, pass your primary variable (e.g., DiseaseStatus) and all confounders (e.g., Age, BMI, Batch) together as fixed effects (the `fixed_effects` argument).

- Run & Interpretation: MaAsLin2 fits one linear model per feature and corrects for multiple testing (Benjamini-Hochberg by default). The output table lists ASVs significantly associated with DiseaseStatus after adjusting for the specified confounders.
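The Benjamini-Hochberg adjustment applied to the per-feature p-values can be reproduced in a few lines. This standalone sketch should agree with R's `p.adjust(p, method = "BH")`; the function name and p-values are illustrative.

```python
def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0  # adjusted values are capped at 1
    # Walk from the largest p-value down so adjusted values stay monotone
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

print(benjamini_hochberg([0.01, 0.04, 0.03, 0.20]))  # approximately [0.04, 0.0533, 0.0533, 0.2]
```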

Q5: How do I validate my selected microbial biomarkers from a discovery cohort in an independent validation cohort?

A: Validation requires locking down the model and testing it on new data.

- Protocol: Cross-Cohort Validation.

- Train Model in Discovery Cohort: Using your chosen method (e.g., LASSO), fit the model on the full discovery dataset. Save the exact model parameters (including coefficients, normalization factors, and transformation rules).

- Apply to Validation Cohort: Transform the validation cohort ASV data using the parameters learned from the discovery cohort (e.g., the same mean for centering, the same ASV list). Generate predictions using the saved model.

- Assess Performance: Calculate performance metrics (AUC-ROC, Accuracy, Sensitivity/Specificity) on the validation cohort predictions. A significant drop in performance indicates potential overfitting in discovery.
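"Locking down" the model means storing every parameter learned in discovery and reusing it unchanged on the validation cohort. The sketch below freezes a feature list plus per-feature means and SDs, applies them to validation samples, and computes AUC-ROC with the Mann-Whitney formulation. The names, the dictionary-per-sample layout, and the rule of filling absent taxa with 0.0 are assumptions for illustration.

```python
import statistics

def freeze_preprocessing(feature_names, X_discovery):
    """Record everything learned in the discovery cohort: feature list, means, SDs."""
    columns = list(zip(*X_discovery))
    means = [statistics.fmean(col) for col in columns]
    sds = [statistics.pstdev(col) or 1.0 for col in columns]  # guard constant features
    return {"features": list(feature_names), "means": means, "sds": sds}

def apply_preprocessing(frozen, samples):
    """Standardize validation samples with DISCOVERY parameters.
    `samples` are dicts taxon -> value; absent taxa are filled with 0.0 (an assumption;
    choose a fill rule that matches your transformation)."""
    rows = []
    for s in samples:
        values = [s.get(f, 0.0) for f in frozen["features"]]
        rows.append([(v - m) / sd for v, m, sd in zip(values, frozen["means"], frozen["sds"])])
    return rows

def auc(scores, labels):
    """AUC-ROC: probability that a random positive outscores a random negative."""
    pos = [s for s, l in zip(scores, labels) if l == 1]
    neg = [s for s, l in zip(scores, labels) if l == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

frozen = freeze_preprocessing(["taxonA", "taxonB"], [[1.0, 10.0], [3.0, 10.0]])
validation = apply_preprocessing(frozen, [{"taxonA": 4.0, "taxonB": 10.0}, {"taxonA": 0.0}])
print(validation)  # [[2.0, 0.0], [-2.0, -10.0]]
```

The key design point is that `freeze_preprocessing` is called only on discovery data; recomputing means or the ASV list on the validation cohort would leak information and inflate the validation AUC.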

Table 1: Comparison of Common Feature Selection Methods for Microbiome Data

| Method | Model Type | Handles Sparsity? | Adjusts for Covariates? | Output | Software/Package |
|---|---|---|---|---|---|
| ANCOM-BC | Linear Model | Yes (Compositional) | Yes | Differentially abundant taxa | ANCOMBC (R) |
| DESeq2 | Negative Binomial GLM | Yes | Yes | Differentially abundant taxa | DESeq2 (R) |
| LASSO/glmnet | Regularized Regression | Moderate (pre-filter advised) | Yes | Sparse set of predictive taxa | glmnet (R) |
| LEfSe | Linear Discriminant Analysis | No (pre-filter advised) | No | Biomarker taxa with effect size | Galaxy, Huttenhower Lab |
| SELBAL | Balance Analysis | Yes (via log-ratios) | Limited | A single predictive balance (taxa groups) | selbal (R) |
| MaAsLin2 | General Linear Models | Yes (transformations) | Yes | Significantly associated taxa | MaAsLin2 (R) |

Table 2: Typical Dimensionality Reduction Pipeline & Data Loss

| Processing Step | Typical Input Dimension | Typical Output Dimension | Reduction Goal |
|---|---|---|---|
| Raw Sequence Reads | Millions of sequences | 10,000 - 50,000 ASVs | Denoising, chimera removal |
| Prevalence/Variance Filtering | 10,000 - 50,000 ASVs | 500 - 2,000 ASVs | Remove rare/noisy features |
| Agglomeration (e.g., to Genus) | 500 - 2,000 ASVs | 100 - 300 Genera | Reduce sparsity, biological summarization |
| Statistical Feature Selection | 100 - 300 Features | 5 - 20 Biomarker Features | Identify drivers of phenotype |

Experimental Protocol: LASSO Regression, with a Zero-Inflated Negative Binomial Variant for Count Responses

Objective: To identify a minimal set of microbial taxa predictive of a binary outcome (e.g., responder vs. non-responder) from high-dimensional ASV data.

Materials:

- A count matrix (ASVs x Samples).

- A metadata vector with binary outcome (0/1).

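- R software with the `glmnet` (version 4.0 or later, for family-object support) and `pscl` packages installed; `MASS` supplies the negative binomial family.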

Procedure:

- Pre-processing: Filter ASVs as in Q1. Consider agglomeration to genus level.

- Model Design Matrix: Create the model matrix `x` from the filtered, transformed (e.g., CLR) matrix, transposed to Samples x Features. Do not include an intercept column; `glmnet` fits the intercept itself. Create the response vector `y` from the metadata.

- Offset (Optional, count variant only): Calculate log library size (total reads per sample) as an offset to control for sequencing depth. An offset is meaningful only when a taxon's counts are the response; for the binary outcome, sequencing depth is handled by the transformation of `x`.

- Initial ZINB Fit (count variant): Fit a non-penalized ZINB model with `zeroinfl(..., dist = "negbin")` from the `pscl` package. This is computationally intensive but provides the dispersion parameter (theta).

- LASSO-Penalized Fit: For the binary outcome, fit `glmnet(x, y, family = "binomial", alpha = 1)`. For the count variant, note that `glmnet` has no built-in negative binomial family: pass a family object such as `family = MASS::negative.binomial(theta)`, using theta from the Initial ZINB Fit step and the offset from the Offset step. `glmnet` does not model the zero-inflation component itself.

- Tuning: Perform k-fold cross-validation (`cv.glmnet`) to determine the optimal lambda (λ) for prediction.

- Feature Extraction: Apply `coef(cv_model, s = "lambda.min")` to obtain coefficients. Non-zero coefficients are the selected features.
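The choice between `lambda.min` and `lambda.1se` in the Tuning and Feature Extraction steps can be illustrated with a small helper that mirrors the one-standard-error rule used by `cv.glmnet`. The function name and the cross-validation numbers are hypothetical.

```python
def choose_lambda(lambdas, cv_mean, cv_se):
    """Return (lambda_min, lambda_1se) from a cross-validation error curve.
    lambda_min: the lambda with the smallest mean CV error.
    lambda_1se: the largest lambda whose mean CV error is within one standard
    error of that minimum (a sparser, more conservative model)."""
    i_min = min(range(len(lambdas)), key=lambda i: cv_mean[i])
    cutoff = cv_mean[i_min] + cv_se[i_min]
    lambda_1se = max(lam for lam, err in zip(lambdas, cv_mean) if err <= cutoff)
    return lambdas[i_min], lambda_1se

# Hypothetical CV curve (larger lambda = stronger penalty = fewer features)
lambdas = [1.0, 0.5, 0.25, 0.125]
cv_mean = [0.90, 0.62, 0.60, 0.61]
cv_se = [0.05, 0.03, 0.03, 0.04]
print(choose_lambda(lambdas, cv_mean, cv_se))  # (0.25, 0.5)
```

Here `lambda.1se` trades a statistically indistinguishable increase in CV error for a stronger penalty and therefore fewer selected taxa, which often generalizes better in small cohorts.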

Visualizations

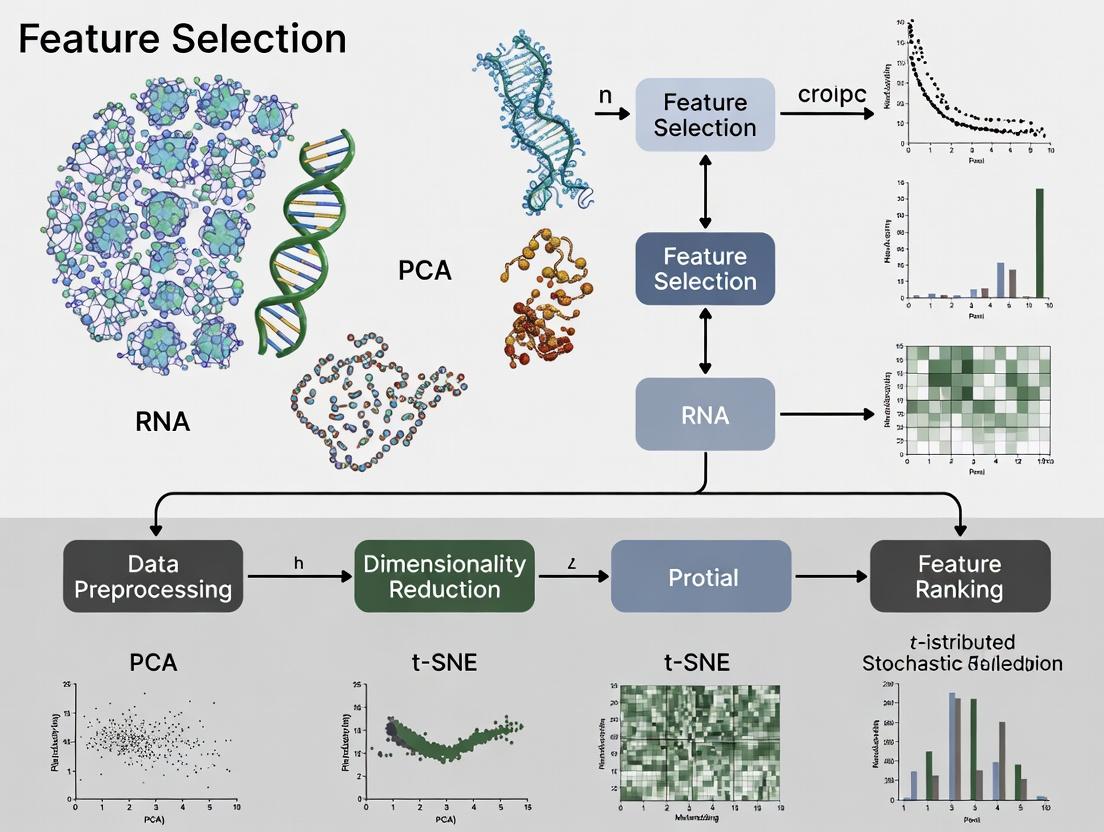

Title: Microbiome Data Analysis Workflow: From ASVs to Insights

Title: Feature Selection Decision Pathway for Microbiome Data

The Scientist's Toolkit: Research Reagent & Computational Solutions

| Item | Function in Analysis | Example/Tool |
|---|---|---|
| 16S rRNA Gene Primer Set | Amplifies hypervariable regions for sequencing. Choice affects taxonomic resolution. | 515F/806R (V4), 27F/1492R (full-length) |
| Bioinformatics Pipeline | Processes raw FASTQ files into an ASV table. Handles quality control, denoising, chimera removal. | DADA2, QIIME 2, mothur |
| Reference Database | For taxonomic assignment of ASV sequences. | SILVA, Greengenes, GTDB |
| Normalization Method | Corrects for uneven sequencing depth and compositionality before downstream analysis. | Centered Log-Ratio (CLR), Cumulative Sum Scaling (CSS), DESeq2's VST |
| Statistical Software Environment | Provides libraries for advanced statistical modeling and visualization. | R (with phyloseq, microbiome, glmnet packages), Python (scikit-bio, scikit-learn) |
| Functional Prediction Tool | Infers putative metabolic functions from 16S data, enabling pathway-based feature selection. | PICRUSt2, Tax4Fun2, HUMAnN (for shotgun data) |
| Long-Term Storage Solution | Archives raw sequences and processed data for reproducibility and sharing. | NCBI SRA, ENA, Qiita, Institutional Servers |

Understanding Data Sparsity, Compositionality, and Experimental Noise

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My feature selection method identifies a set of microbial taxa, but the results are unstable across repeated runs on the same dataset. What could be the cause and how can I resolve this?

A: This is a classic symptom of high-dimensional data sparsity interacting with algorithmic randomness. In microbiome data, many taxa have counts of zero in most samples. When you subsample data or if the algorithm uses random initialization (like in some wrapper methods), these zero-inflated features can cause significant variance in selected features.

- Solution: Employ stability selection. Run your feature selection method (e.g., LASSO) multiple times (e.g., 100x) on bootstrapped subsets of your data. Only retain features that are selected in a high percentage (e.g., >80%) of runs. This discards features whose selection reflects chance correlations with the outcome in particular resamples rather than a reproducible signal.

Q2: After performing a differential abundance analysis between two groups, I get a list of significant taxa. However, when I create a compositional bar plot, their relative abundances look minuscule. Are these findings biologically relevant?

A: This issue directly relates to compositionality. Microbiome data is relative (e.g., from 16S rRNA sequencing), not absolute. A taxon can be statistically "differentially abundant" because its proportion has changed relative to others, even if its absolute count is low. For example, a large decrease in the absolute abundance of a dominant taxon raises the proportions of all remaining taxa, so many minor taxa can appear significantly increased even though their absolute numbers are unchanged.

- Solution: First, apply a centered log-ratio (CLR) transformation prior to analysis to mitigate compositionality effects. Second, always supplement relative abundance data with qPCR data for absolute abundance of key taxa or the total bacterial load, if possible, to contextualize your findings.
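A toy calculation makes the compositional artifact, and the qPCR correction, concrete. The absolute abundances are hypothetical, and converting proportions back with a total load assumes that qPCR 16S copies scale with cell numbers, which ignores differences in 16S copy number between taxa.

```python
def relative(abundances):
    """Close a vector of absolute abundances to proportions."""
    total = sum(abundances)
    return [a / total for a in abundances]

def to_absolute(proportions, total_load):
    """Rescale proportions by a total bacterial load (e.g., qPCR 16S copies)."""
    return [p * total_load for p in proportions]

# Hypothetical absolute abundances: one dominant taxon and two minor taxa
before = [900, 50, 50]
after = [400, 50, 50]  # only the dominant taxon declines

rel_before, rel_after = relative(before), relative(after)
print(rel_before[1], rel_after[1])  # 0.05 0.1: minor taxa double in relative terms
print(to_absolute(rel_after, sum(after)))  # [400.0, 50.0, 50.0]: unchanged in absolute terms
```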

Q3: My negative control samples in 16S sequencing show non-negligible reads and sometimes even taxa that appear in my experimental samples. How should I handle this experimental noise?

A: This is expected experimental noise from lab reagents, kit contaminants, and index hopping.

- Solution: Implement a rigorous contamination removal pipeline.

- Identify Contaminants: Use the `decontam` package (R). Its prevalence method compares the presence of each feature in true samples versus negative controls; its frequency method instead uses sample DNA concentrations and flags features whose frequency is inversely related to concentration.

- Filter: Remove ASVs/OTUs identified as contaminants from the entire dataset.

- Threshold: Apply a minimum abundance threshold (e.g., features must have at least 10 reads in a sample to be considered present) to filter low-level noise that passed through.

Q4: When using penalized regression (like LASSO) for feature selection, my model performance is good on the training set but poor on the validation set. What steps should I take?

A: This indicates overfitting, often exacerbated by data sparsity and high dimensionality (p >> n).

- Solution:

- Nested Cross-Validation: Use an outer CV loop for performance estimation and an inner CV loop strictly for tuning the regularization parameter (lambda). This prevents data leakage.

- Aggregate Features: Before modeling, consider aggregating rare OTUs at a higher taxonomic level (e.g., Genus) to reduce sparsity.

- Regularization Path: Check the regularization path. If the path is extremely unstable with many features entering/leaving at similar lambdas, it's a sign of high noise. Increase the penalty or use the elastic net (mix of LASSO and ridge) for more stable selection.

Key Experiment Protocols

Protocol 1: Stability Selection for Robust Feature Selection

- Input: CLR-transformed feature matrix (samples x taxa), outcome vector.

- Subsampling: Generate B (e.g., 100) bootstrap subsets by randomly sampling N samples with replacement.

- Feature Selection: On each subset, run a feature selection algorithm (e.g., LASSO with lambda selected via 5-fold CV on that subset). Record all selected features.

- Stability Calculation: For each original feature, compute its selection probability as (Number of subsets where selected) / B.

- Thresholding: Retain features with a selection probability at or above a pre-defined cutoff (e.g., π_thr = 0.8). The final stable feature set consists of all features meeting this cutoff; requiring selection in every iteration (a strict intersection) would be far more conservative than the π_thr rule.
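The protocol can be sketched as follows. The base selector here is a hypothetical stand-in (top-k features by absolute difference in group means) so the example stays self-contained; in practice you would plug in LASSO with λ tuned by CV. Bootstrap resampling matches the protocol above; the original stability-selection proposal instead subsamples half of the samples without replacement.

```python
import random

def stability_selection(X, y, selector, n_boot=100, pi_thr=0.8, seed=0):
    """Selection probability of each feature over bootstrap resamples.
    `selector(X_sub, y_sub)` returns the feature indices it selects on one resample."""
    rng = random.Random(seed)
    n, p = len(X), len(X[0])
    hits = [0] * p
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # n draws with replacement
        for j in set(selector([X[i] for i in idx], [y[i] for i in idx])):
            hits[j] += 1
    probs = [h / n_boot for h in hits]
    stable = [j for j, pr in enumerate(probs) if pr >= pi_thr]
    return probs, stable

def top_k_mean_difference(X, y, k=1):
    """Hypothetical stand-in selector: the k features with the largest absolute
    difference in group means."""
    pos = [x for x, label in zip(X, y) if label == 1]
    neg = [x for x, label in zip(X, y) if label == 0]
    if not pos or not neg:  # a resample may contain only one class
        return []
    diffs = []
    for j in range(len(X[0])):
        d = sum(r[j] for r in pos) / len(pos) - sum(r[j] for r in neg) / len(neg)
        diffs.append((abs(d), j))
    return [j for _, j in sorted(diffs, reverse=True)[:k]]

# Hypothetical toy data: feature 0 tracks the outcome, feature 1 is noise
y = [i % 2 for i in range(20)]
X = [[10.0 * y[i] + (i % 3) * 0.1, (i * 7 % 10) / 10] for i in range(20)]
probs, stable = stability_selection(X, y, top_k_mean_difference)
print(stable)  # [0]
```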

Protocol 2: Contaminant Identification with decontam (Prevalence Method)

- Prepare Data: Create an ASV/OTU table (samples x features) and a sample metadata table with a column indicating "SampleType" (e.g., "TrueSample", "NegativeControl").

- Calculate Prevalence: For each feature, calculate its prevalence (proportion of samples where present) separately in true samples and in negative controls.

- Statistical Test: Run `isContaminant(..., method = "prevalence", neg = ...)` from `decontam`. For each feature it scores, from the 2 x 2 presence/absence table, whether the feature is more prevalent in negative controls than in true samples.

- Identify Contaminants: Features whose score falls below the classification threshold (default 0.1; 0.5 is a more aggressive choice for the prevalence method) are classified as contaminants.

- Filter: Remove all contaminant features from the OTU table before downstream analysis.
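The idea behind the prevalence method can be illustrated with a one-sided Fisher's exact test on the 2 x 2 presence/absence table. This is a simplified stand-in, not decontam's exact scoring; the function names and counts are hypothetical, and in practice you should call `isContaminant()` directly.

```python
from math import comb

def prevalence_p_value(ctrl_present, ctrl_absent, true_present, true_absent):
    """One-sided Fisher's exact p-value for 'the feature is more prevalent in
    negative controls than in true samples' (hypergeometric upper tail)."""
    n_ctrl = ctrl_present + ctrl_absent
    n_total = n_ctrl + true_present + true_absent
    k_present = ctrl_present + true_present
    denom = comb(n_total, n_ctrl)
    upper = min(n_ctrl, k_present)
    return sum(
        comb(k_present, x) * comb(n_total - k_present, n_ctrl - x)
        for x in range(ctrl_present, upper + 1)
    ) / denom

def flag_contaminant(p_value, threshold=0.1):
    """Flag features whose score falls below the threshold."""
    return p_value < threshold

# Feature in all 5 controls but only 2 of 20 true samples: likely contaminant
p_contam = prevalence_p_value(5, 0, 2, 18)
# Feature in every control and every true sample: no evidence of contamination
p_ubiquitous = prevalence_p_value(5, 0, 20, 0)
print(flag_contaminant(p_contam), flag_contaminant(p_ubiquitous))  # True False
```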

Table 1: Impact of Data Processing on Feature Sparsity in a Simulated Microbiome Dataset (n=100 samples, p=500 taxa)

| Processing Step | Average Sparsity (Zero % per Sample) | Number of Non-Zero Features (Mean ± SD) |
|---|---|---|
| Raw Count Data | 85.2% | 74.4 ± 12.1 |
| After Rarefaction (Depth=10,000) | 84.1% | 79.1 ± 10.8 |
| After CLR Transformation* | 0% (by definition) | 500 |
| After Stability Selection (π_thr=0.8) | N/A | 22 ± 3 |

*Note: CLR uses a pseudo-count for zero replacement, eliminating sparsity in the transformed space but not adding information.

Table 2: Common Sources of Experimental Noise in 16S rRNA Sequencing

| Noise Source | Description | Typical Mitigation Strategy |
|---|---|---|
| Kit/Reagent Contamination | Bacterial DNA present in extraction kits and PCR reagents. | Use multiple negative controls; apply decontam. |
| Index Hopping (Multiplexing) | Misassignment of reads between samples during pooled sequencing. | Use dual-unique indexing; bioinformatic filters. |
| PCR Amplification Bias | Differential amplification of 16S regions based on primer specificity and GC content. | Use validated primer sets; limit PCR cycles. |
| Chimeric Sequences | Artifactual reads formed during PCR from two parent sequences. | Use chimera detection tools (e.g., DADA2, UCHIME). |

Visualizations

Title: Microbiome Feature Selection Workflow

Title: Challenges Impacting Microbiome Feature Selection

The Scientist's Toolkit: Research Reagent Solutions

| Item/Reagent | Function in Microbiome Feature Selection Research |
|---|---|
| Mock Microbial Community Standards (e.g., ZymoBIOMICS) | Contains known, quantified strains. Used to assess technical noise, bioinformatic pipeline accuracy, and detection limits in your experimental setup. |
| DNA Extraction Kit with Bead Beating (e.g., Qiagen PowerSoil Pro) | Standardizes cell lysis across diverse microbial cell walls, critical for minimizing bias in the initial feature count matrix. |
| UltraPure PCR-Grade Water | Used as negative control during extraction and PCR to identify reagent-borne contaminant DNA, which is a major source of experimental noise. |
| Dual-Unique Indexed Adapters (Illumina) | Significantly reduces index hopping compared to single indexing, ensuring sample identity fidelity and reducing noise in sample-feature mapping. |
| SYBR Green or TaqMan Assay for 16S rRNA qPCR | Quantifies total bacterial load (16S gene copies) in samples. Essential for converting relative abundances from sequencing to absolute abundances, addressing compositionality. |
| Phusion High-Fidelity DNA Polymerase | Provides high-fidelity amplification during library prep, reducing PCR errors that can create artifactual "features" (chimeras, point mutations). |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: After applying PCA to my 16S rRNA amplicon sequence variant (ASV) table, the variance explained by the first two principal components is very low (<30%). What could be the cause and how can I improve it?

A: Low cumulative variance is common in sparse, high-dimensional microbiome data. First, ensure you have applied an appropriate transformation to address compositionality and heteroscedasticity. We recommend a centered log-ratio (CLR) transformation after replacing zeros using a method like Bayesian multiplicative replacement. If variance remains low, the biological signal may be distributed across many dimensions. Consider using alternative methods like Phylogenetic Isometric Log-Ratio (PhILR) transformation or a distance-based dimensionality reduction method (PCoA on a robust beta-diversity metric). Increasing sample size can also help capture broader variance.

Q2: My differential abundance analysis (e.g., DESeq2, LEfSe) yields a long list of putative biomarkers, but many are low-abundance, unannotated taxa. How can I prioritize robust, biologically interpretable biomarkers?

A: This is a frequent challenge. Implement a multi-stage filtering pipeline:

- Pre-filtering: Remove taxa present in <10% of samples or with near-zero variance.

- Effect Size & Prevalence: Prioritize biomarkers with large effect size (e.g., log2 fold change >2) and high prevalence in the case/control group (>70%).

- Consistency: Use bootstrap resampling or leave-multiple-out cross-validation to assess biomarker stability. Retain only features selected in >80% of iterations.

- External Validation: Check candidate biomarkers against public repositories (e.g., GMrepo, Qiita) for consistency in similar disease states.

- Functional Correlation: If possible, correlate taxa with metagenomic functional pathways (e.g., via PICRUSt2 or HUMAnN3) to assess mechanistic plausibility.

Q3: My supervised model (Random Forest, SVM) for disease classification is severely overfitting despite using feature selection. How do I remove noise and improve generalization?

A: Overfitting indicates the selected features contain experiment-specific noise. Mitigation strategies include:

- Aggregation: Aggregate ASVs at a higher taxonomic level (e.g., Genus) or into co-abundance groups (CAGs) to reduce dimensionality and noise.

- More Stringent CV: Use nested cross-validation, where the feature selection is performed within each training fold of the outer CV loop. This prevents data leakage.

- Consensus Features: Apply multiple, fundamentally different feature selection methods (e.g., LASSO, stability selection, and RF feature importance). Use only the intersecting set of features as your final biomarker panel.

- Batch Correction: Apply ComBat or similar methods if technical batch effects are present, as they are a major source of non-biological noise.

Experimental Protocol: A Robust Pipeline for Biomarker Discovery

Title: Integrated Protocol for Dimensionality Reduction and Biomarker Identification from 16S rRNA Data.

Objective: To process raw microbiome data into a robust, shortlist of candidate biomarkers for validation.

Input: Raw ASV/OTU table (counts), taxonomic assignments, and sample metadata.

Step-by-Step Methodology:

Preprocessing & Noise Filtering:

- Remove ASVs with a total count < 10 across all samples.

- Remove samples with a total read depth below 50% of the median depth.

- Zero Imputation: Apply Bayesian-multiplicative replacement (e.g., `cmultRepl()` from the `zCompositions` R package) to replace the zero counts; the non-zero values are rescaled so that their ratios are preserved.
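To show what multiplicative replacement does, the sketch below implements the simple (non-Bayesian) variant: zeros become a small value δ and the non-zero proportions are scaled by (1 − number of zeros × δ). `cmultRepl()` estimates the replacement values in a Bayesian way instead, so its numbers will differ; δ here is an arbitrary, hypothetical choice.

```python
def multiplicative_replacement(counts, delta):
    """Simple multiplicative zero replacement for one sample.
    Zeros -> delta; non-zero proportions are scaled by (1 - n_zeros * delta), so the
    result still sums to 1 and ratios between non-zero parts are unchanged."""
    total = sum(counts)
    proportions = [c / total for c in counts]
    n_zeros = sum(1 for v in proportions if v == 0)
    if n_zeros * delta >= 1:
        raise ValueError("delta is too large for the number of zeros")
    return [delta if v == 0 else v * (1 - n_zeros * delta) for v in proportions]

replaced = multiplicative_replacement([50, 0, 30, 20], delta=0.01)
print(replaced)
```

Preserving the ratios among non-zero parts matters because every downstream log-ratio (CLR, balances) is built from those ratios.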

Dimensionality Reduction & Transformation:

- Apply Centered Log-Ratio (CLR) transformation to the imputed count matrix.

- Perform Principal Component Analysis (PCA) on the CLR-transformed matrix.

- Output: Determine the number of PCs to retain (e.g., >70% variance or elbow in scree plot).

Supervised Feature Selection for Biomarker Identification:

- Using the original filtered count data (not CLR), perform differential abundance testing with a method appropriate for over-dispersed counts (e.g., `DESeq2`).

- In parallel, train a Random Forest classifier using the CLR-transformed data with recursive feature elimination (RFE).

- Perform Spearman correlation analysis between each microbial feature and the clinical outcome of interest.

- Consensus: Take the intersection of features meeting all of the following:

- DESeq2: Adjusted p-value < 0.05 & |log2FoldChange| > 1.

- Random Forest RFE: In top 20 most important features.

- Correlation: |rho| > 0.4 & p-value < 0.01.
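The consensus rule is a set intersection. The sketch below applies the three thresholds listed above to results that have already been computed; the input layouts (dictionaries keyed by feature name, an importance-ordered list for the Random Forest) and all example values are hypothetical.

```python
def consensus_features(deseq, rf_ranking, spearman,
                       padj_max=0.05, lfc_min=1.0, top_n=20, rho_min=0.4, p_max=0.01):
    """deseq: {feature: (padj, log2FoldChange)}; rf_ranking: features ordered from most
    to least important; spearman: {feature: (rho, p_value)}."""
    deseq_hits = {f for f, (padj, lfc) in deseq.items() if padj < padj_max and abs(lfc) > lfc_min}
    rf_hits = set(rf_ranking[:top_n])
    corr_hits = {f for f, (rho, p) in spearman.items() if abs(rho) > rho_min and p < p_max}
    return sorted(deseq_hits & rf_hits & corr_hits)

# Hypothetical results from the three analyses
deseq = {"Bacteroides": (0.001, -1.8), "Prevotella": (0.02, 0.6), "Akkermansia": (0.004, 2.1)}
rf_ranking = ["Akkermansia", "Prevotella", "Bacteroides"]
spearman = {"Bacteroides": (-0.55, 0.002), "Prevotella": (0.45, 0.004), "Akkermansia": (0.30, 0.03)}
print(consensus_features(deseq, rf_ranking, spearman))  # ['Bacteroides']
```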

Validation & Stability Assessment:

- Perform 100-iteration bootstrap on the consensus feature set. The final biomarker panel is defined as features selected in >85% of bootstrap samples.

Visualizations

Diagram 1: Biomarker Discovery Pipeline Workflow

Diagram 2: Nested Cross-Validation to Prevent Overfitting

Research Reagent Solutions

| Item | Function & Application in Microbiome Research |
|---|---|
| DNeasy PowerSoil Pro Kit (Qiagen) | Gold-standard for microbial genomic DNA extraction from complex, difficult samples. Maximizes yield and minimizes inhibitor co-purification for downstream sequencing. |
| ZymoBIOMICS Microbial Community Standard | Defined mock community of bacteria and fungi. Serves as a positive control and spike-in for evaluating sequencing accuracy, bioinformatic pipeline performance, and batch effects. |
| PhiX Sequencing Control v3 (Illumina) | Added to every Illumina MiSeq/HiSeq run (1-5%). Monitors cluster generation, sequencing accuracy, and phasing/pre-phasing, crucial for quantifying technical noise in sequence data. |
| PNA PCR Clamp Mix | Peptide Nucleic Acid (PNA) clamps designed to block amplification of host mitochondrial and plastid (chloroplast) 16S rRNA genes, e.g., in plant-associated samples, enriching for microbial sequences. |
| MagAttract PowerMicrobiome DNA/RNA Kit (Qiagen) | For simultaneous co-isolation of microbial DNA and RNA from the same sample. Enables integrated analysis of taxonomic composition (DNA) and potentially active community (RNA). |

The Curse of Dimensionality and the Risk of Overfitting in Microbiome Studies

Technical Support Center: Troubleshooting & FAQs

This support center addresses common computational and statistical challenges in high-dimensional microbiome analysis, framed within a thesis on feature selection.

Frequently Asked Questions (FAQs)

Q1: My model performs perfectly on my training data but fails on new samples. What is happening? A: This is a classic sign of overfitting. With thousands of Operational Taxonomic Units (OTUs) or Amplicon Sequence Variants (ASVs) (features, p) and far fewer samples (observations, n), models learn noise. Implement robust feature selection before model building and always use held-out validation sets.

Q2: Which dimensionality reduction technique is best for microbiome count data? A: No single best method exists. Use a combination:

- Filter Methods: Apply prevalence and variance filters first to remove low-information features.

- Transformation: Use a variance-stabilizing transformation (e.g., DESeq2's) to handle heteroscedasticity, or a log-ratio transformation such as CLR to handle compositionality. ANCOM-BC instead corrects compositional bias inside its own differential abundance model.

- Supervised Selection: Follow with methods like LEfSe or MaAsLin2. Both apply statistical tests, but only MaAsLin2 accounts for covariates.

Q3: How do I choose the right sample size to avoid the curse of dimensionality?

A: Power is feature-dependent. Use pilot data and tools like HMP or pwr in R for power analysis. As a rule of thumb, the number of samples should significantly exceed the number of features after aggressive filtering.
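For a first-pass power calculation, the normal-approximation sample size for comparing one feature's mean (e.g., a CLR abundance) between two equal-sized groups is n per group = 2(z_{1-α/2} + z_{1-β})² σ² / Δ². The sketch below adds a Bonferroni-adjusted α to show how testing many features inflates the requirement. It is an approximation that ignores the t-distribution correction and microbiome-specific overdispersion; Δ and σ are values you would estimate from pilot data, and the function name is illustrative.

```python
import math
from statistics import NormalDist

def n_per_group(delta, sd, alpha=0.05, power=0.80, n_tests=1):
    """Normal-approximation sample size per group for a two-sided, two-sample
    comparison of means; alpha is Bonferroni-adjusted across n_tests features."""
    alpha_adj = alpha / n_tests
    z_alpha = NormalDist().inv_cdf(1 - alpha_adj / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_beta) ** 2 * sd ** 2 / delta ** 2)

print(n_per_group(delta=1.0, sd=1.0))               # 16
print(n_per_group(delta=1.0, sd=1.0, n_tests=200))  # larger: alpha is split 200 ways
```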

Q4: My differential abundance results are inconsistent between tools. How should I proceed? A: Inconsistency is expected due to different underlying statistical assumptions. Adopt a consensus approach: trust features identified by multiple, methodologically distinct tools (e.g., ANCOM-II, DESeq2, and a zero-inflated model like ZINB-WaVE).

Q5: How can I validate my selected microbial biomarkers? A: Internal validation is not enough. Best practices include:

- Hold-Out/Cross-Validation: Use nested CV to avoid data leakage.

- External Validation: Test on a completely independent cohort.

- Biological Validation: Use qPCR or metagenomic sequencing on key taxa.

Troubleshooting Guides

Issue: Model Instability in Cross-Validation Symptoms: Wildly fluctuating accuracy metrics across CV folds. Diagnosis: High feature correlation or redundant features causing the model to pick different, equally predictive but unstable sets each time. Solution: Apply a two-step selection: 1) Use CLR or another compositional transform. 2) Apply a sparsity-inducing method that performs embedded feature selection, preferably Elastic Net: pure LASSO tends to pick one feature from a correlated group arbitrarily, whereas Elastic Net's ridge component keeps correlated features together and stabilizes selection.

Issue: Excessive False Positives in Differential Abundance Symptoms: Too many statistically significant taxa, many of which are likely artifacts. Diagnosis: Failure to correct for multiple hypothesis testing across thousands of features. Solution: At minimum, control the false discovery rate across all tested features (e.g., Benjamini-Hochberg). Beyond simple FDR control, use methods that incorporate feature interdependencies or compositional data principles. See Table 1.

Issue: Poor Generalization to a New Dataset Symptoms: A diagnostic model built on Cohort A fails on Cohort B. Diagnosis: Batch effects, different sequencing protocols, or population-specific confounders dominate the signal. Solution: 1) Use batch correction (e.g., ComBat-seq). 2) Focus feature selection on robust, high-abundance, and prevalent taxa. 3) Apply meta-analysis frameworks to combine studies.

Table 1: Comparison of Feature Selection & Differential Abundance Methods

| Method | Core Approach | Controls Compositionality? | Handles Zeros? | FDR Control | Best For |
|---|---|---|---|---|---|
| ANCOM-II | Log-ratio analysis, uses F-statistic. | Yes (central to method). | Moderate (uses prevalence). | Conservative | Avoiding false positives; large effect sizes. |
| DESeq2 (phyloseq) | Negative binomial model, Wald test. | No (requires careful normalization). | Good (inherent model). | Standard (BH) | High sensitivity; paired designs. |
| LEfSe | Kruskal-Wallis & LDA. | No. | Poor. | No inherent control | Exploratory analysis; class comparison. |
| MaAsLin2 | Generalized linear models. | Yes (optional CLR). | Good (multiple model types). | Standard (BH) | Complex, mixed-effect models with covariates. |
| LASSO Regression | L1-penalized regression. | No (pre-transform needed). | Good. | Via CV | Predictive model building; high-dimensional data. |

Table 2: Impact of Pre-Filtering on Dimensionality (Example from a 16S Study)

| Filtering Step | Initial Features | Remaining Features | % Reduction | Recommended Threshold |
|---|---|---|---|---|
| No Filter | 15,000 ASVs | 15,000 | 0% | N/A |
| Prevalence (5%) | 15,000 | ~4,500 | 70% | Present in >5% of samples. |
| Prevalence + Abundance | ~4,500 | ~1,200 | 92% (cumulative) | Mean relative abundance >0.01%. |
| After De-novo Clustering (OTUs) | 15,000 ASVs | ~800 OTUs | 95% | 97% similarity threshold. |

Experimental Protocols

Protocol 1: Robust Differential Abundance Analysis Workflow

- Data Preprocessing & Filtering:

- Input: ASV/OTU table, taxonomy, metadata.

- Remove features with total count < 10 across all samples.

- Remove features present in < 10% of samples in the smallest group of interest.

- Rarefy to an even sequencing depth only if necessary for beta-diversity, NOT for differential analysis.

- Normalization/Transformation:

- For methods like DESeq2, use its internal median-of-ratios size factor estimation.

- For other models, apply a centered log-ratio (CLR) transformation using a pseudo-count or the `compositions` R package.

- Feature Selection/Analysis:

- Option A (Compositional): Run ANCOM-II using the `ANCOMBC` package with default parameters.

- Option B (Count-based): Run DESeq2, specifying the experimental design formula (e.g., `~ batch + condition`).

- Option C (Flexible): Run MaAsLin2 with a CLR transform, specifying fixed and random effects.

- Validation:

- Split data into 70% training and 30% validation before any analysis, i.e., before the filtering, normalization, and feature-selection steps above, and perform those steps on the training data only.

- Apply the trained model/selected features to the held-out validation set to assess performance.

Protocol 2: Nested Cross-Validation for Predictive Modeling

- Define the outer k-fold (e.g., 5-fold) split. Each fold has a training and test set.

- For each outer training set:

- Define an inner cross-validation loop (e.g., 5-fold) for hyperparameter tuning (e.g., lambda for LASSO).

- Perform full preprocessing and feature selection using only the inner training splits.

- Train the model with the selected features and tuned hyperparameters on the entire outer training set.

- Evaluate the final model on the outer test set.

- Repeat and aggregate performance across all outer test folds. The features selected in each outer loop can be aggregated to form a final, stable biomarker list.
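The nested loop structure can be sketched generically. The `fit`/`score` callables, fold assignment, and toy threshold model below are illustrative assumptions; the essential property is that all preprocessing and feature selection happen inside `fit`, so each call only sees the rows it is given and nothing leaks from the outer test fold.

```python
import random

def k_folds(indices, k, rng):
    """Shuffle `indices` and deal them into k folds."""
    shuffled = list(indices)
    rng.shuffle(shuffled)
    return [shuffled[i::k] for i in range(k)]

def nested_cv(X, y, fit, score, grid, k_outer=5, k_inner=5, seed=0):
    """fit(X, y, param) -> model; score(model, X, y) -> higher is better."""
    rng = random.Random(seed)
    outer_scores, chosen = [], []
    for test_idx in k_folds(range(len(X)), k_outer, rng):
        test = set(test_idx)
        train_idx = [i for i in range(len(X)) if i not in test]
        best_param, best_score = None, float("-inf")
        inner_folds = k_folds(train_idx, k_inner, rng)  # tuning sees outer-training rows only
        for param in grid:
            fold_scores = []
            for val_idx in inner_folds:
                val = set(val_idx)
                fit_idx = [i for i in train_idx if i not in val]
                model = fit([X[i] for i in fit_idx], [y[i] for i in fit_idx], param)
                fold_scores.append(score(model, [X[i] for i in val_idx], [y[i] for i in val_idx]))
            mean_score = sum(fold_scores) / len(fold_scores)
            if mean_score > best_score:
                best_param, best_score = param, mean_score
        # Refit on the full outer-training set, then evaluate once on the untouched test fold
        final = fit([X[i] for i in train_idx], [y[i] for i in train_idx], best_param)
        outer_scores.append(score(final, [X[i] for i in test_idx], [y[i] for i in test_idx]))
        chosen.append(best_param)
    return outer_scores, chosen

# Hypothetical toy problem: one feature, outcome is 1 when the feature exceeds 9.5
X = [[float(i)] for i in range(20)]
y = [1 if i >= 10 else 0 for i in range(20)]

def fit_threshold(X_tr, y_tr, threshold):
    return threshold  # trivially "trained" rule; a real fit would use X_tr and y_tr

def accuracy(threshold, X_te, y_te):
    return sum((x[0] > threshold) == bool(t) for x, t in zip(X_te, y_te)) / len(y_te)

scores, params = nested_cv(X, y, fit_threshold, accuracy, grid=[0.5, 9.5, 15.5])
print(scores, params)  # [1.0, 1.0, 1.0, 1.0, 1.0] [9.5, 9.5, 9.5, 9.5, 9.5]
```

The list of per-fold `params` (or, with a real selector, per-fold feature sets) is what the final step aggregates into a stable biomarker list.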

Diagram: High-Dimensional Microbiome Analysis Workflow

Diagram Title: Microbiome Analysis Workflow with Overfitting Risk

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Microbiome Feature Selection |
|---|---|
| QIIME 2 / DADA2 | Pipeline for processing raw sequencing reads into an ASV table. Provides initial feature definition. |
| phyloseq (R) | Data structure and suite for handling OTU table, taxonomy, and metadata. Essential for preprocessing and filtering. |
| DESeq2 / edgeR | Packages designed for RNA-seq, adapted for microbiome. Use negative binomial models for count-based differential abundance testing. |
| ANCOM-BC / ANCOM-II | Specialized R packages for differential abundance analysis that directly account for compositional nature of data. |
| LEfSe | Tool for discovering high-dimensional biomarkers that characterize differences between biological conditions. |
| MaAsLin2 | Flexible R package for finding associations between clinical metadata and microbial multi-omics features. |
| glmnet | R package for fitting LASSO and Elastic Net models, performing embedded feature selection for prediction. |
| caret / mlr3 | R frameworks for streamlining machine learning workflows, including cross-validation and tuning. |
| Simulated Datasets | (e.g., via SPsimSeq) Critical for benchmarking and testing feature selection methods under known truth. |

Technical Support Center

Troubleshooting Guides

Issue 1: Inconsistent Taxonomic Assignment Between OTU and ASV Pipelines

- Symptoms: Results from the same dataset show different taxonomic profiles when using an OTU-clustering (e.g., 97% similarity) workflow versus an ASV (DADA2, deblur, UNOISE) workflow. This impacts downstream feature selection.

- Diagnosis: This is expected given the fundamental methodological differences. OTU workflows cluster reads into bins at a fixed similarity threshold (e.g., 97%), while ASV workflows resolve single-nucleotide differences.

- Resolution:

- Re-evaluate Research Question: For strain-level analysis, prioritize ASVs. For broader ecological patterns, OTUs may still be valid.

- Standardize Pipeline: Use one method consistently for all comparative analyses within your thesis. For feature selection, note that ASVs increase feature dimensionality.

- Benchmark: Process a mock community (with known composition) through both pipelines to understand error profiles.

Issue 2: Alpha Diversity Metrics Yield Counterintuitive Results

- Symptoms: Richness or Shannon Index values decrease after a treatment expected to increase diversity, or metrics are highly correlated, making interpretation difficult.

- Diagnosis: Different alpha diversity metrics capture different aspects of "diversity." Some are sensitive to rare features, others to abundant ones. Confounding factors like sequencing depth can skew results.

- Resolution:

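- Rarefy or Use Metrics Robust to Depth: Apply rarefaction to an even depth across all samples for alpha diversity calculation only. Alternatively, use richness estimators such as Chao1 or ACE, which estimate total richness by accounting for unobserved taxa. Note that both rely on singleton counts, which DADA2 does not output by default.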

- Select Metrics A Priori: Choose metrics aligned with your hypothesis. For feature selection, understand that filtering by prevalence/abundance prior to diversity calculation alters results.

- Visualize: Always plot rarefaction curves to confirm sufficient sequencing depth was achieved.
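Rarefaction and a rarefaction curve can be sketched with NumPy's multivariate hypergeometric sampler, which draws reads without replacement. This assumes an integer count matrix with samples in rows. The function names and the fixed seed are illustrative choices, and dedicated tools (e.g., `phyloseq::rarefy_even_depth` in R) remain the usual route.

```python
import numpy as np


def rarefy(counts, depth, seed=0):
    """Subsample every sample to `depth` reads without replacement.

    Samples with fewer than `depth` reads are dropped; `keep` flags survivors.
    """
    rng = np.random.default_rng(seed)
    counts = np.asarray(counts, dtype=np.int64)
    keep = counts.sum(axis=1) >= depth
    rarefied = np.array(
        [rng.multivariate_hypergeometric(row, depth) for row in counts[keep]]
    )
    return rarefied, keep


def rarefaction_curve(row, depths, seed=0):
    """Observed features after subsampling one sample to each depth."""
    rng = np.random.default_rng(seed)
    row = np.asarray(row, dtype=np.int64)
    return [
        int(np.count_nonzero(rng.multivariate_hypergeometric(row, d)))
        for d in depths
    ]
```

Plotting `rarefaction_curve` for each sample over a grid of depths shows whether observed features plateau, which is the visual check recommended above.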

Issue 3: Differential Abundance Analysis Suffers from False Positives or Low Power

- Symptoms: Many identified features have negligible log-fold changes, or known differences are not detected. This is a critical problem for high-dimensional feature selection.

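- Diagnosis: Generic tests (e.g., t-test, Wilcoxon) applied directly to relative abundances ignore compositionality, since an increase in one taxon necessarily lowers the proportions of all others. The t-test additionally assumes normality, which sparse, over-dispersed counts rarely satisfy.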

- Resolution:

- Use Compositional-Aware Tools: Employ methods designed for microbiome data (e.g., ANCOM-BC, DESeq2 with appropriate modifications, ALDEx2, MaAsLin2).

- Account for Confounders: Include relevant metadata (e.g., age, batch) as covariates in the model.

- Apply Multiple Testing Correction: Use Benjamini-Hochberg (FDR) correction. For feature selection, consider a two-stage approach: filter with a less stringent method before applying a robust DA test.
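The Benjamini-Hochberg adjustment is short enough to sketch directly in NumPy, which makes the procedure explicit. In practice, `p.adjust(method = "BH")` in R or `statsmodels.stats.multitest.fdrcorrection` in Python are the usual choices; this sketch is intended to mirror them.

```python
import numpy as np


def bh_adjust(pvals):
    """Benjamini-Hochberg adjusted p-values (q-values)."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    # Scale the i-th smallest p-value by m / i
    ranked = p[order] * m / np.arange(1, m + 1)
    # Enforce monotonicity: q-values never decrease with rank
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]
    q = np.empty(m)
    q[order] = np.minimum(ranked, 1.0)
    return q
```

Features with `bh_adjust(p) < 0.05` are the ones retained at a 5% FDR.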

FAQs

Q1: For feature selection in my thesis, should I use OTUs or ASVs as my input features? A: ASVs are generally preferred for feature selection in modern research due to their higher resolution and reproducibility. However, they result in a higher-dimensional feature space. You may need more aggressive filtering (based on prevalence and abundance) before applying statistical feature selection methods (like LASSO) to avoid overfitting.

Q2: How do I choose between alpha diversity metrics for my analysis? A: Select metrics based on your biological question. The following table can guide your choice:

| Metric | Type | Sensitivity | Best For |
|---|---|---|---|
| Observed Features | Richness | Counts all features equally | Simple count of total unique features (OTUs/ASVs). |
| Chao1 | Richness Estimator | Weights rare features | Estimating true richness, correcting for unobserved species. |
| Shannon Index | Diversity | Weights abundant features | Assessing community evenness and richness combined. |
| Simpson's Index | Diversity | Weights very abundant features | Emphasizing dominant species in the community. |
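The four metrics in the table can be computed from one sample's counts as follows. This is a sketch under stated conventions. Shannon uses the natural log (some tools default to base 2), Simpson is reported in the Gini-Simpson form 1 - Σp², and Chao1 switches to the bias-corrected formula when there are no doubletons. Check which convention your pipeline reports before comparing numbers.

```python
import numpy as np


def alpha_metrics(row):
    """Observed features, Chao1, Shannon (natural log) and Gini-Simpson for one sample."""
    x = np.asarray(row, dtype=float)
    x = x[x > 0]  # only observed features contribute
    p = x / x.sum()
    observed = x.size
    f1 = np.count_nonzero(x == 1)  # singletons
    f2 = np.count_nonzero(x == 2)  # doubletons
    if f2 > 0:
        chao1 = observed + f1**2 / (2 * f2)
    else:
        chao1 = observed + f1 * (f1 - 1) / 2  # bias-corrected form with f2 = 0
    shannon = float(-np.sum(p * np.log(p)))
    simpson = float(1.0 - np.sum(p**2))
    return {"observed": observed, "chao1": float(chao1),
            "shannon": shannon, "simpson": simpson}
```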

Q3: What is the key difference between beta diversity and differential abundance analysis? A: Beta diversity quantifies the overall compositional dissimilarity between samples or groups (e.g., using Bray-Curtis or weighted UniFrac distances). Differential abundance (DA) analysis identifies specific features (e.g., ASVs) whose relative abundances differ significantly between defined groups. In your thesis, beta diversity can guide group comparisons, while DA provides the specific candidate features for selection.

Q4: My differential abundance tool requires a feature table without zeros. How should I handle them? A: Do not simply replace zeros with an arbitrary small number. Use tools with built-in zero-handling mechanisms (e.g., ALDEx2 draws Monte Carlo instances from a Dirichlet distribution with a prior, and DESeq2's negative binomial model accommodates zeros directly, with `poscounts` size-factor estimation available for sparse tables), or apply compositional data analysis principles, such as a centered log-ratio (CLR) transformation after a principled zero replacement (e.g., Bayesian-multiplicative replacement).
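As a simpler stand-in for the Bayesian-multiplicative method (available in R as `zCompositions::cmultRepl`), the classic multiplicative replacement can be sketched in NumPy. Zeros are set to a small δ, and the non-zero parts are shrunk so each sample still sums to 1, which preserves the ratios among observed taxa. The default δ = 1/D² (D = number of features) is an assumption borrowed from common practice, not a tuned value.

```python
import numpy as np


def multiplicative_replacement(counts, delta=None):
    """Replace zeros in closed compositions while preserving non-zero ratios."""
    x = np.asarray(counts, dtype=float)
    comp = x / x.sum(axis=1, keepdims=True)  # close each sample to sum 1
    n_features = comp.shape[1]
    if delta is None:
        delta = 1.0 / n_features**2  # assumed default; tune for your data
    zeros = comp == 0
    n_zeros = zeros.sum(axis=1, keepdims=True)
    # Non-zero parts shrink by the total mass handed to the replaced zeros
    return np.where(zeros, delta, comp * (1.0 - n_zeros * delta))


def clr(comp):
    """Centered log-ratio of strictly positive compositions."""
    logx = np.log(comp)
    return logx - logx.mean(axis=1, keepdims=True)
```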

Experimental Protocols

Protocol 1: Generating an ASV Table using DADA2 (16S rRNA Data)

Purpose: To obtain a high-resolution, reproducible feature table for downstream analysis and feature selection. Workflow:

- Demultiplexed Reads: Start with forward and reverse FASTQ files.

- Quality Filtering & Trimming: Use `filterAndTrim()` to remove low-quality bases and reads.

- Learn Error Rates: Model sequencing error profiles with `learnErrors()`.

- Dereplication: Combine identical reads with `derepFastq()`.

- Sample Inference: Apply the core algorithm, `dada()`, to each sample to infer exact biological sequences.

- Merge Paired Reads: Merge forward and reverse reads with `mergePairs()`.

- Construct Sequence Table: Build an ASV table with `makeSequenceTable()`.

- Remove Chimeras: Identify and remove chimeric sequences with `removeBimeraDenovo()`.

- Taxonomic Assignment: Assign taxonomy using a reference database (e.g., SILVA) via `assignTaxonomy()`.

Protocol 2: Performing a Standard Beta Diversity Analysis

Purpose: To visualize and test for overall compositional differences between sample groups. Workflow:

- Normalization: Rarefy the feature table to an even sequencing depth, or apply a normalization or compositional transformation (e.g., CSS normalization or a CLR transformation).

- Calculate Distance Matrix: Compute a pairwise dissimilarity matrix (e.g., Bray-Curtis for composition, UniFrac for phylogeny).

- Ordination: Reduce dimensionality using PCoA (Principal Coordinates Analysis) or NMDS (Non-metric Multidimensional Scaling).

- Visualization: Plot ordination results, coloring points by sample metadata.

- Statistical Testing: Perform permutational multivariate analysis of variance (PERMANOVA) using `adonis2()` (R package vegan) to test whether group centroids differ significantly.
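The distance, ordination, and testing steps can be sketched in NumPy: Bray-Curtis dissimilarities, classical PCoA via double-centering and eigendecomposition, and a one-factor PERMANOVA pseudo-F with a label-permutation p-value. This is an illustrative reimplementation under simplifying assumptions (a single grouping factor, no strata, no negative-eigenvalue correction); `vegan::adonis2` remains the tool to report.

```python
import numpy as np


def bray_curtis(X):
    """Pairwise Bray-Curtis dissimilarity between rows of an abundance matrix."""
    X = np.asarray(X, dtype=float)
    diff = np.abs(X[:, None, :] - X[None, :, :]).sum(axis=2)
    total = (X[:, None, :] + X[None, :, :]).sum(axis=2)
    return diff / total


def pcoa(D, k=2):
    """Classical PCoA: double-center -D^2/2 and keep the top-k eigenvectors."""
    n = D.shape[0]
    J = np.eye(n) - np.ones((n, n)) / n
    B = -0.5 * J @ (D**2) @ J
    vals, vecs = np.linalg.eigh(B)
    top = np.argsort(vals)[::-1][:k]
    # Negative eigenvalues (non-Euclidean distances) are clipped to zero here
    return vecs[:, top] * np.sqrt(np.clip(vals[top], 0, None))


def permanova(D, groups, n_perm=999, seed=0):
    """One-factor PERMANOVA pseudo-F and permutation p-value."""
    D2 = np.asarray(D, dtype=float) ** 2
    groups = np.asarray(groups)
    n = groups.size
    labels = np.unique(groups)
    a = labels.size
    ss_total = D2[np.triu_indices(n, 1)].sum() / n

    def pseudo_f(g):
        ss_within = 0.0
        for lab in labels:
            idx = np.flatnonzero(g == lab)
            sub = D2[np.ix_(idx, idx)]
            ss_within += sub[np.triu_indices(idx.size, 1)].sum() / idx.size
        return ((ss_total - ss_within) / (a - 1)) / (ss_within / (n - a))

    f_obs = pseudo_f(groups)
    rng = np.random.default_rng(seed)
    f_perm = np.array([pseudo_f(rng.permutation(groups)) for _ in range(n_perm)])
    p_value = (np.sum(f_perm >= f_obs) + 1) / (n_perm + 1)
    return float(f_obs), float(p_value)
```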

Protocol 3: Differential Abundance Analysis with ANCOM-BC

Purpose: To identify features with significantly different abundances between conditions while accounting for compositionality. Workflow:

- Preprocessing: Filter features with very low prevalence (e.g., present in <10% of samples).

- Run ANCOM-BC: Use the `ancombc()` function with a formula specifying the condition of interest and relevant covariates.

- Interpret Output: Examine the `res` object for `W` (test statistics for the differential abundance (beta) coefficients, i.e., the estimated log-fold change divided by its standard error), `p_val` (raw p-values), `q_val` (FDR-adjusted p-values), and `diff_abn` (a logical vector indicating whether a feature is differentially abundant, based on `q_val < 0.05`).

- Results: The features flagged by `diff_abn` are candidates for further validation and inclusion in your feature selection thesis work.

Visualizations

Diagram Title: OTU vs ASV Pipeline Comparison

Diagram Title: Alpha and Beta Diversity Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Microbiome Feature Selection Research |
|---|---|
| Mock Microbial Community (e.g., ZymoBIOMICS) | A standardized mix of known microbial genomes. Used as a positive control to benchmark bioinformatics pipelines (OTU/ASV error rates), validate feature detection, and calibrate differential abundance tools. |
| DNA Extraction Kits with Bead Beating (e.g., MO BIO PowerSoil) | Ensures efficient lysis of diverse bacterial cell walls, which is critical for obtaining a representative genomic profile and minimizing bias in the initial feature pool. |
| PCR Primer Sets (e.g., 515F/806R for 16S V4) | Targets a specific, hypervariable region for amplification. Choice of primer directly influences which taxonomic features are detectable and thus available for subsequent selection. |
| Quantitative PCR (qPCR) Reagents | Used for absolute quantification of total bacterial load (16S gene copies). Essential for validating relative abundance shifts observed in differential abundance analysis. |
| Spike-in Synthetic DNA (e.g., SynDNA) | Known, non-biological sequences added to samples pre-extraction. Allows for normalization and correction of technical variation across samples, improving accuracy in differential abundance testing. |
| Bioinformatics Software (QIIME 2, mothur, R packages) | Platforms for executing pipelines from raw data to processed feature tables, diversity metrics, and statistical tests, forming the computational core of feature selection research. |

From Theory to Pipeline: A Review of Modern Feature Selection Techniques

Technical Support Center: Troubleshooting Guide & FAQs for Microbiome Feature Selection

This support center addresses common challenges researchers face when applying filter-based feature selection methods to high-dimensional microbiome datasets (e.g., 16S rRNA, metagenomic data) in drug development and biomarker discovery.

Frequently Asked Questions (FAQs)

Q1: My p-value distribution from univariate statistical tests is highly skewed, with an overwhelming number of significant features after multiple testing correction. Is this biologically plausible or an artifact? A: This is a common artifact in microbiome data due to its compositionality and sparsity. The apparent significance often stems from uneven library sizes and the presence of many rare taxa with zero-inflated counts. Before applying statistical tests, ensure proper normalization (e.g., CSS, TMM, or a centered log-ratio transformation) to mitigate compositionality effects. Consider using specialized tests like ANCOM-II or ALDEx2 that account for the data's structure, rather than standard t-tests or Wilcoxon tests.

Q2: When using correlation-based filters (e.g., to remove redundant OTUs/ASVs), my final feature set becomes unstable with small changes in the data. How can I improve robustness? A: Correlation estimates on sparse, compositional data are notoriously unstable. First, apply a prevalence filter (e.g., retain features present in >10% of samples) to remove ultra-rare taxa. Then, use a more robust correlation metric like Spearman's rank or a compositionally aware measure (SparCC or proportionality). Implement stability selection: run the correlation filter on multiple bootstrap subsamples and select features that are consistently chosen.

Q3: I used a variance threshold filter, but it removed taxa that are known to be key pathogens in my disease model. What went wrong? A: Variance thresholding can inadvertently remove low-abundance but biologically critical signatures, because low-abundance taxa tend to have low absolute variance. Microbiome data often contains low-abundance "keystone" species. Avoid using variance alone. Instead, use a method that considers both variance and association with the outcome. Combine a mild variance filter (to remove near-constant features) with a subsequent statistical test (like Kruskal-Wallis) that is sensitive to rank-based shifts between groups, even in low-abundance features.

Q4: How do I choose between different univariate statistical tests (parametric vs. non-parametric) for my case-control microbiome study? A: The choice depends on your data's distribution after normalization.

- Use parametric tests (t-test, ANOVA) only if the transformed abundance data passes normality checks (e.g., Shapiro-Wilk) and homogeneity of variance (Levene's test). This is rare for raw counts but may apply after certain transformations.

- Non-parametric tests (Mann-Whitney U, Kruskal-Wallis) are generally safer default choices as they do not assume normality. They are robust to outliers but can have somewhat lower power than parametric tests when the parametric assumptions actually hold.

- For paired/longitudinal designs, use paired tests (Wilcoxon signed-rank, Friedman test).

- Protocol: 1) Apply your chosen normalization. 2) Check normality and homoscedasticity assumptions on 5-10 randomly selected features. 3) If assumptions are broadly violated, default to a non-parametric test.
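The three-step protocol can be sketched with SciPy. The decision rule used here, switching to a non-parametric test when more than half of the checked features fail either Shapiro-Wilk or Levene's test, is an assumed interpretation of "broadly violated", not a standard threshold.

```python
import numpy as np
from scipy import stats


def choose_test(X, y, n_check=8, alpha=0.05, seed=0):
    """Check normality and equal variance on a random subset of normalized features."""
    X, y = np.asarray(X, dtype=float), np.asarray(y)
    rng = np.random.default_rng(seed)
    cols = rng.choice(X.shape[1], size=min(n_check, X.shape[1]), replace=False)
    violations = 0
    for j in cols:
        groups = [X[y == g, j] for g in np.unique(y)]
        normal = all(stats.shapiro(g).pvalue > alpha for g in groups)
        equal_var = stats.levene(*groups).pvalue > alpha
        violations += int(not (normal and equal_var))
    # Assumed rule: majority of checked features violate an assumption
    return "non-parametric" if violations > len(cols) / 2 else "parametric"
```

When the result is "non-parametric", `scipy.stats.mannwhitneyu` (two groups) or `scipy.stats.kruskal` (more than two) would then be applied to every feature.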

Quantitative Comparison of Common Filter Methods

Table 1: Comparison of Filter Methods for Microbiome Data

| Method | Typical Use Case | Key Assumptions | Speed | Sensitivity to Compositionality | Recommended for Microbiome? |
|---|---|---|---|---|---|
| Variance Threshold | Initial cleanup to remove near-constant features. | None. | Very High | Low | Yes, as a first-pass filter. |
| t-test / ANOVA | Comparing feature means between 2 or more groups. | Normality, equal variance. | High | Very High | Not recommended without careful normalization and checking. |
| Mann-Whitney U / Kruskal-Wallis | Non-parametric group comparison. | Independent, comparable samples. | High | Medium | Yes, a robust default choice for group comparisons. |
| Pearson Correlation | Assessing linear feature-feature redundancy or feature-outcome association. | Linear relationship, normality. | High | Very High | Not recommended for raw or normalized count data. |
| Spearman Correlation | Assessing monotonic relationships. | Monotonic relationship. | High | Medium | Yes, more robust than Pearson for microbiome data. |
| Chi-Square / Fisher's Exact | Testing independence of categorical features (e.g., presence/absence). | Sufficient count in contingency table. | Medium | Low | Useful for testing prevalence differences after binarizing data. |
| ANCOM-II | Differential abundance testing. | Log-ratio differences of a feature are constant across samples. | Low | Low | Yes, specifically designed for compositional data. |
| SparCC | Inference of feature-feature correlations. | Data is compositional, and correlations are sparse. | Low | Low | Yes, for estimating robust correlation networks. |

Experimental Protocols

Protocol 1: Executing a Robust Filter-Based Selection Pipeline for 16S rRNA Data Objective: To identify a stable, non-redundant set of microbial features associated with a clinical outcome.

- Input: OTU/ASV Table (counts), Metadata with outcome.

- Preprocessing: Apply a prevalence filter (retain taxa present in >20% of samples). Normalize using CSS normalization (via the `metagenomeSeq` package) or a centered log-ratio (CLR) transformation (add a pseudocount first).

- Univariate Screening: Apply the Kruskal-Wallis test (for >2 groups) or the Mann-Whitney U test (for 2 groups) to compare the normalized abundance of each feature across outcome groups. Correct for multiple testing using the Benjamini-Hochberg (FDR) procedure. Retain features with FDR < 0.05.

- Redundancy Reduction: On the retained features, compute a robust correlation matrix (SparCC or Spearman). Define a correlation threshold (e.g., |r| > 0.8). Within each highly correlated cluster, retain the feature with the lowest FDR-adjusted p-value from the Univariate Screening step.

- Output: A reduced, stable feature set for downstream modeling or interpretation.
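The preprocessing, screening, and redundancy steps can be sketched with NumPy and SciPy for the two-group case. Assumptions: CLR with a pseudocount of 1 stands in for CSS, labels are coded 0/1, and the cluster step is approximated greedily by keeping features in order of increasing q-value and dropping any whose |Spearman ρ| with an already-kept feature exceeds the threshold. This reproduces the "keep the lowest-FDR member" rule without building explicit clusters.

```python
import numpy as np
from scipy.stats import mannwhitneyu, rankdata


def bh(p):
    """Benjamini-Hochberg adjusted p-values."""
    p = np.asarray(p, dtype=float)
    order = np.argsort(p)
    ranked = p[order] * p.size / np.arange(1, p.size + 1)
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]
    q = np.empty_like(p)
    q[order] = np.minimum(ranked, 1.0)
    return q


def filter_pipeline(counts, y, min_prev=0.2, fdr=0.05, r_max=0.8):
    counts, y = np.asarray(counts, dtype=float), np.asarray(y)
    # Preprocessing: prevalence filter, then CLR with a pseudocount of 1
    prevalent = np.flatnonzero((counts > 0).mean(axis=0) > min_prev)
    logx = np.log(counts[:, prevalent] + 1.0)
    clr = logx - logx.mean(axis=1, keepdims=True)
    # Univariate screening: Mann-Whitney U per feature, BH correction
    pvals = [
        mannwhitneyu(clr[y == 0, j], clr[y == 1, j], alternative="two-sided").pvalue
        for j in range(clr.shape[1])
    ]
    q = bh(pvals)
    hits = np.flatnonzero(q < fdr)
    if hits.size == 0:
        return {"prevalent": prevalent, "selected": prevalent[hits], "q": q}
    hits = hits[np.argsort(q[hits])]  # most significant first
    # Redundancy reduction: greedy removal on |Spearman rho|
    rho = np.atleast_2d(np.corrcoef(rankdata(clr[:, hits], axis=0), rowvar=False))
    kept = []
    for i in range(hits.size):
        if all(abs(rho[i, k]) <= r_max for k in kept):
            kept.append(i)
    return {"prevalent": prevalent, "selected": prevalent[hits[kept]], "q": q}
```

The returned `selected` array holds original column indices, so it can be mapped straight back to taxon names.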

Protocol 2: Stability Selection for Correlation-Based Filtering Objective: To assess and improve the reliability of selected features.

- Bootstrap: Generate 100 bootstrap samples (random sampling with replacement) from your preprocessed data.

- Feature Selection on Each Sample: On each bootstrap sample, run your chosen correlation filter (e.g., remove one feature from each pair with Spearman |r| > 0.7).

- Calculate Selection Probability: For each original feature, compute the proportion of bootstrap samples in which it was retained.

- Final Selection: Retain only features with a selection probability above a defined threshold (e.g., >0.8). This yields features robust to data perturbations.
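The bootstrap procedure can be sketched with NumPy and SciPy. The rule for which member of a correlated pair to drop is not specified in the protocol; this sketch assumes features are pre-ordered by priority (e.g., mean abundance) and keeps the earlier column. Parameter defaults follow the protocol (B = 100, |ρ| > 0.7, selection probability > 0.8).

```python
import numpy as np
from scipy.stats import rankdata


def greedy_corr_filter(X, r_max=0.7):
    """Keep columns in order; drop any with |Spearman rho| > r_max to a kept column."""
    rho = np.corrcoef(rankdata(X, axis=0), rowvar=False)
    rho = np.nan_to_num(np.atleast_2d(rho))  # constant columns give NaN; treat as 0
    kept = []
    for j in range(X.shape[1]):
        if all(abs(rho[j, k]) <= r_max for k in kept):
            kept.append(j)
    return kept


def bootstrap_stability(X, B=100, r_max=0.7, threshold=0.8, seed=0):
    """Selection probability of each feature across B bootstrap resamples."""
    X = np.asarray(X, dtype=float)
    rng = np.random.default_rng(seed)
    n, p = X.shape
    hits = np.zeros(p)
    for _ in range(B):
        rows = rng.integers(0, n, size=n)  # sample rows with replacement
        hits[greedy_corr_filter(X[rows], r_max)] += 1
    prob = hits / B
    return prob, np.flatnonzero(prob > threshold)
```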

Visualizations: Workflows and Logical Relationships

Diagram 1: Robust Filter Method Workflow for Microbiome Data

Diagram 2: Decision Tree for Choosing a Filter Method

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Filter-Based Feature Selection on Microbiome Data

| Tool/Reagent | Function/Benefit | Example or Package (R/Python) |

|---|---|---|

| CSS Normalization | Mitigates library size differences without assuming most features are non-differential. Crucial pre-step for many statistical tests. | metagenomeSeq::cumNorm (R) |
| Centered Log-Ratio (CLR) Transform | Converts compositional data to Euclidean space, enabling use of standard methods while respecting compositionality. | compositions::clr (R), skbio.stats.composition.clr (Python) |
| SparCC Algorithm | Estimates correlation networks from compositional data more reliably than standard metrics. | SpiecEasi::sparcc (R), FastSpar (standalone C++ reimplementation) |
| ANCOM-II Package | Statistical framework specifically designed for differential abundance testing in compositional microbiota data. | ANCOMBC (R) |
| FDR Control Software | Corrects for false discoveries arising from multiple hypothesis testing across thousands of taxa. | stats::p.adjust (R, method='BH'), statsmodels.stats.multitest.fdrcorrection (Python) |
| Stability Selection Framework | Provides a resampling-based method to evaluate and improve feature selection stability. | c060::stabpath (R), custom bootstrap scripts. |
| High-Performance Computing (HPC) Cluster Access | Enables rapid iteration of filter methods and stability selection across large bootstrap samples. | Slurm, AWS Batch, Google Cloud. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During RFECV on microbiome OTU tables, the cross-validation score becomes highly unstable and fluctuates wildly between folds. What is the cause and solution? A: This is often due to high dimensionality and sparsity causing different feature subsets to be selected in each fold, leading to model variance.

- Solution: Increase the `min_features_to_select` parameter, or use a cross-validation split that respects the study design (e.g., stratified folds with batches kept together). Pre-filter features before applying RFECV (e.g., with a prevalence filter that removes OTUs present in <10% of samples). Ensure the `cv` iterator is appropriate for your experimental design (use `GroupKFold` if multiple samples come from the same patient).

Q2: Stepwise selection (forward/backward) on a large microbial feature set (e.g., >1000 ASVs) is computationally infeasible. How can I accelerate the process? A: Stepwise selection scales poorly: a full forward pass over p candidate features requires on the order of p² model fits, each of them cross-validated. Implement these fixes:

- Solution 1: Use a prefiltering step with a univariate filter method (e.g., `SelectKBest` with mutual information) to reduce the feature pool to 200-300 before stepwise selection.

- Solution 2: Limit the `n_features_to_select` parameter. Use a computationally cheaper estimator (e.g., logistic regression rather than random forest) for the selection process itself.

- Solution 3: Leverage parallel computing by setting the `n_jobs` parameter to -1 if your library supports it.
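Solutions 1-3 can be combined in one scikit-learn pipeline. This is a sketch under assumptions: mutual information as the prefilter score (the `k` value is illustrative), logistic regression as the cheap selection estimator, and `n_jobs` exposed as a parameter (set it to -1 to use all cores). Wrapping both stages in a `Pipeline` also keeps them inside any outer cross-validation.

```python
from functools import partial

import numpy as np
from sklearn.feature_selection import (
    SelectKBest,
    SequentialFeatureSelector,
    mutual_info_classif,
)
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline


def build_stepwise_pipeline(k=200, n_select=10, n_jobs=None, seed=0):
    mi = partial(mutual_info_classif, random_state=seed)  # reproducible MI estimates
    return Pipeline([
        ("prefilter", SelectKBest(mi, k=k)),  # Solution 1: shrink the pool first
        ("forward", SequentialFeatureSelector(  # Solution 2: cap the final size
            LogisticRegression(max_iter=2000),
            n_features_to_select=n_select,
            direction="forward",
            cv=5,
            n_jobs=n_jobs,  # Solution 3: -1 parallelizes candidate evaluation
        )),
        ("clf", LogisticRegression(max_iter=2000)),
    ])


def selected_columns(pipe):
    """Map the forward-selected features back to original column indices."""
    pre = pipe.named_steps["prefilter"].get_support(indices=True)
    return pre[pipe.named_steps["forward"].get_support()]
```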

Q3: My final model from wrapper selection overfits severely on independent validation data, despite good CV performance during selection. What went wrong? A: This indicates data leakage or an over-optimistic CV setup. The wrapper may have exploited noise.

- Solution: Re-run the entire wrapper selection (RFECV/Stepwise) within a nested cross-validation loop. Use an inner loop for feature selection/model tuning and an outer loop for performance estimation. This prevents selection bias from influencing the final performance metric.

Q4: How do I choose between RFECV and Stepwise Selection for 16S rRNA gene sequencing data? A: The choice balances robustness and computational cost.

- RFECV: Preferable for smaller, well-powered studies (<500 samples) where computational resources allow. It is more robust as it uses CV to determine the optimal number of features automatically. See Table 1 for a comparison.

- Stepwise: More practical for initial exploration on very high-dimensional data or when you must strictly limit the number of final features for interpretability. It is more greedy and may miss interacting features.

Q5: I receive convergence warnings from my estimator during recursive elimination. Should I be concerned? A: Yes, this can affect selection stability.

- Solution: The model may be struggling as features are removed. Adjust the underlying estimator's hyperparameters (e.g., increase `max_iter` for logistic regression, reduce model complexity). Ensure data is properly scaled. Using a more robust estimator like a linear SVM with an L2 penalty can sometimes alleviate this.

Table 1: Comparative Analysis of Wrapper Methods on Simulated Microbiome Dataset (n=150 samples, p=1000 OTUs)

| Metric | RFECV (SVM-RBF) | Forward Selection (Logistic Regression) | Backward Elimination (Random Forest) |
|---|---|---|---|
| Avg. Final Features Selected | 28 ± 5 | 15 ± 3 | 42 ± 8 |
| Mean CV Accuracy (5-fold) | 0.89 ± 0.04 | 0.85 ± 0.05 | 0.88 ± 0.03 |
| Computation Time (min) | 125.6 | 18.2 | 203.5 |
| Stability Index (Kuncheva) | 0.81 | 0.65 | 0.72 |
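The stability index in Table 1 can be computed as follows. Kuncheva's index compares two equal-size subsets of k features drawn from n, correcting the overlap r for chance: KI = (r·n - k²) / (k·(n - k)), which is 1 for identical subsets and near 0 for random ones. Averaging over all pairs of runs gives a single score. The index assumes equal subset sizes, so runs that select different numbers of features would need truncating (e.g., to their top k) first, which is an additional assumption.

```python
import itertools

import numpy as np


def kuncheva(a, b, n_features):
    """Kuncheva consistency index for two equal-size feature subsets."""
    a, b = set(a), set(b)
    k = len(a)
    if len(b) != k:
        raise ValueError("Kuncheva's index assumes equal-size subsets")
    if k in (0, n_features):
        raise ValueError("subset size must be strictly between 0 and n_features")
    r = len(a & b)  # observed overlap
    return (r * n_features - k * k) / (k * (n_features - k))


def mean_kuncheva(subsets, n_features):
    """Average index over all pairs of runs (e.g., CV folds or bootstraps)."""
    pairs = itertools.combinations(subsets, 2)
    return float(np.mean([kuncheva(a, b, n_features) for a, b in pairs]))
```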

Table 2: Impact of Pre-filtering on RFECV Performance & Cost

| Pre-filtering Method | Features Post-Filter | RFECV Time (min) | Model AUC |
|---|---|---|---|
| None | 1000 | 125.6 | 0.92 |
| Variance Threshold (>1% prevalence) | 310 | 41.2 | 0.93 |
| SelectKBest (k=200, f_classif) | 200 | 26.5 | 0.91 |

Experimental Protocols

Protocol 1: Executing Nested-CV with RFECV for Microbiome Data Objective: To obtain an unbiased performance estimate of a classifier built with features selected via RFECV.

- Data Preparation: Rarefy the OTU table to an even depth. Apply a centered log-ratio (CLR) transformation to address compositionality.

- Outer Loop: Split data into 5 outer folds. For each fold:

a. Hold out one fold as the test set.

b. On the remaining 4 folds (training set), perform RFECV:

* Use an inner 5-fold CV on the training set.

* Set `step=0.1` to remove 10% of the features (computed from the starting count) per iteration.

* Use a linear support vector classifier as the estimator.

* RFECV outputs the optimal set of microbial features.

c. Train a final model (e.g., SVM) on the training set using only the optimal features.

d. Evaluate the model on the held-out outer test fold.

- Aggregation: The average performance across all 5 outer test folds is the final unbiased metric.
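Because RFECV behaves as a transformer, the protocol can be sketched by placing it inside a scikit-learn pipeline and scoring that pipeline with an outer `cross_val_score`; RFECV then runs afresh on every outer training split. Assumptions: `X_clr` is already rarefied and CLR-transformed (both per-sample operations, so doing them before splitting does not leak), features are standardized inside the pipeline, and a linear-kernel `SVC` serves as the final model.

```python
import numpy as np
from sklearn.feature_selection import RFECV
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC, LinearSVC


def nested_rfecv_score(X_clr, y, seed=0):
    inner = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed + 1)
    model = make_pipeline(
        StandardScaler(),
        # Inner CV picks the feature count; step=0.1 drops 10% of features per round
        RFECV(LinearSVC(max_iter=10000), step=0.1, cv=inner,
              scoring="balanced_accuracy"),
        SVC(kernel="linear"),  # final model trained on the selected features only
    )
    # Outer CV: each fold refits scaler + RFECV + SVC on its own training split
    scores = cross_val_score(model, X_clr, y, cv=outer, scoring="balanced_accuracy")
    return float(scores.mean()), scores
```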

Protocol 2: Stepwise Forward Selection with Early Stopping Objective: To select a parsimonious set of features with controlled computation.

- Initialize: Start with an empty set of selected features. Pre-scale all features (mean=0, variance=1).

- Iteration: While the number of selected features is less than `n_features_to_select` (e.g., 20):

a. For each candidate feature not yet selected, train a model (e.g., L1-penalized logistic regression) on the current set plus the candidate.

b. Evaluate the model using 5-fold CV on the training data (metric: balanced accuracy).

c. Identify the candidate feature that yields the largest improvement in CV score.

d. Hypothesis Test: Perform a permutation test (100 permutations) to assess whether the improvement is significant (p < 0.05). If not, terminate early.

e. Add the significant feature to the selected set.

- Output: The final ordered list of selected features.
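A scikit-learn sketch of the loop with the permutation-based early stop. Assumptions: L1-penalized logistic regression as the model, a chance-level baseline of 0.5 balanced accuracy before any feature is added, and a null distribution built by shuffling only the winning candidate's column. That null ignores the fact that the best of many candidates was chosen, so it is optimistic; treat it as a stopping heuristic rather than a calibrated test.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score


def cv_score(X, y, cols, cv):
    model = LogisticRegression(penalty="l1", solver="liblinear", C=1.0)
    return cross_val_score(model, X[:, cols], y, cv=cv,
                           scoring="balanced_accuracy").mean()


def forward_select(X, y, n_features_to_select=20, n_perm=100, alpha=0.05, seed=0):
    X = np.asarray(X, dtype=float)
    X = (X - X.mean(axis=0)) / X.std(axis=0)  # pre-scale; assumes no constant columns
    rng = np.random.default_rng(seed)
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    selected, current = [], 0.5  # chance-level balanced accuracy as the baseline
    while len(selected) < min(n_features_to_select, X.shape[1]):
        candidates = [j for j in range(X.shape[1]) if j not in selected]
        scores = {j: cv_score(X, y, selected + [j], cv) for j in candidates}
        best = max(scores, key=scores.get)
        gain = scores[best] - current
        # Null: same feature set, but the winning column is shuffled
        X_perm = X.copy()
        null_gains = []
        for _ in range(n_perm):
            X_perm[:, best] = rng.permutation(X[:, best])
            null_gains.append(cv_score(X_perm, y, selected + [best], cv) - current)
        p_value = (1 + np.sum(np.array(null_gains) >= gain)) / (n_perm + 1)
        if p_value >= alpha:
            break  # early stop: improvement not significant
        selected.append(best)
        current = scores[best]
    return selected
```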

Visualizations

Title: RFECV Workflow for Microbiome Feature Selection

Title: Forward Stepwise Selection with Early Stopping

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Microbiome Wrapper Methods Analysis |
|---|---|
| QIIME 2 / DADA2 | Pipeline for processing raw 16S rRNA sequences into Amplicon Sequence Variant (ASV) or OTU tables, the primary input feature matrix. |
| Centered log-ratio (CLR) transform | Essential statistical transform applied to compositional microbiome data before feature selection to mitigate spurious correlations. |
| scikit-learn (v1.3+) | Python library providing RFECV, SequentialFeatureSelector, and core estimators for implementing wrapper methods. |
| StratifiedGroupKFold | Cross-validation iterator crucial for nested CV designs when samples are correlated (e.g., longitudinal sampling). |
| stability-selection | Python package that can be used alongside wrappers to compute feature selection stability metrics (e.g., Kuncheva index). |
| High-Performance Computing (HPC) Cluster | Often required for RFECV on large datasets due to the need to train models hundreds of times. |

Technical Support Center

Troubleshooting Guide & FAQs

Q1: When applying LASSO to my microbiome OTU table (samples x features), the model returns zero coefficients for all features, selecting nothing. What could be the cause and how do I fix it? A: This typically indicates an overly stringent regularization penalty. The hyperparameter lambda (λ) is too high.

- Troubleshooting Steps:

- Check Lambda Range: Ensure your cross-validation grid extends to sufficiently small lambda values. Use `glmnet::cv.glmnet()` (R) or `sklearn.linear_model.LassoCV` (Python); both search a default path of 100 penalty values, which you can widen if needed.

- Standardize Data: LASSO is sensitive to feature scale. Ensure all microbial abundance features (e.g., OTU counts transformed to centered log-ratios) are standardized to mean=0 and variance=1 before fitting (`glmnet` does this internally by default via `standardize = TRUE`). Do not standardize the response variable.

- Check for Separability: In binary classification with perfect separation, coefficients at the small-λ end of the path can grow without bound and the fit may fail to converge. Check your outcome variable.

- Solution: Refit using `cv.glmnet` to find the optimal λ (`lambda.min`) and refit the full model using this value. Note that `lambda.1se` (the largest λ whose cross-validated error is within 1 standard error of the minimum) is a more conservative choice that yields an equally sparse or sparser model, so it will not help when `lambda.min` already selects nothing; in that case, revisit the λ range and preprocessing steps above.
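A scikit-learn sketch of the first two checks for a binary outcome, assuming CLR-transformed features. It uses `LogisticRegressionCV` with an L1 penalty rather than `LassoCV` (which fits a squared-error loss), plus an explicit grid of `C` values. In scikit-learn, `C` is an inverse penalty: relative to glmnet's scaling, λ ≈ 1/(n·C), so extending the grid toward larger `C` explores smaller λ. The grid bounds are assumptions.

```python
import numpy as np
from sklearn.linear_model import LogisticRegressionCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def fit_l1_logistic(X_clr, y, n_grid=100):
    model = make_pipeline(
        StandardScaler(),  # scale features only; the binary response is untouched
        LogisticRegressionCV(
            Cs=np.logspace(-3, 2, n_grid),  # wide grid: larger C = smaller lambda
            cv=5,
            penalty="l1",
            solver="liblinear",
            scoring="roc_auc",
        ),
    )
    model.fit(X_clr, y)
    coef = model[-1].coef_.ravel()
    n_selected = int(np.count_nonzero(coef))
    if n_selected == 0:
        raise RuntimeError(
            "All coefficients are zero: widen the C grid or revisit preprocessing."
        )
    return model, n_selected
```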

Q2: My Random Forest feature importance (Mean Decrease Gini) ranks many irrelevant features highly, likely due to correlated microbiome taxa. How can I obtain a more reliable importance measure? A: The standard Mean Decrease in Impurity (Gini) is biased towards high-cardinality and correlated features. Use permutation importance instead.

- Troubleshooting Steps:

- Switch to Permutation Importance: Calculate importance by randomly permuting each feature in the out-of-bag (OOB) samples and measuring the decrease in model accuracy/R². This avoids the impurity-based bias toward high-cardinality features, although correlated features can still share or mask one another's importance.

- Use Conditional Importance: For highly correlated microbial features, use the `party` package (R) with `cforest()` and `varimp(conditional = TRUE)`. This permutes features conditional on their correlated neighbors, providing a more accurate view.

- Protocol: In R, fit the forest with `randomForest(..., importance = TRUE)`, then use `importance(model, type = 1, scale = FALSE)` to get the mean decrease in accuracy. Repeat with multiple seeds to assess stability.
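A scikit-learn sketch of permutation importance repeated across seeds. scikit-learn's `permutation_importance` permutes features on whatever data you pass rather than on out-of-bag samples, so this version substitutes a stratified held-out split for the OOB set. The 70/30 split, forest size, and seeds are assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split


def rf_permutation_importance(X, y, seeds=(0, 1, 2), n_repeats=10):
    X_tr, X_te, y_tr, y_te = train_test_split(
        X, y, test_size=0.3, stratify=y, random_state=0
    )
    runs = []
    for s in seeds:
        rf = RandomForestClassifier(n_estimators=200, random_state=s).fit(X_tr, y_tr)
        res = permutation_importance(
            rf, X_te, y_te, n_repeats=n_repeats, random_state=s,
            scoring="balanced_accuracy",
        )
        runs.append(res.importances_mean)  # drop in score when each feature is shuffled
    runs = np.array(runs)
    return runs.mean(axis=0), runs.std(axis=0)  # std across seeds = stability check
```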

Q3: How do I choose between LASSO and Random Forest for embedded selection in my microbiome study, which has a binary clinical outcome and 5,000+ ASV features? A: The choice depends on your data structure and goal.

| Aspect | LASSO (GLMNET) | Random Forest |
|---|---|---|
| Model Type | Linear / Generalized Linear | Ensemble of Non-linear Trees |
| Feature Selection | Yes, explicit coefficient shrinkage to zero. | Yes, via importance scores; requires thresholding. |
| Handling Correlations | Selects one feature from a correlated group arbitrarily. | More robust; can spread importance across correlated features. |
| Interpretability | High; provides a sparse, interpretable model. | Lower; a "black box" with complex interactions. |
| Best For | Identifying a minimal, stable microbial signature for diagnostics. | Capturing complex, non-linear microbial community interactions. |
| Protocol Recommendation | Use for linear association studies, predictive modeling with simplicity. | Use for exploratory analysis, or when complex interactions are suspected. |

Q4: I am using gradient boosting (XGBoost) for selection. How do I tune parameters to prevent overfitting while maintaining selection capability? A: Overfitting in tree-based methods leads to false feature discovery.

- Key Parameters to Tune via Grid/Bayesian Search:

- `max_depth` (3-6): Lower depth reduces complexity.

- `min_child_weight` (≥1 for microbiome data): Higher values prevent sparse nodes.

- `gamma` (≥0): Minimum loss reduction required for a split.

- `subsample` and `colsample_bytree` (0.7-0.8): Values below 1 act as regularization.

- `lambda` (L2 regularization) / `alpha` (L1 regularization): Add explicit regularization.

- Protocol: Use k-fold (k=5) cross-validation on the training set only. Use early stopping (`early_stopping_rounds`) with a validation set, monitoring the log loss or error metric on that validation set.

Experimental Protocols

Protocol 1: Nested Cross-Validation for Embedded Feature Selection and Model Evaluation Objective: To provide an unbiased performance estimate of a predictive model that includes embedded feature selection (e.g., LASSO; the same scheme also applies to wrappers such as RFE) on microbiome data.

- Data Preparation: Perform a study-level split into Discovery (80%) and Hold-out Test (20%) sets. The Hold-out set is locked away.

- Outer Loop (Performance Estimation): On the Discovery set, perform 10-fold CV.

- For each of the 10 folds, the outer training set (9/10 of Discovery) enters the Inner Loop.

- Inner Loop (Model & Selection Tuning): On the current outer training set, perform another 5-fold CV.

- For each inner fold, apply the full embedded selection and modeling pipeline (e.g., standardize data, perform LASSO path, tune λ).

- Determine the optimal hyperparameter (e.g., λ that minimizes CV error) across inner folds.

- Refit and Assess: Refit the model with the chosen hyperparameter on the entire current outer training set. Apply this fitted model (including its selected features) to the outer test fold to compute performance.

- Final Model: Average performance across the 10 outer folds. Finally, train a model on the entire Discovery set using the optimal hyperparameters and apply it to the locked Hold-out Test set for a final estimate.

Protocol 2: Stability Selection with LASSO for Robust Microbiome Feature Identification Objective: To identify microbial features that are consistently selected across many subsamples, improving reliability.

- Subsampling: Generate B random subsamples (e.g., B = 100) of the data, each containing 50% of the samples.

- LASSO on Subsets: For each subsample b, run LASSO regression with weak regularization (small λ), which lets more features enter the model.

- Selection Probability: For each feature (microbial taxon), compute its selection probability: the proportion of the B subsamples in which it had a non-zero coefficient.

- Thresholding: Select features whose selection probability exceeds a predefined threshold (e.g., π_thr = 0.8). This set is the stable selection.
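The subsampling scheme can be sketched with scikit-learn for a binary outcome. Assumptions: an L1-penalized logistic regression with a fixed `C` stands in for "LASSO with small λ" (larger `C` means weaker regularization), features are standardized within each subsample, both classes are assumed to appear in every subsample, and the defaults mirror the protocol (B = 100, 50% subsamples, π_thr = 0.8). The original stability selection procedure also takes the maximum selection probability over a range of λ values, which this single-λ sketch omits.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler


def stability_selection(X, y, B=100, frac=0.5, C=0.5, pi_thr=0.8, seed=0):
    X, y = np.asarray(X, dtype=float), np.asarray(y)
    rng = np.random.default_rng(seed)
    n, p = X.shape
    m = int(frac * n)
    hits = np.zeros(p)
    for _ in range(B):
        idx = rng.choice(n, size=m, replace=False)  # subsample without replacement
        Xs = StandardScaler().fit_transform(X[idx])
        fit = LogisticRegression(penalty="l1", solver="liblinear", C=C).fit(Xs, y[idx])
        hits += fit.coef_.ravel() != 0  # count non-zero coefficients
    prob = hits / B  # selection probability per feature
    return prob, np.flatnonzero(prob > pi_thr)
```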

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Embedded Feature Selection for Microbiome Data |
|---|---|
| R glmnet / Python scikit-learn | Core software libraries for implementing LASSO, Elastic Net, and related penalized regression models with efficient cross-validation. |
| R randomForest / ranger | Packages for constructing Random Forests and calculating permutation-based feature importance measures. |
| R Boruta | Wrapper around Random Forest to perform all-relevant feature selection by comparing original feature importance to shuffled "shadow" features. |
| R xgboost / lightgbm | Efficient, scalable implementations of gradient boosting machines for tree-based embedded selection using gain-based importance. |
| CLR-Transformed OTU Table | The preprocessed feature matrix. Centered Log-Ratio transformation addresses compositionality, making data suitable for linear methods like LASSO. |
| Stability Selection Scripts | Custom scripts (often in R/Python) to implement subsampling and selection probability calculation, crucial for robust biomarker identification. |
| High-Performance Computing (HPC) Cluster Access | Essential for running computationally intensive nested CV, stability selection, or large Random Forest models on high-dimensional datasets. |
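One way to produce the CLR-transformed OTU table listed above is sketched below. It assumes a pandas DataFrame with samples as rows and taxa as columns; the 10% prevalence default mirrors the filtering used elsewhere in this guide, while the pseudocount of 0.5 and the helper names are illustrative assumptions.

```python
import numpy as np
import pandas as pd


def prevalence_filter(counts: pd.DataFrame, min_prevalence: float = 0.10) -> pd.DataFrame:
    """Keep taxa (columns) with a non-zero count in at least min_prevalence of samples (rows)."""
    prevalence = (counts > 0).mean(axis=0)
    return counts.loc[:, prevalence >= min_prevalence]


def clr_transform(counts: pd.DataFrame, pseudocount: float = 0.5) -> pd.DataFrame:
    """Centered log-ratio per sample: log(x + pseudocount) minus that sample's mean log value."""
    log_x = np.log(counts + pseudocount)
    return log_x.sub(log_x.mean(axis=1), axis=0)


# Toy table: 4 samples x 3 taxa; taxon_b is detected in only 1 of 4 samples.
counts = pd.DataFrame({"taxon_a": [10, 0, 5, 3],
                       "taxon_b": [0, 0, 0, 1],
                       "taxon_c": [90, 100, 95, 96]})
table = clr_transform(prevalence_filter(counts, min_prevalence=0.5))
print(table.round(3))
```

Filtering before the CLR step matters: the per-sample mean in the CLR denominator is computed only over the taxa that survive the filter.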

Stability Selection and Ensemble Approaches for Robust Feature Identification

FAQs and Troubleshooting Guide

FAQ 1: Why does my stability selection model select too many features, even with a high threshold? Answer: This is often due to high correlation among microbiome taxa. Stability selection can spread selection probability across correlated features. Solution: Apply a pre-filtering step using variance or abundance thresholds before stability selection. Alternatively, use an ensemble method that incorporates correlation penalization, like the ensemble of graphical lasso models.

FAQ 2: My selected feature set varies drastically between different runs of the same ensemble algorithm. What could be wrong? Answer: High variability indicates a lack of robust signal. Ensure your base estimator's parameters (e.g., regularization strength for lasso) are appropriately tuned. The subsampling ratio (typically 0.5 to 0.8) and the number of iterations (B >= 100) are critical for convergence. Use the following protocol to diagnose:

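- Fix the random seed and rerun stability selection 5 times. The outputs should be identical; if they are, the run-to-run variation you observed comes from the random subsampling itself rather than from nondeterministic code, which usually means B is too small or the signal is weak.

- Incrementally increase the number of subsampling iterations (B) from 50 to 500 and plot the number of selected features against B. The count should plateau.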
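Both diagnostics can be scripted. A minimal sketch, assuming a continuous outcome, a fixed Lasso `alpha`, simulated data, and a 0.6 threshold for counting stable features; `selection_probs` is a hypothetical helper, not a library function.

```python
import numpy as np
from sklearn.linear_model import Lasso


def selection_probs(X, y, B, alpha=0.1, seed=0):
    """Hypothetical helper: share of B half-subsamples in which Lasso keeps each feature."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    hits = np.zeros(p)
    for _ in range(B):
        idx = rng.choice(n, size=n // 2, replace=False)
        hits += Lasso(alpha=alpha, max_iter=10_000).fit(X[idx], y[idx]).coef_ != 0
    return hits / B


# Simulated stand-in for a transformed OTU table: features 0 and 3 carry signal.
rng = np.random.default_rng(42)
X = rng.normal(size=(100, 50))
y = 1.5 * X[:, 0] - 1.0 * X[:, 3] + rng.normal(size=100)

# Check 1: with the seed fixed, five reruns must agree exactly.
runs = [selection_probs(X, y, B=100, seed=7) for _ in range(5)]
identical = all(np.array_equal(runs[0], r) for r in runs[1:])

# Check 2: the number of features with probability >= 0.6 should level off as B grows.
n_stable = {B: int((selection_probs(X, y, B=B) >= 0.6).sum()) for B in (50, 100, 200, 500)}
print(identical, n_stable)
```

Plotting `n_stable` against its keys gives the plateau curve described in the second step.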

FAQ 3: How do I interpret the stability scores or selection probabilities output by the algorithm? Answer: A stability score represents the proportion of subsamples (or models in an ensemble) where a given feature was selected. The core principle is that features with scores above a defined cutoff (e.g., 0.6 - 0.8) are considered robust. Compare your scores to the baseline in the table below.

FAQ 4: When using an ensemble of SVMs or random forests with stability selection, the computation is prohibitively slow for my dataset (n=200, p=5000). How can I optimize? Answer: For high-dimensional microbiome data, use the following optimization protocol:

- Parallelization: Implement the subsampling loop using a parallel computing framework (e.g., Python's joblib, R's doParallel).

- Dimensionality Pre-filtering: Use a fast, univariate filter (like ANCOM-BC or DESeq2 for differential abundance) to reduce dimensions to the top 1000-2000 features before the ensemble step.

- Algorithm Choice: For the base learner, use L1-penalized logistic regression (liblinear solver) instead of non-linear SVMs for initial feature screening.
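The parallelization and base-learner advice can be combined as sketched below. This assumes a binary outcome coded 0/1, joblib for parallelism, and an illustrative `C = 0.1`; the univariate pre-filter (ANCOM-BC or DESeq2) is assumed to have been run beforehand and is not shown. Each subsample gets its own seed from NumPy's `SeedSequence`, so the result does not depend on how joblib schedules the jobs. The helper names are hypothetical.

```python
import numpy as np
from joblib import Parallel, delayed
from sklearn.linear_model import LogisticRegression


def _select_on_subsample(X, y, seed, C):
    """Fit L1-logistic regression on a random half of the samples; return a boolean selection mask."""
    rng = np.random.default_rng(seed)
    idx = rng.choice(len(y), size=len(y) // 2, replace=False)
    model = LogisticRegression(penalty="l1", solver="liblinear", C=C)
    model.fit(X[idx], y[idx])   # assumes both classes appear in every subsample
    return model.coef_.ravel() != 0


def parallel_stability(X, y, B=100, C=0.1, n_jobs=-1, base_seed=0):
    """Selection probability per feature, with the B subsample fits run in parallel."""
    seeds = np.random.SeedSequence(base_seed).generate_state(B)   # one seed per subsample
    masks = Parallel(n_jobs=n_jobs)(
        delayed(_select_on_subsample)(X, y, int(s), C) for s in seeds)
    return np.mean(masks, axis=0)


# Usage on simulated, already pre-filtered data: features 0 and 1 drive the outcome.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 300))
y = (X[:, 0] + X[:, 1] + 0.5 * rng.normal(size=200) > 0).astype(int)
probs = parallel_stability(X, y, B=100, n_jobs=2)
print(np.flatnonzero(probs >= 0.8))
```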

Experimental Protocols

Protocol 1: Implementing Stability Selection with Lasso (Meinshausen & Bühlmann, 2010)

- Input: High-dimensional count matrix (e.g., OTU table), normalized and transformed (e.g., CLR, log-transform).

- Subsampling: For B iterations (e.g., B = 100), draw a random subsample of the data without replacement, typically of size ⌊n/2⌋.

- Base Learner: On each subsample, run a Lasso regression (or L1-logistic regression) across a regularization path of λ values.

- Selection: For each λ on the path, record the set of selected features $\hat{S}_b^{\lambda}$ (non-zero coefficients) in iteration b.

- Aggregation: For each original feature j and each λ, compute the selection probability $\hat{\pi}_j^{\lambda} = \frac{1}{B} \sum_{b=1}^{B} \mathbb{I}\left(j \in \hat{S}_b^{\lambda}\right)$, and summarize it over the path as $\hat{\pi}_j = \max_{\lambda} \hat{\pi}_j^{\lambda}$.

- Final Selection: Select all features j for which $\hat{\pi}_j \ge \pi_{\text{thr}}$ (a user-defined threshold, e.g., 0.6).
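Protocol 1 can be sketched with scikit-learn's `lasso_path` (which calls λ `alpha`). A minimal sketch, assuming a continuous outcome, features standardized within each subsample, and an illustrative λ grid; `stability_path` is a hypothetical helper. The grid deliberately stops well above zero: very small λ values let many noise taxa into every subsample, which inflates their maximum selection probability.

```python
import numpy as np
from sklearn.linear_model import lasso_path
from sklearn.preprocessing import StandardScaler


def stability_path(X, y, alphas, B=100, pi_thr=0.6, seed=0):
    """Hypothetical helper: max-over-path selection probabilities (Meinshausen & Buhlmann style)."""
    rng = np.random.default_rng(seed)
    alphas = np.sort(np.asarray(alphas, dtype=float))[::-1]  # decreasing, so columns match alphas
    n, p = X.shape
    hits = np.zeros((p, len(alphas)))
    for _ in range(B):
        idx = rng.choice(n, size=n // 2, replace=False)      # floor(n/2) samples, no replacement
        Xs = StandardScaler().fit_transform(X[idx])
        ys = y[idx] - y[idx].mean()                          # lasso_path fits no intercept
        _, coefs, _ = lasso_path(Xs, ys, alphas=alphas)      # coefs has shape (p, len(alphas))
        hits += coefs != 0                                   # active features at each alpha
    pi_hat = hits / B                                        # pi_hat[j, k]: probability at alphas[k]
    pi_max = pi_hat.max(axis=1)                              # maximum over the regularization path
    return pi_max, np.flatnonzero(pi_max >= pi_thr)


# Usage on simulated data: features 0 and 1 carry signal.
rng = np.random.default_rng(3)
X = rng.normal(size=(100, 80))
y = 2.0 * X[:, 0] - 2.0 * X[:, 1] + rng.normal(size=100)
pi_max, stable = stability_path(X, y, alphas=np.linspace(0.5, 1.5, 11))
print(stable)
```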

Protocol 2: Ensemble Feature Selection with Random Forest and Intersection (Robustness Focus)

- Input: Processed feature matrix and outcome vector.

- Multiple Trainings: Train K independent Random Forest models (e.g., K = 50) on bootstrapped samples of the training data. Vary key hyperparameters (like mtry) slightly across models to encourage diversity.

- Importance Extraction: From each model, extract a feature importance list (e.g., Mean Decrease in Gini) and select the top-m features.

- Voting Scheme: Compute the frequency of selection for each feature across all K models.

- Final Set: Apply a "strict intersection" rule: retain only features selected by >75% of models, OR use stability selection's thresholding on the frequency vector.
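A sketch of this voting scheme, assuming scikit-learn's `RandomForestClassifier`, whose `max_features` plays the role of mtry and whose impurity-based `feature_importances_` correspond to Mean Decrease in Gini for classification. The set of mtry values and the helper name `rf_ensemble_selection` are illustrative assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier


def rf_ensemble_selection(X, y, K=50, top_m=20, vote_thr=0.75, n_estimators=200, seed=0):
    """Hypothetical helper: vote over the top-m Gini-importance features of K bootstrapped forests."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    mtry_grid = sorted({max(1, int(np.sqrt(p) * f)) for f in (0.5, 1.0, 2.0)})  # illustrative mtry values
    votes = np.zeros(p)
    for k in range(K):
        idx = rng.choice(n, size=n, replace=True)                 # bootstrap resample of training data
        rf = RandomForestClassifier(n_estimators=n_estimators,
                                    max_features=int(rng.choice(mtry_grid)),  # vary mtry across models
                                    random_state=k, n_jobs=-1)
        rf.fit(X[idx], y[idx])
        top = np.argsort(rf.feature_importances_)[::-1][:top_m]   # top-m by impurity (Gini) importance
        votes[top] += 1
    freq = votes / K                                              # selection frequency per feature
    return freq, np.flatnonzero(freq > vote_thr)                  # keep features above the vote threshold


# Usage on simulated data: only features 0 and 1 determine the class.
rng = np.random.default_rng(5)
X = rng.normal(size=(150, 100))
y = (X[:, 0] + X[:, 1] > 0).astype(int)
freq, stable = rf_ensemble_selection(X, y, K=20, top_m=10, n_estimators=100)
print(stable)
```

Impurity-based importances can favour high-cardinality or continuous features, so a permutation-based importance is a reasonable alternative inside the same loop.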

Table 1: Comparison of Feature Selection Methods on Simulated Microbiome Data (n=150, p=10,000)

| Method | Avg. Features Selected | True Positive Rate (TPR) | False Discovery Rate (FDR) | Avg. Runtime (s) |
|---|---|---|---|---|
| Lasso (BIC) | 45.2 | 0.65 | 0.41 | 12.5 |
| Stability Selection (Lasso) | 22.1 | 0.88 | 0.12 | 185.7 |
| Random Forest (MeanDecreaseGini) | 112.5 | 0.92 | 0.55 | 89.3 |
| Ensemble RF + Stability | 18.7 | 0.85 | 0.09 | 320.4 |
| Benchmark: True Features | 15 | 1.00 | 0.00 | N/A |

Table 2: Typical Stability Selection Probability Ranges and Interpretation

| Probability Range (π̂_j) | Interpretation | Recommended Action |
|---|---|---|
| 0.0 - 0.2 | Very unstable/noise feature | Exclude confidently. |
| 0.2 - 0.6 | Unstable/context-dependent feature | Investigate correlations; usually exclude. |
| 0.6 - 0.8 | Stable, robust feature | Core set for validation and interpretation. |
| 0.8 - 1.0 | Highly stable, dominant feature | Likely key biological drivers. Prioritize. |
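The cut-offs in Table 2 are rules of thumb. Meinshausen & Bühlmann (2010) also give a bound that ties the threshold to the expected number of falsely selected features V: E[V] ≤ q² / ((2π_thr − 1) p), where q is the average number of features selected per subsample over the regularization range and p is the number of candidate features. It holds for π_thr > 0.5 under the paper's assumptions (exchangeable noise features and a base selector that does better than random guessing). A small helper, with a hypothetical name, makes the trade-off concrete:

```python
def mb_error_bound(q: float, p: int, pi_thr: float) -> float:
    """Upper bound on the expected number of falsely selected features, E[V]."""
    if not 0.5 < pi_thr <= 1.0:
        raise ValueError("the bound requires 0.5 < pi_thr <= 1")
    return q ** 2 / ((2 * pi_thr - 1) * p)


# Example: 1,000 candidate taxa, about 20 selected per subsample.
for thr in (0.6, 0.8, 0.9):
    print(thr, round(mb_error_bound(q=20, p=1000, pi_thr=thr), 3))
```

With these illustrative numbers the bound is roughly 2.0, 0.67 and 0.5 expected false selections, so moving the threshold from 0.6 to 0.8 cuts it by a factor of three. Keeping q small (a narrower λ range) tightens the bound faster than raising the threshold, because q enters squared.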

Visualizations

Stability Selection Workflow for Microbiome Data

Ensemble Approach for Robust Feature Identification

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Feature Selection for Microbiome Analysis |
|---|---|
| Compositional Transformation Tool (e.g., CLR, ALDEx2) | Preprocessing step to handle the compositional nature of microbiome data, reducing spurious correlations before feature selection. |
| High-Performance Computing (HPC) Cluster Access | Essential for running the computationally intensive, repeated subsampling and model fitting required by stability selection and ensemble methods. |
| Stable Feature Selection Software (e.g., stabs in R) | Provides tested implementations of stability selection algorithms, ensuring reproducibility of selection probabilities. scikit-learn no longer ships a built-in stability selection estimator, so in Python it is usually implemented as a subsampling loop around scikit-learn's Lasso or L1-logistic models. |
| Synthetic Data Generator | Creates simulated microbiome datasets with known true positive features. Crucial for benchmarking and tuning the threshold (π_thr) of any stability selection protocol. |
| Correlation Network Visualization Package (e.g., igraph, Cytoscape) | Allows investigation of selected features in a biological context, identifying correlated clusters of taxa that may represent functional units. |

In high-dimensional microbiome data analysis, feature selection is a critical step to identify taxa or functional pathways truly associated with host phenotypes, while mitigating overfitting and enhancing model interpretability. This technical support center provides troubleshooting guidance for implementing a reproducible feature selection pipeline, a core component of robust microbial biomarker discovery in translational research and drug development.

FAQs & Troubleshooting Guides

Q1: My pipeline yields different selected features each time I run it, despite using the same dataset. How do I ensure reproducibility? A: This is typically caused by unset random seeds in stochastic algorithms or data ordering issues.

- Solution: Explicitly set the random_state parameter in every scikit-learn estimator and splitter that uses randomness (e.g., RandomForestClassifier, the estimator wrapped by SelectFromModel, and KFold when shuffle=True), as well as in any custom splitting functions. Seed NumPy (np.random.seed) and Python's built-in random module at the start of your script. For parallel processing, ensure seeds are managed per worker.

- Protocol: Insert the following code at the very beginning of your master script:
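A minimal sketch of such a preamble, assuming a NumPy/scikit-learn workflow; `SEED = 42` and the four-worker split are arbitrary illustrative values.

```python
import random

import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold

SEED = 42  # arbitrary project-wide seed

random.seed(SEED)                     # Python's built-in random module
np.random.seed(SEED)                  # legacy global NumPy RNG, still used by some libraries
rng = np.random.default_rng(SEED)     # modern NumPy Generator for your own sampling code

# Pass random_state explicitly instead of relying on global state.
clf = RandomForestClassifier(n_estimators=500, random_state=SEED)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=SEED)

# For parallel work, derive independent, reproducible streams (one per worker).
worker_rngs = [np.random.default_rng(s) for s in np.random.SeedSequence(SEED).spawn(4)]
```

Explicit `random_state` arguments are more robust than the global seed alone, because results then no longer depend on how many random draws other code made earlier in the script.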

Q2: After aggressive filtering, my microbial count table has many zeros. Which feature selection methods are most robust to sparse, compositional data? A: Standard variance-based filters handle zero-inflated counts poorly, and many models assume normally distributed data.

- Solution: Utilize methods designed for compositional or sparse data. Use a zero-inflated negative binomial (ZINB) model (via statsmodels in Python, or a zero-inflated count-model package such as pscl in R) for univariate filtering. For wrapper or embedded methods, employ regularized regression with appropriate link functions (e.g., sklearn.linear_model.LogisticRegression(penalty='l1', solver='liblinear') for binary outcomes) or tree-based methods (e.g., Random Forests), which can handle sparse inputs.

- Protocol for Zero-Inflated Negative Binomial Filter:

- Using the statsmodels API, fit a ZINB model for each microbial feature against your target.

- Extract the p-value for the target's coefficient in the count (negative binomial) component of the model.

- Apply False Discovery Rate (FDR) correction (Benjamini-Hochberg) to the p-values.

- Retain features with an FDR-adjusted p-value < 0.05.

Q3: How do I validate my feature selection pipeline to avoid overfitting to my specific cohort? A: The most common mistake is performing feature selection on the entire dataset before train/test splitting.

- Solution: Nest the feature selection within the cross-validation loop. Perform selection only on the training fold in each iteration, then transform both the training and validation folds.

- Protocol:

- Use sklearn.model_selection.PredefinedSplit, KFold, or StratifiedKFold.

- Create a pipeline combining a feature selector (e.g., SelectFromModel) and a classifier/regressor.

- Use GridSearchCV or cross_validate on this pipeline. The selection will be refit on each training fold.

- For final evaluation, use a completely held-out test set that was never involved in any selection or CV tuning.
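Putting these steps together, a minimal scikit-learn sketch of nested selection might look as follows. It assumes simulated data from `make_classification`, an L1-logistic selector inside `SelectFromModel`, a Random Forest classifier, and an illustrative `C` grid. Because `GridSearchCV` is itself passed to `cross_validate`, both the selected features and `C` are re-chosen inside every outer training fold; the final held-out test set is not shown.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectFromModel
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_validate
from sklearn.pipeline import Pipeline

# Simulated stand-in for a transformed ASV table (150 samples x 200 features).
X, y = make_classification(n_samples=150, n_features=200, n_informative=8, random_state=0)

pipe = Pipeline([
    # The selector is a pipeline step, so it is refit on the training part of every split.
    ("select", SelectFromModel(
        LogisticRegression(penalty="l1", solver="liblinear", random_state=0))),
    ("clf", RandomForestClassifier(n_estimators=100, random_state=0)),
])
param_grid = {"select__estimator__C": [0.1, 0.5, 1.0]}   # illustrative L1 strengths

inner = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)   # tunes C
outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)   # estimates performance
search = GridSearchCV(pipe, param_grid, cv=inner, scoring="roc_auc")
scores = cross_validate(search, X, y, cv=outer, scoring="roc_auc")
print(f"Nested CV AUC: {scores['test_score'].mean():.3f}")
```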

Q4: I have metadata (e.g., pH, Age, Diet) alongside OTU/ASV data. How do I integrate them into the feature selection process? A: Treat them as a unified but heterogeneous feature matrix with careful preprocessing.

- Solution:

- Preprocess Separately, Then Concatenate: Standard-scale continuous metadata. One-hot encode categorical metadata. Apply a centered log-ratio (CLR) transform to the compositional microbiome data.

- Apply Block-Wise Selection: Use methods like sklearn.feature_selection.RFECV (Recursive Feature Elimination with CV) or mlxtend.feature_selection.SequentialFeatureSelector on the entire matrix, or employ domain knowledge to force important metadata into the model first.

- Multi-View Learning: For advanced integration, consider methods like MultiCCA (PMA R package) or supervised multi-view models in the mixOmics R package (block.plsda).
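The preprocess-then-concatenate step can be expressed with scikit-learn's `ColumnTransformer`. This sketch assumes a pandas DataFrame whose column names (`ASV_1`, `ASV_2`, `ASV_3`, `pH`, `Age`, `Diet`) are hypothetical, a pseudocount of 1 for the CLR step, and an L1-logistic selector. Keeping the per-block preprocessing inside the pipeline means it is refit on each training fold when the whole pipeline is cross-validated.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.feature_selection import SelectFromModel
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline, make_pipeline
from sklearn.preprocessing import FunctionTransformer, OneHotEncoder, StandardScaler


def clr(counts, pseudocount=1.0):
    """Row-wise centered log-ratio after adding a pseudocount (value is an assumption)."""
    log_x = np.log(np.asarray(counts, dtype=float) + pseudocount)
    return log_x - log_x.mean(axis=1, keepdims=True)


taxa_cols, num_cols, cat_cols = ["ASV_1", "ASV_2", "ASV_3"], ["pH", "Age"], ["Diet"]
preprocess = ColumnTransformer([
    ("taxa", make_pipeline(FunctionTransformer(clr), StandardScaler()), taxa_cols),  # CLR on taxa only
    ("num", StandardScaler(), num_cols),
    ("cat", OneHotEncoder(handle_unknown="ignore"), cat_cols),
])
model = Pipeline([
    ("prep", preprocess),
    ("select", SelectFromModel(LogisticRegression(penalty="l1", solver="liblinear", C=0.5))),
    ("clf", LogisticRegression(max_iter=1000)),
])

# Toy data: ASV_1 abundance is tied to the outcome.
rng = np.random.default_rng(0)
n = 40
y = np.tile([0, 1], n // 2)
df = pd.DataFrame({
    "ASV_1": rng.poisson(np.where(y == 1, 50, 5)),
    "ASV_2": rng.poisson(20, n),
    "ASV_3": rng.poisson(20, n),
    "pH": rng.normal(6.5, 0.5, n),
    "Age": rng.integers(20, 70, n),
    "Diet": np.resize(["omnivore", "vegan", "vegetarian"], n),
})
model.fit(df, y)
kept = model.named_steps["select"].get_support()   # order: taxa, numeric, one-hot columns
print(kept)
```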

Data Presentation

Table 1: Comparison of Feature Selection Methods for Microbiome Data

| Method Type | Example Algorithm | Handles Sparsity | Compositionality-Aware | Key Parameter to Tune for Reproducibility |
|---|---|---|---|---|
| Filter | Zero-Inflated Model (ZINB) | Yes | Indirectly | FDR threshold, Model convergence criteria |
| Wrapper | Recursive Feature Elimination (RFE) | Depends on estimator | No | n_features_to_select, random_state of estimator |
| Embedded | Lasso Regularization (L1) | Moderate | No | Regularization strength (C or alpha) |
| Embedded | Random Forest Feature Importance | Yes | No | random_state, max_depth, n_estimators |

Table 2: Impact of Nested vs. Non-Nested Feature Selection on Classifier Performance

Simulated results from a 16S rRNA gene study (n=200 samples, 5000 ASVs) predicting disease status.

| Validation Scheme | Reported AUC (Mean ± SD) | Estimated Generalization AUC (on true hold-out) | Risk of Overfitting |
|---|---|---|---|
| Non-Nested (Selection on full train set before CV) | 0.95 ± 0.03 | ~0.82 | Very High |
| Nested (Selection inside each CV fold) | 0.87 ± 0.05 | ~0.86 | Low |

Experimental Protocols

Protocol: Reproducible, Nested Feature Selection Pipeline for Case-Control Microbiome Studies

Objective: To identify a stable set of microbial features predictive of a binary phenotype while producing a generalizable performance estimate.

- Data Partitioning: Split raw data (count table + metadata) into Discovery Set (80%) and Hold-Out Test Set (20%). Stratify by target variable. Never use the Hold-Out Set until the final model assessment.

- Preprocessing on Discovery Set:

- Filtering: Remove features present in <10% of samples or with near-zero variance.

- Normalization: Apply a CLR transform (add a small pseudocount first) to the filtered count data. Standard-scale continuous metadata.

- Nested Cross-Validation on Discovery Set:

- Outer Loop: 5-fold StratifiedKFold (for performance estimation).