Unraveling the Microbiome: A Modern Guide to Exploratory Data Analysis for High-Dimensional 16S & Metagenomic Data

This comprehensive guide provides researchers, scientists, and drug development professionals with a modern framework for Exploratory Data Analysis (EDA) of high-dimensional microbiome data.

Unraveling the Microbiome: A Modern Guide to Exploratory Data Analysis for High-Dimensional 16S & Metagenomic Data

Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with a modern framework for Exploratory Data Analysis (EDA) of high-dimensional microbiome data. It addresses the core challenges of handling sparse, compositional, and high-throughput 16S rRNA and shotgun metagenomic datasets. We cover foundational principles, robust statistical and visualization methodologies, critical troubleshooting for common pitfalls, and validation strategies to ensure biological relevance. The article synthesizes current best practices to transform raw sequencing data into robust biological insights, supporting hypothesis generation for biomedical and therapeutic discovery.

First Steps in the Microbiome Jungle: Foundational Concepts and Initial Exploration of High-Dimensional Data

This technical guide examines the defining challenges of microbiome data analysis within a broader thesis on exploratory data analysis for high-dimensional microbiome research. The human microbiome, comprising trillions of microbes, generates datasets characterized by extreme dimensionality, sparsity, and compositional nature. These intrinsic properties necessitate specialized analytical approaches to derive meaningful biological and clinical insights for researchers and drug development professionals.

Core Characteristics of Microbiome Data

High-Dimensionality

Microbiome profiling via 16S rRNA gene sequencing or shotgun metagenomics identifies thousands to millions of unique taxonomic features (OTUs, ASVs, or genes) per sample. This far exceeds the number of samples typically collected (the "n << p" problem), leading to statistical challenges including overfitting and the curse of dimensionality.

Table 1: Typical Scale of Microbiome Data Dimensions

| Data Type | Typical Number of Features | Typical Sample Size (n) | Dimension Ratio (p/n) |

|---|---|---|---|

| 16S rRNA (OTU) | 1,000 - 10,000 OTUs | 50 - 500 | 20 - 200 |

| Shotgun Metagenomics | 1 - 10 million genes | 100 - 1,000 | 1,000 - 100,000 |

| Metatranscriptomics | 10,000 - 100,000 transcripts | 20 - 200 | 500 - 5,000 |

Sparsity

The majority of microbial taxa are absent in most samples due to biological rarity, technical detection limits, or ecological specialization. Sparsity is quantified as the percentage of zero counts in the feature-by-sample matrix.

Table 2: Sparsity Metrics in Public Microbiome Datasets

| Dataset | Number of Samples | Number of Features | Sparsity (%) | Reference |

|---|---|---|---|---|

| Human Microbiome Project | 300 | 3,000 OTUs | 85-95% | HMP, 2012 |

| American Gut Project | 10,000 | 15,000 ASVs | 90-98% | McDonald et al., 2018 |

| T2D Metagenomics | 368 | 5.9M genes | >99.5% | Qin et al., 2012 |

Compositionality

Microbiome data are inherently compositional—they convey relative, not absolute, abundance information due to library size normalization (e.g., sequencing depth). The total sum of counts per sample is constrained by the sequencing effort, making each value dependent on all others in the sample.

Table 3: Impact of Compositionality on Correlation Structure

| Correlation Type | In Raw Counts | In True Abundances | Bias Introduced |

|---|---|---|---|

| Between two rare taxa | Artificially negative | Near zero | High |

| Between two abundant taxa | Artificially negative | Positive | Moderate |

| Between rare and abundant | Artificially positive | Negative | Very High |

Methodological Implications and Experimental Protocols

Protocol 1: Library Preparation and Sequencing for 16S rRNA Amplicon Data

Objective: Generate high-dimensional, sparse compositional data from microbial communities. Steps:

- DNA Extraction: Use bead-beating mechanical lysis with chemical disruption (e.g., phenol-chloroform) to ensure broad taxonomic coverage.

- PCR Amplification: Amplify hypervariable regions (V3-V4) using barcoded primers (e.g., 341F/806R) with 25-30 cycles.

- Library Pooling: Normalize amplicon concentrations and pool equimolarly.

- Sequencing: Perform paired-end sequencing (2x250 bp or 2x300 bp) on Illumina MiSeq or NovaSeq platforms.

- Data Output: Generate FASTQ files containing barcode, primer, and amplicon sequence data.

Protocol 2: Shotgun Metagenomic Sequencing Workflow

Objective: Generate gene- and pathway-level functional profiles. Steps:

- High-quality DNA Extraction: Use kits optimized for low-biomass samples (e.g., MoBio PowerSoil).

- Library Construction: Fragment DNA (200-500 bp), adaptor ligation, and PCR amplification (4-8 cycles).

- High-throughput Sequencing: Sequence on Illumina HiSeq/NovaSeq (≥10 million reads/sample) or long-read platforms (PacBio, Nanopore).

- Bioinformatic Processing: Remove host reads, assemble contigs, predict genes, and map to reference databases (KEGG, COG, EggNOG).

Protocol 3: Quantitative Profiling via qPCR or Flow Cytometry

Objective: Obtain absolute abundance measurements to address compositionality. Steps:

- Spike-in Standards: Add known quantities of synthetic DNA (e.g., gBlocks) or fluorescent beads before DNA extraction.

- qPCR Quantification: Target universal (16S) and taxon-specific genes with standard curves.

- Flow Cytometry: Stain cells with SYBR Green I, count with a flow cytometer, and calibrate with bead standards.

- Data Integration: Convert relative sequencing abundances to absolute cell counts per gram or milliliter.

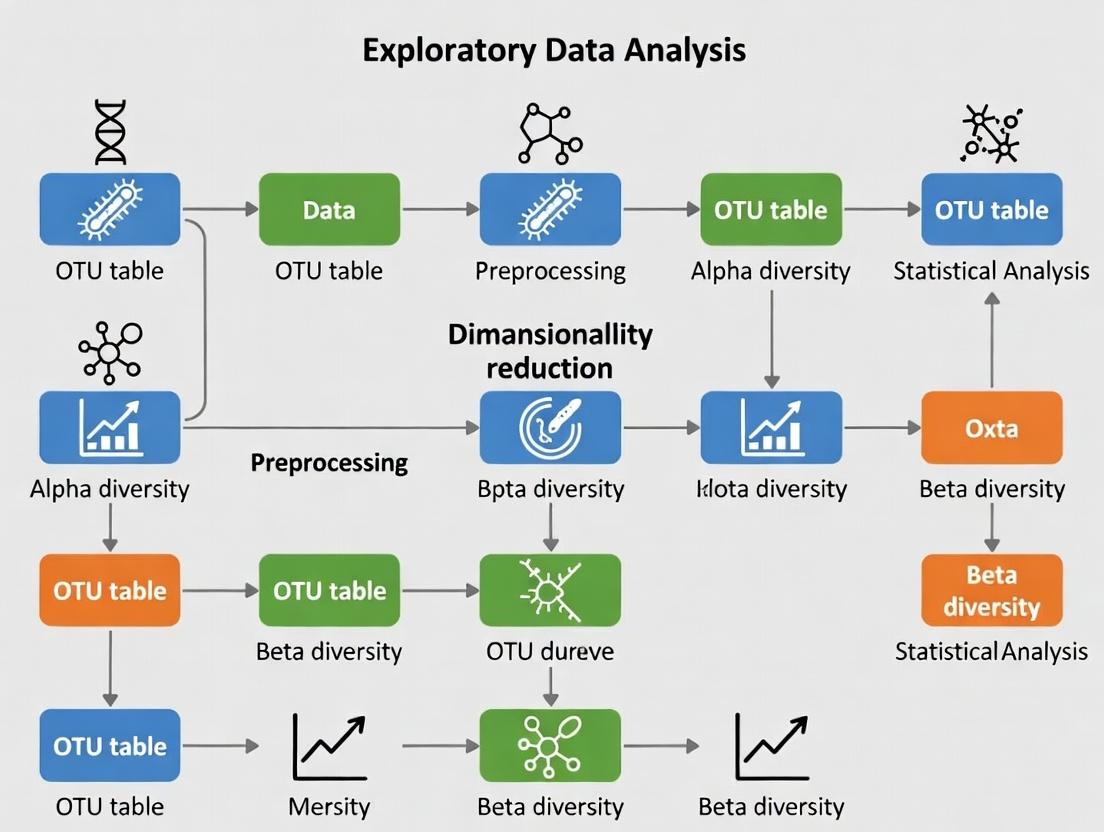

Diagram Title: Microbiome Data Generation and Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents and Materials for Microbiome Research

| Item | Function | Example Product/Catalog |

|---|---|---|

| Bead-beating Tubes | Mechanical lysis of microbial cells for DNA extraction | MP Biomedicals FastPrep Tubes, 116004500-CF |

| Inhibitor Removal Technology | Removes PCR inhibitors (humics, polyphenols) from environmental samples | Qiagen PowerSoil Pro Kit, 47014 |

| Mock Community Standards | Controls for extraction and sequencing bias | ZymoBIOMICS Microbial Community Standard, D6300 |

| Spike-in Synthetic DNA | Quantifies absolute abundance and corrects for compositionality | IDT gBlocks, custom sequences |

| Degenerate PCR Primers | Amplifies hypervariable regions across diverse taxa | 515F/806R, 27F/1492R |

| Size-selection Beads | Normalizes library fragment sizes for sequencing | Beckman Coulter AMPure XP, A63880 |

| Indexed Adapters | Multiplexes samples for high-throughput sequencing | Illumina Nextera XT Index Kit, FC-131-1096 |

| Sequencing Control PhiX | Improves base-calling accuracy on Illumina platforms | Illumina PhiX Control v3, FC-110-3001 |

Diagram Title: Analytical Pipeline for Microbiome Data Challenges

High-dimensionality, sparsity, and compositionality are interdependent characteristics that define the analytical landscape of microbiome research. Effective exploratory data analysis requires protocols that acknowledge these features through appropriate experimental controls, validated transformations, and compositional-aware statistical models. Addressing these challenges is fundamental for advancing microbiome science toward causal inference and therapeutic development.

Exploratory Data Analysis (EDA) is the foundational step in transforming raw, high-dimensional microbiome sequencing data into actionable biological hypotheses. Framed within the broader thesis that rigorous EDA is indispensable for robust microbiome research, this technical guide details the methodologies, visualizations, and analytical protocols essential for researchers and drug development professionals navigating the complexity of microbial community data.

Microbiome data, typically generated via 16S rRNA amplicon or shotgun metagenomic sequencing, is characterized by extreme dimensionality (hundreds to thousands of taxa), compositionality (relative abundances sum to a constant), sparsity (many zero counts), and high technical variability. EDA provides the critical first lens through which researchers assess data quality, identify patterns, detect outliers, and inform downstream statistical modeling and hypothesis testing. Neglecting EDA risks deriving insights from artifacts or noise.

Foundational EDA Metrics and Quantitative Summaries

Key quantitative descriptors form the initial assessment of a microbiome dataset.

Table 1: Core Quantitative Metrics in Microbiome EDA

| Metric | Description | Typical Range/Interpretation | Tool/Formula |

|---|---|---|---|

| Sequencing Depth | Total reads per sample. | Inadequate depth (<10k reads for 16S) may omit rare taxa. | colSums(otu_table) |

| Alpha Diversity | Within-sample richness/evenness. | Shannon Index: 3.0-7.0; Observed OTUs: 100-1000s. | vegan::diversity() |

| Beta Diversity | Between-sample dissimilarity. | Bray-Curtis: 0 (identical) to 1 (max dissimilarity). | vegdist(matrix, method="bray") |

| Sample Read Distribution | Spread of total reads across samples. | High skew may indicate failed library prep. | Summary statistics (mean, median, CV) |

| Taxonomic Composition | Proportion of dominant phyla/classes. | Often: Firmicutes, Bacteroidetes dominate gut. | taxa_sums(physeq)/sum(taxa_sums) |

Table 2: Common Quality Control (QC) Filtering Thresholds

| Filtering Step | Typical Threshold | Rationale |

|---|---|---|

| Low-abundance OTU/ASV removal | Prevalence < 10% of samples & relative abundance < 0.01% | Reduces sparsity and noise from sequencing errors. |

| Low-depth sample removal | Reads < 30% of median sample depth | Excludes potential technical failures. |

| Contaminant removal | Frequency correlation with negative controls (p<0.1) | Uses decontam (R) to identify reagent/kit DNA. |

| Chimeric sequence removal | >99% similarity to parent sequences | Applied during DADA2/QIIME2 pipeline pre-EDA. |

Experimental Protocols for Key EDA-Centric Analyses

Protocol 3.1: Alpha and Beta Diversity Analysis with PERMANOVA

Objective: To quantify and statistically compare microbial diversity within and between pre-defined sample groups (e.g., disease vs. control).

Materials: Processed and filtered OTU/ASV table, sample metadata, R with vegan, phyloseq, ggplot2.

Procedure:

- Normalize: Rarefy data to even depth (if using non-phylogenetic metrics like Bray-Curtis) or use a variance-stabilizing transformation (e.g.,

DESeq2'svarianceStabilizingTransformation). - Calculate Alpha Diversity: Compute indices (Observed, Shannon, Simpson) for each sample. Visualize with boxplots grouped by condition.

- Statistical Test: Apply Wilcoxon rank-sum test (for two groups) or Kruskal-Wallis test (multiple groups) to alpha diversity indices.

- Calculate Beta Diversity: Generate a dissimilarity matrix (Bray-Curtis, Unweighted/Weighted UniFrac).

- Ordination: Perform Principal Coordinates Analysis (PCoA) on the matrix. Plot ordination using first two principal coordinates.

- PERMANOVA: Use the

adonis2function (vegan::adonis2(distance_matrix ~ Condition, data=metadata, permutations=999)) to test if group centroids differ significantly. - Homogeneity Check: Test dispersion homogeneity with

betadisper; a significant result confounds PERMANOVA.

Protocol 3.2: Differential Abundance Analysis via DESeq2

Objective: To identify taxa whose abundances are significantly associated with a phenotype, accounting for compositionality and over-dispersion.

Materials: Raw (non-rarefied) ASV/OTU count table, metadata, R with DESeq2, phyloseq.

Procedure:

- Phyloseq to DESeq2: Convert phyloseq object:

diagdds = phyloseq_to_deseq2(physeq, ~ Condition). - Model Fitting: Apply negative binomial model:

diagdds = DESeq(diagdds, test="Wald", fitType="parametric"). - Results Extraction: Retrieve results:

res = results(diagdds, contrast=c("Condition", "Treatment", "Control")). - Multiple Testing Correction: Apply Benjamini-Hochberg FDR correction. Filter results at

alpha = 0.05. - Visualization: Generate volcano plots (log2 fold-change vs. -log10(p-value)) or plot normalized counts of significant taxa.

Mandatory Visualizations: Workflows and Relationships

Diagram Title: Microbiome Analysis Pipeline with EDA Feedback

Diagram Title: Core EDA Modules for Microbiome Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Tools for Microbiome EDA Workflows

| Item | Function in EDA/Research | Example Product/Kit |

|---|---|---|

| DNA Extraction Kit (with bead-beating) | Standardizes microbial lysis from complex matrices, a major source of pre-sequencing bias. Critical for comparing alpha diversity across studies. | Qiagen DNeasy PowerSoil Pro Kit, MoBio PowerLyzer |

| PCR Reagents & Barcoded Primers | Amplifies target region (e.g., V4 of 16S) with sample-specific barcodes for multiplexing. Efficiency impacts sequencing depth distribution. | KAPA HiFi HotStart ReadyMix, Illumina 16S Metagenomic Sequencing Library Prep |

| Negative Extraction Controls | Reagents-only controls used in wet-lab to identify kit/reagent contaminants for subsequent bioinformatic filtering (e.g., via decontam). |

Molecular grade water processed identically to samples |

| Mock Community Standards | Defined mixtures of known microbial genomes. Used to validate entire wet-lab to dry-lab pipeline, assess error rates, and calibrate EDA expectations. | ZymoBIOMICS Microbial Community Standards |

| Positive Sequencing Control (PhiX) | Spiked into Illumina runs for calibration, quality scoring, and identifying low-diversity samples (a potential sign of contamination). | Illumina PhiX Control v3 |

| Bioinformatics Pipelines | Software that processes raw FASTQ into feature tables, the substrate for EDA. Choice affects resolution (OTU vs. ASV). | QIIME 2, DADA2 (R), MOTHUR |

| Statistical Software & Packages | Primary environment for executing EDA protocols, visualization, and statistical testing. | R (phyloseq, vegan, DESeq2, ggplot2), Python (qiime2, scikit-bio, anndata) |

This guide outlines the foundational steps for preparing high-dimensional microbiome sequencing data for exploratory data analysis (EDA). Within the broader thesis of EDA for microbiome research, robust pre-processing is critical to transform raw sequence data into a reliable biological signal, enabling downstream statistical and ecological interpretation.

Quality Control (QC) and Trimming

The initial step involves assessing and improving the quality of raw sequencing reads (typically from 16S rRNA gene amplicon sequencing).

Key QC Metrics

Table 1: Common QC Metrics and Recommended Thresholds

| Metric | Description | Typical Threshold (16S Amplicons) | Tool Output Example |

|---|---|---|---|

| Per-base Sequence Quality | Phred score (Q) across all bases. | Q ≥ 30 (99.9% accuracy) | FastQC, MultiQC |

| Per-sequence Quality | Average quality per read. | Mean Q ≥ 25 | FastQC, MultiQC |

| Sequence Length | Distribution of read lengths post-trimming. | Expect uniformity based on amplicon length. | FastQC |

| Adapter Content | Presence of sequencing adapters. | ≤ 5% of reads | FastQC, cutadapt |

| Ambiguous Bases (N) | Proportion of uncalled bases. | 0% | Trimming/Filtering step |

| GC Content | Distribution of GC% per read. | Should match expected organism/target. | FastQC |

Experimental Protocol: QC and Trimming with DADA2 or QIIME 2

A. Tools Required: FastQC, MultiQC, Trimmomatic/cutadapt, DADA2 (R) or QIIME 2 (q2-demux, q2-quality-filter). B. Protocol Steps:

- Demultiplexing: Assign reads to samples based on barcodes (often done by sequencing facility).

- Initial QC: Run FastQC on all raw FASTQ files. Aggregate reports with MultiQC.

- Trimming:

- Remove Adapters: Use cutadapt or the

q2-cutadaptplugin to trim adapter sequences. - Trim Low-Quality Ends: Using DADA2's

filterAndTrim()function orq2-quality-filter. Typical parameters: trim left=10-20 bases (remove primers/initial low quality), truncate at first instance of Q<20-30.

- Remove Adapters: Use cutadapt or the

- Post-trimming QC: Run FastQC again on trimmed reads to confirm improvement.

Denoising and Sequence Variant Inference

Denoising corrects sequencing errors and infers the true biological sequences, moving beyond Operational Taxonomic Unit (OTU) clustering to Amplicon Sequence Variants (ASVs).

OTU vs. ASV Approaches

Table 2: Comparison of OTU Clustering and ASV Denoising

| Feature | OTU Clustering (97% similarity) | ASV Denoising (DADA2, Deblur, UNOISE3) |

|---|---|---|

| Definition | Clusters reads based on similarity threshold. | Infers exact biological sequences by error modeling. |

| Resolution | Lower (groups similar sequences). | Single-nucleotide resolution. |

| Reproducibility | Variable (depends on clustering method/database). | Highly reproducible. |

| Common Tools | VSEARCH, UCLUST, mothur. | DADA2, Deblur, UNOISE3. |

| Typical Output | OTU table (counts per OTU per sample). | ASV table (counts per exact sequence per sample). |

Experimental Protocol: Denoising with DADA2

A. Core Algorithm Steps: Error-rate learning → Dereplication → Sample inference → Denoising (error correction) → Chimera removal. B. Detailed Protocol (DADA2 in R):

- Learn Error Rates:

learnErrors(filtFs, multithread=TRUE)models the platform-specific error profile. - Dereplication:

derepFastq()combines identical reads, reducing computation. - Core Sample Inference:

dada(derep, err=error_rates, pool=FALSE)applies the error model to each sample, identifying true sequences. - Merge Paired-end Reads:

mergePairs()for paired-end data, ensuring high-quality overlaps. - Construct Sequence Table:

makeSequenceTable()creates the initial ASV-by-sample count matrix. - Remove Chimeras:

removeBimeraDenovo()identifies and removes PCR chimeras. - Output: A denoised ASV table (counts) and a FASTA file of ASV sequences.

Diagram Title: DADA2 Denoising Workflow for ASV Inference

ASV/OTU Table Creation and Basic Filtering

The final output of pre-processing is a feature-by-sample count matrix.

Table Structure and Post-Processing

Table 3: Structure of a Typical ASV/OTU Table

| Sample_1 | Sample_2 | Sample_3 | ... | Taxonomy | |

|---|---|---|---|---|---|

| ASV_001 | 1500 | 450 | 0 | ... | pFirmicutes; cBacilli; ... |

| ASV_002 | 0 | 1200 | 85 | ... | pBacteroidota; cBacteroidia; ... |

| ... | ... | ... | ... | ... | ... |

| Total Reads | 50,000 | 48,500 | 52,100 | ... |

Experimental Protocol: Table Refinement and Taxonomy Assignment

- Taxonomy Assignment: Assign taxonomy to ASV sequences using a reference database (e.g., SILVA, Greengenes, RDP). Tool:

assignTaxonomy()in DADA2 orq2-feature-classifierin QIIME 2. - Basic Filtering:

- Remove singletons/doubletons: Filter ASVs with total abundance ≤ 2 across all samples to reduce spurious noise.

- Prevalence filtering: Remove ASVs present in fewer than a threshold percentage of samples (e.g., 5%).

- Remove non-bacterial sequences: (for 16S data) Filter out sequences assigned to Archaea, Chloroplasts, Mitochondria.

- Normalization (for downstream EDA): Note: Normalization is part of EDA, not pre-processing, but is essential for comparison. Methods include rarefaction, Cumulative Sum Scaling (CSS), or relative abundance conversion.

Diagram Title: Post-Denoising Table Curation Steps

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Reagents and Materials for 16S rRNA Amplicon Sequencing Pre-Processing

| Item | Function in Pre-Processing Context | Example/Note |

|---|---|---|

| High-Fidelity DNA Polymerase | Critical for accurate PCR amplification with minimal bias during library prep. | KAPA HiFi HotStart, Q5 Hot Start. |

| Validated Primer Sets | Target-specific amplification of variable regions (V3-V4, V4). | 515F/806R, 341F/785R. Must be barcoded/indexed for multiplexing. |

| Standardized Mock Community DNA | Positive control for evaluating pipeline performance (denoising error, taxonomy). | ZymoBIOMICS Microbial Community Standard. |

| Negative Extraction Control Reagents | Identifies reagent/lab kit contamination for downstream filtering. | Sterile water or buffer taken through extraction. |

| Quantitation Kits (dsDNA-specific) | Accurate measurement of library concentration for pooling. | Qubit dsDNA HS Assay, Quant-iT PicoGreen. |

| Size Selection Beads | Cleanup of PCR products and final libraries to remove primer dimers. | AMPure XP, SPRIselect beads. |

| Sequencing Platform-Specific Kits | Generation of sequencing-ready libraries (e.g., adding adapters, indices). | Illumina Nextera XT, Swift Amplicon. |

| Bioinformatics Software Suites | De facto reagents for computational pre-processing. | QIIME 2 (plugins), DADA2 (R package), MOTHUR. |

Within the broader framework of Exploratory Data Analysis (EDA) for high-dimensional microbiome research, initial sanity checks are the critical first step to ensure data integrity and interpretability. These checks validate the raw sequencing output, identify gross anomalies, and set the stage for robust downstream analysis. This guide details the protocols for assessing library size distribution, sample heterogeneity, and batch effects.

Library Size Distribution Analysis

Purpose: To verify that sequencing depth (total reads per sample) is sufficient, comparable across samples, and free from failures.

Experimental Protocol: Quantifying Sequencing Depth

- Data Input: Start with the raw feature table (e.g., ASV/OTU table) or the output from

qiime2 demuxorDADA2. - Calculation: For each sample, sum the counts across all microbial features (taxa, genes). This yields the library size.

- Visualization: Generate a bar plot (samples on x-axis, library size on y-axis). Order samples by size.

- Statistical Summary: Calculate mean, median, standard deviation, and range. Flag samples where library size is below a pre-defined threshold (e.g., < 10,000 reads for 16S rRNA data) or is a statistical outlier (e.g., > 3 standard deviations from the mean).

Key Data Metrics & Interpretation

Table 1: Typical Library Size Metrics for 16S rRNA Amplicon Studies (Post-QC)

| Metric | Acceptable Range | Concerning Indication |

|---|---|---|

| Mean Library Size | Study-dependent; > 20,000 reads is often robust | Low mean may indicate poor sequencing run or over-dilution of pool. |

| Standard Deviation | Ideally < 50% of the mean | High variance suggests inconsistent sequencing performance or sample prep issues. |

| Minimum Sample Size | > 10,000 reads (conservative) | Samples with very low depth may need rarefaction or removal due to undersampling. |

| Outlier Samples | >3 SD from mean or visual inspection | May represent failed libraries or contamination; warrant investigation. |

Sample Heterogeneity Assessment

Purpose: To ensure the dataset captures expected biological variation and to identify potential sample swaps or extreme outliers.

Experimental Protocol: Beta Diversity Analysis

- Data Transformation: Rarefy the feature table to an even depth (if using metrics sensitive to depth like unweighted UniFrac) or use a compositional transform (e.g., CLR for Aitchison distance).

- Distance Matrix Calculation: Compute a beta diversity distance matrix (e.g., Bray-Curtis, Jaccard, Weighted/Unweighted UniFrac for taxonomy; Aitchison for composition).

- Ordination: Perform dimensionality reduction (e.g., Principal Coordinates Analysis - PCoA) on the distance matrix.

- Visualization: Plot samples in 2D/3D ordination space, colored by known metadata (e.g., body site, treatment group, donor).

- Statistical Test: Use PERMANOVA (Adonis) to test if pre-defined groups explain a significant proportion of variance. This validates expected heterogeneity.

Table 2: Common Beta Diversity Metrics for Sample Heterogeneity

| Metric | Best For | Interpretation of Spread |

|---|---|---|

| Bray-Curtis | General ecological dissimilarity (abundance-aware) | Tight clusters = low heterogeneity; dispersed points = high heterogeneity. |

| Unweighted UniFrac | Phylogenetic presence/absence differences | Captures deep phylogenetic divergence between communities. |

| Weighted UniFrac | Phylogenetic abundance-weighted differences | Emphasizes abundance changes in closely related taxa. |

| Aitchison Distance | Compositional data (requires CLR transform) | Robust to library size, focuses on relative log-ratios. |

Batch Effect Detection

Purpose: To identify and quantify non-biological technical variation introduced by sequencing run, extraction batch, or processing date.

Experimental Protocol: Multivariate Modeling & Visualization

- Metadata Annotation: Ensure metadata includes potential batch variables (e.g., sequencinglane, extractiondate, PCR_plate).

- Exploratory Ordination: As in Section 3, generate PCoA plots but color points by batch variable instead of biological group.

- Formal Statistical Testing: Use multivariate methods to partition variance:

- PERMANOVA: Test significance of batch variable.

- Distance-Based Redundancy Analysis (dbRDA): Constrain ordination by biological variable and visualize residuals for batch.

- Principal Variance Component Analysis (PVCA): Combine PCA and variance components analysis to quantify proportion of variance attributable to batch vs. biology.

- Batch-Corrected Visualization: Apply a batch correction method (e.g., ComBat-seq for counts, RUVseq, or limma's

removeBatchEffecton CLR-transformed data) and re-plot ordination.

Table 3: Batch Effect Detection Methods & Outputs

| Method | Input Data | Key Quantitative Output |

|---|---|---|

| PERMANOVA on Batch | Distance Matrix | R² and p-value for batch variable. R² > 0.05 often concerning. |

| PVCA | Normalized Feature Table | Variance proportion attributed to each batch and biological factor. |

| dbRDA | Distance Matrix + Model | Visual and statistical assessment of variance partition. |

| PCA on Controls | Features from Control Samples | Clustering of technical replicates from different batches indicates effect. |

The Scientist's Toolkit: Essential Research Reagents & Software

Table 4: Key Solutions for Microbiome Data Sanity Checks

| Item / Solution | Function in Sanity Checks | Example or Note |

|---|---|---|

| QIIME 2 / R phyloseq | Primary environment for data handling, normalization, and distance calculation. | qiime diversity core-metrics-phylogenetic or phyloseq::plot_richness(). |

| Negative Extraction Controls | Identifies reagent or environmental contamination affecting library sizes. | Used to filter contaminant ASVs from feature table. |

| Technical Replicates (Mock Community) | Distinguishes technical noise from biological variation; assesses sequencing accuracy. | ZymoBIOMICS or ATCC mock microbial standards. |

| R Packages: vegan, ape | Statistical testing (PERMANOVA, Mantel test) and distance matrix computation. | vegan::adonis2() for PERMANOVA. |

| Batch Correction Tools (ComBat-seq, RUVSeq) | Statistical removal of batch effects post-detection for downstream analysis. | sva::ComBat_seq() for count data. |

| Interactive Visualization (ggplot2, plotly) | Creation of publication-quality and exploratory plots for outlier detection. | ggplot2::geom_boxplot() for library size plots. |

| DNA/RNA Stabilization Buffer | Preserves sample integrity from collection to extraction, reducing batch variation. | RNAlater, OMNIgene•GUT, etc. |

| Standardized DNA Extraction Kit | Minimizes technical variation in library prep across batches. | DNeasy PowerSoil Pro Kit, MagAttract PowerSoil DNA Kit. |

Exploratory data analysis (EDA) for high-dimensional microbiome data presents unique statistical challenges. The data generated from 16S rRNA gene sequencing or shotgun metagenomics is inherently compositional, sparse, and zero-inflated. Ignoring these intrinsic properties can lead to biased inference, false discoveries, and invalid conclusions in downstream analyses, such as differential abundance testing or association studies in drug development. This technical guide provides an in-depth examination of these three core properties—sparsity, zero-inflation, and compositionality—framing them as the foundational first step in any robust microbiome EDA pipeline.

Sparsity

Sparsity refers to the condition where most entries in the data matrix are zero. In microbiome studies, this arises because any single sample contains only a subset of the vast microbial diversity present in an ecosystem.

Quantitative Characterization

Table 1: Typical Sparsity Metrics in Microbiome Datasets

| Dataset Type | Average Sparsity (% Zeros) | Range Across Studies | Typical Sample Depth (Reads) | Reference Year |

|---|---|---|---|---|

| 16S rRNA (Gut) | 85-95% | 75-99% | 10,000 - 100,000 | 2023 |

| Shotgun Metagenomic (Soil) | 90-98% | 88-99.5% | 10-50 million | 2024 |

| Viral Metagenome | 95-99.9% | 94-99.99% | 1-5 million | 2023 |

Experimental Protocol for Assessing Sparsity

- Data Input: Load Amplicon Sequence Variant (ASV) or Operational Taxonomic Unit (OTU) count table (samples x features).

- Filtering: Apply a minimum prevalence filter (e.g., retain features present in >10% of samples) to remove ultra-rare taxa. Note: This step is optional for pure sparsity assessment but common in practice.

- Calculation:

- Total entries = number of samples (N) × number of features (p)

- Zero count = Sum of all entries where count == 0

- Sparsity % = (Zero count / Total entries) × 100

- Visualization: Generate a heatmap of the log-transformed count matrix to visually inspect the pattern of non-zero entries.

Zero-Inflation

Zero-inflation goes beyond simple sparsity; it describes a situation where the observed frequency of zeros is greater than expected under a standard count distribution model (e.g., Poisson or Negative Binomial). Zeros can be either "biological" (the microbe is truly absent) or "technical" (the microbe is present but undetected due to limited sequencing depth).

Quantitative Characterization

Table 2: Sources and Prevalence of Zero Inflation

| Zero Type | Estimated Contribution | Primary Cause | Detection Clue |

|---|---|---|---|

| Technical (Dropout) | 20-60% of all zeros | Low biomass, library preparation bias, low sequencing depth | Correlated with low sample total read depth. |

| Biological (True Absence) | 40-80% of all zeros | Genuine absence of taxon in the niche, competitive exclusion | May be uncorrelated with sequencing depth; can be ecologically structured. |

Experimental Protocol for Zero-Inflation Modeling

- Model Fitting: For each taxon j, fit two models to its count vector across samples i:

- A standard count model (e.g., Negative Binomial):

Count_ij ~ NB(μ, dispersion). - A zero-inflated model (e.g., Zero-Inflated Negative Binomial - ZINB):

Count_ij ~ π * 0 + (1-π) * NB(μ, dispersion), where π is the probability of a structural zero.

- A standard count model (e.g., Negative Binomial):

- Goodness-of-Fit Test: Perform a likelihood-ratio test (LRT) between the nested models.

- Interpretation: A significant p-value from the LRT indicates the taxon's counts are zero-inflated, justifying the use of zero-inflated models for differential analysis.

Title: Decision Flow for Interpreting Zero Counts in Microbiome Data

Compositionality

Compositionality means that the data conveys only relative abundance information. Sequencing data is constrained sum (e.g., to total library size), so an increase in the relative abundance of one taxon necessitates an apparent decrease in others. This leads to spurious correlations if not accounted for.

Core Principle: The Aitchison Simplex

Microbiome count data resides in a simplex space where only relative information is valid. Analyses must use compositional methods, such as centered log-ratio (CLR) transformations, or employ models specifically designed for compositional data.

Experimental Protocol for Compositional Data Analysis

- Normalization (Optional but Common): Convert raw counts to proportions (total-sum scaling) or use a rarefaction procedure. Note: Modern approaches often use direct modeling with offsets instead.

- Transformation for Euclidean Space:

- CLR Transformation: For a sample vector x with D taxa, calculate

clr(x) = [ln(x1 / g(x)), ..., ln(xD / g(x))], whereg(x)is the geometric mean of x. - Handle zeros: Add a pseudo-count or use a more advanced method like the

compositionspackage'sclr()with multiplicative replacement.

- CLR Transformation: For a sample vector x with D taxa, calculate

- Downstream Analysis: Perform PCA on the CLR-transformed matrix (creating "Aitchison distance") or use a compositional regression model like ALDEx2 or a Dirichlet-Multinomial model.

Title: Workflow Contrast: Ignoring vs. Accounting for Compositionality

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Analyzing Sparse, Zero-Inflated, Compositional Data

| Item/Tool | Function/Benefit | Example Package/Software |

|---|---|---|

| Zero-Inflated Models (GLMM) | Models both technical zeros and count distribution simultaneously, providing unbiased effect estimates. | glmmTMB, pscl (R) |

| Compositional Transform | Projects constrained data into unconstrained Euclidean space for standard statistical methods. | compositions::clr(), microViz::transform_clr() (R) |

| Compositional Distance Metric | Measures dissimilarity between samples while respecting compositionality. | Aitchison Distance, Bray-Curtis (robust) |

| Feature-Specific Regularization | Handles high-dimensionality and sparsity by shrinking noise features' effects. | maaslin2 (LASSO), metagenomeSeq (CSS + zero-inflated Gaussian) |

| Bayesian Multiplicative Replacement | Handles zeros in compositional transforms without arbitrary pseudo-counts. | zCompositions::cmultRepl() (R) |

| Dirichlet-Multinomial Model | A probabilistic model for over-dispersed, compositional count data. | DirichletMultinomial (R/Bioconductor) |

| Co-occurrence Network Inference | Estimates microbial associations while correcting for compositionality and sparsity. | SPIEC-EASI, FlashWeave |

| Experimental Positive Controls | Spike-in synthetic microbial communities to distinguish technical from biological zeros. | ZymoBIOMICS Microbial Community Standards |

Navigating the Data: Essential Methods, Visualizations, and Hands-On Applications for Microbiome EDA

Within the framework of a thesis on Exploratory Data Analysis for High-Dimensional Microbiome Data Research, dimensionality reduction is a critical step. Microbiome datasets, generated via 16S rRNA gene sequencing or shotgun metagenomics, are characterized by thousands of operational taxonomic units (OTUs) or genes across a limited number of samples. This high-dimensional, sparse, and compositional nature poses significant challenges for visualization and interpretation. Core ordination techniques transform these complex datasets into lower-dimensional spaces, preserving ecological distances to reveal patterns, clusters, and outliers essential for hypothesis generation in research and drug development.

This whitepaper provides an in-depth technical guide to four principal ordination methods: Principal Coordinate Analysis (PCoA), Non-Metric Multidimensional Scaling (NMDS), and the modern manifold techniques t-Distributed Stochastic Neighbor Embedding (t-SNE) and Uniform Manifold Approximation and Projection (UMAP). We focus on their application, comparative strengths, and practical implementation in microbiome analysis.

Foundational Concepts & Distance Metrics

Ordination techniques require a measure of dissimilarity between samples. The choice of distance metric is paramount and should reflect the biological question.

Core Distance Metrics for Microbiome Data:

| Metric | Formula (for samples j and k) | Key Properties | Best Use Case |

|---|---|---|---|

| Bray-Curtis | $$BC{jk} = \frac{\sumi | x{ij} - x{ik} |}{\sumi (x{ij} + x_{ik})}$$ | Non-Euclidean, robust to outliers, handles zeros. Measures community composition dissimilarity. | General beta-diversity analysis comparing community structures. |

| Unweighted UniFrac | $$U_{jk} = \frac{\text{length of unshared branches}}{\text{total branch length of tree}}$$ | Incorporates phylogenetic tree. Sensitive to presence/absence of lineages. | Detecting deep phylogenetic community shifts. |

| Weighted UniFrac | $$W_{jk} = \frac{\text{sum (branch length × | abundance proportion difference |)}}{\text{total abundance-scaled branch length}}$$ | Incorporates phylogeny and relative abundance. | Detecting changes in abundant lineages. |

| Jaccard | $$J_{jk} = 1 - \frac{| A \cap B |}{| A \cup B |}$$ | Presence/Absence based. Ignores abundance. | Analyzing rare biosphere or strong turnover. |

| Aitchison | Euclidean distance on centered log-ratio (CLR) transformed data. | Proper metric for compositional data. Requires zero imputation (e.g., pseudocount). | Core method for analyzing compositional microbial abundance data. |

Core Ordination Techniques

Principal Coordinate Analysis (PCoA / Classical Multidimensional Scaling)

Methodology: PCoA aims to find a low-dimensional representation where the Euclidean distances between points approximate a given dissimilarity matrix. It performs an eigendecomposition of a double-centered distance matrix. The eigenvalues indicate the proportion of variance explained by each principal coordinate.

Protocol for Microbiome PCoA:

- Compute Dissimilarity: Calculate a full distance matrix (e.g., Bray-Curtis, UniFrac) from the OTU/ASV table.

- Transform Matrix: Perform double-centering:

G = -0.5 * (I - 11^T/n) * D^2 * (I - 11^T/n), whereDis the distance matrix. - Eigendecomposition: Solve

G = UΛU^T. Eigenvectors (U) are the coordinates. Eigenvalues (Λ) indicate variance. - Select Axes: Typically, plot the first 2-3 eigenvectors with the largest positive eigenvalues.

- Variance Interpretation: The percentage of variance explained by axis i is

(λ_i / Σ(λ_positive)) * 100.

Key Considerations: PCoA can handle non-Euclidean distances (like Bray-Curtis), but negative eigenvalues may arise, indicating the distance is not fully embeddable in Euclidean space. The variance explained is a crucial quantitative output.

Non-Metric Multidimensional Scaling (NMDS)

Methodology: NMDS is a rank-based method. It seeks a configuration where the ranking of distances between points in the low-dimensional space is as close as possible to the ranking of the original dissimilarities (monotonic relationship). It minimizes a stress function (e.g., Kruskal's stress) iteratively.

Protocol for Microbiome NMDS:

- Define Dimensions (k): Choose the number of ordination dimensions (k=2 or 3 for visualization).

- Initial Configuration: Generate a random starting configuration of points in k-dimensional space.

- Calculate Distances: Compute Euclidean distances between all points in the current configuration.

- Regress & Compare: Perform a monotonic regression (Shepard diagram) between the configuration distances and the original dissimilarities.

- Minimize Stress: Iteratively adjust point positions to minimize stress:

Stress = √[ Σ (d_orig - d_pred)^2 / Σ d_orig^2 ]. - Iterate: Use a numerical optimizer (e.g., gradient descent) over many iterations (e.g., 500) to find the best configuration.

- Assess Fit: Final stress value indicates goodness-of-fit: <0.05 = excellent, <0.1 = good, >0.2 = potentially misleading.

Key Considerations: NMDS is robust and makes no assumptions about the linear relationship between distance metrics. The final axes are arbitrary (rotatable) and must be interpreted relative to each other. Always run multiple random starts to avoid local minima.

t-SNE and UMAP

These are modern non-linear manifold learning techniques.

t-SNE (t-Distributed Stochastic Neighbor Embedding):

- Methodology: Models pairwise similarities in high-dimension as probabilities (using a Gaussian kernel) and in low-dimension as probabilities (using a Student's t-distribution). It minimizes the Kullback–Leibler divergence between these two distributions via gradient descent.

- Key Parameter:

perplexity, which balances local vs. global structure (typical range 5-50 for microbiome data). - Limitation: Cannot transform new data; global structure is not always preserved.

UMAP (Uniform Manifold Approximation and Projection):

- Methodology: Constructs a topological representation of the high-dimensional data (fuzzy simplicial complex) and optimizes a low-dimensional layout to be as topologically similar as possible. It uses cross-entropy as a cost function.

- Key Parameters:

n_neighbors(balances local/global structure) andmin_dist(controls clustering tightness). - Advantages: Faster, often better preserves global structure, and can transform new data.

Comparative Protocol for t-SNE/UMAP:

- Input: Start with a transformed feature table (e.g., CLR-transformed abundances, not a distance matrix).

- Parameter Selection: For UMAP:

n_neighbors=15-50,min_dist=0.1-0.5. For t-SNE:perplexity=30. - Dimensionality Pre-reduction: It is often advisable to first reduce dimensions via PCA (e.g., to 50 PCs) to denoise.

- Optimization: Run the algorithm (t-SNE or UMAP) on the PC scores or original features.

- Multiple Runs: Due to stochasticity, run multiple times with different random seeds to assess stability.

Quantitative Comparison of Techniques

| Technique | Input Type | Preserves | Key Output Metrics | Computational Load | Best for Microbiome... |

|---|---|---|---|---|---|

| PCoA | Distance Matrix | Global distances (optimally for Euclidean) | % Variance explained per axis (from eigenvalues). | Low | Hypothesis-driven analysis with interpretable variance. Standard for beta-diversity. |

| NMDS | Distance Matrix | Distance rank order (monotonic fit) | Stress value (goodness-of-fit). Iterations to convergence. | Medium | Exploratory analysis with any non-linear distance metric. |

| t-SNE | Feature Matrix (or Distance) | Local neighborhoods | KL Divergence (final cost). | High | Visualizing fine-grained clusters and sub-structure. |

| UMAP | Feature Matrix (or Distance) | Local & more global topology | Not typically a single fit metric. | Medium-High | Visualizing hierarchical clustering structure and patterns. |

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Microbiome Ordination Analysis |

|---|---|

| QIIME 2 (Core) | Plugin-based platform. qiime diversity pcoa, qiime diversity umap, and qiime emperor for visualization. Essential for standardized UniFrac/PCoA pipelines. |

| R (phyloseq & vegan) | phyloseq object manages OTU table, taxonomy, sample data, and tree. vegan::metaMDS() for NMDS, ape::pcoa() for PCoA. vegdist() computes distances (Bray-Curtis). |

| Python (scikit-bio, scikit-learn) | skbio.stats.ordination.pcoa and skbio.distance for PCoA. sklearn.manifold for t-SNE and UMAP. Enables integration into machine learning workflows. |

| FastTree / SEPP | Generates the phylogenetic trees required for calculating UniFrac distances. |

| GUniFrac R Package | Computes generalized UniFrac distances, offering a flexible parameter to balance abundance weighting. |

| Aitchison Imputation (e.g., zCompositions) | R package zCompositions provides methods (e.g., Bayesian-multiplicative replacement) to handle zeros for robust Aitchison distance calculation. |

| Emperor / ggOrdination | Visualization tools for creating interactive 3D plots (Emperor) or publication-quality static plots (ggplot2-based ggord, ggfortify). |

Experimental Workflow & Logical Pathway

The following diagram outlines the standard decision pathway for applying core ordination techniques in microbiome exploratory data analysis.

Diagram 1: Microbiome Ordination Technique Selection Workflow

Selecting the appropriate ordination technique is fundamental to effective exploratory data analysis in microbiome research. PCoA paired with a biologically relevant distance metric (e.g., Weighted UniFrac for abundance, Aitchison for composition) remains the gold standard for hypothesis-oriented beta-diversity assessment, providing clear variance metrics. NMDS offers robust, assumption-free exploration when distance metrics are non-linear. For uncovering complex, non-linear sub-structures within the data, t-SNE and UMAP are powerful, with UMAP generally preferred for its speed and better preservation of continuum relationships. Integrating these tools into a coherent analytical workflow, as guided by the research question and data properties, empowers researchers and drug developers to extract meaningful biological insights from the complexity of microbial ecosystems.

In the context of exploratory data analysis (EDA) for high-dimensional microbiome data, visualization is a critical bridge between raw sequencing output and biological insight. This technical guide details advanced plotting techniques essential for interpreting complex microbial community structures, their dynamics, and associations within a broader thesis on microbiome research and therapeutic discovery.

Foundational Visualization Techniques

Heatmaps

Heatmaps are indispensable for visualizing the relative abundance of microbial taxa (e.g., OTUs, ASVs) across multiple samples, revealing patterns of co-occurrence and sample clustering.

Experimental Protocol for Data Preparation:

- Data Input: Start with a filtered feature table (e.g., from QIIME 2, mothur) containing counts per taxon per sample.

- Normalization: Apply a compositional normalization like Centered Log-Ratio (CLR) transformation or convert to relative abundance (percentage). For differential abundance, use variance-stabilizing transformations (e.g., DESeq2).

- Filtering: Retain top N most abundant or most variable taxa to reduce dimensionality and improve clarity.

- Clustering: Perform hierarchical clustering on rows (taxa) and/or columns (samples) using distance metrics (Bray-Curtis, Euclidean) and linkage methods (Ward, average).

- Plotting: Map normalized abundance values to a color gradient. Include sidebars for sample metadata (e.g., disease state, treatment) and taxonomic classification.

Bar Plots

Bar plots, typically stacked, provide a straightforward view of taxonomic composition at a chosen level (e.g., Phylum, Genus) across sample groups.

Experimental Protocol:

- Aggregation: Aggregate sequence variants to the desired taxonomic level (Phylum, Class, etc.).

- Grouping: Average relative abundances within pre-defined sample groups (e.g., control vs. treated cohorts).

- Plotting: Generate stacked bars for each group. Use a consistent, color-blind friendly palette for taxa. Order taxa by overall abundance or a specific metric.

Violin Plots

Violin plots combine a box plot with a kernel density estimate to show the distribution, spread, and probability density of a specific metric (e.g., alpha diversity index, abundance of a key taxon) across sample groups.

Experimental Protocol for Alpha Diversity Visualization:

- Calculation: Compute alpha diversity indices (Shannon, Faith's PD, Observed Features) per sample using rarefied data.

- Metadata Integration: Merge diversity values with sample metadata grouping variable.

- Statistical Testing: Perform non-parametric tests (Kruskal-Wallis, pairwise Wilcoxon) between groups.

- Plotting: Plot the distribution of the diversity index per group, overlaying box plot elements (median, IQR) and individual data points. Annotate with statistical significance.

Network Graphs

Co-occurrence or correlation networks infer potential ecological interactions between microbial taxa from abundance patterns across samples.

Experimental Protocol for Network Inference:

- Correlation Calculation: Compute pairwise associations (SparCC, Spearman, Pearson) on CLR-transformed or proportionally normalized abundance data.

- Thresholding: Apply significance (p-value) and magnitude (correlation coefficient) thresholds to create an adjacency matrix.

- Network Construction: Define taxa as nodes and significant correlations as edges. Weight edges by correlation strength and sign (positive/negative).

- Topological Analysis: Calculate network properties (modularity, degree centrality, betweenness centrality).

- Visualization: Layout nodes using force-directed algorithms (Fruchterman-Reingold). Color nodes by taxonomy or module membership. Size nodes by degree centrality or abundance.

Data Presentation: Comparative Table of Visualization Techniques

| Visualization | Primary Use Case | Key Metrics Visualized | Data Preprocessing Needs | Common Tools/Packages |

|---|---|---|---|---|

| Heatmap | Pattern discovery, sample/taxon clustering | Relative abundance (transformed) | Normalization, filtering, clustering | R: pheatmap, ComplexHeatmap; Python: seaborn.clustermap |

| Bar Plot | Taxonomic composition summary | Mean relative abundance per group | Taxonomic aggregation, group averaging | R: ggplot2; Python: matplotlib, seaborn |

| Violin Plot | Distribution comparison across groups | Alpha diversity, specific taxon abundance | Index calculation, group assignment | R: ggplot2; Python: seaborn.violinplot |

| Network Graph | Interaction inference, system properties | Correlation coefficients, centrality measures | Correlation inference, thresholding | R: igraph, ggraph; Python: networkx, igraph |

Workflow Diagram

Microbiome Visualization Analysis Workflow

The Scientist's Toolkit: Key Reagents & Software

| Item | Function in Microbiome Visualization |

|---|---|

| QIIME 2 (Core 2024.5) | Primary pipeline for processing raw sequences into feature tables and performing initial diversity analyses. Provides q2-diversity and q2-taxa plugins for plotting. |

| R (v4.3+) with Packages | Statistical computing environment. phyloseq for data object management; ggplot2/ggpubr for static plots; vegan for ecological metrics; igraph for networks. |

| Python (v3.10+) with Libraries | Programming language for flexible analysis. biom-format for table handling; scikit-bio for diversity; matplotlib/seaborn for plotting; networkx for networks. |

| SparCC Algorithm | Tool for calculating robust correlations between compositional taxa, mitigating spurious correlations in microbiome data. |

| Graphviz Layout Engines | Used by igraph and networkx to generate clean, readable network graph layouts (e.g., neato, fdp). |

| ColorBrewer Palettes | Sets of colorblind-friendly, print-safe palettes (e.g., Set2, Set3, Paired) implemented in RColorBrewer and seaborn for categorical data (taxa). |

| Centered Log-Ratio (CLR) Transform | Essential normalization for compositional data prior to correlation analysis, available in the compositions R package or scikit-bio in Python. |

In the analysis of high-dimensional microbiome data, a fundamental challenge is the compositional nature of the data. Measurements, such as 16S rRNA gene amplicon sequencing reads or metagenomic counts, do not represent absolute abundances but rather relative proportions constrained to a constant sum (e.g., total library size). This compositional constraint induces spurious correlations and obscures true biological signals. Exploratory Data Analysis (EDA) for microbiome research must, therefore, begin with an appropriate transformation to address compositionality. This guide provides an in-depth technical overview of the Centered Log-Ratio (CLR), Additive Log-Ratio (ALR), and other key transformations, framed within the broader thesis that proper transformation is the critical first step for valid inference in microbial ecology and therapeutic development.

Core Transformations: Theory and Application

The following table summarizes the mathematical definitions, key properties, and primary use cases for the major compositional data transformations.

Table 1: Comparison of Core Compositional Data Transformations

| Transformation | Formula (for vector x with D parts) | Key Properties | Primary Use Case in Microbiome Research |

|---|---|---|---|

| Center Log-Ratio (CLR) | clr(x)_i = ln(x_i / g(x)) where g(x) is the geometric mean of all parts. |

Symmetric, isometric. Creates a Euclidean space. Parts sum to zero. | Default for many multivariate methods (PCA, PERMANOVA). Foundation for SparCC and ANCOM-BC. |

| Additive Log-Ratio (ALR) | alr(x)_i = ln(x_i / x_D) where x_D is an arbitrarily chosen reference part. |

Simple, asymmetric. Results in a (D-1)-dimensional real space. | Analyzing specific taxon ratios. Simpler interpretation vs. a chosen baseline (e.g., a common bacterium). |

| Isometric Log-Ratio (ILR) | ilr(x) = V^T * ln(x) where V is an orthonormal basis in the simplex. |

Isometric, orthonormal coordinates. Many possible balances. | Testing a priori phylogenetic or ecological balances (e.g., via PhILR). |

| Rarefaction | Random subsampling to an even sequencing depth. | Discards data, introduces variance. Not a transformation per se. | Largely deprecated for differential abundance. Sometimes used for alpha diversity estimation. |

| Total Sum Scaling (TSS) | x_i / sum(x) |

Simple normalization to proportions. Does not address compositionality. | Initial visualization. Input for downstream transformations (CLR, ALR). |

Experimental Protocol: A Standard Workflow for Compositional EDA

The following is a detailed methodology for conducting EDA on a typical 16S rRNA microbiome dataset (e.g., from QIIME 2 or mothur) with compositionality in mind.

Protocol 1: Core EDA Workflow with Compositional Transformations

Data Preprocessing & Import:

- Start with a Feature Table (OTU/ASV table) of raw counts and associated Metadata.

- Apply a prevalence filter (e.g., retain features present in >10% of samples).

- Pseudocount Addition: Add a small pseudocount (e.g., 1 or a fraction of the minimum positive count) to all zero values to enable log-transformation. Note: More sophisticated zero imputation (e.g., Bayesian or model-based) may be used in advanced pipelines.

Transformation & Normalization:

- Path A - For multivariate distance-based analysis: Apply the CLR transformation.

- Calculate the geometric mean

g(x)for each sample. - Compute

ln(x_i / g(x))for each taxon. - The resulting matrix is suitable for Aitchison distance calculation and ordination (PCA, PCoA).

- Calculate the geometric mean

- Path B - For specific hypothesis testing: Apply the ALR transformation using a stable, common taxon as the denominator.

- Compute

ln(x_i / x_reference)for all other taxai. - These log-ratios can be analyzed with standard statistical tests (e.g., t-tests, regression).

- Compute

- Path A - For multivariate distance-based analysis: Apply the CLR transformation.

Exploratory Visualization & Analysis:

- Perform Principal Component Analysis (PCA) on the CLR-transformed data.

- Generate biplots to visualize sample clustering and taxon loadings.

- Calculate and visualize Aitchison distances between sample groups.

- Use heatmaps of CLR-transformed abundances to identify taxon-wise patterns.

Downstream Statistical Inference:

- For differential abundance testing, employ compositionally aware tools (e.g., ANCOM-BC,

corncob, orDESeq2with careful interpretation) on the raw count data, as these models incorporate transformations internally.

- For differential abundance testing, employ compositionally aware tools (e.g., ANCOM-BC,

Visualizing the Analytical Workflow

Workflow for Compositional Analysis of Microbiome Data

Mathematical Relationship of ALR and CLR

The Scientist's Toolkit: Key Reagents & Computational Tools

Table 2: Essential Research Reagent Solutions for Compositional Microbiome Analysis

| Item / Tool | Function / Purpose | Key Considerations |

|---|---|---|

| 16S rRNA Gene Primer Sets (e.g., 515F/806R) | Amplify variable regions for sequencing. Defines taxonomic breadth. | Choice affects compositional summary. Use consistent set within study. |

| Mock Community Standards (e.g., ZymoBIOMICS) | Control for technical variation, batch effects, and calibration. | Essential for validating that observed log-ratio changes are biological. |

| QIIME 2 (qiime2.org) | End-to-end microbiome analysis platform. | Plugins like q2-composition offer ANCOM and other compositional methods. |

R Package: compositions |

Core suite for CoDA transformations (CLR, ALR, ILR). | Provides the fundamental clr(), alr(), ilr() functions. |

R Package: phyloseq / mia |

Data structure and tools for microbiome analysis. | Integrates with transformation packages for coherent EDA. |

R Package: ANCOMBC |

Differential abundance testing accounting for compositionality and sampling fraction. | State-of-the-art method for robust hypothesis testing. |

R Package: propr / ccrepe |

Measures pairwise (spurious) correlation and proportionality. | Identifies associations in compositional data (e.g., via ρp metric). |

| Graphviz (DOT Language) | Generates publication-quality diagrams of workflows and concepts. | Enables reproducible, code-based figure generation as used in this guide. |

Addressing compositionality via CLR, ALR, or ILR transformations is not an optional step but a mathematical necessity for valid exploratory data analysis in high-dimensional microbiome research. The choice of transformation should be guided by the biological question—whether it is an unsupervised exploration of community structure (CLR) or a focused analysis of specific taxon relationships (ALR/ILR). By integrating these transformations into a standardized EDA protocol, researchers and drug development professionals can uncover true biological signals, generate robust hypotheses, and build reliable models for therapeutic intervention and diagnostic development.

Exploratory data analysis (EDA) for high-dimensional microbiome data research necessitates robust statistical frameworks to describe microbial community structure. Within this thesis, diversity metrics serve as the cornerstone for initial hypothesis generation, enabling researchers to quantify and compare the compositional complexity within (alpha) and between (beta) samples. This guide provides a technical deep dive into the calculation, application, and interpretation of these foundational metrics.

Core Concepts & Quantitative Definitions

Alpha Diversity: Within-Sample Richness and Evenness

Alpha diversity measures the diversity of species within a single sample or habitat. Common metrics are summarized in Table 1.

Table 1: Common Alpha Diversity Metrics, Formulas, and Interpretation

| Metric | Formula | Sensitivity | Interpretation |

|---|---|---|---|

| Observed Features (Richness) | $S$ = Count of unique ASVs/OTUs | Insensitive to abundance | Pure count of species. Ignores evenness. |

| Shannon Index (H') | $H' = -\sum{i=1}^{S} pi \ln(p_i)$ | Moderate to abundant species | Combines richness and evenness. Increases with more species and more even abundances. |

| Simpson Index (λ) | $\lambda = \sum{i=1}^{S} pi^2$ | Dominant species | Probability two randomly selected individuals are the same species. Inverse (1-λ) is diversity. |

| Faith's Phylogenetic Diversity | $PD = \sum_{branch\ lengths}$ of phylogeny spanning all present species | Includes evolutionary distance | Sum of phylogenetic branch lengths represented in a community. |

| Pielou's Evenness (J') | $J' = \frac{H'}{\ln(S)}$ | Evenness only | Ratio of observed Shannon to maximum possible Shannon (if all species were equally abundant). |

Beta Diversity: Between-Sample Dissimilarity

Beta diversity quantifies the difference in species composition between samples. Key metrics and distances are in Table 2.

Table 2: Common Beta Diversity/Dissimilarity Metrics

| Metric | Formula (for samples j & k) | Quantitative vs. Qualitative | Phylogenetic? | ||||

|---|---|---|---|---|---|---|---|

| Jaccard Distance | $1 - \frac{ | A \cap B | }{ | A \cup B | }$ | Presence/Absence (Qualitative) | No |

| Bray-Curtis Dissimilarity | $1 - \frac{2 \sum \min(x{ij}, x{ik})}{\sum (x{ij} + x{ik})}$ | Abundance-weighted (Quantitative) | No | ||||

| Unweighted UniFrac | $\frac{\sum{i} bi | I(p{ij}>0) - I(p{ik}>0) | }{\sum{i} bi}$ | Presence/Absence | Yes | ||

| Weighted UniFrac | $\frac{\sum{i} bi | p{ij} - p{ik} | }{\sum{i} bi (p{ij} + p{ik})}$ | Abundance-weighted | Yes |

Experimental Protocols for Diversity Analysis

Standard Workflow for 16S rRNA Amplicon Data

Protocol: From Sequences to Diversity Matrices

- Sequence Processing & Denoising: Use DADA2, deblur, or QIIME 2 to correct errors, merge paired-end reads, remove chimeras, and generate an Amplicon Sequence Variant (ASV) table.

- Taxonomic Assignment: Assign taxonomy to ASVs using a reference database (e.g., SILVA, Greengenes).

- Phylogenetic Tree Construction: Generate a phylogenetic tree (e.g., with QIIME 2 via MAFFT/FastTree) for phylogenetic diversity metrics.

- Normalization (Rarefaction): Rarefy all samples to an even sequencing depth (e.g., the minimum read count) to correct for sampling heterogeneity. Note: Controversial; alternatives like DESeq2 or ANCOM exist for differential abundance.

- Metric Calculation: Using the normalized feature table (and tree), compute alpha diversity indices per sample and beta diversity distance matrices between all sample pairs.

- Statistical Testing & Visualization: Apply PERMANOVA (Adonis) on distance matrices for group comparisons. Visualize via PCoA (Principal Coordinates Analysis) plots.

Title: 16S Amplicon Diversity Analysis Workflow

Protocol for PERMANOVA Hypothesis Testing

Method: Permutational Multivariate Analysis of Variance (PERMANOVA) using the adonis2 function (R vegan package).

- Input: A beta diversity distance matrix (e.g., Bray-Curtis) and a sample metadata file with a grouping factor (e.g., Treatment vs. Control).

- Model Specification:

adonis2(distance_matrix ~ Group, data = metadata, permutations = 9999) - Procedure: The F-statistic is calculated based on the observed partitioning of variance. Group labels are randomly permuted (9,999 times) to generate a null distribution of F-statistics.

- Interpretation: The p-value is the proportion of permuted F-statistics greater than or equal to the observed F-statistic. A significant p-value (e.g., <0.05) indicates the group explains a non-random portion of the compositional variance.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Microbiome Diversity Studies

| Item | Function & Application |

|---|---|

| QIIME 2 Core Distribution | Reproducible, extensible microbiome analysis pipeline for processing sequences and calculating diversity metrics. |

| DADA2 R Package | High-resolution sample inference from amplicon data, producing more accurate ASVs than OTU clustering. |

| SILVA or GTDB Database | Curated, aligned reference databases for accurate taxonomic classification of 16S/18S/ITS sequences. |

| PhyloSeq R Package | Data structure and specialized functions for integrating phylogenetic tree, OTU table, and sample data for analysis. |

| vegan R Package | Comprehensive suite of functions for ecological diversity analysis (PERMANOVA, PCoA, alpha/beta metrics). |

| Mock Community (ZymoBIOMICS) | Defined microbial community standard used to validate sequencing accuracy, bioinformatics pipeline, and diversity recovery. |

| MoBio/Qiagen PowerSoil Pro Kit | Industry-standard kit for high-yield, inhibitor-free microbial DNA extraction from complex samples (soil, stool). |

| Illumina MiSeq System & Reagents | Platform of choice for 16S rRNA gene amplicon sequencing (2x300 bp paired-end). |

Interpretation and Reporting Guidelines

Alpha Diversity: Report multiple complementary indices (e.g., Observed, Shannon). Use non-parametric tests (Kruskal-Wallis) for group comparisons. Visualize with boxplots. Beta Diversity: Always pair distance metric choice (Bray-Curtis vs. UniFrac) with the biological question. Report PERMANOVA R² and p-value. Visualize with PCoA plots, coloring points by group and optionally displaying confidence ellipses.

Title: Selecting a Beta Diversity Metric

In the context of a broader thesis on Exploratory Data Analysis (EDA) for high-dimensional microbiome research, differential abundance (DA) testing serves as a cornerstone for hypothesis generation. Following initial steps like alpha/beta diversity analysis and ordination, DA testing rigorously identifies which microbial taxa, genes, or pathways exhibit statistically significant differences in abundance between predefined sample groups (e.g., healthy vs. diseased, treated vs. control). The high-dimensional, sparse, and compositional nature of microbiome data (e.g., 16S rRNA amplicon sequencing or metagenomic shotgun data) presents unique statistical challenges, driving the development and refinement of specialized tools. This technical overview examines three predominant methodologies—DESeq2, edgeR, and ANCOM-BC—detailing their theoretical frameworks, protocols, and application in generating robust, testable biological hypotheses.

Theoretical Foundations

DESeq2: Employs a negative binomial generalized linear model (GLM) with shrinkage estimators for dispersion and fold change. It is particularly adept at handling over-dispersed count data with small sample sizes by borrowing information across genes/taxa. Originally developed for RNA-seq, its application to microbiome data requires careful consideration of normalization via median-of-ratios or alternative scaling factors.

edgeR: Also utilizes a negative binomial model, offering both a common, trended, and tagwise dispersion estimation. It features robust capabilities for a wide range of experimental designs through its GLM framework. The robust=TRUE option in estimateDisp helps mitigate the influence of outliers common in microbiome datasets.

ANCOM-BC: Addresses the compositional nature of microbiome data directly. It fits a linear model on the log-transformed observed abundances, accounting for the sampling fraction (sample-specific scaling factor) and correcting for bias through an empirical Bayes procedure. Its core principle is to avoid identifying differentially abundant features based solely on relative proportions, thereby controlling for false positives due to compositionality.

Quantitative Comparison of Tool Characteristics

Table 1: Core Algorithmic and Practical Characteristics of DA Tools

| Feature / Parameter | DESeq2 | edgeR | ANCOM-BC |

|---|---|---|---|

| Core Statistical Model | Negative Binomial GLM | Negative Binomial GLM | Linear Model with Bias Correction |

| Key Innovation | Adaptive dispersion shrinkage; LFC shrinkage | Robust dispersion estimation; Flexibility | Sampling fraction correction; Compositionality |

| Primary Normalization | Median-of-ratios (geometric mean) | Trimmed Mean of M-values (TMM) | Sample-specific sampling fraction estimation |

| Handling of Zeros/Sparsity | Internally handles zeros; can be sensitive | Handles zeros via pseudo-counts (prior.count) | Log-transform with pseudo-count; CLR options |

| Control for Compositionality | No explicit control (post-hoc analysis advised) | No explicit control (post-hoc analysis advised) | Explicitly models and corrects for it |

| Typical Output Metrics | log2FoldChange, p-value, adjusted p-value (padj) | logFC, p-value, FDR | logFC (W statistic), p-value, adjusted p-value |

| Best Suited For | Small sample sizes; high sensitivity | Complex designs; large sample sizes | Standard designs where compositionality is a primary concern |

Experimental Protocol for a Typical Microbiome DA Analysis

A generalized workflow for applying these tools to 16S rRNA amplicon sequencing data is outlined below.

Protocol: Differential Abundance Testing from ASV/OTU Table

Input Data Preparation:

- Start with a quality-filtered Amplicon Sequence Variant (ASV) or Operational Taxonomic Unit (OTU) count table (rows: features, columns: samples).

- Prepare a sample metadata table with grouping variables (e.g., DiseaseState, Treatment, Age).

Preprocessing & Filtering:

- Low-abundance Filtering: Remove features with very low counts (e.g., present in less than 10% of samples or with less than 10 total reads) to reduce noise. Thresholds are tool and dataset specific.

- Data Object Creation: Import the filtered count table and metadata into the tool-specific object:

- DESeq2:

DESeqDataSetFromMatrix(). - edgeR:

DGEList()followed bycalcNormFactors(). - ANCOM-BC:

ancombc2_data()or similar function from theANCOMBCpackage.

- DESeq2:

Model Specification & Testing:

- DESeq2: Specify the design formula (e.g.,

~ DiseaseState). RunDESeq()which estimates size factors, dispersions, fits models, and performs Wald or LRT tests. - edgeR: Define design matrix using

model.matrix(). Estimate dispersions withestimateDisp(), then fit GLM withglmFit()and test withglmLRT()orglmQLFTest(). - ANCOM-BC: Call the core function (e.g.,

ancombc2()) specifying the fixed effect formula (e.g.,DiseaseState). The function internally corrects for sampling fraction and performs testing.

- DESeq2: Specify the design formula (e.g.,

Result Extraction & Interpretation:

- Extract results tables with log-fold changes, p-values, and adjusted p-values (FDR, e.g., Benjamini-Hochberg).

- Apply significance thresholds (e.g.,

abs(logFC) > 1 & padj < 0.05). - Visualize results via Volcano plots, heatmaps, or bar plots of significant taxa.

Diagram 1: Generalized DA Testing Workflow for Microbiome Data

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for Microbiome DA Validation Experiments

| Reagent / Material | Function in Experimental Validation |

|---|---|

| DNA Extraction Kits (e.g., DNeasy PowerSoil Pro Kit) | Standardized, high-yield microbial genomic DNA isolation from complex samples (stool, soil, swabs) for sequencing library prep. |

| 16S rRNA Gene PCR Primers (e.g., 515F/806R for V4 region) | Amplify the target hypervariable region from extracted DNA for amplicon sequencing, defining the taxonomic resolution. |

| Quant-iT PicoGreen dsDNA Assay Kit | Fluorometric quantification of double-stranded DNA libraries post-amplification, ensuring accurate pooling for sequencing. |

| SPRIselect Beads | Size-selective magnetic beads for PCR clean-up, library size selection, and normalization prior to sequencing. |

| PhiX Control v3 | Spiked into sequencing runs for error rate monitoring and calibration, crucial for low-diversity microbiome libraries. |

| Mock Microbial Community DNA (e.g., ZymoBIOMICS D6300) | Positive control containing known, stable ratios of bacterial genomes to assess sequencing accuracy, bioinformatic pipeline performance, and potential technical bias in DA results. |

| QIIME 2, dada2, or USEARCH pipelines | Bioinformatic software suites for processing raw sequence data into the ASV/OTU count tables that serve as direct input for DESeq2, edgeR, or ANCOM-BC. |

Advanced Considerations and Hypothesis Generation

DA test results are the starting point for biological interpretation. Significant taxa should be evaluated in the context of:

- Effect Size: The magnitude of log-fold change indicates biological relevance beyond statistical significance.

- Taxonomic Identity: Linking ASVs to known genera or species (e.g., via SILVA or Greengenes databases) informs mechanistic hypotheses.

- Multiplicity Correction: The choice of FDR method impacts the stringency of the generated hypothesis list.

Diagram 2: From DA Results to Biological Hypothesis

DESeq2, edgeR, and ANCOM-BC represent distinct philosophical approaches to the same critical question in microbiome EDA: "What is differentially abundant?" DESeq2 and edgeR, as count-based models, offer high sensitivity and flexibility, while ANCOM-BC directly mitigates the fundamental issue of compositionality. The choice of tool should be guided by experimental design, data characteristics, and the specific biological questions at hand. Within an EDA framework, the judicious application of these tools, coupled with rigorous experimental design and appropriate controls, transforms high-dimensional microbial census data into a focused set of testable biological hypotheses, driving subsequent confirmatory research in microbiology and drug development.

Solving Real-World Problems: Troubleshooting Common Pitfalls and Optimizing Your Microbiome EDA Workflow

In high-dimensional microbiome data research, exploratory data analysis (EDA) is the critical first step for generating hypotheses and understanding microbial community dynamics. However, the biological signal is often obscured by technical artifacts (batch effects) and confounding biological variables (confounders). This whitepaper provides a technical guide to identifying, diagnosing, and correcting these issues to ensure robust downstream analysis and interpretation.

Identification and Diagnosis

Principal Component Analysis (PCA) and Visualization

Batch effects are identified by associating principal components (PCs) of variation with technical batches rather than biological conditions.

Experimental Protocol (PCA for Batch Diagnosis):

- Perform rarefaction or use a variance-stabilizing transformation (e.g., DESeq2's

varianceStabilizingTransformationfor count data) on the Amplicon Sequence Variant (ASV) or Operational Taxonomic Unit (OTU) table. - Compute PCA on the transformed data (using Euclidean distance) or on a suitable distance matrix (e.g., Aitchison distance for compositional data via a CLR transformation).

- Color-code the PCA plot (PC1 vs. PC2, PC1 vs. PC3) by batch identifier (e.g., sequencing run, extraction plate) and by primary biological condition (e.g., disease state).

- Statistically assess the association between PCs and metadata variables using PERMANOVA (adonis2 function in R's

veganpackage).

- Perform rarefaction or use a variance-stabilizing transformation (e.g., DESeq2's

Quantitative Data Summary: Table 1: Example PERMANOVA Results Demonstrating a Batch Effect.

Factor R² Value p-value Interpretation Sequencing Run 0.32 0.001 Strong technical variation. Disease State 0.15 0.001 Meaningful biological signal. Patient Age 0.04 0.12 Not a major driver of variance.

Diagram 1: Workflow for Identifying Batch Effects.

Identifying Confounding Variables

Confounders are covariates that correlate with both the independent variable of interest and the dependent outcome.

Experimental Protocol (Correlation Analysis):

- Calculate alpha-diversity indices (e.g., Shannon, Faith's PD) and beta-diversity distances (e.g., Weighted UniFrac) from the microbiome data.

- Test for associations between these diversity metrics and all available metadata (e.g., age, BMI, diet, antibiotic history, sample storage time) using linear models or non-parametric tests (e.g., Spearman correlation).

- For categorical variables of interest (e.g., case vs. control), use statistical tests (t-test, Wilcoxon) to check for significant differences in potential confounder distributions between groups.

Quantitative Data Summary: Table 2: Analysis of Potential Confounders in a Case-Control Study.

Variable Correlation with Shannon (p-value) Difference Case vs. Control (p-value) Suspected Confounder? Antibiotic Use (last 3mo) <0.001 0.002 Yes Sample Storage Time (days) 0.012 0.03 Yes Patient Age 0.15 0.45 No BMI 0.08 0.32 No

Correction Strategies

Batch Effect Correction Methods

Protocol for ComBat (Empirical Bayes):

- Input a transformed and normalized feature table (e.g., CLR-transformed counts).

- Specify the batch variable (e.g., sequencing run) and optional biological covariates to preserve (e.g., disease status) using the

sva::ComBatfunction in R. - The algorithm fits a model to the data, estimates batch effect parameters, and removes them via an empirical Bayes framework.

- Validate by re-running PCA and PERMANOVA to confirm reduction in batch association.

Protocol for MMUPHin (Meta-analysis Methods):

- Use the

MMUPHin::adjust_batchfunction with the normalized feature table, batch ID, and biological covariates. - This method simultaneously corrects for discrete batches and continuous confounders.

- It is particularly designed for microbiome data and can integrate multiple studies.

- Use the

Quantitative Data Summary: Table 3: Comparison of Batch Correction Methods on Simulated Microbiome Data.